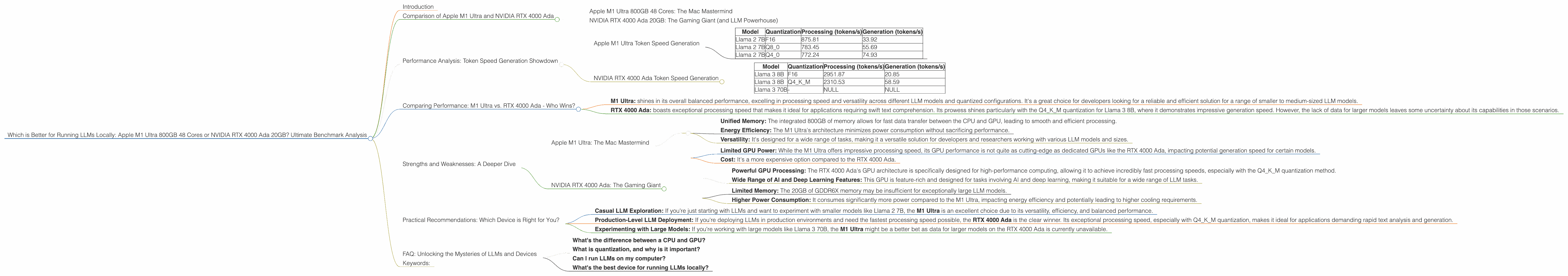

Which is Better for Running LLMs Locally: Apple M1 Ultra (800GB/s, 48-Core GPU) or NVIDIA RTX 4000 Ada 20GB? Ultimate Benchmark Analysis

Introduction

The ability to run large language models (LLMs) locally is becoming increasingly important. This allows developers and researchers to experiment with LLMs without relying on cloud services, which can be costly and have latency issues. But choosing the right hardware for your needs can be a real head-scratcher!

This article compares the performance of two popular devices for running LLMs locally: the Apple M1 Ultra (48-core GPU, 800GB/s memory bandwidth) and the NVIDIA RTX 4000 Ada 20GB. We’ll analyze their performance on different tasks, delve into their strengths and weaknesses, and provide practical recommendations for various use cases.

Buckle up, it's about to get geeky!

Comparison of Apple M1 Ultra and NVIDIA RTX 4000 Ada

Apple M1 Ultra (48-Core GPU, 800GB/s): The Mac Mastermind

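The Apple M1 Ultra is Apple's top-end chip for the Mac Studio, designed for high-performance computing. This configuration pairs a 20-core CPU with a 48-core GPU, and both share up to 128GB of unified memory with 800GB/s of memory bandwidth (the "800GB" figure refers to this bandwidth, not to memory capacity). It's known for strong performance and efficient energy usage.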

NVIDIA RTX 4000 Ada 20GB: The Workstation Workhorse (and LLM Powerhouse)

The NVIDIA RTX 4000 Ada is a professional workstation graphics processing unit (GPU) built on NVIDIA's Ada Lovelace architecture. It offers 20GB of GDDR6 memory and strong performance in AI and deep learning tasks.

Performance Analysis: Token Speed Showdown

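We'll compare the devices by tokens per second (tokens/s), measured in two phases. Processing is how fast the model reads the prompt (prompt evaluation), and Generation is how fast it produces new tokens, one at a time. Processing is mostly limited by raw compute, while generation is mostly limited by memory bandwidth, because every new token requires reading the model's weights from memory. Think of it like a words-per-minute test, but for AI models!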

Apple M1 Ultra Token Speeds

Here's the performance breakdown of the Apple M1 Ultra running Llama 2 7B at different quantization levels:

| Model | Quantization | Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|
| Llama 2 7B | F16 | 875.81 | 33.92 |
| Llama 2 7B | Q8_0 | 783.45 | 55.69 |
| Llama 2 7B | Q4_0 | 772.24 | 74.93 |

Key Highlights:

- Stable Processing: Processing speed stays in a narrow band (about 770 to 880 tokens/s) across F16, Q8_0, and Q4_0.

- Quantization Boosts Generation: Generation speed more than doubles from F16 (33.92 tokens/s) to Q4_0 (74.93 tokens/s), because smaller weights mean less data to read from memory for each token.

- Strong Generation, Slower Processing: Compared with the RTX 4000 Ada results below, the M1 Ultra generates text faster but processes prompts roughly three times more slowly, so long prompts take longer to ingest.
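
To see how quantization affects each column of the M1 Ultra table, you can divide every row's speed by the F16 baseline. This is a minimal sketch; the numbers are copied from the table, and the dictionary and function names are illustrative only.

```python
# Llama 2 7B on the M1 Ultra, copied from the table above (tokens/s).
M1_ULTRA_LLAMA2_7B = {
    "F16": {"processing": 875.81, "generation": 33.92},
    "Q8_0": {"processing": 783.45, "generation": 55.69},
    "Q4_0": {"processing": 772.24, "generation": 74.93},
}


def speedup_vs_baseline(results, metric, baseline="F16"):
    """Return each quantization's speed on `metric` divided by the baseline's speed."""
    base = results[baseline][metric]
    return {quant: round(row[metric] / base, 2) for quant, row in results.items()}


if __name__ == "__main__":
    for metric in ("processing", "generation"):
        print(metric, speedup_vs_baseline(M1_ULTRA_LLAMA2_7B, metric))
```

Generation speeds up by about 1.64x (Q8_0) and 2.21x (Q4_0), while processing slows by roughly 11 to 12 percent, which fits the picture of generation being bound by memory bandwidth and processing by compute.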

NVIDIA RTX 4000 Ada Token Speeds

Here’s the performance of the RTX 4000 Ada running Llama 3 8B (no results are available for Llama 3 70B):

| Model | Quantization | Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|
| Llama 3 8B | F16 | 2951.87 | 20.85 |
| Llama 3 8B | Q4_K_M | 2310.53 | 58.59 |
| Llama 3 70B | - | n/a (no data) | n/a (no data) |

Key Highlights:

- Exceptional Processing Speed: At 2,951.87 tokens/s with F16, the RTX 4000 Ada processes prompts about three times faster than the M1 Ultra, making it ideal for tasks requiring rapid text analysis.

- Quantization Pays Off for Generation: Moving Llama 3 8B from F16 to Q4_K_M nearly triples generation speed (20.85 to 58.59 tokens/s), at the cost of some processing speed.

- No Data for Llama 3 70B: Even at 4-bit quantization, the 70B model's weights need roughly 40GB or more, about twice the card's 20GB of VRAM, so the model cannot run entirely on this GPU.
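
A back-of-the-envelope check shows why Llama 3 70B has no results on this card: multiply the parameter count by the bits stored per weight. The sketch below assumes nominal parameter counts (8 and 70 billion), roughly 4.8 bits per weight for Q4_K_M (an approximation; the exact figure varies by model), and a hypothetical 2GB allowance for the KV cache and runtime buffers.

```python
def weights_gb(n_params, bits_per_weight):
    """Approximate size of the model weights alone, in GB (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(n_params, bits_per_weight, vram_gb, overhead_gb=2.0):
    """Rough check: weights plus a hypothetical fixed allowance for KV cache and buffers."""
    return weights_gb(n_params, bits_per_weight) + overhead_gb <= vram_gb


# Assumed bits per weight: F16 stores 16; Q4_K_M averages roughly 4.8 (approximation).
CONFIGS = [
    ("Llama 3 8B F16", 8e9, 16),
    ("Llama 3 8B Q4_K_M", 8e9, 4.8),
    ("Llama 3 70B Q4_K_M", 70e9, 4.8),
]

if __name__ == "__main__":
    for name, params, bits in CONFIGS:
        size = weights_gb(params, bits)
        print(f"{name}: ~{size:.1f} GB of weights, fits in 20 GB: {fits_in_vram(params, bits, 20)}")
```

Under these assumptions, Llama 3 8B fits at F16 (about 16GB of weights) and comfortably at Q4_K_M (about 4.8GB), while the 70B model needs about 42GB at Q4_K_M.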

Comparing Performance: M1 Ultra vs. RTX 4000 Ada - Who Wins?

The comparison between the M1 Ultra and RTX 4000 Ada highlights the different strengths of each device. Keep in mind that the tables test different models (Llama 2 7B on the M1 Ultra, Llama 3 8B on the RTX 4000 Ada), so the numbers show trends rather than a strict head-to-head result:

- M1 Ultra: shines in generation speed, reaching 74.93 tokens/s with Q4_0, and its large unified memory leaves room for bigger models. It's a great choice for developers looking for a reliable and efficient machine for small to medium-sized LLMs, especially for interactive use where generation speed matters most.

- RTX 4000 Ada: boasts exceptional processing speed, about three times that of the M1 Ultra, which makes it ideal for applications requiring swift text comprehension of long prompts. With Q4_K_M quantization it also reaches a respectable 58.59 tokens/s in generation for Llama 3 8B. However, its 20GB of VRAM rules out running larger models such as Llama 3 70B entirely on the GPU.
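
Which device "wins" depends on the mix of prompt and output tokens. A rough estimate of response time is prompt tokens divided by processing speed plus output tokens divided by generation speed. The sketch below applies that formula to the best quantized row from each table; the two workloads are hypothetical, the models differ (7B vs 8B), and it ignores model loading time and the slowdown that comes with longer contexts.

```python
def response_time_s(prompt_tokens, output_tokens, pp_tps, tg_tps):
    """Estimated seconds to process the prompt and then generate the reply."""
    return prompt_tokens / pp_tps + output_tokens / tg_tps


# (processing tokens/s, generation tokens/s) from the tables; note the models differ.
DEVICES = {
    "M1 Ultra, Llama 2 7B Q4_0": (772.24, 74.93),
    "RTX 4000 Ada, Llama 3 8B Q4_K_M": (2310.53, 58.59),
}

# Hypothetical workloads: (prompt tokens, output tokens).
WORKLOADS = {
    "long prompt, short answer": (2000, 200),
    "short prompt, long answer": (100, 500),
}

if __name__ == "__main__":
    for workload, (prompt, output) in WORKLOADS.items():
        for device, (pp, tg) in DEVICES.items():
            seconds = response_time_s(prompt, output, pp, tg)
            print(f"{workload:28} {device:33} {seconds:5.2f} s")
```

With a 2,000-token prompt and a 200-token reply, the estimate is about 4.3 s on the RTX 4000 Ada versus 5.3 s on the M1 Ultra; with a 100-token prompt and a 500-token reply, it flips to about 6.8 s on the M1 Ultra versus 8.6 s on the RTX 4000 Ada.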

Strengths and Weaknesses: A Deeper Dive

Let's examine the strengths and weaknesses of each device in more detail:

Apple M1 Ultra: The Mac Mastermind

Strengths:

- Unified Memory: The CPU and GPU share up to 128GB of memory with 800GB/s of bandwidth, so large models fit without being split across separate memory pools, and the high bandwidth keeps text generation fast.

- Energy Efficiency: The M1 Ultra's architecture minimizes power consumption without sacrificing performance.

- Versatility: It's designed for a wide range of tasks, making it a versatile solution for developers and researchers working with various LLM models and sizes.

Weaknesses:

- Limited GPU Compute: The M1 Ultra's GPU has less raw compute than a dedicated NVIDIA GPU like the RTX 4000 Ada, which is why its prompt processing speed is only about a third of the RTX's.

- Cost: A Mac Studio with the M1 Ultra is a more expensive purchase than the RTX 4000 Ada card, although the card also needs a host PC.
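
Why can the M1 Ultra generate faster despite having less compute? A common rule of thumb is that producing one token requires streaming roughly all of the model's weights from memory, so memory bandwidth divided by model size gives a rough ceiling on generation speed. This sketch takes 800GB/s for the M1 Ultra from its specs above; the RTX 4000 Ada's 360GB/s (NVIDIA's figure for the full-size card) and the model sizes of about 3.8GB and 4.9GB are assumptions, not data from the benchmark tables.

```python
def generation_ceiling_tps(bandwidth_gb_s, model_size_gb):
    """Rough upper bound on tokens/s if each token reads all weights once."""
    return bandwidth_gb_s / model_size_gb


# (memory bandwidth GB/s, approximate model size GB, measured generation tokens/s)
# Only the measured speeds come from the benchmark tables; the RTX 4000 Ada
# bandwidth and both model sizes are assumptions for illustration.
CASES = {
    "M1 Ultra, Llama 2 7B Q4_0": (800, 3.8, 74.93),
    "RTX 4000 Ada, Llama 3 8B Q4_K_M": (360, 4.9, 58.59),
}

if __name__ == "__main__":
    for name, (bandwidth, size, measured) in CASES.items():
        ceiling = generation_ceiling_tps(bandwidth, size)
        print(f"{name}: ceiling ~{ceiling:.0f} tokens/s, measured {measured} "
              f"({measured / ceiling:.0%} of the ceiling)")
```

Both measured speeds fall below their ceilings, and the M1 Ultra's ceiling is much higher, which is consistent with its faster generation in the tables. The formula ignores the KV cache and compute limits, so treat it as an upper bound rather than a prediction.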

NVIDIA RTX 4000 Ada: The Workstation Workhorse

Strengths:

- Powerful GPU Processing: The RTX 4000 Ada's GPU architecture is designed for high-performance computing, delivering very fast prompt processing: 2,951.87 tokens/s with F16 and 2,310.53 tokens/s with Q4_K_M.

- Mature AI Software Ecosystem: The card supports CUDA, which most deep learning frameworks and LLM inference tools support, making it suitable for a wide range of LLM tasks.

Weaknesses:

- Limited Memory: The 20GB of GDDR6 memory is not enough for very large models; Llama 3 70B, for example, needs roughly 40GB or more even at 4-bit quantization.

- Higher Power Consumption: The card plus the host PC it requires can draw more power than a Mac Studio, raising energy costs and potentially cooling requirements.

Practical Recommendations: Which Device is Right for You?

Here's a breakdown of use cases to help you choose the best device for your needs:

- Casual LLM Exploration: If you're just starting with LLMs and want to experiment with smaller models like Llama 2 7B, the M1 Ultra is an excellent choice thanks to its efficiency and fast text generation, which keeps chat-style use responsive.

- Production-Level LLM Deployment: If you're deploying LLMs in production environments and need the fastest processing speed possible, the RTX 4000 Ada is the clear winner. Its prompt processing is roughly three times faster than the M1 Ultra's, which makes it ideal for applications that analyze long inputs, although its generation speed in these benchmarks is somewhat lower.

- Experimenting with Large Models: If you're working with large models like Llama 3 70B, the M1 Ultra is the better bet, provided it is configured with enough unified memory (a 4-bit 70B model needs roughly 40GB or more). The model does not fit in the RTX 4000 Ada's 20GB of VRAM, which explains the missing data; note that these benchmarks include no 70B results for the M1 Ultra either.

Quantization: A Little Bit of Magic for LLM Efficiency

Quantization is a technique that reduces the precision of the weights in an LLM (for example, from 16-bit floats to 8-bit or 4-bit integers), resulting in smaller files, lower memory usage, and faster text generation. It's like squeezing a large backpack into a smaller one - you might lose a little detail, but you gain portability and speed.

For the M1 Ultra, Q4_0 quantization gave the fastest generation in these tests (74.93 tokens/s versus 33.92 with F16). For the RTX 4000 Ada, Q4_K_M shines with Llama 3 8B. These benchmarks don't measure output quality, though, so it's important to check accuracy against the specific needs of your model and application.
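
To make the idea concrete, here is a minimal sketch of block-wise 8-bit quantization in the spirit of the Q8_0 type from the tables (Q8_0, Q4_0, and Q4_K_M are quantization formats from the llama.cpp/GGUF ecosystem). It assumes the Q8_0 layout of blocks of 32 weights that share one scale; llama.cpp stores that scale as a 16-bit float and its rounding details differ, and Q4_K_M uses a more elaborate scheme not shown here. The helper names are hypothetical.

```python
BLOCK_SIZE = 32  # weights per block, as in llama.cpp's Q8_0 format


def quantize_block_q8(block):
    """Symmetric 8-bit quantization of one block: a shared scale plus integers in [-127, 127]."""
    amax = max(abs(x) for x in block)
    scale = amax / 127 if amax > 0 else 0.0
    inv_scale = 1 / scale if scale else 0.0
    return scale, [round(x * inv_scale) for x in block]


def dequantize_block_q8(scale, q):
    """Recover approximate weights by multiplying each integer by the block scale."""
    return [scale * v for v in q]


def quantize_q8(weights):
    """Split a flat list of weights into blocks and quantize each one."""
    return [quantize_block_q8(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def bits_per_weight(value_bits, block_size=BLOCK_SIZE, scale_bits=16):
    """Storage cost per weight, counting each block's shared 16-bit scale."""
    return value_bits + scale_bits / block_size


if __name__ == "__main__":
    weights = [0.1 * i - 1.6 for i in range(64)]
    for scale, q in quantize_q8(weights):
        restored = dequantize_block_q8(scale, q)
        print(f"scale={scale:.5f} first values: {q[:4]} -> {[round(r, 3) for r in restored[:4]]}")
    print("Q8_0-style:", bits_per_weight(8), "bits/weight; Q4_0-style:", bits_per_weight(4))
```

The `bits_per_weight` helper shows where the savings come from: 32 8-bit values plus one 16-bit scale cost 8.5 bits per weight, and the same layout with 4-bit values (as in Q4_0) costs 4.5, compared with 16 for F16.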

FAQ: Unlocking the Mysteries of LLMs and Devices

What's the difference between a CPU and GPU?

Think of the CPU as the brains of your computer, responsible for general tasks. The GPU is a specialized co-processor with many cores working in parallel, originally designed for graphics-intensive tasks like gaming and now widely used for running AI models.

What is quantization, and why is it important?

Quantization is a technique for reducing the size of an LLM model without sacrificing too much accuracy. It's like summarizing a long book - you might lose some details, but you get the gist of it and can read it much faster. For LLMs, this means less memory usage and faster text generation, as both the M1 Ultra and RTX 4000 Ada results show.

Can I run LLMs on my computer?

Yes, you can! But the size and complexity of the LLM will determine what hardware you need. For smaller models, even a laptop might be sufficient. For larger models, you'll likely need a high-performance computer with a dedicated GPU or a large pool of unified memory.

What's the best device for running LLMs locally?

There's no one-size-fits-all answer. The best device depends on your specific needs and the LLM you want to run. For smaller models and interactive chat, the M1 Ultra's fast generation and efficiency make it a great option. For workloads demanding the fastest prompt processing, the RTX 4000 Ada is the way to go. For models too large for 20GB of VRAM, the M1 Ultra's unified memory gives it the edge.
