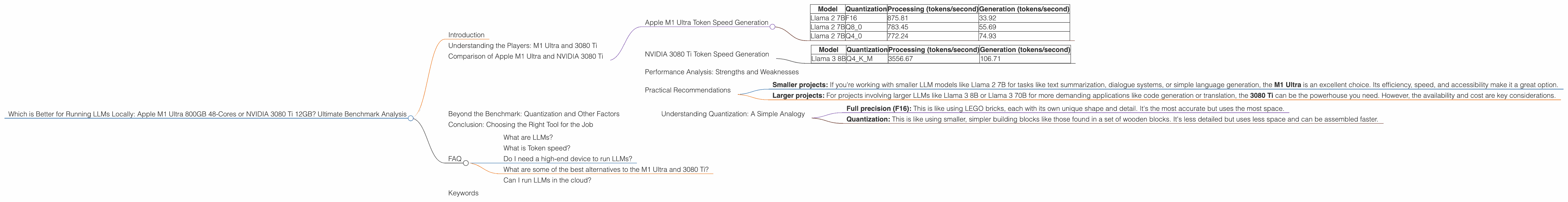

Which is Better for Running LLMs Locally: Apple M1 Ultra (800 GB/s, 48 GPU Cores) or NVIDIA 3080 Ti 12GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, and with it, the need for powerful hardware to run these models locally. Two popular contenders in this race are the Apple M1 Ultra and the NVIDIA 3080 Ti. But which one reigns supreme when it comes to handling the processing demands of LLMs? This article delves deep into a head-to-head comparison of these two heavyweights, analyzing their performance on various LLM models and offering insights for developers looking to build and deploy their own local LLM systems.

Understanding the Players: M1 Ultra and 3080 Ti

Imagine LLMs as massive, complex puzzles. To solve them, you need powerful tools. The M1 Ultra and 3080 Ti are like two different sets of tools, each with its unique strengths.

The Apple M1 Ultra is a beast of a processor. The configuration compared here has 48 GPU cores, 800 GB/s of memory bandwidth, and a design optimized for parallel processing. Think of it as a team of highly skilled puzzle solvers, each working independently on different parts of the puzzle, communicating seamlessly to achieve the final solution.

On the other hand, the NVIDIA 3080 Ti is a dedicated GPU, a specialized chip built for graphics processing. It's like a hyper-efficient puzzle solver, focusing solely on a specific piece of the puzzle with lightning speed. It's also known for its ability to run complex calculations required for LLM inference.

Comparison of Apple M1 Ultra and NVIDIA 3080 Ti

Now, let's dive into the nitty-gritty, comparing the performance of these two devices for running popular LLM models. For this analysis, we'll be examining token speed, a crucial metric for LLM performance. Token speed represents how fast the device can process and generate text, measured in tokens per second.
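To make the metric concrete, here is a minimal sketch of how tokens per second can be computed. The `generate` callable is a hypothetical stand-in for whatever inference function you use; it is assumed to return the list of produced tokens and is not part of any specific library.

```python
import time
from typing import Callable, List


def tokens_per_second(num_tokens: int, elapsed_seconds: float) -> float:
    """Throughput: tokens handled divided by wall-clock seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


def measure_generation(generate: Callable[[str], List[str]], prompt: str) -> float:
    """Time one call to a (hypothetical) generate function.

    Returns the generation speed in tokens per second.
    """
    start = time.perf_counter()
    tokens = generate(prompt)
    elapsed = time.perf_counter() - start
    return tokens_per_second(len(tokens), elapsed)
```

Processing speed can be measured the same way by timing how long the model takes to read the prompt and dividing the prompt length by that time.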

Apple M1 Ultra Token Speed Generation

The M1 Ultra performs well on smaller LLMs. On Llama 2 7B, generation speed climbs from 33.92 tokens/second at F16 to 74.93 tokens/second at Q4_0, while prompt processing stays between roughly 770 and 880 tokens/second at every quantization level. This makes it a strong choice for developers using smaller LLMs for tasks like text summarization or dialogue systems.

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama 2 7B | F16 | 875.81 | 33.92 |
| Llama 2 7B | Q8_0 | 783.45 | 55.69 |
| Llama 2 7B | Q4_0 | 772.24 | 74.93 |

Note: Quantization reduces model size by storing weights with fewer bits, which cuts memory traffic and speeds up generation in particular. F16 uses 16-bit floating-point numbers, while Q8_0 and Q4_0 use 8-bit and 4-bit integers respectively.
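As a rough illustration of what these levels mean for memory, the sketch below estimates the size of the weights alone. The bits-per-weight values are assumptions based on the common GGML-style block layouts (each block of weights carries a small scale factor, hence 8.5 and 4.5 rather than 8 and 4), and the 7 billion parameter count is nominal.

```python
# Approximate bits stored per weight, including per-block scale overhead.
# Assumption: GGML-style layouts; other formats will differ slightly.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_size_gb(num_params: float, quant: str) -> float:
    """Estimate the size of the model weights in gigabytes (10^9 bytes)."""
    bits = BITS_PER_WEIGHT[quant]
    return num_params * bits / 8 / 1e9


for quant in BITS_PER_WEIGHT:
    # Nominal 7 billion parameters; the real count is slightly lower.
    print(f"{quant}: {weight_size_gb(7e9, quant):.1f} GB")
```

The estimate ignores the KV cache and runtime buffers, so actual memory use will be higher.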

NVIDIA 3080 Ti Token Speed Generation

The 3080 Ti flexes its muscles on Llama 3 8B, delivering impressive token speed figures, especially for prompt processing. This makes it a strong choice for applications demanding more complex language processing or large-scale data analysis. However, there's no data available for the 3080 Ti running the Llama 3 70B model (a model of that size does not fit in 12 GB of VRAM even at 4-bit), so we can't compare performance for this larger model.

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 3556.67 | 106.71 |
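To turn these figures into something more tangible, the sketch below estimates how long a single response would take using the 4-bit rows of the two tables. The prompt and output lengths (1000 and 250 tokens) are arbitrary assumptions, and the two devices were measured on different models, so this is an illustration rather than a like-for-like comparison.

```python
def response_time_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Time to read the prompt plus time to generate the output."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing tokens/second, generation tokens/second) from the tables above.
BENCHMARKS = {
    "M1 Ultra / Llama 2 7B / Q4_0": (772.24, 74.93),
    "3080 Ti / Llama 3 8B / 4-bit": (3556.67, 106.71),
}

for name, (processing_tps, generation_tps) in BENCHMARKS.items():
    seconds = response_time_seconds(1000, 250, processing_tps, generation_tps)
    print(f"{name}: {seconds:.2f} s")
```

Under these assumptions the M1 Ultra needs about 4.6 seconds and the 3080 Ti about 2.6 seconds. Generation dominates the total in both cases, which is why the generation column matters most for interactive use.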

Performance Analysis: Strengths and Weaknesses

Both the M1 Ultra and the 3080 Ti have their strengths and weaknesses.

The M1 Ultra is like a nimble sprinter for smaller models, excelling at processing and generating text with impressive speed. It's also relatively energy efficient, making it a good choice for smaller projects.

The 3080 Ti is like a marathon runner for larger models, able to tackle complex tasks with power and precision. Its strength lies in handling larger LLMs, making it ideal for advanced AI applications.

However, the 3080 Ti has its limitations. It's more power-hungry than the M1 Ultra and can be more expensive. Additionally, it's difficult to find the 3080 Ti on the market due to the global chip shortage, making it less accessible.

Practical Recommendations

The choice ultimately depends on your specific needs.

Here's a simple guide to help you decide:

Smaller projects: If you're working with smaller LLM models like Llama 2 7B for tasks like text summarization, dialogue systems, or simple language generation, the M1 Ultra is an excellent choice. Its efficiency, speed, and accessibility make it a great option.

Larger projects: For projects involving Llama 3 8B for more demanding applications like code generation or translation, the 3080 Ti can be the powerhouse you need. No benchmark data is available here for Llama 3 70B on the 3080 Ti, so treat that pairing as untested. Availability and cost are also key considerations.

Beyond the Benchmark: Quantization and Other Factors

Quantization, the process of shrinking a model by storing its weights with fewer bits, is a game-changer in the world of LLMs. Both the M1 Ultra and the 3080 Ti benefit from it. On the M1 Ultra, generation speed rises steadily as precision drops from F16 to Q8_0 to Q4_0. The 3080 Ti was measured only at 4-bit Q4_K_M, where it achieves impressive speeds.

Understanding Quantization: A Simple Analogy

Imagine you're building a miniature model of a skyscraper. You can use different materials:

- Higher precision (F16): This is like using LEGO bricks, each with its own unique shape and detail. It's the most accurate of the options compared here but uses the most space.

- Quantization: This is like using smaller, simpler building blocks like those found in a set of wooden blocks. It's less detailed but uses less space and can be assembled faster.

Quantization allows LLMs to run faster while consuming less memory.
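The idea can be sketched in a few lines of Python. This is a toy symmetric quantizer for illustration only, not the actual Q8_0 or Q4_0 implementation: it stores one floating-point scale for a block of weights plus one small integer per weight. The function names and sample weights are hypothetical.

```python
from typing import List, Tuple


def quantize_block(values: List[float], bits: int = 8) -> Tuple[float, List[int]]:
    """Symmetric quantization: one float scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest > 0 else 1.0
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale: float, quants: List[int]) -> List[float]:
    """Recover approximate weights from the scale and the integers."""
    return [scale * q for q in quants]


weights = [0.12, -0.53, 0.98, -0.07]  # made-up example values
for bits in (8, 4):
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: ints={quants}, max error={worst:.4f}")
```

Running it shows the trade-off from the analogy: the 4-bit version needs half the storage per weight but reconstructs the original values less precisely than the 8-bit version.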

Remember, token speed is just one metric. Other factors like memory and power consumption also play a role. You'll need to consider these factors based on your project's specific requirements.

Conclusion: Choosing the Right Tool for the Job

The choice between the Apple M1 Ultra and the NVIDIA 3080 Ti depends on your project's requirements and your budget. The M1 Ultra provides a compelling balance of performance, affordability, and accessibility, making it suitable for smaller projects and developers. The 3080 Ti, on the other hand, is a powerful option for larger projects with more demanding needs, but faces challenges with accessibility and cost.

Ultimately, the best device for you depends on your specific needs and constraints. By carefully considering your project's requirements and understanding the capabilities of both the M1 Ultra and the 3080 Ti, you can make an informed decision and optimize your local LLM development environment.

FAQ

What are LLMs?

LLMs are large language models, advanced AI systems trained on massive datasets of text and code. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is token speed?

Token speed is a measure of how fast a device can process and generate text. It's measured in tokens per second. A higher token speed means the device can handle language tasks more efficiently, resulting in faster results.

Do I need a high-end device to run LLMs?

You don't necessarily need the most powerful device to run LLMs. Smaller LLM models can be run on consumer-grade devices. However, for larger LLMs, more powerful hardware like the M1 Ultra or the 3080 Ti is recommended.

What are some of the best alternatives to the M1 Ultra and 3080 Ti?

If you're looking for alternatives to the M1 Ultra and the 3080 Ti, options like the NVIDIA A100 and the AMD MI250 are powerful GPUs specifically designed for AI processing. However, they come at a higher price point.

Can I run LLMs in the cloud?

Yes, you can run LLMs in the cloud using services like Google Colab or Amazon SageMaker. These services provide access to high-performance computing resources, making it easier to experiment with and deploy LLMs without the need for dedicated hardware.

Keywords

LLM, Large Language Model, Apple M1 Ultra, NVIDIA 3080 Ti, Token Speed, Quantization, F16, Q8_0, Q4_0, Q4_K_M, GPU, CPU, Performance, Benchmark, Local LLMs, Inference, Processing, Generation, Deep Learning, AI, Machine Learning, Developer, Programming, Code, Natural Language Processing, NLP, Text Summarization, Dialogue Systems, Code Generation, Translation, Cloud Computing, Google Colab, Amazon SageMaker, Data Science, Software Development, Tech Trends, AI Hardware, Performance Comparison, Technical Analysis, AI Applications, AI Development, AI Industry, AI Research, AI Education, AI Ethics, AI Future, AI Trends, AI News