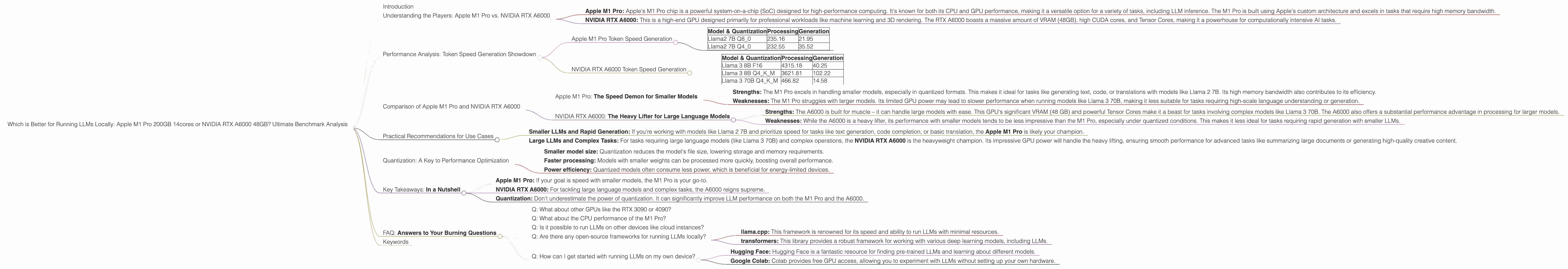

Which is Better for Running LLMs Locally: Apple M1 Pro (200 GB/s, 14-Core GPU) or NVIDIA RTX A6000 48GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, and it's getting easier than ever before to run these powerful AI models on your own computer. But with so many different hardware options available, choosing the right device for your LLM needs can be a challenge.

This article compares two popular devices for running LLMs locally: the Apple M1 Pro (200 GB/s memory bandwidth, 14-core GPU) and the NVIDIA RTX A6000 48GB. We'll analyze their strengths and weaknesses, explore specific performance benchmarks, and provide practical recommendations for different use cases.

Understanding the Players: Apple M1 Pro vs. NVIDIA RTX A6000

Before we dive into the benchmarks, let's get a clear picture of the two contenders:

- Apple M1 Pro: Apple's M1 Pro is a system-on-a-chip (SoC) that combines CPU cores, a GPU (14 cores in the configuration tested here), and up to 32 GB of unified memory shared between them. It performs well on both CPU and GPU workloads, making it a versatile option for a variety of tasks, including LLM inference. Its 200 GB/s memory bandwidth matters for LLMs because generating each token requires reading the model's weights from memory.

- NVIDIA RTX A6000: A high-end workstation GPU designed for professional workloads like machine learning and 3D rendering. It pairs 48 GB of VRAM and roughly 768 GB/s of memory bandwidth with 10,752 CUDA cores and dedicated Tensor Cores, making it a powerhouse for computationally intensive AI tasks.

Performance Analysis: Token Generation Speed Showdown

Apple M1 Pro Token Generation Speed

The M1 Pro handles smaller LLMs well, especially quantized versions. Let's break down its performance:

- Llama 2 7B: The M1 Pro generates 21.95 tokens/second with Llama 2 7B in Q8_0 quantization and 35.52 tokens/second in Q4_0, comfortably fast for interactive text generation with smaller models.

Table 1: Apple M1 Pro (14-core GPU) token speed (tokens/second)

| Model & Quantization | Processing | Generation |
|---|---|---|
| Llama 2 7B Q8_0 | 235.16 | 21.95 |
| Llama 2 7B Q4_0 | 232.55 | 35.52 |

Note: We don't have F16 data for Llama 2 7B on the 14-core GPU version of the M1 Pro. For reference, the 16-core GPU version generates 12.75 tokens/second in F16.
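The tables in this article report two rates. Processing is how fast the prompt is consumed, which can be done in parallel across many tokens; generation is how fast new tokens come out one at a time, which is what a user experiences as typing speed. As a rough illustration of what the generation column measures, here is a minimal Python sketch that times a token stream. The `stream` argument is a hypothetical stand-in for whatever streaming interface your runtime exposes; this is not how the benchmarks above were collected.

```python
import time
from typing import Callable, Iterable


def generation_tokens_per_second(
    stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Return tokens/second over a stream of generated tokens.

    Timing starts when the first token arrives, so the prompt-processing
    phase (which finishes before the first token appears) is not counted.
    """
    first_time = None
    last_time = None
    count = 0
    for _token in stream:
        now = clock()
        if first_time is None:
            first_time = now
        last_time = now
        count += 1
    if count < 2 or last_time == first_time:
        raise ValueError("need at least two tokens spread over time")
    # n tokens give n - 1 inter-token intervals between the first and last.
    return (count - 1) / (last_time - first_time)


if __name__ == "__main__":
    # Fake token stream and fake clock so the example is deterministic.
    ticks = iter([0.0, 0.1, 0.2, 0.3, 0.4])
    rate = generation_tokens_per_second(
        ["a", "b", "c", "d", "e"], clock=lambda: next(ticks)
    )
    print(f"{rate:.1f} tokens/second")
```

Injecting the clock keeps the function testable; in real use you would pass the runtime's token iterator and keep the default `time.perf_counter`.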

NVIDIA RTX A6000 Token Generation Speed

The RTX A6000 handles larger models efficiently, thanks to its GPU compute and 48 GB of VRAM. Here's the performance breakdown:

- Llama 3 8B: The A6000 generates 40.25 tokens/second with Llama 3 8B in F16. Q4_K_M quantization raises this to 102.22 tokens/second, about 2.5 times faster.

- Llama 3 70B: The A6000 also runs the much larger Llama 3 70B, generating 14.58 tokens/second in Q4_K_M quantization.

Table 2: NVIDIA RTX A6000 token speed (tokens/second)

| Model & Quantization | Processing | Generation |
|---|---|---|
| Llama 3 8B F16 | 4315.18 | 40.25 |
| Llama 3 8B Q4_K_M | 3621.81 | 102.22 |
| Llama 3 70B Q4_K_M | 466.82 | 14.58 |

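Note: We don't have F16 data for Llama 3 70B on the RTX A6000, which is expected: at 2 bytes per parameter, the F16 weights alone take roughly 140 GB, far more than the card's 48 GB.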
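Weight memory is roughly parameter count × bits per weight ÷ 8, which is why a 70B model in F16 cannot fit on a 48 GB card while a 4-bit version can. A rough Python sketch of that arithmetic follows. The bits-per-weight values are assumptions noted in the comments, and the fixed `headroom_gb` allowance for the KV cache and runtime overhead is a hypothetical placeholder, not a measured figure.

```python
# Approximate bits per weight. F16 is exact; Q8_0 and Q4_0 follow from
# their block layout (32 codes plus one 16-bit scale per block); the
# Q4_K_M figure is an assumed average, since that format mixes block types.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,
    "Q4_0": 4.5,
    "Q4_K_M": 4.8,
}


def weight_gb(params_billion: float, fmt: str) -> float:
    """Approximate size of the weights alone, in GB (10**9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


def fits(params_billion: float, fmt: str, memory_gb: float,
         headroom_gb: float = 2.0) -> bool:
    """True if the weights plus a hypothetical fixed allowance for the
    KV cache and runtime overhead fit in the given memory."""
    return weight_gb(params_billion, fmt) + headroom_gb <= memory_gb


if __name__ == "__main__":
    for fmt in ("F16", "Q4_K_M"):
        size = weight_gb(70, fmt)
        print(f"70B {fmt}: ~{size:.0f} GB, fits in 48 GB: {fits(70, fmt, 48)}")
```

The same check explains the M1 Pro's limit: with at most 32 GB of unified memory, a 4-bit 70B model does not fit. Real KV-cache size grows with context length, so treat the headroom as a rough floor.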

Comparison of Apple M1 Pro and NVIDIA RTX A6000

Which device reigns supreme? It depends on your use case. Let's break down their strengths and weaknesses:

Apple M1 Pro: The Efficient Option for Smaller Models

- Strengths: The M1 Pro runs smaller models, especially quantized ones, at usable speeds in a power-efficient laptop chip. This makes it a good fit for generating text, code, or translations with models like Llama 2 7B. Its unified memory and 200 GB/s bandwidth contribute to its efficiency.

- Weaknesses: The M1 Pro struggles with larger models. With at most 32 GB of unified memory, it cannot hold Llama 3 70B even at 4-bit quantization (over 40 GB of weights), making it less suitable for tasks that need large-scale language understanding or generation.

NVIDIA RTX A6000: The Heavy Lifter for Large Language Models

- Strengths: The A6000 is built for muscle. Its 48 GB of VRAM holds Llama 3 70B at 4-bit quantization, and its GPU gives it a large lead in prompt processing: 3621.81 to 4315.18 tokens/second on Llama 3 8B, compared with about 235 tokens/second for the M1 Pro on Llama 2 7B.

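- Weaknesses: The benchmarks do not show a speed disadvantage on smaller models; on similar-size models at 4-bit, the A6000 generates roughly three times faster than the M1 Pro (102.22 vs. 35.52 tokens/second). Its drawbacks are practical: it is a discrete card that needs a desktop workstation, and it draws far more power (up to 300 W for the card alone) than the M1 Pro's laptop chip.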

Practical Recommendations for Use Cases

Here's how to choose the right device based on your LLM needs:

- Smaller LLMs on a Portable Machine: If you work with models like Llama 2 7B and want a power-efficient laptop for text generation, code completion, or basic translation, the Apple M1 Pro is a capable choice. If raw generation speed matters most, the A6000 is faster even on small models.

- Large LLMs and Complex Tasks: For tasks that need large models like Llama 3 70B, the NVIDIA RTX A6000 is the heavyweight champion. Its 48 GB of VRAM fits a 4-bit 70B model, which generates at 14.58 tokens/second, enough for advanced tasks like summarizing large documents or generating high-quality creative content.

Quantization: A Key to Performance Optimization

Quantization is a crucial concept when working with LLMs. It reduces the precision of the model's weights, typically from 16-bit floating point (F16) to 8-bit (Q8_0) or 4-bit (Q4_0, Q4_K_M) formats.

Why is it important?

- Smaller model size: Quantization reduces the model's file size, lowering storage and memory requirements.

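- Faster generation: Generating each token requires reading the weights from memory, so fewer bits per weight means more tokens per second. Prompt processing does not always benefit: on the A6000, Llama 3 8B processes prompts faster in F16 (4315.18 tokens/second) than in Q4_K_M (3621.81 tokens/second).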

- Power efficiency: Quantized models often consume less power, which is beneficial for energy-limited devices.
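Why smaller weights speed up generation can be checked with a back-of-envelope calculation: if every generated token must stream all weights from memory once, then memory bandwidth divided by model size gives a rough ceiling on tokens/second. The Python sketch below assumes the published peak bandwidths (200 GB/s for the M1 Pro, about 768 GB/s for the RTX A6000), nominal parameter counts, and approximate bits-per-weight values; it ignores KV-cache reads and compute, so it is an estimate, not a model of either device.

```python
# Benchmark figures from Tables 1 and 2; parameter counts are nominal
# (7B, 8B, 70B) and bits per weight are approximations.
ROWS = [
    # (label, peak bandwidth GB/s, params in billions, bits/weight, measured t/s)
    ("M1 Pro, Llama 2 7B Q8_0", 200, 7, 8.5, 21.95),
    ("M1 Pro, Llama 2 7B Q4_0", 200, 7, 4.5, 35.52),
    ("A6000, Llama 3 8B F16", 768, 8, 16.0, 40.25),
    ("A6000, Llama 3 8B Q4_K_M", 768, 8, 4.8, 102.22),
    ("A6000, Llama 3 70B Q4_K_M", 768, 70, 4.8, 14.58),
]


def generation_ceiling(bandwidth_gb_s: float, params_billion: float,
                       bits_per_weight: float) -> float:
    """Rough upper bound on tokens/second if every generated token must
    stream all weights from memory once (ignores KV cache and compute)."""
    model_gb = params_billion * bits_per_weight / 8
    return bandwidth_gb_s / model_gb


if __name__ == "__main__":
    for label, bw, params, bpw, measured in ROWS:
        ceiling = generation_ceiling(bw, params, bpw)
        print(f"{label}: ceiling ~{ceiling:.0f} t/s, measured {measured} t/s "
              f"({measured / ceiling:.0%} of ceiling)")
```

Every measured generation figure lands below its estimated ceiling, which is consistent with generation being limited mainly by memory bandwidth. Prompt processing is different: it batches many tokens per weight read, so it leans on raw compute instead.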

How it works:

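Think of quantization as a way to compress the model's information without losing too much detail. Instead of storing every weight at full precision, the weights are grouped into small blocks that share a scale factor. In llama.cpp's Q8_0 and Q4_0 formats, for example, each block of 32 weights stores one scale plus a small integer code per weight (8 bits in Q8_0, 4 bits in Q4_0), and the scale converts those codes back into approximate weight values.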
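Below is a minimal Python sketch of block-wise 8-bit quantization in the spirit of llama.cpp's Q8_0: weights are split into blocks of 32, each block keeps one scale (its largest absolute value divided by 127), and each weight becomes a rounded integer in [-127, 127]. It is a simplified, standard-library-only illustration, not llama.cpp's actual code or storage layout.

```python
import math
from typing import List, Tuple

BLOCK_SIZE = 32  # Q8_0 also groups weights into blocks of 32

Block = Tuple[float, List[int]]  # (shared scale, integer codes)


def quantize_block(block: List[float]) -> Block:
    """Map a block of floats to int8-range codes that share one scale."""
    amax = max(abs(x) for x in block)
    scale = amax / 127 if amax > 0 else 1.0
    codes = [max(-127, min(127, round(x / scale))) for x in block]
    return scale, codes


def quantize(weights: List[float]) -> List[Block]:
    """Quantize a flat list of weights block by block."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks: List[Block]) -> List[float]:
    """Reconstruct approximate weights as scale * code."""
    out: List[float] = []
    for scale, codes in blocks:
        out.extend(scale * q for q in codes)
    return out


if __name__ == "__main__":
    weights = [math.sin(i * 0.37) * 0.05 for i in range(64)]
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{len(weights)} weights, max abs error {max_err:.2e}")
```

Rounding to the nearest code bounds each weight's error by half the block's scale, which is why a per-block scale (rather than one scale for the whole tensor) keeps the error small when weight magnitudes vary across the model.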

In the context of our comparison:

Both devices benefit from quantization. On the M1 Pro, moving Llama 2 7B from Q8_0 to Q4_0 raises generation speed from 21.95 to 35.52 tokens/second, about 1.6 times faster. On the A6000, Q4_K_M makes Llama 3 8B generate about 2.5 times faster than F16 (102.22 vs. 40.25 tokens/second), and 4-bit quantization is what lets Llama 3 70B fit in 48 GB at all.

Key Takeaways: In a Nutshell

- Apple M1 Pro: If you want a portable, power-efficient machine for smaller models, the M1 Pro is a solid choice.

- NVIDIA RTX A6000: For large language models, complex tasks, and the highest speeds in these benchmarks, the A6000 reigns supreme.

- Quantization: Don't underestimate the power of quantization. It can significantly improve LLM performance on both the M1 Pro and the A6000.

FAQ: Answers to Your Burning Questions

Q: What about other GPUs like the RTX 3090 or 4090?

A: While those cards offer impressive performance, we focused on the M1 Pro and RTX A6000 due to their widespread use in LLM development and professional workflows. However, you can find benchmarks for other GPUs online to make a more informed decision for your specific needs.

Q: What about the CPU performance of the M1 Pro?

A: The M1 Pro packs a punch with its CPU, and you can definitely run LLMs on the CPU alone. However, for optimal performance, leveraging the GPU is highly recommended. This is especially true when dealing with larger models or tasks requiring high-speed text generation.

Q: Is it possible to run LLMs on other devices like cloud instances?

A: Absolutely! Cloud computing services like AWS, Google Cloud, and Azure offer powerful cloud instances that can handle even the largest LLMs. This is a great option for those who don't have the budget or space for dedicated hardware.

Q: Are there any open-source frameworks for running LLMs locally?

A: Yes! The open-source community is thriving, and several great frameworks exist for running LLMs locally. Popular options include:

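- llama.cpp: This framework is renowned for its speed and ability to run LLMs with minimal resources. The quantization formats in this article (Q8_0, Q4_0, Q4_K_M) are llama.cpp formats, and it supports both Apple Silicon (via Metal) and NVIDIA GPUs (via CUDA).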

- transformers: Hugging Face's library for working with a wide range of deep learning models, including LLMs.

Q: How can I get started with running LLMs on my own device?

A: Here are some resources to jumpstart your journey:

- Hugging Face: Hugging Face is a fantastic resource for finding pre-trained LLMs and learning about different models.

- Google Colab: Colab provides free GPU access, allowing you to experiment with LLMs without setting up your own hardware.

Keywords

Apple M1 Pro, NVIDIA RTX A6000, LLM, Large Language Model, Token Speed, Generation, Processing, Llama 2, Llama 3, Quantization, Q8, Q4, F16, Benchmark, Performance Analysis, GPU, CPU, Memory Bandwidth, Use Cases, Recommendation, Open Source, Frameworks, llama.cpp, transformers, Hugging Face, Google Colab.