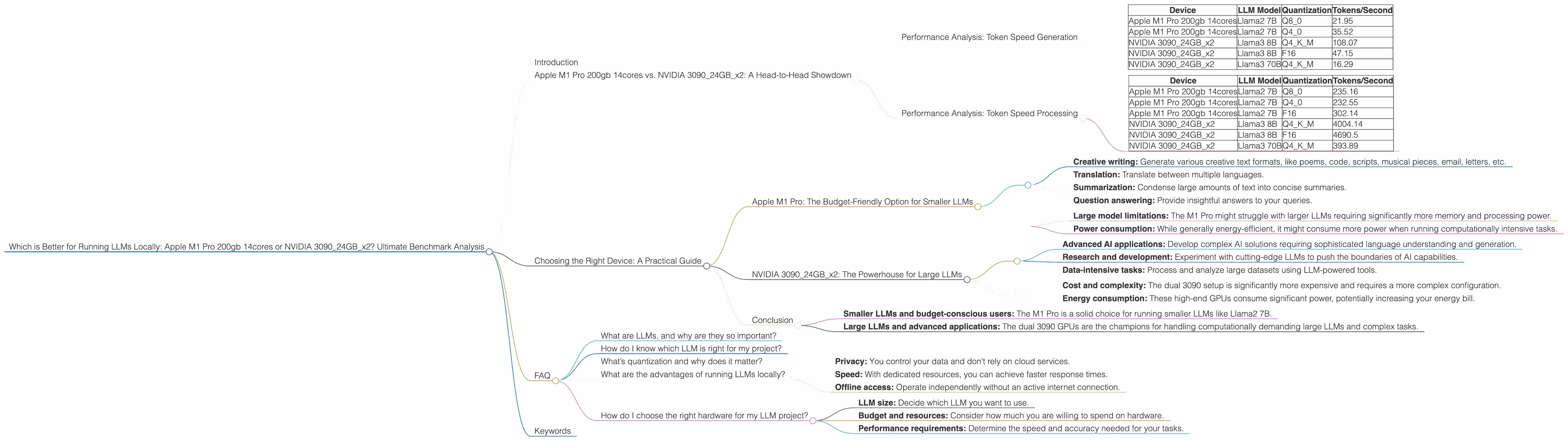

Which is Better for Running LLMs locally: Apple M1 Pro 200gb 14cores or NVIDIA 3090 24GB x2? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding! These powerful AI systems are revolutionizing how we interact with computers, from generating creative text to translating languages. But running LLMs locally can be a challenge, requiring powerful hardware capable of handling the massive computations involved.

This article dives deep into the performance of two popular devices for local LLM execution: the Apple M1 Pro chip with 200GB of memory and 14 cores, and dual NVIDIA 3090 GPUs with 24GB of memory each. We’ll compare their strengths and weaknesses, analyze their performance on various LLM models, and provide practical guidance for choosing the right setup for your needs.

Apple M1 Pro 200gb 14cores vs. NVIDIA 309024GBx2: A Head-to-Head Showdown

Performance Analysis: Token Speed Generation

Let’s start by comparing the token generation speeds of both devices for different LLM models. Here's a table summarizing the data:

| Device | LLM Model | Quantization | Tokens/Second |

|---|---|---|---|

| Apple M1 Pro 200gb 14cores | Llama2 7B | Q8_0 | 21.95 |

| Apple M1 Pro 200gb 14cores | Llama2 7B | Q4_0 | 35.52 |

| NVIDIA 309024GBx2 | Llama3 8B | Q4KM | 108.07 |

| NVIDIA 309024GBx2 | Llama3 8B | F16 | 47.15 |

| NVIDIA 309024GBx2 | Llama3 70B | Q4KM | 16.29 |

Key Observations:

- M1 Pro shines with smaller models: The Apple M1 Pro excels in token generation speed with the Llama2 7B model, particularly with Q4_0 quantization.

- NVIDIA 3090 triumphs with larger models: When it comes to larger models like Llama3 8B and 70B, the dual 3090 GPUs demonstrate superior performance.

- Quantization matters: Both devices exhibit a significant difference in token generation speeds depending on the quantization method used for the LLM.

Performance Analysis: Token Speed Processing

Now let's look at the processing speed of the devices, which is how quickly they can handle the internal calculations of the LLM.

| Device | LLM Model | Quantization | Tokens/Second |

|---|---|---|---|

| Apple M1 Pro 200gb 14cores | Llama2 7B | Q8_0 | 235.16 |

| Apple M1 Pro 200gb 14cores | Llama2 7B | Q4_0 | 232.55 |

| Apple M1 Pro 200gb 14cores | Llama2 7B | F16 | 302.14 |

| NVIDIA 309024GBx2 | Llama3 8B | Q4KM | 4004.14 |

| NVIDIA 309024GBx2 | Llama3 8B | F16 | 4690.5 |

| NVIDIA 309024GBx2 | Llama3 70B | Q4KM | 393.89 |

Interesting Insights:

- Processing vs. Generation: The M1 Pro exhibits a significant difference between processing and generation speeds, suggesting it may be more efficient at handling internal model calculations than producing text.

- NVIDIA's dominance in processing: The dual 3090 GPUs consistently outpace the M1 Pro in processing speed for both Llama3 8B and 70B models.

Choosing the Right Device: A Practical Guide

Apple M1 Pro: The Budget-Friendly Option for Smaller LLMs

The Apple M1 Pro offers a cost-effective way to run smaller LLMs locally. Its strong performance on the Llama2 7B model makes it suitable for tasks like:

- Creative writing: Generate various creative text formats, like poems, code, scripts, musical pieces, email, letters, etc.

- Translation: Translate between multiple languages.

- Summarization: Condense large amounts of text into concise summaries.

- Question answering: Provide insightful answers to your queries.

The M1 Pro’s limitations:

- Large model limitations: The M1 Pro might struggle with larger LLMs requiring significantly more memory and processing power.

- Power consumption: While generally energy-efficient, it might consume more power when running computationally intensive tasks.

NVIDIA 309024GBx2: The Powerhouse for Large LLMs

The dual NVIDIA 3090 GPUs are a powerful force for handling large LLMs. Their high processing and generation speeds make them ideal for:

- Advanced AI applications: Develop complex AI solutions requiring sophisticated language understanding and generation.

- Research and development: Experiment with cutting-edge LLMs to push the boundaries of AI capabilities.

- Data-intensive tasks: Process and analyze large datasets using LLM-powered tools.

The 3090’s considerations:

- Cost and complexity: The dual 3090 setup is significantly more expensive and requires a more complex configuration.

- Energy consumption: These high-end GPUs consume significant power, potentially increasing your energy bill.

Conclusion

The choice between Apple M1 Pro 200gb 14cores and NVIDIA 309024GBx2 depends on your specific needs:

- Smaller LLMs and budget-conscious users: The M1 Pro is a solid choice for running smaller LLMs like Llama2 7B.

- Large LLMs and advanced applications: The dual 3090 GPUs are the champions for handling computationally demanding large LLMs and complex tasks.

FAQ

What are LLMs, and why are they so important?

LLMs are a type of artificial intelligence that excels at understanding and generating human language. They can be used for a wide range of applications, from writing creative content to translating languages.

How do I know which LLM is right for my project?

The best LLM depends on your specific requirements. Smaller LLMs like Llama2 7B are more efficient for simple tasks, while larger LLMs like Llama3 8B and 70B are ideal for complex applications.

What’s quantization and why does it matter?

Quantization is a technique for reducing the size of LLM models, making them faster and more efficient. This is particularly important when running LLMs locally with limited resources.

What are the advantages of running LLMs locally?

Running LLMs locally offers several benefits:

- Privacy: You control your data and don’t rely on cloud services.

- Speed: With dedicated resources, you can achieve faster response times.

- Offline access: Operate independently without an active internet connection.

How do I choose the right hardware for my LLM project?

The choice depends on factors like:

- LLM size: Decide which LLM you want to use.

- Budget and resources: Consider how much you are willing to spend on hardware.

- Performance requirements: Determine the speed and accuracy needed for your tasks.

Keywords

LLM, Large Language Model, Apple M1 Pro, NVIDIA 3090, GPU, Token Speed, Generation, Processing, Quantization, Llama2, Llama3, Local Inference, Performance Benchmark, AI, Artificial Intelligence, Development, Research, Deep Learning, Machine Learning, Software, Hardware, Technology, Data Science, Cloud Computing