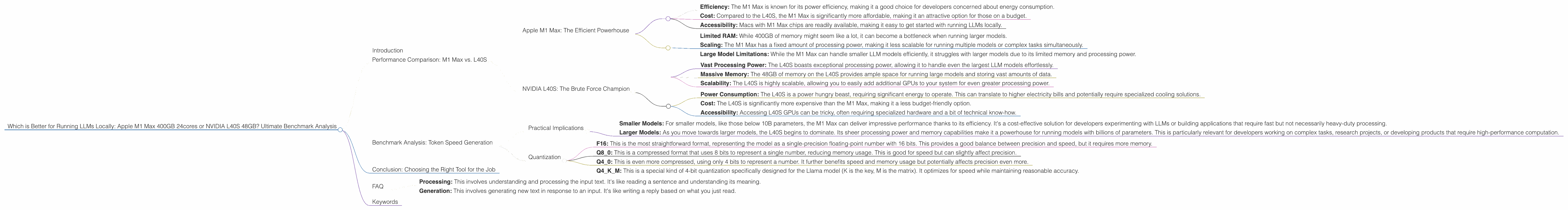

Which Is Better for Running LLMs Locally: Apple M1 Max (400 GB/s, 24-Core GPU) or NVIDIA L40S 48GB? Ultimate Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, with new models and applications emerging constantly. For developers and geeks who want to explore these powerful models, the question arises: how can you run them locally? This article dives into the performance differences between two popular devices for running LLMs locally: the Apple M1 Max (400 GB/s memory bandwidth, 24-core GPU) and the NVIDIA L40S 48GB. We'll analyze their strengths and weaknesses, compare their performance on several LLMs, and ultimately help you choose the best device for your needs.

Think of it this way: Imagine you want to build a super-fast race car. You have two engines to choose from: a powerful but fuel-efficient electric motor (Apple M1 Max) or a gas-guzzling behemoth with incredible horsepower (NVIDIA L40S). Each has its advantages and disadvantages, and your choice depends on what you want to achieve.

Performance Comparison: M1 Max vs. L40S

Apple M1 Max: The Efficient Powerhouse

Apple's M1 Max chip is a marvel of engineering, pairing a 10-core CPU with a 24-core or 32-core GPU and up to 64GB of unified memory at 400 GB/s of bandwidth. It's designed for efficiency, delivering impressive performance with relatively low power consumption. This makes the M1 Max a good fit for smaller LLMs, where responsiveness matters more than raw throughput.

Strengths:

- Efficiency: The M1 Max is known for its power efficiency, making it a good choice for developers concerned about energy consumption.

- Cost: Compared to the L40S, the M1 Max is significantly more affordable, making it an attractive option for those on a budget.

- Accessibility: Macs with M1 Max chips are readily available, making it easy to get started with running LLMs locally.

Weaknesses:

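- Limited RAM: The "400GB" in the device name refers to the chip's 400 GB/s memory bandwidth, not its capacity. The M1 Max tops out at 64GB of unified memory shared between the CPU and GPU, which can become a bottleneck when running larger models.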

- Scaling: The M1 Max has a fixed amount of processing power, making it less scalable for running multiple models or complex tasks simultaneously.

- Large Model Limitations: While the M1 Max can handle smaller LLM models efficiently, it struggles with larger models due to its limited memory and processing power.

NVIDIA L40S: The Brute Force Champion

The NVIDIA L40S is a powerhouse GPU designed for high-performance computing. It offers 48GB of dedicated memory and a large amount of processing power, making it well suited to demanding LLM workloads. It's like a Ferrari on the racetrack, capable of achieving incredible speeds but requiring more maintenance and resources.

Strengths:

- Vast Processing Power: The L40S boasts exceptional processing power, allowing it to run any model that fits in its memory at high speed.

- Massive Memory: The 48GB of memory on the L40S provides ample space for running large models and storing vast amounts of data.

- Scalability: The L40S is highly scalable, allowing you to easily add additional GPUs to your system for even greater processing power.

Weaknesses:

- Power Consumption: The L40S is a power-hungry beast, requiring significant energy to operate. This can translate to higher electricity bills and may require specialized cooling solutions.

- Cost: The L40S is significantly more expensive than the M1 Max, making it a less budget-friendly option.

- Accessibility: Accessing L40S GPUs can be tricky, often requiring specialized hardware and a bit of technical know-how.

Benchmark Analysis: Token Speed Generation

To understand the performance differences between these devices, let's look at some benchmark results. These benchmarks measure token throughput: how many tokens per second (TPS) each device can process or generate.
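
As a rough illustration of what a tokens-per-second figure means, the sketch below divides a token count by the elapsed wall-clock time. It is not the harness used for the benchmarks in this article; `fake_generate` is a hypothetical stand-in for a real model call, and the function names are made up for this example.

```python
import time
from typing import Callable, Sequence


def tokens_per_second(token_count: int, elapsed_seconds: float) -> float:
    """Throughput as tokens divided by wall-clock seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds


def measure_tps(run: Callable[[], Sequence[int]]) -> float:
    """Time one call to `run` and return its tokens-per-second rate."""
    start = time.perf_counter()
    tokens = run()
    elapsed = time.perf_counter() - start
    return tokens_per_second(len(tokens), elapsed)


def fake_generate() -> list:
    # Hypothetical stand-in for a model call: sleeps briefly, returns 50 token ids.
    time.sleep(0.05)
    return list(range(50))


if __name__ == "__main__":
    print(f"{measure_tps(fake_generate):.1f} tokens/s")
```

In practice you would replace `fake_generate` with a call into your inference runtime and average over several runs, since a single short measurement is noisy.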

| Model | Device | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|---|
| Llama 2 7B (F16) | M1 Max (24 Cores) | 453.03 | 22.55 |
| Llama 2 7B (F16) | M1 Max (32 Cores) | 599.53 | 23.03 |
| Llama 2 7B (Q8) | M1 Max (24 Cores) | 405.87 | 37.81 |
| Llama 2 7B (Q8) | M1 Max (32 Cores) | 537.37 | 40.20 |
| Llama 2 7B (Q4) | M1 Max (24 Cores) | 400.26 | 54.61 |
| Llama 2 7B (Q4) | M1 Max (32 Cores) | 530.06 | 61.19 |
| Llama 3 8B (Q4KM) | M1 Max (32 Cores) | 355.45 | 34.49 |
| Llama 3 8B (F16) | M1 Max (32 Cores) | 418.77 | 18.43 |
| Llama 3 8B (Q4KM) | L40S | 5908.52 | 113.6 |
| Llama 3 8B (F16) | L40S | 2491.65 | 43.42 |
| Llama 3 70B (Q4KM) | M1 Max (32 Cores) | 33.01 | 4.09 |
| Llama 3 70B (Q4KM) | L40S | 649.08 | 15.31 |

This table compares token speeds for the M1 Max (24-core and 32-core GPU variants) and the NVIDIA L40S 48GB across models and quantization formats.

Let's break down these numbers:

- Llama 2 7B: The table has no L40S result for this model, so it only shows how the M1 Max behaves on its own. Moving from 24 to 32 GPU cores raises processing speed by about 32% (453.03 to 599.53 tokens/second at F16) but barely changes F16 generation (22.55 vs. 23.03), while quantizing from F16 to Q4 more than doubles generation speed.

- Llama 3 8B: The L40S comes out on top for Llama 3 8B. At Q4KM it processes prompts about 16.6 times faster than the 32-core M1 Max (5908.52 vs. 355.45 tokens/second) and generates about 3.3 times faster (113.6 vs. 34.49). At F16 the gap narrows to roughly 6 times for processing and 2.4 times for generation.

- Llama 3 70B: The L40S again leads with Llama 3 70B (Q4KM), processing about 19.7 times faster (649.08 vs. 33.01 tokens/second) and generating about 3.7 times faster (15.31 vs. 4.09). At 4.09 tokens/second, the M1 Max is usable but slow for interactive work.
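
To put numbers on the gaps described above, here is a small sketch that computes L40S-over-M1-Max speedup ratios for the three configurations where the table has results for both devices. The figures are copied from the table; the dictionary layout and function name are just choices made for this example.

```python
# (processing tokens/s, generation tokens/s) copied from the benchmark table.
RESULTS = {
    ("Llama 3 8B", "Q4KM"): {"M1 Max (32 Cores)": (355.45, 34.49), "L40S": (5908.52, 113.6)},
    ("Llama 3 8B", "F16"): {"M1 Max (32 Cores)": (418.77, 18.43), "L40S": (2491.65, 43.42)},
    ("Llama 3 70B", "Q4KM"): {"M1 Max (32 Cores)": (33.01, 4.09), "L40S": (649.08, 15.31)},
}


def speedups(results):
    """Return {(model, quant): (processing ratio, generation ratio)}, L40S over M1 Max."""
    ratios = {}
    for key, devices in results.items():
        m1_proc, m1_gen = devices["M1 Max (32 Cores)"]
        l40_proc, l40_gen = devices["L40S"]
        ratios[key] = (l40_proc / m1_proc, l40_gen / m1_gen)
    return ratios


if __name__ == "__main__":
    for (model, quant), (proc, gen) in speedups(RESULTS).items():
        print(f"{model} {quant}: processing {proc:.1f}x, generation {gen:.1f}x")
```

The processing gap (roughly 6x to 20x) is much wider than the generation gap (roughly 2.4x to 3.7x), which is worth keeping in mind if your workload is dominated by long outputs rather than long prompts.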

Practical Implications

The above data reveals some key observations about the practical implications of using these devices for running LLMs locally:

- Smaller Models: For smaller models, like those below 10B parameters, the M1 Max can deliver impressive performance thanks to its efficiency. It's a cost-effective solution for developers experimenting with LLMs or building applications that require fast but not necessarily heavy-duty processing.

- Larger Models: As you move towards larger models, the L40S begins to dominate. Its sheer processing power and memory capabilities make it a powerhouse for running models with tens of billions of parameters. This is particularly relevant for developers working on complex tasks, research projects, or developing products that require high-performance computation.

Quantization

What are Q8_0, Q4_0, and Q4KM? (The table abbreviates the first two as Q8 and Q4.) These are types of quantization, a technique used to compress and optimize LLMs. It reduces the memory footprint of the model, allowing it to run faster at the cost of some precision. Imagine squeezing a large suitcase full of clothes into a smaller one - you might lose some details or even crease your favorite shirt, but you can now carry it more comfortably.

- F16: This is the unquantized baseline here, storing each weight as a 16-bit half-precision floating-point number. It preserves the most precision of the formats listed, but it requires the most memory and generates tokens the slowest.

- Q8_0: This is a compressed format that uses 8 bits to represent a single number, reducing memory usage. This is good for speed but can slightly affect precision.

- Q4_0: This is even more compressed, using only 4 bits to represent a number. It further benefits speed and memory usage but potentially affects precision even more.

- Q4KM: Usually written Q4_K_M, this is a 4-bit "k-quant" format from llama.cpp. The K marks the k-quant family and the M the medium-size variant, which trades a little extra memory for better accuracy than plain 4-bit quantization.
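
The idea behind these formats can be shown with a toy example. The sketch below quantizes one block of weights to small integers that share a single scale factor, then restores them and reports the worst round-trip error. It is a simplified illustration only: it does not reproduce the actual storage layout of Q8_0, Q4_0, or Q4KM, and the weight values are made up.

```python
WEIGHTS = [0.12, -0.5, 0.33, 0.9, -0.07, 0.0, 0.41, -0.88]  # made-up example values


def quantize_block(values, bits=8):
    """Symmetric quantization of one block: one shared scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the shared scale and the integers."""
    return [q * scale for q in quants]


def max_error(values, bits):
    """Largest absolute difference between original and restored values."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


if __name__ == "__main__":
    for bits in (8, 4):
        print(f"{bits}-bit max round-trip error: {max_error(WEIGHTS, bits):.4f}")
```

With fewer bits there are fewer integer levels to choose from, so the 4-bit round trip loses noticeably more detail than the 8-bit one. That is the creased shirt in the suitcase analogy.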

The results show how much quantization matters. On the 32-core M1 Max, Llama 2 7B generation rises from 23.03 tokens/second at F16 to 40.20 at Q8 and 61.19 at Q4, and the smaller formats also cut the model's memory footprint.
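
A back-of-the-envelope calculation helps explain why smaller formats generate faster. The sketch below estimates the size of a model's weights from a bits-per-weight figure, then divides memory bandwidth by that size to get a rough ceiling on generation speed. It rests on several assumptions: that the "400" in the M1 Max's name refers to 400 GB/s of memory bandwidth, that generation has to read every weight once per token, that a "7B" model has exactly 7 billion parameters, and that the bits-per-weight values below (which include a small per-block scale for the quantized formats) are close enough for illustration. It ignores the KV cache and activations.

```python
# Approximate bits stored per weight; illustrative assumptions, not measured values.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(params_billion: float, fmt: str) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


def generation_ceiling_tps(params_billion: float, fmt: str, bandwidth_gb_s: float) -> float:
    """Rough upper bound on tokens/s if each token requires reading every weight once."""
    return bandwidth_gb_s / weights_gb(params_billion, fmt)


if __name__ == "__main__":
    for fmt in BITS_PER_WEIGHT:
        size = weights_gb(7, fmt)
        ceiling = generation_ceiling_tps(7, fmt, bandwidth_gb_s=400)
        print(f"{fmt}: ~{size:.1f} GB, at most ~{ceiling:.0f} tokens/s at 400 GB/s")
```

Under these assumptions the measured M1 Max generation speeds for Llama 2 7B (23.03, 40.20, and 61.19 tokens/second) all sit below the estimated ceilings, which is consistent with generation being limited largely by how fast the weights can be read from memory.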

Conclusion: Choosing the Right Tool for the Job

So, which device is "better"? The answer is: it depends on your needs. If you're focusing on smaller models or prioritizing efficiency, the M1 Max is an excellent choice. It offers cost-effectiveness and accessibility, making it a good starting point for LLM exploration.

However, if you're working with larger models or require the ultimate processing power, the L40S is the clear winner. Its sheer performance and scalability make it ideal for demanding applications and research projects.

Ultimately, choosing between these devices comes down to your specific needs and budget. Consider the size and complexity of the models you'll be running, the performance levels you require, and the resources available to you.

FAQ

1. What are LLMs, and why are they so important?

LLMs are a type of artificial intelligence that can understand and generate human-like text. They've become increasingly important because they're used in various applications like chatbots, writing assistants, translation services, and code generation.

2. Can I run LLMs without specialized hardware?

You can, but it will be significantly slower and might not be feasible for larger models. The performance gains from specialized hardware like M1 Max or L40S become increasingly noticeable as you increase the size and complexity of the models you use.

3. What is the difference between processing and generation in the context of LLMs?

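- Processing: This is the prompt-processing phase, where the model reads the whole input before producing anything. Input tokens can be handled in parallel, which is why the processing speeds in the table are so much higher than the generation speeds. It's like reading a sentence and understanding its meaning.

- Generation: This involves producing new text in response to the input, one token at a time. It's like writing a reply based on what you just read.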
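
The two rates combine into an end-to-end response time: the prompt is read at the processing rate, then the reply is written at the generation rate. The sketch below applies that to the Llama 3 8B (Q4KM) figures from the benchmark table. The request size (a 1,000-token prompt and a 200-token reply) is a hypothetical example, and the estimate ignores model loading and other overhead.

```python
def response_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimated wall-clock time: read the prompt, then write the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 3 8B (Q4KM) rates from the benchmark table: (processing, generation) tokens/s.
RATES = {"M1 Max (32 Cores)": (355.45, 34.49), "L40S": (5908.52, 113.6)}

if __name__ == "__main__":
    # Hypothetical request: 1,000-token prompt, 200-token reply.
    for device, (proc, gen) in RATES.items():
        total = response_seconds(1000, 200, proc, gen)
        print(f"{device}: ~{total:.1f} s")
```

For this request the estimate comes to about 8.6 seconds on the M1 Max and about 1.9 seconds on the L40S. On both devices most of that time is generation, even though the prompt is five times longer than the reply.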

4. How do I choose the best device for my needs?

Consider the size of the models you'll be running, the performance levels you need, and your budget. The M1 Max is great for smaller models and efficient tasks, while the L40S is best for larger models and demanding workloads.

5. Is it possible to combine different devices for better performance?

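Yes! Splitting a single model across several devices is usually called model parallelism or multi-GPU inference (distributed training is the training-time counterpart). It lets you run models that don't fit in one device's memory, though it typically works best across identical GPUs rather than a mix such as a Mac and an NVIDIA card.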

Keywords

LLMs, Large Language Models, Apple M1 Max, NVIDIA L40S, Token Speed, Performance Benchmark, Quantization, F16, Q8, Q4, GPU, CPU, Local Inference, Processing, Generation, Model Size, Cost, Power Consumption, Efficiency, Scalability, Developers, Geeks, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP, Computer Vision, Data Science, Research, Innovation.