Which Is Better for Running LLMs Locally: Apple M1 (68 GB/s, 7 GPU Cores) or NVIDIA RTX 4000 Ada 20GB? Ultimate Benchmark Analysis

Introduction

Large Language Models (LLMs) are everywhere, but running them locally is still a challenge, and the hardware you pick matters. This article compares two devices: the Apple M1 (the 7-GPU-core variant, with roughly 68 GB/s of memory bandwidth) and the NVIDIA RTX 4000 Ada with 20GB of VRAM. We'll look at how quickly each one processes prompts and generates text across several LLMs and quantization levels, and help you decide which device fits your needs.

Performance Analysis of Apple M1 and NVIDIA RTX 4000 Ada for Running LLMs

This analysis covers the Apple M1 (7 GPU cores, roughly 68 GB/s of memory bandwidth) and the NVIDIA RTX 4000 Ada with 20GB of VRAM. The performance data comes from benchmarks published by developers including ggerganov and XiongjieDai.

Key Performance Metrics:

- Tokens per Second (Tokens/Second): How fast a device handles tokens, the units of text an LLM reads and writes. The tables report two figures: processing (how quickly the device reads the prompt) and generation (how quickly it produces new tokens). Higher numbers mean faster response times and smoother interactions.

- Model Size: How large an LLM a device can handle efficiently.

- Quantization: A technique that stores model weights at lower precision, which shrinks the model, reduces memory use, and usually speeds up generation. The benchmarks cover F16 (unquantized 16-bit weights) and the quantized formats Q8_0, Q4_0, and Q4_K_M.
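
As a rough sketch of why quantization matters for memory, the snippet below estimates the size of the weights alone for a nominal 7-billion-parameter model. The bits-per-weight values assume the llama.cpp block layouts for Q8_0 and Q4_0 (32 weights per block plus one 16-bit scale). The function name and the round 7e9 parameter count are illustrative assumptions, and real model files carry some overhead on top of this.

```python
# Approximate bits stored per weight for each format.
# Assumption: Q8_0 and Q4_0 pack 32 weights per block plus one 16-bit scale,
# which works out to 8.5 and 4.5 bits per weight respectively.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_footprint_gb(n_params: float, fmt: str) -> float:
    """Estimated size of the weights alone, in decimal gigabytes."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


n_params = 7e9  # nominal parameter count for a "7B" model (assumption)
for fmt in BITS_PER_WEIGHT:
    print(f"{fmt}: {weight_footprint_gb(n_params, fmt):.2f} GB")
```

Under these assumptions the weights shrink from about 14 GB at F16 to about 7.4 GB at Q8_0 and about 3.9 GB at Q4_0.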

Apple M1: A Solid Choice for Smaller LLMs

The Apple M1 is a powerful processor known for its energy efficiency and solid performance. It can handle smaller LLMs reasonably well, particularly when using quantization techniques.

Apple M1 Token Speed Generation

Table 1: Apple M1 Token Speed Performance (Tokens/Second)

| Model | Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 108.21 | 7.92 |
| Llama 2 7B | Q4_0 | 107.81 | 14.19 |
| Llama 3 8B | Q4_K_M | 87.26 | 9.72 |

Observations:

- Smaller Models: The M1 runs 7B to 8B models at usable speeds. On Llama 2 7B it processes prompts at about 108 tokens/second and generates 8 to 14 tokens/second, depending on quantization.

- Quantization Benefits: Moving Llama 2 7B from Q8_0 to Q4_0 nearly doubles generation speed (7.92 to 14.19 tokens/second) and reduces memory requirements. Processing speed is essentially unchanged (108.21 vs. 107.81 tokens/second).

- Generation Bottleneck: The M1 processes prompts far faster than it generates new tokens. The gap is widest at Q8_0, where generation drops to 7.92 tokens/second. The more aggressive Q4_0 quantization narrows it.

Summary: The Apple M1 is a capable device for running smaller LLMs like Llama 2 7B, and quantization gives it a decent generation speed. Generation remains its limitation, though: with the slightly larger Llama 3 8B at Q4_K_M it manages 9.72 tokens/second.
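
The effect of quantization on the M1 can be checked directly from the Llama 2 7B rows of Table 1. This is only a small sketch; the dictionary layout and variable names are illustrative, not part of any benchmark tool.

```python
# Llama 2 7B on the Apple M1, from Table 1:
# quantization -> (processing, generation) in tokens/second.
m1_llama2_7b = {
    "Q8_0": (108.21, 7.92),
    "Q4_0": (107.81, 14.19),
}

q8_processing, q8_generation = m1_llama2_7b["Q8_0"]
q4_processing, q4_generation = m1_llama2_7b["Q4_0"]

# Ratio of Q4_0 speed to Q8_0 speed for each phase.
processing_ratio = q4_processing / q8_processing
generation_ratio = q4_generation / q8_generation

print(f"processing: {processing_ratio:.2f}x, generation: {generation_ratio:.2f}x")
```

The ratios come out at roughly 1.00x for processing and 1.79x for generation, so in this data the benefit of the smaller format shows up in generation rather than in prompt processing.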

NVIDIA RTX 4000 Ada: A Powerhouse for Larger LLMs

The NVIDIA RTX 4000 Ada is a dedicated GPU designed for high-performance computing tasks. It's known for its raw processing power and is well suited to larger LLMs.

NVIDIA RTX 4000 Ada Token Speed Generation

Table 2: NVIDIA RTX 4000 Ada Token Speed Performance (Tokens/Second)

| Model | Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 2310.53 | 58.59 |
| Llama 3 8B | F16 | 2951.87 | 20.85 |

Observations:

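- Superior Processing: The RTX 4000 Ada processes prompts far faster than the M1. On Llama 3 8B at Q4_K_M it reaches 2310.53 tokens/second against the M1's 87.26, roughly 26 times faster.

- F16 Performance: F16 is unquantized 16-bit precision rather than a quantization level. It processes prompts faster than Q4_K_M (2951.87 vs. 2310.53 tokens/second) but generates much more slowly (20.85 vs. 58.59 tokens/second), most likely because each generated token requires reading a much larger set of weights from memory.

Summary: The RTX 4000 Ada is the clear winner in these benchmarks. On Llama 3 8B at Q4_K_M, the one configuration tested on both devices, it generates about six times faster than the M1 (58.59 vs. 9.72 tokens/second). No Llama 2 7B results are listed for the RTX 4000 Ada, so the two devices can't be compared directly on that model.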

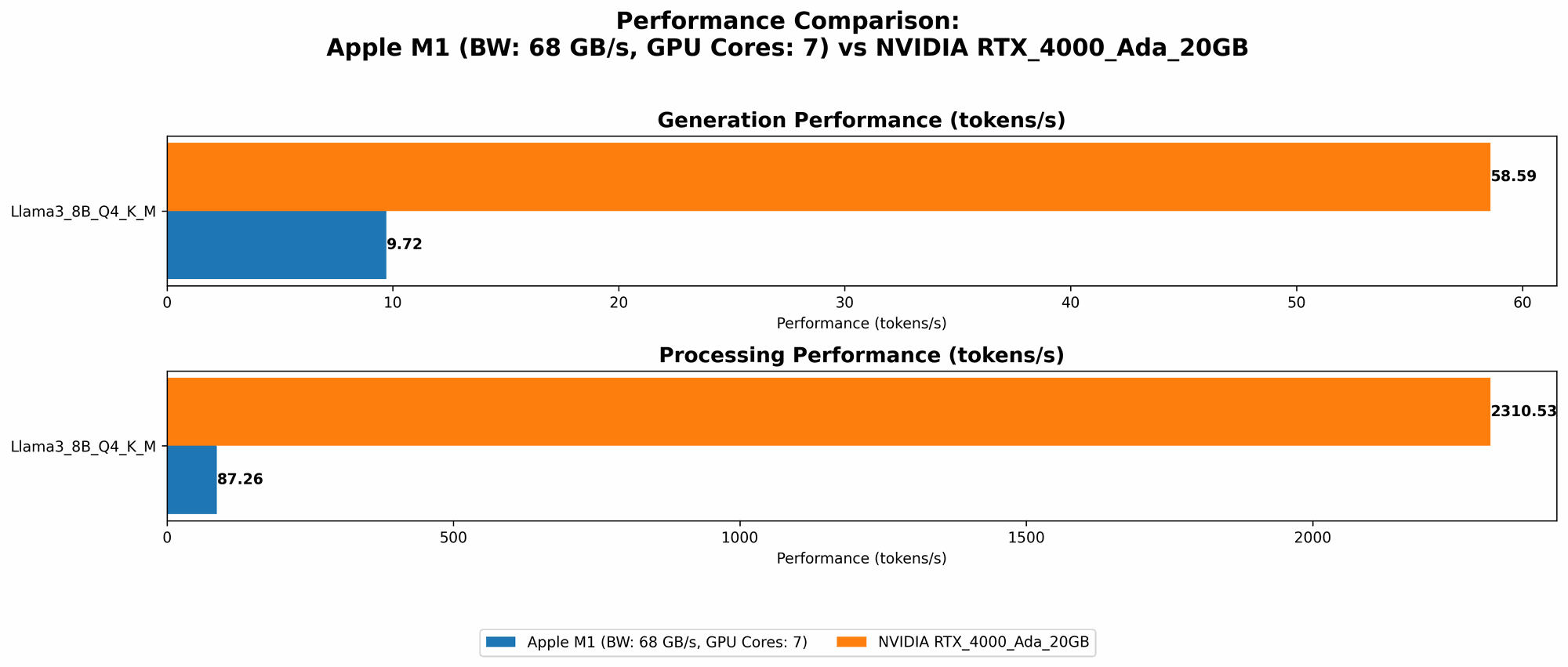

A Comparative Breakdown: Apple M1 vs. NVIDIA RTX 4000 Ada

Comparison of Apple M1 and NVIDIA RTX 4000 Ada for Processing LLMs

- Apple M1 excels in energy efficiency and is a cost-effective option, particularly for smaller LLMs and simple tasks.

- NVIDIA RTX 4000 Ada boasts superior raw processing power, making it the go-to device for large LLMs and complex tasks requiring high-speed computation.

Think of it this way: if processing LLMs is like driving a car, the M1 is like a fuel-efficient hatchback good for city driving, while the RTX 4000 Ada is a powerful sports car built for speed on the open highway.

Comparison of Apple M1 and NVIDIA RTX 4000 Ada for Generating Text from LLMs

- Apple M1 generates 8 to 14 tokens/second on Llama 2 7B and about 10 tokens/second on Llama 3 8B. Quantization helps noticeably: Q4_0 is nearly twice as fast as Q8_0.

- NVIDIA RTX 4000 Ada generates considerably faster on the one model tested on both devices: 58.59 tokens/second on Llama 3 8B at Q4_K_M, against 9.72 on the M1.

The M1 is like a steady jogger that keeps a conversational pace on small quantized models, while the RTX 4000 Ada is the sprinter, with enough VRAM to run an 8B model even at F16.
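
To put the tokens/second figures in everyday terms, the sketch below estimates how long one request would take on each device with Llama 3 8B at Q4_K_M, using the speeds from Tables 1 and 2. The workload (a 512-token prompt and a 256-token reply) and the function name are hypothetical, and the model ignores load time and other overhead.

```python
# (processing, generation) in tokens/second for Llama 3 8B Q4_K_M,
# taken from Tables 1 and 2.
SPEEDS = {
    "Apple M1": (87.26, 9.72),
    "NVIDIA RTX 4000 Ada": (2310.53, 58.59),
}


def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Time to read the prompt plus time to generate the reply, in seconds."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload: 512-token prompt, 256-token reply.
for device, (processing, generation) in SPEEDS.items():
    seconds = response_time_s(512, 256, processing, generation)
    print(f"{device}: {seconds:.1f} s")
```

For this workload the estimate is roughly 32 seconds on the M1 and under 5 seconds on the RTX 4000 Ada, with generation rather than prompt processing dominating on both.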

Practical Recommendations

- For developers working primarily with smaller LLMs like Llama 2 7B: The Apple M1 is a compelling option due to its cost-effectiveness and energy efficiency. Using quantization can further improve generation speed for these models.

- For users who need high-speed processing and fast text generation with larger LLMs like Llama 3 8B: The NVIDIA RTX 4000 Ada is the preferred choice. Its raw processing power makes it ideal for tackling computationally demanding tasks.

Conclusion

The choice between the Apple M1 and the NVIDIA RTX 4000 Ada ultimately depends on your specific needs and requirements. While the M1 shines in energy efficiency and handling smaller LLMs, the RTX 4000 Ada emerges as the champion for processing and generating text from larger models.

FAQ: Frequently Asked Questions

What are LLMs and how do they work?

LLMs are a type of artificial intelligence model trained on massive amounts of text data. They are designed to understand and generate human-like text. Think of them as incredibly sophisticated text prediction engines – they learn patterns and relationships from the data they are trained on and use this knowledge to generate coherent and contextually relevant text.

What is Quantization?

Quantization is a technique used to reduce the size of LLMs. It's like compressing a file, making it smaller without losing too much information. This compression allows for faster loading times, less memory usage, and potentially faster processing. Imagine you have a large book with many pages. You can compress it by reducing the number of words on each page, creating a smaller book that still contains most of the information. Quantization works similarly – it reduces the number of "bits" used to represent each number in the LLM, resulting in a smaller model.
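
The idea of using fewer bits per number can be sketched in a few lines. The example below applies a simple symmetric scheme to one small block of made-up weights: it stores one floating-point scale per block and rounds each weight to a small integer. This is a simplified illustration, not the exact layout of any particular quantization format, and the function names and sample values are hypothetical.

```python
def quantize_block(values, bits=8):
    """Symmetric quantization of one block: returns (scale, integer codes)."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax else 1.0  # avoid dividing by zero
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale, codes):
    """Recover approximate weights from the scale and integer codes."""
    return [c * scale for c in codes]


# Made-up weights for illustration only.
weights = [0.123, -0.534, 0.981, -0.072, 0.316, -1.27, 0.665, 0.018]

for bits in (8, 4):
    scale, codes = quantize_block(weights, bits)
    restored = dequantize_block(scale, codes)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit codes: {codes}  max error: {worst:.4f}")
```

Fewer bits mean a coarser grid of representable values, so the 4-bit version is smaller but reconstructs the weights less precisely than the 8-bit version.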

What are the limitations of running LLMs locally?

Running LLMs locally can be resource-intensive, requiring powerful hardware, especially for larger models. It can also consume significant memory and processing power, potentially affecting the performance of other applications running on your device.
