Which is Better for Running LLMs locally: Apple M1 68gb 7cores or NVIDIA A100 PCIe 80GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, with new models arriving at a rapid pace. These models are powerful, capable of generating impressive text, translating languages, and writing many kinds of creative content. But running them locally can be challenging because of their sheer computational demands.

This article will delve into the performance of two popular devices for running LLMs locally: the Apple M1 in its "68gb 7cores" configuration and the NVIDIA A100 PCIe with 80GB of memory. (The M1 label most likely refers to 68 GB/s of memory bandwidth and 7 GPU cores rather than 68GB of RAM, since the M1 tops out at 16GB of unified memory.) We'll compare their strengths and weaknesses, analyze their performance with various LLM models, and provide practical recommendations for your local LLM adventures. Buckle up, it's gonna be a wild ride!

Understanding LLM Performance Metrics

Before we dive into the comparison, let's clarify what we mean by "performance" when it comes to LLMs. The two main metrics we'll be focusing on throughout this article are:

- Processing speed (tokens/s): This measures how fast the device reads the text you give it, that is, the prompt, before it starts answering. A higher number means less waiting before the first word appears.

- Generation speed (tokens/s): This measures how fast the model produces new text, one token at a time. A higher number means the answer itself arrives faster.
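Both metrics boil down to the same arithmetic: a token count divided by elapsed time. Here's a minimal sketch of that calculation. The `fake_generate` function is a hypothetical stand-in for a real model call, not part of any library, so the rate it produces says nothing about real hardware.

```python
import time

def tokens_per_second(token_count, elapsed_seconds):
    """Throughput in tokens/s for a token count and a wall-clock duration."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds

def time_call(fn, *args):
    """Run fn(*args) and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start

def fake_generate(n_tokens):
    """Hypothetical stand-in for a model call: returns n_tokens dummy tokens."""
    return ["tok"] * n_tokens

tokens, elapsed = time_call(fake_generate, 128)
# Guard against a zero reading from the timer on very fast calls.
rate = tokens_per_second(len(tokens), max(elapsed, 1e-9))
print(f"{len(tokens)} tokens at {rate:.0f} tokens/s")
```

To get a processing speed you would time the prompt pass and divide by the prompt's token count; for a generation speed you would time the output pass and divide by the number of generated tokens.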

Comparison of Apple M1 68gb 7cores and NVIDIA A100 PCIe 80GB

Apple M1 Token Speed Generation: A Little Horse with a Big Heart

The Apple M1 is known for its energy efficiency and impressive performance for its size. But how does it handle the demanding world of LLMs? Let's check those numbers:

| Model | Quantization | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|---|
| Llama2 7B | Q8_0 | 108.21 | 7.92 |
| Llama2 7B | Q4_0 | 107.81 | 14.19 |
| Llama3 8B | Q4_K_M | 87.26 | 9.72 |

The Apple M1 holds its own with the Llama2 7B model, reaching a processing speed of around 108 tokens/s for both Q8_0 and Q4_0 quantization. Generation is much slower: 7.92 tokens/s for Q8_0 and 14.19 tokens/s for Q4_0, so dropping from 8-bit to 4-bit weights nearly doubles the output rate. The slightly larger Llama3 8B at Q4_K_M is slower on both counts, at 87.26 tokens/s for processing and 9.72 tokens/s for generation. Nothing bigger than 8B appears in the M1 results, so these numbers only show how it copes with small models.
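To get a feel for what these speeds mean in practice, you can turn them into a rough response time: prompt tokens divided by processing speed, plus output tokens divided by generation speed. The sketch below does that with the M1 figures from the table. The 512-token prompt and 256-token answer are a hypothetical workload chosen for illustration, and the estimate ignores model load time and any other overhead.

```python
# Speeds in tokens/s, copied from the Apple M1 table above.
M1_SPEEDS = {
    ("Llama2 7B", "Q8_0"): {"processing": 108.21, "generation": 7.92},
    ("Llama2 7B", "Q4_0"): {"processing": 107.81, "generation": 14.19},
    ("Llama3 8B", "Q4_K_M"): {"processing": 87.26, "generation": 9.72},
}

def response_seconds(speeds, prompt_tokens, output_tokens):
    """Rough time to read the prompt and then write the answer."""
    read_time = prompt_tokens / speeds["processing"]
    write_time = output_tokens / speeds["generation"]
    return read_time + write_time

# Hypothetical workload, not taken from the benchmark.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256

estimates = {
    key: response_seconds(speeds, PROMPT_TOKENS, OUTPUT_TOKENS)
    for key, speeds in M1_SPEEDS.items()
}
for (model, quant), seconds in estimates.items():
    print(f"{model} {quant}: about {seconds:.1f} s")
```

For a workload shaped like this one, most of the wait comes from generation rather than prompt processing, which is why the generation column matters so much for chat-style use.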

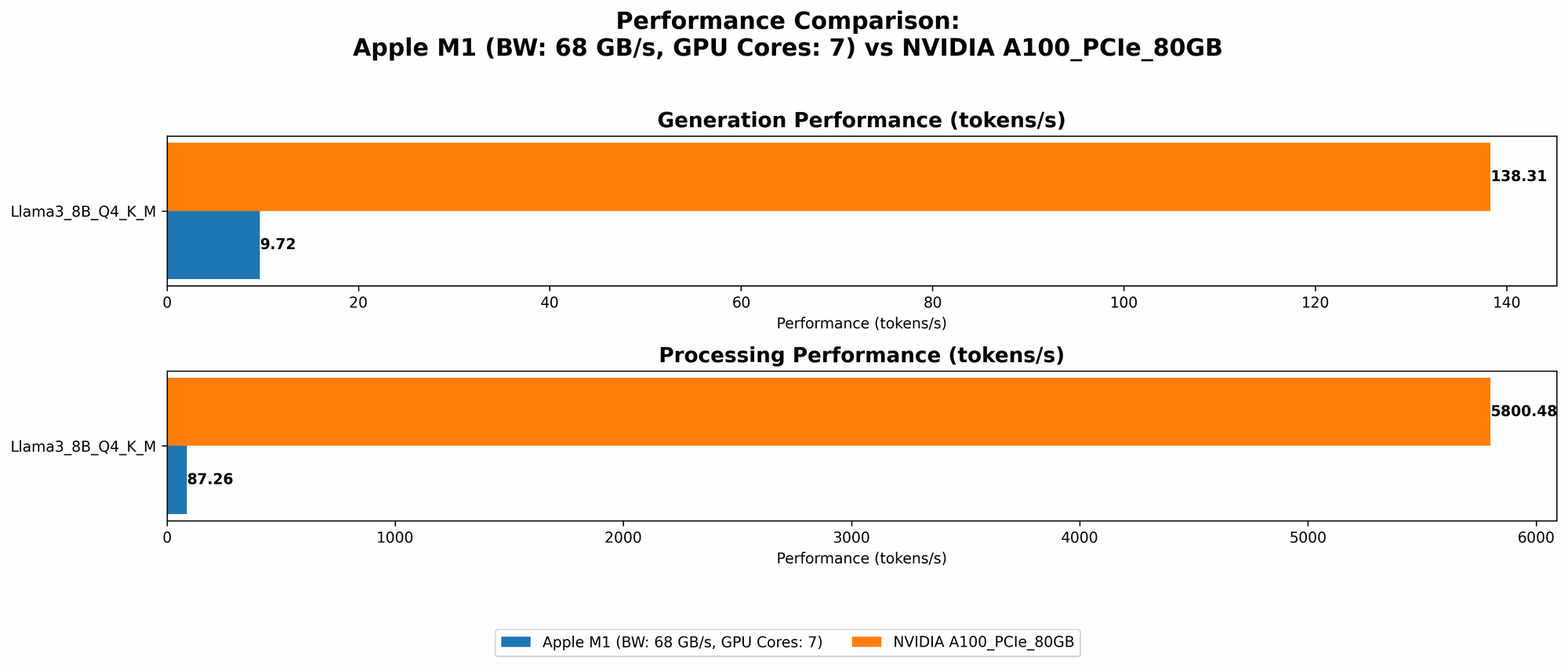

NVIDIA A100 PCIe 80GB: The Muscle of the LLM World

The NVIDIA A100, a powerhouse GPU, is designed for high-performance computing and is often the go-to choice for AI and machine learning tasks. Here's how it performs with different Llama models:

| Model | Quantization | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 5800.48 | 138.31 |
| Llama3 8B | F16 | 7504.24 | 54.56 |
| Llama3 70B | Q4_K_M | 726.65 | 22.11 |

The A100 showcases its raw power with far higher processing and generation speeds than the M1. With Llama3 8B, it reaches a processing speed of around 5800 tokens/s for Q4_K_M and 7504 tokens/s for F16. On the one configuration both devices were tested with, Llama3 8B at Q4_K_M, that is about 66 times the M1's 87.26 tokens/s. The generation gap is smaller but still large: 138.31 tokens/s against 9.72 tokens/s, roughly 14 times faster. At F16 the A100 generates 54.56 tokens/s. It also handles the much larger Llama3 70B at Q4_K_M, with 726.65 tokens/s for processing and 22.11 tokens/s for generation, both higher than the M1's best figures with the 7B models.
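The head-to-head comparison only works where both devices ran the same model and quantization, which here means Llama3 8B at Q4_K_M. A quick sketch of that arithmetic, using the numbers from the two tables:

```python
# Llama3 8B, Q4_K_M: the only configuration present in both tables.
M1 = {"processing": 87.26, "generation": 9.72}
A100 = {"processing": 5800.48, "generation": 138.31}

# Ratio of A100 speed to M1 speed for each metric.
speedup = {metric: A100[metric] / M1[metric] for metric in M1}

for metric, ratio in speedup.items():
    print(f"{metric}: A100 is about {ratio:.1f}x the M1")
```

The gap in processing speed is several times wider than the gap in generation speed, so the A100's advantage is biggest when prompts are long.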

Performance Analysis: Power vs. Portability

Apple M1: The Powerhouse of Portability

The Apple M1 shines when it comes to portability and power consumption. Its smaller size and lower power requirements make it ideal for running LLMs on the go. If you're working with smaller models and value portability, the Apple M1 is an excellent choice. It's like a nimble, well-trained athlete who can sprint with surprising speed, but may struggle with heavier lifting.

NVIDIA A100: The Ultimate LLM Performance Engine

The NVIDIA A100 is designed for maximum performance and is the clear winner when tackling larger and more complex LLM models. It's like a massive, powerful bulldozer, capable of moving mountains of data with ease. However, the A100 demands substantial power and comes with a hefty price tag, making it less suitable for mobile environments.

Practical Recommendations: Choosing the Right Device for Your Needs

So, how do you decide which device is right for you? Here's a quick breakdown:

- For smaller LLMs (like Llama2 7B) and portability: The Apple M1 is an excellent choice. It's energy-efficient, compact, and delivers decent performance for smaller models, generating roughly 8 to 14 tokens/s in the tests above. Think of it like a sleek, handy tool for everyday tasks.

- For larger LLMs (like Llama3 70B) and high-performance computing: The NVIDIA A100 is the clear pick. It's a beast designed for the heavier workloads of larger models, and it's the only device here with Llama3 70B results at all, generating 22.11 tokens/s at Q4_K_M. Think of it like a powerful workhorse for demanding projects.

The Future of Local LLM Computing

The landscape of local LLM computing is rapidly changing. New devices and advancements in hardware and software are constantly emerging, making it an exciting field to watch. We can expect to see further improvements in performance, energy efficiency, and accessibility, bringing LLM capabilities within reach of an even wider audience.

FAQ

What are LLMs and why are they so important?

LLMs are Large Language Models, powerful AI systems trained on massive amounts of text data. They can understand and generate human-like text, making them incredibly valuable for tasks like chatbots, language translation, and creative writing. They're like the "smartest" version of a text-based assistant.

What are the key differences between the Apple M1 and NVIDIA A100?

The Apple M1 is a system-on-a-chip (SoC) designed for power efficiency and general-purpose computing. The NVIDIA A100 is a high-performance GPU specifically designed for AI and machine learning tasks. The A100 is much more powerful but also larger, more expensive, and consumes more power.

What is quantization, and how does it affect LLM performance?

Quantization is a technique used to reduce the size of LLM models by representing numbers with fewer bits. This can significantly improve performance, especially on devices with limited memory, but can also lead to some loss of accuracy. It's like a "lossy compression" for LLMs, trading some detail for faster speeds.
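To make the "fewer bits" idea concrete, here's a minimal sketch of one simple scheme: take a small block of weights, store a single scale for the block, and round each weight to a 4-bit signed integer. This is a simplified illustration, not the exact Q4_0, Q8_0, or Q4_K_M formats used in the benchmarks, and the weight values are made up.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integer codes that share one scale per block."""
    qmax = 2 ** (bits - 1) - 1            # 7 for 4-bit codes
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes

def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]

# Made-up weights for illustration only.
weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.48, -0.02, 0.29]

scale, codes = quantize_block(weights, bits=4)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, at the cost of a rounding error of at most half the scale. That rounding error is the accuracy loss mentioned above.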

Can I run LLMs on my laptop?

The answer depends on your laptop's specifications. If you have a powerful GPU with enough memory, you may be able to run smaller LLM models effectively. However, running larger models might require a dedicated GPU or specialized hardware. It's like learning to play an instrument – you need the right tools to play the right music.
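A quick way to check the "enough memory" part is to estimate how much space the weights alone take: parameter count times bits per weight, divided by 8. The sketch below assumes 16 bits per weight for F16 and about 8.5 and 4.5 bits for Q8_0 and Q4_0, which corresponds to blocks of 32 weights sharing one 16-bit scale. It treats "7B" and "70B" as round parameter counts and ignores the context cache and runtime overhead, so consider the result a lower bound.

```python
# Assumed bits per weight, including the per-block scale for the quantized formats.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

def weight_gigabytes(parameter_count, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return parameter_count * bits_per_weight / 8 / 1e9

def weights_fit(parameter_count, quantization, memory_gigabytes):
    """True if the weights alone fit; real usage needs extra headroom."""
    needed = weight_gigabytes(parameter_count, BITS_PER_WEIGHT[quantization])
    return needed <= memory_gigabytes

for name, params in [("7B", 7e9), ("70B", 70e9)]:
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weight_gigabytes(params, bits)
        print(f"{name} {quant}: about {size:.1f} GB of weights")
```

By this estimate a 70B model at F16 needs more than the A100's 80GB for its weights alone, while a 4-bit version fits with room to spare.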

Keywords

LLMs, Apple M1, NVIDIA A100, performance, benchmark, generation speed, processing speed, quantization, portability, local computing, GPU, AI, machine learning, Llama2, Llama3, tokens per second, tokens/s, FAQ, keywords