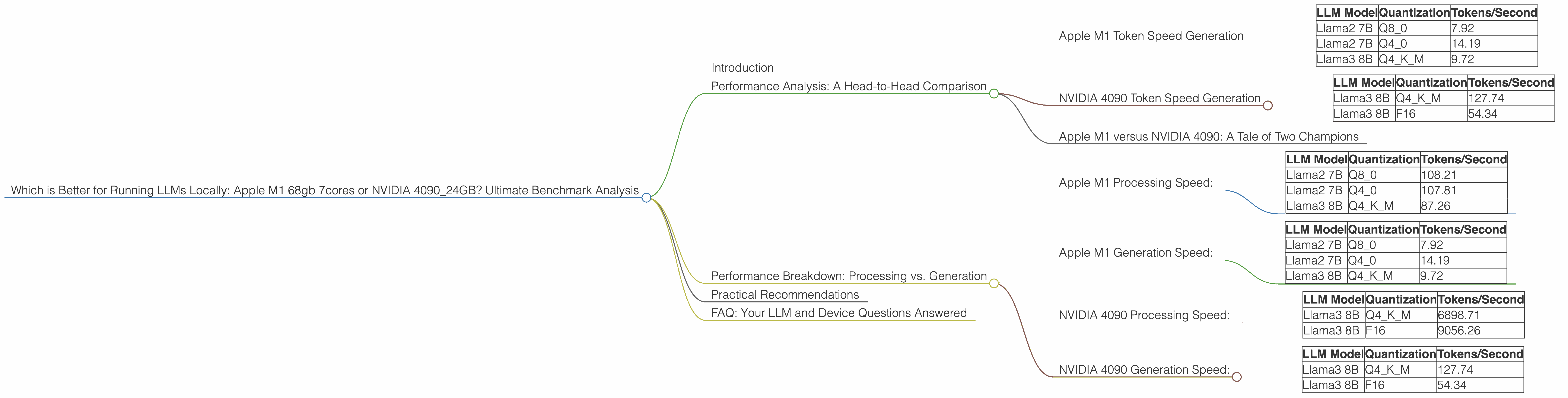

Which is Better for Running LLMs Locally: Apple M1 (7-Core GPU, 68 GB/s) or NVIDIA 4090 24GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is booming, capturing the imagination of developers and enthusiasts alike. But running these complex models locally can be a challenge, demanding powerful hardware to handle the massive computations. This article dives deep into the performance of two popular devices: the Apple M1 (the variant with a 7-core GPU and 68 GB/s of memory bandwidth) and the NVIDIA 4090 with 24 GB of VRAM. It benchmarks them against different LLM configurations to determine which is the better choice for local LLM execution.

Think of LLMs like incredibly smart robots that can understand and generate human-like text. They can translate languages, write poetry, and even generate code! But they require serious computing power to operate. This comparison serves as your roadmap to pick the best weapon for your LLM adventures, whether you're a researcher, developer, or simply a curious tech enthusiast.

Performance Analysis: A Head-to-Head Comparison

Apple M1 Token Generation Speed

The Apple M1 chip, known for its efficiency, handles smaller LLMs reasonably well, though at modest speeds.

| LLM Model | Quantization | Tokens/Second |
|---|---|---|
| Llama2 7B | Q8_0 | 7.92 |
| Llama2 7B | Q4_0 | 14.19 |
| Llama3 8B | Q4_K_M | 9.72 |

Observations:

- The Apple M1 delivers usable token generation speeds for smaller models like Llama2 7B and Llama3 8B, from 7.92 to 14.19 tokens per second depending on the model and quantization.

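- Quantization pays off: moving Llama2 7B from Q8_0 to Q4_0 raises generation speed from 7.92 to 14.19 tokens/s, about 1.8 times faster. Quantization stores the model's weights at lower precision (8 or 4 bits per weight here, instead of 16), so there is less data to read from memory for every generated token. Think of it like zipping a file to make it smaller, except that quantization is lossy: some precision is thrown away.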

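- The Apple M1 shines with its energy efficiency. These benchmarks don't measure power draw, but the M1 uses a small fraction of the RTX 4090's 450 W rated board power, which makes it a great option if you are working on a budget or want to reduce your energy use.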
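To make quantization concrete, here is a minimal sketch of symmetric block quantization, loosely modeled on llama.cpp's Q8_0 and Q4_0 formats, which group weights into blocks of 32 that share a single 16-bit scale. It is a simplification rather than the actual llama.cpp encoding, and the function names are hypothetical.

```python
import random

BLOCK_SIZE = 32  # assumed block size, matching the Q8_0/Q4_0 grouping


def quantize_block(values, bits):
    """Symmetric quantization: map floats to signed ints sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    # Round each value to the nearest integer step and clamp to the range.
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale, q):
    """Recover approximate float weights from the integers and the scale."""
    return [scale * x for x in q]


def bytes_per_block(bits):
    """Packed integer payload plus one 2-byte (16-bit) scale per block."""
    return BLOCK_SIZE * bits // 8 + 2


# Demo on a block of small random weights (illustrative data only).
random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
for bits in (8, 4):
    scale, q = quantize_block(weights, bits)
    restored = dequantize_block(scale, q)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: {bytes_per_block(bits)} bytes/block "
          f"(F16 would be {BLOCK_SIZE * 2}), max error {max_err:.6f}")
```

The 4-bit block takes 18 bytes instead of 34 (or 64 at F16) but rounds each weight more coarsely, which is the same size-versus-accuracy trade-off behind the speed gains in the table.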

NVIDIA 4090 Token Generation Speed

The NVIDIA 4090, a top-of-the-line consumer graphics processing unit (GPU), is the heavyweight champion for raw speed, although its 24 GB of VRAM limits how large a model it can hold.

| LLM Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 127.74 |
| Llama3 8B | F16 | 54.34 |

Observations:

- The NVIDIA 4090 delivers blazing-fast token generation: 127.74 tokens/s on Llama3 8B at Q4_K_M, about 13 times the Apple M1's 9.72 tokens/s on the same model and quantization.

- Even at F16 (16-bit half precision, i.e., unquantized weights), it reaches an impressive 54.34 tokens/s, although Q4_K_M is about 2.4 times faster.

- The NVIDIA 4090 is a powerhouse for demanding LLM tasks. If speed is your priority, this is the card to choose.

Apple M1 versus NVIDIA 4090: A Tale of Two Champions

For smaller LLMs (like Llama2 7B), the Apple M1 offers a solid balance of performance and efficiency. Because quantization nearly doubles its generation speed (Q4_0 versus Q8_0), it is a viable option for developers focused on optimizing resource usage.

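For bigger models and heavier workloads, the NVIDIA 4090 reigns supreme: on Llama3 8B it is roughly 13 times faster at generation and 79 times faster at prompt processing than the M1. Note that these benchmarks include no 70B results, and a 70B model would not fit in the 4090's 24 GB of VRAM even at 4-bit quantization.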

Here's a simple analogy: Imagine needing to move a couch. A strong but smaller person (Apple M1) could handle a single-seater couch just fine, while a massive, powerful person (NVIDIA 4090) could effortlessly lift a gigantic six-seater sofa.
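Whether a model fits in memory is mostly arithmetic: parameter count times bits per weight. The sketch below is a rough estimate under stated assumptions: 8.03 billion parameters for Llama3 8B, 70 billion for a 70B model, about 4.5 bits per weight for a 4-bit format such as Q4_0 (4-bit values plus a shared 16-bit scale per block of 32), and 16 bits for F16. It counts weights only; the KV cache and runtime overhead need extra memory on top.

```python
def weights_gb(params_billion, bits_per_weight):
    """Approximate weight size: billions of parameters x bytes per parameter = GB."""
    return params_billion * bits_per_weight / 8


def fits_in_memory(params_billion, bits_per_weight, memory_gb):
    """True if the weights alone fit; KV cache and runtime overhead are ignored."""
    return weights_gb(params_billion, bits_per_weight) <= memory_gb


RTX_4090_VRAM_GB = 24

# Parameter counts and bits/weight are approximate, illustrative values.
CANDIDATES = [
    ("Llama3 8B at F16", 8.03, 16.0),
    ("Llama3 8B at ~4.5 bits/weight", 8.03, 4.5),
    ("70B model at ~4.5 bits/weight", 70.0, 4.5),
]

for name, params, bpw in CANDIDATES:
    size = weights_gb(params, bpw)
    verdict = "fits" if fits_in_memory(params, bpw, RTX_4090_VRAM_GB) else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {RTX_4090_VRAM_GB} GB")
```

A 70B model needs roughly 39 GB for its weights even at about 4.5 bits per weight, well beyond 24 GB, while Llama3 8B fits comfortably even at F16.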

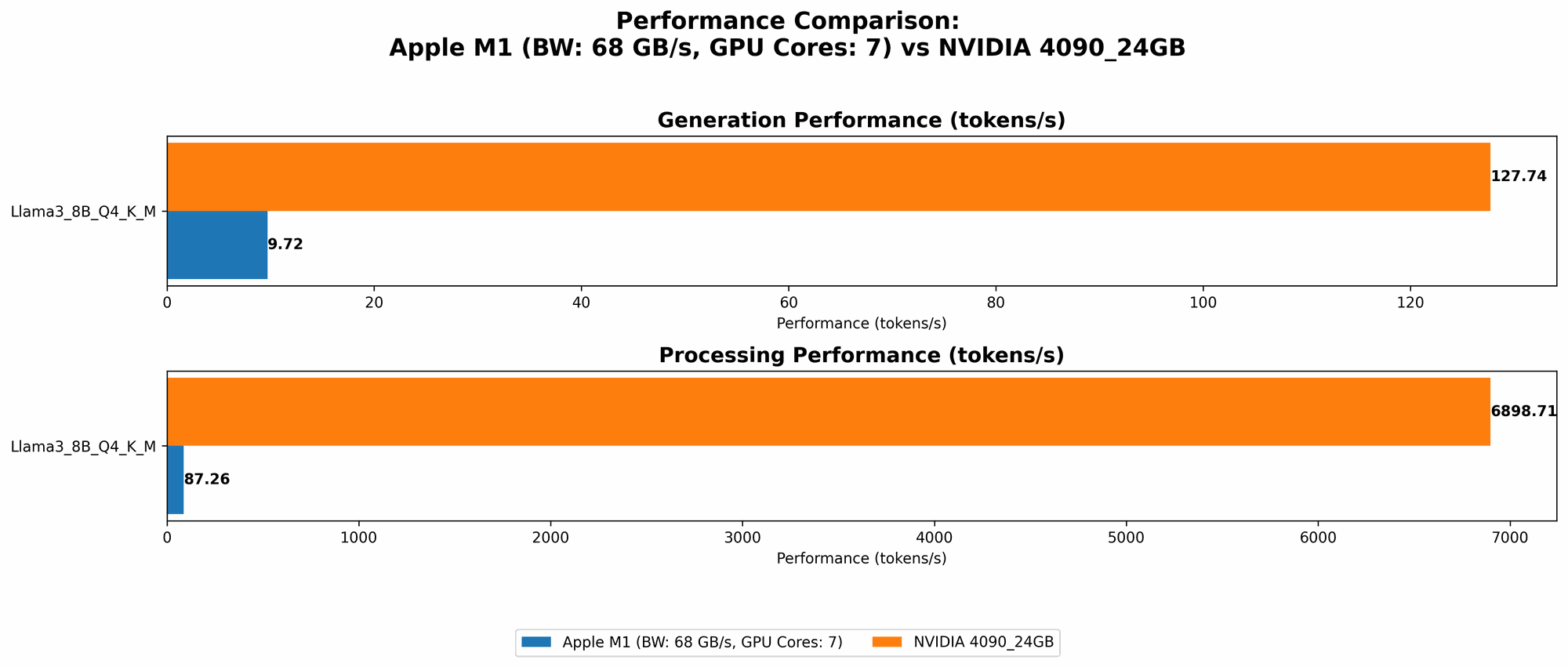

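Performance Breakdown: Processing vs. Generation

"Processing" here means prompt processing: reading in the input prompt, where all of the prompt's tokens can be computed in parallel. "Generation" means producing the response one token at a time, since each new token depends on the ones before it.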

Apple M1 Processing Speed:

| LLM Model | Quantization | Tokens/Second |
|---|---|---|
| Llama2 7B | Q8_0 | 108.21 |
| Llama2 7B | Q4_0 | 107.81 |
| Llama3 8B | Q4_K_M | 87.26 |

Apple M1 Generation Speed:

| LLM Model | Quantization | Tokens/Second |
|---|---|---|
| Llama2 7B | Q8_0 | 7.92 |
| Llama2 7B | Q4_0 | 14.19 |
| Llama3 8B | Q4_K_M | 9.72 |

NVIDIA 4090 Processing Speed:

| LLM Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 6898.71 |
| Llama3 8B | F16 | 9056.26 |

NVIDIA 4090 Generation Speed:

| LLM Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 127.74 |
| Llama3 8B | F16 | 54.34 |
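Why is prompt processing so much faster than generation on both devices? A simplified roofline-style calculation helps: it counts floating-point operations per byte of weights loaded from memory. This sketch ignores attention, the KV cache, and real kernel behavior, and its peak-compute and bandwidth figures are hypothetical placeholders, not specifications of the M1 or the 4090.

```python
def flops_per_weight_byte(tokens_per_pass, bytes_per_weight):
    """Arithmetic intensity of multiplying one weight matrix by a batch of tokens.

    A (d_out x d_in) matrix applied to `tokens_per_pass` vectors costs
    2 * tokens * d_in * d_out FLOPs (a multiply and an add per weight per
    token), while the weights are read once: d_in * d_out * bytes_per_weight
    bytes. The matrix dimensions cancel out.
    """
    return 2 * tokens_per_pass / bytes_per_weight


def limiting_resource(tokens_per_pass, bytes_per_weight, peak_flops, bandwidth_bytes_s):
    """Simple roofline test: compute-bound once intensity exceeds machine balance."""
    machine_balance = peak_flops / bandwidth_bytes_s  # FLOPs per byte loaded
    intensity = flops_per_weight_byte(tokens_per_pass, bytes_per_weight)
    return "compute" if intensity > machine_balance else "memory"


# Placeholder hardware numbers (hypothetical, not specs of either device).
PEAK_FLOPS = 100e12  # 100 TFLOPS
BANDWIDTH = 1e12     # 1 TB/s

# F16 weights (2 bytes each): one generated token vs. a 512-token prompt.
for label, tokens in [("generation, 1 token", 1), ("prompt processing, 512 tokens", 512)]:
    print(label, "->", limiting_resource(tokens, 2, PEAK_FLOPS, BANDWIDTH), "bound")
```

With one token per pass, each loaded weight byte supports only about one FLOP, so the chip mostly waits on memory; a 512-token prompt reuses every loaded weight 512 times, letting the compute units do the work. That is why the processing speeds in the tables are many times higher than the generation speeds.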

Key Takeaways:

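- Prompt Processing: The NVIDIA 4090 absolutely dominates, clocking in at thousands of tokens per second. On Llama3 8B at Q4_K_M it processes 6,898.71 tokens/s versus 87.26 on the Apple M1, roughly 79 times faster. Think of it as a sprinter versus a leisurely stroll. (The 4090 also processes prompts faster at F16 than at Q4_K_M: 9,056.26 versus 6,898.71 tokens/s.)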

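- Generation Speed: The NVIDIA 4090's advantage here is less dramatic: about 13 times the Apple M1 on Llama3 8B Q4_K_M (127.74 versus 9.72 tokens/s). Because each generated token requires reading the model's weights from memory, generation is limited mainly by memory bandwidth, and the bandwidth gap (1,008 GB/s versus 68.25 GB/s, about 15x) is much smaller than the compute gap. The Apple M1 still achieves respectable generation speeds, up to 14.19 tokens/s with Llama2 7B at Q4_0.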
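Generation speed is largely limited by memory bandwidth: producing each token means reading essentially all of the model's weights, so bandwidth divided by model size gives an approximate ceiling on tokens per second. This back-of-the-envelope sketch assumes 68.25 GB/s for the base M1 and 1,008 GB/s for the RTX 4090, parameter counts of 6.74 billion (Llama2 7B) and 8.03 billion (Llama3 8B), and approximate bits per weight for each format; the 4.9 figure for Q4_K_M in particular is an estimate. It ignores the KV cache and compute time, so real speeds should land below the ceiling.

```python
# Rough ceiling on generation speed: producing each token requires reading
# (nearly) all model weights from memory once, so
#     tokens/s <= memory bandwidth / size of the weights.
# Bits-per-weight values are approximations; 4.9 for Q4_K_M is an estimate.


def max_tokens_per_second(bandwidth_gb_s, params_billion, bits_per_weight):
    """Upper bound on tokens/s from memory bandwidth alone (KV cache ignored)."""
    weights_gb = params_billion * bits_per_weight / 8  # billions of bytes = GB
    return bandwidth_gb_s / weights_gb


M1_BANDWIDTH = 68.25       # GB/s, base Apple M1 unified memory
RTX_4090_BANDWIDTH = 1008  # GB/s, RTX 4090 GDDR6X

# (label, bandwidth GB/s, parameters in billions, approx. bits/weight,
#  measured generation tokens/s from the tables in this article)
ROWS = [
    ("M1, Llama2 7B Q8_0", M1_BANDWIDTH, 6.74, 8.5, 7.92),
    ("M1, Llama2 7B Q4_0", M1_BANDWIDTH, 6.74, 4.5, 14.19),
    ("M1, Llama3 8B Q4_K_M", M1_BANDWIDTH, 8.03, 4.9, 9.72),
    ("4090, Llama3 8B Q4_K_M", RTX_4090_BANDWIDTH, 8.03, 4.9, 127.74),
    ("4090, Llama3 8B F16", RTX_4090_BANDWIDTH, 8.03, 16.0, 54.34),
]

for label, bandwidth, params, bpw, measured in ROWS:
    ceiling = max_tokens_per_second(bandwidth, params, bpw)
    print(f"{label}: ceiling ~{ceiling:.1f} tok/s, measured {measured} "
          f"({measured / ceiling:.0%} of ceiling)")
```

Every measured speed in the tables lands below its estimated ceiling, at roughly 60 to 90 percent of it, and the 4090's bandwidth advantage (about 15x) is close to its measured generation lead on Llama3 8B Q4_K_M (about 13x).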

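What it means for you: If your work feeds the model long inputs (summarizing documents, chatting over a large codebase, retrieval-augmented generation), the NVIDIA 4090's huge prompt-processing lead makes it the obvious choice. If you mostly send short prompts and can wait a little for replies, the Apple M1's roughly 10 to 14 tokens per second with 4-bit 7B/8B models may be sufficient. Keep in mind that these are inference benchmarks; they say nothing directly about training.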
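To see what these rates mean for a single request, you can estimate response time as prompt length divided by the processing rate, plus output length divided by the generation rate. The sketch below uses the Llama3 8B Q4_K_M rates from the tables; the 2,000-token prompt and 500-token reply are hypothetical, and the estimate ignores model loading time and the way real speeds shift with context length.

```python
def response_time_s(prompt_tokens, output_tokens, pp_tok_s, tg_tok_s):
    """Estimate (time to first token, total time) in seconds.

    The prompt is processed at the prompt-processing rate, then output
    tokens are produced one by one at the generation rate.
    """
    first_token = prompt_tokens / pp_tok_s
    total = first_token + output_tokens / tg_tok_s
    return first_token, total


# Llama3 8B Q4_K_M rates from the benchmark tables; workload sizes are hypothetical.
for device, pp, tg in [("Apple M1", 87.26, 9.72), ("NVIDIA 4090", 6898.71, 127.74)]:
    ttft, total = response_time_s(2000, 500, pp, tg)
    print(f"{device}: first token after {ttft:.1f} s, full reply after {total:.1f} s")
```

Under these assumptions the M1 needs over a minute for the full reply versus about four seconds on the 4090, and the gap in time to first token is wider still.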

Practical Recommendations

Scenario 1: Budget-Conscious Developer

If you are starting out with LLMs and are mindful of your budget, the Apple M1 is an excellent starting point. It can handle smaller models efficiently, allowing you to experiment and learn without breaking the bank.

Scenario 2: Research and Development

For serious research and development, the NVIDIA 4090 is the powerhouse that can handle demanding tasks. It's well suited to fast experimentation, fine-tuning smaller models, and exploring advanced architectures, though its 24 GB of VRAM still limits the size of models it can hold.

Scenario 3: Real-Time Applications

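If your goal is to build real-time, latency-sensitive applications powered by LLMs, the NVIDIA 4090 is the better fit: on the same model it generates tokens about 13 times faster and processes prompts about 79 times faster than the M1. The M1's efficiency matters more for low-power, always-on setups where slower responses are acceptable.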

Scenario 4: Gaming Enthusiasts

The Apple M1 can run some games, but the NVIDIA 4090 is the undisputed champion here. It's designed for high-end gaming and can handle even the most demanding games with ease.

The Bottom Line: The best device for you depends on your specific needs and budget. The Apple M1 is an excellent value option for smaller models, while the NVIDIA 4090 is the ultimate choice for the most demanding LLM tasks.

FAQ: Your LLM and Device Questions Answered

Q1: What is quantization?

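Quantization reduces the size of an LLM by storing its weights at lower numeric precision, for example 8 or 4 bits per weight instead of 16 (the Q8_0, Q4_0, and Q4_K_M formats above). It's like saving a photo as a more compressed JPEG: the file gets much smaller and loads faster, at the cost of a small loss in quality.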

Q2: How do I choose the right device?

Consider your budget, the size and type of LLM you're working with, and the specific tasks you want to perform. If budget is a constraint and your tasks involve smaller models, the Apple M1 is a great option. If speed and power are your priorities, go for the NVIDIA 4090.

Q3: Can I run LLMs locally on my laptop?

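Yes, you can! The Apple M1 ships in laptops such as the MacBook Air and MacBook Pro. NVIDIA also sells an "RTX 4090 Laptop GPU", but it is a different, smaller chip with 16 GB of VRAM rather than 24 GB, so expect noticeably lower performance than the desktop card and less room for large models. Laptop thermal and power limits reduce performance further.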

Q4: What about other GPUs?

While the NVIDIA 4090 is the top-of-the-line consumer GPU, other options like the RTX 3090 (which also has 24 GB of VRAM) or the RTX 4080 (16 GB) can still offer good performance for LLMs.

Q5: Are there other devices I should consider?

Yes! Several other options are available, including cloud computing platforms like Google Colab and Amazon SageMaker. These platforms provide access to powerful hardware and allow you to scale your LLM workloads effectively.
