Which is Better for Running LLMs Locally: Apple M1 (68 GB/s, 7-Core GPU) or NVIDIA 3080 Ti 12GB? Ultimate Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, and with it, the need for powerful hardware to run these complex models locally. Two popular contenders for this task are the Apple M1 chip and the NVIDIA 3080 Ti graphics card. Both offer impressive processing power, but which one reigns supreme for running LLMs locally?

This article dives deep into a benchmark analysis comparing an Apple M1 chip with 68 GB/s of memory bandwidth and a 7-core GPU against an NVIDIA 3080 Ti with 12GB of dedicated memory. We'll explore the strengths and weaknesses of each device and analyze their token generation speed across several LLMs. We'll then give clear recommendations for which device best suits different use cases.

Buckle up – it's time to see who takes the crown in the ultimate LLM local showdown!

Understanding LLMs and Tokenization

Before we dive into the benchmark analysis, let's quickly unpack what LLMs are and how they work.

LLMs are machine learning models trained on massive amounts of text data, which enables them to understand and generate human-like text. They can translate languages, write various kinds of creative content, and even answer your questions in detail.

Tokenization is a key step in LLM processing. It breaks text into smaller units (tokens) that the model can understand and process. Think of tokens as the building blocks of language for LLMs. For instance, the sentence "I love running" could be tokenized into four tokens: "I", "love", "running", and a special end-of-sequence token. The exact split depends on the model's tokenizer, which usually works with subword pieces rather than whole words.
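To make the mapping from text to tokens concrete, here is a toy word-level tokenizer in Python. It is a simplified sketch, not how production LLMs tokenize. Real tokenizers such as BPE split text into subword pieces. The `<eos>` token name and the helper functions are hypothetical and exist only for illustration.

```python
# Toy word-level tokenizer: maps each word to an integer ID and appends an
# end-of-sequence marker. Real LLM tokenizers use subword pieces instead;
# this only illustrates the text -> token ID step.

EOS = "<eos>"  # hypothetical name for the end-of-sequence token


def build_vocab(corpus: list[str]) -> dict[str, int]:
    """Assign an integer ID to every distinct word, reserving 0 for EOS."""
    vocab = {EOS: 0}
    for sentence in corpus:
        for word in sentence.split():
            if word not in vocab:
                vocab[word] = len(vocab)
    return vocab


def tokenize(text: str, vocab: dict[str, int]) -> list[int]:
    """Convert text to token IDs, ending with the EOS token.

    Words missing from the vocabulary raise KeyError; subword tokenizers
    avoid this by falling back to smaller pieces.
    """
    return [vocab[word] for word in text.split()] + [vocab[EOS]]


vocab = build_vocab(["I love running", "I love reading"])
print(tokenize("I love running", vocab))  # [1, 2, 3, 0]
```

The sentence becomes four IDs: three words plus the end marker. This matches the four-token example in the text.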

Tokenization is the first step of LLM inference, which is the process of feeding an input to an LLM and getting a response back. The benchmarks below measure inference speed in tokens per second.
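The benchmark tables report two speeds, processing and generation, because inference runs in two phases. The minimal Python sketch below illustrates them. It assumes a hypothetical `toy_forward` function that stands in for a real model.

- **Processing:** the prompt is handled in one batched pass.
- **Generation:** each new token needs its own sequential pass, because it depends on the token before it.

That sequential dependency is why processing throughput is typically much higher than generation throughput.

```python
# Sketch of the two inference phases reported in the benchmark tables.
# `toy_forward` is a hypothetical stand-in for a real model: given the
# tokens seen so far, it returns the next token ID.


def toy_forward(tokens: list[int]) -> int:
    """Hypothetical model: predicts (last token + 1) modulo a tiny vocab."""
    return (tokens[-1] + 1) % 10


def generate(prompt: list[int], max_new_tokens: int) -> tuple[list[int], int]:
    """Return the generated tokens and the number of sequential model calls.

    Assumes max_new_tokens >= 1.
    """
    context = list(prompt)
    # Processing ("prefill"): the whole prompt is available up front, so one
    # pass over it yields the first new token, however long the prompt is.
    output = [toy_forward(context)]
    calls = 1
    # Generation ("decode"): every further token depends on the previous
    # one, so each needs its own pass.
    while len(output) < max_new_tokens:
        context.append(output[-1])
        output.append(toy_forward(context))
        calls += 1
    return output, calls


print(generate([3, 4, 5], 4))  # ([6, 7, 8, 9], 4)
```

A three-token prompt costs one call, while four generated tokens cost four calls.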

Comparison of Apple M1 and NVIDIA 3080 Ti for LLM Inference

Apple M1 Token Generation Speed

The Apple M1 chip, known for its impressive power efficiency and speed, offers a compelling option for running LLMs locally.

Here's a closer look at its token generation speed for various models:

| Model | Model Size | Quantization | Tokens/second (Processing) | Tokens/second (Generation) |

|---|---|---|---|---|

| Llama 2 7B | 7 billion | Q8_0 | 108.21 | 7.92 |

| Llama 2 7B | 7 billion | Q4_0 | 107.81 | 14.19 |

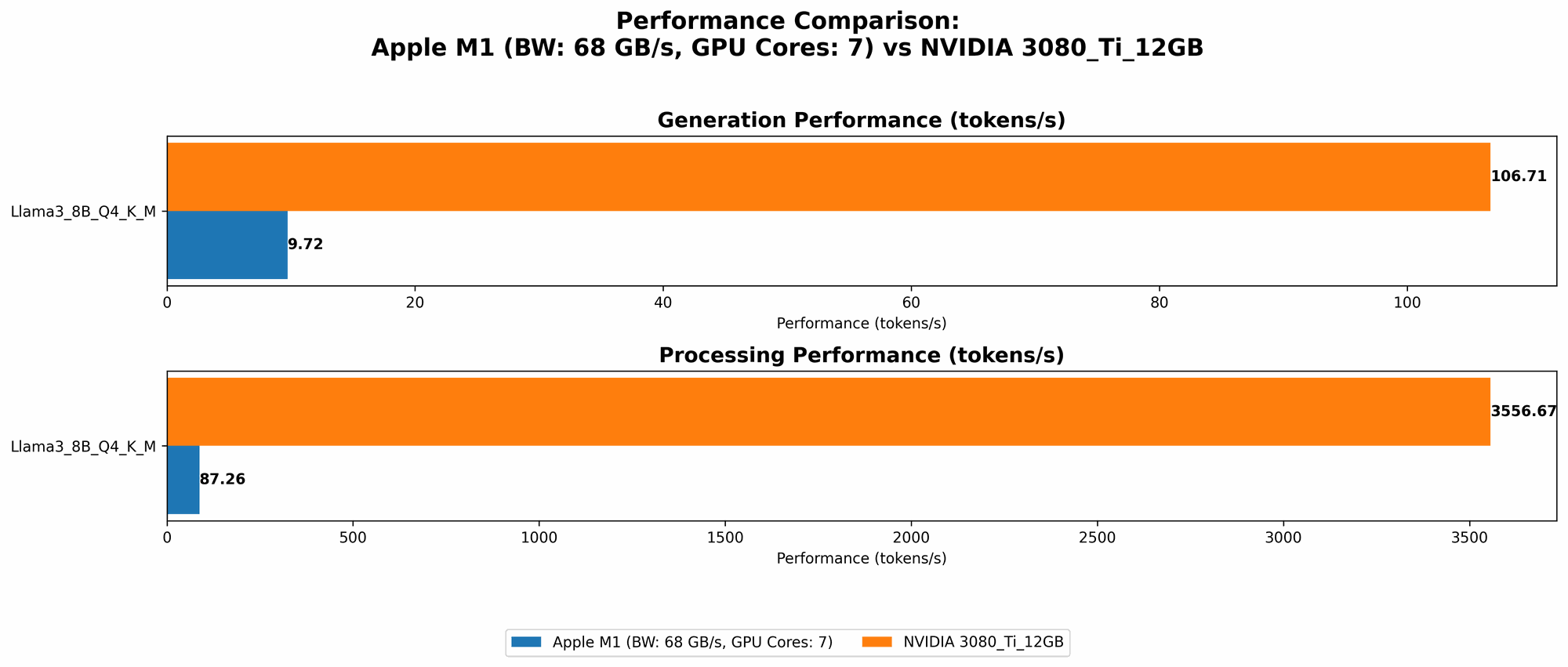

| Llama 3 8B | 8 billion | Q4KM | 87.26 | 9.72 |

Please note: There is currently no data available for the M1 chip running Llama 2 7B in F16 (unquantized 16-bit floating point) format, or for any Llama 3 model in F16.
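The quantization formats in these tables differ mainly in how many bits each weight occupies. The rough sketch below estimates weight memory for a nominal 7-billion-parameter model. It assumes the llama.cpp block layouts: Q8_0 and Q4_0 store 32 weights per block plus one 16-bit scale, which works out to 8.5 and 4.5 bits per weight. It ignores the KV cache and runtime buffers. Llama 2 7B's real parameter count is also slightly below 7 billion.

```python
# Estimate weight memory for a model stored in different formats.
# Counts weights only; the KV cache and runtime buffers add more on top.

BITS_PER_WEIGHT = {
    "F16": 16.0,   # one 16-bit float per weight (unquantized)
    "Q8_0": 8.5,   # 32 x 8-bit ints + one 16-bit scale per block of 32
    "Q4_0": 4.5,   # 32 x 4-bit ints + one 16-bit scale per block of 32
}


def weight_gb(n_params: float, fmt: str) -> float:
    """Approximate weight size in gigabytes (10**9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


for fmt in BITS_PER_WEIGHT:
    print(f"7B in {fmt}: {weight_gb(7e9, fmt):.2f} GB")
```

This gives roughly 14 GB for F16, 7.4 GB for Q8_0, and 3.9 GB for Q4_0. The F16 weights alone would exceed the 3080 Ti's 12 GB of VRAM.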

Apple M1 for Smaller LLM Models

As the benchmark analysis shows, the M1 chip handles smaller models like Llama 2 7B well in quantized formats. It generates about 14 tokens/s with Q4_0 and about 8 tokens/s with Q8_0. That makes it suitable for tasks involving simple text generation, summarization, and translation.

Limitations of Apple M1 for Large Models

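However, the M1's throughput drops as models grow. Llama 3 8B (Q4_K_M) generates 9.72 tokens/s, compared with 14.19 tokens/s for Llama 2 7B (Q4_0). Generating each token requires reading essentially all of the model's weights from memory, so the M1's 68 GB/s of memory bandwidth and its 7 GPU cores become limiting factors. Llama 3 70B is out of reach entirely. Its weights need roughly 40 GB even at 4-bit quantization, which is more than the 16 GB maximum memory of any M1 configuration.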
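The bandwidth limit can be illustrated with a common rule of thumb. If generating one token means reading roughly every weight from memory once, then generation speed cannot exceed memory bandwidth divided by the size of the weights.

The sketch below applies that assumption to the M1's 68 GB/s. It uses approximate Llama 2 7B weight sizes based on 8.5 bits per weight for Q8_0, 4.5 bits for Q4_0, and a nominal 7 billion parameters. The result is an upper bound under these assumptions, not a performance model.

```python
# Rule-of-thumb ceiling for generation speed: producing one token requires
# reading (roughly) every weight from memory once, so
#   tokens/s <= memory bandwidth / size of the weights.
# Ignores the KV cache, compute limits, and caching effects.

M1_BANDWIDTH_GBPS = 68.0  # M1 unified memory bandwidth, GB/s


def max_tokens_per_second(bandwidth_gbps: float, weights_gb: float) -> float:
    """Upper bound on tokens/s when generation is memory-bandwidth bound."""
    return bandwidth_gbps / weights_gb


# Approximate Llama 2 7B weight sizes: nominal 7e9 params x bits/weight / 8.
sizes_gb = {"Q8_0": 7e9 * 8.5 / 8 / 1e9, "Q4_0": 7e9 * 4.5 / 8 / 1e9}
measured = {"Q8_0": 7.92, "Q4_0": 14.19}  # M1 generation results from the table

ceilings = {}
for fmt, size in sizes_gb.items():
    ceilings[fmt] = max_tokens_per_second(M1_BANDWIDTH_GBPS, size)
    print(f"{fmt}: ceiling {ceilings[fmt]:.1f} tok/s, measured {measured[fmt]} tok/s")
```

The ceilings come out near 9.1 and 17.3 tokens/s. The measured 7.92 and 14.19 tokens/s sit at roughly 80 to 90 percent of them.

This also fits the table. Halving the bits per weight (Q8_0 to Q4_0) nearly doubles generation speed, yet prompt processing stays around 108 tokens/s for both formats. That is consistent with processing reusing each weight across many prompt tokens, which makes it limited more by compute than by bandwidth.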

NVIDIA 3080 Ti Token Generation Speed

The NVIDIA 3080 Ti, a high-end graphics card known for its parallel processing prowess, is a strong contender for running LLMs. Let's see how it performs:

| Model | Model Size | Quantization | Prompt Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|---|
| Llama 3 8B | 8 billion | Q4_K_M | 3556.67 | 106.71 |

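Please note: There is currently no data available for the 3080 Ti running Llama 3 70B or any model in F16 format. Even at 4-bit quantization, Llama 3 70B's roughly 40 GB of weights would not fit in the card's 12 GB of memory.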

NVIDIA 3080 Ti for Large LLMs

As the numbers show, the 3080 Ti is far faster than the M1 on Llama 3 8B:

- About 41 times faster at prompt processing (3556.67 vs. 87.26 tokens/s).
- About 11 times faster at generation (106.71 vs. 9.72 tokens/s).

This makes it an excellent choice for applications requiring high-speed inference. The GPU's massively parallel compute and much higher memory bandwidth handle the heavy computational demands with ease.

Limitations of NVIDIA 3080 Ti for Smaller Models

The benchmark includes no Llama 2 7B results for the 3080 Ti, so the two devices can't be compared directly on smaller models. A smaller model won't run slower in absolute terms, since there is less data to move and compute per token. However, it is less likely to keep all of the card's 10,240 CUDA cores busy. Running small models on a high-end GPU like the 3080 Ti can therefore be inefficient in terms of power consumption and cost, because much of the hardware sits idle.

Performance Analysis: Comparing Apple M1 and NVIDIA 3080 Ti

Processing Speed: A Tale of Two Champions

When it comes to processing speed, the NVIDIA 3080 Ti reigns supreme for larger models. Its massively parallel processing capabilities let it process Llama 3 8B prompts roughly 40 times faster than the M1. Think of it like a Formula 1 car on the track, leaving the competition in the dust.

The Apple M1 chip, while impressive for smaller models, falls behind the 3080 Ti in processing speed for larger models. It's like comparing a nimble sports car to a heavy-duty truck; both have their strengths, but the truck excels in moving heavy loads.

Generation Speed: A Narrower but Still Large Gap

In terms of generation speed, the gap narrows but remains substantial. On Llama 3 8B, the 3080 Ti generates 106.71 tokens/s versus 9.72 on the M1, roughly 11 times faster. That is less dramatic than the roughly 40x gap in prompt processing. The difference is consistent with generation being limited mostly by memory bandwidth, whereas prompt processing leans more on raw compute.
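Both tables share one configuration, Llama 3 8B with Q4_K_M quantization, so the relative speed can be computed directly. This short Python snippet uses only the figures from the tables:

```python
# Compare the two devices on the one configuration both tables share:
# Llama 3 8B with Q4_K_M quantization (numbers copied from the tables).
m1 = {"processing": 87.26, "generation": 9.72}
rtx_3080_ti = {"processing": 3556.67, "generation": 106.71}

speedups = {}
for phase in ("processing", "generation"):
    speedups[phase] = rtx_3080_ti[phase] / m1[phase]
    print(f"{phase}: 3080 Ti is {speedups[phase]:.1f}x faster")
```

It reports about 40.8x for prompt processing and 11.0x for generation.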

Efficiency and Cost: A Trade-off

Here's where things get interesting. The 3080 Ti delivers far higher performance, but it costs more in both purchase price and power consumption. The card alone is rated at 350 W, and it also needs a host PC. The M1 chip, on the other hand, offers a more affordable and energy-efficient option, especially for running smaller models.
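The benchmark doesn't report power draw, so the energy claim can't be checked directly. A break-even calculation shows what it would require. Energy per generated token is power (joules per second) divided by throughput (tokens per second).

The sketch assumes the 3080 Ti draws its full 350 W rated board power during generation. That is only an assumption: real draw varies, and the figure excludes the host PC the card needs.

```python
# Energy per generated token = power draw (W = J/s) / throughput (tokens/s).


def joules_per_token(watts: float, tokens_per_second: float) -> float:
    """Energy spent per generated token, in joules."""
    return watts / tokens_per_second


GPU_WATTS = 350.0  # ASSUMPTION: 3080 Ti at full rated board power, no host PC
GPU_TPS = 106.71   # Llama 3 8B Q4_K_M generation on the 3080 Ti (table)
M1_TPS = 9.72      # same model on the M1 (table)

gpu_j = joules_per_token(GPU_WATTS, GPU_TPS)
# The M1 uses less energy per token only if it draws less than this power:
break_even_watts = gpu_j * M1_TPS
print(f"3080 Ti: {gpu_j:.2f} J/token at full board power")
print(f"M1 break-even power draw: {break_even_watts:.1f} W")
```

Under that assumption, the 3080 Ti spends about 3.28 J per token on Llama 3 8B. The M1 is therefore more energy-efficient per token only if it draws less than about 31.9 W while generating. Adding the host PC's power to the GPU side would raise that threshold.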

Think of it like this: if you need to move a large truckload of goods, a powerful truck is the best choice, even if it's more expensive. But if you're just hauling groceries, a compact car will do the job just fine and cost less.

Practical Recommendations for Use Cases

So, when should developers choose the M1 chip, and when is the 3080 Ti the better option for running LLMs locally?

Apple M1: The Budget-Friendly Choice for Smaller LLMs

The Apple M1 chip is a great choice if you are:

- Working with smaller models like Llama 2 7B: Its efficiency and affordability are ideal for tasks involving simple text generation, translation, and summarization.

- Prioritizing power efficiency and budget: If you're tight on budget and looking to minimize energy consumption, the M1 chip is a solid choice.

NVIDIA 3080 Ti: Powerhouse for Large Models and High-Performance Tasks

The NVIDIA 3080 Ti is the recommended choice if you need:

- High-speed inference for larger LLMs like Llama 3 8B, or bigger models that still fit in its 12GB of VRAM: Its processing power can handle the heavy lifting required for computationally demanding tasks.

- Real-time applications with low latency requirements: If you need fast response times for applications like interactive chatbots or AI-powered tools, the 3080 Ti's speed is advantageous.

Frequently Asked Questions

How do I choose the right device for running LLMs locally?

The best device depends on the specific LLM model you're using and your performance requirements.

- For smaller models: The M1 chip offers a good balance of performance and efficiency.

- For larger models and high-performance applications: The 3080 Ti is the way to go.

What is quantization, and why is it important for running LLMs?

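Quantization is a technique that reduces the size of LLMs by storing weights as lower-precision numbers. For example, weights can be stored as 8-bit (Q8_0) or 4-bit (Q4_0, Q4_K_M) integers instead of 16-bit floats (F16). This lowers memory usage and speeds up inference, usually at the cost of a small loss in accuracy. As a result, it becomes easier to run LLMs on devices with limited resources.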
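To make "lower-precision numbers" concrete, here is a pure-Python sketch of 8-bit block quantization in the spirit of llama.cpp's Q8_0 format. Each block of 32 weights shares one scale factor, and every weight is stored as a signed integer in [-127, 127].

This is an illustration, not the actual format. The real Q8_0 stores the scale as a 16-bit float in a packed binary layout, while this sketch keeps ordinary Python floats and lists. The sine-wave weights are stand-in data.

```python
import math

BLOCK_SIZE = 32  # weights per block, as in llama.cpp's Q8_0


def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    """Scale a block so its largest magnitude maps to 127, then round to ints."""
    amax = max(abs(w) for w in block)
    scale = amax / 127 if amax > 0 else 1.0
    return scale, [round(w / scale) for w in block]


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate float weights from the ints and their scale."""
    return [scale * v for v in q]


def quantize(weights: list[float]) -> list[tuple[float, list[int]]]:
    """Split weights into blocks of BLOCK_SIZE and quantize each block."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


weights = [math.sin(i) / 4 for i in range(64)]  # stand-in for real weights
restored = []
for scale, q in quantize(weights):
    restored.extend(dequantize_block(scale, q))
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"max reconstruction error: {max_err:.5f}")
```

Rounding keeps each weight within half a scale step of its original value. Storing one integer per weight plus a single shared scale per block is what shrinks the model compared with F16.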

What are the advantages and disadvantages of using a GPU for LLM inference?

Advantages:

- High processing speed: GPUs excel at parallel processing, making them ideal for handling the complex computations involved in LLM inference.

- Better scaling to larger models: High memory bandwidth keeps generation fast as models grow, as long as the model fits in the GPU's memory.

Disadvantages:

- Higher cost: GPUs can be expensive to purchase.

- Higher power consumption: A high-end discrete GPU such as the 3080 Ti (rated at 350 W) draws far more power than an integrated chip like the M1.

- Less efficient for smaller models: Using a GPU for smaller models can be wasteful, as its full potential is not utilized.

Keywords

LLM, large language model, Apple M1, NVIDIA 3080 Ti, token speed, generation speed, processing speed, Llama 2, Llama 3, quantization, Q8_0, Q4_0, Q4_K_M, F16, GPU, CPU, inference, benchmark analysis, local, hardware, performance comparison, use cases, efficiency, cost, advantages, disadvantages.