Which is Better for AI Development: NVIDIA RTX 5000 Ada 32GB or NVIDIA A100 PCIe 80GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of AI is buzzing with excitement about Large Language Models (LLMs) like Llama 3. These powerful models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. However, running these models locally can be a challenge due to their massive size and computational demands. This is where your hardware comes in. Choosing the right GPU is crucial for optimizing performance and getting the most out of your LLM development journey.

In this article, we'll be diving into the performance of two popular GPUs: the NVIDIA RTX 5000 Ada 32GB and the NVIDIA A100 PCIe 80GB. We'll compare their token generation speed, a crucial metric for the efficiency and responsiveness of your LLM, and explore the differences in their performance across various LLM models and configurations. Buckle up, it's going to be a wild ride through the fascinating world of AI hardware!

Comparison of NVIDIA RTX 5000 Ada 32GB and NVIDIA A100 PCIe 80GB

Performance Analysis

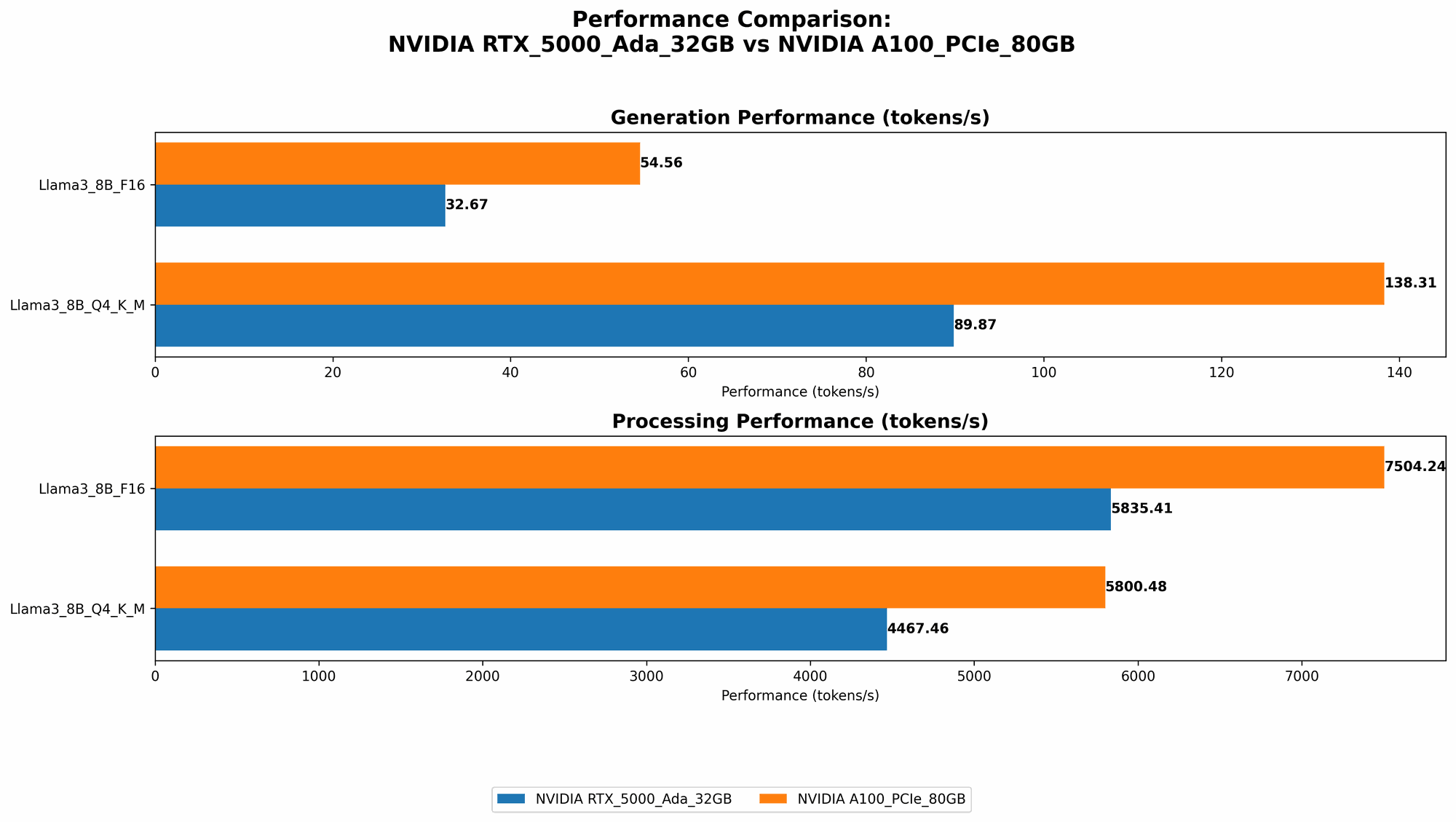

For our comparison, we'll be focusing on token generation speed for two sizes of Llama 3: 8B and 70B parameters. We'll consider two weight formats: Q4KM (written Q4_K_M in llama.cpp), a quantized format that stores weights at roughly 4 bits each, and F16, unquantized 16-bit floating point. The format determines the model's size in memory and, as a result, how fast it runs.

| Device | LLM Model | Quantization | Tokens/Second |
|---|---|---|---|
| NVIDIA RTX 5000 Ada 32GB | Llama 3 8B | Q4KM | 89.87 |
| NVIDIA RTX 5000 Ada 32GB | Llama 3 8B | F16 | 32.67 |
| NVIDIA RTX 5000 Ada 32GB | Llama 3 70B | Q4KM | - |
| NVIDIA RTX 5000 Ada 32GB | Llama 3 70B | F16 | - |
| NVIDIA A100 PCIe 80GB | Llama 3 8B | Q4KM | 138.31 |
| NVIDIA A100 PCIe 80GB | Llama 3 8B | F16 | 54.56 |
| NVIDIA A100 PCIe 80GB | Llama 3 70B | Q4KM | 22.11 |
| NVIDIA A100 PCIe 80GB | Llama 3 70B | F16 | - |

Note: A dash means we don't have data for that configuration. That covers the RTX 5000 Ada 32GB running Llama 3 70B in both Q4KM and F16, and the A100 PCIe 80GB running Llama 3 70B in F16.
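To make the gap between the two cards easier to read, here is a small sketch that computes the A100's speed relative to the RTX 5000 Ada for each configuration where both cards have a result. The numbers are copied from the table; the `speedup` helper is just an illustrative name, not part of any benchmark tool.

```python
# Tokens/second figures copied from the table above (missing rows omitted).
results = {
    ("RTX 5000 Ada 32GB", "Llama 3 8B", "Q4KM"): 89.87,
    ("RTX 5000 Ada 32GB", "Llama 3 8B", "F16"): 32.67,
    ("A100 PCIe 80GB", "Llama 3 8B", "Q4KM"): 138.31,
    ("A100 PCIe 80GB", "Llama 3 8B", "F16"): 54.56,
    ("A100 PCIe 80GB", "Llama 3 70B", "Q4KM"): 22.11,
}


def speedup(model, quant, fast="A100 PCIe 80GB", slow="RTX 5000 Ada 32GB"):
    """Return the fast/slow throughput ratio, or None if either result is missing."""
    a = results.get((fast, model, quant))
    b = results.get((slow, model, quant))
    if a is None or b is None:
        return None
    return a / b


for quant in ("Q4KM", "F16"):
    ratio = speedup("Llama 3 8B", quant)
    print(f"Llama 3 8B {quant}: A100 is {ratio:.2f}x the RTX 5000 Ada")
```

For Llama 3 70B the helper returns `None`, because only one of the two cards has a measurement.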

NVIDIA RTX 5000 Ada 32GB - A Solid Performer for Smaller LLMs

The RTX 5000 Ada 32GB is a solid performer, especially for smaller LLMs like Llama 3 8B. Under the Q4KM quantization, which offers a balance between speed and accuracy, the RTX 5000 Ada 32GB generates approximately 90 tokens per second. While not as impressive as the A100, this speed is still quite respectable and allows for a smooth user experience.

However, the RTX 5000 Ada 32GB is a poor fit for larger LLMs. We don't have data for Llama 3 70B on this card. Missing data alone doesn't prove the model can't run, but memory is the likely reason: at roughly 4 bits per weight, the 70B model's weights come to at least 35 GB, more than the card's 32GB of GDDR6 memory. At F16 they would need about 140 GB.
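A back-of-the-envelope estimate makes the memory constraint concrete. The sketch below assumes nominal parameter counts (8 billion and 70 billion) and counts the weights only; real Q4KM files are somewhat larger than a flat 4 bits per weight, and the KV cache and activations need memory too, so treat these figures as lower bounds.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params, bits_per_weight, vram_gb):
    """True if the weights alone fit in the given amount of GPU memory."""
    return weight_memory_gb(n_params, bits_per_weight) <= vram_gb


# Nominal parameter counts (assumption) and the two cards' memory sizes.
models = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
gpus = {"RTX 5000 Ada 32GB": 32, "A100 PCIe 80GB": 80}

for model_name, n_params in models.items():
    for label, bits in (("~4-bit", 4), ("F16", 16)):
        size = weight_memory_gb(n_params, bits)
        verdict = ", ".join(
            f"{gpu}: {'fits' if fits(n_params, bits, vram) else 'too big'}"
            for gpu, vram in gpus.items()
        )
        print(f"{model_name} {label}: >= {size:.0f} GB ({verdict})")
```

Under these assumptions, the only 70B configuration that fits on either card is the 4-bit one on the A100, which matches the pattern of dashes in the table.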

NVIDIA A100 PCIe 80GB - The King of LLM Processing

The NVIDIA A100 PCIe 80GB is a powerhouse when it comes to running LLMs. With its 80GB of HBM2e memory and Tensor Core architecture, the A100 PCIe 80GB can hold models that are out of reach for a 32GB card, including a 4-bit Llama 3 70B.

As the table shows, the A100 outperforms the RTX 5000 Ada 32GB in both Llama 3 8B configurations, the only ones for which we have results on both cards. With Q4KM it reached 138.31 tokens/second against 89.87 (about 1.5x), and with F16 it reached 54.56 against 32.67 (about 1.7x), so the relative gap is slightly wider at F16. This translates to noticeably faster responses and a smoother user experience.

Even for the hefty Llama 3 70B, the A100 still managed a respectable 22.11 tokens/second in the Q4KM setting, which is fast enough for interactive use. We have no F16 result for the 70B model: at 16 bits per weight its weights alone would need roughly 140 GB, well beyond the card's 80GB.

Quantization: Trading Accuracy for Speed

Quantization is a technique used to reduce the size of LLM models and make them more memory-efficient. This often comes at the cost of accuracy, though. Q4KM quantization uses a reduced number of bits (4-bit) to represent each number in the model, leading to a smaller size.
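To show the basic idea, here is a toy sketch of 4-bit quantization on a small block of weights. It is a deliberately simplified scheme (one shared scale per block, signed integers from -7 to 7) and is not the actual Q4KM format, which is more elaborate. The function names and the sample weights are made up for illustration.

```python
def quantize_block(values):
    """Map floats to signed 4-bit integers in [-7, 7] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round to the nearest step and clamp to the 4-bit range.
    q = [max(-7, min(7, round(v / scale))) for v in values]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate floats from the 4-bit integers and the scale."""
    return [x * scale for x in q]


# Hypothetical weights, chosen only to demonstrate the round trip.
weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("4-bit codes:", q)
print("restored:   ", [round(x, 3) for x in restored])
print(f"max absolute error: {max_error:.4f}")
```

Each weight now needs 4 bits plus a share of the block's scale, instead of 16 or 32 bits. The price is the rounding error printed at the end, which in this scheme is at most half a step (`scale / 2`) per weight.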

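As we see in the table, both GPUs achieve higher token generation speeds with Q4KM compared to the F16 configuration. The likely reason is memory traffic rather than arithmetic: producing each token requires reading the model's weights from GPU memory, and a 4-bit model has far fewer bytes to read than a 16-bit one. The quantized weights still have to be unpacked before use, so the gain is smaller than the reduction in size alone would suggest.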

For example, with the A100 running Llama 3 8B, we see a significant increase in token generation speed from 54.56 tokens/second with F16 to 138.31 tokens/second with Q4KM. This difference highlights the tradeoff between speed and accuracy: you can get faster responses with Q4KM quantization, but at the risk of losing some accuracy in the model's predictions.
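One way to put the gain in perspective is to compare the measured speedup with the reduction in bits per weight. The sketch below uses the Llama 3 8B figures from the table and assumes a flat 4 bits per weight for Q4KM, which slightly overstates the size reduction, so the "ideal" ratio here is an upper bound rather than a prediction.

```python
# Llama 3 8B tokens/second from the table: (F16, Q4KM) per GPU.
llama3_8b = {
    "RTX 5000 Ada 32GB": (32.67, 89.87),
    "A100 PCIe 80GB": (54.56, 138.31),
}

# Assumption: Q4KM treated as a flat 4 bits per weight versus 16 for F16.
IDEAL_RATIO = 16 / 4


def quantization_speedup(f16_tps, q4_tps):
    """How many times faster Q4KM generation is than F16 on the same GPU."""
    return q4_tps / f16_tps


for gpu, (f16_tps, q4_tps) in llama3_8b.items():
    gain = quantization_speedup(f16_tps, q4_tps)
    print(f"{gpu}: Q4KM is {gain:.2f}x F16 (size ratio is at most {IDEAL_RATIO:.0f}x)")
```

On both cards the measured gain lands well below 4x, which suggests that weight size is a major factor in generation speed but not the only one.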

Understanding the Numbers: Think Tokens per Second

Think of tokens per second like words per minute for your LLM. Imagine your LLM is like a typewriter, and each token is a short chunk of text: sometimes a whole word, sometimes just a piece of one. The faster it can churn out tokens, the faster it can write complete sentences and paragraphs, generating engaging and informative responses.

Now, if your LLM is generating 100 tokens per second, that's 6,000 tokens per minute. Using the common rule of thumb of about 0.75 English words per token, that works out to roughly 4,500 words per minute. That's a super fast typer! On the other hand, if it only generates 10 tokens per second, it's like typing about 450 words per minute, which is still decent but not as impressive.
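Tokens are not quite words, so converting a token rate into something more familiar needs an assumption. The sketch below uses about 0.75 English words per token, a common rule of thumb that varies with the tokenizer and the text, and also estimates how long a reply of a given length takes to generate. Both helper names and the 500-token reply length are illustrative choices.

```python
WORDS_PER_TOKEN = 0.75  # assumed rule of thumb for English text


def words_per_minute(tokens_per_second, words_per_token=WORDS_PER_TOKEN):
    """Convert a token generation rate into approximate words per minute."""
    return tokens_per_second * 60 * words_per_token


def seconds_for_reply(n_tokens, tokens_per_second):
    """Time to generate a reply of n_tokens, ignoring prompt processing."""
    return n_tokens / tokens_per_second


# Rates from the benchmark table, plus the two round numbers used in the text.
for label, tps in [
    ("100 tokens/s", 100.0),
    ("10 tokens/s", 10.0),
    ("A100, Llama 3 8B Q4KM", 138.31),
    ("A100, Llama 3 70B Q4KM", 22.11),
]:
    wpm = words_per_minute(tps)
    wait = seconds_for_reply(500, tps)
    print(f"{label}: ~{wpm:,.0f} words/min, 500-token reply in ~{wait:.1f} s")
```

Seen this way, the difference between the 8B and 70B results on the A100 is the difference between waiting a few seconds and waiting over twenty for the same length of answer.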

The "speed" of your LLM determines how fast it can process information and generate responses. A higher token generation speed means a more responsive and engaging user experience.

Practical Recommendations for Use Cases

Now that we've analyzed the performance of these two GPUs, let's break down which one is best suited for different scenarios:

For smaller LLMs like Llama 3 8B: If you're primarily working with smaller LLMs like Llama 3 8B, the RTX 5000 Ada 32GB offers decent performance at a lower price point. At roughly 90 tokens/second with Q4KM, it runs these models smoothly for less investment.

For larger LLMs like Llama 3 70B: If you're venturing into the realm of larger LLMs, the NVIDIA A100 PCIe 80GB is the clear winner. It is the only one of the two cards with a Llama 3 70B result in our data, at 22.11 tokens/second with Q4KM. Its 80GB of memory leaves room for a 4-bit 70B model, though not for the same model at F16.

For budget-conscious developers: If you're on a tight budget and don't need to run the largest models, the RTX 5000 Ada 32GB can be a viable option, especially if you're willing to experiment with different quantization levels to achieve the balance you need between speed and accuracy.

For professional AI developers and researchers: If you're a professional AI developer or researcher, the NVIDIA A100 PCIe 80GB is the ideal choice. It delivers the performance and stability you need to push the boundaries of LLM development.

Conclusion

Choosing the right GPU for your AI development needs is crucial for maximizing performance and efficiency. The NVIDIA RTX 5000 Ada 32GB is a solid option for smaller LLMs, offering a good balance of performance and cost. However, for larger and more demanding LLMs, the NVIDIA A100 PCIe 80GB is the clear winner. Its impressive performance, massive memory capacity, and advanced architecture make it the ideal choice for those seeking the most efficient and seamless LLM development experience.

As AI technology continues to evolve, we can expect even more powerful GPUs to emerge, further pushing the boundaries of LLM performance. But for now, with its exceptional performance, the NVIDIA A100 PCIe 80GB reigns supreme as the king of LLM processing!

FAQ

What is the "GPU" and how does it affect AI development?

GPU stands for "Graphics Processing Unit". It's a specialized electronic circuit designed to rapidly manipulate and alter memory to accelerate the creation of images, videos, and other visual content. In the context of AI development, GPUs are used to accelerate the training and inference of LLMs, which involves performing complex mathematical operations on massive datasets.

What is "Quantization" in LLM development?

Quantization is a technique used to reduce the size of LLM models while maintaining a reasonable level of accuracy. It involves representing the numbers in the model using fewer bits. Imagine you have a real number that is stored in 32 bits. Quantization might reduce that to 4 bits, shrinking the model and making it more memory-efficient.

What are the differences between Q4KM and F16?

Q4KM and F16 are two common formats for storing model weights. Strictly speaking, only Q4KM is a quantization method: it stores weights at roughly 4 bits each, while F16 is 16-bit floating point, the unquantized half-precision baseline. Q4KM results in smaller model sizes, but it can lose some accuracy. F16 preserves more accuracy but leads to larger model sizes. The choice between them often depends on the specific needs of your project, with Q4KM prioritizing speed and memory efficiency, while F16 prioritizes accuracy.

What other factors should I consider when choosing a GPU for LLM development?

Besides token generation speed, consider these factors:

- Memory capacity: Large LLMs require a lot of memory, so a GPU with ample memory capacity is crucial to avoid memory bottlenecks.

- Power consumption: Consider the power consumption of the GPU, especially if you're working with a limited power budget.

- Cooling: Make sure the GPU has a good cooling system to prevent overheating, which can lead to reduced performance and potential damage.

Keywords

NVIDIA RTX 5000 Ada 32GB, NVIDIA A100 PCIe 80GB, LLM, Large Language Model, Llama 3, Token Generation Speed, Quantization, Q4KM, F16, AI Development, GPU, Graphics Processing Unit, Memory Capacity, Power Consumption, Cooling, AI Hardware, Local LLM, Performance Benchmark