Which is Better for AI Development: NVIDIA RTX 5000 Ada 32GB or NVIDIA 3090 24GB x2? Local LLM Token Speed Generation Benchmark

Introduction

The world of large language models (LLMs) is buzzing with excitement. These AI-powered marvels can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But with great power comes the need for powerful hardware.

This article will dive into the performance of two GPU setups, a single NVIDIA RTX 5000 Ada 32GB and a pair of NVIDIA 3090 24GB cards, when tasked with running Llama 3 LLMs. We'll compare their token generation speed, dissect their strengths and weaknesses, and guide you towards the best GPU for your AI development needs.

Think of it like this: Imagine you're hosting a massive party, but only have a tiny microwave to heat up all the food. It's going to take forever, right? LLMs are the party, and GPUs are our microwaves – the more powerful the GPU, the faster the party can get going!

Overview of the Benchmark: Comparing LLM Performance

We evaluated the performance of the RTX 5000 Ada and dual 3090 GPUs using llama.cpp on different Llama 3 models. This benchmark measured token generation speed (tokens per second), a crucial metric for efficient LLM inference. We tested each model in a 4-bit quantized format (Q4KM) and in 16-bit floating point (F16) to see how precision affects memory usage and performance.
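Token generation speed is simply the number of tokens produced divided by the wall-clock time it took to produce them. The sketch below shows that calculation in isolation. It is not how llama.cpp measures its own timings; `fake_generate` is a hypothetical stand-in for a model, and the injectable `clock` parameter is an assumption made only so the function is easy to test.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]],
                      clock: Callable[[], float] = time.perf_counter) -> float:
    """Run one generation and return tokens emitted per second of wall time."""
    start = clock()
    n_tokens = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = clock() - start
    return n_tokens / elapsed


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a model: yields 50 tokens, about 1 ms apart."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate):.1f} tokens/s")
```

In a real benchmark you would average several runs and measure prompt processing separately from generation, since the two stress the GPU differently.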

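Quantization is like squeezing down the size of your data: the model's weights are stored with fewer bits, so the model takes less memory and there is less data to move for every generated token. The more aggressive the quantization (the fewer bits per weight), the smaller the model, but accuracy may suffer. Q4KM (written Q4_K_M in llama.cpp) is one of llama.cpp's "k-quant" formats, and the name breaks down as follows:

- Q4 means the weights are stored at roughly 4 bits each (slightly more once scale factors are included). Compared with 32-bit floating point, that is about an 8x reduction in memory footprint; compared with F16, about 4x.

- K marks the format as a k-quant, in which weights are quantized in small blocks that each carry their own scale factors.

- M stands for "medium" in the small/medium/large naming used for k-quant variants. It keeps some of the more sensitive tensors at higher precision than the small variant does.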

F16 stores each weight as a 16-bit floating point number. That is half the size of 32-bit floating point, and it is the highest-precision format in this benchmark.
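To make the size difference between these formats concrete, here is a minimal sketch that estimates the memory needed for the weights alone. It assumes a nominal 8 billion parameters and exactly 32, 16, or 4 bits per weight; real Q4KM files are somewhat larger than the 4-bit figure, and the function name is ours, not part of llama.cpp.

```python
def weight_size_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 10**9 bytes)."""
    # billions of parameters * bits per parameter / 8 bits per byte
    return n_params_billion * bits_per_weight / 8


if __name__ == "__main__":
    for bits in (32, 16, 4):
        size = weight_size_gb(8, bits)
        print(f"8B model at {bits:>2} bits/weight: ~{size:.0f} GB")
```

The estimate ignores the KV cache and other runtime buffers, so treat it as a lower bound on what a model needs in practice.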

Performance Analysis: Token Speed Showdown

The following table summarizes the key metrics we collected. Note that some data points are missing, as the benchmarks haven't been run for every combination of GPU, LLM, and precision level.

| GPU | LLM | Quantization | Tokens/Second (generation) |
|---|---|---|---|
| RTX 5000 Ada 32GB | Llama 3 8B | Q4KM | 89.87 |
| RTX 5000 Ada 32GB | Llama 3 8B | F16 | 32.67 |
| RTX 5000 Ada 32GB | Llama 3 70B | Q4KM | N/A |
| RTX 5000 Ada 32GB | Llama 3 70B | F16 | N/A |
| 3090 24GB x2 | Llama 3 8B | Q4KM | 108.07 |
| 3090 24GB x2 | Llama 3 8B | F16 | 47.15 |
| 3090 24GB x2 | Llama 3 70B | Q4KM | 16.29 |
| 3090 24GB x2 | Llama 3 70B | F16 | N/A |

Comparison of RTX 5000 Ada 32GB and 3090 24GB x2 for Llama 3 8B

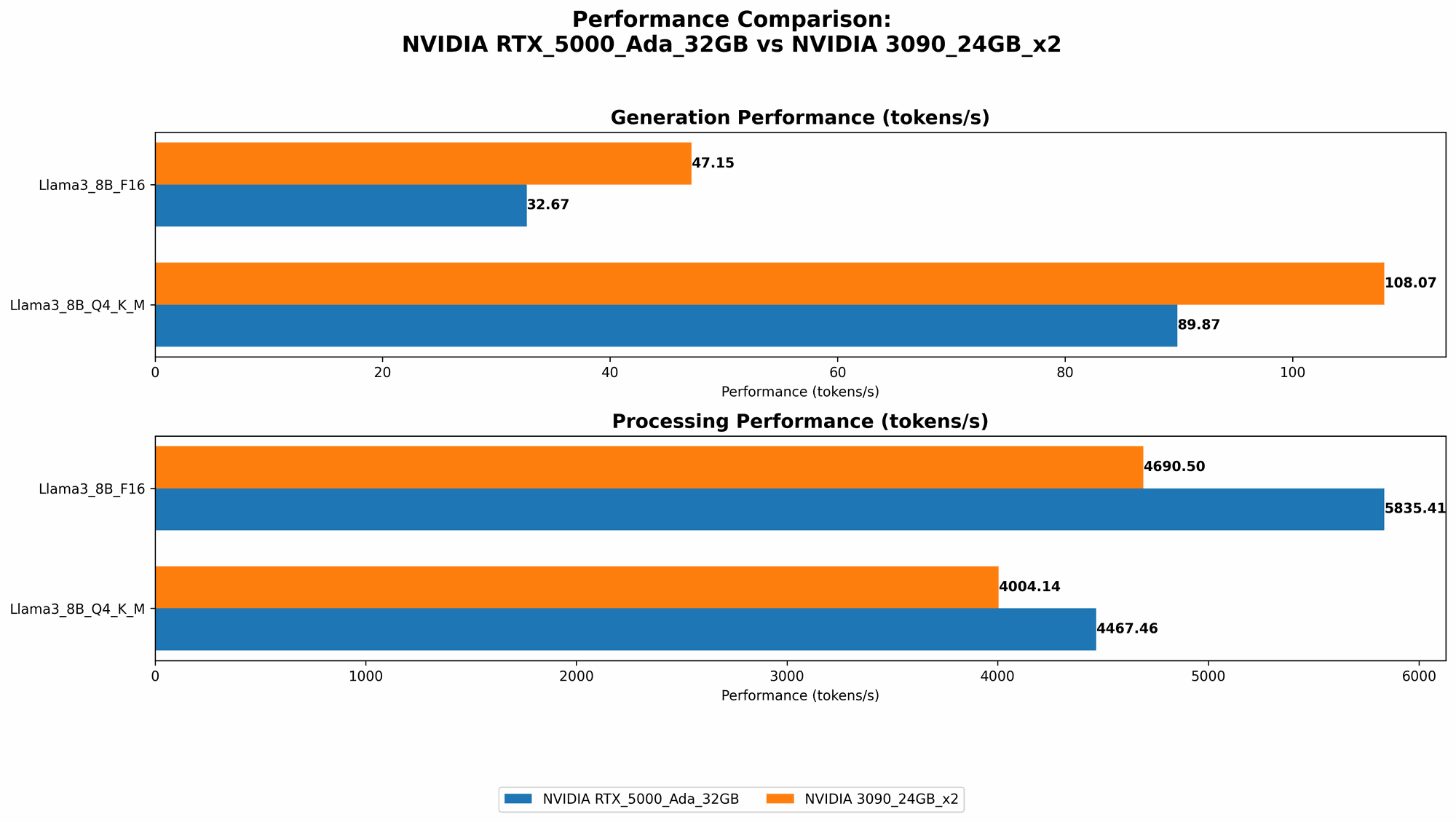

Let's first analyze the performance of these GPUs on the smaller 8B Llama 3 model. Looking at the table, the 3090 24GB x2 configuration achieves a noticeable performance advantage over the RTX 5000 Ada 32GB with a 20% increase in tokens/second when using Q4KM quantization. This difference is even more pronounced in the F16 precision mode, where the 3090 x2 configuration shows a 44% advantage over the RTX 5000 Ada.
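The percentages quoted above follow directly from the table. A short sketch of the arithmetic, using only the four 8B results (the dictionary layout and function name are illustrative choices):

```python
# Tokens/second for Llama 3 8B, copied from the benchmark table.
TOKENS_PER_SECOND = {
    ("RTX 5000 Ada 32GB", "Q4KM"): 89.87,
    ("RTX 5000 Ada 32GB", "F16"): 32.67,
    ("3090 24GB x2", "Q4KM"): 108.07,
    ("3090 24GB x2", "F16"): 47.15,
}


def percent_faster(fast: float, slow: float) -> float:
    """How much faster `fast` is than `slow`, as a percentage."""
    return (fast / slow - 1) * 100


if __name__ == "__main__":
    for quant in ("Q4KM", "F16"):
        dual_3090 = TOKENS_PER_SECOND[("3090 24GB x2", quant)]
        ada = TOKENS_PER_SECOND[("RTX 5000 Ada 32GB", quant)]
        print(f"{quant}: 3090 x2 is {percent_faster(dual_3090, ada):.1f}% faster")
```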

Why is this happening?

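The most likely explanation is memory bandwidth rather than raw compute. Generating a token requires reading essentially all of the model's weights from VRAM, so generation speed tends to track how fast the GPU can move data. Each 3090 has considerably higher memory bandwidth (about 936 GB/s) than the RTX 5000 Ada (about 576 GB/s). Note that the 8B model fits comfortably on a single 3090, so the second card probably contributes little here; its main benefit is the extra memory for larger models. This performance difference also comes at a cost: the 3090 x2 setup requires more power and possibly a more complex cooling solution.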

Comparison of RTX 5000 Ada 32GB and 3090 24GB x2 for Llama 3 70B

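Moving on to the larger 70B Llama 3 model, the story shifts a bit. The RTX 5000 Ada has no data points for this model, most likely because the model does not fit in its 32GB of memory: at roughly 4 bits per weight, 70 billion parameters need about 35GB for the weights alone. That leaves the 3090 24GB x2, with 48GB combined, as the only contender, and only with Q4KM quantization. At F16 the weights come to roughly 140GB, too large for either setup.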
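The memory explanation can be checked with a rough fit test. This is a sketch under stated assumptions: nominal parameter counts of 8 and 70 billion, exactly 4 or 16 bits per weight, and the two 3090s treated as one 48GB pool. It counts only the weights, so a configuration that "fits" here may still fail once the KV cache and runtime buffers are added.

```python
# Total VRAM per setup in GB; the dual-3090 figure is 2 x 24 GB combined.
VRAM_GB = {"RTX 5000 Ada 32GB": 32, "3090 24GB x2": 48}

# (label, nominal parameters in billions, nominal bits per weight)
CONFIGS = [
    ("Llama 3 8B Q4KM", 8, 4),
    ("Llama 3 8B F16", 8, 16),
    ("Llama 3 70B Q4KM", 70, 4),
    ("Llama 3 70B F16", 70, 16),
]


def fits(n_params_billion: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone fit in the given amount of VRAM."""
    weights_gb = n_params_billion * bits_per_weight / 8
    return weights_gb <= vram_gb


if __name__ == "__main__":
    for label, params, bits in CONFIGS:
        for gpu, vram in VRAM_GB.items():
            verdict = "fits" if fits(params, bits, vram) else "does not fit"
            print(f"{label:<18} on {gpu:<18}: {verdict}")
```

Under these assumptions the pattern of results is consistent with the N/A entries in the table: the 70B model fits on neither setup at F16, and only on the dual 3090s at Q4KM.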

The 3090 24GB x2 configuration still holds its ground. However, we see a significant drop in token generation speed when going from the 8B to the 70B model, with the Q4KM quantization showing a reduction of 85% in token speed. This is likely due to the larger model size, which puts greater strain on the GPU's memory and processing capabilities.
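The size of that drop can be put next to the growth in model size. The sketch below uses the two Q4KM results for the dual 3090s from the table and nominal parameter counts of 8 and 70 billion (an assumption; the exact counts differ slightly).

```python
def percent_drop(before: float, after: float) -> float:
    """Reduction from `before` to `after`, as a percentage of `before`."""
    return (1 - after / before) * 100


# Q4KM tokens/second on the 3090 24GB x2, from the benchmark table.
SPEED_8B = 108.07
SPEED_70B = 16.29

slowdown = SPEED_8B / SPEED_70B  # how many times slower the 70B model is
param_growth = 70 / 8            # nominal growth in parameter count

if __name__ == "__main__":
    print(f"Token speed drop: {percent_drop(SPEED_8B, SPEED_70B):.1f}%")
    print(f"Slowdown factor:  {slowdown:.2f}x")
    print(f"Parameter growth: {param_growth:.2f}x")
```

The slowdown (about 6.6x) is somewhat smaller than the nominal growth in parameter count (8.75x), so generation speed does not fall quite in proportion to model size in this benchmark.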

Understanding the Impact of Quantization

Q4KM quantization clearly boosts performance on both setups. On the 8B model, the only one with results at both precisions, Q4KM generates tokens about 2.75 times as fast as F16 on the RTX 5000 Ada (89.87 vs. 32.67 tokens/second) and about 2.3 times as fast on the dual 3090s (108.07 vs. 47.15). With fewer bits per weight, the GPU has less data to read for each token and needs less memory to hold the model.

However, there's a trade-off. Quantization sometimes leads to a slight decrease in the accuracy of the LLM. If precision is critical for your application, then using F16 or even full 32-bit precision might be preferable, even if it comes at a cost to performance.

Practical Recommendations for Choosing the Right GPU

So, which GPU wins the crown? The answer, as with many technical comparisons, is "it depends."

For smaller LLMs (like Llama 3 8B) where speed is paramount:

- The 3090 24GB x2 configuration is the clear champion. It delivers a significant boost in token speed thanks to its additional processing power.

- The RTX 5000 Ada is still a viable option if you need a single-card, more power-efficient solution.

For larger LLMs (like Llama 3 70B) or when precision is critical:

- The 3090 24GB x2 configuration is still the best choice, but be aware of the potential trade-offs for memory usage and power consumption.

- The RTX 5000 Ada might not be ideal for these scenarios. If the model you want to run does not fit in its 32GB of memory, you will need to consider other options.

It's also worth mentioning that the performance of these GPUs may vary depending on your specific use case. For example, if you're working on tasks like text summarization, where high accuracy is not essential, you might find that the Q4KM quantization provides a good balance between speed and precision.

Conclusion

Choosing the right GPU for running LLMs is crucial for maximizing performance and efficiency. Both the RTX 5000 Ada and 3090 24GB x2 configurations offer distinct strengths and weaknesses. The 3090 24GB x2 configuration shines with its speed and memory capacity, while the RTX 5000 Ada excels in power efficiency.

Ultimately, the best GPU for your AI development needs will depend on the specific LLMs you want to use and your priorities, such as speed, memory, and cost. By carefully considering your requirements and the trade-offs involved, you can select the most appropriate GPU for a seamless and productive LLM development experience.

FAQ

What are the limitations of using a single RTX 5000 Ada for running larger LLMs?

The RTX 5000 Ada has 32GB of memory, compared with 48GB combined for the 3090 24GB x2 configuration. That limits its ability to run larger LLMs like Llama 3 70B, because the whole model has to be loaded into GPU memory to run at full speed.

How does the power consumption of these two GPUs compare?

The 3090 24GB x2 configuration consumes significantly more power than the RTX 5000 Ada. This is due to the nature of having two high-performance GPUs running simultaneously. If you're concerned about energy costs, the RTX 5000 Ada might be a more energy-efficient option.
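For a rough sense of efficiency, you can divide token speed by power. The sketch below is an estimate under loud assumptions: it uses rated board power (250 W for the RTX 5000 Ada and 350 W per 3090) rather than measured draw, which this benchmark did not record, and it uses the Llama 3 8B Q4KM results from the table. Actual power during inference is often below the rated figure.

```python
# ASSUMPTION: rated board power in watts, not power measured during the benchmark.
BOARD_POWER_W = {
    "RTX 5000 Ada 32GB": 250,
    "3090 24GB x2": 2 * 350,
}

# Llama 3 8B Q4KM tokens/second, from the benchmark table.
TOKENS_PER_SECOND = {
    "RTX 5000 Ada 32GB": 89.87,
    "3090 24GB x2": 108.07,
}


def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    """Tokens generated per joule of energy (tokens/s divided by J/s)."""
    return tokens_per_second / watts


if __name__ == "__main__":
    for gpu, tps in TOKENS_PER_SECOND.items():
        tpj = tokens_per_joule(tps, BOARD_POWER_W[gpu])
        print(f"{gpu:<18}: {tpj:.3f} tokens per joule")
```

On these assumed figures the single RTX 5000 Ada produces a bit more than twice as many tokens per joule on the 8B model, even though it generates fewer tokens per second.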

What are some other GPU options for running LLMs?

Besides the RTX 5000 Ada and 3090 24GB x2, there are other options, including NVIDIA's data center GPUs such as the A100 and H100. These are designed for high-performance computing and AI workloads and may offer even better performance for LLMs, but they also come with much higher price tags.

Keywords

AI, LLM, Large Language Model, GPT, Llama, NVIDIA, RTX 5000 Ada, 3090, GPU, Token Generation, Speed, Benchmark, Quantization, Q4KM, F16, Memory, Power Consumption, AI Development, Performance, Efficiency, Inference.