Which is Better for AI Development: NVIDIA RTX 4000 Ada 20GB or NVIDIA A40 48GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, and with it, the need for powerful hardware to run these models locally. Whether you are a developer building AI-powered applications or a researcher experimenting with cutting-edge language technologies, choosing the right GPU can significantly impact your workflow.

In this article, we'll compare two NVIDIA professional GPUs, the RTX 4000 Ada 20GB and the A40 48GB, for running Llama 3 models locally. The benchmark focuses on token generation speed for two model sizes (8B and 70B) at two precisions (Q4_K_M and F16). We'll break down the performance characteristics of each card, highlight their strengths and weaknesses, and give practical recommendations based on your specific needs.

Think of this article as your personal guide to the exciting but complex world of local LLM inference! Let's dive into the details and see which GPU reigns supreme!

Comparison of NVIDIA RTX 4000 Ada 20GB and NVIDIA A40 48GB

Hardware Specifications

Let's start with a quick overview of the hardware contenders in our ring.

NVIDIA RTX 4000 Ada 20GB

- Architecture: Ada Lovelace

- Memory: 20GB GDDR6

- Memory bandwidth: 360 GB/s

- Cores: 6,144 CUDA cores

- TDP: 130W

NVIDIA A40 48GB

- Architecture: Ampere

- Memory: 48GB GDDR6

- Memory bandwidth: 696 GB/s

- Cores: 10,752 CUDA cores

- TDP: 300W

Both cards use GDDR6 memory, but the key differences lie in memory capacity and bandwidth. The RTX 4000 Ada 20GB has 20GB at 360 GB/s, while the A40 48GB has 48GB at 696 GB/s, roughly 1.9 times the bandwidth, along with 75% more CUDA cores. Capacity decides which models fit on the card at all, and bandwidth largely sets how fast tokens are generated, because producing each token requires reading essentially all of the model's weights from memory.
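As a rough sanity check on why bandwidth matters, the sketch below divides each card's memory bandwidth by the size of the model's weights to get a ceiling on single-stream generation speed. It is a back-of-the-envelope model, assuming NVIDIA's published bandwidth figures and about 16.06 GB of weights for Llama 3 8B at 16-bit precision (8.03 billion parameters at 2 bytes each); it ignores KV-cache traffic and compute overhead, so real results should land below the ceiling.

```python
# Rough ceiling on single-stream decode speed: every generated token must
# stream (approximately) all model weights from GPU memory once.
# Assumed figures: published memory bandwidth per card, and a 16-bit
# Llama 3 8B footprint of ~8.03e9 params x 2 bytes.

BANDWIDTH_GB_S = {
    "RTX 4000 Ada 20GB": 360.0,
    "A40 48GB": 696.0,
}

LLAMA3_8B_F16_GB = 8.03e9 * 2 / 1e9  # ~16.06 GB of weights


def decode_ceiling_tokens_per_s(bandwidth_gb_s: float, model_gb: float) -> float:
    """Upper bound on tokens/s if decoding were purely memory-bandwidth bound."""
    return bandwidth_gb_s / model_gb


if __name__ == "__main__":
    for card, bw in BANDWIDTH_GB_S.items():
        ceiling = decode_ceiling_tokens_per_s(bw, LLAMA3_8B_F16_GB)
        print(f"{card}: <= {ceiling:.1f} tokens/s for Llama 3 8B F16")
```

Both measured F16 results in the benchmark table (20.85 and 33.95 tokens/s) sit below these ceilings of roughly 22.4 and 43.3 tokens/s.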

Understanding Quantization and its Impact on Performance

Before we jump into the benchmark results, let's quickly understand the concept of quantization. Imagine trying to store detailed information about a beautiful sunset, but you only have a limited number of colors available in your paintbox. You'd need to simplify the colors and details to capture the essence of the sunset. Quantization works similarly in LLMs: it stores the model's weights (think of them as the paintbrush strokes in our sunset analogy) at lower numeric precision, so the model needs less memory and less memory bandwidth for every token it generates.
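To make the analogy concrete, here is a toy quantizer that stores a block of weights as 4-bit integers plus one shared scale factor. It is a simplified symmetric scheme chosen for illustration, not llama.cpp's actual Q4_K_M format, and the function names and sample weights are hypothetical.

```python
# Toy illustration of quantization: store a block of weights as small
# integers plus one shared scale factor. Simplified symmetric 4-bit scheme
# for illustration only, not llama.cpp's actual Q4_K_M format.

def quantize_block(values: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Map floats to signed integers in [-(2**(bits-1)), 2**(bits-1) - 1]."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    # Largest magnitude maps to qmax; fall back to 1.0 for an all-zero block.
    scale = max(abs(v) for v in values) / qmax or 1.0
    q = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * x for x in q]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.9, -0.07, 0.0, 0.4, -0.88]
    scale, q = quantize_block(weights)
    restored = dequantize_block(scale, q)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("integers:", q)
    print(f"scale: {scale:.4f}, worst rounding error: {worst:.4f}")
```

Each value comes back within half a scale step of the original, while storage drops from 16 bits per weight to 4 bits plus the shared scale.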

In our benchmarks, we'll explore two weight formats:

- Q4_K_M: A 4-bit "k-quant" format from llama.cpp that stores roughly 4 to 5 bits per weight on average. It shrinks the memory footprint to under a third of F16 at a small cost in accuracy. It's like using a limited palette of colors to capture the sunset.

- F16: 16-bit floating point (half precision), which is essentially the model's unquantized weights. It needs far more memory but preserves full detail. It's like having a much larger palette of colors.
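To see how these formats translate into memory requirements, the sketch below estimates each model's weight footprint as parameters × bits per weight ÷ 8 and compares it with each card's memory. It assumes about 8.03 and 70.6 billion parameters for Llama 3 8B and 70B, about 4.85 bits per weight as an approximate Q4_K_M average (the exact figure varies by model), and a hypothetical 2 GB allowance for the KV cache and buffers.

```python
# Estimate the weight footprint of a model from its parameter count and the
# average bits stored per weight, then check it against each card's VRAM.
# Assumptions: ~8.03e9 / ~70.6e9 parameters for Llama 3 8B / 70B, ~4.85
# bits per weight as an approximate Q4_K_M average, and a 2 GB allowance
# for KV cache and buffers.

PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}
VRAM_GB = {"RTX 4000 Ada 20GB": 20.0, "A40 48GB": 48.0}


def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Size of the weights alone in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(model_gb: float, vram_gb: float, overhead_gb: float = 2.0) -> bool:
    """True if weights plus a rough allowance for KV cache/buffers fit in VRAM."""
    return model_gb + overhead_gb <= vram_gb


if __name__ == "__main__":
    for model, n in PARAMS.items():
        for fmt, bpw in BITS_PER_WEIGHT.items():
            size = weights_gb(n, bpw)
            verdict = ", ".join(
                f"{card}: {'fits' if fits(size, vram) else 'too big'}"
                for card, vram in VRAM_GB.items()
            )
            print(f"{model} {fmt}: ~{size:.1f} GB -> {verdict}")
```

Under these assumptions, 70B at Q4_K_M (about 42.8 GB) only fits on the A40, and 70B at F16 (about 141 GB) fits on neither card, which is consistent with the N/A entries in the benchmark results.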

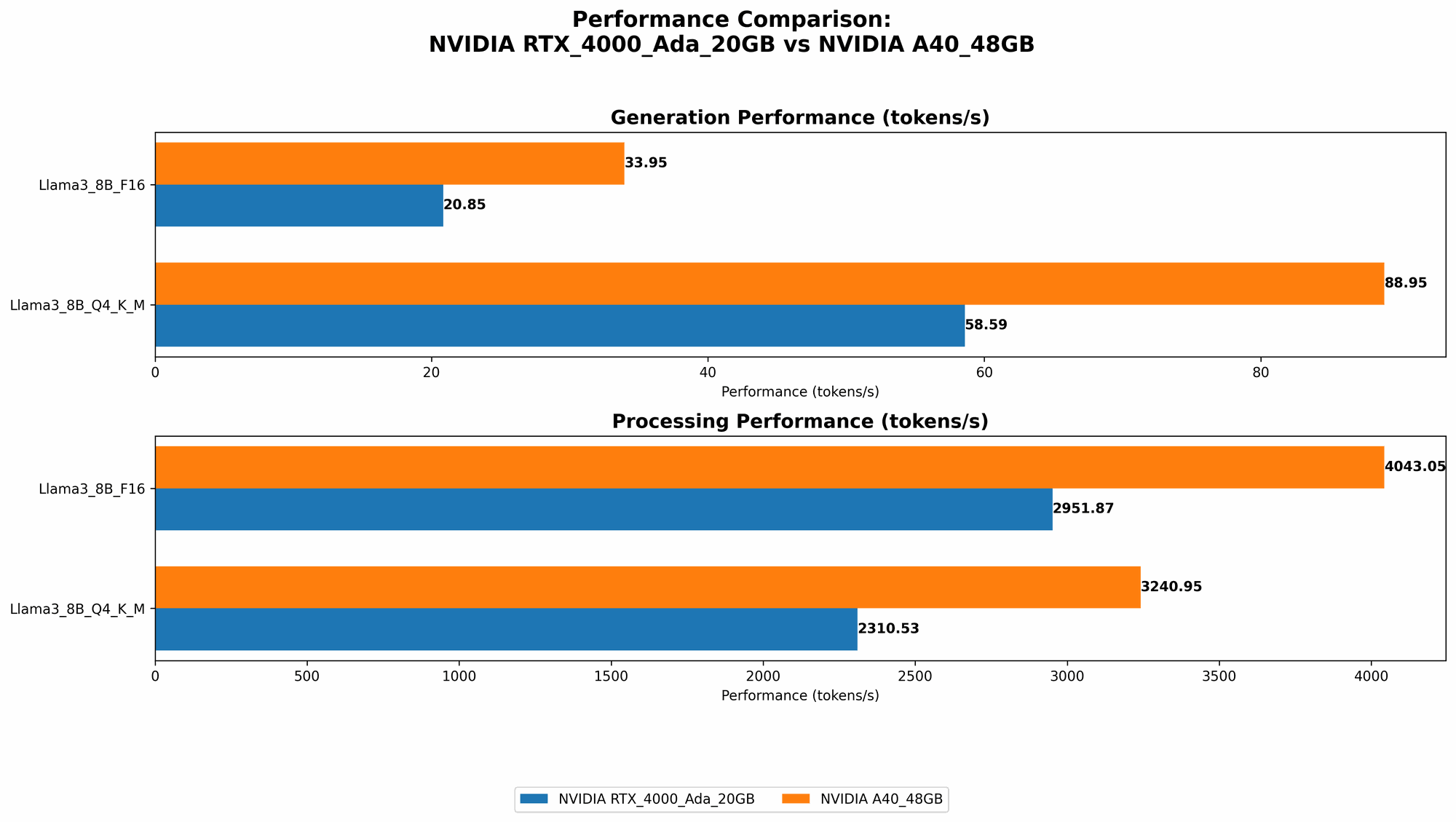

Local LLM Token Generation Speed Benchmark Results

Now, let's get to the heart of our comparison: the local LLM token generation speed benchmarks. The numbers in the table are tokens per second (tokens/s) during text generation, so higher is better.

| Model (generation test) | NVIDIA RTX 4000 Ada 20GB (tokens/s) | NVIDIA A40 48GB (tokens/s) |
|---|---|---|
| Llama 3 8B Q4_K_M | 58.59 | 88.95 |
| Llama 3 8B F16 | 20.85 | 33.95 |
| Llama 3 70B Q4_K_M | N/A | 12.08 |
| Llama 3 70B F16 | N/A | N/A |

N/A: no result; the model's weights exceed the card's memory (Llama 3 70B needs roughly 40 GB at Q4_K_M and roughly 140 GB at F16).
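To put the two rows that both cards could run side by side, the sketch below computes the A40's speedup from the table's tokens/s figures and compares it with the ratio of the cards' published memory bandwidths (696 GB/s vs 360 GB/s). The names in the code are illustrative.

```python
# Relative speed of the A40 48GB over the RTX 4000 Ada 20GB, using the
# tokens/s figures from the benchmark table, compared against the ratio of
# the two cards' published memory bandwidths (696 GB/s vs 360 GB/s).

RESULTS = {
    # test: (RTX 4000 Ada 20GB, A40 48GB) in tokens/s
    "Llama 3 8B Q4_K_M": (58.59, 88.95),
    "Llama 3 8B F16": (20.85, 33.95),
}

BANDWIDTH_RATIO = 696.0 / 360.0  # A40 / RTX 4000 Ada


def speedup(rtx: float, a40: float) -> float:
    """How many times faster the A40 generated tokens than the RTX 4000 Ada."""
    return a40 / rtx


if __name__ == "__main__":
    for test, (rtx, a40) in RESULTS.items():
        print(f"{test}: A40 is {speedup(rtx, a40):.2f}x faster")
    print(f"Memory bandwidth ratio: {BANDWIDTH_RATIO:.2f}x")
```

The A40's lead of roughly 1.5x to 1.6x is smaller than its roughly 1.9x bandwidth advantage, which suggests bandwidth explains much of the gap but not all of it; other overheads keep both cards short of their bandwidth limits.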

A40 48GB

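- Overall Performance: The A40 48GB outperforms the RTX 4000 Ada 20GB on every test both cards could run, by about 1.5x at Q4_K_M and 1.6x at F16 on Llama 3 8B. Since the 8B model fits comfortably on both cards, capacity is not the reason; the gap most likely comes from the A40's higher memory bandwidth (696 GB/s vs 360 GB/s) and larger CUDA core count.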

- Strengths: The fastest results in the test on Llama 3 8B, and the only one of the two cards that can run Llama 3 70B (12.08 tokens/s at Q4_K_M). The larger memory capacity makes it suitable for running larger LLMs, which need more memory to store their parameters.

- Weaknesses: The A40 48GB is a high-end card designed primarily for data centers and professional applications. Its price tag might be a barrier for individual developers.

RTX 4000 Ada 20GB

- Overall Performance: The RTX 4000 Ada 20GB performs well on the Llama 3 8B model, but it falls behind the A40 48GB and cannot run Llama 3 70B at all in this benchmark.

- Strengths: The RTX 4000 Ada 20GB offers a balance between performance and cost. It's a more affordable option compared to the A40 48GB, making it attractive for individual developers and smaller research projects.

- Weaknesses: Its 20GB of memory is too small for Llama 3 70B, even at Q4_K_M. It also trails the A40 48GB in token generation speed, and the gap is slightly wider at F16 (about 1.63x) than at Q4_K_M (about 1.52x).

Performance Analysis: Strengths and Weaknesses

To make a more informed decision, let's dive deeper into the performance characteristics of both cards.

NVIDIA A40 48GB: The King of Large Language Models

The A40 48GB is the clear choice for larger models in this comparison. Its 48GB of memory lets it run Llama 3 70B at Q4_K_M, which the RTX 4000 Ada cannot, though at a more modest 12.08 tokens/s, and 70B at F16 is still out of reach. Its higher memory bandwidth also gives it the fastest token generation speed on Llama 3 8B.

Think of it like this: The A40 48GB is like a high-performance sports car with a massive tank. It can easily handle long journeys with full power, while the RTX 4000 Ada 20GB is like a sporty hatchback; a great performer for shorter trips but might struggle with longer ones.

But here's the catch: the A40 48GB is designed specifically for data centers and comes with a hefty price tag. If you're a developer working on a personal project or a researcher with a limited budget, it might not be the most practical choice.

NVIDIA RTX 4000 Ada 20GB: The Balancing Act

The RTX 4000 Ada 20GB offers a more balanced approach. It provides solid performance, especially for smaller LLMs like Llama 3 8B, and is much more budget-friendly compared to powerful behemoths like the A40 48GB.

Here's the thing: while the RTX 4000 Ada 20GB is a commendable performer, it is not suited to the larger 70B models. Llama 3 70B needs roughly 40 GB even at Q4_K_M, about twice the card's memory, so it could only run with part of the model offloaded to system RAM, which typically slows generation sharply.
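To put a rough number on that, the sketch estimates how many of Llama 3 70B's 80 transformer layers would fit on each card at Q4_K_M. It assumes about 42.8 GB of weights split evenly across layers and about 2 GB held back for the KV cache and buffers; the helper name and these figures are illustrative assumptions, not measurements.

```python
import math

# Hypothetical sketch: how many Llama 3 70B (Q4_K_M) layers fit on a GPU
# before the remainder must be offloaded to system RAM.
# Assumptions (illustrative, not measured): ~42.8 GB of weights, 80 layers
# of equal size, and ~2 GB of VRAM reserved for KV cache and buffers.

MODEL_GB = 42.8
N_LAYERS = 80
RESERVED_GB = 2.0


def layers_on_gpu(vram_gb: float,
                  model_gb: float = MODEL_GB,
                  n_layers: int = N_LAYERS,
                  reserved_gb: float = RESERVED_GB) -> int:
    """Whole layers that fit in the VRAM left after the reserve, capped at n_layers."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserved_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


if __name__ == "__main__":
    for card, vram in {"RTX 4000 Ada 20GB": 20.0, "A40 48GB": 48.0}.items():
        n = layers_on_gpu(vram)
        print(f"{card}: {n}/{N_LAYERS} layers on GPU ({n / N_LAYERS:.0%})")
```

Under these assumptions only about 33 of 80 layers fit on the RTX 4000 Ada, so most of the model would run from system RAM, while the A40 holds all 80. In llama.cpp, this split is what the `--n-gpu-layers` (`-ngl`) option controls.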

Think of it as: The RTX 4000 Ada 20GB is like a well-equipped mountain bike: it can handle most terrains but might face challenges on steeper slopes. The A40 48GB is like a heavy-duty mountain bike, capable of conquering much steeper climbs.

Practical Recommendations for Use Cases

Now that we've analyzed their strengths and weaknesses, let's translate this knowledge into practical recommendations based on your specific use case.

NVIDIA RTX 4000 Ada 20GB: Ideal for Smaller Projects and Budget-Conscious Users

- Use Cases:

  - Individual developers working on personal AI projects or smaller research projects.

  - Applications that require fast inference on smaller LLMs like Llama 3 8B.

- Advantages: Affordable price point and solid performance for smaller models (58.59 tokens/s on Llama 3 8B at Q4_K_M).

- Disadvantages: Cannot fit Llama 3 70B, even at Q4_K_M, because of its 20GB of memory.

NVIDIA A40 48GB: For Data Centers and Large Language Models

- Use Cases:

  - Data centers and large enterprises running large-scale AI applications.

  - Research labs experimenting with cutting-edge LLMs.

- Advantages: The fastest results on smaller models and enough memory for Llama 3 70B at Q4_K_M (though not at F16).

- Disadvantages: Expensive and might be overkill for smaller projects.

Conclusion

So, which GPU is better? It all depends on your specific needs and budget. The NVIDIA A40 48GB is the clear winner if you need raw performance or want to run 70B-class models and can handle the high price tag. However, for individual developers or research projects with budget constraints, the NVIDIA RTX 4000 Ada 20GB offers a compelling alternative, especially for smaller LLMs like Llama 3 8B.

No matter your choice, remember to factor in your budget, the size of the LLM you're using, and your overall performance requirements. Choosing the right GPU can significantly impact your AI development journey, making it faster, smoother, and more rewarding!

FAQ

What are LLMs?

LLMs, or Large Language Models, are powerful AI systems that use deep learning to understand and generate human-like text. They are trained on massive datasets of text and code, enabling them to perform various tasks like translation, text summarization, and even creative writing.

What is token generation speed?

Token generation speed measures how quickly a GPU can generate text tokens, usually reported in tokens per second. A token is a basic unit of text, such as a word, part of a word, or a punctuation mark. A higher token generation speed means faster, more responsive AI applications.
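To translate tokens per second into something more tangible, the sketch converts a generation speed into the time needed to produce a response. The speeds come from the benchmark table; the 500-token response length is an arbitrary example, and the calculation ignores prompt processing time.

```python
# Convert a tokens/s figure into wall-clock time for a response of a given
# length. Rates are the generation speeds from the benchmark table; the
# 500-token response length is an arbitrary example.

RATES_TOKENS_PER_S = {
    "RTX 4000 Ada 20GB, Llama 3 8B Q4_K_M": 58.59,
    "A40 48GB, Llama 3 8B Q4_K_M": 88.95,
    "A40 48GB, Llama 3 70B Q4_K_M": 12.08,
}


def generation_seconds(n_tokens: int, tokens_per_s: float) -> float:
    """Time to generate n_tokens at a steady rate (ignores prompt processing)."""
    return n_tokens / tokens_per_s


if __name__ == "__main__":
    for setup, rate in RATES_TOKENS_PER_S.items():
        print(f"{setup}: {generation_seconds(500, rate):.1f} s for 500 tokens")
```

At these rates a 500-token answer takes roughly 8.5 s on the RTX 4000 Ada and 5.6 s on the A40 with Llama 3 8B, versus about 41 s for Llama 3 70B on the A40.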

What is the difference between Q4_K_M and F16?

Quantization is a technique used to reduce the memory footprint of LLMs without sacrificing too much accuracy. Q4_K_M is a 4-bit quantization format that offers excellent memory efficiency but may slightly degrade accuracy. F16 stores weights as 16-bit floating-point numbers; it is essentially unquantized, so it preserves accuracy but needs roughly three times as much memory as Q4_K_M.

What are CUDA cores?

CUDA cores are the parallel processing units on NVIDIA GPUs, used for tasks such as AI model training and inference. More CUDA cores allow more computations to run in parallel, but for token generation memory bandwidth is often the bigger limit, since each generated token requires reading the model's weights from memory.

Keywords

LLM, Large Language Models, AI Development, NVIDIA RTX 4000 Ada 20GB, NVIDIA A40 48GB, token generation speed, benchmark, quantization, Q4_K_M, F16, CUDA cores, memory, performance, GPU, llama.cpp, Llama 3, processing, inference, GPU Benchmarks, data center, research, developer, budget, model size