Which is Better for AI Development: NVIDIA RTX 4000 Ada 20GB or NVIDIA 3090 24GB? Local LLM Token Speed Generation Benchmark

Introduction

In the ever-evolving world of AI, Large Language Models (LLMs) are taking center stage. These models are becoming increasingly sophisticated: they generate human-like text, translate languages, draft creative content, and answer questions informatively. But training and running them demands significant computational resources.

Choosing the right hardware for your AI development journey can be a daunting task, especially when considering the vast array of GPUs available in the market. This article focuses on two popular contenders, the NVIDIA RTX 4000 Ada 20GB and the NVIDIA 3090 24GB, comparing their performance in generating tokens for various Llama3 models. We'll dive deep into the numbers, analyze their strengths and weaknesses, and help you decide which card is the perfect fit for your LLM needs.

The Battle of the Titans: NVIDIA RTX 4000 Ada 20GB vs. NVIDIA 3090 24GB

The NVIDIA RTX 4000 Ada 20GB and NVIDIA 3090 24GB are both capable GPUs, but they cater to different needs. The RTX 4000 Ada 20GB is the newer-generation workstation card, built around power efficiency and a compact form factor. The 3090 24GB, on the other hand, is a tried-and-true consumer powerhouse, known for its ample memory and raw throughput. Let's see how they stack up in the real world when it comes to running LLMs.

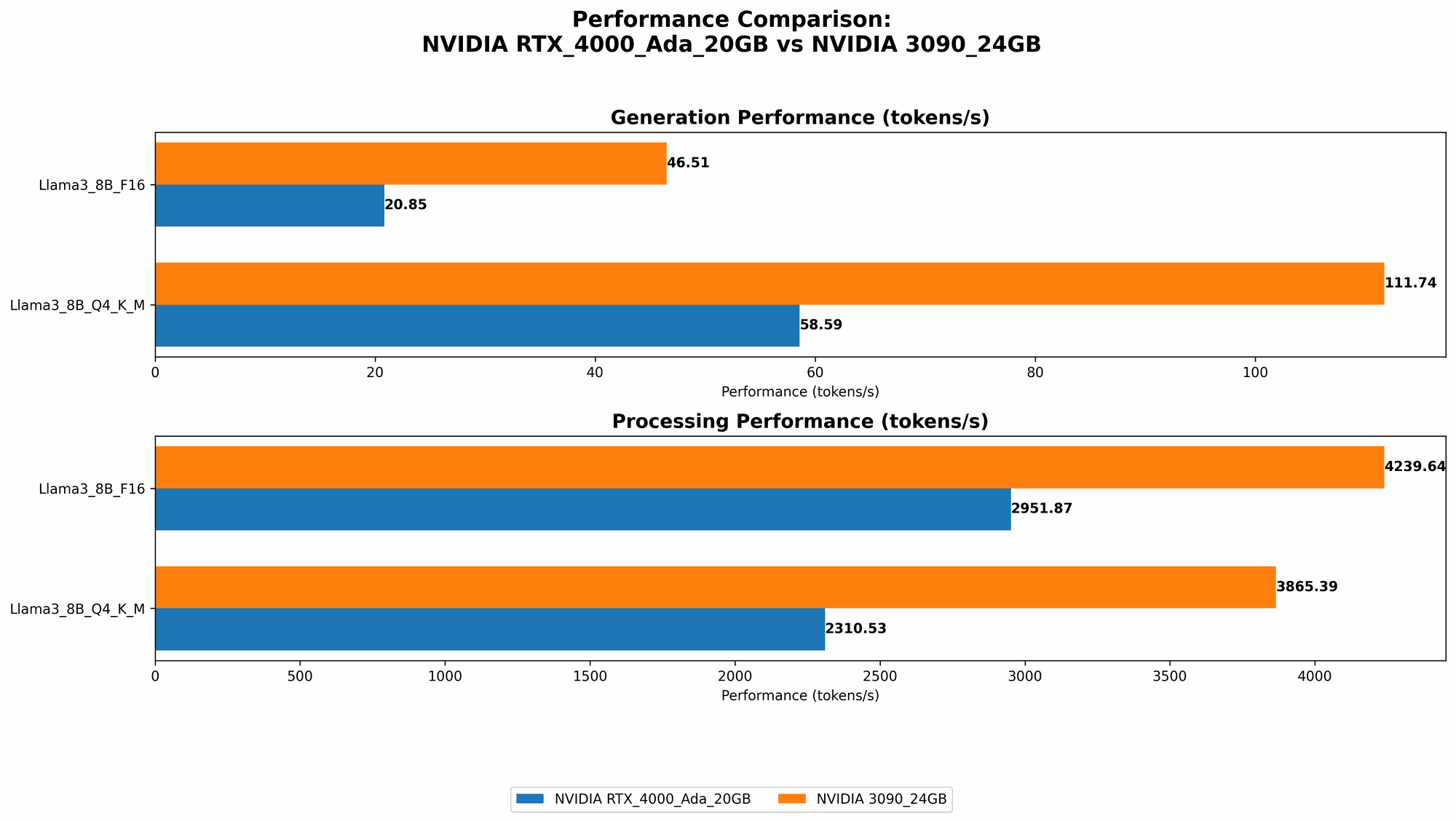

Comparison of NVIDIA RTX 4000 Ada 20GB and NVIDIA 3090 24GB for Llama3 Token Generation

We'll compare the two GPUs based on their token generation speed for different Llama3 models. The data in the table below is measured in tokens per second (tokens/second), the rate at which each GPU produces new text during generation. Higher is better: more tokens per second means responses arrive faster.

| Model | NVIDIA RTX 4000 Ada 20GB (tokens/second) | NVIDIA 3090 24GB (tokens/second) |
|---|---|---|
| Llama3 8B Q4KM Generation | 58.59 | 111.74 |
| Llama3 8B F16 Generation | 20.85 | 46.51 |
| Llama3 70B Q4KM Generation | No data | No data |
| Llama3 70B F16 Generation | No data | No data |

What the numbers tell us:

- The NVIDIA 3090 24GB outperforms the RTX 4000 Ada 20GB on both tested configurations: it is roughly 1.9x faster on 8B Q4KM and 2.2x faster on 8B F16.

- Data for the 70B models is unavailable. Most likely neither card can hold these models: at 16 bits per weight, a 70B model needs about 140GB for the weights alone, and even a 4-bit quantization needs roughly 40GB, well beyond 20GB or 24GB.
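The relative gap between the two cards can be worked out directly from the table. The short sketch below copies the four published figures and computes the speedup per configuration; the dictionary keys are illustrative labels, not names used by any benchmark tool.

```python
# Generation speeds (tokens/second) copied from the table above.
# None marks configurations with no published result.
results = {
    "Llama3 8B Q4KM": {"rtx_4000_ada": 58.59, "rtx_3090": 111.74},
    "Llama3 8B F16": {"rtx_4000_ada": 20.85, "rtx_3090": 46.51},
    "Llama3 70B Q4KM": {"rtx_4000_ada": None, "rtx_3090": None},
    "Llama3 70B F16": {"rtx_4000_ada": None, "rtx_3090": None},
}


def speedup(row):
    """Return how many times faster the 3090 is, or None if data is missing."""
    ada, ampere = row["rtx_4000_ada"], row["rtx_3090"]
    if ada is None or ampere is None:
        return None
    return ampere / ada


ratios = {model: speedup(row) for model, row in results.items()}

for model, ratio in ratios.items():
    label = "no data" if ratio is None else f"{ratio:.2f}x"
    print(f"{model:<16} 3090 vs RTX 4000 Ada: {label}")
```

The two 8B rows come out at about 1.91x and 2.23x in favor of the 3090.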

A Deeper Dive into the Token Generation Numbers

Let's break down the results into smaller chunks and analyze the performance of each GPU for specific model configurations:

NVIDIA RTX 4000 Ada 20GB Token Speed Analysis

- Llama3 8B Q4KM Generation: The RTX 4000 Ada 20GB generates tokens at a rate of 58.59 tokens/second. This is a respectable speed for interactive use, but it is only about half of what the 3090 achieves.

- Llama3 8B F16 Generation: The RTX 4000 Ada 20GB's performance drops to 20.85 tokens/second with the 8B F16 model. The drop is expected: F16 stores each weight in 16 bits, versus roughly 4 to 5 bits for Q4KM, so the GPU has to read about three times as much data from memory for every generated token. Note that F16 is the higher-precision format here; the slowdown is a memory bandwidth cost, not an accuracy problem.

NVIDIA 3090 24GB Token Speed Analysis

- Llama3 8B Q4KM Generation: The 3090 24GB reaches 111.74 tokens/second on the 8B Q4KM model, about 1.9 times the speed of the RTX 4000 Ada 20GB.

- Llama3 8B F16 Generation: The 3090 24GB also leads on the 8B F16 model at 46.51 tokens/second, about 2.2 times the RTX 4000 Ada's rate. The gap widens as the weights get larger, which is consistent with token generation being limited by memory bandwidth, an area where the 3090 has a considerable advantage.
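One way to sanity-check these figures is a back-of-the-envelope bandwidth ceiling: if every weight must be read once per generated token, tokens/second cannot exceed memory bandwidth divided by model size. The sketch below is only an estimate under stated assumptions. The bandwidth values (360 GB/s and 936 GB/s) are the commonly published peak specifications for the two cards, and the parameter count and bits-per-weight values are approximations; none of these come from the benchmark itself.

```python
# Measured generation speeds (tokens/second) from the benchmark table.
measured = {
    ("RTX 4000 Ada", "Q4KM"): 58.59,
    ("RTX 4000 Ada", "F16"): 20.85,
    ("RTX 3090", "Q4KM"): 111.74,
    ("RTX 3090", "F16"): 46.51,
}

# ASSUMPTION (not from the benchmark): published peak memory bandwidth, GB/s.
bandwidth_gb_s = {"RTX 4000 Ada": 360.0, "RTX 3090": 936.0}

# ASSUMPTION: approximate parameter count and average bits stored per weight.
PARAMS_BILLION = 8.0
bits_per_weight = {"Q4KM": 4.85, "F16": 16.0}


def bandwidth_ceiling_tps(params_billion, bits, gb_per_s):
    """Rough upper bound on tokens/second if all weights are read once per token."""
    model_gb = params_billion * bits / 8  # billions of params * bytes each = GB
    return gb_per_s / model_gb


ceilings = {}
for (gpu, fmt), tps in measured.items():
    ceiling = bandwidth_ceiling_tps(
        PARAMS_BILLION, bits_per_weight[fmt], bandwidth_gb_s[gpu]
    )
    ceilings[(gpu, fmt)] = ceiling
    print(f"{gpu:<13} {fmt:<5} measured {tps:7.2f}  ceiling {ceiling:7.2f}  "
          f"({tps / ceiling:.0%} of ceiling)")
```

Under these assumptions every measured value sits below its ceiling, which is what a bandwidth-limited workload would look like. The estimate ignores KV cache reads and kernel overhead, so it should be read as a plausibility check rather than a prediction.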

Performance Analysis: Strengths and Weaknesses

NVIDIA RTX 4000 Ada 20GB:

Strengths:

- Lower Power Consumption: The RTX 4000 Ada 20GB is considerably more energy efficient than older high-end cards, which reduces your electricity bill, especially if you run your LLMs for extended periods.

- Smaller Footprint: The RTX 4000 Ada 20GB is physically smaller than the 3090 24GB, making it easier to fit in smaller cases or workstations.

- New Features: The RTX 4000 Ada 20GB benefits from the newer Ada architecture, which brings advancements in ray tracing, DLSS, and tensor cores. Of these, the tensor cores are the part relevant to LLM workloads; ray tracing and DLSS are graphics features.

Weaknesses:

- Limited Memory: The 20GB memory capacity limits the size of LLMs you can run effectively.

- Slower Token Generation: Across the tested models, the RTX 4000 Ada 20GB falls behind the 3090 24GB in token generation speed.

NVIDIA 3090 24GB:

Strengths:

- Higher Memory: The 24GB of memory leaves an extra 4GB for larger quantized models or longer contexts before you run out of memory.

- Faster Token Generation: As evident from the benchmark data, the 3090 24GB consistently outperforms the RTX 4000 Ada 20GB in generating tokens for the tested Llama3 models.

Weaknesses:

- Higher Power Consumption: The 3090 24GB requires more power than the RTX 4000 Ada 20GB, translating to higher electricity costs, especially for long-term LLM training.

- Larger Footprint: The physical size of the 3090 24GB can be a constraint for smaller cases or setups.
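The power trade-off can be put into rough numbers by dividing power draw by generation speed, which gives energy per token. The sketch below assumes the commonly published maximum board power for each card (130 W and 350 W); these values are not part of the benchmark, and real draw during inference is usually lower, so treat the output as an order-of-magnitude comparison.

```python
# Measured 8B Q4KM generation speeds (tokens/second) from the benchmark table.
tokens_per_second = {"RTX 4000 Ada": 58.59, "RTX 3090": 111.74}

# ASSUMPTION (not from the benchmark): published maximum board power in watts.
# Actual power draw while generating tokens may differ substantially.
board_power_w = {"RTX 4000 Ada": 130.0, "RTX 3090": 350.0}


def joules_per_token(power_w, tps):
    """Energy per generated token: watts are joules/second, so W / (tokens/s) = J/token."""
    return power_w / tps


energy = {
    gpu: joules_per_token(board_power_w[gpu], tokens_per_second[gpu])
    for gpu in tokens_per_second
}

for gpu, joules in energy.items():
    # 1 kWh = 3.6e6 J, so energy for one million tokens in kWh is J/token * 1e6 / 3.6e6.
    kwh_per_million = joules * 1e6 / 3.6e6
    print(f"{gpu:<13} {joules:.2f} J/token  ~{kwh_per_million:.2f} kWh per million tokens")
```

With these assumed power figures, the RTX 4000 Ada spends less energy per token even though it is slower, which matches the strengths and weaknesses listed above.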

Practical Recommendations for Use Cases

The ideal GPU choice depends on your specific requirements:

NVIDIA RTX 4000 Ada 20GB:

- Budget-Conscious Users: The RTX 4000 Ada 20GB's lower power draw keeps running costs down. Purchase prices for both cards vary widely by market and condition, so compare current prices before assuming either one is the cheaper option.

- Smaller LLMs: If you plan to work primarily with smaller LLMs, such as the Llama3 8B, the RTX 4000 Ada 20GB can handle these models effectively.

- Focus on Energy Efficiency: If minimizing power consumption is a priority, the RTX 4000 Ada 20GB is likely the better option.

NVIDIA 3090 24GB:

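- Larger LLMs: If you intend to work with models larger than 8B, the 3090 24GB's extra 4GB gives more room for mid-sized quantized models and longer contexts. Note that Llama3 70B is out of reach for a single card of either type, as the missing benchmark results show.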

- Maximum Throughput: If speed is your primary concern, the 3090 24GB delivers a significantly faster token generation rate, making it ideal for performance-critical tasks.

- Research and Development: The 3090 24GB's superior memory and processing power are well-suited for research and development activities where you need to experiment with different models and configurations.

Quantization: A Key Optimization Technique

Quantization is a technique used to reduce the memory footprint and improve the computational efficiency of LLMs. It involves converting the model's parameters (weights) from higher-precision floating-point numbers (F32 or F16) to lower-precision formats such as 8-bit integers (INT8) or 4-bit schemes like the Q4KM format benchmarked above.

Imagine it like reducing the number of colors in an image from millions to a smaller number of colors. While the image might lose some detail, the overall picture remains recognizable, and the file size becomes significantly smaller. Similarly, quantizing an LLM can reduce its memory requirements without compromising accuracy too much.

How Quantization Affects Performance:

- Reduced Memory Footprint: Quantization significantly reduces the memory needed to store the model's weights, allowing you to run larger LLMs.

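- Improved Performance: Token generation is largely limited by how fast the weights can be read from GPU memory, so smaller weights mean faster inference. In the benchmark above, the Q4KM model is roughly 2.4x to 2.8x faster than the F16 model on the same card.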

The Trade-Off:

- Potential Accuracy Loss: While quantization can drastically improve performance, some accuracy might be lost due to the reduced precision of the model parameters.
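To make the trade-off concrete, here is a minimal sketch of symmetric 8-bit quantization on a handful of made-up weights. It is a simplified illustration only: it is not the Q4KM scheme used in the benchmark, which works on blocks of weights with 4-bit values and per-block scales. The function names and sample values are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [v * scale for v in quantized]


# Made-up example weights; a real model has billions of these.
weights = [0.12, -0.5, 0.33, 0.9, -0.07]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print("restored: ", [round(v, 4) for v in restored])
print(f"largest rounding error: {max_error:.5f} (bounded by scale/2 = {scale / 2:.5f})")
```

Each weight now needs one byte instead of two (F16) or four (F32), and the rounding error per weight is at most half the scale factor. That bounded error is the source of the accuracy loss described above.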

The Future of LLM Hardware

The race for LLM hardware is just getting started. We can expect to see even more powerful GPUs with higher memory capacities and better energy efficiency emerge in the coming years. New architectures and techniques are constantly being developed to improve performance further.

FAQ

1. What is the best GPU for running Llama3?

The best GPU for running Llama3 depends on the specific model and your needs. For smaller models like Llama3 8B, the NVIDIA 3090 24GB offers superior performance of the two cards tested here. For larger models like Llama3 70B, you will need more memory than either card offers, for example multiple GPUs or a card with a much larger memory capacity.

2. What is the advantage of using a GPU for LLMs?

GPUs are designed for parallel processing, making them ideal for handling the massive number of computations required for LLM training and inference. They offer significant speedups compared to CPUs, accelerating the model development cycle.

3. How much memory is needed for Llama3 70B models?

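The Llama3 70B model's weights alone take about 140GB at F16 and roughly 40GB with a 4-bit quantization such as Q4KM, plus additional memory for the context. A single 20GB or 24GB card is therefore not enough, which matches the missing 70B results in the benchmark. You would need multiple GPUs, a card with far more memory, or partial offloading to system RAM at a significant cost in speed.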
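A quick way to estimate whether a model fits is to multiply the parameter count by the bytes stored per weight. The sketch below does this for round parameter counts and idealized bit widths; the 2GB overhead allowance for context and runtime buffers is a hypothetical placeholder, and real 4-bit formats store extra scaling data, so actual files are somewhat larger than the 4-bit figure shown.

```python
def weights_gb(params_billion, bits_per_weight):
    """Approximate size of the weights in GB (billions of params * bytes per weight)."""
    return params_billion * bits_per_weight / 8


def fits(vram_gb, params_billion, bits_per_weight, overhead_gb=2.0):
    """True if weights plus an assumed overhead allowance fit in GPU memory.

    overhead_gb is a hypothetical allowance for the KV cache and runtime buffers.
    """
    return weights_gb(params_billion, bits_per_weight) + overhead_gb <= vram_gb


# Round parameter counts; the real models are slightly larger.
for name, params in [("Llama3 8B", 8), ("Llama3 70B", 70)]:
    for fmt, bits in [("F16", 16), ("4-bit", 4)]:
        need = weights_gb(params, bits)
        print(f"{name:<10} {fmt:<5} ~{need:5.0f} GB weights | "
              f"fits 20GB: {fits(20, params, bits)} | fits 24GB: {fits(24, params, bits)}")
```

Under these assumptions both 8B configurations fit on either card, while neither 70B configuration fits on 20GB or 24GB.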

4. What impact does memory have on LLM performance?

Memory plays a crucial role in LLM performance. If your GPU does not have enough memory to store the model's weights, it will need to keep swapping data between the GPU and RAM, leading to significant slowdowns.

5. How can I improve the token generation speed of my LLM?

There are several ways to enhance token generation speed:

- Quantization: Reduce the memory footprint of the model by quantizing its weights.

- CPU Optimization: If part of the model has to run on the CPU, use an inference library optimized for CPU execution, and keep as much of the model as possible on the GPU.

- Hardware Upgrades: Consider upgrading to a more powerful GPU with higher memory capacity and better computational capabilities.
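Whichever of these options you try, it helps to measure tokens per second before and after the change. The sketch below is a generic timing harness: `generate` stands for whatever function your inference library exposes, and `fake_generate` is a hypothetical placeholder that only sleeps, so the script runs without a model or GPU.

```python
import time


def measure_tokens_per_second(generate, prompt, max_new_tokens):
    """Time one generation call and return generated tokens per second.

    `generate` is assumed to take (prompt, max_new_tokens) and return a
    sequence of generated tokens; adapt this to your library's real API.
    """
    start = time.perf_counter()
    tokens = generate(prompt, max_new_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt, max_new_tokens):
    """Placeholder backend: sleeps briefly per token instead of running a model."""
    output = []
    for i in range(max_new_tokens):
        time.sleep(0.001)  # stand-in for the time one real token would take
        output.append(i)
    return output


tps = measure_tokens_per_second(fake_generate, "Hello", 50)
print(f"{tps:.1f} tokens/second")
```

For a fair comparison, use the same prompt and token count for every run, and repeat the measurement a few times, since the first call often includes one-time loading costs.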
