Which is Better for AI Development: NVIDIA A40 48GB or NVIDIA RTX 4000 Ada 20GB x4? Local LLM Token Speed Generation Benchmark

Introduction

The world of AI is buzzing with excitement! Large Language Models (LLMs) are becoming more powerful and accessible, opening up endless possibilities for developers to build innovative applications. But with this incredible potential comes a need for robust hardware to handle the computational demands of these models.

This article dives into the fascinating world of local LLM token speed generation, comparing two popular GPU options: the NVIDIA A40 48GB and a four-card NVIDIA RTX 4000 Ada 20GB setup (x4). We'll analyze performance benchmarks, delve into the pros and cons of each device, and provide practical recommendations for choosing the right tool for your AI projects. Buckle up, it's going to be a wild ride through the realm of AI hardware!

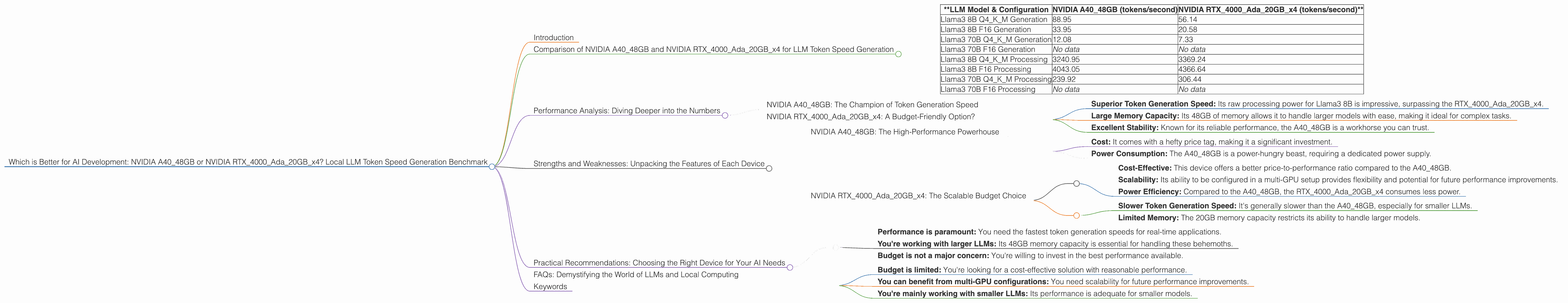

Comparison of NVIDIA A40 48GB and NVIDIA RTX 4000 Ada 20GB x4 for LLM Token Speed Generation

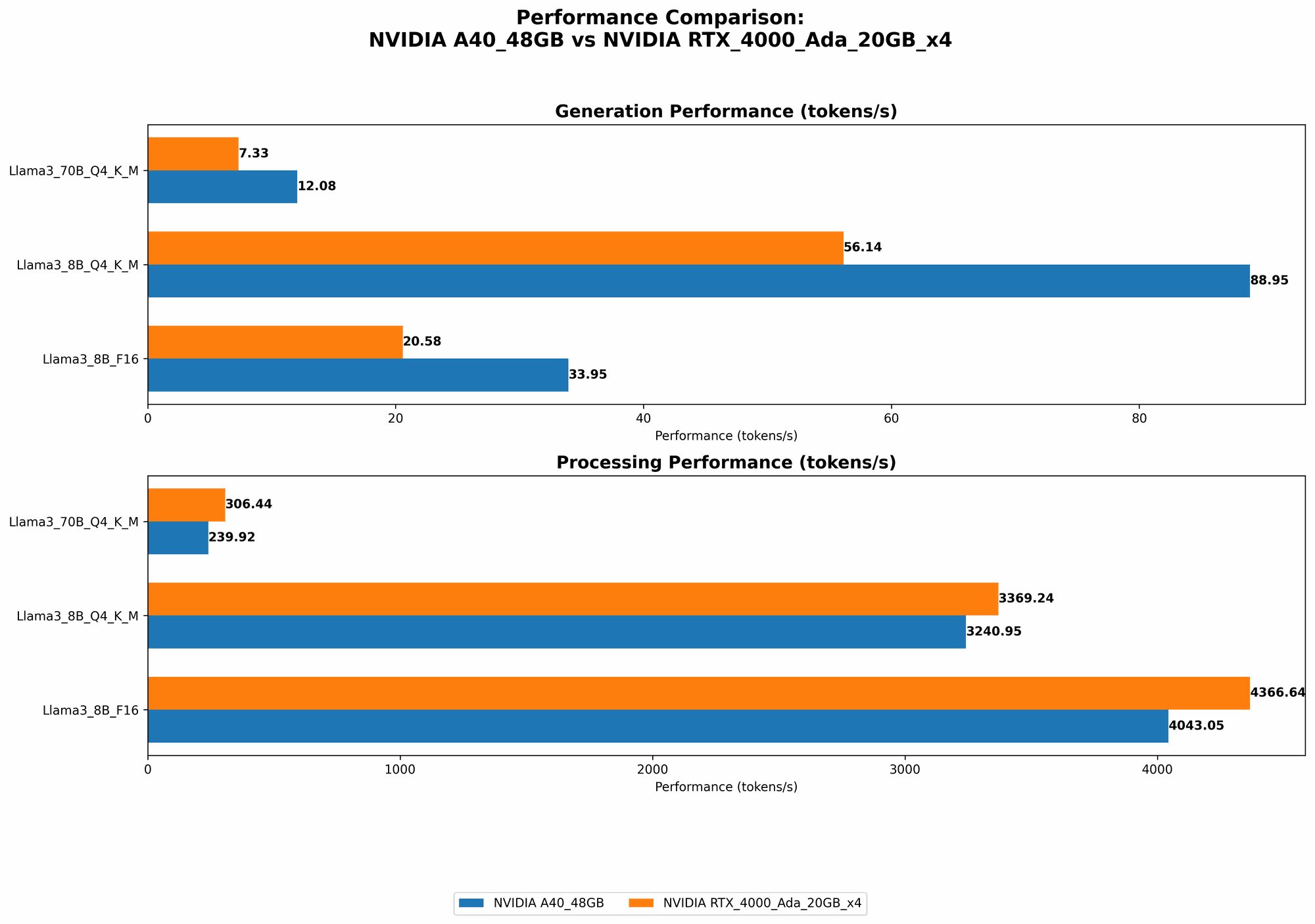

Let's jump right into the heart of the matter: comparing the performance of these two GPUs for local LLM token speed generation. We'll be focusing on the speed at which these devices can generate tokens, which is a crucial metric for real-time applications like chatbots, text generation, and code completion.
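If you want to measure this metric on your own hardware, here is a minimal sketch of a timing helper. It assumes your model exposes some streaming generator; `fake_generate` below is a hypothetical stand-in, not a real library call, and the benchmark figures in this article were not produced with this code.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Run generate() once and return tokens emitted per wall-clock second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate() -> Iterable[str]:
    # Hypothetical stand-in for a real model's streaming output.
    for i in range(50):
        time.sleep(0.001)  # pretend each token takes about 1 ms
        yield f"tok{i}"


rate = tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/second")
```

Swap `fake_generate` for a function that streams tokens from your actual model, and run it several times to average out noise.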

Here's a breakdown of our data, showcasing tokens per second (tokens/second) for various LLM configurations:

| LLM Model & Configuration | NVIDIA A40 48GB (tokens/second) | NVIDIA RTX 4000 Ada 20GB x4 (tokens/second) |
|---|---|---|
| Llama3 8B Q4_K_M Generation | 88.95 | 56.14 |
| Llama3 8B F16 Generation | 33.95 | 20.58 |
| Llama3 70B Q4_K_M Generation | 12.08 | 7.33 |
| Llama3 70B F16 Generation | No data | No data |
| Llama3 8B Q4_K_M Processing | 3240.95 | 3369.24 |
| Llama3 8B F16 Processing | 4043.05 | 4366.64 |
| Llama3 70B Q4_K_M Processing | 239.92 | 306.44 |
| Llama3 70B F16 Processing | No data | No data |

What's the deal with these fancy terms?

- Q4_K_M: A 4-bit quantization format that shrinks the model's weights, reducing memory use and usually speeding up generation at some cost in accuracy.

- F16: 16-bit floating-point weights, with no quantization applied. The model is larger and generates tokens more slowly than the Q4_K_M version, as the table shows.

- Generation: The speed at which the model produces new output tokens, which is what you feel in applications like chatbots or text completion.

- Processing: The speed at which the model reads the input prompt before it starts generating. Prompt tokens are handled in parallel, which is why these numbers are so much higher than the generation numbers.
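To get a feel for how much the weight format matters, here is a rough back-of-the-envelope sketch of weight size. The parameter counts are nominal (8 and 70 billion) and the 4.8 bits per weight for the 4-bit format is an approximation assumed for illustration; real files differ somewhat, and the estimate ignores the extra memory needed for context.

```python
# Assumed values for illustration only, not measured figures.
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "F16": 16.0}   # Q4_K_M value is approximate
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}  # nominal parameter counts


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Estimate weight storage in GB (1 GB = 1e9 bytes), weights only."""
    return params * bits_per_weight / 8 / 1e9


for model, n_params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(n_params, bits)
        print(f"{model} {fmt}: ~{size:.0f} GB")
```

Under these assumptions, Llama3 70B in F16 needs roughly 140 GB for weights alone, more than either the 48GB card or the 80GB available across four 20GB cards. That may be why the 70B F16 rows show no data.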

Performance Analysis: Diving Deeper into the Numbers

Now that we have some raw data, let's analyze it to see what it tells us about the performance capabilities of these two powerhouses!

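NVIDIA A40 48GB: The Champion of Token Generation Speed

For token generation, the A40 48GB is the clear winner in every configuration we have data for. On Llama3 8B it produces 88.95 tokens/second in Q4_K_M versus 56.14 for the RTX 4000 Ada 20GB x4 (about 58% faster), and 33.95 versus 20.58 in F16 (about 65% faster). On Llama3 70B Q4_K_M it leads 12.08 to 7.33. This means that the A40 48GB can generate tokens at a much faster rate, leading to smoother and more responsive real-time applications. Prompt processing is a different story: there the four-card setup is slightly ahead.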
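If you'd like to check the gaps yourself, this small sketch computes the A40-to-RTX ratio for each row of the benchmark table that has data. The numbers are copied from the table; the variable names are just for illustration.

```python
# (A40 48GB, RTX 4000 Ada 20GB x4) in tokens/second, copied from the table.
results = {
    "Llama3 8B Q4_K_M Generation": (88.95, 56.14),
    "Llama3 8B F16 Generation": (33.95, 20.58),
    "Llama3 70B Q4_K_M Generation": (12.08, 7.33),
    "Llama3 8B Q4_K_M Processing": (3240.95, 3369.24),
    "Llama3 8B F16 Processing": (4043.05, 4366.64),
    "Llama3 70B Q4_K_M Processing": (239.92, 306.44),
}

# A ratio above 1 means the A40 is faster; below 1 means the RTX setup is.
ratios = {name: a40 / rtx for name, (a40, rtx) in results.items()}

for name, ratio in ratios.items():
    leader = "A40 48GB" if ratio > 1 else "RTX 4000 Ada 20GB x4"
    print(f"{name}: ratio {ratio:.2f} ({leader} faster)")
```

The pattern is consistent: every Generation row comes out above 1 and every Processing row below 1.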

NVIDIA RTX 4000 Ada 20GB x4: A Budget-Friendly Option?

While the A40 48GB takes the crown for token generation speed, the RTX 4000 Ada 20GB x4 has its own advantages. It's a more cost-effective solution, offering decent performance with a lower price tag, and it edges out the A40 48GB in prompt processing in every configuration we have data for (for example, 306.44 versus 239.92 tokens/second on Llama3 70B Q4_K_M).

Consider this: the RTX 4000 Ada 20GB numbers in our data come from a four-GPU setup (x4), which pools 80GB of memory across the cards and leaves room to add or remove cards later. This makes it an attractive option if you're working within a tighter budget or if its performance is enough for your needs.
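One rough way to judge whether a device's performance fits your needs is to turn the two rates into an estimated response time: prompt tokens divided by the processing rate, plus output tokens divided by the generation rate. The sketch below uses the Llama3 8B 4-bit figures from the table. The workload sizes are hypothetical, and it assumes both rates stay constant regardless of prompt length, which is a simplification.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimate total seconds: time to read the prompt plus time to write the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama3 8B 4-bit rates (tokens/second) from the benchmark table.
devices = {
    "A40 48GB": {"processing_tps": 3240.95, "generation_tps": 88.95},
    "RTX 4000 Ada 20GB x4": {"processing_tps": 3369.24, "generation_tps": 56.14},
}

# Hypothetical workloads: (prompt tokens, output tokens).
workloads = {"short chat": (200, 300), "long-document summary": (6000, 100)}

estimates = {}
for workload, (n_in, n_out) in workloads.items():
    for device, rates in devices.items():
        secs = response_seconds(n_in, n_out, **rates)
        estimates[(workload, device)] = secs
        print(f"{workload} on {device}: ~{secs:.1f} s")
```

Because generation is so much slower than prompt processing on both devices, the generation rate tends to dominate the total unless the prompt is very long and the reply very short.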

Strengths and Weaknesses: Unpacking the Features of Each Device

NVIDIA A40 48GB: The High-Performance Powerhouse

Strengths:

- Superior Token Generation Speed: Its generation speed on Llama3 8B is impressive, surpassing the RTX 4000 Ada 20GB x4 by roughly 58% to 65% in our data.

- Large Memory Capacity: Its 48GB of memory allows it to handle larger models with ease, making it ideal for complex tasks.

- Excellent Stability: Known for its reliable performance, the A40 48GB is a workhorse you can trust.

Weaknesses:

- Cost: It comes with a hefty price tag, making it a significant investment.

- Power Consumption: The A40 48GB is a power-hungry beast, so your system needs a power supply and cooling that can keep up with it.

NVIDIA RTX 4000 Ada 20GB x4: The Scalable Budget Choice

Strengths:

- Cost-Effective: This setup offers a better price-to-performance ratio compared to the A40 48GB.

- Scalability: Its ability to be configured in a multi-GPU setup provides flexibility and potential for future performance improvements.

- Power Efficiency: Each RTX 4000 Ada 20GB card consumes less power than the A40 48GB, though you should check the combined draw of all four cards against your power budget.

Weaknesses:

- Slower Token Generation Speed: It's slower than the A40 48GB at generating tokens in every configuration we have data for.

- Limited Per-Card Memory: Each card has only 20GB, so any model larger than that must be split across multiple cards.

Practical Recommendations: Choosing the Right Device for Your AI Needs

Now comes the fun part: deciding which device is best for your AI project! Here's a simplified guide to help you choose:

Go for the A40 48GB if:

- Performance is paramount: You need the fastest token generation speeds for real-time applications.

- You're working with larger LLMs: Its 48GB memory capacity is essential for handling these behemoths.

- Budget is not a major concern: You're willing to invest in the best performance available.

Consider the RTX 4000 Ada 20GB x4 if:

- Budget is limited: You're looking for a cost-effective solution with reasonable performance.

- You can benefit from multi-GPU configurations: You need scalability for future performance improvements.

- You're mainly working with smaller LLMs: Its performance is adequate for smaller models.

Imagine this:

Think of the A40 48GB as a Formula One race car: blazing-fast, luxurious, but expensive. The RTX 4000 Ada 20GB x4 is more like a well-tuned sports car; not as blazing-fast, but still powerful and affordable.

FAQs: Demystifying the World of LLMs and Local Computing

Q. What are LLMs?

LLMs are massive AI models that can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Think of them as the ultimate language wizards!

Q. Why are LLMs so exciting?

They're revolutionizing the way we interact with computers and information. They can be used to build intelligent chatbots, personalize user experiences, automate tasks, and even create art.

Q. What is quantization?

Imagine you have a massive book filled with information. Quantization is like creating a simplified version of that book, removing unnecessary details while preserving the core information. This makes the book smaller and easier to carry, but you might lose some nuance. In the case of LLMs, quantization helps to reduce the model size, making it faster and more efficient for specific tasks.
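To make the idea concrete, here is a toy sketch of 4-bit quantization using simple symmetric round-to-nearest. This is a deliberately simplified illustration, not the scheme used by the 4-bit format in the benchmark above, and the sample weights are made up.

```python
def quantize_4bit(weights):
    """Map floats to integers in [-7, 7] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-7, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the small integers."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.99, -0.07, 0.31]  # made-up example values
quantized, scale = quantize_4bit(weights)
restored = dequantize(quantized, scale)
errors = [abs(w - r) for w, r in zip(weights, restored)]

print("quantized:", quantized)
print("restored: ", [round(r, 3) for r in restored])
print("max error:", round(max(errors), 4))
```

Each weight is now stored as a small integer plus one shared scale, which is where the size savings come from. The rounding error is the "lost nuance" from the book analogy.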

Q. What about cloud-based LLMs?

Cloud-based LLMs offer many advantages, including scalability and access to powerful hardware. But running LLMs locally can be more cost-effective in some cases and offer greater control over your environment.

Keywords

LLM, large language model, AI, NVIDIA A40 48GB, NVIDIA RTX 4000 Ada 20GB x4, GPU, token speed, generation, processing, quantization, F16, performance, benchmark, AI development, local, cloud, chatbot, text generation, code completion, cost-effective, scalability, power consumption, AI hardware