Which is Better for AI Development: NVIDIA 4090 24GB x2 or NVIDIA RTX 6000 Ada 48GB? Local LLM Token Speed Generation Benchmark

Introduction

In the ever-evolving world of AI, Large Language Models (LLMs) are taking center stage. These models, capable of generating human-like text, translating languages, and even writing code, are changing how many industries work. To get the most out of LLMs, developers need hardware that can run them efficiently. This article compares two popular GPU configurations, NVIDIA 4090 24GB x2 and NVIDIA RTX 6000 Ada 48GB, focusing on their token generation and token processing speeds for local LLMs. We'll use benchmark data to analyze each setup's strengths and weaknesses and help you choose the best hardware for your AI development projects. So, fasten your seatbelts, fellow AI enthusiasts: it's time for a performance showdown!

The Battle of the Titans: NVIDIA 4090 24GB x2 vs. NVIDIA RTX 6000 Ada 48GB

Imagine you have a massive AI model, like a super smart robot, that needs rapid-fire thinking to process information. Each "thought" is a token, and your GPU, the robot's brain, needs to crank them out quickly. This is where our two contestants, NVIDIA 4090 24GB x2 and NVIDIA RTX 6000 Ada 48GB, step into the ring!

A Closer Look at the Contenders

- NVIDIA 4090 24GB x2: This setup pairs two of the most powerful consumer GPUs on the market. Each RTX 4090 offers high compute throughput and 24GB of memory, and the "x2" configuration brings the total to 48GB, split across the two cards.

- NVIDIA RTX 6000 Ada 48GB: While not as "flashy" as the 4090, the RTX 6000 Ada is a workstation card designed for professional workloads. With 48GB of GDDR6 memory on a single card, it's built for large datasets and complex models.

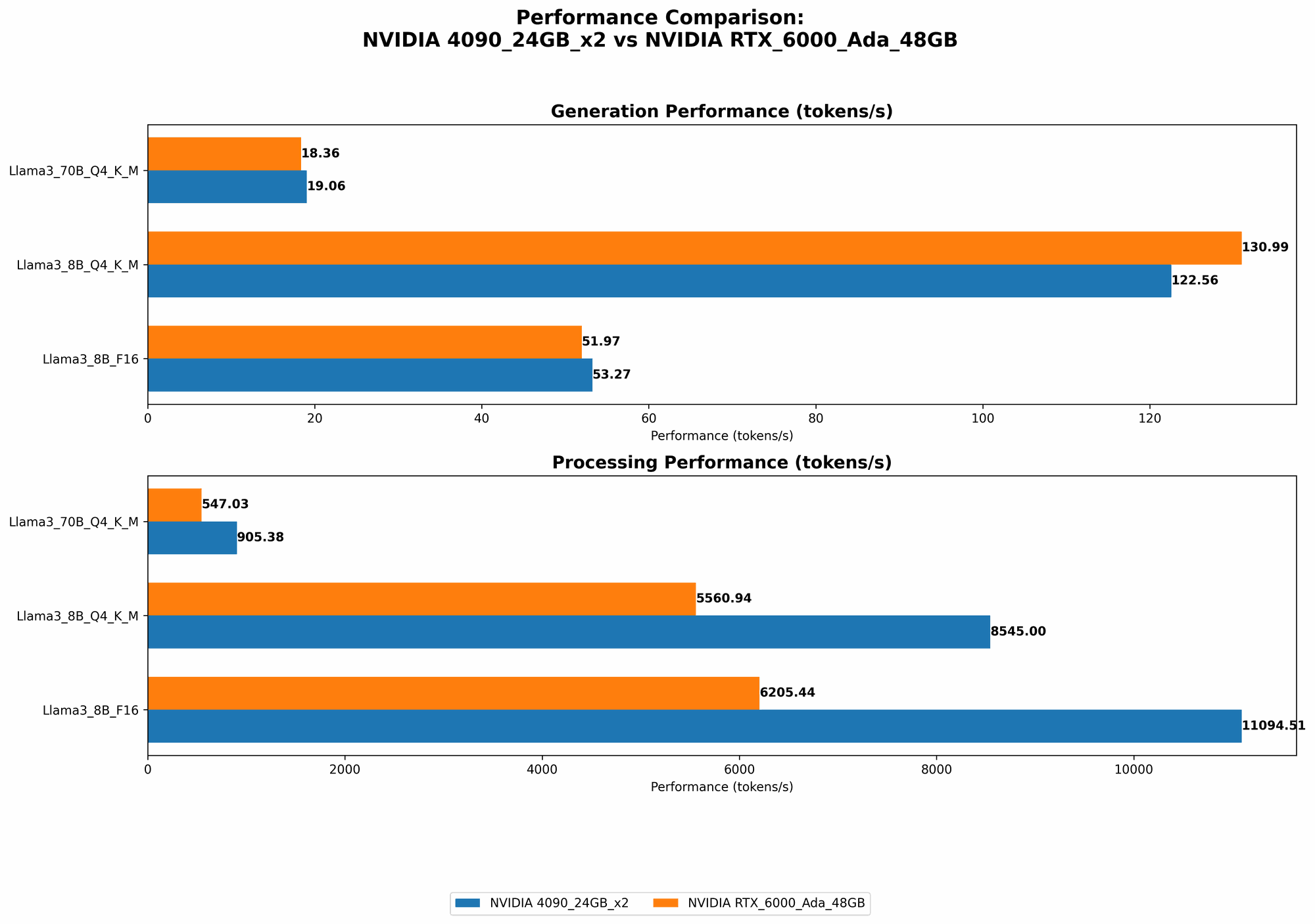

Performance Analysis: Token Speed Generation [Llama3 Models]

Let's dissect these GPUs on their ability to process tokens, the fundamental building blocks of language models. The numbers used in our analysis are tokens per second (tokens/sec) and are based on benchmarks from prominent sources.

Llama3 Token Speed Generation: A Head-to-Head Comparison

| Model | NVIDIA 4090 24GB x2 (tokens/sec) | NVIDIA RTX 6000 Ada 48GB (tokens/sec) |
|---|---|---|
| Llama 3 8B Q4_K_M Generation | 122.56 | 130.99 |
| Llama 3 8B F16 Generation | 53.27 | 51.97 |
| Llama 3 70B Q4_K_M Generation | 19.06 | 18.36 |
| Llama 3 70B F16 Generation | N/A | N/A |

- Llama 3 8B Q4_K_M Generation: The RTX 6000 Ada 48GB takes the lead, at 130.99 vs. 122.56 tokens/sec (about 7% faster). The advantage isn't massive, but the RTX 6000 Ada holds a slight edge on this smaller quantized model.

- Llama 3 8B F16 Generation: Here the 4090 24GB x2 is slightly ahead, at 53.27 vs. 51.97 tokens/sec (about 2.5%). Note that F16 is half-precision (16-bit) floating point rather than a quantization scheme, while Q4_K_M is a 4-bit "k-quant" format (the M marks the medium variant). The best GPU may therefore depend on the weight format you run, although gaps this small could fall within run-to-run variation.

- Llama 3 70B Q4_K_M Generation: For the larger model the 4090 24GB x2 is narrowly ahead, at 19.06 vs. 18.36 tokens/sec (about 4%).

- Llama 3 70B F16 Generation: No data is available for either configuration. A 70B model in F16 needs roughly 140GB for its weights alone (70 billion parameters at 2 bytes each), far more than the 48GB either setup provides, so this scenario cannot be compared.
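To put the generation gaps in perspective, here is a minimal sketch that turns the table values into percentage leads. The function name `lead` and the dictionary layout are illustrative choices, not part of any benchmark tool.

```python
# Generation speeds (tokens/sec) copied from the table above.
# Tuple order: (4090 24GB x2, RTX 6000 Ada 48GB).
generation = {
    "Llama 3 8B Q4_K_M": (122.56, 130.99),
    "Llama 3 8B F16": (53.27, 51.97),
    "Llama 3 70B Q4_K_M": (19.06, 18.36),
}

def lead(dual_4090, rtx_6000):
    """Return (name of the faster setup, its percent lead over the slower one)."""
    if dual_4090 >= rtx_6000:
        return "4090 24GB x2", (dual_4090 - rtx_6000) / rtx_6000 * 100.0
    return "RTX 6000 Ada 48GB", (rtx_6000 - dual_4090) / dual_4090 * 100.0

for model, (dual_4090, rtx_6000) in generation.items():
    winner, percent = lead(dual_4090, rtx_6000)
    print(f"{model}: {winner} leads by {percent:.1f}%")
```

All three gaps come out in the single digits, which is worth keeping in mind before treating any one of them as decisive.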

Llama3 Token Processing: A Speed Showdown

| Model | NVIDIA 4090 24GB x2 (tokens/sec) | NVIDIA RTX 6000 Ada 48GB (tokens/sec) |
|---|---|---|
| Llama 3 8B Q4_K_M Processing | 8545.0 | 5560.94 |
| Llama 3 8B F16 Processing | 11094.51 | 6205.44 |
| Llama 3 70B Q4_K_M Processing | 905.38 | 547.03 |
| Llama 3 70B F16 Processing | N/A | N/A |

- Llama 3 8B Q4_K_M Processing: The 4090 24GB x2 clearly outperforms the RTX 6000 Ada 48GB, at 8545.0 vs. 5560.94 tokens/sec (about 1.5x). If you're using a smaller LLM and need fast processing of long inputs, the 4090 24GB x2 is the way to go.

- Llama 3 8B F16 Processing: The 4090 24GB x2 widens its lead with F16 weights, at 11094.51 vs. 6205.44 tokens/sec (about 1.8x).

- Llama 3 70B Q4_K_M Processing: Even with the larger model, the 4090 24GB x2 stays well ahead, at 905.38 vs. 547.03 tokens/sec (about 1.7x).

- Llama 3 70B F16 Processing: Data is unavailable for both configurations, so no comparison is possible.
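The two tables can be combined into a rough end-to-end estimate. The sketch below assumes that the Processing figure measures how fast the prompt is read and that both rates stay constant for the whole request, which real workloads only approximate. The workload size (2,000 prompt tokens, 300 output tokens) and the name `request_seconds` are hypothetical.

```python
# Speeds in tokens/sec copied from the two tables: (processing, generation).
SPEEDS = {
    "4090 24GB x2": {
        "Llama 3 8B Q4_K_M": (8545.0, 122.56),
        "Llama 3 70B Q4_K_M": (905.38, 19.06),
    },
    "RTX 6000 Ada 48GB": {
        "Llama 3 8B Q4_K_M": (5560.94, 130.99),
        "Llama 3 70B Q4_K_M": (547.03, 18.36),
    },
}

def request_seconds(processing_tps, generation_tps, prompt_tokens, output_tokens):
    """Estimate wall-clock seconds: read the prompt, then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# Hypothetical workload: a 2,000-token prompt answered with 300 tokens.
PROMPT_TOKENS, OUTPUT_TOKENS = 2000, 300

for gpu, models in SPEEDS.items():
    for model, (processing_tps, generation_tps) in models.items():
        seconds = request_seconds(processing_tps, generation_tps,
                                  PROMPT_TOKENS, OUTPUT_TOKENS)
        print(f"{gpu:18s} {model:20s} {seconds:6.1f} s")
```

Because generation is so much slower per token than processing, output length tends to dominate the total unless the prompt is very long. Try different values for `PROMPT_TOKENS` and `OUTPUT_TOKENS` to see where the processing advantage starts to matter for your workload.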

Interpreting the Results: A Deep Dive into the Numbers

So, which GPU emerges as the champion of token speed? It's not a clear-cut victory! Both devices excel in different areas, making the choice more about your specific needs than a universally superior option.

Strengths of NVIDIA 4090 24GB x2

- Unmatched Processing Power: The 4090 24GB x2 shines at token processing, running about 1.5x to 1.8x faster than the RTX 6000 Ada 48GB across the Llama 3 8B and Llama 3 70B results. Two GPUs working together handle heavy prompt workloads with ease.

- F16 Advantage: The 4090 24GB x2 leads on Llama 3 8B in F16 for both processing (by a wide margin) and generation (narrowly), so it is a good fit if you run unquantized half-precision weights.

Strengths of NVIDIA RTX 6000 Ada 48GB

- Massive Memory Capacity: The 48GB of GDDR6 memory on the RTX 6000 Ada 48GB sits on one card, so a large model can be loaded without being split across GPUs. The 4090 24GB x2 also totals 48GB, but as two separate 24GB pools.

- Q4_K_M Generation Efficiency: The RTX 6000 Ada 48GB generates tokens about 7% faster on Llama 3 8B Q4_K_M. On Llama 3 70B Q4_K_M the two are nearly tied, with the 4090 24GB x2 slightly ahead.

Choosing the Right Weapon: Practical Recommendations for AI Development

Now that we've dissected the performance of our contenders, how do you choose the best GPU for your projects?

When to Choose NVIDIA 4090 24GB x2

- High-Speed Token Processing: If you prioritize token processing speed, the 4090 24GB x2 is your best bet. It was the faster option for both the 8B and 70B models.

- F16 Preference: If you run models in F16 (half precision), the 4090 24GB x2 shows the better results in this benchmark.

When to Choose NVIDIA RTX 6000 Ada 48GB

- Working with Massive Models: If you're dealing with large LLMs, having all 48GB on a single RTX 6000 Ada 48GB is convenient, since the model does not have to be split across two 24GB cards. Keep in mind that total capacity is the same in both setups, and that the 70B Q4_K_M benchmark ran on both.

- Q4_K_M Generation Focus: If you mostly generate text with small Q4_K_M models, the RTX 6000 Ada 48GB had the edge on Llama 3 8B. For Llama 3 70B Q4_K_M, generation speed was close to a tie.

- Budget Considerations: Don't assume the single card is the cheaper route. The RTX 6000 Ada is a workstation product that has typically been priced well above a pair of RTX 4090s, so compare current prices, power draw, and case and power-supply requirements before deciding.

Beyond the Numbers: Quantization & Performance Considerations

Now that we've reviewed the token speeds, let's delve deeper into some crucial performance factors:

What is Quantization?

Quantization is a technique for reducing the size of AI models without sacrificing too much accuracy. It's like taking a high-resolution image and compressing it into a smaller file size. With quantization, you're essentially reducing the number of bits used to represent the model's parameters, saving memory and speeding up processing.
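As a rough illustration of "fewer bits per parameter", the sketch below quantizes a small block of weights to 4-bit signed integers with one shared scale and then restores them. This is a deliberately simplified symmetric scheme, not the actual Q4_K_M format, and the function names and sample weights are made up for the example.

```python
def quantize_block(weights, bits=4):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit values
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]

# Made-up weights, standing in for a tiny slice of a model.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("integers :", quantized)
print("restored :", [round(r, 3) for r in restored])
print("max error:", round(max_error, 4))
```

Each weight now needs only 4 bits plus a share of the per-block scale, at the cost of a rounding error of at most half the scale.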

Quantization Explained: A Real-World Analogy

Imagine a dictionary with millions of words. Each word is like a parameter in the AI model. You could represent each word with its full spelling (using many bits), or you could use abbreviations (fewer bits). Using abbreviations would save space and make it easier to look up words, much like quantization makes AI models smaller and faster!

Impact of Quantization on Performance

Quantization can significantly affect performance. The weight format (e.g., 4-bit Q4_K_M versus unquantized 16-bit F16) and the model's size determine how much. In the generation table above, Llama 3 8B runs more than twice as fast in Q4_K_M as in F16 on both configurations, but the processing table shows the opposite trend, with F16 faster than Q4_K_M. Lower precision can also cost some accuracy.
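A back-of-the-envelope memory estimate shows why the weight format matters so much for what fits on a GPU. The sketch below uses nominal parameter counts (8 and 70 billion) and assumes roughly 4.85 bits per weight for Q4_K_M, which is an approximation rather than a figure from the benchmark. It counts weights only and ignores the KV cache and other runtime overhead.

```python
# Approximate bits per weight for each format.
# F16 is exactly 16; the Q4_K_M value is an assumed average, not an exact figure.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

VRAM_GB = 48  # total memory in both configurations compared here

def weight_gb(params_billion, bits_per_weight):
    """Weights-only size in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8

def fits(params_billion, bits_per_weight, vram_gb):
    """True if the weights alone fit; real usage needs extra headroom."""
    return weight_gb(params_billion, bits_per_weight) <= vram_gb

for params in (8, 70):
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_gb(params, bits)
        verdict = "fits" if fits(params, bits, VRAM_GB) else "does not fit"
        print(f"Llama 3 {params}B {fmt}: ~{size:.1f} GB, {verdict} in {VRAM_GB} GB")
```

Under these assumptions the 70B model in F16 needs about 140 GB, which is consistent with the N/A entries in the benchmark tables, while the Q4_K_M version squeezes under 48 GB with little room to spare.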

Beyond Token Generation: Other Performance Factors

Token generation speed isn't the be-all and end-all of LLM performance. Here are some additional factors to consider:

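- Memory Bandwidth: The speed at which the GPU can read data from its own video memory (VRAM) is crucial for token generation, because the model's weights have to be read for every generated token. Transfers between the GPU and system RAM matter mainly for model loading. Higher memory bandwidth can significantly improve generation speed.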

- Parallelism: The number of parallel processing units (cores) in a GPU determines how many tasks it can perform simultaneously. More cores generally mean faster processing speeds.
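The memory bandwidth point can be made concrete with a simple ceiling estimate: if every generated token requires reading all of the weights once, tokens per second cannot exceed bandwidth divided by model size. The bandwidth figures below are assumed from vendor spec sheets and are not part of the benchmark data. The sketch also assumes that a 16 GB model on the dual-4090 setup is limited by a single card's bandwidth.

```python
# Assumed peak memory bandwidth in GB/s (spec-sheet values, not benchmark data).
BANDWIDTH_GBPS = {"4090 24GB x2": 1008.0, "RTX 6000 Ada 48GB": 960.0}

# Measured Llama 3 8B F16 generation speeds (tokens/sec) from the table above.
MEASURED_TPS = {"4090 24GB x2": 53.27, "RTX 6000 Ada 48GB": 51.97}

# Nominal weight size in GB: 8 billion parameters x 2 bytes each (F16).
MODEL_GB = 8 * 2

def ceiling_tps(bandwidth_gbps, model_gb):
    """Upper bound on tokens/sec if each token reads all weights once."""
    return bandwidth_gbps / model_gb

for gpu, bandwidth in BANDWIDTH_GBPS.items():
    ceiling = ceiling_tps(bandwidth, MODEL_GB)
    share = MEASURED_TPS[gpu] / ceiling
    print(f"{gpu}: ceiling {ceiling:.1f} tok/s, "
          f"measured {MEASURED_TPS[gpu]} tok/s ({share:.0%} of ceiling)")
```

With these assumed numbers the two cards have similar ceilings, which would help explain why their generation speeds are so close even though their processing speeds differ widely.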

Conclusion

Choosing the right GPU for LLM development isn't a one-size-fits-all decision. Both the NVIDIA 4090 24GB x2 and the NVIDIA RTX 6000 Ada 48GB offer unique capabilities.

The 4090 24GB x2 excels at token processing, especially with F16 weights. The RTX 6000 Ada 48GB, on the other hand, offers its 48GB on a single card, which simplifies running large models, and it generates tokens slightly faster on Llama 3 8B Q4_K_M.

Ultimately, the best GPU for you depends on your specific project requirements, model size, quantization preferences, and budget. No matter your choice, be prepared to be amazed by the power and potential of LLMs running on these cutting-edge GPUs!

FAQ: Unraveling LLM and GPU Mysteries

What are LLMs, and why are they so important?

Large Language Models (LLMs) are a type of artificial intelligence that can understand and generate human-like text. They're used in a wide range of applications, such as:

- Chatbots: Engaging in natural-sounding conversations.

- Translation: Translating languages with high accuracy.

- Writing: Generating creative content, articles, and even code.

LLMs are revolutionizing the way we interact with technology and are opening up new possibilities for automation, creativity, and knowledge discovery.

Why do I need a powerful GPU for LLMs?

LLMs are incredibly complex models that require massive computational power to function. GPUs, with their parallel processing capabilities and large memory capacities, are ideal for handling this task. They accelerate model training and inference (the process of running a model on new data), making it possible to use LLMs effectively.

What does "tokens/second" mean?

"Tokens/second" is a measure of how fast a GPU can process individual tokens of text. Think of tokens as building blocks of language: words, pieces of words, punctuation marks, and other units of meaning. In the benchmarks above, the rates range from under 20 tokens per second when generating with Llama 3 70B to more than 11,000 tokens per second when processing with Llama 3 8B.

What is the difference between "Generation" and "Processing" in the benchmark data?

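- Generation: The GPU is running the LLM to produce new text, one token at a time, in response to a prompt.

- Processing: The GPU is running the LLM over text that already exists, such as the prompt itself, before any output is produced. Because all of those tokens are known up front, they can be handled in parallel, which is why the processing rates are far higher than the generation rates. Tasks with long inputs, such as translation or question answering over documents, depend heavily on this number.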

Keywords

LLMs, Large Language Models, NVIDIA 4090, NVIDIA 4090 24GB, NVIDIA RTX 6000, NVIDIA RTX 6000 Ada, NVIDIA RTX 6000 Ada 48GB, AI, Artificial Intelligence, Token Speed, Token Generation, Token Processing, Quantization, Q4_K_M, F16, GPU, Graphics Processing Unit, Performance Benchmark, AI Development, Local LLM Models, Hardware Comparison, Llama 3, Llama 3 8B, Llama 3 70B