Which is Better for AI Development: NVIDIA 4090 24GB or NVIDIA RTX 4000 Ada 20GB x4? Local LLM Token Speed Generation Benchmark

Introduction: Navigating the World of Local LLM Models

In the rapidly evolving world of artificial intelligence, large language models (LLMs) have become the stars of the show. These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way, making them incredibly useful for a wide range of applications.

However, running LLMs on a local machine can be challenging due to their massive size and processing demands. For developers who want to experiment with LLMs, optimize their performance, and build custom AI applications, choosing the right hardware is crucial. This article takes a deep dive into the performance of two GPU configurations, a single NVIDIA 4090_24GB and a four-card NVIDIA RTX_4000_Ada_20GB_x4 setup, when running local LLM models.

Showdown: NVIDIA 4090_24GB vs. NVIDIA RTX_4000_Ada_20GB_x4

This battle pits a single, powerful GPU (the NVIDIA 4090_24GB) against a multi-GPU setup (the NVIDIA RTX_4000_Ada_20GB_x4). Both configurations have individual strengths and weaknesses. Let's dive into the numbers and see which one comes out on top!

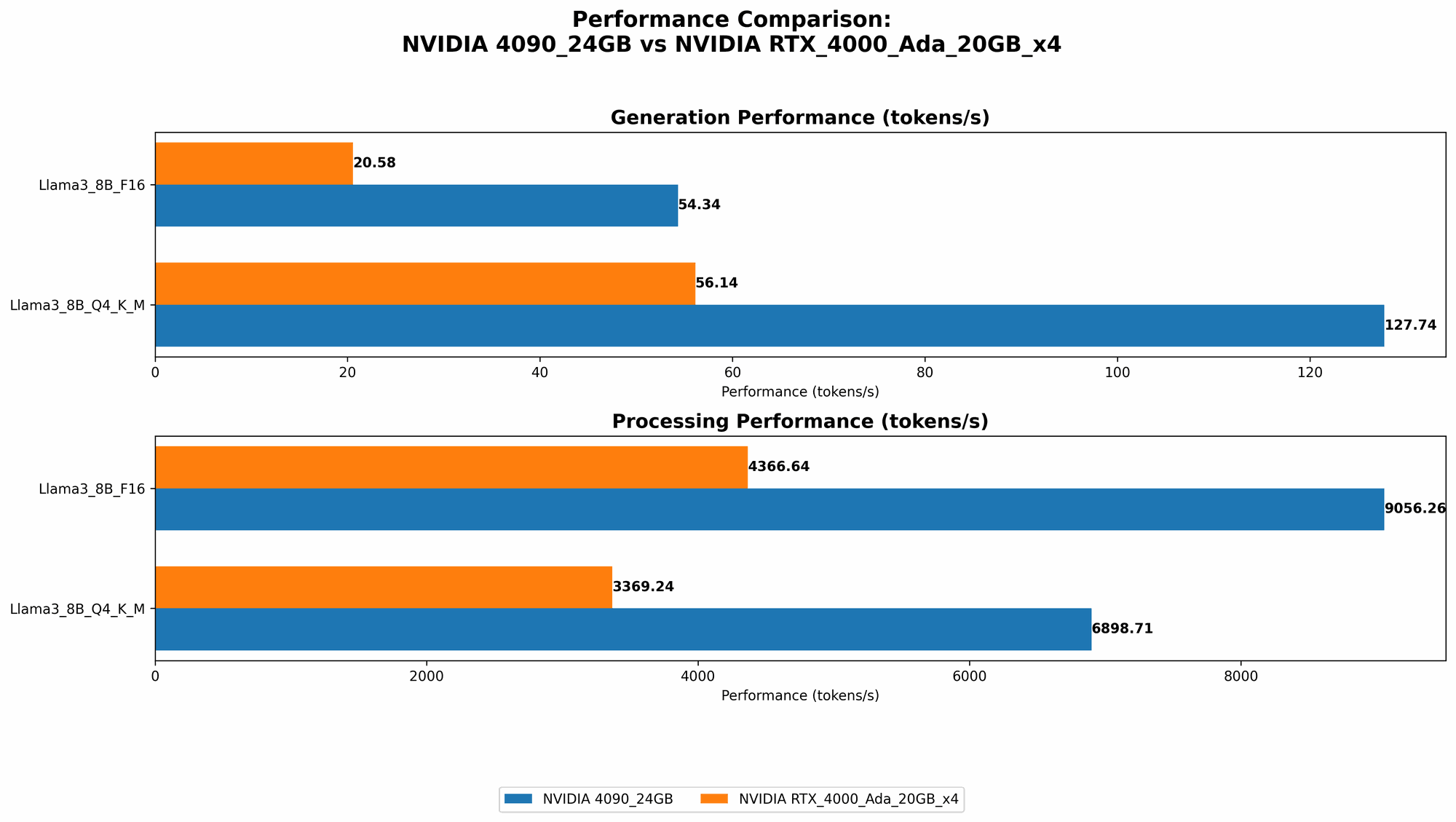

Performance Comparison: Token Speed Generation

To understand the performance of each configuration, we'll focus on token speed generation, a critical metric for evaluating LLM performance. Tokens are the basic units of text in LLMs, representing individual words or subwords. The speed at which a device can generate tokens directly translates to the speed of LLM inference, which is when the model processes input and produces output.

Here's a table summarizing the token speed generation benchmark results, in tokens per second, for different LLM models and weight formats (Q4_K_M and F16). N/A means no result is available for that combination:

| Configuration | LLM Model | Quantization | Tokens/second |
|---|---|---|---|
| NVIDIA 4090_24GB | Llama3_8B | Q4_K_M | 127.74 |
| NVIDIA 4090_24GB | Llama3_8B | F16 | 54.34 |
| NVIDIA 4090_24GB | Llama3_70B | Q4_K_M | N/A |
| NVIDIA 4090_24GB | Llama3_70B | F16 | N/A |
| NVIDIA RTX_4000_Ada_20GB_x4 | Llama3_8B | Q4_K_M | 56.14 |
| NVIDIA RTX_4000_Ada_20GB_x4 | Llama3_8B | F16 | 20.58 |
| NVIDIA RTX_4000_Ada_20GB_x4 | Llama3_70B | Q4_K_M | 7.33 |
| NVIDIA RTX_4000_Ada_20GB_x4 | Llama3_70B | F16 | N/A |

Key Observations:

- 4090_24GB Dominates for Llama3_8B: The NVIDIA 4090_24GB generates tokens about 2.3 times faster than the RTX_4000_Ada_20GB_x4 with Q4_K_M (127.74 vs. 56.14 tokens/second) and about 2.6 times faster with F16 (54.34 vs. 20.58). This suggests that for smaller LLMs, the raw power of a single high-end GPU can be more beneficial than a multi-GPU setup.

- 70B Model Performance: The NVIDIA RTX_4000_Ada_20GB_x4 can run Llama3_70B with Q4_K_M, at 7.33 tokens/second, while the 4090_24GB doesn't have enough memory to run it effectively. Neither configuration has a result for Llama3_70B in F16. This highlights the importance of memory capacity when dealing with larger LLMs: the multi-GPU setup's combined VRAM makes the larger model runnable, though at a much lower token speed than either configuration reaches on Llama3_8B.

- Quantization Trade-offs: The table shows the impact of quantization on performance. Q4_K_M is faster than F16 in every case where both were measured, but it comes with a potential decrease in accuracy. Q4_K_M stores each weight in roughly 4 bits, compared with 16 bits for F16, so the model is smaller and less data has to be read for each generated token. F16 keeps more precision at the cost of speed and memory.
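The ratios behind these observations can be recomputed directly from the table. The snippet below is a minimal sketch: the `RESULTS` dictionary and the `speedup` helper are names made up for this illustration, and the numbers are copied from the benchmark table, not measured by the code.

```python
# Tokens/second copied from the benchmark table (N/A rows are omitted).
# RESULTS and speedup() are illustrative names, not part of any tool.
RESULTS = {
    ("4090_24GB", "Llama3_8B", "Q4_K_M"): 127.74,
    ("4090_24GB", "Llama3_8B", "F16"): 54.34,
    ("RTX_4000_Ada_20GB_x4", "Llama3_8B", "Q4_K_M"): 56.14,
    ("RTX_4000_Ada_20GB_x4", "Llama3_8B", "F16"): 20.58,
    ("RTX_4000_Ada_20GB_x4", "Llama3_70B", "Q4_K_M"): 7.33,
}


def speedup(fast_key, slow_key):
    """Return how many times faster fast_key is than slow_key."""
    return RESULTS[fast_key] / RESULTS[slow_key]


# Single 4090 vs. four RTX 4000 Ada cards on Llama3_8B, per format.
for quant in ("Q4_K_M", "F16"):
    ratio = speedup(
        ("4090_24GB", "Llama3_8B", quant),
        ("RTX_4000_Ada_20GB_x4", "Llama3_8B", quant),
    )
    print(f"Llama3_8B {quant}: 4090_24GB is {ratio:.2f}x faster")

# Q4_K_M vs. F16 on the same hardware.
for config in ("4090_24GB", "RTX_4000_Ada_20GB_x4"):
    ratio = speedup((config, "Llama3_8B", "Q4_K_M"), (config, "Llama3_8B", "F16"))
    print(f"{config}: Q4_K_M is {ratio:.2f}x faster than F16")
```

Keeping the comparison as a ratio rather than a raw difference makes it easier to compare the gap across formats whose absolute speeds differ.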

Deep Dive into Performance Analysis

To understand the performance differences, we'll break down the factors contributing to the results:

GPU Architecture and Power

The NVIDIA 4090_24GB boasts a powerful architecture, delivering a significant performance advantage for the Llama3_8B model. The whole model fits in one card's VRAM, so no work has to be split across GPUs, and the card's processing power goes directly into token generation.

When it comes to larger models, the RTX_4000_Ada_20GB_x4 shines. Its multi-GPU setup provides the memory capacity needed to run Llama3_70B with Q4_K_M, at 7.33 tokens/second. The 4090_24GB simply doesn't have enough VRAM for this larger model.

Memory Considerations

The RTX_4000_Ada_20GB_x4 configuration with its multiple GPUs is the clear winner in terms of memory. Having 80GB of VRAM combined (20GB per GPU) allows it to run larger models like Llama3_70B with Q4_K_M. Conversely, the 4090_24GB's single 24GB of VRAM is insufficient for models of that size, limiting it to smaller models.
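A back-of-the-envelope estimate shows why the VRAM totals line up with the N/A entries in the table. The sketch below multiplies parameter count by bits per weight. It rests on several assumptions that are not from the benchmark: nominal parameter counts of 8 and 70 billion, an average of about 4.85 bits per weight for Q4_K_M (an approximate figure, since the format mixes precisions), and no allowance for the KV cache, activations, or runtime overhead, all of which need additional memory in practice.

```python
# Rough weight-memory estimate. All constants below are assumptions for
# illustration; real usage is higher (KV cache, activations, overhead).
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}    # Q4_K_M value is approximate
PARAMS_BILLION = {"Llama3_8B": 8.0, "Llama3_70B": 70.0}  # nominal sizes
VRAM_GB = {"4090_24GB": 24.0, "RTX_4000_Ada_20GB_x4": 80.0}


def weight_gb(model, quant):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return PARAMS_BILLION[model] * BITS_PER_WEIGHT[quant] / 8.0


def weights_fit(model, quant, config):
    """True if the weights alone are smaller than the total VRAM."""
    return weight_gb(model, quant) < VRAM_GB[config]


for model in PARAMS_BILLION:
    for quant in BITS_PER_WEIGHT:
        size = weight_gb(model, quant)
        fits = [c for c in VRAM_GB if weights_fit(model, quant, c)]
        print(f"{model} {quant}: ~{size:.1f} GB of weights, fits in: {fits or 'neither'}")
```

Under these assumptions, Llama3_70B needs roughly 140 GB of weights in F16, more than either configuration offers, and roughly 42 GB in Q4_K_M, which exceeds 24GB but fits within 80GB. That matches the pattern of results in the table.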

The Importance of Quantization

Quantization is a technique that helps to optimize models for faster inference and memory efficiency by using a reduced representation of model weights. The two formats in this benchmark, Q4_K_M and F16, sit at different points on the trade-off between speed and accuracy.

- Q4_K_M: This method stores each model weight in roughly 4 bits, resulting in a smaller model and faster inference. However, it can lead to a decrease in model accuracy. Imagine compressing an image by reducing its resolution; you'll get a smaller file size, but potentially a loss of detail.

- F16: This format stores each weight as a 16-bit floating-point number, four times as many bits as a 4-bit format. This allows for higher accuracy, but it comes at the cost of speed and memory.

The performance results show that Q4_K_M leads to faster token speeds in every measured case, but it's important to consider the potential impact on model accuracy. F16, while offering lower token speeds, can provide better accuracy for certain applications.
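To make the accuracy trade-off concrete, here is a toy example of 4-bit quantization. It is a deliberately simplified symmetric scheme with one scale per block, written for illustration only. It is not the Q4_K_M algorithm, which is more elaborate, and the function names and sample weights are invented.

```python
# Toy symmetric 4-bit quantization of one block of weights.
# This is NOT Q4_K_M; it only illustrates the rounding error that any
# low-bit format introduces.

def quantize_block(values, bits=4):
    """Map floats to signed integer codes in [-qmax, qmax] plus one scale."""
    qmax = 2 ** (bits - 1) - 1                       # 7 for 4 bits
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.07, 0.91, -0.28, 0.44, -0.70, 0.02]  # made-up values
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 3) for r in restored])
print("largest rounding error:", round(max_error, 4))
```

Each restored weight lands within half a quantization step of the original. Fewer bits mean larger steps, which is where the potential accuracy loss comes from.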

Practical Recommendations for Use Cases

Choosing the right configuration for your LLM development depends on your specific use case:

- Small Model Experiments: Need speed and efficiency for experimenting with smaller models like Llama3_8B? The NVIDIA 4090_24GB is a formidable choice, offering the highest token speeds in this benchmark.

- Large Model Workloads: Planning to work with large models like Llama3_70B? The NVIDIA RTX_4000_Ada_20GB_x4, with its ample combined memory, is the only one of the two configurations with a result for this model (7.33 tokens/second with Q4_K_M).

- Balancing Accuracy and Performance: Need the best accuracy and have a model that fits in memory? F16 keeps 16-bit weights at the cost of lower token speed (54.34 vs. 127.74 tokens/second for Llama3_8B on the 4090_24GB). If speed or memory matters more, Q4_K_M is the better choice, with a potential loss of accuracy.
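These recommendations amount to a lookup over the benchmark table: for a given model and format, pick the configuration with the highest measured token speed, if any. A minimal sketch follows, with the table values copied in and `None` standing for N/A; `BENCHMARK` and `fastest_config` are hypothetical names for this example.

```python
# Benchmark table as data; None marks combinations with no result (N/A).
BENCHMARK = {
    ("4090_24GB", "Llama3_8B", "Q4_K_M"): 127.74,
    ("4090_24GB", "Llama3_8B", "F16"): 54.34,
    ("4090_24GB", "Llama3_70B", "Q4_K_M"): None,
    ("4090_24GB", "Llama3_70B", "F16"): None,
    ("RTX_4000_Ada_20GB_x4", "Llama3_8B", "Q4_K_M"): 56.14,
    ("RTX_4000_Ada_20GB_x4", "Llama3_8B", "F16"): 20.58,
    ("RTX_4000_Ada_20GB_x4", "Llama3_70B", "Q4_K_M"): 7.33,
    ("RTX_4000_Ada_20GB_x4", "Llama3_70B", "F16"): None,
}


def fastest_config(model, quant):
    """Return the configuration with the highest tokens/second, or None."""
    measured = {
        config: speed
        for (config, m, q), speed in BENCHMARK.items()
        if m == model and q == quant and speed is not None
    }
    if not measured:
        return None
    return max(measured, key=measured.get)


for model, quant in [("Llama3_8B", "Q4_K_M"), ("Llama3_70B", "Q4_K_M"), ("Llama3_70B", "F16")]:
    print(model, quant, "->", fastest_config(model, quant))
```

Token speed is the only criterion here. A real decision would also weigh accuracy requirements, cost, and power draw, none of which this benchmark measures.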

Conclusion: Selecting the Ideal LLM Hardware

Choosing the right hardware for local LLM development can seem daunting. However, understanding the strengths and weaknesses of different configurations, like the NVIDIA 4090_24GB and the NVIDIA RTX_4000_Ada_20GB_x4, will help you make informed decisions based on your specific needs.

The NVIDIA 4090_24GB stands out for its speed and efficiency with smaller models like Llama3_8B. For larger models such as Llama3_70B, the NVIDIA RTX_4000_Ada_20GB_x4 provides the memory capacity needed to run them. Quantization techniques offer further opportunities for optimization, allowing you to trade some accuracy for speed and a smaller memory footprint.

As LLM technology continues to evolve, hardware advancements will keep pace, offering even more powerful and efficient options for developers and researchers. By carefully considering your needs and understanding the capabilities of different configurations, you can choose the ideal hardware to power your AI journey.

FAQ: Addressing Common Questions

What is quantization?

Quantization is a technique used in machine learning to reduce the size of model weights, making them easier to store and faster to process. It's like compressing a video file by reducing the number of colors, leading to a smaller file size but potentially a loss of visual quality.

Why is token speed generation important?

Token speed generation determines how fast a device can process and generate text from an LLM. A higher token speed means faster responses and more efficient inference, crucial for real-time applications.
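As a rough illustration, token speed converts directly into how long a reply takes to generate. The sketch below divides a reply length by the measured tokens/second from the benchmark. The 500-token reply length is an arbitrary example, and the estimate ignores the time spent processing the prompt, which this benchmark does not report.

```python
# Estimated time to generate a reply of a given length.
# Ignores prompt processing; reply length is an arbitrary example value.

def generation_seconds(num_tokens, tokens_per_second):
    """Seconds needed to generate num_tokens at a steady token speed."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


REPLY_TOKENS = 500
MEASURED = {
    "4090_24GB, Llama3_8B Q4_K_M": 127.74,
    "RTX_4000_Ada_20GB_x4, Llama3_8B Q4_K_M": 56.14,
    "RTX_4000_Ada_20GB_x4, Llama3_70B Q4_K_M": 7.33,
}

for label, speed in MEASURED.items():
    seconds = generation_seconds(REPLY_TOKENS, speed)
    print(f"{label}: about {seconds:.1f} s for {REPLY_TOKENS} tokens")
```

At these speeds, a 500-token reply takes about 4 seconds at the fastest measured rate and over a minute at the slowest, which is the difference between an interactive tool and a batch job.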

What are LLM inference and processing?

LLM inference is the process of using a trained LLM to generate outputs, such as text predictions, translations, or summaries. LLM processing refers to the overall computation and memory operations involved in running the model.

Keywords

LLMs, Large Language Models, NVIDIA 4090_24GB, NVIDIA RTX_4000_Ada_20GB_x4, Token Speed Generation, Llama3_8B, Llama3_70B, Q4_K_M, F16, Quantization, GPU, VRAM, AI Development, Local LLM Models, Inference, Processing, Performance Benchmark, Hardware Comparison