Which is Better for AI Development: NVIDIA 4090 24GB or NVIDIA L40S 48GB? Local LLM Token Speed Generation Benchmark

Introduction

The world of AI development is buzzing with excitement, especially around Large Language Models (LLMs). These powerful AI systems, like the ever-popular ChatGPT, can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models on your own machine is a different story.

This article compares local LLM token generation speed on two high-end GPUs, the NVIDIA 4090_24GB and the NVIDIA L40S_48GB, which are often considered top contenders for AI development. We'll break down key performance metrics, explore their strengths and weaknesses, and provide practical recommendations for your AI development journey.

The Battle of the Titans: NVIDIA 4090_24GB vs. NVIDIA L40S_48GB

Both the NVIDIA 4090_24GB and NVIDIA L40S_48GB are heavy-duty graphics cards designed to tackle demanding tasks. However, they have different strengths and weaknesses.

Comparing the Giants: Understanding the Players

NVIDIA 4090_24GB: The Consumer Champion

- Built for Gaming and High-End Performance: This GPU is a beast for gaming and professional creative applications. Its 24GB of GDDR6X memory is a force to be reckoned with.

- Focus on Speed and Efficiency: The 4090_24GB is optimized for speed, aiming to deliver maximum performance in single-precision (FP32) calculations.

- Lower Memory Capacity: While it has a healthy 24GB of memory, this might not be enough for truly massive LLMs, especially when working with larger context windows.

NVIDIA L40S_48GB: The Server Powerhouse

- Designed for AI and Data Centers: This card is specifically engineered for AI workloads and data centers, offering exceptional performance on a massive scale.

- Massive Memory: The L40S_48GB carries 48GB of GDDR6 memory with ECC, double the capacity of the 4090_24GB, which makes it a strong choice for large models and datasets.

- Mixed Precision Power: The L40S_48GB is known for its prowess in mixed precision (FP16 and INT8) calculations, which can significantly boost performance while maintaining accuracy.

Token Speed Generation: The Heart of the Matter

Let's get down to brass tacks: how fast can these GPUs generate tokens for popular LLMs? We'll focus on two sizes of Llama 3, a powerful open-weight model family:

- Llama 3 8B: A compact yet capable model for general-purpose tasks.

- Llama 3 70B: A more substantial model offering increased capabilities for complex tasks.

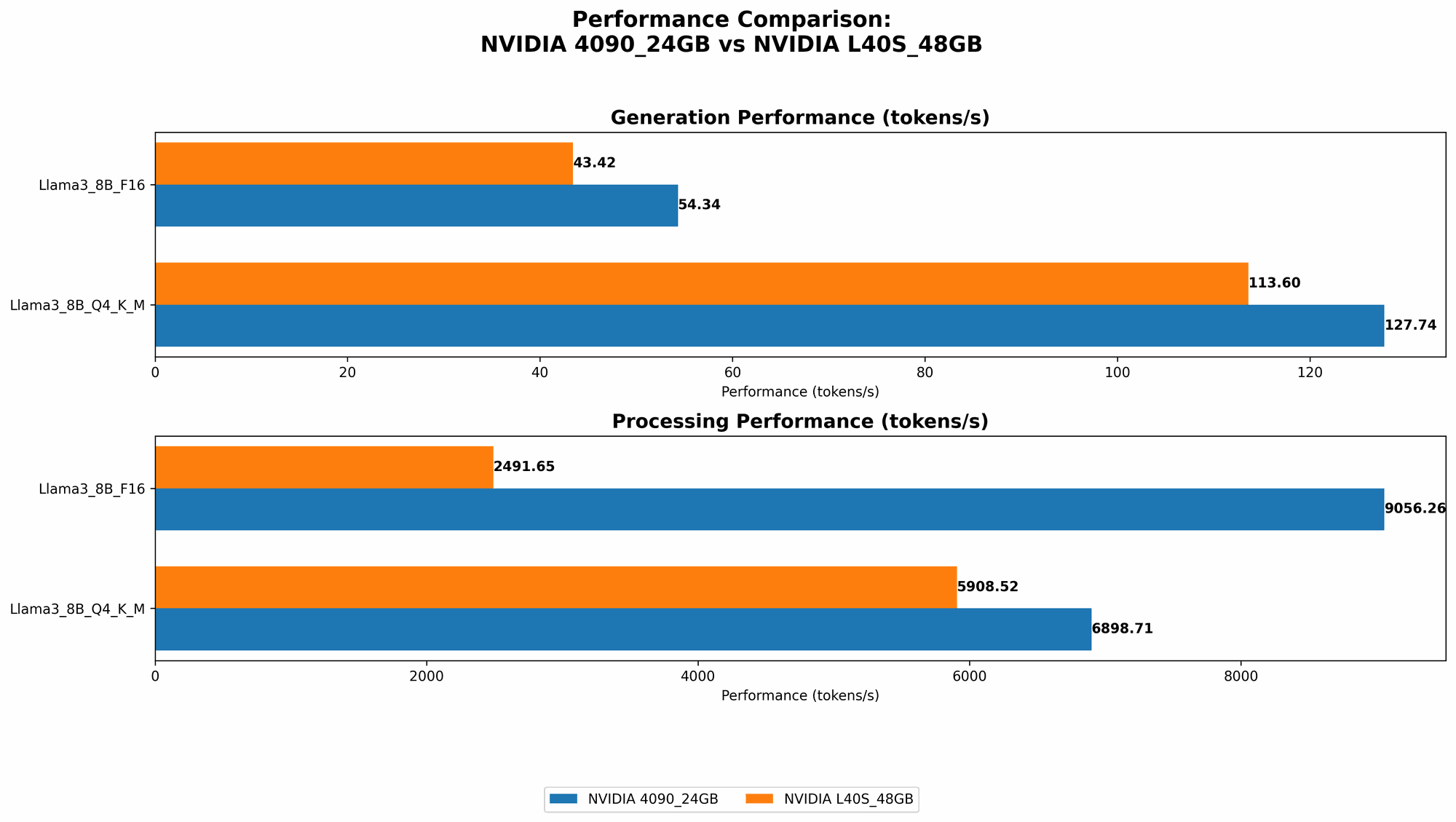

Llama 3 Speed Showdown: Data Tells the Story

The table below presents our benchmark results, showcasing the number of tokens generated per second:

| Device | Model | Quantization | Tokens/Second |
|---|---|---|---|
| NVIDIA 4090_24GB | Llama 3 8B | Q4_K_M | 127.74 |
| NVIDIA 4090_24GB | Llama 3 8B | F16 | 54.34 |
| NVIDIA L40S_48GB | Llama 3 8B | Q4_K_M | 113.6 |
| NVIDIA L40S_48GB | Llama 3 8B | F16 | 43.42 |
| NVIDIA L40S_48GB | Llama 3 70B | Q4_K_M | 15.31 |

Important: No results are available for Llama 3 70B on the NVIDIA 4090_24GB (in either Q4_K_M or F16), or for Llama 3 70B in F16 on the NVIDIA L40S_48GB, most likely because those configurations do not fit in the card's memory.
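To make the table easier to compare, the short sketch below computes relative speedups directly from the published figures. The dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Tokens/second figures copied from the benchmark table.
RESULTS = {
    ("4090_24GB", "Llama 3 8B", "Q4_K_M"): 127.74,
    ("4090_24GB", "Llama 3 8B", "F16"): 54.34,
    ("L40S_48GB", "Llama 3 8B", "Q4_K_M"): 113.6,
    ("L40S_48GB", "Llama 3 8B", "F16"): 43.42,
    ("L40S_48GB", "Llama 3 70B", "Q4_K_M"): 15.31,
}


def speedup(fast_key, slow_key, results=RESULTS):
    """Return how many times faster `fast_key` is than `slow_key`."""
    return results[fast_key] / results[slow_key]


# Device vs. device on the same model and quantization.
for quant in ("Q4_K_M", "F16"):
    ratio = speedup(("4090_24GB", "Llama 3 8B", quant),
                    ("L40S_48GB", "Llama 3 8B", quant))
    print(f"Llama 3 8B {quant}: 4090 is {ratio:.2f}x the L40S")

# Quantized vs. F16 on the same device.
for device in ("4090_24GB", "L40S_48GB"):
    ratio = speedup((device, "Llama 3 8B", "Q4_K_M"),
                    (device, "Llama 3 8B", "F16"))
    print(f"{device}: Q4_K_M is {ratio:.2f}x F16")
```

Ratios like these are only meaningful between rows that share the same model, since the 70B result has no 4090 counterpart.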

Token Speed Analysis: Deciphering the Results

Llama 3 8B: A Battle for the Top Spot

- NVIDIA 4090_24GB Takes the Lead: For the smaller Llama 3 8B model, the NVIDIA 4090_24GB is the speed champion, generating tokens about 12% faster than the L40S_48GB with Q4_K_M (127.74 vs. 113.6 tokens/second) and about 25% faster with F16 (54.34 vs. 43.42).

- Quantization Matters: Q4_K_M quantization (a technique that reduces the size of the model and its memory footprint) delivers roughly 2.4x the F16 generation speed on the 4090_24GB and 2.6x on the L40S_48GB.

- L40S_48GB: A Close Second: The L40S_48GB trails the 4090_24GB in every 8B test. A likely reason is memory bandwidth rather than compute: token generation mostly streams model weights from VRAM, and the 4090's GDDR6X (about 1,008 GB/s) outpaces the L40S's GDDR6 (about 864 GB/s).
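One way to sanity-check these 8B numbers is a memory-bandwidth ceiling: during single-stream generation, each new token requires reading roughly all model weights once, so tokens/second cannot exceed bandwidth divided by model size. The sketch below is a rule-of-thumb estimate, not a measurement. It assumes NVIDIA's published peak bandwidths (about 1,008 GB/s for the RTX 4090 and 864 GB/s for the L40S) and about 8.03 billion parameters for Llama 3 8B; neither figure comes from the benchmark itself.

```python
# Assumed peak memory bandwidth in GB/s (vendor spec-sheet values, not measured here).
PEAK_BANDWIDTH_GBPS = {"4090_24GB": 1008.0, "L40S_48GB": 864.0}

# Measured F16 generation speeds from the benchmark table (tokens/second).
MEASURED_F16 = {"4090_24GB": 54.34, "L40S_48GB": 43.42}

LLAMA3_8B_PARAMS = 8.03e9   # assumed approximate parameter count
BYTES_PER_PARAM_F16 = 2     # 16 bits per weight


def bandwidth_ceiling(bandwidth_gbps, model_bytes):
    """Upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gbps * 1e9 / model_bytes


model_bytes = LLAMA3_8B_PARAMS * BYTES_PER_PARAM_F16
for device, bandwidth in PEAK_BANDWIDTH_GBPS.items():
    ceiling = bandwidth_ceiling(bandwidth, model_bytes)
    measured = MEASURED_F16[device]
    print(f"{device}: ceiling {ceiling:.1f} tok/s, "
          f"measured {measured} tok/s ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions both cards land within the same rough fraction of their ceilings, which is consistent with bandwidth, not raw compute, explaining most of the gap.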

Llama 3 70B: The Power of Larger Memory

- L40S_48GB Takes the Stage: The L40S_48GB is the only card in this comparison with a Llama 3 70B result, generating 15.31 tokens/second with Q4_K_M.

- The Memory Advantage: This result reflects the value of the L40S_48GB's 48GB of GDDR6 memory. It can hold the entire Q4_K_M model in VRAM, whereas the same model exceeds the 24GB available on the 4090_24GB.
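The memory argument can be checked with back-of-the-envelope arithmetic: weight size is roughly parameter count times bits per weight. The sketch below is an estimate under stated assumptions: approximate parameter counts of 8.03B and 70.6B, about 4.85 bits per weight for Q4_K_M, and a hypothetical 2 GB allowance for KV cache and runtime overhead. Real usage depends on context length and the inference runtime.

```python
# All constants below are assumptions for illustration, not benchmark data.
LLAMA3_PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}   # Q4_K_M value is approximate
VRAM_GB = {"4090_24GB": 24.0, "L40S_48GB": 48.0}


def weight_size_gb(params, bits_per_weight):
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def fits_in_vram(model, quant, device, overhead_gb=2.0):
    """Return True if weights plus a rough overhead allowance fit on the device."""
    needed = weight_size_gb(LLAMA3_PARAMS[model], BITS_PER_WEIGHT[quant]) + overhead_gb
    return needed <= VRAM_GB[device]


for model in LLAMA3_PARAMS:
    for quant in BITS_PER_WEIGHT:
        size = weight_size_gb(LLAMA3_PARAMS[model], BITS_PER_WEIGHT[quant])
        verdicts = ", ".join(
            f"{device}: {'fits' if fits_in_vram(model, quant, device) else 'too large'}"
            for device in VRAM_GB
        )
        print(f"{model} {quant}: ~{size:.1f} GB ({verdicts})")
```

With these numbers, the only 70B configuration that fits on either card is Q4_K_M on the L40S_48GB, which matches the single 70B row in the results table.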

Performance Comparisons: Delving Deeper

Speed and Efficiency: A Deeper Dive

- Generation Speed: In these benchmarks, the NVIDIA 4090_24GB is the faster card in every configuration that both GPUs ran. It's ideal for applications where speed is paramount, especially for smaller LLMs that fit within its memory capacity.

- Mixed Precision Power: The L40S_48GB excels in mixed precision computations (FP16 and INT8), balancing speed with reduced memory consumption. This makes it suitable for applications where you need the power to handle larger models without sacrificing too much speed.

Memory and Capacity: The Battle for Space

- 4090_24GB: A Strong Starting Point: The NVIDIA 4090_24GB is a solid choice for developers working with smaller LLMs; its 24GB held Llama 3 8B in both Q4_K_M and F16 in our tests.

- L40S_48GB: Room to Grow: The L40S_48GB has twice the memory capacity. Its 48GB of GDDR6 memory lets you work with much larger models and datasets, such as Llama 3 70B in Q4_K_M, opening up new possibilities for research and development.

Processing Speed: Taking the Next Step

- 4090_24GB: Fast and Furious: The NVIDIA 4090_24GB excels in prompt processing, reaching speeds of up to 9056 tokens/second for Llama 3 8B in F16. This showcases its computational prowess.

- L40S_48GB: A Balanced Player: The L40S_48GB balances speed and memory capacity. Its processing speed is impressive for a model of this size, reaching 649 tokens/second for Llama 3 70B with Q4_K_M quantization.
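Processing and generation speeds combine into end-to-end response time. As a rough sketch, assuming the processing figures describe prompt evaluation and pairing them with the generation speeds from the results table, latency is approximately prompt tokens divided by processing speed plus output tokens divided by generation speed. The function name and the 1024/256 token workload are hypothetical, and the model ignores load time and batching.

```python
def estimate_latency_seconds(prompt_tokens, output_tokens,
                             processing_tps, generation_tps):
    """Rough response time: prompt evaluation time plus token generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing tokens/s, generation tokens/s) taken from the article's figures.
CONFIGS = {
    "4090_24GB, Llama 3 8B F16": (9056.0, 54.34),
    "L40S_48GB, Llama 3 70B Q4_K_M": (649.0, 15.31),
}

# Hypothetical workload: a 1024-token prompt and a 256-token answer.
for name, (processing_tps, generation_tps) in CONFIGS.items():
    seconds = estimate_latency_seconds(1024, 256, processing_tps, generation_tps)
    print(f"{name}: ~{seconds:.1f} s")
```

In both cases generation dominates the total, so for chat-style workloads the tokens/second figures in the results table matter more than the headline processing numbers.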

Practical Recommendations: Finding Your Perfect Match

NVIDIA 4090_24GB: When Speed Is Your Top Priority

- Ideal for Smaller LLMs: If you primarily work with smaller LLM models, like the Llama 3 8B, the 4090_24GB is an excellent choice. Its speed and performance make it a top pick for developing applications that require fast token generation.

- Cost-Effective Solution: For those on a budget, the 4090_24GB offers a compelling balance of price and performance.

NVIDIA L40S_48GB: When Size and Scale Are Your Goals

- Embrace the Giants: If you delve into larger LLMs like the Llama 3 70B or plan to explore even larger models in the future, the L40S_48GB is the way to go. Its 48GB of memory lets you load models that do not fit on a 24GB card and leaves headroom for longer context windows.

- Unleash Your AI Research: Perfect for researchers and developers who need the power to handle complex AI tasks and push the boundaries of LLM capabilities.

FAQ: Your AI Development Questions Answered

Q: What is quantization?

A: Quantization is a technique that reduces the precision of numerical values used in a neural network. Think of it like shrinking a photo: you lose some detail, but the overall image remains recognizable. Quantization helps make LLMs more efficient by reducing their size and memory footprint, allowing them to run on less powerful hardware or with faster processing speeds.
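To make the idea concrete, here is a minimal sketch of symmetric 4-bit quantization applied to one small block of weights. It is a deliberately simplified illustration, not the actual Q4_K_M scheme, and the function names are made up for this example.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


weights = [0.7, -0.3, 0.12, 0.0]
quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)

for original, q, approx in zip(weights, quantized, restored):
    print(f"{original:+.3f} -> {q:+d} -> {approx:+.3f}")
```

Each weight now needs only 4 bits plus a share of the per-block scale, and the rounding error per weight is bounded by half the scale. That is the "lost detail" in the photo analogy.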

Q: What is the difference between F16 and Q4_K_M?

A: F16 (half-precision floating point) stores each weight in 16 bits; it preserves accuracy well but needs about 2 bytes per parameter. Q4_K_M is one of llama.cpp's 4-bit k-quant formats (the M denotes the medium variant). It cuts memory requirements to roughly a third of F16, potentially sacrificing some accuracy.

Q: What is a good practice for testing LLM models on different devices?

A: Start with a smaller model and a fixed, repeatable workload, for example prompts drawn from a public dataset on the Hugging Face website. Keep the prompt, output length, and quantization identical across devices so the results are comparable, and run each test several times. This will give you an idea of the performance you can expect from different devices and model configurations. Remember to always check the documentation of the specific LLM for its recommended settings.
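A timing harness for this kind of test can be very small. The sketch below assumes a hypothetical `generate(prompt, max_new_tokens)` callable that returns the list of generated tokens; swap in whatever your inference library provides. It reports the median of several runs to reduce noise.

```python
import statistics
import time


def measure_tokens_per_second(generate, prompt, max_new_tokens,
                              runs=3, clock=time.perf_counter):
    """Time `generate` over several runs and return the median tokens/second.

    `generate` is a hypothetical callable: generate(prompt, max_new_tokens)
    must return a sequence containing the generated tokens.
    """
    rates = []
    for _ in range(runs):
        start = clock()
        tokens = generate(prompt, max_new_tokens)
        elapsed = clock() - start
        if elapsed <= 0:
            raise ValueError("elapsed time must be positive")
        rates.append(len(tokens) / elapsed)
    return statistics.median(rates)


def fake_generate(prompt, max_new_tokens):
    """Stand-in for a real model: sleeps briefly, then returns dummy tokens."""
    time.sleep(0.01)
    return ["tok"] * max_new_tokens


if __name__ == "__main__":
    rate = measure_tokens_per_second(fake_generate, "Hello", 32)
    print(f"median speed: {rate:.0f} tokens/second (stub model)")
```

The `clock` parameter exists so the harness itself can be tested with a deterministic clock; in normal use the default `time.perf_counter` is fine.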

Q: Is a GPU truly necessary for running LLMs?

A: While you can run basic LLMs on a CPU, GPUs offer significantly faster processing speeds, especially as model sizes grow larger. GPUs are also excellent for training LLMs, where parallel processing is key.

Q: What are the latest advancements in LLM hardware?

A: The field of AI hardware is continually evolving. New chip architectures are being developed, and companies like NVIDIA are pushing the boundaries of performance and efficiency. Keep an eye out for advancements in specialized AI accelerators, which promise to further enhance the capabilities of LLMs.

Keywords

NVIDIA 4090_24GB, NVIDIA L40S_48GB, LLM, Llama 3, GPU, token speed, generation, processing, quantization, AI development, performance benchmark, benchmark results, AI hardware, mixed precision, FP16, INT8, Q4_K_M, memory capacity, cost-effective, AI research, Hugging Face, developer, advanced hardware