Which is Better for AI Development: NVIDIA 4090 24GB or NVIDIA A40 48GB? Local LLM Token Speed Generation Benchmark

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models and applications emerging every day. LLMs are complex and computationally demanding, requiring powerful hardware for training and inference. If you're a developer working with LLMs, choosing the right hardware can be a game-changer.

In this article, we compare two popular GPUs, the NVIDIA GeForce RTX 4090_24GB and the NVIDIA A40_48GB, to see how they handle the demanding task of running LLMs locally. We focus on token generation speed, a crucial factor for real-time applications like chatbots and text generation.

We'll use data from a benchmark conducted by ggerganov and XiongjieDai to provide a comprehensive analysis of how these GPUs perform with various LLM models and configurations. We'll also discuss the trade-offs associated with these powerful GPUs and help you determine which one is ideal for your AI development needs.

Performance Comparison of NVIDIA 4090_24GB and NVIDIA A40_48GB for LLM Token Speed Generation

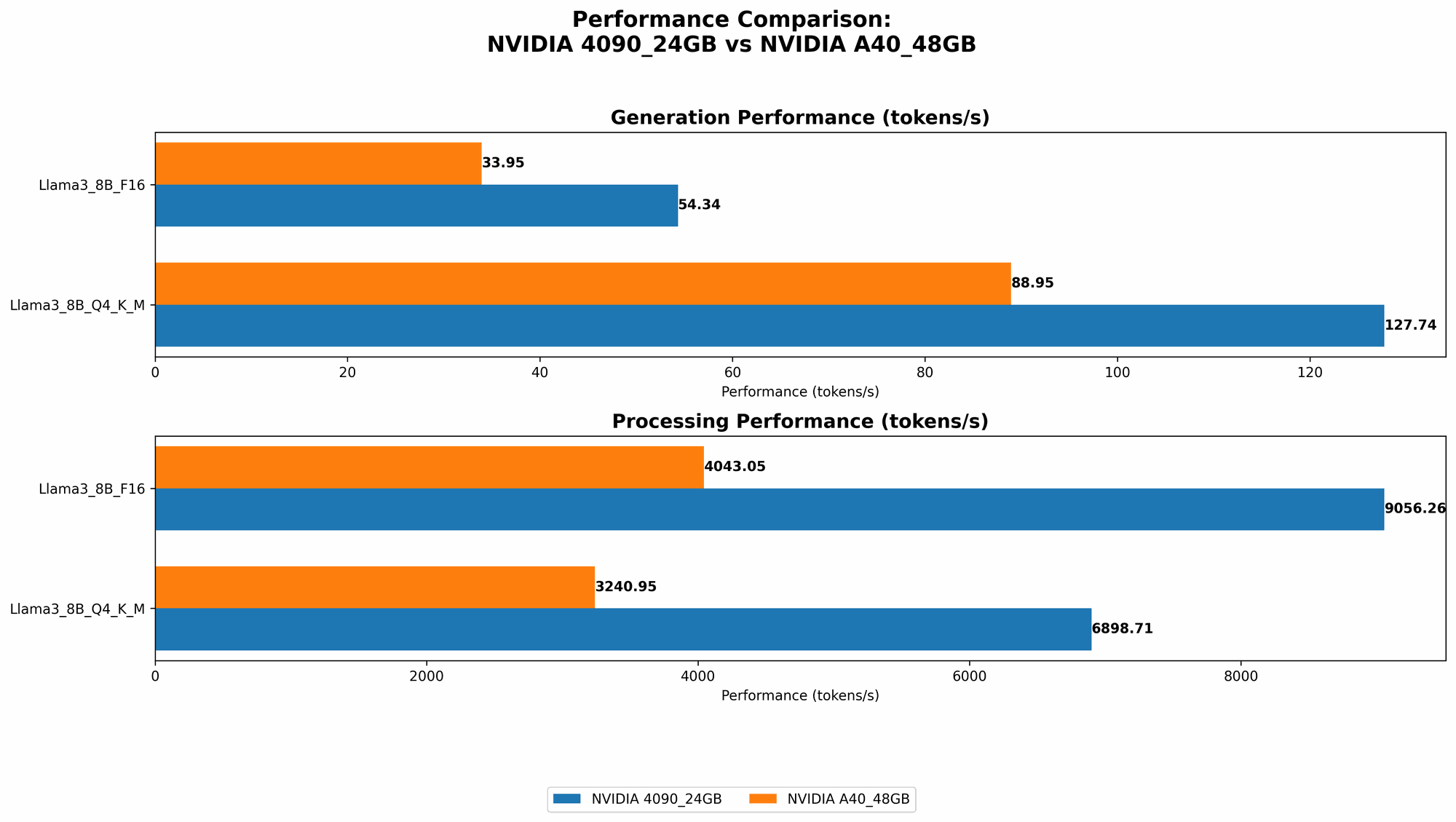

To get a clear picture of the performance differences between these GPUs, let's analyze their token generation rates across several model sizes and precisions. We'll focus on two Llama 3 models, 8B and 70B, which are popular choices for local development and experimentation.

Here's a breakdown of the performance metrics:

- Q4_K_M: This refers to the quantization scheme applied to the model weights. Q4_K_M is a 4-bit "k-quant" format from llama.cpp; the "K" denotes the k-quant family and the "M" the medium variant (as opposed to small, "S"). Quantization compresses the model, which reduces memory use and usually speeds up generation, at some cost in accuracy.

- F16: This is the standard 16-bit floating-point format used in many machine learning tasks. Here it means the weights are stored unquantized at 16 bits each, which preserves accuracy but takes more than three times the memory of Q4_K_M.

- Generation: This metric measures the speed of generating new tokens, which is crucial for interactive applications like chatbots.

- Processing: This metric measures the speed of processing the tokens of the input prompt before generation starts, which is important for tasks like reading and summarizing large volumes of text.

Token Speed Generation for Llama 3 8B Models

| GPU | Model | Quantization | Generation (Tokens/Second) | Processing (Tokens/Second) |
|---|---|---|---|---|
| NVIDIA 4090_24GB | Llama3_8B | Q4_K_M | 127.74 | 6898.71 |
| NVIDIA 4090_24GB | Llama3_8B | F16 | 54.34 | 9056.26 |
| NVIDIA A40_48GB | Llama3_8B | Q4_K_M | 88.95 | 3240.95 |
| NVIDIA A40_48GB | Llama3_8B | F16 | 33.95 | 4043.05 |

Analysis:

The NVIDIA 4090_24GB consistently outperforms the NVIDIA A40_48GB in both generation and processing speeds for the Llama 3 8B model, at both Q4_K_M and F16. With Q4_K_M, the 4090_24GB achieves 44% higher generation speed and 113% higher processing speed than the A40_48GB. With F16 the gap is wider still: about 60% higher generation speed and 124% higher processing speed.

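The 4090_24GB's advantage is more pronounced in processing than in generation, indicating that it's better suited for tasks involving large amounts of input text. Prompt processing is largely compute-bound, so this gap likely reflects the 4090_24GB's newer architecture and higher compute throughput. Generation is typically limited by how quickly weights can be read from memory, so the smaller generation gap is more plausibly explained by the 4090_24GB's higher memory bandwidth.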
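The percentages in this analysis can be reproduced directly from the table. The short Python sketch below does that arithmetic; the dictionary layout and the helper names `percent_faster` and `advantage_4090` are illustrative choices made here, not part of the benchmark tooling.

```python
# Tokens/second figures copied from the Llama 3 8B table above.
RESULTS = {
    ("4090_24GB", "Q4_K_M"): {"generation": 127.74, "processing": 6898.71},
    ("4090_24GB", "F16"): {"generation": 54.34, "processing": 9056.26},
    ("A40_48GB", "Q4_K_M"): {"generation": 88.95, "processing": 3240.95},
    ("A40_48GB", "F16"): {"generation": 33.95, "processing": 4043.05},
}


def percent_faster(fast: float, slow: float) -> float:
    """Return how much faster `fast` is than `slow`, in percent."""
    return (fast / slow - 1.0) * 100.0


def advantage_4090(quant: str, metric: str) -> float:
    """Percent advantage of the 4090_24GB over the A40_48GB (hypothetical helper)."""
    return percent_faster(
        RESULTS[("4090_24GB", quant)][metric],
        RESULTS[("A40_48GB", quant)][metric],
    )


if __name__ == "__main__":
    for quant in ("Q4_K_M", "F16"):
        for metric in ("generation", "processing"):
            print(f"{quant:7s} {metric:10s} +{advantage_4090(quant, metric):.0f}%")
```

Running the same calculation on the F16 rows shows that the relative gap is not limited to Q4_K_M; it is larger at F16 for both metrics.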

Token Speed Generation for Llama 3 70B Models

| GPU | Model | Quantization | Generation (Tokens/Second) | Processing (Tokens/Second) |
|---|---|---|---|---|
| NVIDIA A40_48GB | Llama3_70B | Q4_K_M | 12.08 | 239.92 |

Analysis:

The A40_48GB is the only GPU tested with the Llama 3 70B model because the 4090_24GB's 24 GB of memory cannot hold the model, even at Q4_K_M. This demonstrates the significance of memory capacity for large LLMs.

Although the A40_48GB can hold the 70B model, its generation speed drops from 88.95 tokens/second on the 8B model to 12.08 tokens/second at the same quantization, roughly a sevenfold slowdown. This highlights the trade-off of running larger models: they fit, but every generated token requires reading and computing over far more weights.
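The memory argument can be checked with back-of-the-envelope arithmetic: weight memory is roughly the parameter count times the bits per weight, divided by eight. The sketch below is only an estimate under stated assumptions. The parameter counts (about 8.03B and 70.6B) and the average of about 4.8 bits per weight for Q4_K_M are approximations introduced here, not values from the benchmark, and real usage is higher once the KV cache and runtime overhead are included.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 = bytes.
# Assumptions (not from the benchmark): approximate parameter counts and an
# average of ~4.8 bits per weight for Q4_K_M. KV cache, activations, and
# runtime overhead are ignored, so real memory use will be higher.
PARAMS_BILLION = {"Llama3_8B": 8.03, "Llama3_70B": 70.6}
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "F16": 16.0}
VRAM_GB = {"4090_24GB": 24.0, "A40_48GB": 48.0}


def weight_memory_gb(model: str, quant: str) -> float:
    """Approximate size of the model weights in GB."""
    return PARAMS_BILLION[model] * BITS_PER_WEIGHT[quant] / 8.0


def weights_fit(model: str, quant: str, gpu: str) -> bool:
    """True if the weights alone are smaller than the GPU's memory."""
    return weight_memory_gb(model, quant) < VRAM_GB[gpu]


if __name__ == "__main__":
    for model in PARAMS_BILLION:
        for quant in BITS_PER_WEIGHT:
            size = weight_memory_gb(model, quant)
            fits = [gpu for gpu in VRAM_GB if weights_fit(model, quant, gpu)]
            print(f"{model:11s} {quant:7s} ~{size:6.1f} GB  fits on: {fits or 'neither'}")
```

Under these assumptions, the 70B model at Q4_K_M needs a little over 40 GB for weights alone, which is consistent with it running on the A40_48GB but not on the 4090_24GB.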

For non-technical readers: Think of it like driving a car. You can squeeze a ton of luggage into a minivan, but you'll be slower and less fuel-efficient. The 4090_24GB might be like a sporty car, great for speed and agility with smaller models, while the A40_48GB is like a minivan, capable of carrying more but sacrificing speed.

Performance Analysis: NVIDIA 4090_24GB vs. NVIDIA A40_48GB

NVIDIA 4090_24GB: The Speed Demon

The 4090_24GB is the clear winner on token generation speed for models that fit in its memory, such as Llama 3 8B. This makes it ideal for applications where real-time responsiveness is crucial, like chatbots, text generation, and interactive AI experiences.

Strengths:

- High memory bandwidth: The 4090_24GB has significantly higher memory bandwidth than the A40_48GB (roughly 1,008 GB/s versus 696 GB/s on the published spec sheets), enabling faster access to model weights during generation.

- Faster processing speed: In this benchmark the 4090_24GB processes prompts more than twice as fast as the A40_48GB, making it well-suited for tasks requiring quick processing of large volumes of text.

- Ideal for smaller models: Its memory capacity is sufficient for smaller LLMs, allowing for faster inference and better performance.
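The link between memory bandwidth and generation speed can be made concrete with a simple ceiling estimate: if every generated token requires reading all of the weights once, tokens/second cannot exceed bandwidth divided by weight size. The sketch below applies that to the F16 results. The bandwidth figures are published spec-sheet values and the 8.03B parameter count is an approximation; both are assumptions brought in here rather than benchmark data, and the model ignores caches, the KV cache, and compute time.

```python
# Published peak memory bandwidth in GB/s (spec-sheet values, assumed here;
# they are not part of the benchmark data).
PEAK_BANDWIDTH_GBPS = {"4090_24GB": 1008.0, "A40_48GB": 696.0}

# Measured Llama 3 8B F16 generation speeds from the table (tokens/second).
MEASURED_F16_GENERATION = {"4090_24GB": 54.34, "A40_48GB": 33.95}

# Assumption: ~8.03 billion parameters at 2 bytes each for F16 weights.
F16_WEIGHT_GB = 8.03 * 2.0


def generation_ceiling(gpu: str, weight_gb: float = F16_WEIGHT_GB) -> float:
    """Upper bound on tokens/second if each token reads all weights once."""
    return PEAK_BANDWIDTH_GBPS[gpu] / weight_gb


if __name__ == "__main__":
    for gpu, measured in MEASURED_F16_GENERATION.items():
        ceiling = generation_ceiling(gpu)
        print(f"{gpu}: measured {measured:.2f} tok/s, "
              f"bandwidth ceiling ~{ceiling:.1f} tok/s")
    ratio = PEAK_BANDWIDTH_GBPS["4090_24GB"] / PEAK_BANDWIDTH_GBPS["A40_48GB"]
    print(f"bandwidth ratio 4090/A40: {ratio:.2f}x")
```

Both measured F16 generation speeds sit below their estimated ceilings, and the bandwidth ratio between the two cards is close to the generation-speed ratio measured at Q4_K_M, which is what a bandwidth-limited workload would look like.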

Weaknesses:

- Limited memory: The 4090_24GB's memory capacity is half that of the A40_48GB, limiting its ability to run larger LLMs, such as Llama 3 70B.

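- Price: Both GPUs are expensive. The 4090_24GB launched at an MSRP of $1,599, which is generally well below what the data-center-class A40_48GB sells for, but it remains a significant outlay for an individual developer.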

NVIDIA A40_48GB: The Workhorse

The A40_48GB shines when handling larger LLMs, thanks to its ample memory capacity. While it may fall behind in token speed generation for smaller models, it's a reliable choice for tackling complex tasks involving larger models and more demanding workloads.

Strengths:

- Large memory capacity: The A40_48GB's 48GB of GDDR6 memory allows it to handle large LLMs that would overwhelm the 4090_24GB.

- Stable performance: Because larger models fit entirely in GPU memory, the A40_48GB avoids the out-of-memory errors that such models would trigger on a 24 GB card.

Weaknesses:

- Slower token speed: The A40_48GB lags behind the 4090_24GB in token generation speed for smaller models, at both Q4_K_M and F16.

- Power consumption: A rated board power of 300 W still means meaningful operating costs under sustained load, although this is lower than the 4090_24GB's rated 450 W.

Practical Recommendations: Which GPU Should You Choose?

Here's a breakdown to help you decide which GPU is right for your AI development needs:

Choose the NVIDIA 4090_24GB if:

- You prioritize speed and efficiency for smaller LLMs.

- You're working on interactive applications like chatbots, text generation, or real-time AI experiences.

- You want the less expensive of the two cards; the 4090_24GB is typically priced well below the A40_48GB.

Choose the NVIDIA A40_48GB if:

- You need to run large LLMs, such as Llama 3 70B or models beyond that.

- You're dealing with complex workloads that require stable performance and ample memory.

- The higher purchase price of a data-center card is less of a concern.

Choosing the Right LLM Model and Configuration

Remember, the performance of an LLM is not solely determined by the GPU. The chosen model and its configuration also play a crucial role.

Here are some factors to consider:

- Model size: Larger models require more memory and processing power, potentially affecting performance.

- Quantization: Techniques like Q4_K_M can significantly reduce memory requirements and improve inference speeds, but they might sacrifice some accuracy.

- Fine-tuning: Fine-tuning your LLM to your specific task can lead to better results, but it requires additional training and resources.

Conclusion: Choosing the Best Tool for the Job

The choice between the NVIDIA 4090_24GB and NVIDIA A40_48GB ultimately boils down to your specific needs and priorities. If you're focused on speed and efficiency for smaller LLMs, the 4090_24GB is a powerful choice. If you need to run larger LLMs or handle complex workloads, the A40_48GB offers the necessary memory capacity and stability.

Remember that these GPUs are just one component of your local AI development setup. Choosing the right LLM model and configuration, as well as optimizing your workflow and code, are equally crucial for achieving optimal performance and results.

FAQs

What are LLMs?

LLMs, or Large Language Models, are advanced AI models trained on massive datasets of text. These models can understand and generate human-quality text, perform various language-related tasks, and even translate languages.

How do GPUs affect LLM performance?

GPUs provide the raw computing power needed for LLMs to process vast amounts of data and generate complex outputs. They handle matrix multiplication, which is crucial for training and inference. Higher memory bandwidth and large memory capacity improve performance.

Are there any other GPUs suitable for running LLMs?

Yes, there are other GPUs from NVIDIA and AMD suitable for running LLMs. The 4090_24GB and A40_48GB are strong options in the consumer and data-center segments respectively, though higher-end data-center GPUs such as the NVIDIA A100 and H100 offer more memory and bandwidth.

How can I know which GPU is better for my specific LLM project?

Experimentation is key! Try running your LLM on different hardware configurations to assess performance and see which one best meets your needs.
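One way to run such an experiment is to time your own generation loop and report tokens per second. The sketch below is a minimal, framework-agnostic harness: `measure_tokens_per_second` and `fake_generate` are hypothetical names introduced here, and `fake_generate` is only a stand-in that you would replace with a call into whatever runtime streams tokens from your model.

```python
import time
from typing import Callable, Iterable, Iterator, Tuple


def measure_tokens_per_second(
    generate: Callable[[], Iterable[str]],
) -> Tuple[int, float]:
    """Consume a token stream and return (token count, tokens per second)."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # pull every token from the stream
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001) -> Iterator[str]:
    """Stand-in for a real model call; replace with your own token stream."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # simulate per-token latency
        yield f"tok{i}"


if __name__ == "__main__":
    tokens, tps = measure_tokens_per_second(fake_generate)
    print(f"{tokens} tokens at {tps:.1f} tokens/second")
```

For a fair comparison, use the same prompt, model file, and generation length on each GPU, and average over several runs.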

Keywords

NVIDIA 4090_24GB, NVIDIA A40_48GB, LLM, Large Language Model, AI Development, Token Speed Generation, Llama 3, Quantization, Q4_K_M, F16, GPU, Memory Bandwidth, Memory Capacity, Inference Speed, Model Size, Local Development, Performance Comparison, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP, Chatbots, Text Generation, Real-time AI, GPU Benchmark, GPU Speed, GPU Memory