Which is Better for AI Development: NVIDIA 4070 Ti 12GB or NVIDIA RTX 6000 Ada 48GB? Local LLM Token Generation Speed Benchmark

Introduction: The Quest for Local LLM Power

The world of large language models (LLMs) is buzzing with excitement. LLMs are transforming how we interact with computers, offering capabilities like generating text, translating languages, and even writing code. While cloud-based LLMs are widely accessible, the allure of running LLMs locally is growing. Running locally avoids network round trips, gives you greater control over data privacy, and makes it possible to build custom AI applications. But choosing the right hardware for local LLM development can be a daunting task.

This article compares two popular GPUs, the NVIDIA GeForce RTX 4070 Ti 12GB and the NVIDIA RTX 6000 Ada 48GB, to help you determine which is best suited for your LLM adventures. We’ll delve into how fast each one generates tokens (the building blocks of text) for popular LLMs. Buckle up, it's going to be a thrilling ride through the world of local LLM development!

Comparison of NVIDIA 4070 Ti 12GB and NVIDIA RTX 6000 Ada 48GB

To get started, let's understand what we are dealing with:

- NVIDIA GeForce RTX 4070 Ti 12GB: This is a powerful gaming GPU known for its high-performance capabilities. It's a popular choice for gamers and content creators, but it's also increasingly being used for AI development. Think of it as the high-end gaming PC that can also handle some hardcore AI tasks.

- NVIDIA RTX 6000 Ada 48GB: This is a professional-grade GPU designed for demanding workloads like AI, machine learning, and scientific computing. It boasts a massive 48GB of memory, four times what the RTX 4070 Ti offers, which is ideal for handling large models. Think of it as the beast of a GPU designed to crush even the most demanding AI projects.

The Token Generation Showdown: Llama 3 Models

Now, let's dive into the heart of the matter: how do these GPUs perform with popular LLMs? We'll focus on the Llama 3 family of models, known for their impressive capabilities.

For this comparison, we'll examine the token generation speed across two key variables:

- Model Size: We'll look at both Llama 3 8B (smaller model) and Llama 3 70B (a heavyweight contender).

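- Quantization: We'll consider both Q4_K_M (a 4-bit quantization format used by llama.cpp that shrinks the weights to less than a third of their 16-bit size) and F16 (16-bit half precision with no quantization applied, which needs far more memory).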

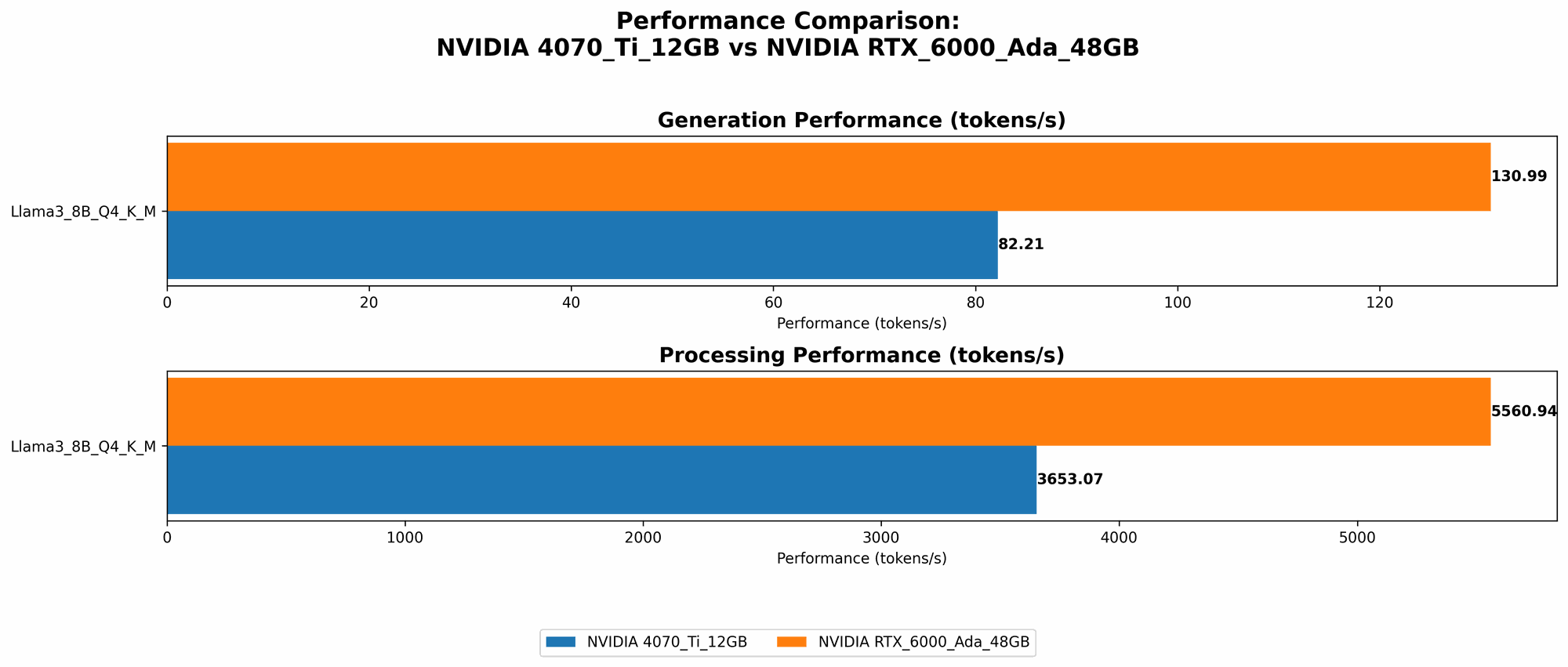

Here’s what we found:

| GPU | Model | Quantization | Tokens/second (Generation) |
|---|---|---|---|
| NVIDIA GeForce RTX 4070 Ti 12GB | Llama 3 8B | Q4_K_M | 82.21 |
| NVIDIA RTX 6000 Ada 48GB | Llama 3 8B | Q4_K_M | 130.99 |
| NVIDIA RTX 6000 Ada 48GB | Llama 3 8B | F16 | 51.97 |
| NVIDIA RTX 6000 Ada 48GB | Llama 3 70B | Q4_K_M | 18.36 |

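- Note: The RTX 4070 Ti 12GB has no results for Llama 3 8B at F16 or for Llama 3 70B because those models do not fit in its 12GB of memory. Llama 3 70B at F16 is missing for the RTX 6000 Ada 48GB for the same reason: it does not fit in 48GB.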
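To put the table in perspective, here is a minimal sketch that turns the measured speeds into a speedup ratio and a rough time-to-generate figure. The numbers are copied from the table; the helper names `speedup` and `seconds_for` are our own, chosen only for illustration.

```python
# Generation speeds in tokens/second, copied from the benchmark table.
RESULTS = {
    ("RTX 4070 Ti 12GB", "Llama 3 8B", "Q4_K_M"): 82.21,
    ("RTX 6000 Ada 48GB", "Llama 3 8B", "Q4_K_M"): 130.99,
    ("RTX 6000 Ada 48GB", "Llama 3 8B", "F16"): 51.97,
    ("RTX 6000 Ada 48GB", "Llama 3 70B", "Q4_K_M"): 18.36,
}


def speedup(fast, slow):
    """How many times faster configuration `fast` is than `slow`."""
    return RESULTS[fast] / RESULTS[slow]


def seconds_for(config, n_tokens):
    """Approximate seconds needed to generate `n_tokens` at the measured speed."""
    return n_tokens / RESULTS[config]


ada_8b = ("RTX 6000 Ada 48GB", "Llama 3 8B", "Q4_K_M")
ti_8b = ("RTX 4070 Ti 12GB", "Llama 3 8B", "Q4_K_M")

print(f"Speedup on Llama 3 8B Q4_K_M: {speedup(ada_8b, ti_8b):.2f}x")
for config in RESULTS:
    print(config, f"{seconds_for(config, 500):.1f} s for 500 tokens")
```

On the one configuration both cards can run, the RTX 6000 Ada comes out roughly 1.6 times faster.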

Performance Analysis: Strengths and Weaknesses

NVIDIA RTX 6000 Ada 48GB: The Heavyweight Champion

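- Strength: The RTX 6000 Ada 48GB wins the one head-to-head comparison in the table: on Llama 3 8B with Q4_K_M it generates 130.99 tokens/second against 82.21 for the RTX 4070 Ti 12GB, roughly 1.6 times faster. It is also the only card of the two that can run Llama 3 70B (18.36 tokens/second with Q4_K_M); the RTX 4070 Ti 12GB does not have enough memory to load that model.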

- Weakness: The RTX 6000 Ada 48GB is significantly more expensive than the RTX 4070 Ti 12GB, which might be a barrier for some developers.

NVIDIA GeForce RTX 4070 Ti 12GB: The Versatile Challenger

- Strength: This GPU is a powerful option for the smaller Llama 3 8B model and offers a good balance of performance and affordability. It is an excellent choice for developers who are starting with LLMs or have budget constraints.

- Weakness: Its limited memory capacity prevents it from handling large models like Llama 3 70B.
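The memory limits discussed above can be estimated before buying anything. The sketch below is a back-of-the-envelope check, not a precise calculator: it assumes nominal parameter counts (8 and 70 billion), roughly 4.85 bits per weight for Q4_K_M, and a flat 1.5 GB allowance for the KV cache and runtime buffers. All three figures are assumptions for illustration, and real usage grows with context length.

```python
# Approximate bits stored per weight. The Q4_K_M value is an assumption;
# the exact figure varies slightly from model to model.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_size_gb(n_params_billion, quant):
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return n_params_billion * BITS_PER_WEIGHT[quant] / 8


def fits(n_params_billion, quant, vram_gb, overhead_gb=1.5):
    """True if weights plus an assumed fixed overhead fit in `vram_gb`."""
    return weight_size_gb(n_params_billion, quant) + overhead_gb <= vram_gb


for name, vram in [("RTX 4070 Ti 12GB", 12), ("RTX 6000 Ada 48GB", 48)]:
    for params, quant in [(8, "Q4_K_M"), (8, "F16"), (70, "Q4_K_M"), (70, "F16")]:
        size = weight_size_gb(params, quant)
        verdict = "fits" if fits(params, quant, vram) else "does not fit"
        print(f"{name}: Llama 3 {params}B {quant} (~{size:.1f} GB) {verdict}")
```

Under these assumptions the estimate lines up with which benchmark results exist: only Llama 3 8B with Q4_K_M fits on the 12GB card, while the 48GB card holds everything except Llama 3 70B at F16.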

Practical Recommendations: Choosing the Right Tool for the Job

For Smaller Models:

If your primary focus is working with smaller LLM models like Llama 3 8B, the RTX 4070 Ti 12GB is a great choice. It offers excellent performance at a more reasonable price point.

For Larger Models:

For working with large models like Llama 3 70B, the RTX 6000 Ada 48GB is the only one of these two cards that can do the job. Its 48GB of memory is enough to hold the quantized model, and it generated 18.36 tokens/second in our test.

For Budget-Conscious Developers:

If affordability is a primary concern, the RTX 4070 Ti 12GB offers a good balance of performance and cost. However, remember its memory limitations and its inability to handle larger models.

Analogy Time:

Think of your choice as picking a car for a road trip.

- RTX 4070 Ti 12GB: This is like a sporty sedan. It’s fast and affordable, but it might not have enough space for a long road trip with a lot of luggage.

- RTX 6000 Ada 48GB: This is like a spacious SUV. It’s designed for long hauls and can handle any load. However, it comes with a higher price tag.

Beyond Token Generation: Other Considerations

The token generation speed is just one aspect of LLM performance. Other factors also play a significant role, including:

- Memory Bandwidth (BW): Generating each new token requires reading most of the model's weights from GPU memory, so higher memory bandwidth generally translates into faster token generation.

- GPU Cores: The number of GPU cores influences the parallel processing capability of the GPU, allowing it to handle more complex tasks simultaneously.

Let's Look at the Numbers: Bandwidth and GPU Cores

| GPU | Memory Bandwidth (GB/s) | GPU (CUDA) Cores |
|---|---|---|
| NVIDIA GeForce RTX 4070 Ti 12GB | 504 | 7680 |
| NVIDIA RTX 6000 Ada 48GB | 960 | 18176 |

The RTX 6000 Ada 48GB outperforms the RTX 4070 Ti 12GB in both categories. It offers roughly 1.9 times the memory bandwidth (960 vs. 504 GB/s) and has a larger number of GPU cores, making it a powerhouse for demanding AI tasks.
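A common rule of thumb says that single-stream token generation is limited by memory bandwidth: if every token requires reading all the weights once, then tokens/second cannot exceed bandwidth divided by model size. The sketch below applies that rule to the measured results. The model sizes are our own estimates (nominal parameter counts, about 4.85 bits per weight for Q4_K_M), so treat the ceilings as rough guides rather than hard limits.

```python
def bandwidth_ceiling_tps(bandwidth_gb_s, weights_gb):
    """Upper bound on tokens/second if each token reads all weights once."""
    return bandwidth_gb_s / weights_gb


# Memory bandwidth in GB/s, from the specifications table.
BANDWIDTH = {"RTX 4070 Ti 12GB": 504, "RTX 6000 Ada 48GB": 960}

# Estimated weight sizes in GB (assumptions, not measured file sizes).
WEIGHTS_GB = {
    ("Llama 3 8B", "Q4_K_M"): 8 * 4.85 / 8,
    ("Llama 3 8B", "F16"): 8 * 16 / 8,
    ("Llama 3 70B", "Q4_K_M"): 70 * 4.85 / 8,
}

# Measured tokens/second, from the benchmark table.
MEASURED = [
    ("RTX 4070 Ti 12GB", "Llama 3 8B", "Q4_K_M", 82.21),
    ("RTX 6000 Ada 48GB", "Llama 3 8B", "Q4_K_M", 130.99),
    ("RTX 6000 Ada 48GB", "Llama 3 8B", "F16", 51.97),
    ("RTX 6000 Ada 48GB", "Llama 3 70B", "Q4_K_M", 18.36),
]

for gpu, model, quant, tps in MEASURED:
    ceiling = bandwidth_ceiling_tps(BANDWIDTH[gpu], WEIGHTS_GB[(model, quant)])
    print(f"{gpu} {model} {quant}: measured {tps:.1f}, "
          f"ceiling ~{ceiling:.0f} tokens/s ({tps / ceiling:.0%} of ceiling)")
```

Every measured speed lands below its estimated ceiling, which is consistent with memory bandwidth, rather than core count, being the main driver of generation speed here.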

Quantization: A Simple Explanation

Quantization is a technique used to reduce the size of LLMs without sacrificing too much accuracy. It involves converting the model's weights from 16-bit or 32-bit floating-point numbers to smaller representations, such as 4-bit integers with a shared scale factor per block of weights.

Imagine a model as a giant recipe for generating text. Quantization is like replacing some of the ingredients with smaller, more compact versions, making the recipe easier to store and use.

Q4_K_M is a 4-bit quantization format that strikes a good balance between compactness and output quality, and it is commonly used for models of any size. F16 is not quantized at all: it stores each weight as a 16-bit floating-point number, which preserves more precision but takes more than three times the memory.
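To make the idea concrete, here is a toy example of block quantization in plain Python. It is a simplified illustration (symmetric 4-bit rounding with one scale per block), not the actual Q4_K_M format, which is more elaborate. The function names and sample weights are made up for this sketch.

```python
def quantize_block(values, bits=4):
    """Map a block of floats to signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1            # largest integer level, 7 for 4 bits
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * q for q in quantized]


weights = [0.12, -0.53, 0.98, -0.05]      # made-up sample weights
scale, quantized = quantize_block(weights)
restored = dequantize_block(scale, quantized)

print("integers:", quantized)
print("restored:", [round(r, 3) for r in restored])
print("max error:", max(abs(w - r) for w, r in zip(weights, restored)))
```

Each weight now needs only 4 bits plus a share of the block's scale, at the cost of a rounding error of at most half the scale per weight.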

FAQ: Your LLM and GPU Questions Answered

What's the best device for running LLMs locally?

The best device for you depends on your specific needs:

- Smaller models: The RTX 4070 Ti 12GB is a great option for smaller models like Llama 3 8B.

- Larger models: The RTX 6000 Ada 48GB is the clear choice for handling models like Llama 3 70B.

- Budget: If affordability is a concern, the RTX 4070 Ti 12GB is more accessible.

What if I need to run multiple LLM models simultaneously?

In this case, memory is usually the first constraint, because every loaded model needs its own share of GPU memory. You might need a GPU with more memory, multiple GPUs, or a powerful server setup.

What are other GPUs suitable for LLM development?

Other popular options include the NVIDIA A100, A40, and H100, which are data-center GPUs built for AI and other compute workloads. However, they are typically more expensive and designed for server chassis, so they might not be suitable for a desktop workstation.

What is the difference between local and cloud-based LLM deployment?

Local deployment gives you more control over your data and can reduce latency, since requests never leave your machine. Cloud-based deployment is often more accessible, requires less technical expertise, and involves no upfront hardware cost.

Where can I learn more about LLMs and GPU acceleration?

There are many resources available online, including:

- Llama.cpp project: This popular repository provides instructions for compiling and running LLMs locally.

- Hugging Face: Excellent platform for exploring and experimenting with LLMs.

- Hugging Face Transformers: A library for working with pre-trained LLMs.
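If you want to measure token generation speed on your own hardware, the sketch below shows one possible approach. It assumes the `llama-cpp-python` package (Python bindings for Llama.cpp) is installed and that you have a GGUF model file. The helper names and the model path are placeholders of our own, and this is not the method used to produce the numbers in this article. Note that the timing includes prompt processing, so the result will read slightly lower than a pure generation benchmark.

```python
import time


def tokens_per_second(generate, prompt, max_tokens):
    """Time one call to `generate` and return generated tokens per second.

    `generate(prompt, max_tokens)` must return the number of tokens produced.
    """
    start = time.perf_counter()
    n_tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed


def make_llama_cpp_generate(model_path):
    """Build a `generate` function backed by llama-cpp-python (assumed installed)."""
    from llama_cpp import Llama  # imported lazily; only needed for real runs

    # n_gpu_layers=-1 asks the library to offload all layers to the GPU.
    llm = Llama(model_path=model_path, n_gpu_layers=-1, verbose=False)

    def generate(prompt, max_tokens):
        result = llm(prompt, max_tokens=max_tokens)
        return result["usage"]["completion_tokens"]

    return generate
```

A run would then look like `tokens_per_second(make_llama_cpp_generate("path/to/model.gguf"), "Explain GPUs briefly.", 128)`, where the path is a placeholder for your own model file.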

Keywords:

LLM, Large Language Model, NVIDIA, GPU, RTX 4070 Ti, RTX 6000 Ada, token generation, Llama 3, quantization, Q4KM, F16, performance, benchmark, AI, machine learning, deep learning, development, local, cloud, memory bandwidth, GPU cores, AI development, AI applications, AI hardware, GPU acceleration, Hugging Face, Llama.cpp