Which is Better for AI Development: NVIDIA 4070 Ti 12GB or NVIDIA RTX 4000 Ada 20GB x4? Local LLM Token Speed Generation Benchmark

Introduction

The world of large language models (LLMs) is exploding with exciting new developments. These AI models are capable of generating human-quality text, translating languages, and even writing code. One of the biggest challenges in working with LLMs is the computational power required to run them.

This article dives into a head-to-head comparison of two popular choices for local LLM development: the NVIDIA GeForce RTX 4070 Ti 12GB, a potent single-GPU solution, and the NVIDIA RTX 4000 Ada 20GB x4, a formidable multi-GPU setup. We'll dissect the performance, analyze the strengths and weaknesses, and provide insights to guide your decision for your specific use case. Buckle up, it’s going to be a fun ride through the world of AI hardware!

Understanding the Players: NVIDIA 4070 Ti 12GB vs. NVIDIA RTX 4000 Ada 20GB x4

Before we dive into the benchmark results, let's quickly understand our contenders:

NVIDIA GeForce RTX 4070 Ti 12GB: This single-GPU beast packs a punch with its 12GB of GDDR6X memory, delivering impressive performance for a wide range of tasks, including AI development.

NVIDIA RTX 4000 Ada 20GB x4: This multi-GPU setup is a powerhouse, featuring four RTX 4000 Ada graphics cards with 20GB of memory each, for 80GB of combined memory and a large parallel processing capability.

The Benchmark: Llama 3 Model Token Speed Generation

We'll be putting these GPUs through their paces using the Llama 3 model. This exciting new open-source LLM is gaining popularity, and we're interested in seeing how these GPUs handle both smaller (8B) and larger (70B) versions of the model.

Our primary focus is on tokens per second (tokens/s), the key metric measuring the speed of text generation. We'll be using two different quantization levels for the Llama 3 model:

- Q4KM: A 4-bit quantization format (commonly written Q4_K_M in GGUF tooling) that stores the model's weights at roughly 4 to 5 bits each instead of 16. Think of it like a "low-resolution" version of the model, which takes up much less memory but might sacrifice some accuracy.

- F16: A half-precision (16-bit) floating-point format that keeps every weight at 2 bytes. It is the unquantized baseline in this comparison: it stays closest to the original model's accuracy but needs roughly three to four times the memory of Q4KM.

We'll also include processing speed, which measures how quickly the GPU works through the input prompt before it starts generating new tokens.
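To make the tokens/s metric concrete, here is a minimal sketch of how it can be measured: count the tokens a model emits and divide by the elapsed wall-clock time. The `fake_model` generator is a hypothetical stand-in for a real LLM, and this is not the harness used to produce the numbers in this article.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return tokens emitted per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed


def fake_model() -> Iterable[str]:
    """Hypothetical stand-in for an LLM: yields 50 tokens, at least 2 ms apart."""
    for i in range(50):
        time.sleep(0.002)
        yield f"tok{i}"


print(f"{tokens_per_second(fake_model):.1f} tokens/s")
```

With a real model you would pass a function that streams tokens from your inference library instead of `fake_model`.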

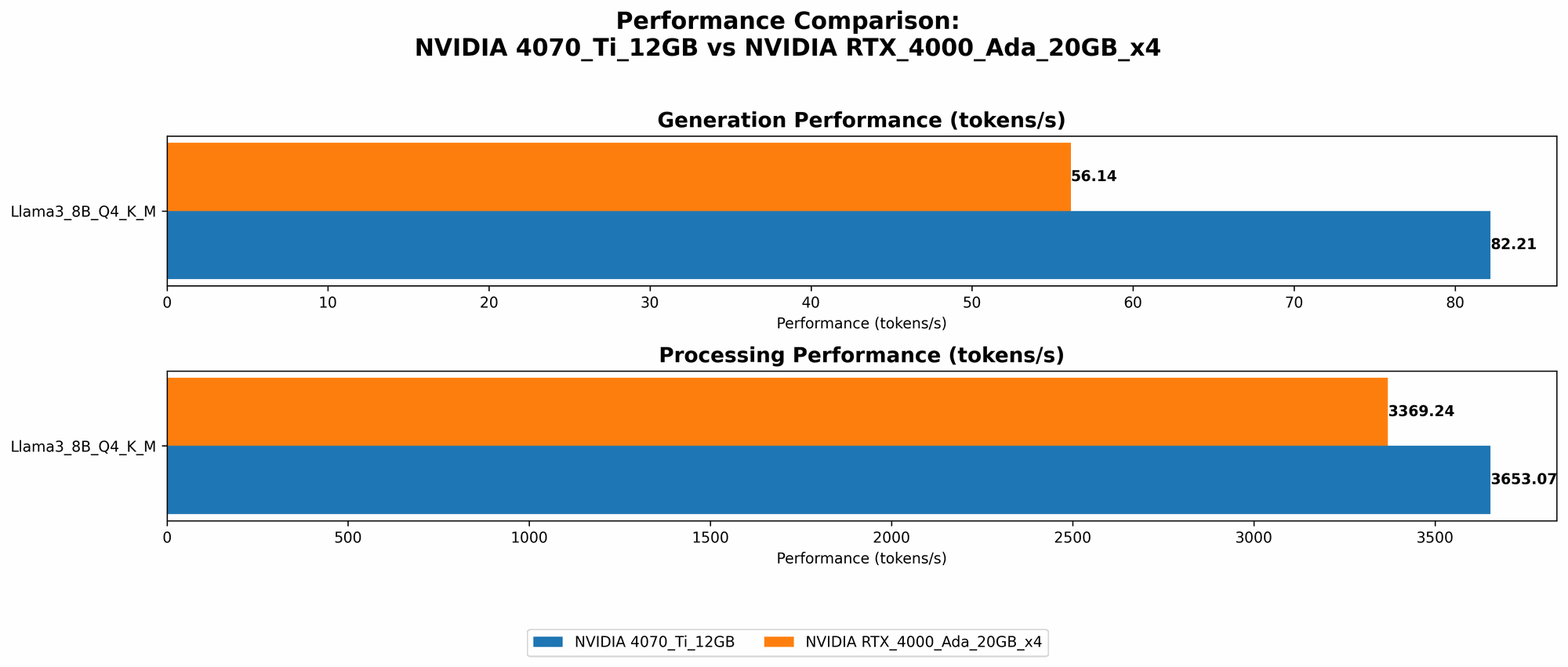

Performance Analysis: Comparing Token Speed Generation

Here's a breakdown of the token generation speed results for both GPUs:

Comparison of NVIDIA 4070 Ti 12GB and NVIDIA RTX 4000 Ada 20GB x4

| Model | NVIDIA 4070 Ti 12GB (tokens/s) | NVIDIA RTX 4000 Ada 20GB x4 (tokens/s) |
|---|---|---|
| Llama 3 8B Q4KM Generation | 82.21 | 56.14 |
| Llama 3 8B F16 Generation | N/A | 20.58 |
| Llama 3 70B Q4KM Generation | N/A | 7.33 |
| Llama 3 70B F16 Generation | N/A | N/A |

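Note: N/A means no result is available for that configuration. The 4070 Ti 12GB has no results for 8B F16 or for either 70B variant because those models do not fit in its 12GB of memory. No 70B F16 result is available for the 4000 Ada x4 either, so that configuration can't be compared. At 2 bytes per weight, a 70B model needs about 140GB for the weights alone, more than the 80GB the four cards provide together, which is the likely reason.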
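The pattern of N/A entries can be sanity-checked with a rough weight-size estimate: parameters times bits per weight, compared with the available GPU memory. The sketch below rests on assumptions that are not part of the benchmark: nominal parameter counts of 8 and 70 billion, an average of about 4.85 bits per weight for Q4KM, and no allowance for the KV cache or runtime overhead.

```python
# Weight-only memory estimate. All constants below are assumptions for
# illustration, not measured values from the benchmark.
BITS_PER_WEIGHT = {"Q4KM": 4.85, "F16": 16.0}   # approximate average for Q4KM
PARAMS = {"8B": 8e9, "70B": 70e9}               # nominal parameter counts
VRAM_GB = {"4070 Ti 12GB": 12, "RTX 4000 Ada 20GB x4": 4 * 20}


def weight_size_gb(model: str, fmt: str) -> float:
    """Size of the weights in GB (1 GB = 1e9 bytes)."""
    return PARAMS[model] * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits(model: str, fmt: str, setup: str) -> bool:
    """True if the weights alone are smaller than the setup's total memory."""
    return weight_size_gb(model, fmt) < VRAM_GB[setup]


for model in PARAMS:
    for fmt in BITS_PER_WEIGHT:
        verdicts = ", ".join(
            f"{setup}: {'fits' if fits(model, fmt, setup) else 'too large'}"
            for setup in VRAM_GB
        )
        print(f"Llama 3 {model} {fmt}: ~{weight_size_gb(model, fmt):.1f} GB ({verdicts})")
```

Under these assumptions, the configurations that come out as "too large" are the same ones marked N/A in the table. A real deployment needs extra headroom beyond the weights, so treat the estimate as a lower bound.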

NVIDIA 4070 Ti 12GB: A Single GPU Powerhouse

The NVIDIA 4070 Ti 12GB shines when you're working with the smaller 8B Llama 3 model using Q4KM quantization. It generates tokens about 46% faster than the NVIDIA RTX 4000 Ada 20GB x4 (82.21 vs. 56.14 tokens/s) and holds a smaller lead of about 8% in processing (3653.07 vs. 3369.24 tokens/s).

The card's 12GB of memory becomes a drawback when you need to run larger models such as Llama 3 70B or use F16 precision, neither of which produced a result on this card. With so little memory headroom, this setup might also not be ideal for handling a larger number of concurrent LLM requests.
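The size of the 4070 Ti's lead on the 8B Q4KM model can be computed directly from the benchmark figures. The snippet below is a small illustration; the numbers are copied from this article's tables, and the helper name `lead_percent` is our own.

```python
# Tokens/s for Llama 3 8B Q4KM, copied from the benchmark tables.
RESULTS = {
    "generation": {"4070 Ti 12GB": 82.21, "RTX 4000 Ada 20GB x4": 56.14},
    "processing": {"4070 Ti 12GB": 3653.07, "RTX 4000 Ada 20GB x4": 3369.24},
}


def lead_percent(faster: float, slower: float) -> float:
    """How much faster `faster` is than `slower`, as a percentage."""
    return (faster / slower - 1) * 100


for phase, speeds in RESULTS.items():
    lead = lead_percent(speeds["4070 Ti 12GB"], speeds["RTX 4000 Ada 20GB x4"])
    print(f"{phase}: 4070 Ti 12GB leads by {lead:.1f}%")
```

The gap is much wider for generation than for processing, which is worth keeping in mind if your workload is dominated by long prompts rather than long outputs.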

NVIDIA RTX 4000 Ada 20GB x4: Multi-GPU Scalability

The NVIDIA RTX 4000 Ada 20GB x4 demonstrates its strengths when working with the larger 70B Llama 3 model. Its four cards provide 80GB of combined memory, enough to hold the 70B Q4KM model, which it runs at 7.33 tokens/s.

However, there's a performance trade-off. The 4070 Ti 12GB outperforms the 4000 Ada x4 on the smaller 8B model when using Q4KM quantization. F16 is also costly on the 4000 Ada x4: 8B generation drops from 56.14 to 20.58 tokens/s, and there is no result for 70B F16.

Processing Speed: A Crucial Factor

| Model | NVIDIA 4070 Ti 12GB (tokens/s) | NVIDIA RTX 4000 Ada 20GB x4 (tokens/s) |
|---|---|---|
| Llama 3 8B Q4KM Processing | 3653.07 | 3369.24 |
| Llama 3 8B F16 Processing | N/A | 4366.64 |
| Llama 3 70B Q4KM Processing | N/A | 306.44 |
| Llama 3 70B F16 Processing | N/A | N/A |

The 4070 Ti 12GB again comes out ahead on the one configuration both setups can run, 8B Q4KM, though by a narrower margin than in generation. On the 4000 Ada x4, the 8B model processes faster in F16 (4366.64 tokens/s) than in Q4KM (3369.24 tokens/s), the reverse of the generation results. Processing speed matters most when you need to get through a large amount of input quickly, such as long prompts or large documents.
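Processing and generation speeds can be combined into a rough estimate of how long a full response takes. The sketch below assumes a simple model (prompt time plus generation time, nothing else) and a hypothetical workload of 2,000 prompt tokens and 500 output tokens; the speeds are the 8B Q4KM figures from the tables.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimated time to read the prompt and then generate the output."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 3 8B Q4KM speeds (processing tokens/s, generation tokens/s).
SETUPS = {
    "4070 Ti 12GB": (3653.07, 82.21),
    "RTX 4000 Ada 20GB x4": (3369.24, 56.14),
}

# Hypothetical workload chosen for illustration only.
PROMPT_TOKENS, OUTPUT_TOKENS = 2000, 500

for name, (processing_tps, generation_tps) in SETUPS.items():
    total = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, processing_tps, generation_tps)
    print(f"{name}: ~{total:.1f} s")
```

For this workload the estimate comes to roughly 6.6 seconds on the 4070 Ti and 9.5 seconds on the 4000 Ada x4, with generation accounting for most of the time on both. Real latency also depends on batching, context length, and software overhead that this model ignores.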

Practical Recommendations: Choosing the Right GPU

Here are some practical guidelines to help you make the best choice:

- For developers working with smaller LLMs who prioritize speed and efficiency, the 4070 Ti 12GB is a fantastic choice.

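- For running larger models such as Llama 3 70B, the 4000 Ada x4 provides the necessary memory and is the only setup here with results for that model. This benchmark measures inference only, so it says nothing direct about training.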

- If you're working with multiple LLMs simultaneously, the 4000 Ada x4 offers a significant advantage in managing resource allocation.

- For research projects and experimentation, the 4070 Ti 12GB offers a compelling balance of performance and cost-effectiveness.

Think of it like this: The 4070 Ti 12GB is a nimble sprinter who can quickly generate text on smaller LLMs, while the 4000 Ada x4 is a heavyweight marathon runner capable of handling the demands of larger models.

Quantization: A Game Changer

Before we move on, let's talk about quantization, which is a game-changer for AI development:

- Reduced model size: Quantization can significantly shrink the size of your LLM, making it easier to store and deploy.

- Faster inference: Quantization can speed up text generation. On the 4000 Ada x4, the 8B model generates 56.14 tokens/s with Q4KM versus 20.58 tokens/s with F16.

Think of it this way: quantization is like simplifying a complex recipe by reducing the number of ingredients. You might not have the full flavor, but you can make the dish more easily and quickly.
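To show the size-versus-accuracy trade-off in miniature, here is a toy example of symmetric 4-bit quantization on a handful of made-up weights. This is a deliberately simplified scheme for illustration, not the block-wise method that Q4KM uses, and the weight values are invented.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-7, 7] using one shared scale."""
    scale = max(abs(w) for w in weights) / 7 or 1.0  # avoid a zero scale
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.82, -0.41, 0.05, -0.97, 0.33, 0.6]  # made-up example values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 3) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each code fits in 4 bits instead of 16, which is where the memory savings come from, and the rounding error is the "flavor" that gets lost along the way.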

Frequently Asked Questions (FAQ)

- Q: What are LLMs?

- A: Large language models (LLMs) are sophisticated AI systems trained on massive amounts of text data to generate human-like text, translate languages, write code, and much more.

- Q: What is tokenization?

- A: Tokenization is the process of breaking down text into smaller units (tokens) that the LLM can understand. Think of it as turning a sentence into a set of building blocks.

- Q: What is quantization?

- A: Quantization is a technique for reducing the size of your LLM by storing its weights at lower precision, usually at only a small cost in accuracy.

- Q: How do I choose the right GPU for LLM development?

- A: Consider the size of the models you'll be working with, your desired performance, and your budget.

- Q: Is a multi-GPU setup always better than a single GPU?

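- A: Not necessarily. A multi-GPU setup offers more total memory and processing power, but it also costs more, and it isn't automatically faster: in this benchmark the single 4070 Ti 12GB generated tokens faster on the 8B Q4KM model.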

- Q: Where can I learn more about LLM development?

- A: Great resources include the Hugging Face website, OpenAI's website, and various online communities.
