Which is Better for AI Development: NVIDIA 4070 Ti 12GB or NVIDIA 4090 24GB x2? Local LLM Token Speed Generation Benchmark

Introduction

AI development is evolving rapidly, driven largely by advances in Large Language Models (LLMs). LLMs can generate human-like text, translate languages, write creative content, and answer questions informatively. But this power comes at a price: computational resources.

Choosing the right hardware for running LLMs locally is crucial for developers and researchers. This is where the eternal debate starts: GPUs vs CPUs, single GPU vs multi-GPU systems, and which model is the best for your specific needs. Today, we are diving into this debate by analyzing two popular contenders: a single NVIDIA 4070 Ti 12GB and a pair of NVIDIA 4090 24GB cards (4090 24GB x2). We'll compare their token generation speed on Llama models, a family of open-source LLMs known for their flexibility and efficiency.

NVIDIA 4070 Ti 12GB vs. NVIDIA 4090 24GB x2: A Token Speed Showdown

The numbers below are only useful once we understand what drives them. The real question is which of these GPU configurations is the "better" choice for AI development, and the answer is not straightforward: it depends heavily on the specific requirements of your LLM project.

Llama3 8B: A Tale of Two Titans (and a Quantization Quest)

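Let's begin with a familiar face in the LLM world: Llama 3 8B. This model is considered a good starting point for many developers due to its reasonable size and impressive performance. We'll look at a quantized variant (Q4 K_M) and an unquantized 16-bit variant (F16), which this article refers to as "full precision". Generation is the rate at which new tokens are produced, and processing is taken here to mean the rate at which the prompt is ingested. The results for both GPU configurations: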

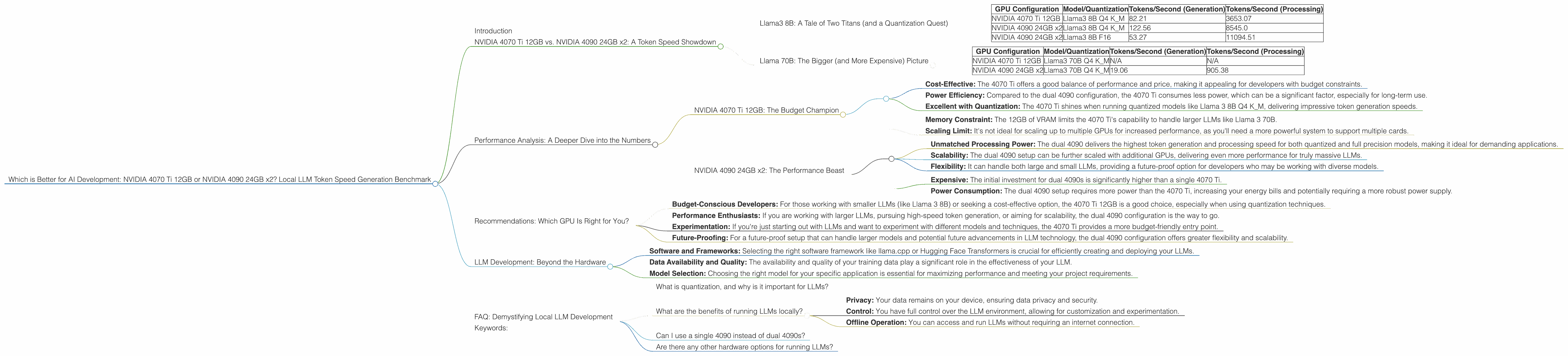

| GPU Configuration | Model/Quantization | Tokens/Second (Generation) | Tokens/Second (Processing) |

|---|---|---|---|

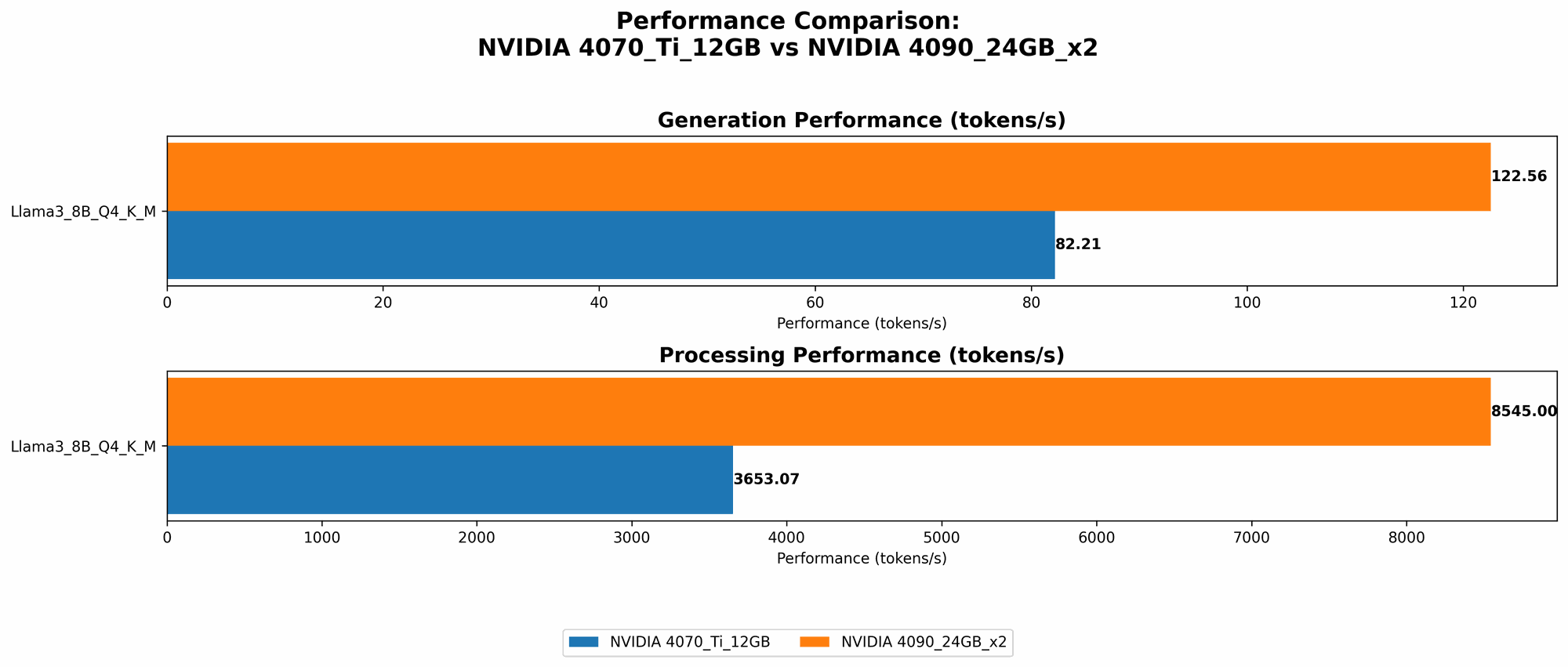

| NVIDIA 4070 Ti 12GB | Llama3 8B Q4 K_M | 82.21 | 3653.07 |

| NVIDIA 4090 24GB x2 | Llama3 8B Q4 K_M | 122.56 | 8545.0 |

| NVIDIA 4090 24GB x2 | Llama3 8B F16 | 53.27 | 11094.51 |

Observations:

- Quantization Power: The 4070 Ti 12GB shines with the quantized Q4 K_M variant of Llama 3 8B, generating 82.21 tokens per second. That is about two thirds of the dual 4090's Q4 K_M rate, from a single and far cheaper card.

- Dual 4090 Reigns Supreme: The dual 4090 configuration takes the lead in Q4 K_M generation at 122.56 tokens per second, about 1.5x the 4070 Ti's rate. It also runs the F16 variant at 53.27 tokens per second. The table has no F16 result for the 4070 Ti: at 16 bits per weight, an 8B model needs about 16GB for its weights alone, more than the card's 12GB.

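- Processing Power: The 4090 x2 configuration processes tokens about 2.3x faster than the 4070 Ti for Q4 K_M (8545.0 vs. 3653.07 tokens per second). Its F16 processing rate of 11094.51 tokens per second is about 3x the 4070 Ti's Q4 K_M figure, though that is not a like-for-like comparison because no F16 result is listed for the 4070 Ti.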
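
The ratios in these observations can be rechecked with a few lines of Python. This is a minimal sketch: the figures are copied from the table above, while the dictionary layout and the `speedup` helper are illustrative names and not part of any benchmark tool.

```python
# Throughput figures copied from the Llama 3 8B table (tokens/second).
# The keys and helper names are illustrative, not from any benchmark tool.
RESULTS = {
    ("4070 Ti", "Q4 K_M"): {"generation": 82.21, "processing": 3653.07},
    ("4090 x2", "Q4 K_M"): {"generation": 122.56, "processing": 8545.0},
    ("4090 x2", "F16"): {"generation": 53.27, "processing": 11094.51},
}


def speedup(fast, slow, metric):
    """Return how many times faster `fast` is than `slow` for one metric."""
    return RESULTS[fast][metric] / RESULTS[slow][metric]


if __name__ == "__main__":
    baseline = ("4070 Ti", "Q4 K_M")
    for config in (("4090 x2", "Q4 K_M"), ("4090 x2", "F16")):
        for metric in ("generation", "processing"):
            ratio = speedup(config, baseline, metric)
            print(f"{config} vs {baseline} {metric}: {ratio:.2f}x")
```

Every ratio here uses the 4070 Ti's Q4 K_M row as the baseline, since that is the only 4070 Ti result in the table.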

What’s the Deal with Quantization?

Quantization is like putting your LLM on a diet! It reduces the precision of the model's weights, for example from 16 bits to roughly 4 bits each, which shrinks the file and the memory needed to hold it and usually speeds up inference. Think of it as using a smaller bucket to store your data: there is less to move through GPU memory for every token. Q4 K_M (spelled Q4_K_M in llama.cpp) is a popular choice because it strikes a balance between accuracy and speed.
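
To make the idea concrete, here is a toy sketch of 4-bit quantization in plain Python. It uses a simple symmetric scheme with one scale per block of weights. This is an assumption chosen for illustration and is not the actual Q4 K_M algorithm, which is more elaborate; the function names are hypothetical.

```python
def quantize_block(values, bits=4):
    """Map floats to small signed integers plus one shared scale.

    Toy symmetric scheme for illustration only; not the Q4 K_M format.
    """
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits
    largest = max(abs(v) for v in values)
    scale = (largest / qmax) or 1.0  # avoid a zero scale for all-zero blocks
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.7, -0.35, 0.1, 0.0]
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    for original, approx in zip(weights, restored):
        print(f"{original:+.3f} -> {approx:+.3f}")
```

Each weight is stored as a small integer, and the rounding error per weight is at most half the scale. That small loss of accuracy is the price paid for the smaller footprint.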

Practical Implications:

- Budget-Conscious Development: For developers working with Llama 3 8B and seeking a budget-friendly option, the 4070 Ti 12GB delivers impressive performance with quantization.

- Peak Performance: If your project demands the highest token generation speed possible, the dual 4090 configuration is the clear winner. It can handle both quantized and full precision models without breaking a sweat.

Llama 3 70B: The Bigger (and More Expensive) Picture

Let's move on to a bigger beast, the Llama 3 70B model. This model is significantly more complex than the 8B version and requires more resources to operate efficiently.

| GPU Configuration | Model/Quantization | Tokens/Second (Generation) | Tokens/Second (Processing) |
|---|---|---|---|
| NVIDIA 4070 Ti 12GB | Llama3 70B Q4 K_M | N/A | N/A |
| NVIDIA 4090 24GB x2 | Llama3 70B Q4 K_M | 19.06 | 905.38 |

Observations:

- 4070 Ti Limitation: Unfortunately, the 4070 Ti 12GB has no result for Llama 3 70B, even with quantization. The limit is memory rather than compute: at roughly 4 to 5 bits per weight, a 70-billion-parameter model needs around 40GB for its weights alone, more than three times the card's 12GB of VRAM.

- Dual 4090: Still a Champion: The dual 4090 configuration can handle Llama 3 70B, delivering a respectable 19.06 tokens per second generation speed.

The Memory Game: Why Does Size Matter?

Think of an LLM as a giant dictionary with tons of words (parameters) that it uses to understand and generate text. The bigger the dictionary, the more complex language it can understand and the more creative text it can generate. But with this complexity comes a need for bigger buckets (memory) to store all those words.
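
The size of the "bucket" can be estimated with simple arithmetic: parameters times bits per weight, divided by eight to get bytes. The sketch below rests on stated assumptions: nominal parameter counts of 8 and 70 billion, about 4.8 effective bits per weight for Q4 K_M (an approximation), and a flat 2GB allowance for context and runtime overhead (a placeholder, since real overhead varies with context length). The function names are hypothetical.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits(params_billions, bits_per_weight, vram_gb, overhead_gb=2.0):
    """Rough check: weights plus an assumed overhead must fit in VRAM."""
    return weight_memory_gb(params_billions, bits_per_weight) + overhead_gb <= vram_gb


if __name__ == "__main__":
    q4_bits = 4.8  # assumed effective bits per weight for Q4 K_M
    cases = [
        ("Llama 3 8B Q4 K_M", 8, q4_bits),
        ("Llama 3 8B F16", 8, 16),
        ("Llama 3 70B Q4 K_M", 70, q4_bits),
    ]
    for name, params, bits in cases:
        size = weight_memory_gb(params, bits)
        print(f"{name}: ~{size:.1f} GB, "
              f"12GB card: {fits(params, bits, 12)}, "
              f"2 x 24GB: {fits(params, bits, 48)}")
```

The 48GB figure assumes the runtime can split the model across both cards; the two GPUs do not form a single memory pool.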

Practical Implications:

- Budget-Conscious Choice: The 4070 Ti 12GB might be a good choice for smaller LLMs like Llama 3 8B but falls short when it comes to larger models.

- Scaling Up: If you are planning to work with larger LLMs in the future, the dual 4090 configuration offers the scalability needed to tackle these memory-intensive models.

Performance Analysis: A Deeper Dive into the Numbers

Looking at the raw token speed numbers alone doesn't tell the whole story. Let's analyze each GPU's strengths and weaknesses to gain a clearer understanding of their performance characteristics.

NVIDIA 4070 Ti 12GB: The Budget Champion

The 4070 Ti 12GB is a great entry-level option for developers looking to get started with local LLM development. Here are its key advantages:

- Cost-Effective: The 4070 Ti offers a good balance of performance and price, making it appealing for developers with budget constraints.

- Power Efficiency: Compared to the dual 4090 configuration, the 4070 Ti consumes less power, which can be a significant factor, especially for long-term use.

- Excellent with Quantization: The 4070 Ti shines when running quantized models like Llama 3 8B Q4 K_M, delivering impressive token generation speeds.

However, the 4070 Ti has its limitations:

- Memory Constraint: The 12GB of VRAM limits the 4070 Ti's capability to handle larger LLMs like Llama 3 70B.

- Scaling Limit: It's not ideal for scaling up to multiple GPUs for increased performance, as you'll need a more powerful system to support multiple cards.

NVIDIA 4090 24GB x2: The Performance Beast

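The dual 4090 configuration boasts impressive performance and 48GB of VRAM across its two cards, enough to hold models such as Llama 3 70B Q4 K_M that the 4070 Ti cannot fit. The memory is not a single pool, so a large model has to be split across the two GPUs.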

- Unmatched Processing Power: Wherever both configurations have a result, the dual 4090 delivers the higher token generation and processing speed, and it is the only one of the two with F16 and 70B results, making it ideal for demanding applications.

- Scalability: The dual 4090 setup can be further scaled with additional GPUs, delivering even more performance for truly massive LLMs.

- Flexibility: It can handle both large and small LLMs, providing a future-proof option for developers who may be working with diverse models.

However, this power comes at a cost:

- Expensive: The initial investment for dual 4090s is significantly higher than a single 4070 Ti.

- Power Consumption: The dual 4090 setup requires more power than the 4070 Ti, increasing your energy bills and potentially requiring a more robust power supply.
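
The power trade-off can be put in rough numbers by dividing generation speed by power draw. The sketch below uses NVIDIA's published board power ratings (285W for the 4070 Ti, 450W per 4090) as a stand-in for real consumption. That is an assumption: actual draw during inference was not measured in this benchmark and is often lower than the rated figure.

```python
# Generation speed for Llama 3 8B Q4 K_M, copied from the benchmark table.
GENERATION_TPS = {"4070 Ti": 82.21, "4090 x2": 122.56}

# Nominal board power in watts (published ratings, not measured draw).
BOARD_POWER_W = {"4070 Ti": 285, "4090 x2": 2 * 450}


def tokens_per_joule(config):
    """Tokens generated per joule, assuming the card draws its rated power."""
    return GENERATION_TPS[config] / BOARD_POWER_W[config]


if __name__ == "__main__":
    for config in GENERATION_TPS:
        print(f"{config}: {tokens_per_joule(config):.3f} tokens per joule")
```

Under these assumptions the 4070 Ti produces roughly twice as many tokens per joule on the 8B model, even though the dual 4090 is faster in absolute terms.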

Recommendations: Which GPU Is Right for You?

The "better" GPU depends heavily on your specific needs and project scope. Here's our recommendation breakdown:

- Budget-Conscious Developers: For those working with smaller LLMs (like Llama 3 8B) or seeking a cost-effective option, the 4070 Ti 12GB is a good choice, especially when using quantization techniques.

- Performance Enthusiasts: If you are working with larger LLMs, pursuing high-speed token generation, or aiming for scalability, the dual 4090 configuration is the way to go.

- Experimentation: If you're just starting out with LLMs and want to experiment with different models and techniques, the 4070 Ti provides a more budget-friendly entry point.

- Future-Proofing: For a future-proof setup that can handle larger models and potential future advancements in LLM technology, the dual 4090 configuration offers greater flexibility and scalability.

LLM Development: Beyond the Hardware

Choosing the right GPU is only one piece of the puzzle. Other factors influence your LLM development journey, such as:

- Software and Frameworks: Selecting the right software framework, such as llama.cpp or Hugging Face Transformers, is crucial for running and deploying your LLMs efficiently.

- Data Availability and Quality: The availability and quality of your training data play a significant role in the effectiveness of your LLM.

- Model Selection: Choosing the right model for your specific application is essential for maximizing performance and meeting your project requirements.
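
Whichever framework and model you choose, it helps to measure token throughput on your own hardware instead of relying only on published figures. Below is a minimal, framework-agnostic sketch. The `generate` argument is a hypothetical callable that yields tokens one at a time; you would wrap your framework's streaming API to match it, and no specific library call is assumed here.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[str], Iterable[str]],
    prompt: str,
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Time one streamed generation and return tokens per second.

    `generate` is a placeholder for whatever streaming call your
    framework provides; it must yield one item per generated token.
    """
    start = clock()
    token_count = sum(1 for _ in generate(prompt))
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return token_count / elapsed


if __name__ == "__main__":
    def fake_generate(prompt):
        # Stand-in generator: pretends each token takes 10 ms to produce.
        for word in ("local", "models", "are", "fun"):
            time.sleep(0.01)
            yield word

    rate = measure_tokens_per_second(fake_generate, "Hello")
    print(f"{rate:.1f} tokens/second")
```

This measures the whole call, so the time spent ingesting the prompt is included. Timing a long prompt with a single output token, and a short prompt with many output tokens, is one simple way to separate the two.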

FAQ: Demystifying Local LLM Development

What is quantization, and why is it important for LLMs?

Quantization is a technique used to reduce the precision of an LLM's weights, resulting in a smaller file size and potentially faster inference. It's like using a smaller bucket to store your data, allowing your GPU to work faster. This is especially beneficial for developers with limited memory resources or those seeking to optimize performance.

What are the benefits of running LLMs locally?

Running LLMs locally offers several advantages, including:

- Privacy: Your data remains on your device, ensuring data privacy and security.

- Control: You have full control over the LLM environment, allowing for customization and experimentation.

- Offline Operation: You can access and run LLMs without requiring an internet connection.

Can I use a single 4090 instead of dual 4090s?

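It depends on the model. A single 4090 with 24GB comfortably runs Llama 3 8B, both quantized and in F16. Llama 3 70B is a different matter: at Q4 K_M its weights alone take roughly 40GB, which does not fit in 24GB, so a single card would need a more aggressive quantization or partial offloading to system RAM, and both cost quality or speed. If you plan to work with models of that size, the dual 4090 setup offers the memory and computational power they need.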

Are there any other hardware options for running LLMs?

While GPUs are generally preferred for LLM development, CPUs can also be used, especially for smaller models or tasks like text processing. Cloud computing services like Google Cloud Platform and Amazon Web Services provide powerful infrastructure for training and deploying large LLMs.
