Which is Better for AI Development: NVIDIA 4070 Ti 12GB or NVIDIA 4090 24GB? Local LLM Token Speed Generation Benchmark

Introduction

In the rapidly evolving realm of artificial intelligence (AI), Large Language Models (LLMs) have taken center stage, revolutionizing how we interact with technology. Trained on massive datasets, LLMs can generate human-like text, translate languages, write creative content, and answer questions informatively, even when those questions are open-ended, challenging, or strange.

The power of LLMs lies in their ability to process and generate massive amounts of data – think of it as a supercharged brain that can juggle information at incredible speeds. But to unleash this potential, you need the right hardware. This is where GPUs – the powerful processors designed for complex calculations – come into play.

This article dives into the head-to-head comparison of two popular GPUs, the Nvidia 4070 Ti 12GB and the Nvidia 4090 24GB, to determine which one reigns supreme in local LLM token speed generation. We'll dissect the performance of each GPU using real-world benchmarks and provide practical guidance on choosing the best option for your AI development needs.

Comparing the NVIDIA 4070 Ti 12GB and NVIDIA 4090 24GB for Local LLM Token Speed Generation

Whether you're a seasoned AI developer or a curious newcomer exploring the frontiers of LLM technology, understanding the nuances of GPU performance is crucial. These powerful processors are the engines that drive LLMs, translating those complex computations into meaningful results.

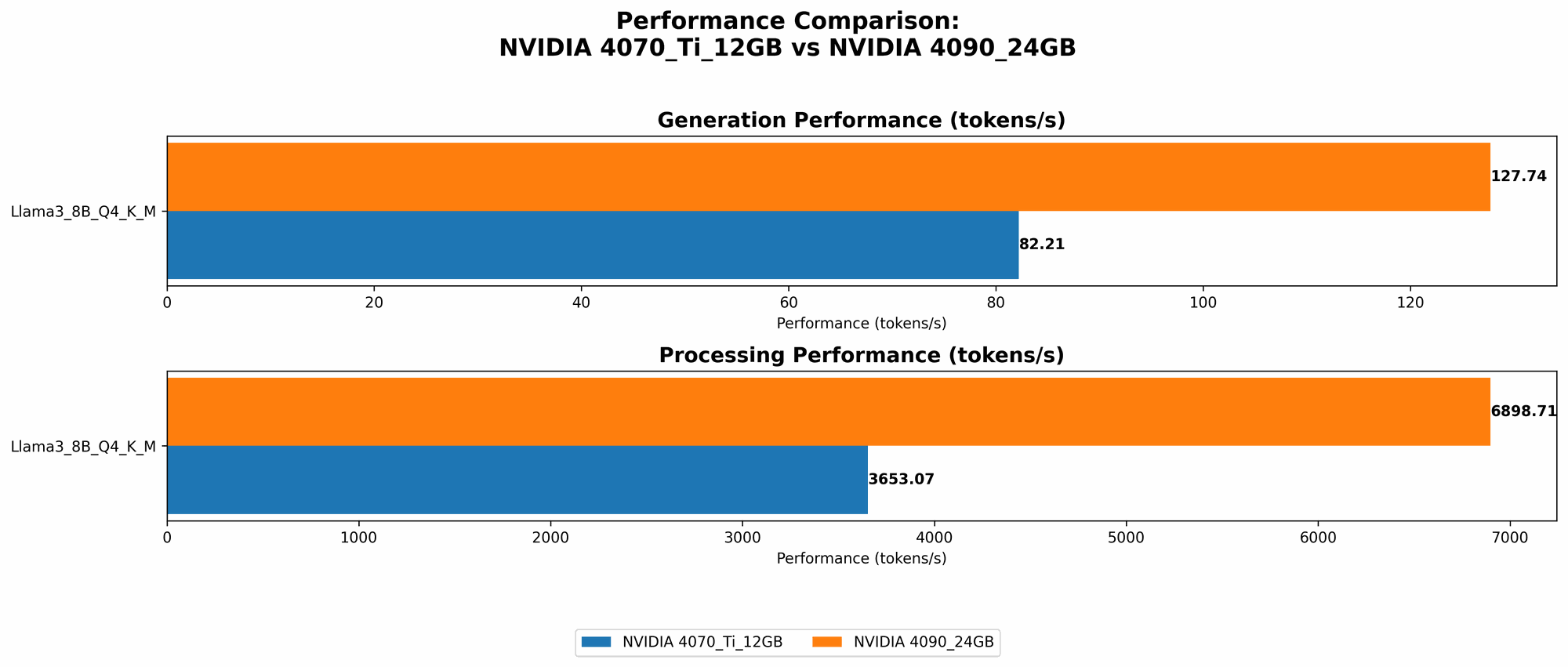

Performance Analysis: Token Speed Generation Benchmarks

We'll use real-world benchmark data to compare the token speed generation performance of the NVIDIA 4070 Ti 12GB and NVIDIA 4090 24GB across various Llama 3 models.

The numbers we'll look at represent *tokens per second (tokens/sec)*, a measure of how quickly the GPU can generate text. Think of it like the speed of a typewriter, but with machine learning muscle.
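If you want to take the same kind of measurement on your own machine, a minimal sketch looks like the following. It only shows the arithmetic (tokens divided by wall-clock seconds). `fake_generate` is a hypothetical stand-in for a real model call, and the `clock` parameter is our own addition so the timing can be swapped out for testing.

```python
import time
from typing import Callable, Sequence


def tokens_per_second(generate: Callable[[], Sequence],
                      clock: Callable[[], float] = time.perf_counter) -> float:
    """Run `generate` once and return generated tokens per wall-clock second."""
    start = clock()
    tokens = generate()
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return len(tokens) / elapsed


def fake_generate() -> list:
    # Hypothetical stand-in for a real model call:
    # sleeps briefly, then returns 50 placeholder "tokens".
    time.sleep(0.05)
    return ["tok"] * 50


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate):.1f} tokens/sec")
```

In practice you would replace `fake_generate` with a call into whatever inference library you use, and average over several runs to smooth out noise.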

Note: We are not including data for Llama 3 70B models because the benchmarks we've sourced currently don't have this data for the specific GPU configurations we're comparing.

To make it easier to digest, we'll represent the data in a table.

| Model | NVIDIA 4070 Ti 12GB (tokens/sec) | NVIDIA 4090 24GB (tokens/sec) |
|---|---|---|
| Llama 3 8B Q4_K_M Generation | 82.21 | 127.74 |
| Llama 3 8B F16 Generation | Not available | 54.34 |

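Interesting observation: For Llama 3 8B with Q4_K_M quantization, the 4090 24GB generates about 55% more tokens per second than the 4070 Ti 12GB (127.74 vs. 82.21). No F16 (half-precision floating-point) comparison is possible because the 4070 Ti has no F16 result, most likely because the F16 weights of an 8B model (about 16GB) do not fit in 12GB of memory. The 4090's lead probably comes from its larger and faster memory (a 384-bit bus vs. 192-bit) and its higher CUDA core count (16384 vs. 7680), rather than from clock speed, which is similar on both cards.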
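To see how much memory speed matters here, we can compare the measured numbers against a simple upper bound. The sketch below assumes token generation has to read every model weight once per token, so the ceiling is memory bandwidth divided by model size. The bandwidth figures are spec-sheet values and the 4.9GB model size is our own assumption; neither comes from the benchmark data.

```python
# Spec-sheet memory bandwidth in GB/s (assumption: taken from NVIDIA's
# published specifications, not from the benchmark).
BANDWIDTH_GBPS = {"4070 Ti 12GB": 504.0, "4090 24GB": 1008.0}

# Measured Q4_K_M generation speeds from the table above (tokens/sec).
MEASURED_TPS = {"4070 Ti 12GB": 82.21, "4090 24GB": 127.74}

# Assumed size of the Llama 3 8B Q4_K_M weights in GB (not from the benchmark).
MODEL_GB = 4.9


def bandwidth_bound_tps(bandwidth_gbps: float, model_gb: float) -> float:
    """Upper bound on tokens/sec if each token requires reading all weights once."""
    return bandwidth_gbps / model_gb


for gpu, bandwidth in BANDWIDTH_GBPS.items():
    bound = bandwidth_bound_tps(bandwidth, MODEL_GB)
    measured = MEASURED_TPS[gpu]
    print(f"{gpu}: bound {bound:.0f} tok/s, measured {measured:.2f} tok/s "
          f"({measured / bound:.0%} of bound)")
```

Under these assumptions, both cards land below their ceiling, and the 4090 sits further below it than the 4070 Ti. That would be consistent with the measured gap (about 1.55x) being smaller than the 2x bandwidth gap, but treat it as a rough estimate rather than an explanation.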

Performance Analysis: Token Processing Benchmarks

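Now let's dive into token processing performance. This refers to how quickly the GPU can read the input prompt before it starts generating a reply (often called prompt processing). Prompt tokens are handled in parallel, which is why these numbers are far higher than the generation speeds above. It's a crucial factor in applications that feed the model long prompts or documents.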

| Model | NVIDIA 4070 Ti 12GB (tokens/sec) | NVIDIA 4090 24GB (tokens/sec) |
|---|---|---|
| Llama 3 8B Q4_K_M Processing | 3653.07 | 6898.71 |
| Llama 3 8B F16 Processing | Not available | 9056.26 |

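Key takeaway: As with generation, the 4090 24GB outperforms the 4070 Ti 12GB in Q4_K_M processing, and by a wider margin: about 1.9x here versus about 1.55x for generation. Prompt processing leans more on raw compute than generation does, so the 4090's larger core count likely counts for more. No F16 comparison is possible because the 4070 Ti has no F16 result. On the 4090 itself, F16 processing (9056.26 tokens/sec) is faster than Q4_K_M processing (6898.71 tokens/sec), even though F16 generation is slower.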
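To translate the two tables into something you can feel, the sketch below computes the speedup ratios and estimates how long a single reply would take. It assumes the total time is simply prompt tokens divided by processing speed plus output tokens divided by generation speed, and that the benchmark rates hold at these lengths. The 2000-token prompt and 500-token reply are example values we picked, not benchmark settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Benchmark:
    processing_tps: float  # prompt processing, tokens/sec
    generation_tps: float  # token generation, tokens/sec


# Llama 3 8B Q4_K_M results from the two tables above.
Q4_K_M = {
    "4070 Ti 12GB": Benchmark(processing_tps=3653.07, generation_tps=82.21),
    "4090 24GB": Benchmark(processing_tps=6898.71, generation_tps=127.74),
}


def speedup(fast: float, slow: float) -> float:
    """How many times faster `fast` is than `slow`."""
    return fast / slow


def response_seconds(bench: Benchmark, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated time to read the prompt and then generate the reply."""
    return prompt_tokens / bench.processing_tps + output_tokens / bench.generation_tps


slow, fast = Q4_K_M["4070 Ti 12GB"], Q4_K_M["4090 24GB"]
print(f"generation speedup: {speedup(fast.generation_tps, slow.generation_tps):.2f}x")
print(f"processing speedup: {speedup(fast.processing_tps, slow.processing_tps):.2f}x")
for gpu, bench in Q4_K_M.items():
    print(f"{gpu}: {response_seconds(bench, 2000, 500):.1f} s for 2000 in / 500 out")
```

With these example lengths, the estimate comes out to roughly 6.6 seconds on the 4070 Ti and 4.2 seconds on the 4090. Generation dominates the total on both cards, so the generation table is the one to watch for chat-style workloads.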

Strengths and Weaknesses: Choosing the Right GPU for your Needs

Now that we've analyzed the data, let's break down the real-world impact of these findings for different use cases.

NVIDIA 4070 Ti 12GB

Strengths:

- Competitive price: The 4070 Ti 12GB offers a more affordable option compared to the 4090, making it a good choice for budget-conscious users or those just starting out in LLM development.

- Reasonable performance: While not as powerful as the 4090, it can still handle a range of tasks, especially with smaller LLMs, and provides a good balance between performance and cost.

Weaknesses:

- Limited memory: The 12GB of memory can quickly become constrained with larger LLM models or complex tasks that require significant memory resources. You might encounter memory issues or need to reduce the model size.

- Not as future-proof: As LLM models become more advanced and demand more resources, the 4070 Ti 12GB may struggle to keep up with future requirements.
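The memory limit above can be sanity-checked with a back-of-the-envelope estimate: weight storage is roughly the parameter count times the bits per weight, divided by 8. In this sketch, the 4.9 bits per weight for Q4_K_M and the 1.5GB overhead allowance (for context cache and runtime buffers) are our own rough assumptions, and `weights_gb` and `fits` are illustrative helper names.

```python
def weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Excludes context cache, activations, and runtime buffers.
    """
    return params_billions * bits_per_weight / 8


def fits(params_billions: float, bits_per_weight: float,
         vram_gb: float, overhead_gb: float = 1.5) -> bool:
    """True if the weights plus an assumed overhead allowance fit in VRAM."""
    return weights_gb(params_billions, bits_per_weight) + overhead_gb <= vram_gb


# Assumed effective bits per weight: 16 for F16, roughly 4.9 for Q4_K_M.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.9}

for name, bits in FORMATS.items():
    size = weights_gb(8, bits)
    print(f"8B {name}: ~{size:.1f} GB weights, "
          f"fits 12GB: {fits(8, bits, 12)}, fits 24GB: {fits(8, bits, 24)}")
```

By this estimate, an 8B model at F16 needs about 16GB for weights alone, which is one plausible reason the 4070 Ti 12GB has no F16 result in the benchmarks, while the quantized version fits comfortably.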

NVIDIA 4090 24GB

Strengths:

- Top-of-the-line performance: The 4090 24GB is the faster card in every benchmark here, with noticeably quicker token generation and processing. Its 24GB of memory also has room for the 8B model at F16 and for larger quantized models that won't fit in 12GB.

- Future-proof: With 24GB of memory and a powerful architecture, the 4090 24GB is likely to remain a strong choice for years to come, even as LLM models become increasingly sophisticated.

Weaknesses:

- High price tag: The 4090 24GB comes with a premium price, making it a significant investment for many developers.

- Potential overkill: If you're working with smaller LLM models or simpler tasks, the 4090's full potential may go unused, making it unnecessarily expensive for your needs.

Practical Recommendations

The best GPU for you depends on your specific use case and budget. Here's a breakdown:

Choose the NVIDIA 4070 Ti 12GB if:

- You're on a budget.

- You're primarily working with smaller LLM models (e.g., Llama 3 8B).

- You don't require the absolute highest performance for your task.

Choose the NVIDIA 4090 24GB if:

- You need the absolute fastest performance for your LLM applications.

- You're working with larger LLM models (e.g., exceeding 8B) or complex tasks that require significant memory resources.

- You want a GPU that can handle the demands of future LLM advancements.

Quantization Explained: Making LLMs More Accessible

Quantization is a technique used to reduce the size of LLM models without sacrificing too much accuracy. It's like compressing a large file to save space without losing all the important details. In the context of LLMs, quantization essentially converts the numbers representing the model's parameters (the weights and biases) from higher-precision formats (like 32-bit floating-point) to lower-precision formats (like 8-bit integers). This allows you to run larger models on devices with less memory, including those that don't have powerful GPUs.

Think of it like this: You have a massive encyclopedia that you want to fit on a smaller shelf. Quantization is like taking those thick encyclopedia volumes and summarizing the key information in concise, smaller booklets. You lose some details, but you can still access essential knowledge and save precious shelf space.
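To make the idea concrete, here is a minimal sketch of the simplest form of quantization: storing each weight as an 8-bit integer plus one shared scale factor, then converting back. This is a simplified illustration, not the Q4_K_M scheme used in the benchmarks, and the sample weights are made up.

```python
def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole list; fall back to 1.0 for all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 0.0, 0.49]  # made-up example values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print(f"scale: {scale:.6f}, max round-trip error: {max_error:.6f}")
```

Each restored value is off by at most half the scale factor. That small rounding error is the "lost detail" from the encyclopedia analogy, traded for a model that takes a fraction of the memory.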

FAQ: Frequently Asked Questions

What are LLMs?

LLM stands for Large Language Model. These are powerful AI models trained on massive datasets of text and code, enabling them to generate human-like text, translate languages, write creative content, and answer questions informatively, even when those questions are open-ended, challenging, or strange.

What is token speed generation?

Token speed generation refers to how quickly a GPU can generate text, measured in tokens per second (tokens/sec). It's essentially how fast an LLM can "type" out words or sentences. Token processing speed is measured the same way, but covers reading the input prompt rather than writing the reply.

What is quantization?

Quantization is a technique used to reduce the size of LLM models by converting the model's parameters from higher-precision formats to lower-precision formats. This allows you to run larger models on devices with less memory.

What are Q4 K_M and F16 in the table?

Q4_K_M refers to a 4-bit quantization format from the llama.cpp "k-quant" family. The "Q4" means most weights are stored with about 4 bits each, the "K" marks the k-quant method (weights are grouped into small blocks that each carry their own scale), and the "M" stands for the medium variant, which keeps some of the more sensitive tensors at higher precision. This allows you to run models with significantly less memory. F16 stands for "half-precision floating-point format". This format uses 16 bits to represent each number, which is half the size of the standard 32-bit floating-point format. It keeps far more precision than a 4-bit format but needs roughly three to four times the memory, which is why F16 generation is slower than Q4_K_M generation on the 4090.

Is the NVIDIA 4090 24GB always better?

No, the 4090 24GB might be overkill for some use cases, especially if you're primarily working with smaller models and your budget is limited. In those cases, the 4070 Ti 12GB might be a more suitable choice.

Can I use my CPU for LLM development?

Yes, you can, but a GPU is significantly faster for LLMs. Think of it like trying to bake a cake with a hand mixer versus a professional kitchen mixer. It's possible to bake a good cake with a hand mixer, but the process will be much slower and less efficient.

Keywords

LLM, Large Language Model, NVIDIA, 4070 Ti, 4090, GPU, Token Speed Generation, Benchmark, Performance, Quantization, Q4_K_M, F16, Half-Precision Floating-Point, AI Development, Deep Learning, Natural Language Processing, Tokenization, Inference, Processing, Llama 3, 8B