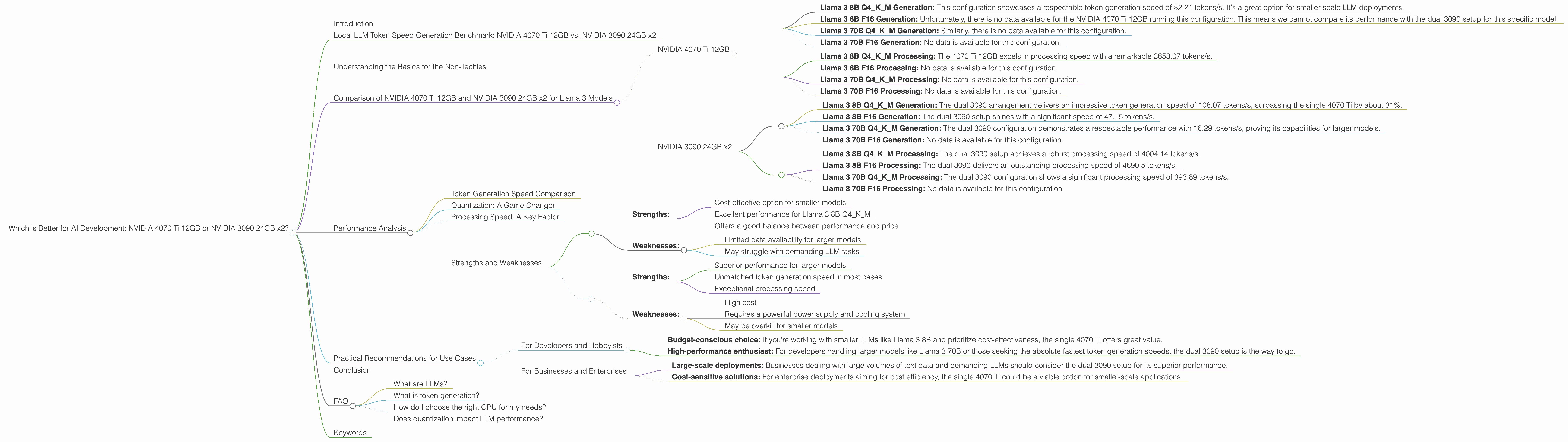

Which is Better for AI Development: NVIDIA 4070 Ti 12GB or NVIDIA 3090 24GB x2? Local LLM Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. From generating creative content and answering complex questions to translating languages and writing code, LLMs are revolutionizing the way we interact with technology. But the power behind these applications relies heavily on hardware. Whether you're a seasoned developer or a curious tinkerer, choosing the right GPU for running LLMs locally is crucial. This article compares two popular GPU configurations head to head: a single NVIDIA 4070 Ti 12GB and a pair of NVIDIA 3090 24GB cards, focusing on how fast each generates and processes tokens with Llama 3 models.

Local LLM Token Generation Speed Benchmark: NVIDIA 4070 Ti 12GB vs. NVIDIA 3090 24GB x2

Imagine LLMs as a super-powered brain, churning out words, ideas, and code at an impressive rate. To feed this brain and keep it humming, you need a powerful engine, and that's where GPUs come into play. In this benchmark, we'll compare the NVIDIA 4070 Ti 12GB and two NVIDIA 3090 24GB cards in terms of their token generation speed for Llama 3 8B and 70B, both quantized (Q4KM) and unquantized (F16). Basically, we're measuring how fast each GPU can spit out words when running various versions of the Llama 3 model.

Understanding the Basics for the Non-Techies

Think of token generation as a super-fast typist. The more tokens per second (tokens/s) a GPU can churn out, the faster your LLM can generate text, translate languages, or write code. This is a crucial metric for developers who need real-time language processing capabilities.
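To make tokens/s concrete, the short sketch below converts a generation speed into the time needed to produce a response. It uses the Llama 3 8B Q4KM generation speeds reported later in this article; the 500-token response length is a hypothetical example, and the calculation ignores the time spent processing the prompt.

```python
# Measured Llama 3 8B Q4KM generation speeds from this benchmark (tokens/s).
GENERATION_TPS = {
    "RTX 4070 Ti 12GB": 82.21,
    "RTX 3090 24GB x2": 108.07,
}


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady generation rate (prompt processing ignored)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    response_tokens = 500  # hypothetical response length
    for gpu, tps in GENERATION_TPS.items():
        secs = seconds_to_generate(response_tokens, tps)
        print(f"{gpu}: {secs:.1f} s for {response_tokens} tokens")
```

At these speeds a 500-token answer takes roughly 6.1 seconds on the 4070 Ti and 4.6 seconds on the dual 3090 setup.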

Comparison of NVIDIA 4070 Ti 12GB and NVIDIA 3090 24GB x2 for Llama 3 Models

NVIDIA 4070 Ti 12GB

The NVIDIA 4070 Ti 12GB is a single GPU card that packs a punch. It's a popular choice for gamers and creators, but its performance in AI development deserves a closer look. Let's analyze its performance on various Llama 3 models:

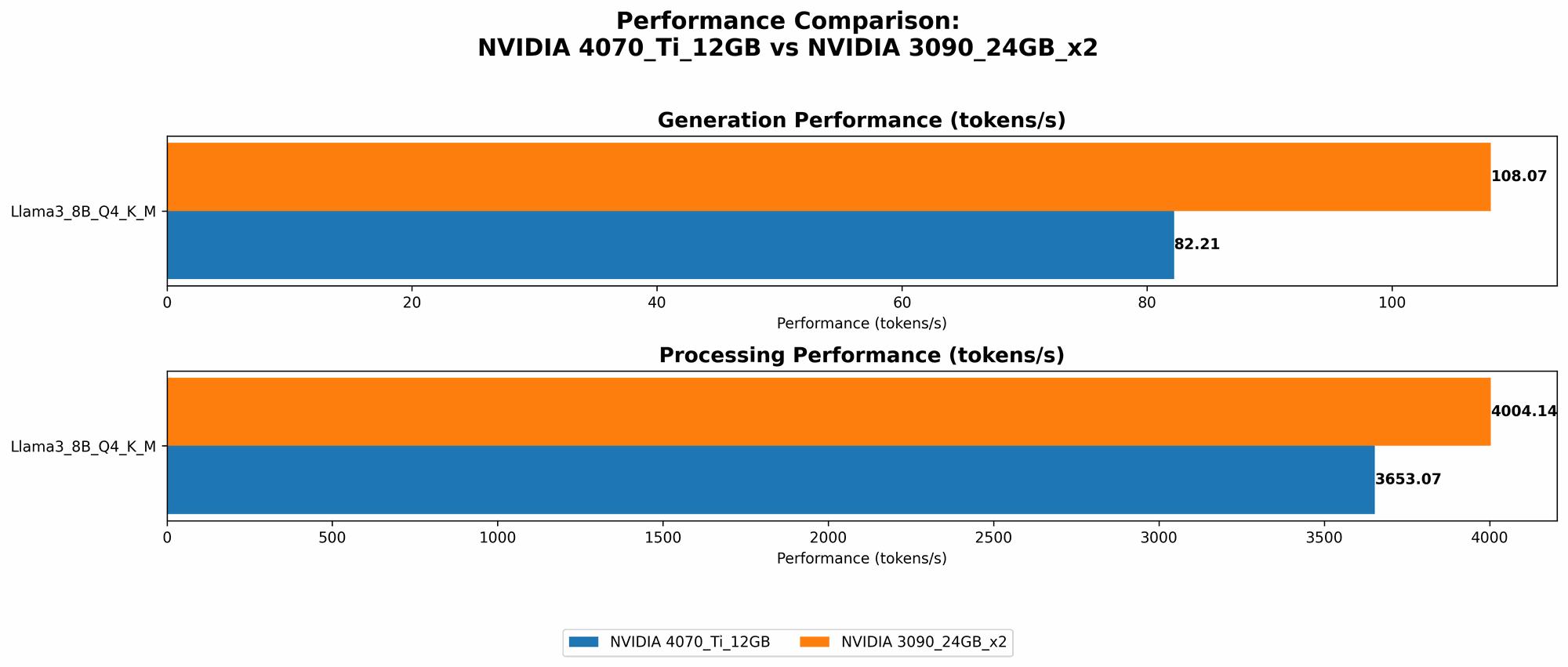

- Llama 3 8B Q4KM Generation: This configuration showcases a respectable token generation speed of 82.21 tokens/s. It's a great option for smaller-scale LLM deployments.

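- Llama 3 8B F16 Generation: No data is available for the NVIDIA 4070 Ti 12GB in this configuration, most likely because the unquantized F16 weights alone take about 16 GB (8 billion parameters × 2 bytes), more than the card's 12 GB of VRAM. As a result, we cannot compare it with the dual 3090 setup for this model.

- Llama 3 70B Q4KM Generation: No data is available for this configuration either; even quantized to 4 bits, a 70B model needs roughly 40 GB for its weights, far more than 12 GB.

- Llama 3 70B F16 Generation: No data is available for this configuration (the F16 weights alone would need about 140 GB).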

Processing Speed:

- Llama 3 8B Q4KM Processing: The 4070 Ti 12GB reaches a strong processing speed of 3653.07 tokens/s.

- Llama 3 8B F16 Processing: No data is available for this configuration.

- Llama 3 70B Q4KM Processing: No data is available for this configuration.

- Llama 3 70B F16 Processing: No data is available for this configuration.

NVIDIA 3090 24GB x2

The NVIDIA 3090 24GB is a beast of a GPU, and having two of them working in tandem creates a truly powerful computing setup. Let's see how this configuration performs:

- Llama 3 8B Q4KM Generation: The dual 3090 arrangement delivers an impressive token generation speed of 108.07 tokens/s, surpassing the single 4070 Ti by about 31%.

- Llama 3 8B F16 Generation: The dual 3090 setup runs the unquantized model at 47.15 tokens/s, less than half its Q4KM speed, since the F16 weights are several times larger.

- Llama 3 70B Q4KM Generation: The dual 3090 configuration runs this model at 16.29 tokens/s. Even quantized, a 70B model is far too large for a single 12 GB card, so this is where the pair's 48 GB of VRAM pays off.

- Llama 3 70B F16 Generation: No data is available for this configuration, most likely because the F16 weights (roughly 140 GB) exceed the pair's combined 48 GB of VRAM.
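The generation results above are consistent with a common rule of thumb: to produce each new token, the GPU must read essentially all of the model's weights from VRAM, so memory bandwidth divided by model size gives a rough ceiling on tokens/s. The sketch below assumes NVIDIA's published bandwidth figures (about 504 GB/s for the 4070 Ti and 936 GB/s for one 3090) and approximate Q4KM file sizes of 4.9 GB for Llama 3 8B and 42.5 GB for Llama 3 70B; none of these figures come from the benchmark itself. When layers are split across two 3090s, the cards take turns on each token, so the ceiling is set by one card's bandwidth rather than the sum.

```python
# Rough "memory-bandwidth ceiling" for token generation.
# All constants below are approximations and assumptions, not benchmark data.
BANDWIDTH_GBPS = {"RTX 4070 Ti": 504.0, "RTX 3090": 936.0}  # published spec bandwidth
MODEL_SIZE_GB = {"Llama 3 8B Q4KM": 4.9, "Llama 3 70B Q4KM": 42.5}  # approx. file sizes

# Measured generation speeds from this benchmark (tokens/s).
# The 3090 rows were measured on the dual-3090 setup.
MEASURED_TPS = {
    ("RTX 4070 Ti", "Llama 3 8B Q4KM"): 82.21,
    ("RTX 3090", "Llama 3 8B Q4KM"): 108.07,
    ("RTX 3090", "Llama 3 70B Q4KM"): 16.29,
}


def generation_ceiling(bandwidth_gbps: float, model_size_gb: float) -> float:
    """Upper bound on tokens/s if every generated token reads all weights once.

    With layers split across identical GPUs, the cards work one after another
    on each token, so the bound uses a single card's bandwidth.
    """
    return bandwidth_gbps / model_size_gb


if __name__ == "__main__":
    for (gpu, model), tps in MEASURED_TPS.items():
        bound = generation_ceiling(BANDWIDTH_GBPS[gpu], MODEL_SIZE_GB[model])
        print(f"{gpu} / {model}: ceiling ~{bound:.0f} tok/s, measured {tps} tok/s")
```

Every measured speed lands below its estimated ceiling (about 103, 191, and 22 tokens/s respectively), as expected once compute and synchronization overheads are included. The higher bandwidth of the 3090 helps explain its lead in generation, even though the measured gap is smaller than the bandwidth gap.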

Processing Speed:

- Llama 3 8B Q4KM Processing: The dual 3090 setup achieves a robust processing speed of 4004.14 tokens/s.

- Llama 3 8B F16 Processing: The dual 3090 delivers an outstanding processing speed of 4690.5 tokens/s, even higher than its Q4KM result.

- Llama 3 70B Q4KM Processing: The dual 3090 configuration shows a significant processing speed of 393.89 tokens/s.

- Llama 3 70B F16 Processing: No data is available for this configuration.

Performance Analysis

Token Generation Speed Comparison

Llama 3 8B Q4KM is the only configuration with results for both setups. There the dual 3090 configuration generates 108.07 tokens/s versus 82.21 tokens/s for the 4070 Ti, about 31% faster. For every other configuration only the dual 3090 setup has results, so its main advantage there is capacity: with 48 GB of combined VRAM it can run models that do not fit on a 12 GB card. Even so, the single 4070 Ti's 82 tokens/s on Llama 3 8B Q4KM remains quick for interactive use, which makes it a reasonable balance between performance and cost for smaller models.
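The 31% generation gap follows directly from the measured numbers. The sketch below repeats that calculation for both metrics on Llama 3 8B Q4KM, the only configuration both setups ran, and shows that the prompt processing gap is much smaller.

```python
# Results for the only configuration both setups ran (Llama 3 8B Q4KM), tokens/s.
RESULTS = {
    "generation": {"RTX 4070 Ti 12GB": 82.21, "RTX 3090 24GB x2": 108.07},
    "processing": {"RTX 4070 Ti 12GB": 3653.07, "RTX 3090 24GB x2": 4004.14},
}


def percent_faster(fast: float, slow: float) -> float:
    """How much faster `fast` is than `slow`, as a percentage."""
    return (fast / slow - 1.0) * 100.0


if __name__ == "__main__":
    for metric, speeds in RESULTS.items():
        gain = percent_faster(speeds["RTX 3090 24GB x2"], speeds["RTX 4070 Ti 12GB"])
        print(f"{metric}: dual 3090 is {gain:.1f}% faster")
```

This prints roughly 31.5% for generation and 9.6% for processing, so the second card pair buys much more in generation speed than in prompt processing on this model.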

Quantization: A Game Changer

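Quantization is like a diet for your LLM. It stores the model's weights at lower precision (Q4KM, the Q4_K_M format used by llama.cpp, averages roughly 4 to 5 bits per weight, versus 16 bits for F16), which shrinks the model, reduces memory requirements, and speeds up generation. The dual 3090 setup is the only place where both formats were measured for Llama 3 8B, and there Q4KM generates tokens about 2.3 times faster than F16 (108.07 vs. 47.15 tokens/s). Interestingly, F16 is faster at prompt processing on the same hardware (4690.5 vs. 4004.14 tokens/s), so in this data quantization pays off mainly during generation. No F16 results exist for the 4070 Ti, so the comparison cannot be made there. Keep in mind that Q4KM can slightly reduce the quality of the generated text, but the speed and memory savings are often worth the trade-off.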
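A quick way to see why quantization matters, and why several cells in this benchmark are empty, is to estimate how much memory the weights alone need: parameters × bits per weight ÷ 8. The sketch below assumes an average of about 4.85 bits per weight for Q4KM (an approximation; the exact figure varies by model), uses nominal parameter counts of 8 and 70 billion, and ignores the KV cache and runtime buffers, so real memory use is somewhat higher.

```python
# Rough weight-memory estimate (weights only; KV cache and buffers not included).
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}  # Q4KM value is an approximate average
VRAM_GB = {"RTX 4070 Ti 12GB": 12, "RTX 3090 24GB x2": 48}


def weight_gb(params_billions: float, fmt: str) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * BITS_PER_WEIGHT[fmt] / 8


def fits(size_gb: float, vram_gb: float) -> bool:
    """Simplistic check: weights must be smaller than VRAM (real runs need extra headroom)."""
    return size_gb < vram_gb


if __name__ == "__main__":
    for params in (8, 70):
        for fmt in ("Q4KM", "F16"):
            size = weight_gb(params, fmt)
            ok = [gpu for gpu, vram in VRAM_GB.items() if fits(size, vram)]
            print(f"Llama 3 {params}B {fmt}: ~{size:.1f} GB, fits on: {ok or 'neither'}")
```

Under these assumptions the 8B F16 and 70B Q4KM models fit only on the dual 3090 setup, and 70B F16 fits on neither, which matches the pattern of missing results in this benchmark.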

Processing Speed: A Key Factor

Processing speed is another crucial factor. It measures how quickly the GPU works through the input prompt before it starts generating, which determines how long you wait for the first output token when prompts are long. The dual 3090 setup is faster here too, but by a smaller margin: 4004.14 vs. 3653.07 tokens/s on Llama 3 8B Q4KM, about 10%.
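Both metrics contribute to the latency of a single request: the prompt is processed first, then the response is generated token by token. The sketch below is a simplified latency model that assumes each phase runs at its measured Llama 3 8B Q4KM average speed and ignores model loading and other overheads; the 2,000-token prompt and 300-token reply are hypothetical sizes. In practice, speeds can drift as the context grows.

```python
from dataclasses import dataclass


@dataclass
class Speeds:
    processing_tps: float  # prompt processing, tokens/s
    generation_tps: float  # token generation, tokens/s


# Measured Llama 3 8B Q4KM results from this benchmark.
SETUPS = {
    "RTX 4070 Ti 12GB": Speeds(3653.07, 82.21),
    "RTX 3090 24GB x2": Speeds(4004.14, 108.07),
}


def request_latency(prompt_tokens: int, output_tokens: int, s: Speeds) -> tuple[float, float]:
    """Return (prompt seconds, generation seconds), assuming constant speeds."""
    return prompt_tokens / s.processing_tps, output_tokens / s.generation_tps


if __name__ == "__main__":
    for name, speeds in SETUPS.items():
        prefill, gen = request_latency(2000, 300, speeds)
        print(f"{name}: prompt {prefill:.2f} s + generation {gen:.2f} s = {prefill + gen:.2f} s")
```

With these inputs, generation accounts for most of the wait on both setups (about 4.2 s total on the 4070 Ti versus 3.3 s on the dual 3090s), which is why the generation gap matters more than the processing gap for short prompts.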

Strengths and Weaknesses

NVIDIA 4070 Ti 12GB

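- Strengths:
  - Cost-effective option for smaller models
  - Solid performance on Llama 3 8B Q4KM (82.21 tokens/s generation, 3653.07 tokens/s processing)
  - Offers a good balance between performance and price
- Weaknesses:
  - Only 12 GB of VRAM: no results for Llama 3 70B or the unquantized 8B model in this benchmark
  - About 31% slower generation than the dual 3090 setup on Llama 3 8B Q4KM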

NVIDIA 3090 24GB x2

- Strengths:
  - 48 GB of combined VRAM runs larger models such as Llama 3 70B Q4KM (16.29 tokens/s)
  - Faster token generation (about 31% ahead of the 4070 Ti on Llama 3 8B Q4KM)
  - Higher processing speed (4004.14 vs. 3653.07 tokens/s on Llama 3 8B Q4KM)
- Weaknesses:
  - High cost
  - Requires a powerful power supply and cooling system
  - May be overkill for smaller models

Practical Recommendations for Use Cases

For Developers and Hobbyists

- Budget-conscious choice: If you're working with smaller LLMs like Llama 3 8B and prioritize cost-effectiveness, the single 4070 Ti offers great value.

- High-performance enthusiast: For developers handling larger models like Llama 3 70B or those seeking the absolute fastest token generation speeds, the dual 3090 setup is the way to go.

For Businesses and Enterprises

- Large-scale deployments: Businesses dealing with large volumes of text data and demanding LLMs should consider the dual 3090 setup for its superior performance.

- Cost-sensitive solutions: For enterprise deployments aiming for cost efficiency, the single 4070 Ti could be a viable option for smaller-scale applications.

Conclusion

Choosing the right GPU for running LLMs locally is a critical decision that depends on your specific needs and budget. The NVIDIA 4070 Ti 12GB provides good value for smaller models and budget-conscious users, while the dual NVIDIA 3090 24GB configuration provides the memory capacity and speed that larger LLMs and demanding tasks require. By carefully analyzing the performance data and understanding the strengths and weaknesses of each option, you can make an informed decision that aligns with your AI development goals.

FAQ

What are LLMs?

Large Language Models (LLMs) are powerful AI systems trained on massive datasets of text and code. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is token generation?

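Token generation is the process by which an LLM produces its output one token at a time, predicting each new token from the prompt and the tokens generated so far. Tokens are the units that text is split into before the model sees it (a step called tokenization); they can be whole words, punctuation marks, or sub-word pieces. Token generation speed, measured in tokens per second, is the main metric in this benchmark.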

How do I choose the right GPU for my needs?

Start with memory: the model's weights must fit in the GPU's VRAM (12 GB on the 4070 Ti, 48 GB combined on two 3090s). Then weigh your budget and the performance requirements of your use case. For smaller models and budget-conscious users, the NVIDIA 4070 Ti 12GB is a solid choice. For demanding tasks and larger models, the dual NVIDIA 3090 24GB configuration offers much more headroom but comes at a higher cost.

Does quantization impact LLM performance?

Quantization, a technique for reducing the size of your LLM, can significantly improve performance by reducing memory requirements. While it may slightly reduce the quality of the generated text, the performance gain is often worth the trade-off.
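To see where the size savings and the small quality loss come from, here is a deliberately simplified block-wise 4-bit quantizer. It is not the Q4KM (Q4_K_M) format, which uses a more elaborate layout; it only illustrates the core idea of storing each weight as a small integer plus a shared per-block scale. The block size of 32 and symmetric rounding are assumptions chosen for clarity.

```python
import random


def quantize_block(values: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric quantization of one block to integers in [-(2**(bits-1) - 1), 2**(bits-1) - 1]."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0  # one shared scale per block
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate weights from the integers and the block scale."""
    return [scale * x for x in q]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(32)]  # one block of fake weights
    scale, q = quantize_block(weights)
    restored = dequantize_block(scale, q)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    # 32 weights: 32 * 16 bits in F16 vs. 32 * 4 bits plus one 16-bit scale here.
    print(f"F16 bits: {32 * 16}, 4-bit bits: {32 * 4 + 16}")
    print(f"max abs error: {max_err:.5f} (at most half the scale {scale:.5f})")
```

In this toy scheme the block shrinks from 512 to 144 bits (4.5 bits per weight), and each restored weight is off by at most half the block's scale: that rounding error is the source of the slight quality loss.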

Keywords

LLM, Large Language Model, NVIDIA, 4070 Ti, 3090, GPU, Token Generation Speed, Quantization, Processing Speed, AI Development, Llama 3, Benchmark, Performance Comparison, F16, Q4KM, Cost-Effective, High-Performance, Practical Recommendations, Use Cases, FAQ, Developers, Hobbyists, Businesses, Enterprises