Which is Better for AI Development: NVIDIA 3090 24GB x2 or NVIDIA RTX 6000 Ada 48GB? Local LLM Token Speed Generation Benchmark

Introduction

You've got your hands on an awesome LLM, but you're struggling to get it to generate text fast enough. You're looking for a powerful GPU to speed things up, but with so many options out there, it's hard to decide which one's right for you. Should you go with the tried-and-true NVIDIA 3090 24GB x2, or is the newer NVIDIA RTX 6000 Ada 48GB the better pick?

This article dives into the performance of these two popular GPUs when it comes to running large language models (LLMs) locally. We'll compare their token generation speeds, explore their strengths and weaknesses, and help you determine which one is the best fit for your AI development needs. Think of this as a one-stop shop for all your GPU-related LLM dilemmas – get ready to unleash your AI's true potential!

Comparing NVIDIA 3090 24GB x2 and NVIDIA RTX 6000 Ada 48GB for Local LLM Token Speed Generation

Performance Analysis

To make an informed decision, let's take a look at the raw performance numbers. We'll use data gathered from various benchmarks of token generation speed for Llama 3 models on both the 3090 24GB x2 and the RTX 6000 Ada 48GB. Note that data is available only for certain model sizes and quantization levels.

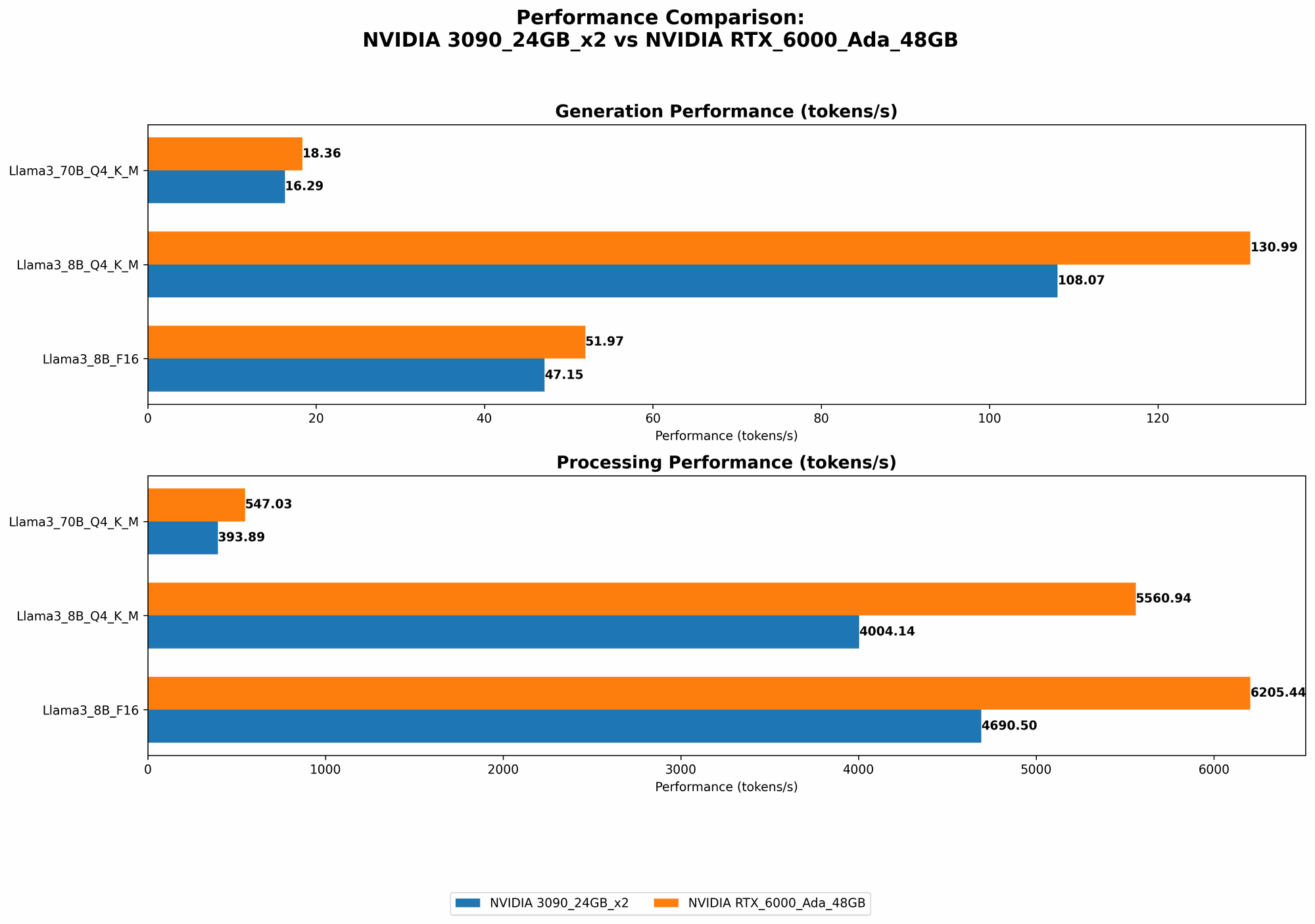

Token Generation Speed: A Tale of Two Titans

The numbers tell a story! In every configuration tested, the RTX 6000 Ada 48GB generates tokens faster than the 3090 24GB x2, for both the Llama 3 8B and 70B models.

| Model | Quantization | NVIDIA 3090 24GB x2 (tokens/s) | NVIDIA RTX 6000 Ada 48GB (tokens/s) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 108.07 | 130.99 |
| Llama 3 8B | F16 | 47.15 | 51.97 |
| Llama 3 70B | Q4_K_M | 16.29 | 18.36 |

- Llama 3 8B: The RTX 6000 Ada 48GB generates tokens 21% faster than the 3090 24GB x2 with Q4_K_M quantization, and 10% faster at F16 (unquantized half precision).

- Llama 3 70B: The RTX 6000 Ada 48GB is 13% faster at token generation than the 3090 24GB x2 with Q4_K_M quantization.
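The percentages above follow directly from the table. As a minimal sketch, the snippet below recomputes them; the dictionary layout and the `speedup_percent` helper are illustrative names, not part of any benchmark tool.

```python
# Tokens/s figures copied from the table above: (3090 24GB x2, RTX 6000 Ada 48GB).
generation_tps = {
    ("Llama 3 8B", "Q4_K_M"): (108.07, 130.99),
    ("Llama 3 8B", "F16"): (47.15, 51.97),
    ("Llama 3 70B", "Q4_K_M"): (16.29, 18.36),
}


def speedup_percent(baseline: float, candidate: float) -> float:
    """Return how much faster `candidate` is than `baseline`, in percent."""
    return (candidate / baseline - 1.0) * 100.0


speedups = {key: speedup_percent(*pair) for key, pair in generation_tps.items()}

for (model, fmt), pct in speedups.items():
    print(f"{model} {fmt}: RTX 6000 Ada 48GB is {pct:.0f}% faster")
```

The same helper works for the processing-speed figures if you swap in those numbers.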

Processing Speed: A Closer Look

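Let's examine the processing speed for both GPUs. This metric measures how fast the GPU works through input tokens, such as the prompt and its context, before it starts generating new ones. Input tokens can be handled in parallel, which is why these figures are far higher than the generation speeds.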

| Model | Quantization | NVIDIA 3090 24GB x2 (tokens/s) | NVIDIA RTX 6000 Ada 48GB (tokens/s) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 4004.14 | 5560.94 |
| Llama 3 8B | F16 | 4690.5 | 6205.44 |
| Llama 3 70B | Q4_K_M | 393.89 | 547.03 |

- Llama 3 8B: The RTX 6000 Ada 48GB boasts a 39% advantage in processing speed over the 3090 24GB x2 with Q4_K_M quantization, and a 32% advantage at F16.

- Llama 3 70B: The RTX 6000 Ada 48GB exhibits a 39% increase in processing speed compared to the 3090 24GB x2 with Q4_K_M quantization.
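To see how processing and generation speed combine, here is a rough sketch that estimates the time for one request on Llama 3 70B Q4_K_M. It assumes the processing figure applies to the prompt tokens and the generation figure to the output tokens, and it ignores model loading and other overhead. The 2,000-token prompt and 500-token response are hypothetical values chosen for illustration.

```python
def response_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate request time: prompt processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload (an assumption, not benchmark data).
PROMPT_TOKENS = 2000
OUTPUT_TOKENS = 500

# Speeds for Llama 3 70B Q4_K_M, taken from the two tables in this article.
dual_3090 = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 393.89, 16.29)
rtx_6000_ada = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 547.03, 18.36)

print(f"3090 24GB x2:      {dual_3090:.1f} s")
print(f"RTX 6000 Ada 48GB: {rtx_6000_ada:.1f} s")
```

With a workload like this, generation time dominates on both setups, so the 13% generation gap matters more than the 39% processing gap. Prompt-heavy workloads shift the balance the other way.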

Which GPU Reigns Supreme?

The RTX 6000 Ada 48GB comes out ahead in both token generation and processing speed for every Llama 3 configuration tested, by 10% to 21% in generation and 32% to 39% in processing. It's a testament to the Ada architecture's strength in LLM workloads.

Strengths and Weaknesses: Understanding the Battleground

NVIDIA 3090 24GB x2: The Reliable Veteran

Strengths:

- Price: The 3090 24GB x2 is a more budget-friendly option than the RTX 6000 Ada 48GB, even though it requires two GPUs.

- Availability: Generally, 3090 cards are more readily available than the newer RTX 6000 Ada.

- Solid Performance: It remains a solid choice for a wide range of AI tasks, especially when working with smaller LLMs.

Weaknesses:

- Lower Performance: The 3090 24GB x2 falls behind the RTX 6000 Ada 48GB in both token generation and processing speed, with the widest gap (up to 39%) in processing.

- Power Consumption: Two RTX 3090 cards are rated at 350 W each, or about 700 W combined, compared with 300 W for a single RTX 6000 Ada, so plan for a larger power supply and more cooling.

NVIDIA RTX 6000 Ada 48GB: The Upgraded Challenger

Strengths:

- Superior Performance: The RTX 6000 Ada 48GB delivers 10% to 21% faster token generation and 32% to 39% faster processing in these tests, making it well suited to demanding LLM applications.

- Single 48GB Memory Pool: Its 48GB of GDDR6 memory sits on one card, so a large model loads without being split across GPUs. The 3090 24GB x2 also totals 48GB, but as two separate 24GB pools.

- Improved Efficiency: The Ada architecture is known for its efficiency, which means lower power consumption compared to previous generations.

Weaknesses:

- Price: The RTX 6000 Ada 48GB comes at a premium price compared to the 3090 24GB x2, which can be a significant factor for budget-conscious developers.

- Availability: The RTX 6000 Ada 48GB might be harder to find at certain times due to its popularity and demand.

Practical Recommendations: Choosing the Right Weapon for Your AI Journey

So, which GPU should you choose? The answer depends on your specific needs and priorities:

- Budget-Focused: If you're on a tighter budget and prefer a more affordable option, the 3090 24GB x2 could be a good choice, especially for working with smaller LLMs or for tasks that don't require the highest performance.

- Performance-Driven: For those who demand the best performance and are willing to invest in a top-of-the-line solution, the RTX 6000 Ada 48GB is the clear winner. It's ideal for handling large LLMs and tackling complex AI projects.

- Memory-Intensive Workloads: Both setups offer 48GB in total, but the RTX 6000 Ada 48GB provides it as a single pool. On the 3090 24GB x2, a large model has to be split across the two cards, and any workload that does not divide cleanly can run into the 24GB per-card limit.

Conclusion

Choosing the right GPU for your LLM development is crucial for unlocking your model's full potential. While the 3090 24GB x2 remains a solid choice with its affordability and availability, the RTX 6000 Ada 48GB is the faster option in every test here and offers its 48GB on a single card.

Remember: the best GPU for you depends on your specific needs, budget, and project requirements. Do your research, consider your priorities, and make the decision that best suits your AI development journey!

FAQ: Unlocking Your AI Knowledge

What is quantization, and how does it affect performance?

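Quantization is a technique used to reduce the size of a model by storing its weights (and sometimes its activations) using a smaller number of bits. A smaller model needs less memory and less data moved per generated token, which usually means faster generation: in the tables above, Llama 3 8B generates tokens more than twice as fast at Q4_K_M as at F16 on both setups. Think of it like shrinking a giant book down to a pocket-sized version without losing too much of the original information.

What do F16 and Q4_K_M mean?

F16 is not quantization in the strict sense: it stores each weight as a 16-bit floating-point number and serves as the unquantized baseline in these benchmarks. Q4_K_M is a 4-bit quantization format that stores weights at a little over 4 bits each on average, which makes the model roughly three to four times smaller than F16. F16 preserves the model's original quality at the cost of size and speed, while Q4_K_M trades a small amount of quality for much lower memory use and faster generation.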
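As a back-of-the-envelope sketch, the snippet below estimates how much memory the weights alone need at each precision. The 4.8 bits per weight used for Q4_K_M is an assumed rough average, the parameter counts are the nominal 8 and 70 billion, and the estimate leaves out the context cache and runtime overhead, so real usage is higher.

```python
# Assumed bits per weight. F16 is exactly 16; 4.8 for Q4_K_M is a rough
# average chosen for illustration, not a value from the benchmark data.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}

TOTAL_MEMORY_GB = 48  # both setups in this article total 48GB


def weight_gigabytes(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8.0


for params in (8, 70):
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_gigabytes(params, bits)
        verdict = "fits" if size < TOTAL_MEMORY_GB else "does not fit"
        print(f"Llama 3 {params}B {fmt}: ~{size:.0f} GB of weights, {verdict} in 48GB")
```

Under these assumptions, the 70B model at F16 needs about 140 GB for weights alone, far more than 48GB, which is one plausible reason the tables have no F16 row for 70B.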

Why is token generation speed important?

Token speed refers to how fast a GPU can generate tokens, which are the fundamental units of language in an LLM. The faster a GPU can generate tokens, the quicker your LLM can produce text, making it more responsive and efficient for tasks like text generation, translation, or question answering.
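If you want to measure token speed on your own setup, the idea is simply to count tokens and divide by elapsed time. The sketch below shows that with a stand-in generator; `fake_generate` is a hypothetical placeholder that you would replace with a call into whatever inference library you use.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Run `generate`, count the tokens it yields, and return tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a real model: yields 50 tokens with a small delay."""
    for i in range(50):
        time.sleep(0.001)  # simulate per-token compute time
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/s")
```

For a fair comparison between GPUs, keep the model, quantization, prompt, and output length identical across runs, and average over several runs.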

What is the difference between "generation" and "processing" performance?

"Generation" performance is the speed at which the GPU produces new output tokens, one after another. This is the speed at which the LLM writes text. "Processing" performance is the speed at which the GPU reads the input tokens (the prompt and any prior context) before generation begins. Input tokens can be processed in parallel, so processing speeds are much higher than generation speeds. Processing speed matters most for long prompts, while generation speed matters most for long responses.

What other factors should I consider besides GPU performance?

Besides GPU performance, you should also consider factors like:

- Power consumption: How much energy does each GPU consume? High power consumption can affect your electricity bill and even require a more powerful power supply.

- Cooling: Will the GPU require a powerful cooling solution? This is especially important if you're working with high-performance GPUs that generate a lot of heat.

- Software compatibility: Ensure that the GPU is compatible with your operating system and software tools.

- Driver support: Make sure that there are up-to-date drivers available for the GPU.
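The power consumption point can be made more concrete with a rough energy-per-token estimate. The wattages below are assumed board power ratings (350 W per RTX 3090, 300 W for the RTX 6000 Ada), not measured draw during inference, so treat the result as an upper-bound sketch rather than a benchmark.

```python
# Assumed board power ratings in watts (published ratings, not measured draw).
RATED_WATTS = {"3090 24GB x2": 2 * 350, "RTX 6000 Ada 48GB": 300}

# Generation speeds (tokens/s) for Llama 3 70B Q4_K_M, from this article's table.
GENERATION_TPS = {"3090 24GB x2": 16.29, "RTX 6000 Ada 48GB": 18.36}


def joules_per_token(watts: float, tokens_per_second: float) -> float:
    """Energy per generated token, assuming the GPU draws `watts` continuously."""
    return watts / tokens_per_second


energy = {
    gpu: joules_per_token(RATED_WATTS[gpu], GENERATION_TPS[gpu])
    for gpu in RATED_WATTS
}

for gpu, joules in energy.items():
    print(f"{gpu}: ~{joules:.0f} J per token at rated power")
```

Actual draw during generation is often below the rated figure, so measuring at the wall or with the vendor's monitoring tools gives a more reliable comparison.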
