Which is Better for AI Development: NVIDIA 3090 24GB or NVIDIA RTX 5000 Ada 32GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of Artificial Intelligence is exploding, and one of the hottest areas is Large Language Models (LLMs). These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running LLMs can require a lot of computing power, especially if you want to do it locally on your own computer.

In this article, we compare two graphics cards that are popular for AI development, the NVIDIA 3090 24GB and the NVIDIA RTX 5000 Ada 32GB, for running LLMs locally. We'll see how they stack up on token generation speed, a key metric for LLM performance.

Imagine you're building a chatbot and want it to answer quickly. That's where token generation speed comes in: it measures how many tokens (think of them as words or pieces of words) your GPU can generate per second. The higher the number, the faster your LLM can produce text and respond to your requests.
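A minimal sketch of how tokens per second can be measured: time one generation call and divide the number of tokens produced by the elapsed time. `generate` and `fake_generate` are hypothetical stand-ins for whatever your inference library provides. This simple version also counts prompt processing time, whereas generation benchmarks usually time the generation phase on its own.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[str], List[str]], prompt: str) -> float:
    """Time one call to `generate` and return generated tokens per second."""
    start = time.perf_counter()
    tokens = generate(prompt)  # hypothetical: returns the list of generated tokens
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt: str) -> List[str]:
    """Hypothetical stand-in for a real model: emits 50 tokens, ~1 ms each."""
    out = []
    for i in range(50):
        time.sleep(0.001)  # simulate per-token work
        out.append(f"tok{i}")
    return out


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate, 'Hello'):.1f} tokens/s")
```

With a real model you would swap `fake_generate` for your library's generation call and average over several runs.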

Ready to dig into token generation speed? Let's explore!

Comparison of NVIDIA 3090 24GB and NVIDIA RTX 5000 Ada 32GB

Performance Analysis: Token Generation Speed Benchmark

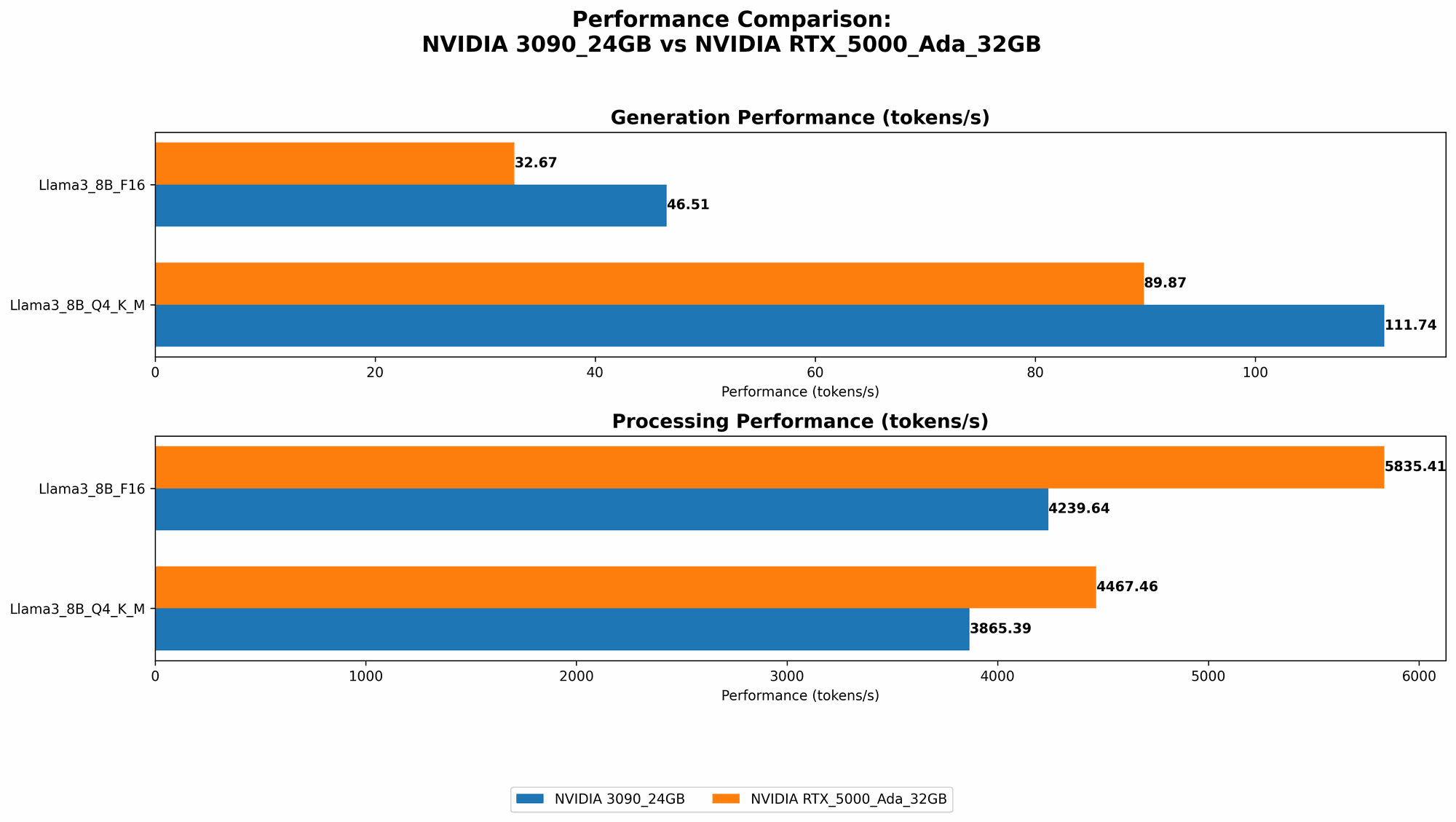

The table below shows the text-generation speed of the two GPUs for several Llama3 configurations, in tokens per second (higher is faster).

| Model & Quantization | NVIDIA 3090 24GB (tokens/s) | NVIDIA RTX 5000 Ada 32GB (tokens/s) |
|---|---|---|
| Llama3 8B Q4_K_M, generation | 111.74 | 89.87 |
| Llama3 8B F16, generation | 46.51 | 32.67 |
| Llama3 70B Q4_K_M, generation | N/A | N/A |
| Llama3 70B F16, generation | N/A | N/A |

Please Note: The data currently available does not include performance for the Llama3 70B models on either GPU. We will update this comparison as new information becomes available.

Key Observations

- The NVIDIA 3090 24GB leads in token generation speed for both Llama3 8B Q4_K_M (111.74 vs 89.87 tokens/s, about 24% faster) and Llama3 8B F16 (46.51 vs 32.67 tokens/s, about 42% faster). If generation speed with these models is your priority, the 3090 is the better choice.

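- Both GPUs generate text much faster with Q4_K_M than with F16: about 2.4x faster on the 3090 and about 2.8x on the RTX 5000 Ada. F16 stores each weight in 16 bits, while Q4_K_M stores most weights in about 4 bits (a little more on average once scaling factors are included), so the quantized model is less than a third the size. Since text generation is largely limited by how fast the GPU can read the model's weights from memory, less data per token means more tokens per second. The trade-off is accuracy: F16 stays closer to the original model, while Q4_K_M is smaller and faster at the cost of a small loss in output quality.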

- The gap between the two cards is noticeable but not dramatic. Weigh it against the specific models you plan to run and your budget when deciding which GPU is right for you.
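A quick way to check the size of these gaps is to compute them directly from the benchmark figures. The sketch below does only that arithmetic; the numbers are copied from the table above and nothing else is assumed.

```python
# Tokens/second figures copied from the benchmark table in this article.
results = {
    "Llama3 8B Q4_K_M": {"3090": 111.74, "RTX 5000 Ada": 89.87},
    "Llama3 8B F16": {"3090": 46.51, "RTX 5000 Ada": 32.67},
}


def speedup(a: float, b: float) -> float:
    """Return how much faster rate a is than rate b, as a percentage."""
    return (a / b - 1.0) * 100.0


# GPU vs GPU for each model/format.
for model, r in results.items():
    print(f"{model}: 3090 is {speedup(r['3090'], r['RTX 5000 Ada']):.0f}% faster")

# Q4_K_M vs F16 on each GPU.
for gpu in ("3090", "RTX 5000 Ada"):
    ratio = results["Llama3 8B Q4_K_M"][gpu] / results["Llama3 8B F16"][gpu]
    print(f"{gpu}: Q4_K_M is {ratio:.1f}x faster than F16")
```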

Practical Recommendations

- For Llama3 8B models, the NVIDIA 3090 24GB is the stronger choice if speed is a top priority.

- If you're working with larger LLMs, the NVIDIA RTX 5000 Ada 32GB might be worth considering, since its extra 8 GB of memory can hold models that don't fit in 24 GB. Note that Llama3 70B exceeds both cards on its own: even at Q4_K_M its weights take roughly 40 GB. More benchmark data is needed to confirm how each card handles larger models.

- Always weigh your needs against the current price and specifications of each GPU. The NVIDIA RTX 5000 Ada 32GB can make sense if you need its extra memory and don't require the absolute maximum token generation speed.
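Whether a model fits in GPU memory can be roughed out with one formula: weight size in bytes ≈ parameters × bits per weight ÷ 8. The sketch below applies it to the Llama3 sizes in the table. The parameter counts are rounded, and the Q4_K_M figure of about 4.85 bits per weight is an assumed average, not a value from this benchmark; KV cache and runtime overhead are ignored.

```python
# Rough check of whether model weights fit in GPU memory.
# Assumptions (not from the benchmark): rounded parameter counts and an
# average of ~4.85 bits per weight for Q4_K_M (scale factors included).
# KV cache, activations, and runtime overhead are ignored, so a model whose
# weights "fit" still needs extra headroom in practice.


def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 10**9 bytes)."""
    return params_billion * bits_per_weight / 8


MODELS = {"Llama3 8B": 8.0, "Llama3 70B": 70.0}  # approx. billions of parameters
FORMATS = {"F16": 16.0, "Q4_K_M": 4.85}  # bits per weight (Q4_K_M value assumed)
VRAM_GB = {"3090 24GB": 24, "RTX 5000 Ada 32GB": 32}  # capacities treated as GB

for model, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weights_gb(params, bits)
        verdicts = ", ".join(
            f"{gpu}: {'weights fit' if size < vram else 'too big'}"
            for gpu, vram in VRAM_GB.items()
        )
        print(f"{model} {fmt}: ~{size:.0f} GB -> {verdicts}")
```

Under these assumptions, Llama3 70B at Q4_K_M needs roughly 42 GB for its weights alone, more than either card offers.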

Understanding Token Generation Speed and Its Significance

Token generation speed is a crucial metric for LLM performance because it directly affects how responsive your models feel. It's like the speed of your internet connection: the faster it is, the quicker you can load websites and stream videos.

Think of tokens as the building blocks of text, just as bricks are the building blocks of a house. The more tokens your GPU can generate per second, the faster your LLM can "build" its responses and interact with you.
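To make tokens per second concrete, the sketch below converts the benchmark rates into the time needed to generate a response. The 300-token response length is an arbitrary assumption, and the calculation ignores prompt processing (the delay before the first token appears).

```python
# Benchmark rates (tokens/s) copied from the table in this article.
RATES = {
    "3090 24GB, 8B Q4_K_M": 111.74,
    "RTX 5000 Ada 32GB, 8B Q4_K_M": 89.87,
    "3090 24GB, 8B F16": 46.51,
    "RTX 5000 Ada 32GB, 8B F16": 32.67,
}

RESPONSE_TOKENS = 300  # hypothetical response length


def seconds_for_response(n_tokens: int, tokens_per_second: float) -> float:
    """Generation time for n_tokens at a steady rate (ignores prompt processing)."""
    return n_tokens / tokens_per_second


for label, rate in RATES.items():
    print(f"{label}: {seconds_for_response(RESPONSE_TOKENS, rate):.1f} s")
```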

Quantization: A Simplified Explanation

Quantization is a technique for reducing the size of LLMs by storing their weights at lower precision (for example, about 4 bits per weight instead of 16) while preserving their accuracy as much as possible. It's like compressing a large file: you reduce the file size, but you might lose some quality.

Think of it as turning a high-resolution image into a lower-resolution one. You're still able to see the image, but it might not be as sharp or detailed. In the same way, quantization can make your LLM faster, but it might slightly impact accuracy.
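To make the idea concrete, here is a toy 4-bit quantizer: it stores one scale per block of weights plus a small integer code for each weight, then multiplies back to recover approximate values. This is a simplified symmetric (absmax) scheme chosen for illustration; llama.cpp's Q4_K_M format uses a more elaborate block layout, so treat this as a sketch of the principle, not of that format. The function names and sample weights are hypothetical.

```python
from typing import List, Tuple


def quantize_block_4bit(weights: List[float]) -> Tuple[float, List[int]]:
    """Symmetric absmax quantization of one block to signed 4-bit codes in [-7, 7]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # one float per block
    codes = [max(-7, min(7, round(w / scale))) for w in weights]  # 4 bits each
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate weights by multiplying codes back by the scale."""
    return [scale * c for c in codes]


weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.47]
scale, q = quantize_block_4bit(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print("4-bit codes:", q)
print(f"max reconstruction error: {max_err:.3f} (scale {scale:.3f})")
```

Each restored weight is off by at most half the block's scale: that rounding error is the "lost sharpness" in the image analogy.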

Conclusion

Choosing the right GPU for running LLMs locally means balancing performance, cost, and your specific needs. In this benchmark, the NVIDIA 3090 24GB generates text faster than the NVIDIA RTX 5000 Ada 32GB with Llama3 8B, in both Q4_K_M and F16. The RTX 5000 Ada offers 8 GB more memory, but how that translates into performance on larger models remains to be measured.

Ultimately, the best GPU for you depends on your use case and budget. By looking at the data and understanding token generation speed and quantization, you can make an informed decision that aligns with your AI development goals.

FAQ

1. What is token generation speed?

Token generation speed measures how many tokens a GPU can generate per second. Tokens are the units of text that LLMs read and produce, such as whole words or parts of words.

2. What is quantization?

Quantization is a technique for reducing the size of LLMs by storing their weights at lower precision. This can make them faster and more efficient, but it might slightly reduce accuracy. Think of it like compressing a file: the file gets smaller, but you might lose some quality.

3. Is the NVIDIA 3090 24GB always better than the NVIDIA RTX 5000 Ada 32GB?

Not necessarily. In this benchmark the 3090 is faster with Llama3 8B, but neither card was measured with larger models like Llama3 70B, and the RTX 5000 Ada has 8 GB more memory.

4. Can I use a CPU to run LLMs?

Yes, you can use a CPU, but it will be significantly slower than using a GPU. GPUs are designed to perform parallel computing, making them ideal for the intense calculations required by LLMs.

5. What other factors should I consider when choosing a GPU for LLMs?

Besides token speed generation, consider factors like:

- Memory Capacity: Larger LLMs require more memory.

- Power Consumption: GPUs can consume a lot of power, which impacts energy costs.

- Cost: GPUs can be expensive, so consider your budget.

Keywords

NVIDIA 3090 24GB, NVIDIA RTX 5000 Ada 32GB, LLM, Large Language Model, Token Generation Speed, GPU, AI Development, Llama3, Quantization, Q4_K_M, F16, Performance Benchmark