Which is Better for AI Development: NVIDIA 3090 24GB or NVIDIA A40 48GB? Local LLM Token Speed Generation Benchmark

Introduction

The world of AI is buzzing with excitement as language models grow increasingly powerful. But harnessing the potential of these Large Language Models (LLMs) requires the right hardware, especially for local development and experimentation. If you're a developer eager to explore the world of LLMs, you're likely facing a decision: NVIDIA 3090_24GB vs. NVIDIA A40_48GB.

The choice boils down to cost versus capacity. The 3090_24GB is the more affordable option, while the A40_48GB offers twice the memory. But which is better for your specific LLM development needs? We'll dive into a detailed performance analysis, comparing token generation speed across different LLMs and showing the strengths and weaknesses of each device.

NVIDIA 3090_24GB vs. NVIDIA A40_48GB: A Detailed Performance Analysis

To provide a comprehensive understanding of each device's capabilities, we'll examine their performance in generating tokens for various LLMs. The numbers we use are tokens per second (tokens/sec), taken from the community llama.cpp benchmark cited under the table.
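As a rough illustration of what a tokens/sec figure means, the sketch below times one generation run and divides the token count by the elapsed time. It is not how the cited benchmark was produced: `fake_generate` is a hypothetical stand-in for a real model's streaming output, and the injectable `clock` parameter is an assumption added so the function can be checked without real hardware.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]],
                      clock: Callable[[], float] = time.perf_counter) -> float:
    """Time one generation run and return its throughput in tokens/sec."""
    start = clock()
    n_tokens = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return n_tokens / elapsed


def fake_generate() -> Iterable[str]:
    # Hypothetical stand-in for a real model's streaming token output.
    for i in range(100):
        yield f"tok{i}"


# Simulated clock: the run "starts" at t=10s and "ends" at t=12s.
ticks = iter([10.0, 12.0])
simulated = tokens_per_second(fake_generate, clock=lambda: next(ticks))
print(f"{simulated:.1f} tokens/sec")  # 100 tokens over 2 simulated seconds
```

With a real model you would pass a function that streams tokens from your inference library and keep the default clock.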

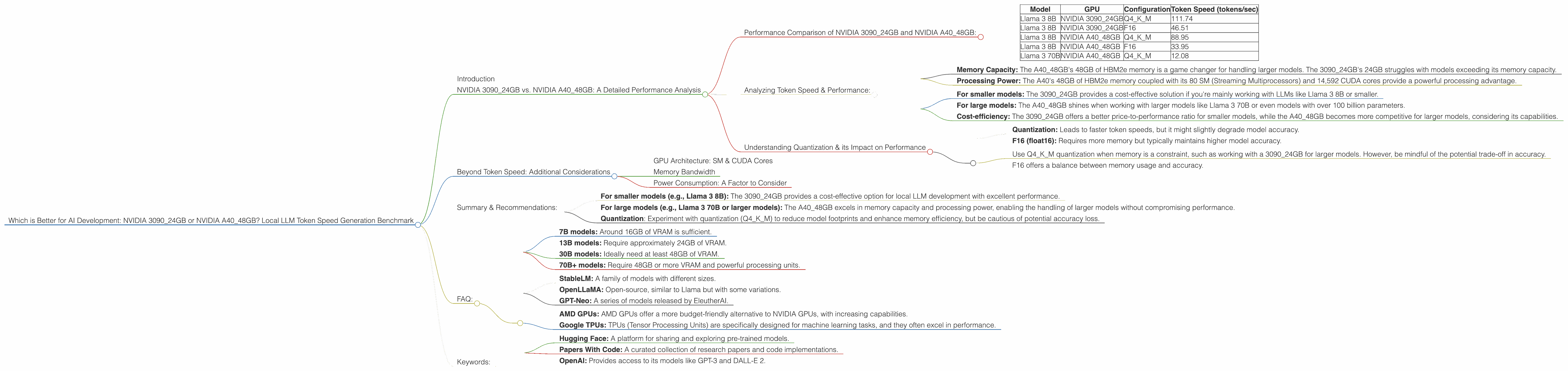

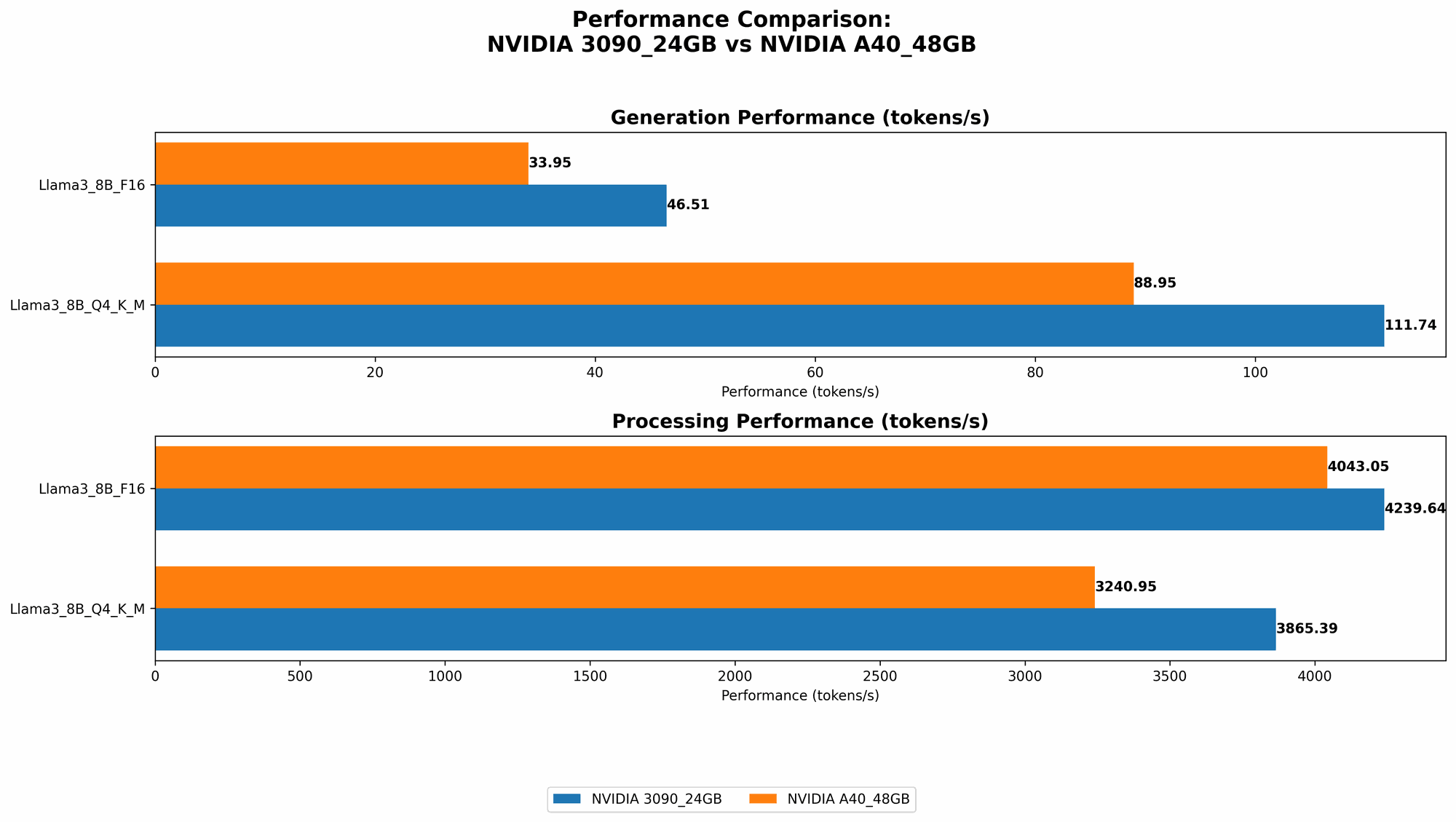

Performance Comparison of NVIDIA 3090_24GB and NVIDIA A40_48GB:

Note: To ensure clarity, we're focusing on the performance of each GPU with Llama 3 models in their different configurations (quantized or float16). In cases where data is not available, we'll clearly state so.

| Model | GPU | Configuration | Token Speed (tokens/sec) |
|---|---|---|---|
| Llama 3 8B | NVIDIA 3090_24GB | Q4_K_M | 111.74 |
| Llama 3 8B | NVIDIA 3090_24GB | F16 | 46.51 |
| Llama 3 8B | NVIDIA A40_48GB | Q4_K_M | 88.95 |
| Llama 3 8B | NVIDIA A40_48GB | F16 | 33.95 |
| Llama 3 70B | NVIDIA A40_48GB | Q4_K_M | 12.08 |

Data Source: Performance of llama.cpp on various devices (https://github.com/ggerganov/llama.cpp/discussions/4167) by ggerganov

Observations:

- The 3090_24GB outperforms the A40_48GB on Llama 3 8B in both configurations: by about 26% with Q4_K_M (111.74 vs. 88.95 tokens/sec) and about 37% with F16 (46.51 vs. 33.95 tokens/sec). Combined with its lower price, this gives the 3090_24GB the better performance-to-cost ratio for smaller LLMs.

- The A40_48GB is the only one of the two with a result for the larger Llama 3 70B model, reaching 12.08 tokens/sec in the Q4_K_M configuration. This is expected: the A40 has twice the memory of the 3090, and the 70B weights need that room.
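The relative gap between the two cards can be recomputed directly from the table. The snippet below is a small sketch that uses only the benchmark figures above; the dictionary layout and the `speedup` helper are illustrative choices, not part of the benchmark.

```python
# Tokens/sec copied from the table above: (model, configuration) -> {gpu: speed}
BENCHMARKS = {
    ("Llama 3 8B", "Q4_K_M"): {"3090_24GB": 111.74, "A40_48GB": 88.95},
    ("Llama 3 8B", "F16"): {"3090_24GB": 46.51, "A40_48GB": 33.95},
}


def speedup(speeds: dict, fast: str = "3090_24GB", slow: str = "A40_48GB") -> float:
    """Ratio of the two cards' token speeds for one model/configuration."""
    return speeds[fast] / speeds[slow]


for (model, config), speeds in BENCHMARKS.items():
    print(f"{model} {config}: 3090 is {speedup(speeds):.2f}x the A40")
# Llama 3 8B Q4_K_M: 3090 is 1.26x the A40
# Llama 3 8B F16: 3090 is 1.37x the A40
```

Llama 3 70B is left out because the table has no 3090_24GB result to compare against.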

Analyzing Token Speed & Performance:

Let's dive deeper into the performance of each device using the numbers provided.

Key Factors:

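- Memory Capacity: The A40_48GB's 48GB of GDDR6 memory is what makes larger models possible on a single card. The 3090_24GB's 24GB of GDDR6X cannot hold models whose weights exceed its capacity without offloading part of the model to system RAM, which slows generation considerably.

- Processing Power: Both cards use NVIDIA's Ampere GA102 GPU and are closely matched in raw compute: the A40 has 84 SMs (Streaming Multiprocessors) and 10,752 CUDA cores, while the 3090 has 82 SMs and 10,496 CUDA cores. Compute alone therefore does not explain the speed gap in the table.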

Example: Consider a 70B model. Even in Q4_K_M form its weights are roughly 40GB, so it cannot be loaded fully into a 3090_24GB, while the A40_48GB holds it with a few gigabytes to spare.

Illustrative Analogy: Imagine two runners, a sprinter with a small backpack (3090) and a slightly slower runner with a large one (A40). When the load fits in the small backpack, the sprinter wins. When it doesn't, only the runner with the large backpack can carry it at all (large models).
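A back-of-the-envelope way to check whether a model fits is to multiply its parameter count by the bits stored per weight. The sketch below rests on stated assumptions: about 4.85 bits per weight for Q4_K_M (an approximation, since the format mixes block types), decimal gigabytes, and a hypothetical 2GB headroom for the KV cache and runtime buffers.

```python
# Assumed average bits per weight. The Q4_K_M figure is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_gb(params_billions: float, config: str) -> float:
    """Approximate size of the weights alone, in decimal GB."""
    return params_billions * BITS_PER_WEIGHT[config] / 8


def fits(params_billions: float, config: str, vram_gb: float,
         headroom_gb: float = 2.0) -> bool:
    """True if weights plus an assumed headroom fit in the given VRAM."""
    return weight_gb(params_billions, config) + headroom_gb <= vram_gb


for params, config in [(8, "F16"), (8, "Q4_K_M"), (70, "Q4_K_M"), (70, "F16")]:
    size = weight_gb(params, config)
    print(f"{params}B {config}: ~{size:.1f} GB | "
          f"fits 24GB: {fits(params, config, 24)} | "
          f"fits 48GB: {fits(params, config, 48)}")
```

Under these assumptions a 70B model in Q4_K_M comes to about 42GB, which fits in 48GB but not in 24GB, and the same model in F16 (about 140GB) fits on neither card.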

Practical Implications:

- For smaller models: The 3090_24GB provides a cost-effective solution if you're mainly working with LLMs like Llama 3 8B or smaller.

- For large models: The A40_48GB shines when working with larger models like Llama 3 70B in Q4_K_M. Models well beyond that size, such as those with over 100 billion parameters, exceed 48GB even at 4-bit precision and need multiple GPUs or CPU offloading.

- Cost-efficiency: The 3090_24GB offers a better price-to-performance ratio for smaller models, while the A40_48GB justifies its higher price once the model no longer fits in 24GB.

Understanding Quantization & its Impact on Performance

Don't let the term "Quantization" scare you. It's simply a technique to reduce the memory footprint of a model. Think of it as compressing your LLM, making it smaller and more efficient to run.

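Example: A model in F16 (float16) stores each weight in 16 bits, while Q4_K_M (a 4-bit quantization format used by llama.cpp) needs roughly 5 bits per weight on average, so the quantized model takes about a third of the memory.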
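To make the idea concrete, here is a minimal sketch of block-wise 4-bit quantization in plain Python. It is a simplified scheme chosen for illustration (one shared scale per block of 32 weights, signed 4-bit integers) and is not the actual Q4_K_M algorithm, which is more elaborate. The block size and function names are assumptions.

```python
import random

BLOCK = 32  # weights per block; an assumed block size for this sketch


def quantize_block(weights):
    """Map floats to signed 4-bit integers (-8..7) plus one shared scale."""
    scale = max(abs(w) for w in weights) / 7 or 1.0  # avoid a zero scale
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale, q):
    """Recover approximate floats from the 4-bit integers."""
    return [scale * v for v in q]


random.seed(0)
weights = [random.uniform(-1, 1) for _ in range(BLOCK)]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)

f16_bytes = BLOCK * 2      # 2 bytes per float16 weight
q4_bytes = BLOCK // 2 + 2  # two 4-bit values per byte, plus one float16 scale
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(f"{f16_bytes} bytes -> {q4_bytes} bytes per block, max error {max_error:.4f}")
```

The block shrinks from 64 to 18 bytes (4.5 bits per weight), and each restored weight is off by at most half the scale. That rounding error is the source of the accuracy trade-off discussed in this section.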

Impact on GPU Performance:

- Quantization: Leads to faster token speeds, but it might slightly degrade model accuracy.

- F16 (float16): Requires more memory but typically maintains higher model accuracy.

Practical Considerations:

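- Use Q4_K_M quantization when memory is a constraint, such as fitting larger models on a 3090_24GB. However, be mindful of the potential trade-off in accuracy.

- Use F16 when the model fits comfortably and you want the weights close to their original precision. It needs roughly three times the memory of Q4_K_M and generates tokens more slowly (46.51 vs. 111.74 tokens/sec for Llama 3 8B on the 3090_24GB).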

Beyond Token Speed: Additional Considerations

While token speed is a crucial metric, there are other factors to consider when choosing the right device for LLM development.

GPU Architecture: SM & CUDA Cores

Both GPUs are built on the same Ampere GA102 chip. The NVIDIA A40 has 84 SMs (Streaming Multiprocessors) and 10,752 CUDA cores; the 3090_24GB has 82 SMs and 10,496 CUDA cores.

Practical Implication: Raw compute is nearly identical, so architecture is not what separates the two cards for LLM inference. Memory capacity and memory bandwidth are.

Memory Bandwidth

The 3090_24GB has the higher memory bandwidth: 936GB/s from its GDDR6X memory, compared to the A40_48GB's 696GB/s from GDDR6.

Practical Benefit: Generating a token requires reading the model's weights from GPU memory, so token speed tends to track memory bandwidth. This is consistent with the 3090's lead on Llama 3 8B in the benchmark table.
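Bandwidth matters because single-stream token generation is usually limited by how fast the weights can be read from VRAM. A common rule of thumb, sketched below, bounds token speed by bandwidth divided by model size. The weight sizes are assumptions (8.03 billion parameters, about 4.85 bits per weight for Q4_K_M); the bandwidth and measured speeds are the 3090_24GB figures quoted in this article.

```python
def max_tokens_per_sec(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound, assuming every token reads all weights from VRAM once."""
    return bandwidth_gb_s / weights_gb


BANDWIDTH_3090 = 936.0  # GB/s, as quoted above

# Assumed weight sizes for Llama 3 8B (8.03B parameters), in decimal GB.
WEIGHTS_GB = {"F16": 8.03 * 16 / 8, "Q4_K_M": 8.03 * 4.85 / 8}

# Measured 3090_24GB speeds from the benchmark table.
MEASURED_3090 = {"F16": 46.51, "Q4_K_M": 111.74}

for config, size in WEIGHTS_GB.items():
    bound = max_tokens_per_sec(BANDWIDTH_3090, size)
    share = MEASURED_3090[config] / bound
    print(f"{config}: bound ~{bound:.0f} tok/s, "
          f"measured {MEASURED_3090[config]} ({share:.0%} of bound)")
```

Under these assumptions the measured speeds land at roughly 80% (F16) and 58% (Q4_K_M) of the bound. Treat the bound as a ceiling rather than a prediction; the same function can be applied to any card by substituting its bandwidth.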

Power Consumption: A Factor to Consider

The A40_48GB is rated at 300W, less than the 3090_24GB's 350W, so power draw is not a point against the A40.

Practical Consideration: Cooling matters more than wattage here. The A40 is a passively cooled data-center card that relies on chassis airflow, so it needs a server case or additional fans, whereas the 3090 has its own fans and fits a standard desktop.

Summary & Recommendations:

- For smaller models (e.g., Llama 3 8B): The 3090_24GB provides a cost-effective option for local LLM development with excellent performance.

- For large models (e.g., Llama 3 70B): The A40_48GB's memory capacity lets it run the Q4_K_M model entirely on one GPU at 12.08 tokens/sec, which the 3090_24GB cannot do.

- Quantization: Experiment with quantization (Q4_K_M) to reduce model footprints and increase token speed, but be cautious of potential accuracy loss.

In essence, the ideal choice depends on your specific use case and resource constraints.

FAQ:

Q: What are the limitations of running LLMs locally?

A: While local LLM development offers greater control and privacy, it can be resource-intensive. Limited memory and processing power may restrict the size of models you can run, and the performance might not always match cloud-based solutions.

Q: How much memory do I need for different LLM sizes?

A: Here's a rough guide; actual needs depend on the quantization used and the context length:

- 7B models: Around 16GB of VRAM is sufficient.

- 13B models: Require approximately 24GB of VRAM.

- 30B models: Ideally need at least 48GB of VRAM.

- 70B+ models: Require 48GB or more VRAM and powerful processing units.
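Beyond the weights, the KV cache grows with context length and also has to fit in VRAM. The sketch below estimates it from the model shape. The Llama 3 8B values (32 layers, 8 key/value heads, head dimension 128) are taken from its published configuration and should be treated as assumptions here, as should the 16-bit cache precision.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_len: int, bytes_per_value: int = 2) -> int:
    """Keys and values (hence the factor 2) for every layer and cached token."""
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value


# Assumed Llama 3 8B shape: 32 layers, 8 key/value heads, head dimension 128.
per_token = kv_cache_bytes(32, 8, 128, context_len=1)
full_context = kv_cache_bytes(32, 8, 128, context_len=8192)

print(per_token // 1024, "KiB per cached token")        # 128 KiB
print(full_context / 2**30, "GiB at an 8192-token context")  # 1.0 GiB
```

For an 8B model this overhead is small next to the weights, but it scales linearly with context length and with the number of concurrent sequences.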

Q: What other LLM models can I run locally?

A: Apart from Llama 3, you can explore models like:

- StableLM: A family of models with different sizes.

- OpenLLaMA: Open-source, similar to Llama but with some variations.

- GPT-Neo: A series of models released by EleutherAI.

Q: What are the benefits of local LLM development?

A: Local development allows for greater control over your LLM, enabling you to experiment with different configurations, modifications, and fine-tuning. It also keeps your data private and under your control.

Q: Are there any alternatives to NVIDIA GPUs for running LLMs?

A: While NVIDIA GPUs dominate the market, other options are emerging. These include:

- AMD GPUs: AMD GPUs offer a more budget-friendly alternative to NVIDIA GPUs, with increasing capabilities.

- Google TPUs: TPUs (Tensor Processing Units) are specifically designed for machine learning tasks, and they often excel in performance.

Q: What resources can I use to learn more about LLMs?

A: Many resources can help you understand LLMs in more depth:

- Hugging Face: A platform for sharing and exploring pre-trained models.

- Papers With Code: A curated collection of research papers and code implementations.

- OpenAI: Provides access to its models like GPT-3 and DALL-E 2.

Keywords:

LLM, Large Language Model, Llama 3, NVIDIA 3090_24GB, NVIDIA A40_48GB, Token Speed, Performance, GPU, Memory, Bandwidth, Quantization, Local Development, AI Development, Machine Learning