Which is Better for AI Development: NVIDIA 3090 24GB or NVIDIA 3090 24GB x2? Local LLM Token Speed Generation Benchmark

Introduction

The world of artificial intelligence (AI) is on fire, and large language models (LLMs) are fueling the blaze. LLMs are computer programs that have learned to understand and generate human-like text, making them incredibly versatile for tasks like writing, translation, coding, and even creative content generation. But running these powerful models requires serious hardware, and choosing the right setup can be a real head-scratcher.

Today, we're diving deep into the performance of two configurations of a popular gaming GPU: a single NVIDIA GeForce RTX 3090 24GB, and a dual-GPU setup with two of these bad boys working in tandem. We'll be comparing how quickly each setup generates tokens from local LLMs.

Get ready to unleash the power of the GPU as we unravel the secrets of token speed generation and help you determine the best hardware for your AI development needs. It's time to take a deep dive into the fascinating world of local LLM performance!

Comparison of NVIDIA 3090 24GB and NVIDIA 3090 24GB x2 for Local LLM Token Speed Generation

We're comparing two configurations of the same NVIDIA GPU: a single 3090 24GB and a dual-GPU setup with two 3090 24GBs working in tandem. The card is a heavy hitter in the gaming world, but how does it stack up in the AI arena, and what does a second one buy you? We'll evaluate both setups on Llama 3 models, looking at token generation rates for different model sizes and quantization levels.

Understanding the Metric: Token Speed Generation

Token speed generation is a crucial metric for local LLM development. It measures how many tokens a model can generate per second, directly impacting the responsiveness and efficiency of your AI applications. A higher token speed means faster results and smoother workflows.
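To make the metric concrete, here is a minimal sketch of how tokens per second can be measured: count the tokens a stream yields and divide by the elapsed wall-clock time. The `fake_stream` generator is a hypothetical stand-in for a real model's streaming output, not part of any benchmark tool used in this article.

```python
import time
from typing import Callable, Iterable, Iterator


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return tokens generated per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # pull every token from the stream
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_stream(n_tokens: int = 50, delay_s: float = 0.001) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields one token per delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_stream):.1f} tokens/second")
```

With a real model you would pass in a function that returns its token stream instead of `fake_stream`.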

The Power of Quantization: A Downsizing Trick for LLMs

LLMs can be incredibly large, requiring massive amounts of memory and processing power. Quantization offers a clever way to shrink these models without sacrificing too much performance. Imagine squeezing a large wardrobe into a smaller suitcase - you might have to fold some things differently, but you can still pack everything!

In the world of LLMs, quantization means reducing the precision of the numbers used to represent the model's weights. This compresses the model and cuts its memory requirements, so it can run on less powerful hardware. We'll be looking at two weight formats: Q4KM and F16.

Q4KM (named Q4_K_M in llama.cpp) stores most weights in roughly 4 bits each. It's like measuring with a coarser ruler: you trade some precision for a much smaller memory footprint and faster generation. F16 keeps every weight as a 16-bit floating-point number. Strictly speaking it is a half-precision format rather than a quantization, and it serves here as the higher-precision, higher-memory baseline.
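The memory effect of these formats can be estimated with simple arithmetic: parameters times bits per weight, divided by 8 to get bytes. The sketch below does that under stated assumptions. The parameter counts are approximate, the 4.85 bits-per-weight figure for Q4KM is an assumed average rather than a measured value, and the estimate covers weights only (no KV cache, activations, or runtime overhead).

```python
# Approximate bits stored per weight. F16 is exactly 16; the Q4KM figure
# (llama.cpp's Q4_K_M) is an assumed average, not a measured value.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}

# Approximate parameter counts (assumptions for illustration).
PARAMS = {"Llama 3 8B": 8.0e9, "Llama 3 70B": 70.6e9}


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Estimated size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone fit; real usage needs extra headroom."""
    return weight_gb(n_params, bits_per_weight) <= vram_gb


if __name__ == "__main__":
    for model, n in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_gb(n, bits)
            print(f"{model} {fmt}: ~{size:.0f} GB, "
                  f"fits 24 GB: {fits(n, bits, 24)}, "
                  f"fits 48 GB: {fits(n, bits, 48)}")
```

Under these assumptions, the 8B model fits on one 24 GB card in either format, the 70B model at Q4KM needs more than 24 GB but less than 48 GB, and the 70B model at F16 exceeds even 48 GB.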

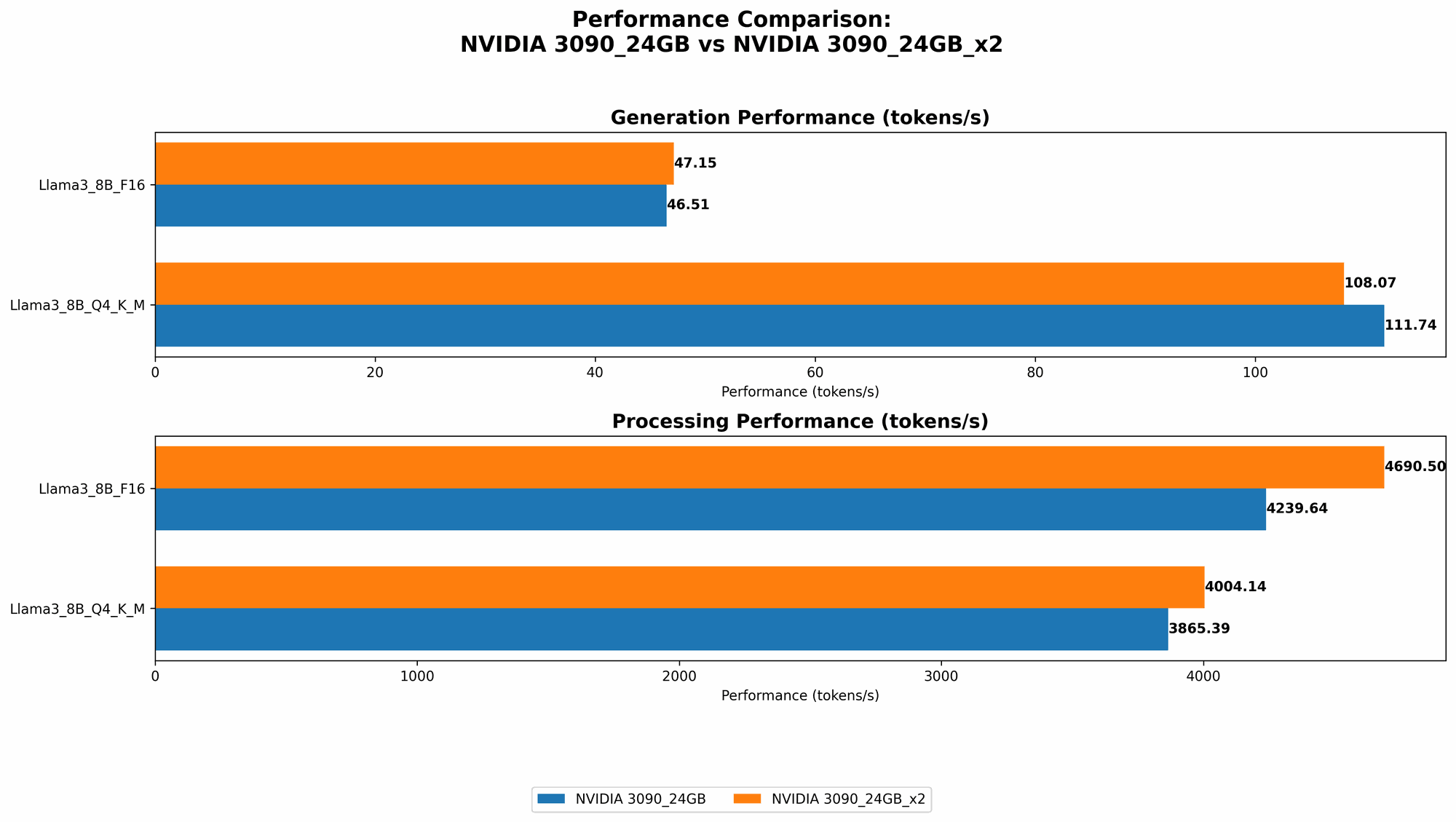

Performance Breakdown: NVIDIA 3090 24GB vs. NVIDIA 3090 24GB x2

Now let's dive into the heart of our benchmark: the token speed generation numbers! The data comes from two reputable GitHub repositories: Performance of llama.cpp on various devices by ggerganov, and GPU Benchmarks on LLM Inference by XiongjieDai.

Here's the breakdown:

| Benchmark (tokens/second) | NVIDIA 3090 24GB | NVIDIA 3090 24GB x2 |
|---|---|---|
| Llama 3 8B Q4KM Generation | 111.74 | 108.07 |
| Llama 3 8B F16 Generation | 46.51 | 47.15 |
| Llama 3 70B Q4KM Generation | N/A | 16.29 |
| Llama 3 70B F16 Generation | N/A | N/A |
| Llama 3 8B Q4KM Processing | 3865.39 | 4004.14 |
| Llama 3 8B F16 Processing | 4239.64 | 4690.5 |
| Llama 3 70B Q4KM Processing | N/A | 393.89 |
| Llama 3 70B F16 Processing | N/A | N/A |
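To see how much the second GPU actually changes each result, it helps to turn the rows that have data for both setups into a percentage difference. The snippet below is a small sketch that does only that arithmetic. The numbers are copied from the table, and the dictionary layout and function name are just illustrative choices.

```python
# (single 3090, dual 3090) in tokens/second, copied from the table above.
# Rows with N/A on either side are left out because no ratio can be computed.
RESULTS = {
    "Llama 3 8B Q4KM Generation": (111.74, 108.07),
    "Llama 3 8B F16 Generation": (46.51, 47.15),
    "Llama 3 8B Q4KM Processing": (3865.39, 4004.14),
    "Llama 3 8B F16 Processing": (4239.64, 4690.5),
}


def percent_change(single: float, dual: float) -> float:
    """Change of the dual setup relative to the single card, in percent."""
    return (dual - single) / single * 100.0


if __name__ == "__main__":
    for name, (single, dual) in RESULTS.items():
        print(f"{name}: {percent_change(single, dual):+.1f}%")
```

The differences come out at roughly -3.3% and +1.4% for generation, and +3.6% and +10.6% for processing, so for the 8B model the second card changes little.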

Performance Analysis:

NVIDIA 3090 24GB:

- Strengths: The 3090 24GB is a powerful card that delivers impressive token speed generation for smaller models. It runs Llama 3 8B at about 112 tokens/second in Q4KM and about 47 tokens/second in F16.

- Weaknesses: The available data has no results for Llama 3 70B on a single 3090 24GB. The most likely reason is memory rather than compute: even at roughly 4 to 5 bits per weight, a 70B model's weights alone come to around 40 GB, well beyond the card's 24 GB.

- Use cases: This GPU is well suited to developers experimenting with LLMs, fine-tuning smaller models, and building applications that don't need the larger models.

NVIDIA 3090 24GB x2:

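- Strengths: The main advantage of the dual 3090 24GB setup is capacity. With 48 GB of combined VRAM it runs Llama 3 70B in Q4KM at 16.29 tokens/second, a model for which the single card has no result at all. For Llama 3 8B it brings no meaningful gain in generation speed, though prompt processing is about 4% to 11% faster.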

- Weaknesses: The additional GPU power comes with a higher cost and increased power consumption. The dual-GPU system might be overkill for smaller models, leading to inefficient resource utilization.

- Use cases: This setup is the one to pick for developers who need to run large LLMs such as Llama 3 70B locally. Keep in mind that neither setup has a result for the 70B model in F16.

The Battle of the Titans: A Deep Dive into Performance Differences

Now, let's dissect these numbers to gain a deeper understanding of how the two setups compare.

Llama 3 8B: A Test of Strength for the Singles

The Llama 3 8B model is a great benchmark for comparing the individual performance of the 3090 24GB and the dual-GPU setup.

- Q4KM Quantization: Both setups perform similarly, at roughly 110 tokens per second (111.74 single vs. 108.07 dual). The single card is in fact about 3% faster, which suggests that one 3090 24GB already has ample resources for this model and that splitting it across two GPUs adds a little overhead.

- F16 Quantization: The dual-GPU setup edges ahead, at 47.15 vs. 46.51 tokens per second. At about 1%, the gap is small enough that it may be measurement noise rather than a real benefit of the second GPU.

Llama 3 70B: The Big Model Showdown

When we look at the larger Llama 3 70B model, the dual-GPU setup shines brightly. Remember, we don't have data for the single 3090 24GB with this model, but the dual setup demonstrates its ability to handle the heavier workload.

- Q4KM Quantization: The dual 3090 24GB setup reaches 16.29 tokens/second. That is roughly one seventh of the rate the same hardware achieves on Llama 3 8B in Q4KM, which is unsurprising for a model with almost nine times as many parameters. The advantage of the second GPU here is not speed but the fact that the model runs at all.

Processing Power: A Tale of Two Speeds

The analysis so far has focused on token generation speed. But what about processing speed? This is the rate at which the model reads the input prompt before it starts generating, and it matters for applications that feed in large amounts of text.

- Llama 3 8B: The dual-GPU setup outperforms the single 3090 24GB in both formats: about 4% faster in Q4KM (4004.14 vs. 3865.39 tokens/second) and about 11% faster in F16 (4690.5 vs. 4239.64 tokens/second).

- Llama 3 70B: Only the dual-GPU setup has a result, 393.89 tokens/second in Q4KM.
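Processing and generation speed combine into the time a user actually waits for a full response. As a rough sketch, the total is the prompt length divided by the processing rate plus the output length divided by the generation rate. This assumes both rates stay constant regardless of context length and ignores model load time. The 2000-token prompt and 500-token reply are hypothetical workload values, and the rates are the Llama 3 8B Q4KM figures from the table.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimated time to read the prompt plus time to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing, generation) in tokens/second for Llama 3 8B Q4KM.
SETUPS = {
    "single 3090 24GB": (3865.39, 111.74),
    "dual 3090 24GB": (4004.14, 108.07),
}

if __name__ == "__main__":
    prompt_tokens, output_tokens = 2000, 500  # hypothetical workload
    for name, (pp, tg) in SETUPS.items():
        total = response_seconds(prompt_tokens, output_tokens, pp, tg)
        print(f"{name}: ~{total:.2f} s")
```

For this workload, generation dominates the total, so the single card's slightly higher generation rate outweighs the dual setup's faster prompt processing. A workload with a very long prompt and a short reply would shift the balance the other way.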

Conclusion: Two Powerhouses with Distinct Roles

Both the NVIDIA 3090 24GB and the dual 3090 24GB setup are powerful tools for local LLM development. The single 3090 24GB is perfect for experimentation and working with smaller models, while the dual-GPU system shines for handling larger LLMs and demanding applications.

Practical Recommendations: Choosing the Right Hardware for Your AI Adventure

Now, let's translate these insights into real-world advice to help you choose the best GPU setup for your AI endeavors.

If you're just starting out with local LLM development, experimenting with smaller models, or building applications that don't rely on massive text processing, the single NVIDIA 3090 24GB is a great starting point. It offers an impressive performance-to-price ratio and is a cost-effective option for many use cases.

If you're working with large LLMs, building production-level applications that require high throughput, or pushing the boundaries of AI research, the dual-GPU system is the way to go. It provides the horsepower you need to handle the demands of complex models and demanding workloads.

FAQ: Frequently Asked Questions about Local LLM Development and Hardware

What is an LLM?

An LLM, or Large Language Model, is a type of AI system that has been trained on vast amounts of text data. It can understand and generate human-like text, making it useful for tasks like writing, translation, and coding.

What is Quantization?

Quantization is a technique used to reduce the precision of numbers used to represent a model's weights. This shrinks the model's size and allows it to run on less powerful hardware. Think of it like shrinking a large file by reducing its resolution - you lose some detail, but it takes up less space.

Is it better to use a dedicated AI accelerator like a TPU?

While TPUs are excellent for large-scale AI development, they can be expensive and require specialized knowledge. GPUs like the NVIDIA 3090 offer a good balance of performance and cost, making them a viable option for many developers, especially those starting out with LLM development.

Can I run LLMs on my CPU?

Yes, you can run smaller LLMs on a CPU. However, GPUs are much more efficient at the parallel processing LLMs demand, especially for larger models. Think of it like one person knitting a blanket versus a team of knitters each working on a section - the team will finish the blanket much faster.

How do I get started with local LLM development?

There are several resources available to help you get started with local LLM development. Open-source projects like llama.cpp and GPU Benchmarks on LLM Inference provide code, tutorials, and benchmarks.

Keywords

LLM, large language model, NVIDIA 3090, 24GB, token speed generation, benchmark, quantization, Q4KM, F16, AI development, hardware, performance, GPU, processing power, Llama.cpp, GPU Benchmarks on LLM Inference, dual GPU setup.