Which is Better for AI Development: NVIDIA 3080 Ti 12GB or NVIDIA RTX 6000 Ada 48GB? Local LLM Token Speed Generation Benchmark

Introduction

The world of large language models (LLMs) is rapidly evolving, and for developers keen on exploring the potential of these AI marvels, the choice of hardware becomes critical. Two popular choices are the NVIDIA 3080 Ti 12GB and the NVIDIA RTX 6000 Ada 48GB. But which one reigns supreme when it comes to running LLMs locally and generating tokens at lightning speed?

This article delves into the performance of these two GPUs, focusing on their token speed generation capabilities for Llama 3 models. We'll analyze benchmark results, compare their strengths and weaknesses, and provide practical recommendations for various use cases. Buckle up, it's about to get geeky!

Comparison of NVIDIA 3080 Ti 12GB & NVIDIA RTX 6000 Ada 48GB

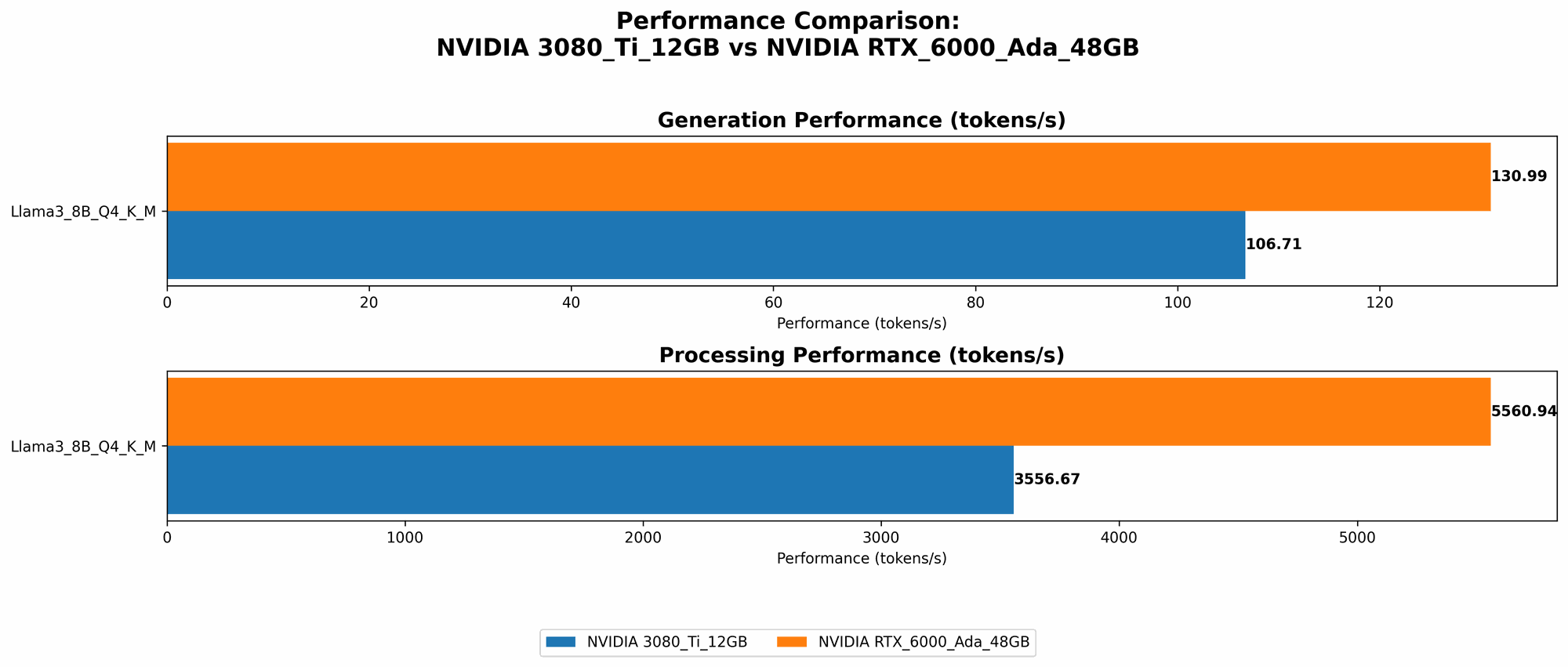

Token Speed Generation: Llama 3 8B Model

Let's start with the Llama 3 8B model, a popular choice for its balance of performance and size. Here's how the two GPUs stack up:

| GPU | Model format | Token generation speed (tokens/second) |
|---|---|---|
| NVIDIA 3080 Ti 12GB | Q4_K_M | 106.71 |
| NVIDIA RTX 6000 Ada 48GB | Q4_K_M | 130.99 |
| NVIDIA RTX 6000 Ada 48GB | F16 | 51.97 |

Key Observations:

- Q4_K_M Quantization: The RTX 6000 Ada 48GB generates 130.99 tokens/second against 106.71 for the 3080 Ti 12GB, roughly 23% faster. A 4-bit 8B model fits comfortably in 12GB, so this gap is more plausibly explained by the newer card's architecture than by its larger memory capacity.

- F16 (Half Precision): F16 stores the weights as 16-bit floating point numbers, so strictly speaking it is the unquantized baseline rather than a quantization method. At 2 bytes per weight, an 8B model needs about 16GB for the weights alone, which exceeds the 3080 Ti's 12GB and most likely explains why no F16 result is listed for that card. The RTX 6000 Ada 48GB runs the F16 model at 51.97 tokens/second, about 2.5 times slower than its own Q4_K_M result.
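The relative gaps quoted for these results can be checked with a few lines of arithmetic. The snippet below is only a sketch: the numbers are copied from the table above, and the dictionary layout and the `relative_speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Generation speeds (tokens/second) for Llama 3 8B, copied from the table above.
generation_tps = {
    ("3080 Ti 12GB", "Q4_K_M"): 106.71,
    ("RTX 6000 Ada 48GB", "Q4_K_M"): 130.99,
    ("RTX 6000 Ada 48GB", "F16"): 51.97,
}


def relative_speedup(fast, slow):
    """Return how much faster `fast` is than `slow`, as a percentage."""
    return (fast / slow - 1.0) * 100.0


# Ada vs. 3080 Ti at the same 4-bit format.
q4_gain = relative_speedup(
    generation_tps[("RTX 6000 Ada 48GB", "Q4_K_M")],
    generation_tps[("3080 Ti 12GB", "Q4_K_M")],
)

# Q4_K_M vs. F16 on the same card, as a plain ratio.
f16_slowdown = (
    generation_tps[("RTX 6000 Ada 48GB", "Q4_K_M")]
    / generation_tps[("RTX 6000 Ada 48GB", "F16")]
)

print(f"RTX 6000 Ada is {q4_gain:.1f}% faster than the 3080 Ti at Q4_K_M")
print(f"Q4_K_M is {f16_slowdown:.2f}x faster than F16 on the RTX 6000 Ada")
```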

Token Speed Generation: Llama 3 70B Model

Now things get interesting! We're scaling up to the massive Llama 3 70B model, a beast that demands serious hardware muscle.

| GPU | Model format | Token generation speed (tokens/second) |
|---|---|---|
| NVIDIA RTX 6000 Ada 48GB | Q4_K_M | 18.36 |

Key Observations:

- Limited Data: No 70B result is available for the 3080 Ti 12GB. This is unsurprising, since even at 4-bit precision the weights of a 70B model take roughly 40GB, far more than 12GB. The RTX 6000 Ada 48GB does run the model, at 18.36 tokens/second, which is about 7 times slower than its 8B result. A drop of this size is expected, because every generated token requires reading through a much larger set of weights.
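Whether a model fits on a card can be estimated from its parameter count and the number of bits stored per weight. The sketch below rests on stated assumptions: nominal parameter counts of 8 and 70 billion, roughly 4.85 bits per weight for a Q4_K_M file (an approximate average that varies by model), and weights only, ignoring the KV cache and runtime overhead. The function names are illustrative.

```python
GIB = 2 ** 30  # bytes per GiB


def weights_gib(n_params, bits_per_weight):
    """Approximate size of the model weights in GiB."""
    return n_params * bits_per_weight / 8 / GIB


def fits(n_params, bits_per_weight, vram_gib):
    """True if the weights alone fit in the given amount of GPU memory.

    This is optimistic: the KV cache and runtime buffers need extra room.
    """
    return weights_gib(n_params, bits_per_weight) < vram_gib


# Assumed values, not taken from the benchmark data.
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
FORMATS = {"Q4_K_M": 4.85, "F16": 16.0}  # bits per weight (Q4_K_M is approximate)
GPUS = {"3080 Ti 12GB": 12, "RTX 6000 Ada 48GB": 48}

for model, n_params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weights_gib(n_params, bits)
        verdict = ", ".join(
            f"{gpu}: {'fits' if fits(n_params, bits, vram) else 'too large'}"
            for gpu, vram in GPUS.items()
        )
        print(f"{model} {fmt}: ~{size:.1f} GiB ({verdict})")
```

Under these assumptions, the only combinations that fit are the ones that have benchmark results in this article.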

Token Speed Processing: Llama 3 Models

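Let's shift gears to token processing, which refers to how quickly the GPU works through the input prompt before it starts generating new tokens. Prompt tokens can be processed in parallel, which is why these figures are far higher than the generation speeds above. The figures below are for the Llama 3 8B model.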

| GPU | Model format | Token processing speed (tokens/second) |
|---|---|---|
| NVIDIA 3080 Ti 12GB | Q4_K_M | 3556.67 |
| NVIDIA RTX 6000 Ada 48GB | Q4_K_M | 5560.94 |
| NVIDIA RTX 6000 Ada 48GB | F16 | 6205.44 |

Key Observations:

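- Faster Processing: Both GPUs process prompts for the 8B model at thousands of tokens per second. The RTX 6000 Ada 48GB again leads, at 5560.94 tokens/second against 3556.67 for the 3080 Ti 12GB, a gap of about 56% that is noticeably wider than the gap in generation speed.

- F16 vs. Q4_K_M: On the RTX 6000 Ada 48GB, F16 processing (6205.44 tokens/second) is about 12% faster than Q4_K_M, the reverse of what we saw for generation.

- Llama 3 70B: No processing figure is listed here for the 70B model. Given the generation results, the RTX 6000 Ada 48GB should be able to process 70B prompts, but at a lower speed than for the 8B model.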
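To see what these two kinds of speed mean for a single request, the processing and generation figures can be combined into a rough response time. This is a back-of-the-envelope sketch: the speeds are the Llama 3 8B Q4_K_M numbers from the tables above, while the 1000-token prompt, the 500-token reply, and the `response_time` helper are hypothetical choices for illustration. Real latency also depends on software overhead that this ignores.

```python
# Speeds (tokens/second) for Llama 3 8B Q4_K_M, copied from the tables above.
SPEEDS = {
    "3080 Ti 12GB": {"prompt": 3556.67, "generation": 106.71},
    "RTX 6000 Ada 48GB": {"prompt": 5560.94, "generation": 130.99},
}


def response_time(gpu, prompt_tokens, new_tokens):
    """Return (prompt_seconds, generation_seconds) for one request."""
    speeds = SPEEDS[gpu]
    prompt_s = prompt_tokens / speeds["prompt"]
    generation_s = new_tokens / speeds["generation"]
    return prompt_s, generation_s


for gpu in SPEEDS:
    # Hypothetical workload: 1000 prompt tokens in, 500 tokens out.
    prompt_s, gen_s = response_time(gpu, prompt_tokens=1000, new_tokens=500)
    total = prompt_s + gen_s
    print(f"{gpu}: prompt {prompt_s:.2f}s + generation {gen_s:.2f}s = {total:.2f}s")
```

For a workload like this one, generation accounts for most of the wait on both cards, so the generation speed is the number to watch for chat-style use.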

Performance Analysis: Strengths & Weaknesses

NVIDIA 3080 Ti 12GB: Strengths & Weaknesses

Strengths:

- Excellent Performance for Smaller Models: The 3080 Ti 12GB shines when working with smaller LLM models like Llama 3 8B, offering impressive token generation speeds considering its price point.

- Reasonable Price Point: Compared to the RTX 6000 Ada 48GB, the 3080 Ti 12GB is a more affordable option.

Weaknesses:

- Limited Memory Capacity: Its 12GB of memory is too small for larger models like Llama 3 70B, whose weights take roughly 40GB even at 4-bit precision.

- No F16 Result for 8B: The card itself supports half precision, but the F16 version of the 8B model needs about 16GB for its weights, so it does not fit in 12GB. In practice this restricts the 3080 Ti 12GB to quantized versions of the model.

- No 70B Model Data: There is no benchmark result for the 3080 Ti 12GB with the 70B model, so its performance with larger LLMs cannot be judged from this data.

NVIDIA RTX 6000 Ada 48GB: Strengths & Weaknesses

Strengths:

- Superior Performance for Larger Models: The RTX 6000 Ada 48GB demonstrates its capabilities with the Llama 3 70B model, showcasing its ability to handle larger models.

- Ample Memory: Its 48GB of memory holds the 8B model at full F16 precision and the 70B model at Q4_K_M. It is not unlimited, though: a 70B model at F16 would need about 140GB for the weights alone.

- Versatility with Precision: The RTX 6000 Ada 48GB has results for both Q4_K_M and F16, offering more flexibility in choosing the optimal precision for your project.

Weaknesses:

- Price Premium: This advanced GPU comes at a hefty price, which might be a barrier for budget-conscious developers.

- Performance Decrement for Larger Models: While it manages to handle the 70B model, its performance is slower compared to the 8B model. This is expected due to the increased requirements of the 70B model, but it's an important point for developers to consider.

Practical Recommendations & Use Cases

For Developers Working with Smaller Models:

- NVIDIA 3080 Ti 12GB: This is a solid choice for developers focused on smaller LLMs like Llama 3 8B. Its price point and good performance make it an attractive option for budget-conscious developers.

For Developers Exploring Larger Models:

- NVIDIA RTX 6000 Ada 48GB: If you're venturing into the realm of 70B-class models, the RTX 6000 Ada 48GB is the clear frontrunner of the two. Its ample memory, versatility, and decent performance with the quantized 70B model (18.36 tokens/second) make it well suited to handling these models.

Key Takeaway: The choice between these two GPUs ultimately depends on your specific needs and budget. If you're primarily working with smaller models, the 3080 Ti 12GB offers a good balance of performance and affordability. However, if you plan to explore larger models, the RTX 6000 Ada 48GB is the superior choice for its memory capacity and overall capabilities.

Quantization: A Quick Explanation for Non-Technical Users

Imagine you have a huge book filled with complex instructions, and you want to read it quickly. You could either read the book word-for-word, or you could use a simplified version that uses shorter words or symbols. Quantization is similar! LLMs are like those complex books, containing vast amounts of information. Quantization is a technique for shrinking the size of the LLM by using less precise numbers, which allows the GPU to process it faster.

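- Q4_K_M: This is like using a simplified version with very short symbols. Each weight is stored in roughly 4 bits, so the model is much smaller and faster to generate with, but it loses some precision.

- F16: This is like reading the book almost as written. Each weight is stored as a 16-bit floating point number, which preserves far more detail than Q4_K_M but takes several times as much memory and, in the benchmarks above, generates tokens more slowly.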
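For readers who want to see the idea in code, here is a deliberately simplified sketch of 4-bit quantization. It is not the actual Q4_K_M scheme, which is more elaborate; it only illustrates the general principle of storing small integer codes plus one shared scale per block of weights. The function names and the sample weights are made up for this example.

```python
def quantize_block(values, bits=4):
    """Symmetric round-to-nearest quantization of one block of floats.

    Returns a shared scale and one small integer code per value.
    """
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit codes
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Reconstruct approximate floats from the codes and the shared scale."""
    return [code * scale for code in codes]


# Made-up weights standing in for a small block of model parameters.
weights = [0.12, -0.53, 0.97, -0.08, 0.31, -0.76, 0.44, 0.02]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("largest rounding error:", max_error)
```

Each code fits in 4 bits instead of 16, which is where the memory saving comes from, and the rounding error is the precision that gets lost in exchange.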

FAQs:

What is a token?

A token is a representation of a unit of text in an LLM. Think of it as a tiny piece of a word or punctuation mark. For example, the word "hello" could be broken down into the tokens "hel" and "lo".
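The "hel" + "lo" split can be reproduced with a toy tokenizer. This is only an illustration: the vocabulary below is invented, and real LLM tokenizers learn their vocabularies from data and use more sophisticated algorithms, so an actual model may split "hello" differently or keep it as a single token.

```python
def greedy_tokenize(text, vocab):
    """Split text by repeatedly taking the longest vocabulary entry that matches."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest possible piece first, then shorter ones.
        for end in range(len(text), i, -1):
            if text[i:end] in vocab:
                tokens.append(text[i:end])
                i = end
                break
        else:
            # No vocabulary entry matched: emit the single character as a token.
            tokens.append(text[i])
            i += 1
    return tokens


# Invented vocabulary, chosen so that "hello" splits as in the example above.
toy_vocab = {"hel", "lo", "he", "l", "o", " ", "world", "!"}

print(greedy_tokenize("hello world!", toy_vocab))
```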

How does token speed generation impact LLM performance?

The faster the GPU can generate tokens, the quicker the LLM can produce text. This is crucial for tasks like generating responses to user queries or creating creative content.

Why are larger LLMs more challenging to run?

Larger LLMs have more parameters (variables) and require more memory to store and process. This makes them computationally demanding and requires more powerful GPUs.

What other factors influence LLM performance besides GPU?

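It depends on what you mean by performance. Speed is also influenced by factors such as the model's architecture and size, the quantization format, and the software used to run the LLM. The quality of the generated text depends mainly on the model itself, including the dataset it was trained on.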

Are there other GPUs suitable for running LLMs?

Yes, there are other GPUs available, including the NVIDIA A100, A40, and H100. These are data center cards, often used for high-performance computing and AI workloads, and they are typically priced well above consumer GPUs like the 3080 Ti.

Keywords:

NVIDIA 3080 Ti 12GB, NVIDIA RTX 6000 Ada 48GB, LLM, large language models, token generation, token speed, Llama 3, Llama 3 8B, Llama 3 70B, Q4KM quantization, F16 quantization, GPU, AI development, performance benchmark, local LLM, hardware comparison, AI, machine learning, deep learning.