Which is Better for AI Development: NVIDIA 3080 Ti 12GB or NVIDIA RTX 5000 Ada 32GB? Local LLM Token Speed Generation Benchmark

Introduction

The world of AI development is buzzing with excitement, and at the heart of that excitement are large language models (LLMs). LLMs are the brains behind advanced AI applications like chatbots, text generators, and even code assistants. These models are hungry for processing power and need specialized hardware to handle the heavy calculations.

This article dives deep into the performance of two popular GPUs, the NVIDIA 3080 Ti 12GB and the NVIDIA RTX 5000 Ada 32GB, when running LLMs locally. We'll compare their token generation speed, analyze their strengths and weaknesses, and provide practical recommendations for developers looking to build the right AI development setup.

Showdown: NVIDIA 3080 Ti 12GB vs. NVIDIA RTX 5000 Ada 32GB

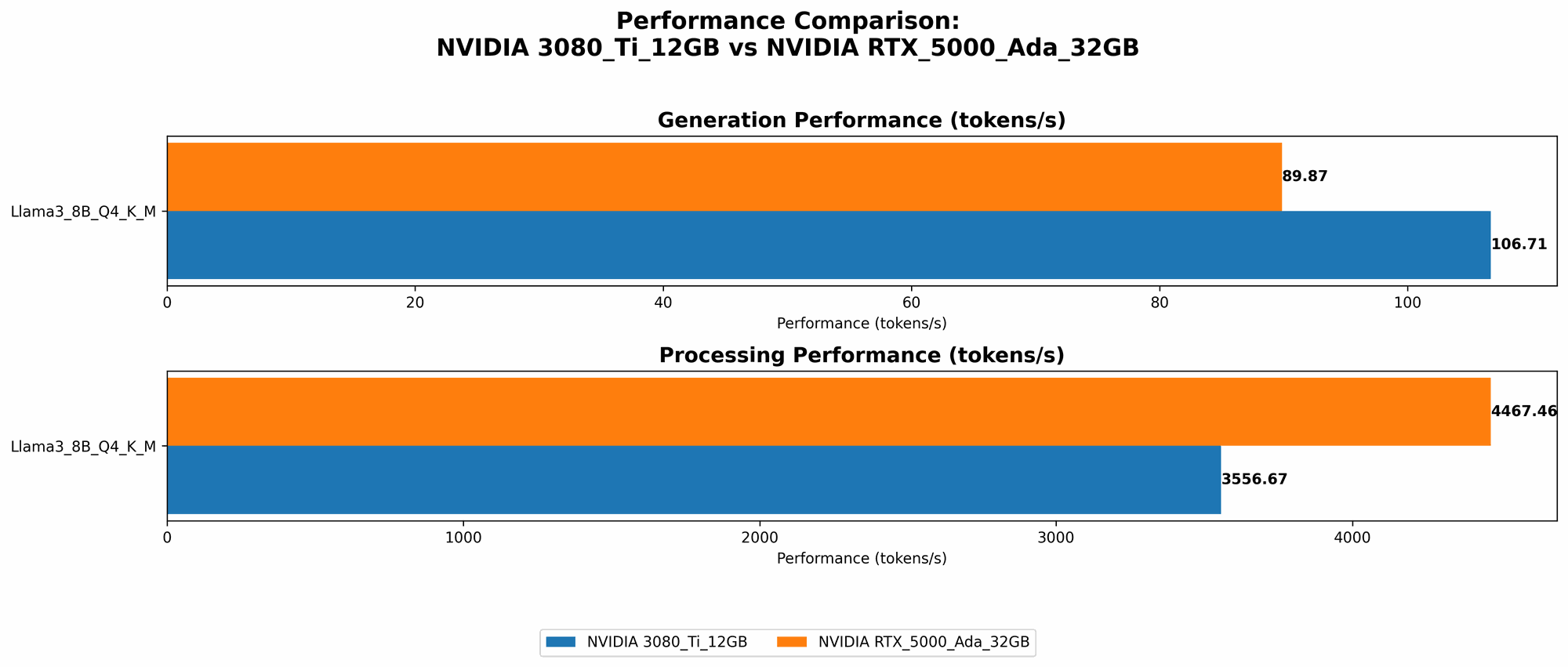

Let's get down to brass tacks! We're comparing the NVIDIA 3080 Ti 12GB and the NVIDIA RTX 5000 Ada 32GB in terms of their ability to generate tokens for popular LLM models.

For this benchmark, we're focusing on the token generation speed of the Llama 3 8B model in two configurations:

- Q4KM: Weights quantized to roughly 4 bits each. The label appears to correspond to the Q4_K_M quantization type used by llama.cpp.

- F16: Weights stored as half-precision floating-point numbers (16 bits)

Here's a table summarizing the key metrics:

| Metric | NVIDIA 3080 Ti 12GB | NVIDIA RTX 5000 Ada 32GB |
|---|---|---|
| Llama 3 8B Q4KM Generation (Tokens/second) | 106.71 | 89.87 |
| Llama 3 8B F16 Generation (Tokens/second) | N/A | 32.67 |
| Llama 3 8B Q4KM Processing (Tokens/second) | 3556.67 | 4467.46 |
| Llama 3 8B F16 Processing (Tokens/second) | N/A | 5835.41 |

Notes:

- The data for Llama 3 70B, Llama 3 8B F16 Generation, and Llama 3 8B F16 Processing on the NVIDIA 3080 Ti 12GB is not available.

- These figures measure throughput only. The total time to complete a task also includes steps such as loading the model, so consider the whole pipeline when comparing setups.
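To put the table's gaps into percentages, here is a minimal sketch that uses only the Q4KM figures above. The dictionary layout and the `relative_difference` helper are illustrative names, not part of any benchmark tool.

```python
# Q4KM figures copied from the table above (tokens/second).
results = {
    "3080 Ti 12GB": {"generation": 106.71, "processing": 3556.67},
    "RTX 5000 Ada 32GB": {"generation": 89.87, "processing": 4467.46},
}


def relative_difference(a: float, b: float) -> float:
    """Return how much larger a is than b, as a percentage of b."""
    return (a - b) / b * 100.0


# Generation: the 3080 Ti is ahead, so compare it against the RTX 5000 Ada.
gen_gap = relative_difference(
    results["3080 Ti 12GB"]["generation"],
    results["RTX 5000 Ada 32GB"]["generation"],
)

# Processing: the RTX 5000 Ada is ahead, so compare it against the 3080 Ti.
proc_gap = relative_difference(
    results["RTX 5000 Ada 32GB"]["processing"],
    results["3080 Ti 12GB"]["processing"],
)

print(f"3080 Ti generation lead: {gen_gap:.1f}%")
print(f"RTX 5000 Ada processing lead: {proc_gap:.1f}%")
```

On these numbers the 3080 Ti generates tokens roughly 19% faster, while the RTX 5000 Ada processes tokens roughly 26% faster.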

Performance Analysis: Unveiling the Strengths and Weaknesses

Now, let's break down the performance numbers and uncover the key differences between these two GPUs for LLM development.

Comparison of NVIDIA 3080 Ti 12GB and NVIDIA RTX 5000 Ada 32GB in Token Speed Generation

- Q4KM:

  - The NVIDIA 3080 Ti 12GB takes the lead in token generation speed with 106.71 tokens per second for the Llama 3 8B model.

  - The NVIDIA RTX 5000 Ada 32GB clocks in at 89.87 tokens per second, about 16% slower than the 3080 Ti 12GB. That gap matters most for real-time applications, where faster token generation translates to a more responsive user experience.

- F16:

  - The NVIDIA RTX 5000 Ada 32GB reaches 32.67 tokens per second. No F16 result is available for the 3080 Ti 12GB, so the two cards cannot be compared directly here. One likely reason is that the F16 weights of an 8B model take roughly 16 GB, which exceeds the 3080 Ti's 12GB of memory.

In a nutshell, the NVIDIA 3080 Ti 12GB delivers higher token generation speed for the 4-bit quantized model, offering a smoother real-time experience. The RTX 5000 Ada 32GB processes tokens faster in Q4KM and is the only one of the two with F16 results in this benchmark, which makes it the option to consider if you want to run half-precision models.

Understanding Token Generation Speed and Its Importance

Token generation speed refers to the rate at which a GPU can produce tokens, the building blocks of text. Think of it like typing: the faster you can tap out letters (tokens), the faster you can form words and sentences.

In real-world scenarios, faster token generation speed translates into:

- More responsive AI applications: Think chatbots that respond instantly or text generators that provide output without noticeable lag.

- Faster inference and evaluation: Batch jobs such as summarizing documents or running evaluation suites finish sooner when tokens are generated faster. Note that these figures measure inference only and say nothing direct about training speed.

- Improved user experience: Nobody likes waiting for AI applications to respond, and a faster token generation speed makes AI more accessible and enjoyable for users.
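To make the responsiveness point concrete, the sketch below estimates how long a single reply would take on each card using the Q4KM figures from the table. The workload (a 1,000-token prompt and a 300-token reply) is a hypothetical example, and the estimate assumes that the "Processing" rows describe prompt processing, that throughput stays constant, and that model loading and other overheads can be ignored.

```python
def response_time_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate reply latency as prompt-processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload, chosen only for illustration.
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 300

# Q4KM throughput figures from the table (tokens/second).
t_3080ti = response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 3556.67, 106.71)
t_ada = response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 4467.46, 89.87)

print(f"3080 Ti 12GB:      {t_3080ti:.2f} s")
print(f"RTX 5000 Ada 32GB: {t_ada:.2f} s")
```

For this workload, generation dominates the total, so the card with the higher generation speed finishes first even though it processes the prompt more slowly. A workload with very long prompts and short replies would shift the balance.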

Deep Dive into the Numbers: Unveiling Performance Patterns

Now that we've established the general performance trends, let's dive deeper into the numbers and explore their implications.

Understanding Quantization and its Impact

Quantization is a technique used to reduce the size of LLM models and, consequently, their memory footprint.

Think of it like compressing a photo: You can reduce its file size without sacrificing significant quality. In LLM models, quantization allows us to trade some accuracy in exchange for smaller model sizes and faster processing speeds.

- Q4KM: This 4-bit configuration stores each weight in roughly a quarter of the space that F16 needs. The smaller model fits within the 3080 Ti's 12GB of memory and leaves less data to read for every generated token, which helps explain the faster generation speeds.

- F16: The F16 configuration uses half-precision (16-bit) floating-point numbers. It is less precise than full precision (32 bits) but preserves the weights more faithfully than 4-bit quantization, at the cost of a larger model and slower generation.
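The size difference between the two configurations can be estimated with simple arithmetic: parameters times bits per weight, divided by eight. The sketch below is a rough estimate under stated assumptions: a rounded count of 8 billion parameters and about 4.85 bits per weight for Q4KM (an approximation, since 4-bit formats also store scaling data). It covers weights only and ignores the extra memory needed for context and activations.

```python
def weight_size_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS_8B = 8.0e9  # assumption: rounded parameter count for Llama 3 8B

# Bits per weight; the Q4KM value is an approximation, not an exact figure.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}

VRAM_GB = {"3080 Ti 12GB": 12.0, "RTX 5000 Ada 32GB": 32.0}

for config, bits in BITS_PER_WEIGHT.items():
    size = weight_size_gb(PARAMS_8B, bits)
    for gpu, vram in VRAM_GB.items():
        verdict = "fits" if size < vram else "does not fit"
        print(f"{config}: ~{size:.1f} GB of weights {verdict} in {gpu}")
```

Under these assumptions the F16 weights come to about 16 GB, more than the 3080 Ti's 12GB, which is one plausible explanation for the missing F16 results on that card.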

The Power of the RTX 5000 Ada 32GB: Large Memory and Advanced Architecture

The RTX 5000 Ada 32GB benefits from a substantial 32GB of memory and a more advanced architecture. This combination lets it run the F16 configuration, for which no 3080 Ti 12GB result is available, and gives it the lead in Q4KM processing (4467.46 versus 3556.67 tokens per second).

Memory capacity matters even more for larger models like Llama 3 70B, whose weights alone far exceed 12GB. Memory bandwidth is a different story: going by NVIDIA's published specifications, the 3080 Ti's memory is the faster of the two (roughly 912 GB/s versus 576 GB/s), which is consistent with its lead in Q4KM generation. We lack data on the 70B models for both GPUs, so their performance in this scenario is unknown.
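A common rule of thumb is that single-stream token generation is limited by how fast the GPU can read the model weights, giving a rough ceiling of memory bandwidth divided by model size. The sketch below applies that rule to the measured figures. The bandwidth values (912 GB/s and 576 GB/s) are assumptions taken from NVIDIA's published specifications rather than from this benchmark, and the model sizes are approximations.

```python
# Assumed peak memory bandwidth in GB/s, taken from NVIDIA's published
# specifications; these numbers do not come from the benchmark itself.
BANDWIDTH_GBPS = {"3080 Ti 12GB": 912.0, "RTX 5000 Ada 32GB": 576.0}

# Approximate weight sizes in GB for Llama 3 8B (assumption: 8e9 parameters,
# 16 bits per weight for F16 and about 4.85 bits per weight for Q4KM).
MODEL_SIZE_GB = {"Q4KM": 4.85, "F16": 16.0}

# Measured generation speeds from the table (tokens/second).
MEASURED_TPS = {
    ("3080 Ti 12GB", "Q4KM"): 106.71,
    ("RTX 5000 Ada 32GB", "Q4KM"): 89.87,
    ("RTX 5000 Ada 32GB", "F16"): 32.67,
}


def ceiling_tps(bandwidth_gbps: float, model_size_gb: float) -> float:
    """Rough upper bound: every token requires reading all weights once."""
    return bandwidth_gbps / model_size_gb


for (gpu, config), measured in MEASURED_TPS.items():
    ceiling = ceiling_tps(BANDWIDTH_GBPS[gpu], MODEL_SIZE_GB[config])
    print(
        f"{gpu} {config}: ceiling ~{ceiling:.0f} tok/s, "
        f"measured {measured:.2f} ({measured / ceiling:.0%} of ceiling)"
    )
```

With these assumptions, every measured speed falls below its ceiling, and the F16 run on the RTX 5000 Ada comes closest to it. Treat the result as a sanity check rather than a prediction, since real throughput also depends on software and kernel efficiency.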

Practical Recommendations for Developers

Here are some practical recommendations for developers:

- If you prioritize responsiveness and speed for real-time applications: Opt for the NVIDIA 3080 Ti 12GB in Q4KM configuration. In this benchmark it generated tokens about 19% faster than the RTX 5000 Ada 32GB, which makes for a smoother user experience.

- If you need to work with larger models or prefer the higher precision of F16 and are willing to sacrifice some speed: The NVIDIA RTX 5000 Ada 32GB is the stronger choice. Its 32GB of memory holds models that do not fit in 12GB, and it is the only one of the two cards with F16 results in this benchmark.

- Consider your budget and power consumption: The 3080 Ti 12GB is a more budget-friendly option, while the RTX 5000 Ada 32GB demands a higher investment. Check each card's power draw against your power supply and cooling before buying.

FAQ: Your AI Development Queries Answered

What is an LLM?

An LLM, or Large Language Model, is an AI system that excels at understanding and generating human-like text. It's trained on massive amounts of data, allowing it to perform tasks like:

- Text generation: Write stories, poems, articles, and even code.

- Translation: Convert text from one language to another.

- Summarization: Provide concise summaries of lengthy documents.

- Question answering: Respond to questions based on provided text.

Why is token speed generation important for LLMs?

Token generation speed determines how quickly an LLM can output text. Faster generation leads to a more responsive and user-friendly experience, especially in real-time applications like chatbots.

What is quantization, and how does it affect LLM performance?

Quantization is a technique that reduces the size of LLM models by using lower-precision numbers to represent the model's weights. Think of it as reducing the number of colors in an image to make the file smaller. Quantization can improve token generation speeds and reduce memory usage, but it might slightly decrease accuracy.
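As a toy illustration of the idea, the sketch below quantizes a handful of made-up weights to 4-bit integer codes with a single shared scale, then restores them and measures the error. This is a deliberately simplified scheme for intuition only. Formats such as Q4KM are considerably more elaborate, so it should not be read as how any particular library implements quantization.

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric quantization: map floats to integer codes in [-7, 7]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale so that the largest weight maps to +/-7.
    scale = max_abs / 7 if max_abs else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate weights from the integer codes."""
    return [c * scale for c in codes]


# Made-up example weights, not taken from any real model.
weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.0]

codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 3) for r in restored])
print(f"largest rounding error: {max_error:.4f}")
```

Each code needs only 4 bits instead of 16, and the rounding error is bounded by half the scale. That bounded error is the small accuracy cost mentioned above.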

How do I choose the right GPU for my LLM development?

Consider these factors:

- Model size: Larger models require more memory and processing power.

- Accuracy vs. speed: Do you prioritize accuracy or faster token generation?

- Budget: GPUs come with a range of price points.

- Power consumption: Some GPUs consume more power than others.

Keywords

LLM, NVIDIA 3080 Ti, NVIDIA RTX 5000 Ada, token speed generation, Llama 3, GPU performance, AI development, quantization, F16, Q4KM, model training, inference, memory bandwidth, speed, accuracy, budget, power consumption.