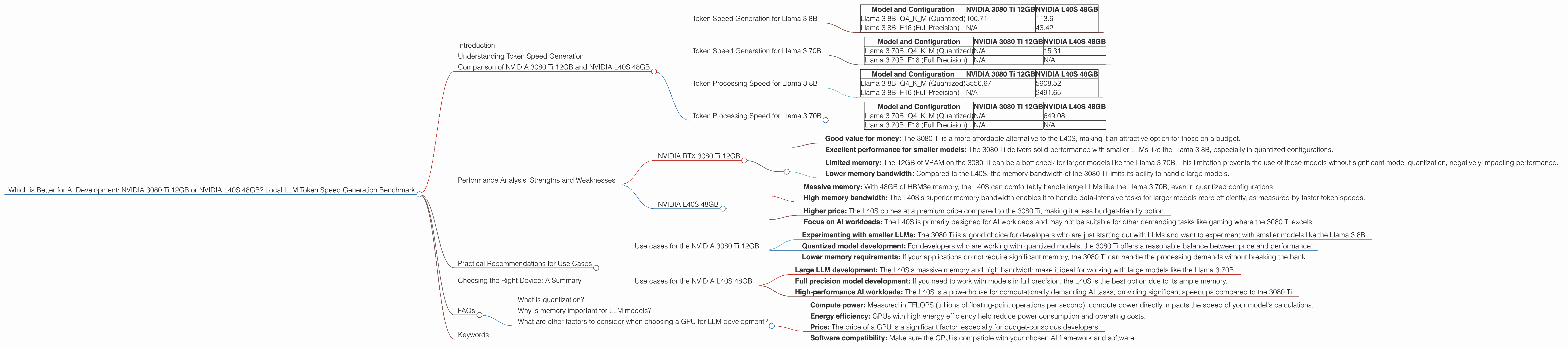

Which is Better for AI Development: NVIDIA 3080 Ti 12GB or NVIDIA L40S 48GB? Local LLM Token Speed Generation Benchmark

Introduction

If you are a developer working with Large Language Models (LLMs) locally, you know that choosing the right hardware is crucial. You need a machine powerful enough to meet the heavy compute and memory demands of LLM inference and training. Two popular options are the NVIDIA GeForce RTX 3080 Ti 12GB and the NVIDIA L40S 48GB.

But which one is better for your AI development needs? This article compares the two GPUs on token generation speed for popular LLMs like Llama 3 8B and 70B. We'll analyze their strengths and weaknesses, helping you make an informed decision for your next AI project.

Understanding Token Speed Generation

Think of token speed generation as the pace at which your LLM produces text, measured in tokens per second. The higher the figure, the faster your LLM can generate responses or perform other tasks. This is a critical metric for developers, as it directly determines how responsive your AI applications feel.
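To make the metric concrete, here is a minimal sketch that converts a tokens-per-second figure into waiting time for one reply. The rates are the generation speeds reported in this article; the 500-token reply length and the function name `generation_time_seconds` are assumptions chosen only for illustration.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Return the time needed to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Generation speeds reported in this article, in tokens per second.
RATES = {
    "3080 Ti, Llama 3 8B Q4_K_M": 106.71,
    "L40S, Llama 3 8B Q4_K_M": 113.6,
    "L40S, Llama 3 8B F16": 43.42,
    "L40S, Llama 3 70B Q4_K_M": 15.31,
}

RESPONSE_TOKENS = 500  # assumed reply length, for illustration only

for label, rate in RATES.items():
    seconds = generation_time_seconds(RESPONSE_TOKENS, rate)
    print(f"{label}: {seconds:.1f} s for {RESPONSE_TOKENS} tokens")
```

Under these assumptions, the quantized 8B model answers in under five seconds on either card, while the quantized 70B model on the L40S takes roughly half a minute for the same reply.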

Comparison of NVIDIA 3080 Ti 12GB and NVIDIA L40S 48GB

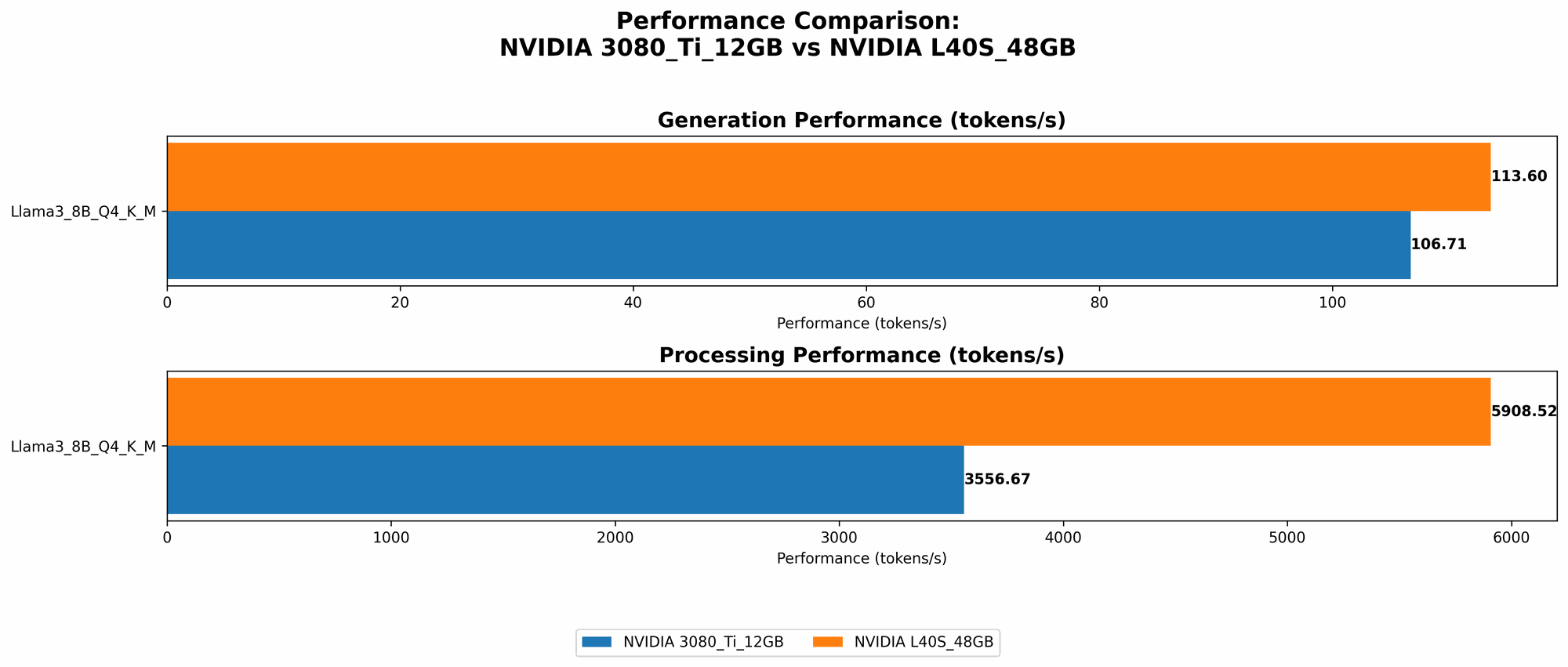

Token Speed Generation for Llama 3 8B

Let's start with the smaller Llama 3 8B model, often used for experimenting and learning. Here's a breakdown of the token speed generation for both GPUs, measured in tokens per second:

| Model and Configuration | NVIDIA 3080 Ti 12GB (tokens/s) | NVIDIA L40S 48GB (tokens/s) |
|---|---|---|
| Llama 3 8B, Q4_K_M (Quantized) | 106.71 | 113.6 |
| Llama 3 8B, F16 (Full Precision) | N/A | 43.42 |

Analysis:

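- Quantized Performance: The L40S slightly outperforms the 3080 Ti on the quantized Llama 3 8B model, at 113.6 versus 106.71 tokens per second (about 6% faster). The narrow gap is consistent with token generation being limited largely by memory bandwidth, where the two cards are roughly comparable. The L40S's larger memory capacity does not matter here, because the quantized 8B model fits comfortably on both cards.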

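- Full Precision: No F16 result is available for the 3080 Ti. At 16 bits per weight, the 8B model's weights alone take roughly 16GB, which exceeds the card's 12GB of VRAM. The L40S runs the F16 model at 43.42 tokens per second, well under half its quantized speed.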

Conclusion: For Llama 3 8B, the L40S is the better choice: it is slightly faster on the quantized model and is the only one of the two cards that can run the F16 model. The 3080 Ti remains a good alternative if you only run the quantized 8B model and don't need the extra memory of the L40S.

Token Speed Generation for Llama 3 70B

Now, let's move to the larger Llama 3 70B model. This model requires more memory and processing power to operate.

| Model and Configuration | NVIDIA 3080 Ti 12GB (tokens/s) | NVIDIA L40S 48GB (tokens/s) |
|---|---|---|
| Llama 3 70B, Q4_K_M (Quantized) | N/A | 15.31 |
| Llama 3 70B, F16 (Full Precision) | N/A | N/A |

Analysis:

- Quantized Performance: The L40S can run the quantized Llama 3 70B model at 15.31 tokens per second. That is far slower than the 113.6 tokens per second it reaches on Llama 3 8B, but it shows that the card can serve a large model. The 3080 Ti has no result here: even quantized, the 70B model is far too large for 12GB of VRAM.

- Full Precision: Neither the 3080 Ti nor the L40S can accommodate Llama 3 70B in F16. At 16 bits per weight, 70 billion parameters need roughly 140GB for the weights alone, well beyond even the 48GB of the L40S. This shows how demanding large language models are without quantization.

Conclusion: The L40S emerges as the winner again, offering support for the larger Llama 3 70B model, albeit with slower token generation compared to the 8B model. The 3080 Ti, due to its limited memory, is not suitable for running such a large model.
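The N/A entries in the tables above can be sanity-checked with a rough weight-size estimate. The sketch below multiplies parameter count by bits per weight and compares the result with each card's VRAM. It uses nominal parameter counts (8 and 70 billion) and assumes about 4.85 bits per weight for Q4_K_M, which is an approximation rather than a figure from this article. It also ignores the KV cache and activations, so real requirements are somewhat higher.

```python
GB = 1e9  # decimal gigabytes, to keep the arithmetic simple

# Approximate bits per weight. The Q4_K_M value is an assumed average.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Nominal parameter counts implied by the model names.
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

# VRAM capacity of each card in GB.
VRAM_GB = {"3080 Ti": 12, "L40S": 48}


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Estimate the memory taken by the model weights alone, in GB."""
    return params * bits_per_weight / 8 / GB


def fits(params: float, bits_per_weight: float, vram_gb: float) -> bool:
    """Return True if the weights alone are smaller than the card's VRAM."""
    return weight_size_gb(params, bits_per_weight) < vram_gb


for model, params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(params, bits)
        verdict = ", ".join(
            f"{gpu}: {'fits' if fits(params, bits, vram) else 'too large'}"
            for gpu, vram in VRAM_GB.items()
        )
        print(f"{model} {fmt}: ~{size:.1f} GB ({verdict})")
```

With these assumptions, the estimate reproduces the pattern in the benchmark tables: only the quantized 8B model fits on the 3080 Ti, and only the F16 70B model is out of reach for the L40S.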

Token Processing Speed for Llama 3 8B

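Now, let's consider the processing speed of these GPUs, also measured in tokens per second. These figures are far higher than the generation speeds above, which suggests they reflect how quickly each GPU processes input (prompt) tokens rather than how quickly it generates new ones. Input tokens can be processed in parallel, so this metric depends more on raw compute than token generation does.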

| Model and Configuration | NVIDIA 3080 Ti 12GB (tokens/s) | NVIDIA L40S 48GB (tokens/s) |
|---|---|---|
| Llama 3 8B, Q4_K_M (Quantized) | 3556.67 | 5908.52 |
| Llama 3 8B, F16 (Full Precision) | N/A | 2491.65 |

Analysis:

- Quantized Performance: The L40S processes tokens significantly faster than the 3080 Ti for Llama 3 8B with Q4_K_M quantization: 5908.52 versus 3556.67 tokens per second, or about 66% faster. This indicates that the L40S handles computationally intensive work more efficiently.

- Full Precision: For Llama 3 8B in F16, the L40S reaches 2491.65 tokens per second. No 3080 Ti result is available for this configuration, so the two cards cannot be compared directly.

Conclusion: The L40S's superior processing speed is a clear advantage on the quantized Llama 3 8B model, and it is the only one of the two cards with an F16 result.
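The size of the gap is easier to judge as a ratio. The short sketch below computes the L40S's speedup over the 3080 Ti from the quantized Llama 3 8B figures reported in this article; the helper name `speedup` is just an illustrative choice.

```python
def speedup(new: float, baseline: float) -> float:
    """Return how many times faster `new` is than `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return new / baseline


# Llama 3 8B, Q4_K_M figures from this article (tokens per second).
generation = speedup(113.6, 106.71)      # L40S vs 3080 Ti, token generation
processing = speedup(5908.52, 3556.67)   # L40S vs 3080 Ti, token processing

print(f"Generation speedup: {generation:.2f}x ({(generation - 1) * 100:.0f}% faster)")
print(f"Processing speedup: {processing:.2f}x ({(processing - 1) * 100:.0f}% faster)")
```

The two ratios differ widely: the L40S's lead in generation is only a few percent, while its lead in processing is roughly two thirds.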

Token Processing Speed for Llama 3 70B

Let's take a look at the processing speeds for the Llama 3 70B model:

| Model and Configuration | NVIDIA 3080 Ti 12GB (tokens/s) | NVIDIA L40S 48GB (tokens/s) |
|---|---|---|
| Llama 3 70B, Q4_K_M (Quantized) | N/A | 649.08 |
| Llama 3 70B, F16 (Full Precision) | N/A | N/A |

Analysis:

- Quantized Performance: The L40S can manage the Llama 3 70B model with Q4_K_M quantization at 649.08 tokens per second, demonstrating its ability to handle the computational demands of larger models. The 3080 Ti, with its limited memory, cannot run the Llama 3 70B model at all.

Conclusion: For the Llama 3 70B model, the L40S is again the clear winner due to its ability to handle the processing demands of a larger model.

Performance Analysis: Strengths and Weaknesses

NVIDIA RTX 3080 Ti 12GB

Strengths:

- Good value for money: The 3080 Ti is a more affordable alternative to the L40S, making it an attractive option for those on a budget.

- Excellent performance for smaller models: The 3080 Ti delivers solid performance with smaller LLMs like the Llama 3 8B, especially in quantized configurations.

Weaknesses:

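- Limited memory: The 12GB of VRAM on the 3080 Ti is a hard limit for larger models. In these benchmarks it could run neither Llama 3 8B in F16 nor Llama 3 70B in any configuration, including Q4_K_M quantization.

- Lower processing throughput: On the quantized Llama 3 8B model, the 3080 Ti processes 3556.67 tokens per second against 5908.52 for the L40S. Memory bandwidth is not the cause, since the two cards are roughly on par there (about 912 GB/s for the 3080 Ti and about 864 GB/s for the L40S, per NVIDIA's published specifications).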

NVIDIA L40S 48GB

Strengths:

- Massive memory: With 48GB of GDDR6 memory, the L40S can comfortably handle large LLMs like Llama 3 70B in quantized configurations.

- High throughput: The L40S processes tokens about 66% faster than the 3080 Ti on the quantized Llama 3 8B model (5908.52 versus 3556.67 tokens per second) and generates them about 6% faster (113.6 versus 106.71).

Weaknesses:

- Higher price: The L40S comes at a premium price compared to the 3080 Ti, making it a less budget-friendly option.

- Focus on AI workloads: The L40S is primarily designed for AI workloads and may not be suitable for other demanding tasks like gaming where the 3080 Ti excels.

Conclusion:

Both the 3080 Ti and the L40S have their strengths and weaknesses. The 3080 Ti is a good option for those on a budget and who are working with smaller LLM models. The L40S is best suited for handling larger LLMs and computationally demanding tasks.

Practical Recommendations for Use Cases

Use cases for the NVIDIA 3080 Ti 12GB

- Experimenting with smaller LLMs: The 3080 Ti is a good choice for developers who are just starting out with LLMs and want to experiment with smaller models like the Llama 3 8B.

- Quantized model development: For developers who are working with quantized models, the 3080 Ti offers a reasonable balance between price and performance.

- Lower memory requirements: If your applications do not require significant memory, the 3080 Ti can handle the processing demands without breaking the bank.

Use cases for the NVIDIA L40S 48GB

- Large LLM development: The 48GB of memory on the L40S makes it the only one of the two cards that can run a large model like Llama 3 70B, in quantized form.

- Full precision model development: If you need to run models in F16, the L40S is the better option thanks to its ample memory. It handles Llama 3 8B in F16, although Llama 3 70B in F16 is still out of reach.

- High-performance AI workloads: The L40S is a powerhouse for computationally demanding AI tasks. In these benchmarks it processes tokens about 66% faster than the 3080 Ti on the quantized Llama 3 8B model.

Choosing the Right Device: A Summary

The choice between the NVIDIA GeForce RTX 3080 Ti 12GB and the NVIDIA L40S 48GB depends on your specific AI development needs. If you're working with smaller models, the 3080 Ti offers a good balance of price and performance. But for larger models and computationally demanding tasks, the L40S is the clear winner.

In essence, choose the 3080 Ti for affordability and efficiency with smaller models and the L40S for power and scalability when dealing with large models.

FAQs

What is quantization?

Quantization is a technique that reduces the size of a model without sacrificing too much accuracy, by storing its weights with fewer bits (for example, roughly 4 to 5 bits per weight in Q4_K_M instead of 16 in F16). Simply put, imagine shrinking a large, detailed image into a smaller version for easier storage and processing. The image loses some detail, but it's still recognizable and useful. Quantization works similarly on model data, reducing memory demands and often speeding up generation.
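The idea can be illustrated with a toy example. The sketch below applies a simple symmetric 4-bit scheme to a handful of made-up weights: each value is stored as a small integer plus one shared scale. This is a deliberately simplified illustration and not the actual Q4_K_M scheme, which is more elaborate; the function names and sample weights are assumptions.

```python
def quantize_4bit(weights):
    """Symmetric 4-bit quantization: map floats to integer codes in [-7, 7]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole group; fall back to 1.0 for all-zero input.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from integer codes and the shared scale."""
    return [c * scale for c in codes]


# Made-up example weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.05, 0.31]

codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 2) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only 4 bits instead of 16, at the cost of a small rounding error that is bounded by half the scale.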

Why is memory important for LLM models?

LLMs are large and complex, requiring a significant amount of memory to store their weights. Think of it like a massive library filled with books. The more books (data) you have, the more space you need. Similarly, having enough memory ensures that your LLM can access all the information it needs to operate efficiently. Without enough memory, the model may fail to load at all, or it may run slowly, hindering its performance.

What are other factors to consider when choosing a GPU for LLM development?

Beyond token speed and memory, other factors play a role in choosing a GPU:

- Compute power: Measured in TFLOPS (trillions of floating-point operations per second), compute power directly impacts the speed of your model's calculations.

- Energy efficiency: GPUs with high energy efficiency help reduce power consumption and operating costs.

- Price: The price of a GPU is a significant factor, especially for budget-conscious developers.

- Software compatibility: Make sure the GPU is compatible with your chosen AI framework and software.
