Which is Better for AI Development: NVIDIA 3080 Ti 12GB or NVIDIA A100 SXM 80GB? Local LLM Token Speed Generation Benchmark

Introduction

In the world of artificial intelligence (AI), Large Language Models (LLMs) are revolutionizing how we interact with computers. LLM-powered products like ChatGPT and Bard have captured the public imagination, but what about running these models locally? This article looks at the performance of two popular GPUs, the NVIDIA 3080 Ti 12GB and the NVIDIA A100 SXM 80GB, when used to generate text with Llama 3, a powerful open-weight LLM.

We'll compare their token generation speed, focusing on Llama 3 8B, a model with 8 billion parameters that offers a good balance of output quality and computational cost.

Data and Methodology:

The data below comes from two sources:

- Performance of llama.cpp on various devices by ggerganov, a well-known contributor to the llama.cpp project.

- GPU Benchmarks on LLM Inference by XiongjieDai, who provides a comprehensive set of benchmarks for different LLMs.

The numbers below are tokens per second. Tokens are the basic units of text an LLM processes: usually whole words or pieces of words, along with punctuation and whitespace. This benchmark measures how quickly each GPU can generate tokens, which translates directly into the speed of text output.
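As a rough illustration of how a tokens-per-second figure is obtained, the sketch below divides a token count by wall-clock time. The `generate` callable is a hypothetical stand-in for whatever inference call you use; it is not part of llama.cpp or of either benchmark source.

```python
import time


def tokens_per_second(num_tokens: int, elapsed_seconds: float) -> float:
    """Convert a token count and a duration into a throughput figure."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


def time_generation(generate, num_tokens: int) -> float:
    """Time a hypothetical generate(num_tokens) callable and return tokens/second."""
    start = time.perf_counter()
    generate(num_tokens)  # placeholder for a real inference call
    elapsed = time.perf_counter() - start
    return tokens_per_second(num_tokens, elapsed)
```

For example, 512 tokens produced in 4 seconds works out to 128 tokens per second.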

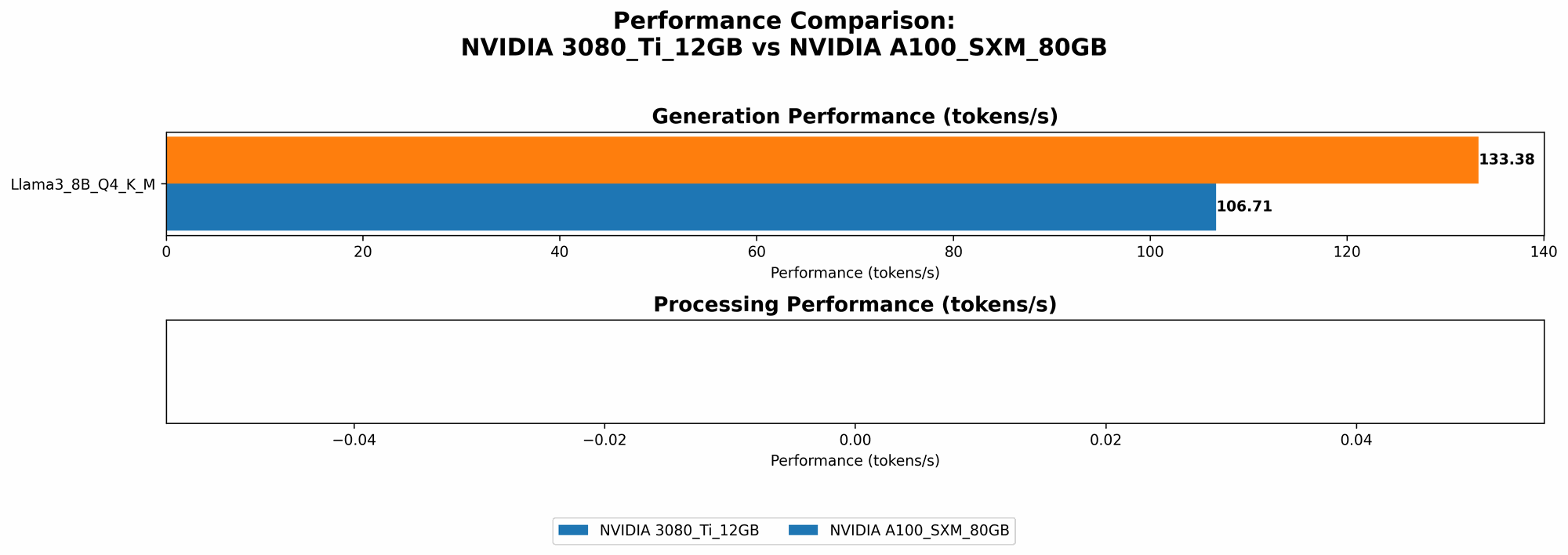

Comparison of the NVIDIA 3080 Ti 12GB and NVIDIA A100 SXM 80GB for Llama 3 8B

Token Speed Generation Performance:

| Device | Llama 3 8B Q4KM (tokens/second) | Llama 3 8B F16 (tokens/second) |
|---|---|---|
| NVIDIA 3080 Ti 12GB | 106.71 | N/A (no result) |
| NVIDIA A100 SXM 80GB | 133.38 | 53.18 |

- Q4KM: This is llama.cpp's Q4_K_M format, a quantization scheme that stores most model weights in roughly 4 bits each, sacrificing some accuracy for a much smaller memory footprint and faster generation. The "K" refers to llama.cpp's k-quant family of formats, and the "M" marks the medium variant of that family.

- F16: This stands for "half-precision floating-point", which stores each weight in 16 bits. It is not an integer quantization format; in memory use it sits between Q4KM and the full-precision 32-bit format (F32).
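To make the difference between the two formats concrete, here is a back-of-the-envelope estimate of weight storage. It is a sketch under stated assumptions: the parameter count is rounded to 8 billion, and the 4.8 bits per weight used for Q4KM is an approximate figure that allows for per-block scale data, not an exact specification.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS_8B = 8e9          # assumption: rounded parameter count for Llama 3 8B
BITS_F16 = 16            # half precision: 16 bits per weight
BITS_Q4KM_APPROX = 4.8   # assumption: approximate effective bits per weight

f16_gb = weight_memory_gb(PARAMS_8B, BITS_F16)
q4km_gb = weight_memory_gb(PARAMS_8B, BITS_Q4KM_APPROX)

print(f"F16 weights:  ~{f16_gb:.1f} GB")
print(f"Q4KM weights: ~{q4km_gb:.1f} GB")
```

These estimates cover weights only. The context (KV cache) and runtime buffers need additional memory on top.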

Key Findings:

- In the one configuration both GPUs completed, Q4KM, the A100 SXM 80GB is about 25% faster (133.38 vs. 106.71 tokens/second).

- Only the A100 SXM 80GB has an F16 result (53.18 tokens/second). The 3080 Ti has no F16 number, so the two cards cannot be compared at that precision.

- Remember that this is just one benchmark, and performance can vary depending on the specific LLM you're using and your code optimization.
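The relative speeds can be checked directly from the table. The sketch below uses only the numbers reported above and treats a missing result as `None`, so a comparison is refused rather than invented.

```python
from typing import Optional

# Tokens/second from the table above; None marks a missing result.
RESULTS = {
    "NVIDIA 3080 Ti 12GB": {"Q4KM": 106.71, "F16": None},
    "NVIDIA A100 SXM 80GB": {"Q4KM": 133.38, "F16": 53.18},
}


def speedup(fast: Optional[float], slow: Optional[float]) -> Optional[float]:
    """Return fast/slow, or None when either measurement is missing."""
    if fast is None or slow is None:
        return None
    return fast / slow


a100 = RESULTS["NVIDIA A100 SXM 80GB"]
ti = RESULTS["NVIDIA 3080 Ti 12GB"]

q4km_ratio = speedup(a100["Q4KM"], ti["Q4KM"])
f16_ratio = speedup(a100["F16"], ti["F16"])

print(f"Q4KM: A100 is {q4km_ratio:.2f}x the 3080 Ti")
print("F16: no comparison possible" if f16_ratio is None else f"F16: {f16_ratio:.2f}x")
```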

Analysis:

It's worth looking at why the A100 SXM 80GB outperforms the 3080 Ti at Q4KM, and why it is the only one of the two with an F16 result:

- Architecture: Both GPUs are built on NVIDIA's Ampere architecture, and both include tensor cores optimized for the matrix multiplications that dominate LLM workloads. The A100 is the data center part, designed specifically for high-performance computing, while the 3080 Ti is a consumer card.

- Memory: While the 3080 Ti has 12GB of GDDR6X VRAM, the A100 has 80GB of HBM2e memory, which also provides considerably higher memory bandwidth. Bandwidth matters because token generation has to read the model weights for every token. Capacity becomes crucial with larger LLMs like Llama 3 70B, where fitting the model and its context into memory is a major bottleneck.

- Quantization: The missing F16 number for the 3080 Ti is most likely a capacity limit rather than a speed result. At 16 bits per weight, an 8-billion-parameter model needs roughly 16GB for the weights alone, more than the card's 12GB of VRAM. On the A100 itself, Q4KM runs about 2.5 times faster than F16 (133.38 vs. 53.18 tokens/second), which shows how much quantization helps even when memory is plentiful.
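One common rule of thumb, which is not taken from the benchmark sources, is that single-stream token generation is limited by how fast the GPU can read the weights from memory. The sketch below computes that ceiling. The bandwidth figures are assumed from NVIDIA's published specifications, and the 4.8 GB model size is an approximation for Llama 3 8B at Q4KM; treat all three as assumptions.

```python
def bandwidth_bound_tps(bandwidth_gb_s: float, model_gb: float) -> float:
    """Upper bound on tokens/second if every weight is read once per token."""
    return bandwidth_gb_s / model_gb


# Assumption: peak memory bandwidth in GB/s from public spec sheets.
BANDWIDTH_GB_S = {
    "NVIDIA 3080 Ti 12GB": 912.0,
    "NVIDIA A100 SXM 80GB": 2039.0,
}
Q4KM_MODEL_GB = 4.8  # assumption: approximate Q4KM weight size for an 8B model

# Measured Q4KM tokens/second from the table above.
MEASURED_TPS = {
    "NVIDIA 3080 Ti 12GB": 106.71,
    "NVIDIA A100 SXM 80GB": 133.38,
}

bounds = {
    name: bandwidth_bound_tps(bw, Q4KM_MODEL_GB)
    for name, bw in BANDWIDTH_GB_S.items()
}

for name, bound in bounds.items():
    measured = MEASURED_TPS[name]
    print(f"{name}: bound ~{bound:.0f} tok/s, measured {measured} tok/s")
```

Measured throughput sits well below this ceiling on both cards, since real inference also spends time on kernel launches, KV cache reads, and other overhead. The bound is best read as a sanity check, not a prediction.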

Practical Considerations:

So, which GPU should you choose? It depends on your specific needs:

- Cost: The A100 SXM 80GB is significantly more expensive than the 3080 Ti. If you're budget-constrained, the 3080 Ti might be a better option, especially for smaller, quantized models.

- Model Size: For large LLMs like the "Llama 3 70B", the A100 SXM 80GB with its massive memory is the clear winner.

- Performance: If you prioritize speed, or need to run Llama 3 8B at F16 precision, the A100 SXM 80GB is your best bet.

The Trade-Off: Performance vs. Cost

Imagine you're building a Lego spaceship. You could use a simple set with limited parts – that's like using a 3080 Ti, which is good for smaller models but won't handle the larger ones. Or, you could use the ultimate Lego set with thousands of parts – that's like the A100 SXM 80GB, powerful but expensive. The choice depends on your Lego spaceship's complexity and, of course, your budget.

Limitations and Future Trends:

While this article focused on the NVIDIA 3080 Ti and A100 SXM 80GB, several other GPUs are available, including the NVIDIA A100 40GB, NVIDIA A40, and AMD MI250. The landscape is rapidly evolving, with new models and optimizations being released regularly.

Frequently Asked Questions (FAQ):

What are LLMs?

LLMs are a type of AI system trained on massive datasets of text. They can understand and generate human-like text, making them incredibly powerful for tasks like translation, text summarization, and even creative writing.

What is quantization?

Quantization is a technique used to reduce the memory footprint and increase the inference speed of LLMs. It involves converting the model's parameters from 16-bit or 32-bit floating-point numbers to more compact formats such as 4-bit integers, at the cost of some accuracy.
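The idea can be shown with a toy example. The sketch below applies simple symmetric 4-bit quantization with a single scale factor to a short list of weights. It is a deliberately simplified illustration, not the block-wise scheme llama.cpp uses for Q4KM.

```python
def quantize_4bit(values):
    """Map floats to integers in [-8, 7] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the 4-bit integers."""
    return [q * scale for q in quantized]


weights = [0.7, -0.42, 0.13, 0.0, -0.66]
q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)

# Each restored value differs from the original by at most half a step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("4-bit codes:", q)
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs 4 bits plus a share of the scale factor, and the rounding error is the accuracy cost mentioned above.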

Can I run LLMs on my laptop?

You can, but it will likely be slow unless the laptop has a capable discrete GPU with enough VRAM for the model. The A100 is a data center part and is not available in laptops. For smaller models, even a good CPU might be sufficient for experimentation.

How can I choose the right device for my LLM needs?

Consider the following:

- Model size: Larger models require GPUs with more memory.

- Performance requirements: Speed is crucial for real-time applications.

- Budget: Consider the cost of the GPU.
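The model-size criterion can be turned into a quick filter. The sketch below is a hypothetical helper, not an established tool: it estimates weight memory, applies an assumed 20% headroom for context and runtime buffers, and lists which of the two GPUs in this article have enough VRAM. The 4.8 bits per weight for Q4KM and the rounded parameter counts are approximations.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the weights alone, in gigabytes."""
    return num_params * bits_per_weight / 8 / 1e9


def gpus_that_fit(required_gb: float, gpus: dict, headroom: float = 1.2) -> list:
    """Return GPU names whose VRAM covers the model plus assumed headroom."""
    return [name for name, vram_gb in gpus.items() if required_gb * headroom <= vram_gb]


GPUS = {"NVIDIA 3080 Ti 12GB": 12, "NVIDIA A100 SXM 80GB": 80}

# Assumptions: rounded parameter counts and approximate bits per weight.
MODELS = {
    "Llama 3 8B Q4KM": weight_memory_gb(8e9, 4.8),
    "Llama 3 8B F16": weight_memory_gb(8e9, 16),
    "Llama 3 70B Q4KM": weight_memory_gb(70e9, 4.8),
    "Llama 3 70B F16": weight_memory_gb(70e9, 16),
}

for model, gb in MODELS.items():
    fits = gpus_that_fit(gb, GPUS) or ["neither GPU on its own"]
    print(f"{model} (~{gb:.0f} GB): {', '.join(fits)}")
```

Under these assumptions, a single A100 SXM 80GB holds Llama 3 70B only in quantized form; at F16 the 70B weights exceed even 80GB.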

Keywords

LLM, Large Language Model, GPU, NVIDIA 3080 Ti, NVIDIA A100 SXM 80GB, Llama 3, Token Speed, Text Generation, Quantization, F16, Q4KM, Performance, AI Development, Benchmark, Inference, Cost, Memory, Architecture