Which is Better for AI Development: NVIDIA 3080 10GB or NVIDIA A100 PCIe 80GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is booming, and developers are constantly seeking ways to train and run these models efficiently. Two popular options for local LLM development are the NVIDIA GeForce RTX 3080 10GB and the NVIDIA A100 PCIe 80GB. Choosing the right GPU can significantly impact your LLM development workflow, determining how fast and smoothly your models can process text and generate responses.

This article compares the two GPUs on token generation speed for local LLM development. We'll walk through the benchmarks, analyze the results, and discuss the strengths and weaknesses of each GPU to help you decide which one suits your needs. Imagine building a skyscraper: would you reach for a regular hammer or a specialized crane? The same applies to LLMs, where the right tools make all the difference.

NVIDIA GeForce RTX 3080 10GB vs. NVIDIA A100 PCIe 80GB: A Token Speed Showdown

Comparing the Contenders

The NVIDIA GeForce RTX 3080 10GB is a high-end consumer gaming GPU from the Ampere generation, with 10GB of GDDR6X memory. It's a popular choice for gamers and some developers because it offers strong performance for its price.

On the other hand, the NVIDIA A100 PCIe 80GB is a data center GPU designed for demanding workloads like deep learning and scientific computing. It carries 80GB of HBM2e memory, which is crucial for holding large LLMs. Both cards are Ampere designs with Tensor Cores for accelerated matrix computations, but the A100 has more of them along with far higher memory bandwidth.

Imagine the 3080 as a fast sprinter for short bursts of speed – great for smaller tasks. The A100 is like a marathon runner, designed for sustained performance and handling larger, more complex activities.

Benchmark Results: Llama 3 Model Performance

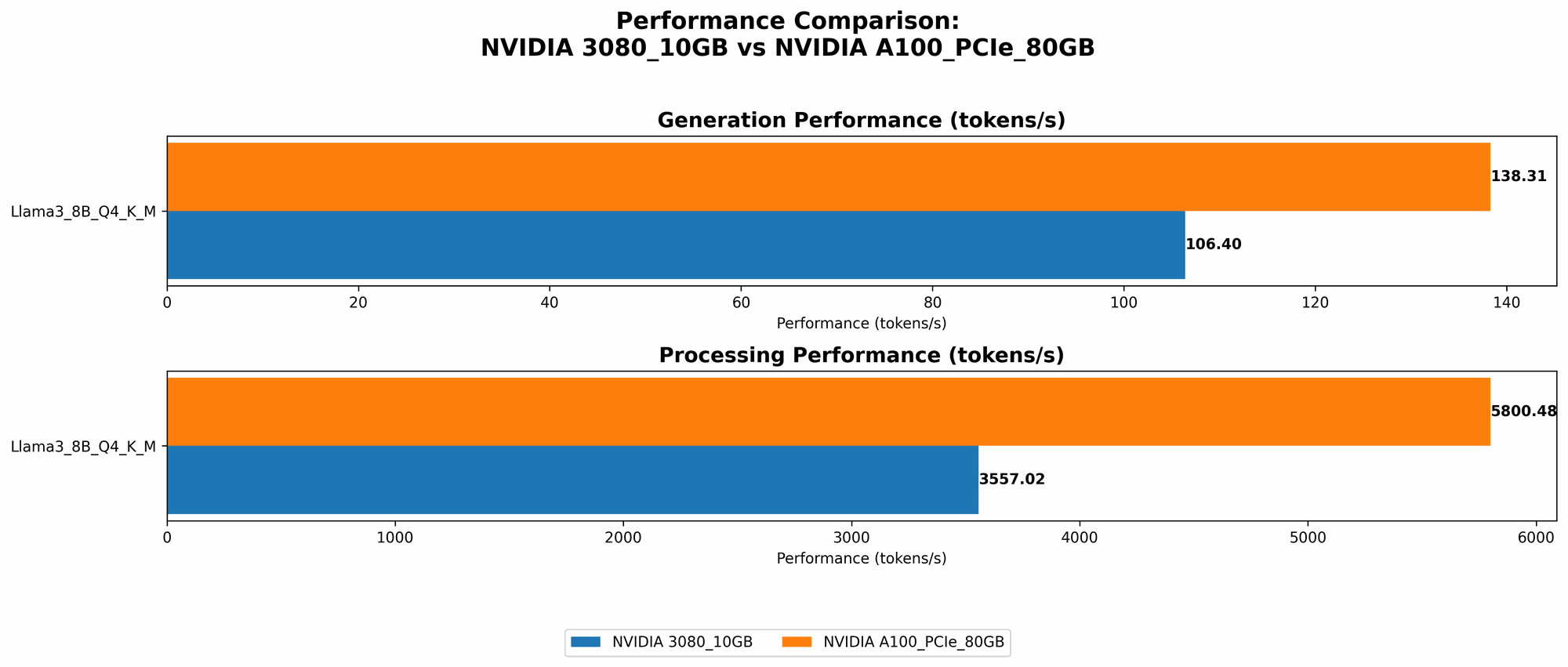

To compare the two GPUs, we benchmarked them on Llama 3 in its 8B and 70B variants, each in two formats: Q4KM (the 4-bit quantization written Q4_K_M in llama.cpp's GGUF naming) and F16 (unquantized 16-bit floating point). The results are in tokens per second (tokens/s). "Generation" is the rate at which new output tokens are produced, and "Processing" is the rate at which the input prompt is ingested.
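To make tokens/s concrete, the two rates can be combined into a rough response-time estimate. The sketch below is illustrative only: the function name, the 1024-token prompt, and the 256-token reply are assumptions chosen for the example, and the throughput figures are the Llama 3 8B Q4KM numbers from the table in this article. It ignores model load time and any per-request overhead.

```python
def response_time_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough latency: time to ingest the prompt plus time to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# Llama 3 8B Q4KM throughput (tokens/s) taken from the benchmark table.
GPUS = {
    "RTX 3080 10GB": {"processing": 3557.02, "generation": 106.4},
    "A100 PCIe 80GB": {"processing": 5800.48, "generation": 138.31},
}

# Hypothetical workload: a 1024-token prompt and a 256-token reply.
latencies = {
    name: response_time_s(1024, 256, tps["processing"], tps["generation"])
    for name, tps in GPUS.items()
}

for name, seconds in latencies.items():
    print(f"{name}: ~{seconds:.2f} s per response")
```

For a short chat-style exchange like this one, generation time dominates, so the generation column is usually the number that matters most for perceived responsiveness.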

Llama 3 8B Model

| GPU | Llama 3 8B Q4KM Generation (tokens/s) | Llama 3 8B F16 Generation (tokens/s) | Llama 3 8B Q4KM Processing (tokens/s) | Llama 3 8B F16 Processing (tokens/s) |
|---|---|---|---|---|
| NVIDIA 3080 10GB | 106.4 | N/A | 3557.02 | N/A |
| NVIDIA A100 PCIe 80GB | 138.31 | 54.56 | 5800.48 | 7504.24 |

Llama 3 70B Model

| GPU | Llama 3 70B Q4KM Generation (tokens/s) | Llama 3 70B F16 Generation (tokens/s) | Llama 3 70B Q4KM Processing (tokens/s) | Llama 3 70B F16 Processing (tokens/s) |
|---|---|---|---|---|
| NVIDIA 3080 10GB | N/A | N/A | N/A | N/A |
| NVIDIA A100 PCIe 80GB | 22.11 | N/A | 726.65 | N/A |

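Note: N/A marks configurations with no benchmark data. That covers the 3080 10GB with Llama 3 8B F16 and with both 70B variants, and the A100 with Llama 3 70B F16. These are most likely cases where the model weights do not fit in the GPU's memory.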
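The pattern of N/A entries is consistent with a simple weights-only memory estimate. The sketch below rests on assumptions that are not from the benchmark itself: nominal parameter counts of 8 and 70 billion, roughly 4.85 bits per weight for Q4KM (an approximate figure for llama.cpp's Q4_K_M), and 16 bits per weight for F16. It ignores the KV cache, activations, and runtime overhead, so real requirements are somewhat higher.

```python
GIB = 1024 ** 3

# Assumptions: nominal parameter counts and approximate bits per weight.
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
BITS_PER_WEIGHT = {"Q4KM": 4.85, "F16": 16.0}
GPUS_GIB = {"RTX 3080 10GB": 10, "A100 PCIe 80GB": 80}

def weight_size_gib(params, bits_per_weight):
    """Size of the model weights alone, in GiB."""
    return params * bits_per_weight / 8 / GIB

def fits(params, bits_per_weight, vram_gib):
    """True if the weights alone are smaller than the card's memory."""
    return weight_size_gib(params, bits_per_weight) < vram_gib

for model, params in MODELS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gib(params, bits)
        verdict = ", ".join(
            f"{gpu}: {'fits' if fits(params, bits, vram) else 'too large'}"
            for gpu, vram in GPUS_GIB.items()
        )
        print(f"{model} {fmt}: ~{size:.1f} GiB of weights ({verdict})")
```

Under these assumptions, the only configuration that fits on the 3080 is Llama 3 8B in Q4KM, and the only one that does not fit on the A100 is Llama 3 70B in F16, which matches the cells that have data.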

Performance Analysis: Unveiling the Champions

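The benchmark results reveal a clear winner: the NVIDIA A100 PCIe 80GB leads in every category where both GPUs produced a result (Llama 3 8B Q4KM generation and processing), and it is the only one of the two with results for Llama 3 8B F16 and Llama 3 70B Q4KM. This lead stems from several key factors: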

- Massive Memory: The A100's 80GB HBM2e memory is a game changer, allowing it to handle large models and datasets with ease, whereas the 3080's 10GB memory quickly becomes a bottleneck for larger models.

- Tensor Cores and Bandwidth: Both GPUs are Ampere designs with Tensor Cores, but the A100 has more of them and much higher memory bandwidth from its HBM2e, which helps explain its lead in both prompt processing and generation.

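- Precision Flexibility: The A100 runs Llama 3 8B in both Q4KM and unquantized F16. F16 generation is slower (54.56 vs. 138.31 tokens/s), likely because more bytes must be read from memory for each token, while F16 prompt processing is faster (7504.24 vs. 5800.48 tokens/s).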

The A100, despite its higher price tag, is the stronger choice for larger, more complex LLMs: it is faster wherever both cards were measured, and it is the only one of the two that produced results for the 70B model.
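The size of the A100's lead can be read directly off the one configuration both cards completed. The short sketch below computes the ratios from the Llama 3 8B Q4KM figures in the table; the dictionary layout and function name are just illustrative choices.

```python
# Tokens/s for Llama 3 8B Q4KM, copied from the benchmark table.
RESULTS = {
    "generation": {"RTX 3080 10GB": 106.4, "A100 PCIe 80GB": 138.31},
    "processing": {"RTX 3080 10GB": 3557.02, "A100 PCIe 80GB": 5800.48},
}

def speedup(metric):
    """A100 throughput divided by 3080 throughput for the given metric."""
    row = RESULTS[metric]
    return row["A100 PCIe 80GB"] / row["RTX 3080 10GB"]

for metric in RESULTS:
    print(f"{metric}: A100 is {speedup(metric):.2f}x the 3080")
```

The ratios come out to roughly 1.3x for generation and 1.6x for prompt processing, so on a model that fits both cards the gap is real but far smaller than the gap in memory capacity.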

Breaking Down the Results: Strengths and Weaknesses

NVIDIA GeForce RTX 3080 10GB: The Budget-Friendly Option

Strengths:

- Good Performance for Smaller Models: The 3080 generates 106.4 tokens/s with Llama 3 8B in Q4KM, about 77% of the A100's speed on the same model.

- Cost-Effective: It costs far less than an A100, which makes it a good choice for developers on a budget.

- Widely Available: It's readily available in the market, making it easy to acquire.

Weaknesses:

- Memory Limitations: The 10GB memory becomes restrictive for larger models like Llama 3 70B.

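- Slower Prompt Processing: It processes 3557.02 tokens/s on Llama 3 8B Q4KM against the A100's 5800.48, about 61% of the A100's rate. No comparison is available for larger models, since none of them produced results on the 3080.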

NVIDIA A100 PCIe 80GB: The LLM Heavyweight Champion

Strengths:

- Exceptional Performance: Delivers strong results with both Llama 3 8B and 70B models, outperforming the 3080 in every category where both were measured.

- Massive Memory Capacity: Its 80GB memory easily accommodates large models, making it a true powerhouse for LLM development.

- Tensor Core Acceleration: Its Tensor Core architecture provides remarkable acceleration for deep learning tasks.

Weaknesses:

- High Price: This is the significant drawback, making it a less appealing choice if budget is a major constraint.

- Power and Cooling: The A100 PCIe is a data center card that draws up to 300 W and is passively cooled, so it needs a chassis with strong directed airflow as well as an adequate PSU.

Practical Recommendations for Specific Use Cases

For the Budget-Conscious Developer: The 3080

If you're a developer working with smaller models and your budget is tight, the RTX 3080 10GB is a good choice. It offers a balance of performance and affordability, making it a viable option for entry-level LLM researchers and hobbyists. However, be prepared for limitations and potential bottlenecks with larger models.

For the Serious LLM Developer: The A100

If you're working with large LLMs like Llama 3 70B or plan to explore even more advanced models, the A100 PCIe 80GB is the clear winner. Its impressive performance and massive memory capacity make it a powerful tool for serious AI development.

Conclusion: Your LLM Journey Starts Here

Choosing between the NVIDIA 3080 10GB and the NVIDIA A100 PCIe 80GB is a crucial step in your LLM journey. The 3080 is a cost-effective option for smaller models, while the A100 is the ultimate powerhouse for tackling even the heaviest LLM workloads.

Ultimately, the best choice depends on your specific needs and budget. Carefully consider your model size, performance requirements, and financial constraints to make an informed decision.

FAQ: Your LLM Development Questions Answered

Q: What is quantization, and how does it affect model performance?

A: Quantization is a technique that shrinks an LLM by storing its weights as low-precision integers instead of high-precision floating-point numbers. This cuts memory requirements and usually speeds up generation, at the cost of some accuracy. Q4KM is a 4-bit quantization format, while F16 is unquantized 16-bit floating point and serves as the higher-precision baseline in these benchmarks.
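As a minimal illustration of the idea, the sketch below applies simple symmetric "absmax" quantization to one small block of weights. This is a deliberately simplified scheme and not the actual Q4KM algorithm, which is more elaborate; the function names and the sample weights are made up for the example.

```python
def quantize_block(values, bits=4):
    """Map a block of floats to signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants

def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]

# Hypothetical weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized integers:", quants)
print(f"scale = {scale:.4f}, max round-trip error = {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the per-block scale, and the round-trip error stays within half a quantization step. That trade of a small, bounded error for a much smaller footprint is what lets a 4-bit model fit where its F16 version does not.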

Q: What are the limitations of using a GPU like the 3080 for large models like Llama 3 70B?

A: The 3080's 10GB of memory is the bottleneck. Even at 4-bit precision, a 70B model's weights run to tens of gigabytes, so they cannot fit on the card. You would have to offload most of the model to system RAM and the CPU, which slows generation sharply, or you may hit out-of-memory errors.

Q: What factors should I consider before buying an A100?

A: Consider your budget, your power supply, and above all cooling. The A100 PCIe is passively cooled and designed for server chassis airflow, so running it in a desktop case usually means adding directed airflow of your own.
