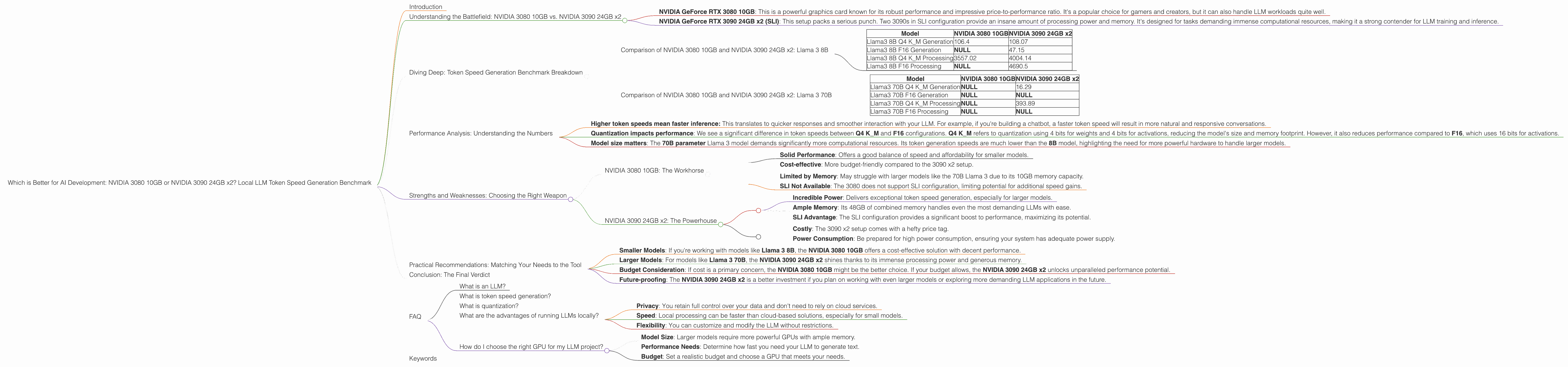

Which is Better for AI Development: NVIDIA 3080 10GB or NVIDIA 3090 24GB x2? Local LLM Token Generation Speed Benchmark

Introduction

Welcome to the thrilling world of running LLMs locally! This article looks at what it takes to run large language models (LLMs) directly on your own computer. We'll be comparing two popular NVIDIA setups: a single NVIDIA GeForce RTX 3080 10GB and two NVIDIA GeForce RTX 3090 24GB cards in a dual-GPU configuration. The benchmark measures inference (generating text from an existing model), not training.

Choosing the right hardware is crucial for achieving good performance when working with LLMs. This benchmark will help you decide which GPU setup is best suited to your needs based on token generation speed, an essential metric for evaluating LLM inference performance. We'll be focusing on the Llama 3 model, specifically the 8B and 70B parameter variants.

Buckle up, because we're about to embark on a journey of comparing these powerful graphics cards, analyzing their strengths and weaknesses, and ultimately, revealing the champion when it comes to local LLM token speed generation!

Understanding the Battlefield: NVIDIA 3080 10GB vs. NVIDIA 3090 24GB x2

Before diving into the token speed generation benchmark, let's briefly understand the contenders:

NVIDIA GeForce RTX 3080 10GB: This is a powerful graphics card known for its strong price-to-performance ratio. It's a popular choice for gamers and creators, and it can also handle LLM inference well as long as the model fits in its 10GB of memory.

NVIDIA GeForce RTX 3090 24GB x2 (dual GPU): This setup packs a serious punch. Two 3090s give you 48GB of GPU memory in total, split across the two cards, along with far more compute than a single 3080. That makes it a strong contender for running larger models locally.

Diving Deep: Token Speed Generation Benchmark Breakdown

To understand which GPU setup reigns supreme, we need to look at token throughput: how many tokens per second each setup can handle. The tables below report two kinds of rows. "Generation" rows show how quickly new tokens are produced, which directly determines how fast text appears. "Processing" rows show how quickly input tokens are processed, which we take to mean prompt processing before generation starts.

We're using a dataset compiled from tests conducted on various devices. All numbers are in tokens per second. Some configurations have no result in the dataset; these are marked NULL in the tables and called out in the analysis below.

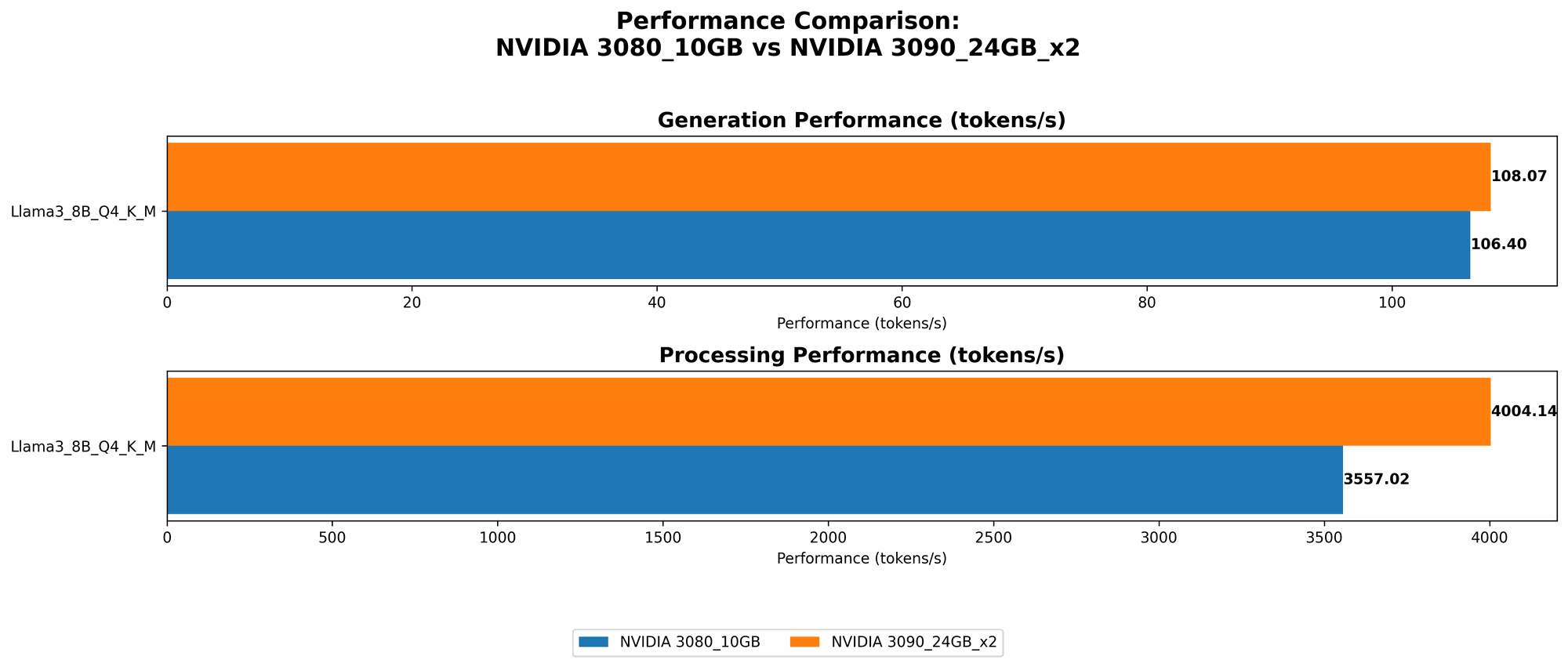

Comparison of NVIDIA 3080 10GB and NVIDIA 3090 24GB x2: Llama 3 8B

| Llama 3 8B configuration (tokens/second) | NVIDIA 3080 10GB | NVIDIA 3090 24GB x2 |
|---|---|---|
| Q4_K_M Generation | 106.4 | 108.07 |
| F16 Generation | NULL | 47.15 |
| Q4_K_M Processing | 3557.02 | 4004.14 |
| F16 Processing | NULL | 4690.5 |

Key Performance Insights:

- The NVIDIA 3090 24GB x2 edges out the NVIDIA 3080 10GB only slightly in Llama 3 8B Q4_K_M Generation: 108.07 versus 106.4 tokens per second, a gap of about 1.6%. This difference is negligible and is unlikely to be noticeable in real-world use.

- For F16 Generation, only the NVIDIA 3090 24GB x2 has a result (47.15 tokens per second). There is no data for the NVIDIA 3080 10GB in this configuration, so no direct speed comparison is possible.

- In Q4_K_M Processing, the NVIDIA 3090 24GB x2 outperforms the NVIDIA 3080 10GB by about 12.6% (4004.14 versus 3557.02 tokens per second). F16 Processing again has data only for the 3090 x2 setup.
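To make the size of these gaps concrete, here is a minimal Python sketch that computes the relative difference between the two setups from the Llama 3 8B table. The function and variable names are our own, chosen for illustration, and missing entries are represented as `None`.

```python
# Throughput figures (tokens per second) copied from the Llama 3 8B table.
# Each tuple is (3080 10GB, 3090 24GB x2); None marks a missing result.
RESULTS = {
    "Q4_K_M generation": (106.4, 108.07),
    "F16 generation": (None, 47.15),
    "Q4_K_M processing": (3557.02, 4004.14),
    "F16 processing": (None, 4690.5),
}


def percent_gain(baseline, candidate):
    """Return how much faster candidate is than baseline, in percent."""
    if baseline is None or candidate is None:
        return None  # cannot compare against a missing measurement
    return (candidate - baseline) / baseline * 100.0


def summarize(results):
    """Map each workload to the 3090 x2 gain over the 3080, or None."""
    return {name: percent_gain(a, b) for name, (a, b) in results.items()}


if __name__ == "__main__":
    for name, gain in summarize(RESULTS).items():
        label = "no 3080 data" if gain is None else f"{gain:+.1f}%"
        print(f"{name}: {label}")
```

Only the two Q4_K_M rows produce a number (about +1.6% for generation and +12.6% for processing). The F16 rows cannot be compared because the 3080 has no result for them.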

Practical Implications:

For Llama 3 8B with Q4_K_M quantization, the NVIDIA 3090 24GB x2 is only slightly faster, and the NVIDIA 3080 10GB offers a great balance of power and affordability. The 3090 24GB x2 is worth considering mainly if you want to run the 8B model at F16 precision, where it is the only setup in this comparison with results, or if you need the faster Processing speed.

Comparison of NVIDIA 3080 10GB and NVIDIA 3090 24GB x2: Llama 3 70B

| Llama 3 70B configuration (tokens/second) | NVIDIA 3080 10GB | NVIDIA 3090 24GB x2 |
|---|---|---|
| Q4_K_M Generation | NULL | 16.29 |
| F16 Generation | NULL | NULL |
| Q4_K_M Processing | NULL | 393.89 |
| F16 Processing | NULL | NULL |

Key Performance Insights:

- We only have data for the NVIDIA 3090 24GB x2 for the Llama 3 70B model. The NVIDIA 3080 10GB has no results for this model. The dataset doesn't state why, but the most likely explanation is that a 70B model, even when quantized to 4 bits, is far too large for 10GB of GPU memory.

- The NVIDIA 3090 24GB x2 runs the 70B model with Q4_K_M quantization at 16.29 tokens per second for Generation and 393.89 tokens per second for Processing. It has no F16 results for 70B either.

Practical Implications:

The NVIDIA 3090 24GB x2 emerges as the clear winner when working with larger models like Llama 3 70B, because it is the only one of the two setups with any 70B results. Keep this in mind if you're planning projects that require large models. The NVIDIA 3080 10GB, with its 10GB of memory, is not a realistic option for models of this size, and even the 3090 x2 setup relies on 4-bit quantization here.
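A quick back-of-the-envelope estimate helps explain why some configurations have no results. The sketch below is our own rough approximation, not part of the benchmark. It assumes the weights take parameters times bits per weight divided by 8 bytes, that Q4_K_M averages about 4.8 bits per weight, and that 2GB is set aside for everything else (context cache, buffers). All three are assumptions, and the real figures vary by model and runtime.

```python
# Assumed average bits per weight for each format (illustrative values).
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "F16": 16.0}


def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate size of the model weights alone, in GB (1e9 bytes)."""
    return params_billions * bits_per_weight / 8.0


def fits(params_billions, fmt, vram_gb, overhead_gb=2.0):
    """Rough check: weights plus a fixed allowance must fit in GPU memory."""
    needed = weight_memory_gb(params_billions, BITS_PER_WEIGHT[fmt]) + overhead_gb
    return needed <= vram_gb


if __name__ == "__main__":
    setups = {"3080 10GB": 10.0, "3090 24GB x2": 48.0}
    for params in (8, 70):
        for fmt in ("Q4_K_M", "F16"):
            size = weight_memory_gb(params, BITS_PER_WEIGHT[fmt])
            verdicts = ", ".join(
                f"{name}: {'fits' if fits(params, fmt, vram) else 'too large'}"
                for name, vram in setups.items()
            )
            print(f"Llama 3 {params}B {fmt} (~{size:.0f} GB): {verdicts}")
```

Under these assumptions, the configurations flagged as too large line up with the NULL entries in both tables. That is consistent with memory being the limiting factor, though the dataset itself does not confirm the cause.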

Performance Analysis: Understanding the Numbers

Let's break down the token speed generation numbers and understand what they mean for your LLM projects:

Higher token speeds mean faster inference: This translates to quicker responses and smoother interaction with your LLM. For example, if you're building a chatbot, a faster token speed will result in more natural and responsive conversations.

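Quantization impacts performance: We see a significant difference in token speeds between the Q4_K_M and F16 configurations. Q4_K_M is a quantization format that stores the model's weights at roughly 4 bits each instead of 16, which shrinks the model's size and memory footprint. In this benchmark the effect on speed goes both ways. On the 3090 x2 with Llama 3 8B, Q4_K_M generates tokens more than twice as fast as F16 (108.07 versus 47.15 tokens per second), while F16 is somewhat faster at Processing (4690.5 versus 4004.14). The trade-off is that quantization can slightly reduce the quality of the model's output.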

Model size matters: The 70B parameter Llama 3 model demands significantly more computational resources. On the same 3090 x2 setup with Q4_K_M, it generates 16.29 tokens per second compared to 108.07 for the 8B model, roughly 6.6 times slower, highlighting the need for more powerful hardware to handle larger models.
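To see what these throughput numbers mean for a user waiting on a reply, here is a simple estimate of end-to-end response time. It assumes that the Processing figure applies to the prompt and the Generation figure applies to the output, and that the two phases simply add up. The prompt and reply lengths are made-up example values.

```python
def response_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate total time: read the prompt, then generate the reply."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical chat turn: 1000-token prompt, 500-token reply.
    prompt, reply = 1000, 500

    # 3090 24GB x2 figures from the tables (tokens per second).
    small = response_seconds(prompt, reply, 4004.14, 108.07)  # Llama 3 8B Q4_K_M
    large = response_seconds(prompt, reply, 393.89, 16.29)    # Llama 3 70B Q4_K_M

    print(f"8B Q4_K_M:  {small:.1f} s")
    print(f"70B Q4_K_M: {large:.1f} s")
```

With these example lengths, the 8B model answers in roughly 5 seconds and the 70B model in roughly 33 seconds on the same hardware. In both cases generation, not prompt processing, accounts for most of the wait.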

Strengths and Weaknesses: Choosing the Right Weapon

NVIDIA 3080 10GB: The Workhorse

Strengths:

- Solid Performance: Offers a good balance of speed and affordability for smaller models.

- Cost-effective: More budget-friendly compared to the 3090 x2 setup.

Weaknesses:

- Limited by Memory: May struggle with larger models like the 70B Llama 3 due to its 10GB memory capacity.

- SLI Not Available: The 3080 does not support SLI or NVLink bridging, which limits your options for scaling up with a second card.

NVIDIA 3090 24GB x2: The Powerhouse

Strengths:

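- Runs Larger Models: It is the only setup in this comparison with results for Llama 3 70B and for F16 precision on Llama 3 8B.

- Ample Memory: Its 48GB of combined memory leaves room for much larger models, although Llama 3 70B at F16 has no result even on this setup.

- Dual-GPU Advantage: The second card's main benefit is capacity rather than raw speed. On Llama 3 8B Q4_K_M, Generation is only about 1.6% faster than on the single 3080, while Processing is about 12.6% faster.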

Weaknesses:

- Costly: The 3090 x2 setup comes with a hefty price tag.

- Power Consumption: Two 3090s draw a lot of power, so make sure your system has an adequate power supply.

Practical Recommendations: Matching Your Needs to the Tool

- Smaller Models: If you're working with models like Llama 3 8B, the NVIDIA 3080 10GB offers a cost-effective solution with decent performance.

- Larger Models: For models like Llama 3 70B, the NVIDIA 3090 24GB x2 shines thanks to its immense processing power and generous memory.

- Budget Consideration: If cost is a primary concern, the NVIDIA 3080 10GB might be the better choice. If your budget allows, the NVIDIA 3090 24GB x2 unlocks unparalleled performance potential.

- Future-proofing: The NVIDIA 3090 24GB x2 is a better investment if you plan on working with even larger models or exploring more demanding LLM applications in the future.

Conclusion: The Final Verdict

Both the NVIDIA 3080 10GB and NVIDIA 3090 24GB x2 are powerful GPUs capable of running LLMs locally. The choice between these two ultimately depends on your specific needs, budget, and future plans.

If you're working with smaller models and cost is a concern, the NVIDIA 3080 10GB is a solid choice. However, if you're venturing into the realm of larger models or prioritizing maximum performance, the NVIDIA 3090 24GB x2 with its raw power and ample memory is the clear champion.

Remember, choosing the right hardware is crucial for a smooth and enjoyable experience in the exciting world of local LLM development!

FAQ

What is an LLM?

LLMs, or large language models, are a type of artificial intelligence designed to understand and generate human-like text. They are trained on massive datasets of text and code, enabling them to perform tasks like text summarization, translation, and even creative writing.

What is token speed generation?

Token speed generation refers to how many tokens (individual units of language) a GPU can process per second. It's a key metric for evaluating the performance of LLMs, as it directly impacts the speed at which they generate text.

What is quantization?

Quantization is a technique used to compress LLMs. It reduces the number of bits used to represent the model's weights (and, in some schemes, its activations), resulting in a smaller model that requires less memory. The trade-off is that quantization can reduce the accuracy of the model's output, typically more so as the bit width shrinks.
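As a toy illustration of the idea, the sketch below quantizes a small block of weights to signed 4-bit integers with a single shared scale, then restores them. This is a deliberately simplified round-to-nearest scheme written for this article. It is not the actual Q4_K_M format, which is more elaborate, and the function names are our own.

```python
def quantize_block(weights, bits=4):
    """Symmetric round-to-nearest quantization of one block of floats."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit values
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    # Each weight becomes a small integer code; clamp guards against rounding drift.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate weights from integer codes and the shared scale."""
    return [c * scale for c in codes]


def max_abs_error(original, restored):
    """Largest absolute difference between original and restored weights."""
    return max(abs(a - b) for a, b in zip(original, restored))


if __name__ == "__main__":
    weights = [0.7, -0.33, 0.12, 0.0]
    codes, scale = quantize_block(weights)
    restored = dequantize_block(codes, scale)
    print("codes:   ", codes)
    print("restored:", [round(r, 3) for r in restored])
    print("max error:", round(max_abs_error(weights, restored), 3))
```

Each weight is stored as a 4-bit code plus one scale per block, which is where the memory savings come from. The restored values are close to the originals but not identical, and that small error is the accuracy cost of quantization.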

What are the advantages of running LLMs locally?

Running LLMs locally offers several benefits:

- Privacy: You retain full control over your data and don't need to rely on cloud services.

- Speed: Local processing avoids network round trips and can be faster than cloud-based solutions, especially for small models.

- Flexibility: You can customize and modify the LLM without restrictions.

How do I choose the right GPU for my LLM project?

Consider the following factors when choosing a GPU:

- Model Size: Larger models require more powerful GPUs with ample memory.

- Performance Needs: Determine how fast you need your LLM to generate text.

- Budget: Set a realistic budget and choose a GPU that meets your needs.

Keywords

Large Language Models, LLM, Token Speed Generation, NVIDIA GeForce RTX 3080, NVIDIA GeForce RTX 3090, SLI, Llama 3, 8B, 70B, Quantization, Q4 K_M, F16, GPU, Performance Comparison, AI Development, Local Inference, Text Generation, Deep Learning, Machine Learning.