Which is Better for AI Development: NVIDIA 3080 10GB or NVIDIA 3080 Ti 12GB? Local LLM Token Speed Generation Benchmark

Introduction: Navigating the World of LLMs and GPUs

The world of Large Language Models (LLMs) is exploding, offering incredible possibilities for AI development. But these models, while powerful, are also very demanding on computing resources. A key decision for running LLMs efficiently is the choice of graphics processing unit (GPU).

This article dives into the performance of two popular GPUs, the NVIDIA 3080 10GB and the NVIDIA 3080 Ti 12GB, when running Llama models locally. We'll explore their capabilities, compare their strengths and weaknesses, and guide you towards the best option for your specific AI development needs.

Comparison of NVIDIA 3080 10GB and 3080 Ti 12GB for Local LLM Token Speed Generation

To understand the differences in performance, let's analyze the key factors:

Token Speed Generation for Llama 3 8B Model

What are tokens and token speed?

Tokens are the basic units a language model reads and writes: whole words, parts of words, or punctuation. Token speed (also known as "throughput") is the number of tokens a GPU can process or generate per second. For generation, it's how fast your AI can write out its response, one token at a time.
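As a minimal sketch of how a tokens-per-second figure is derived, the snippet below divides a token count by the elapsed time. The function name and the sample numbers (532 tokens in 5 seconds) are hypothetical, chosen only for illustration, and are not measurements from this benchmark.

```python
def tokens_per_second(num_tokens: int, elapsed_seconds: float) -> float:
    """Throughput = tokens handled divided by wall-clock time."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


# Hypothetical run: 532 tokens generated in 5 seconds.
rate = tokens_per_second(532, 5.0)
print(f"{rate:.1f} tokens/second")
```

In practice you would time the generation call of whatever runtime you use and pass in the number of tokens it reports.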

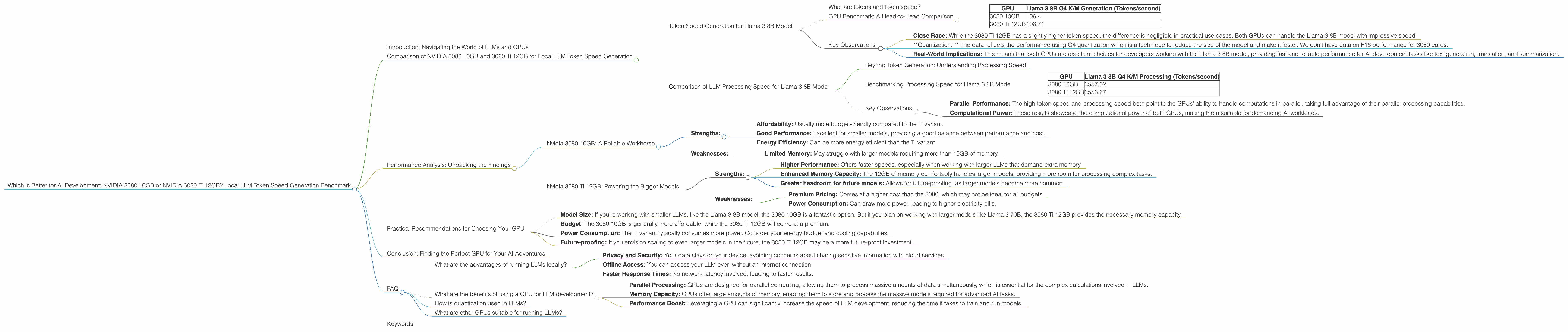

GPU Benchmark: A Head-to-Head Comparison

When running the Llama 3 8B model with 4-bit quantization (listed as Q4 K/M in the table, which corresponds to the Q4_K_M quantization format used by llama.cpp rather than a memory configuration), both GPUs generate roughly 106 tokens per second.

| GPU | Llama 3 8B Q4 K/M Generation (Tokens/second) |
|---|---|
| 3080 10GB | 106.4 |
| 3080 Ti 12GB | 106.71 |

Key Observations:

- Close Race: While the 3080 Ti 12GB has a slightly higher token speed, the difference is negligible in practical use cases. Both GPUs can handle the Llama 3 8B model with impressive speed.

- Quantization: The data reflects performance with Q4 quantization, a technique that shrinks the model by storing its weights with fewer bits, which also tends to make it faster. We don't have data on F16 performance for 3080 cards.

- Real-World Implications: This means that both GPUs are excellent choices for developers working with the Llama 3 8B model, providing fast and reliable performance for AI development tasks like text generation, translation, and summarization.
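To put "negligible" into numbers, here is a small sketch that computes the relative gap between the two generation figures from the table. The helper name is made up for this example.

```python
def relative_difference_percent(baseline: float, other: float) -> float:
    """How much `other` differs from `baseline`, as a percentage."""
    return (other - baseline) / baseline * 100.0


gen_3080 = 106.4      # tokens/second, from the table above
gen_3080_ti = 106.71  # tokens/second, from the table above

diff = relative_difference_percent(gen_3080, gen_3080_ti)
print(f"3080 Ti vs 3080 generation speed: {diff:+.2f}%")
```

A gap this small is well within the run-to-run variation you would normally expect from a benchmark.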

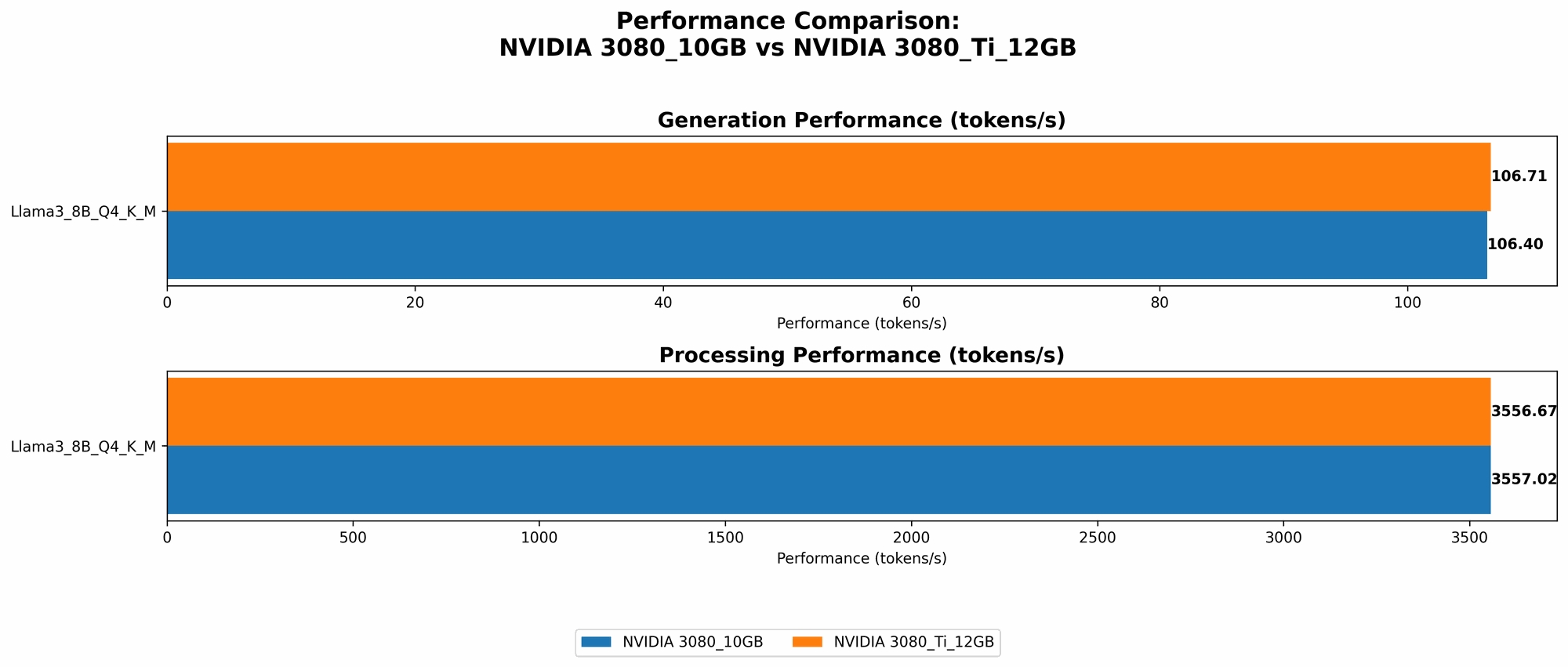

Comparison of LLM Processing Speed for Llama 3 8B Model

Beyond Token Generation: Understanding Processing Speed

Processing speed here refers to how quickly the GPU works through input tokens (the prompt) before it starts generating a response, measured in tokens per second. Because the whole prompt is available up front, its tokens can be processed together in large batches, which is why these numbers are far higher than the generation speeds above.

Benchmarking Processing Speed for Llama 3 8B Model

Again, the results demonstrate a near-identical performance for both GPUs, with a processing speed exceeding 3500 tokens per second.

| GPU | Llama 3 8B Q4 K/M Processing (Tokens/second) |
|---|---|
| 3080 10GB | 3557.02 |
| 3080 Ti 12GB | 3556.67 |

Key Observations:

- Parallel Performance: The high processing speed in particular reflects how well both GPUs spread the work for many tokens across thousands of cores at once.

- Computational Power: These results showcase the computational power of both GPUs, making them suitable for demanding AI workloads.
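The two benchmark figures can be combined into a rough response-time estimate. The sketch below assumes the processing figure measures how fast the prompt is ingested and the generation figure how fast the reply is produced. The prompt and reply lengths (1000 and 200 tokens) are hypothetical, and the model ignores load time, sampling overhead, and any slowdown at long context lengths.

```python
def estimate_latency_seconds(prompt_tokens: int, output_tokens: int,
                             processing_tps: float, generation_tps: float) -> float:
    """Rough end-to-end time: read the prompt, then write the reply."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Tokens/second figures taken from the two tables in this article.
BENCHMARKS = {
    "3080 10GB": {"processing": 3557.02, "generation": 106.4},
    "3080 Ti 12GB": {"processing": 3556.67, "generation": 106.71},
}

for gpu, tps in BENCHMARKS.items():
    seconds = estimate_latency_seconds(1000, 200, tps["processing"], tps["generation"])
    print(f"{gpu}: about {seconds:.2f} s for a 1000-token prompt and 200-token reply")
```

Under these assumptions generation dominates the total, so the generation figure matters most for interactive use.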

Performance Analysis: Unpacking the Findings

Now that we've established the performance of these GPUs, let's delve deeper into what these results mean and provide practical recommendations for use cases.

NVIDIA 3080 10GB: A Reliable Workhorse

The NVIDIA 3080 10GB is a reliable choice for various LLM tasks, offering a solid balance of performance and affordability. Its 10GB of memory is sufficient for running smaller to medium-sized LLMs, like the Llama 3 8B model.

Strengths:

- Affordability: Usually more budget-friendly compared to the Ti variant.

- Good Performance: Excellent for smaller models, providing a good balance between performance and cost.

- Energy Efficiency: Has a lower rated board power than the Ti variant (320W versus 350W), so it can be cheaper to run and easier to cool.

Weaknesses:

- Limited Memory: May struggle with larger models requiring more than 10GB of memory.

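NVIDIA 3080 Ti 12GB: Powering the Bigger Models

The NVIDIA 3080 Ti 12GB is designed for higher performance and offers 2GB more memory than the 3080. That extra memory leaves room for longer contexts, less aggressive quantization, or models somewhat larger than 8B. It is not enough for a model like Llama 3 70B, whose weights alone need roughly 35GB or more even at 4-bit precision.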

Strengths:

- Higher Performance on Paper: Has more CUDA cores and memory bandwidth than the 3080, although the Llama 3 8B results above show no measurable speed advantage.

- Enhanced Memory Capacity: The 12GB of memory fits slightly larger models or longer contexts than 10GB allows.

- Greater Headroom for Future Models: The extra 2GB offers modest future-proofing as models grow.

Weaknesses:

- Premium Pricing: Comes at a higher cost than the 3080, which may not be ideal for all budgets.

- Power Consumption: Can draw more power, leading to higher electricity bills.

Practical Recommendations for Choosing Your GPU

To make the best decision for your AI development needs, consider the following factors:

- Model Size: If you're working with smaller LLMs, like the Llama 3 8B model, the 3080 10GB is a fantastic option. If your models or context lengths push just past 10GB, the 3080 Ti 12GB gives you extra room. Neither card has enough memory for Llama 3 70B, which needs roughly 35GB or more for 4-bit weights alone.

- Budget: The 3080 10GB is generally more affordable, while the 3080 Ti 12GB will come at a premium.

- Power Consumption: The Ti variant typically consumes more power. Consider your energy budget and cooling capabilities.

- Future-proofing: If you envision scaling to even larger models in the future, the 3080 Ti 12GB may be a more future-proof investment.
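For the Model Size factor, a back-of-the-envelope check is to estimate the size of the weights from the parameter count and bits per weight, then compare that with the card's memory. The sketch below is only an approximation under stated assumptions: the parameter counts are nominal (8 and 70 billion), 4.5 bits per weight is a rough stand-in for a 4-bit format plus its scaling metadata, and the 1.5GB overhead for the KV cache and runtime is a hypothetical placeholder that varies with context length.

```python
# Nominal parameter counts (assumption; the real models differ slightly).
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
CARDS_GB = {"3080 10GB": 10.0, "3080 Ti 12GB": 12.0}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits(num_params: float, bits_per_weight: float, vram_gb: float,
         overhead_gb: float = 1.5) -> bool:
    """True if weights plus a hypothetical fixed overhead fit in VRAM."""
    return weight_memory_gb(num_params, bits_per_weight) + overhead_gb <= vram_gb


for model, params in MODELS.items():
    need = weight_memory_gb(params, bits_per_weight=4.5)
    for card, vram in CARDS_GB.items():
        verdict = "fits" if fits(params, 4.5, vram) else "does not fit"
        print(f"{model} (~{need:.1f} GB of weights) {verdict} on {card}")
```

Changing `bits_per_weight` to 16 gives a quick feel for how much memory an unquantized F16 model would need on the same cards.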

Conclusion: Finding the Perfect GPU for Your AI Adventures

The choice between the NVIDIA 3080 10GB and 3080 Ti 12GB ultimately depends on your specific needs and budget. Both cards deliver near-identical speed on the Llama 3 8B model, so the difference comes down to cost and memory. The 3080 10GB offers affordability and solid performance for smaller models, while the 3080 Ti 12GB adds 2GB of headroom for longer contexts or slightly larger models.

FAQ

What are the advantages of running LLMs locally?

Running LLMs locally offers several benefits:

- Privacy and Security: Your data stays on your device, avoiding concerns about sharing sensitive information with cloud services.

- Offline Access: You can access your LLM even without an internet connection.

- Lower Latency: There is no network round trip, which can mean faster responses when your hardware keeps up with the model.

What are the benefits of using a GPU for LLM development?

GPUs are crucial for LLMs because:

- Parallel Processing: GPUs are designed for parallel computing, allowing them to process massive amounts of data simultaneously, which is essential for the complex calculations involved in LLMs.

- Fast Dedicated Memory: GPUs pair their compute cores with high-bandwidth video memory (VRAM), which lets them keep model weights close at hand and stream them quickly during inference.

- Performance Boost: Leveraging a GPU can significantly increase the speed of LLM development, reducing the time it takes to train and run models.

How is quantization used in LLMs?

Quantization is a technique used to reduce the size of an LLM by representing its weights (parameters) with fewer bits. Think of it like converting a high-resolution image into a lower-resolution image. This reduces the memory requirements, making the models faster and more efficient to run, usually at the cost of a small loss in accuracy.
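To make the idea concrete, here is a deliberately simplified sketch of symmetric 4-bit quantization on a handful of made-up weights. It is not the Q4 scheme used in the benchmark, which works on blocks of weights with more elaborate scaling. It only illustrates the core trade: each weight becomes a small integer plus a shared scale, and some rounding error is introduced.

```python
def quantize_4bit(weights):
    """Symmetric 4-bit quantization: map floats to integers in [-8, 7]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # One shared scale so the largest weight maps to +/-7.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the 4-bit codes."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.98, -1.40, 0.07]  # hypothetical example values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", codes)
print("restored weights:", [round(r, 2) for r in restored])
print("largest rounding error:", round(max_error, 4))
```

Each code needs only 4 bits instead of 16, which is where the memory saving comes from; the rounding error is the accuracy cost.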

What are other GPUs suitable for running LLMs?

The NVIDIA 3080 10GB and 3080 Ti 12GB are excellent choices, but other GPUs can be suitable depending on your requirements. Consider exploring options from the NVIDIA GeForce RTX series (RTX 3090, RTX 3090 Ti, RTX 40 series), the NVIDIA A100 and A40 designed specifically for AI workloads, or even AMD GPUs like the AMD Radeon RX 6900 XT.
