Which is Better for AI Development: NVIDIA 3070 8GB or NVIDIA 3080 10GB? Local LLM Token Speed Generation Benchmark

Introduction: The Quest for Speedy Tokens

Welcome, fellow AI enthusiasts! Today, we're diving deep into the thrilling world of local LLM (Large Language Model) development, specifically focusing on the battle between two titans of the GPU world: the NVIDIA GeForce RTX 3070 8GB and the NVIDIA GeForce RTX 3080 10GB.

Imagine this: you're working on building a groundbreaking AI application, and your LLM needs to generate text at lightning speed. You need a GPU that can handle the computational horsepower to make your dreams a reality. But which one reigns supreme?

This article presents a benchmark comparing the token generation speed of these two GPUs on the Llama 3 8B model. Get ready to unleash the power of your AI!

The Showdown: 3070 8GB vs. 3080 10GB

The Contenders:

- NVIDIA GeForce RTX 3070 8GB: This GPU packs a punch with 5888 CUDA cores and 8GB of GDDR6 memory.

- NVIDIA GeForce RTX 3080 10GB: A heavyweight champion, boasting 8704 CUDA cores and 10GB of GDDR6X memory.

The Battlefield: Llama 3 Models

For this benchmark, we'll focus on Llama 3, a popular choice for developers thanks to its strong performance and openly available weights. Note that we compare only the Llama 3 8B model; data for other models is not available for this comparison.

The Weapons: Quantization and Precision

To make the models run efficiently on these GPUs, we'll employ two key techniques:

- Quantization: This involves reducing the size of the model by using lower-precision numbers. Think of it as compressing a video file to save space - a less detailed version but still recognizable! For our comparison, we'll use 4-bit quantization (Q4).

- Precision: This refers to the number of bits used to represent each number in the model. For example, F16 uses 16 bits per number, whereas Q4 uses about 4. Higher precision means more accurate results, but also a larger model in memory and, typically, slower processing.
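To make the size difference concrete, here is a rough back-of-the-envelope sketch of weight memory at each precision. It assumes roughly 8 billion parameters and the nominal bit widths (16 and 4). Real quantized files are somewhat larger because they also store scaling data, and running a model needs extra memory for the context. The function name and constant are ours, for illustration only.

```python
# Rough estimate of weight storage only; ignores quantization metadata
# and runtime buffers such as the context cache.
PARAMS = 8e9  # assumption: "8B" taken as roughly 8 billion parameters

def weight_gb(num_params: float, bits_per_weight: float) -> float:
    """Return approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

f16_gb = weight_gb(PARAMS, 16)
q4_gb = weight_gb(PARAMS, 4)
print(f"F16: ~{f16_gb:.0f} GB, Q4: ~{q4_gb:.0f} GB")
```

Under these assumptions, F16 weights alone would exceed the memory of both cards, while Q4 weights fit on either one.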

Performance Analysis: Token Speed Generation

Llama 3 8B - Q4 Quantization

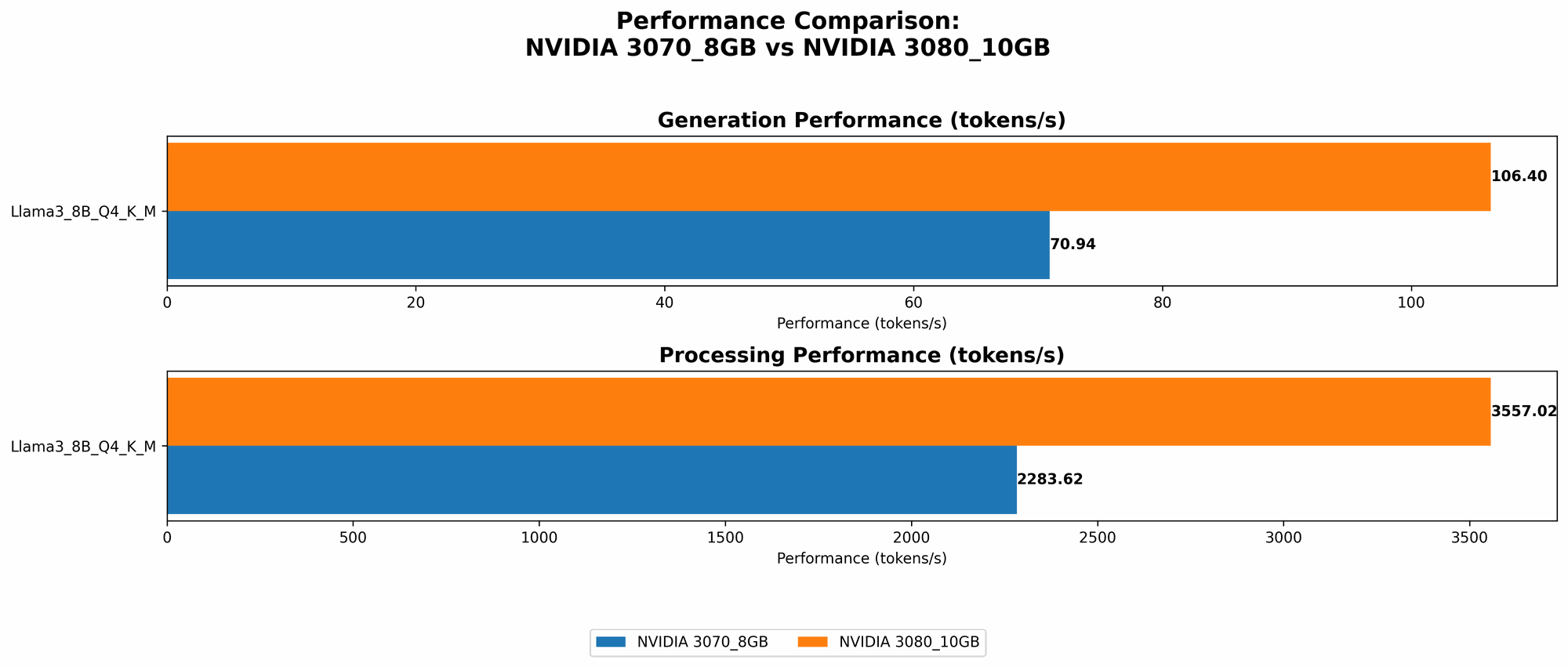

The table below shows the tokens per second achieved by Llama 3 8B with Q4 quantization on both GPUs. Higher numbers mean faster processing.

| Model | 3070 8GB (tokens/sec) | 3080 10GB (tokens/sec) |
|---|---|---|
| Llama 3 8B Q4_K_M (Generation) | 70.94 | 106.4 |
| Llama 3 8B Q4_K_M (Processing) | 2283.62 | 3557.02 |

Analysis of Results

- The NVIDIA 3080 10GB consistently outperforms the 3070 8GB for both generation and processing.

- In the generation task, the 3080 10GB achieves approximately 50% faster token speed compared to the 3070 8GB.

- For processing, the advantage is slightly larger: the 3080 10GB is about 56% faster (3557.02 vs. 2283.62 tokens/sec).
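The percentages can be checked directly from the table. The short sketch below recomputes them; the dictionary layout and function name are ours, and the numbers are copied from the table above.

```python
# Tokens/sec values copied from the benchmark table.
results = {
    "Generation": {"3070": 70.94, "3080": 106.4},
    "Processing": {"3070": 2283.62, "3080": 3557.02},
}

def speedup_percent(slow: float, fast: float) -> float:
    """Return how much faster `fast` is than `slow`, in percent."""
    return (fast / slow - 1) * 100

speedups = {
    task: speedup_percent(r["3070"], r["3080"]) for task, r in results.items()
}
for task, pct in speedups.items():
    print(f"{task}: 3080 10GB is {pct:.1f}% faster")
```

This works out to roughly 50% for generation and roughly 56% for processing.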

Practical Implications

- Faster Inference: The 3080 10GB allows for faster inference, which means your AI applications can respond to user queries and generate text more quickly. This is crucial for real-time applications like chatbots and interactive assistants.

- Increased Throughput: For tasks that involve processing large amounts of text, like summarizing documents or generating creative content, the 3080 10GB's faster processing speed provides a significant advantage.
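To see what this means for a single request, here is a simplified estimate of response time. It assumes the "Processing" figure is the speed at which the prompt is read and the "Generation" figure is the speed at which output tokens are produced, and it ignores model loading and other overhead. The request size (1000 prompt tokens, 500 output tokens) is a hypothetical example, not part of the benchmark.

```python
# Tokens/sec from the benchmark table; request sizes below are hypothetical.
SPEEDS = {
    "3070 8GB": {"processing": 2283.62, "generation": 70.94},
    "3080 10GB": {"processing": 3557.02, "generation": 106.4},
}

def estimate_seconds(gpu: str, prompt_tokens: int, output_tokens: int) -> float:
    """Time to read the prompt plus time to generate the reply, in seconds."""
    s = SPEEDS[gpu]
    return prompt_tokens / s["processing"] + output_tokens / s["generation"]

for gpu in SPEEDS:
    t = estimate_seconds(gpu, prompt_tokens=1000, output_tokens=500)
    print(f"{gpu}: ~{t:.1f} s")
```

In this simple model, generation dominates the total time, so the generation speed is the number that matters most for chat-style use.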

Conclusion: The Verdict is In

The NVIDIA GeForce RTX 3080 10GB emerges as the clear champion in this benchmark. It consistently delivers faster token speeds for both generation and processing tasks with the Llama 3 8B model, offering substantial performance advantages over the 3070 8GB.

However, it's important to consider your specific needs and budget when choosing a GPU. The 3070 8GB might be a suitable option for smaller projects or if your budget is tighter.

Beyond the Benchmark: Factors to Consider

While token speed is crucial, it's not the only factor to consider when choosing a GPU for LLM development. Here are some additional factors to keep in mind:

- Memory: The 3080 10GB has more memory (10GB) than the 3070 8GB (8GB). This can be crucial for models with larger parameter counts or for running multiple models simultaneously.

- Power Consumption: The 3080 10GB draws more power under load than the 3070 8GB, so it needs a stronger power supply and better cooling. This can be a consideration for budget-conscious developers or for builds with cooling limitations.

- Software Compatibility: Ensure that the GPU you choose is compatible with the software you're using for LLM development.

FAQ: Questions and Answers

What are LLM Models?

LLMs are AI models trained on massive amounts of text data, enabling them to understand and generate human-like text. Think of them as super-powered text generators, capable of writing stories, translating languages, and even summarizing information.

What is Quantization?

Quantization is a technique used to reduce the size of a model by using lower-precision numbers. Imagine a photo with millions of colors, each represented by three bytes (256 shades for each of red, green, and blue). Quantization would reduce the number of colors to, say, just 16 per channel, making the image smaller but still recognizable. This reduces the memory footprint and speeds up the model’s processing.
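The same idea can be shown on a handful of numbers. The sketch below is a toy illustration only: it maps floats to 4-bit integers using a single shared scale, which is much simpler than the block-wise schemes real LLM runtimes use. The function names and sample weights are made up for this example.

```python
def quantize_4bit(values):
    """Map floats to signed 4-bit codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the 4-bit range.
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]

weights = [0.12, -0.53, 0.98, -0.05, 0.31]  # hypothetical sample weights
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)

for w, c, r in zip(weights, codes, restored):
    print(f"{w:+.2f} -> code {c:+d} -> {r:+.2f}")
```

Each restored value is close to the original but not identical, which is the accuracy cost paid for storing 4 bits instead of 16.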

What is the difference between Generation and Processing?

- Generation: This is the speed at which the model produces new output tokens, one after another, once it has read the prompt.

- Processing: This is the speed at which the model reads the input prompt before it starts generating. Prompt tokens can be handled in parallel, which is why this figure is much higher than the generation speed.

What are CUDA Cores?

CUDA (Compute Unified Device Architecture) is a parallel computing platform and programming model developed by NVIDIA. CUDA cores are the processing units within a GPU dedicated to performing parallel computations. The more CUDA cores, the more parallel tasks a GPU can handle.

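What do the "K" and "M" in the "Q4_K_M" label mean?

- K: This marks the "k-quant" family of quantization formats used by llama.cpp, which quantize weights in small blocks that each carry their own scaling information.

- M: This stands for "medium", the middle of the small/medium/large (S/M/L) variants. It trades a slightly larger file for better quality than the small variant.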

Keywords

NVIDIA 3070 8GB, NVIDIA 3080 10GB, LLM, Large Language Model, Token Speed Generation, Benchmark, Llama 3 8B, Quantization, F16 Precision, Q4 Precision, Q4_K_M, GPU, AI Development, Inference, Processing, CUDA Cores, GPU Memory, Power Consumption, Software Compatibility.