Which Is Better for AI Development: Apple M3 Max (400GB/s, 40 GPU Cores) or NVIDIA RTX 6000 Ada 48GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is exploding, with new models being released almost daily. These models are capable of performing incredible feats, from generating realistic text to translating languages and writing code. But to train and run these models, you need powerful hardware.

In this article, we compare two heavyweights: the Apple M3 Max (the configuration with a 40-core GPU and 400GB/s of memory bandwidth) and the NVIDIA RTX 6000 Ada with 48GB of VRAM. We focus on token throughput for local LLM deployments, measured in two phases: prompt processing, where the model ingests the input, and generation, where it produces output tokens one at a time. We'll look at several LLMs and quantization levels to see which device suits which use case.

This comparison will provide valuable insights for developers and enthusiasts eager to find the perfect hardware for their LLM projects. Buckle up!

Comparison of Apple M3 Max (400GB/s, 40 GPU Cores) and NVIDIA RTX 6000 Ada 48GB

Key Hardware Specifications

Let's first take a look at the specs of our contenders. The Apple M3 Max is a system-on-chip that pairs CPU cores with a 40-core integrated GPU. The "400GB" in its name refers to 400GB/s of memory bandwidth, not to RAM capacity. On the other side, we have the NVIDIA RTX 6000 Ada, a dedicated workstation GPU with 48GB of VRAM.

Both devices can run LLM inference on a GPU, but they differ in design: the RTX 6000 Ada is a discrete card built for massively parallel workloads such as deep learning, while the M3 Max shares one pool of unified memory between its CPU and GPU. These differences significantly affect their performance with LLMs.

Apple M3 Max Token Generation Speed

The Apple M3 Max delivers usable speeds with smaller LLMs. Prompt processing stays in the high hundreds of tokens per second for 7B and 8B models, and generation gets faster as quantization gets more aggressive.

Here's a breakdown of the M3 Max's token generation speeds:

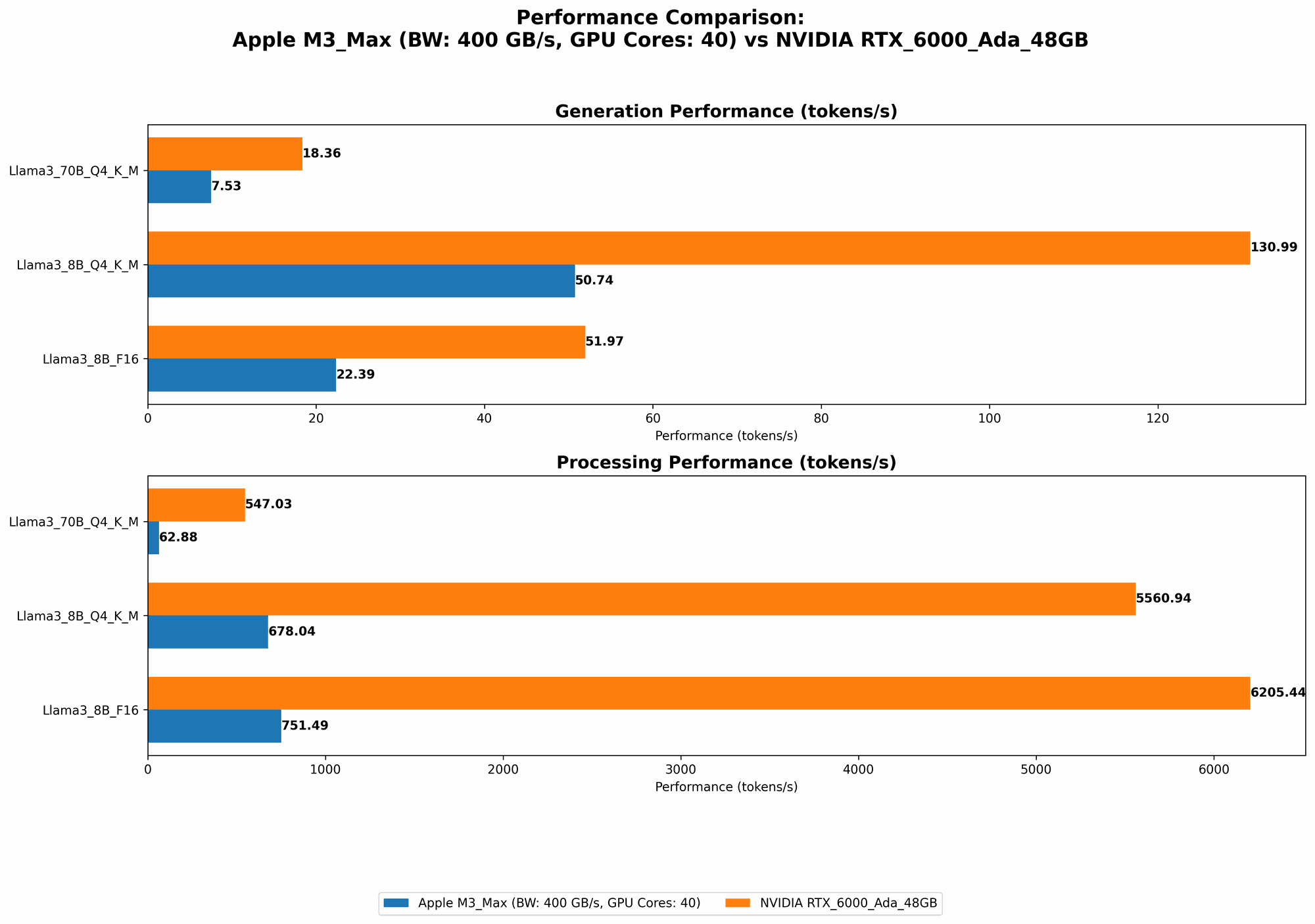

Llama 2 7B: Processing speed is nearly flat across quantization levels, while generation speed more than doubles from F16 to Q4_0:

- F16: 779.17 tokens/second (Processing) and 25.09 tokens/second (Generation)

- Q8_0: 757.64 tokens/second (Processing) and 42.75 tokens/second (Generation)

- Q4_0: 759.7 tokens/second (Processing) and 66.31 tokens/second (Generation)

Llama 3 8B: Results for the slightly larger Llama 3 8B follow the same pattern:

- Q4_K_M: 678.04 tokens/second (Processing) and 50.74 tokens/second (Generation)

- F16: 751.49 tokens/second (Processing) and 22.39 tokens/second (Generation)

Llama 3 70B: The M3 Max slows down considerably with the much larger Llama 3 70B:

- Q4_K_M: 62.88 tokens/second (Processing) and 7.53 tokens/second (Generation)

- F16: Data unavailable for this configuration
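One way to read these numbers: during generation, each new token requires reading roughly all of the model's weights from memory, so memory bandwidth sets a rough ceiling on tokens per second. The sketch below applies that rule of thumb to the F16 results above. It assumes the "400GB" in the chip's name means 400 GB/s of memory bandwidth, uses approximate parameter counts that are not stated in this article (about 6.74 billion for Llama 2 7B and 8.03 billion for Llama 3 8B), and ignores the KV cache and other overheads, so treat it as an estimate rather than a measurement.

```python
# Rough bandwidth-bound ceiling for token generation (a rule of thumb, not a measurement).
# Assumptions not taken from the benchmark: the parameter counts are approximate,
# and 400 GB/s is read from the "400GB" in the chip's name.

M3_MAX_BANDWIDTH_GBPS = 400.0   # assumed memory bandwidth in GB/s
BYTES_PER_PARAM_F16 = 2         # F16 stores each weight in 16 bits

APPROX_PARAMS_BILLIONS = {
    "Llama 2 7B": 6.74,
    "Llama 3 8B": 8.03,
}

# Measured F16 generation speeds (tokens/second) from the lists above.
MEASURED_F16_GENERATION = {
    "Llama 2 7B": 25.09,
    "Llama 3 8B": 22.39,
}


def generation_ceiling(params_billions, bytes_per_param, bandwidth_gbps):
    """Upper bound on tokens/second if every token reads all weights once."""
    weights_gb = params_billions * bytes_per_param
    return bandwidth_gbps / weights_gb


for model, params in APPROX_PARAMS_BILLIONS.items():
    ceiling = generation_ceiling(params, BYTES_PER_PARAM_F16, M3_MAX_BANDWIDTH_GBPS)
    measured = MEASURED_F16_GENERATION[model]
    print(f"{model}: ceiling ~{ceiling:.1f} tok/s, measured {measured} tok/s "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the measured F16 speeds land at roughly 85 to 90 percent of the estimated ceiling, which also suggests why smaller quantized weights generate faster: there are fewer bytes to read per token.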

NVIDIA RTX 6000 Ada Token Generation Speed

The NVIDIA RTX 6000 Ada posts much higher numbers, particularly in prompt processing, where its GPU architecture can work through many tokens concurrently.

Here are the RTX 6000 Ada's figures (no Llama 2 7B results are listed for this card):

Llama 3 8B: The RTX 6000 Ada processes prompts for Llama 3 8B at several thousand tokens per second:

- Q4_K_M: 5560.94 tokens/second (Processing) and 130.99 tokens/second (Generation)

- F16: 6205.44 tokens/second (Processing) and 51.97 tokens/second (Generation)

Llama 3 70B: The RTX 6000 Ada remains usable with the much larger Llama 3 70B, although throughput drops sharply compared with the 8B model:

- Q4_K_M: 547.03 tokens/second (Processing) and 18.36 tokens/second (Generation)

- F16: Data unavailable for this configuration
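To put the two sets of results side by side, the following sketch computes how many times faster the RTX 6000 Ada is for each configuration that both devices report. The figures are copied from the lists in this article; the dictionary layout and the function name are illustrative choices, and the keys label the 4-bit K-quant results as Q4_K_M.

```python
# Speed ratios (RTX 6000 Ada / M3 Max) for the configurations both devices report.
# Values are tokens/second from this article: (processing, generation).

M3_MAX = {
    ("Llama 3 8B", "Q4_K_M"): (678.04, 50.74),
    ("Llama 3 8B", "F16"): (751.49, 22.39),
    ("Llama 3 70B", "Q4_K_M"): (62.88, 7.53),
}

RTX_6000_ADA = {
    ("Llama 3 8B", "Q4_K_M"): (5560.94, 130.99),
    ("Llama 3 8B", "F16"): (6205.44, 51.97),
    ("Llama 3 70B", "Q4_K_M"): (547.03, 18.36),
}


def speed_ratios(fast, slow):
    """Return {config: (processing_ratio, generation_ratio)} for shared configs."""
    ratios = {}
    for config in fast.keys() & slow.keys():
        fast_pp, fast_tg = fast[config]
        slow_pp, slow_tg = slow[config]
        ratios[config] = (fast_pp / slow_pp, fast_tg / slow_tg)
    return ratios


for (model, quant), (pp, tg) in sorted(speed_ratios(RTX_6000_ADA, M3_MAX).items()):
    print(f"{model} {quant}: processing {pp:.1f}x, generation {tg:.1f}x")
```

The ratios come out to a little over 8x for processing and between 2.3x and 2.6x for generation, so the RTX 6000 Ada's lead is much larger when ingesting prompts than when producing tokens.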

Performance Analysis: Strengths and Weaknesses

Apple M3 Max:

Strengths:

- Solid performance with smaller LLMs: The M3 Max runs Llama 2 7B and Llama 3 8B at roughly 50 to 66 tokens/second of generation with 4-bit quantization. It is slower than the RTX 6000 Ada on these models, but fast enough for many interactive local workflows.

- Energy efficiency: The M3 Max is known for its energy efficiency, which is a significant advantage for developers working with limited power budgets.

Weaknesses:

- Limited speed with larger models: With Llama 3 70B at Q4_K_M, the M3 Max drops to 7.53 tokens/second of generation and 62.88 tokens/second of processing, which may be too slow for applications that need quick responses from large models.

NVIDIA RTX 6000 Ada:

Strengths:

- Higher throughput across the board: In every configuration measured on both devices, the RTX 6000 Ada generates tokens roughly 2.3 to 2.6 times faster than the M3 Max and processes prompts more than 8 times faster. With Llama 3 70B at Q4_K_M it reaches 18.36 tokens/second of generation versus 7.53 on the M3 Max.

- Dedicated GPU Architecture: The RTX 6000 Ada's dedicated GPU architecture is optimized for parallel processing, which is a massive benefit for LLM workloads.

Weaknesses:

- High power consumption: GPUs like the RTX 6000 Ada are notorious for their high power consumption, which can be a concern for users with limited power budgets. This may require more powerful power supplies for the system.

- Possibly overkill for smaller models: The RTX 6000 Ada is still faster than the M3 Max on Llama 3 8B, but if the M3 Max's speeds are already sufficient for your workload, the extra throughput may not justify the card's higher power draw.

Practical Recommendations: Choosing the Right Device for Your Needs

For running smaller models like Llama 2 7B and Llama 3 8B: Both devices are fast enough for interactive use. The RTX 6000 Ada is faster in the benchmarks, but the Apple M3 Max is a reasonable choice when energy efficiency matters more than peak throughput.

For running larger models like Llama 3 70B: The NVIDIA RTX 6000 Ada is the clear winner on speed, generating tokens about 2.4 times faster than the M3 Max at Q4_K_M (18.36 versus 7.53 tokens/second).

For developers working with limited power budgets: The Apple M3 Max is a more sustainable option, offering excellent performance with lower power consumption.

For applications requiring high performance with large LLMs: The NVIDIA RTX 6000 Ada is the go-to choice, providing the highest speeds in this comparison.

Ultimately, the best device for you depends on your specific needs and budget. Carefully consider your application requirements, the size of the models you intend to run, and your power consumption limitations before making your decision.
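To translate throughput into something you can feel, a rough response-time estimate adds the time to process the prompt to the time to generate the reply. The sketch below does this for the Llama 3 8B 4-bit K-quant results from this article. The prompt and reply lengths are hypothetical values chosen only for illustration, and the estimate ignores model loading and other overheads.

```python
# End-to-end response time estimate from the benchmark's two throughput figures.
# The prompt and reply lengths are hypothetical, chosen only for illustration.


def response_seconds(prompt_tokens, reply_tokens, processing_tps, generation_tps):
    """Time to ingest the prompt plus time to generate the reply, in seconds."""
    return prompt_tokens / processing_tps + reply_tokens / generation_tps


PROMPT_TOKENS = 1000   # hypothetical prompt length
REPLY_TOKENS = 200     # hypothetical reply length

# Llama 3 8B, 4-bit K-quant results from this article:
# (processing, generation) in tokens/second.
DEVICES = {
    "Apple M3 Max": (678.04, 50.74),
    "NVIDIA RTX 6000 Ada": (5560.94, 130.99),
}

for name, (pp, tg) in DEVICES.items():
    seconds = response_seconds(PROMPT_TOKENS, REPLY_TOKENS, pp, tg)
    print(f"{name}: about {seconds:.1f} s")
```

For this hypothetical workload the estimate is about 5.4 seconds on the M3 Max and about 1.7 seconds on the RTX 6000 Ada. Plugging in your own typical prompt and reply sizes shows whether the difference matters for your application.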

Conclusion

The race to generate tokens is fierce, and both the Apple M3 Max and NVIDIA RTX 6000 Ada have their strengths. The RTX 6000 Ada leads on raw throughput in every benchmark the two share, while the M3 Max stays practical for smaller models and emphasizes efficiency.

Choosing the right device is like selecting the perfect tool for the job. If you need energy efficiency and mostly run smaller models, the M3 Max is your ally. If you need maximum speed or are tackling massive models, the RTX 6000 Ada is the champion.

FAQ

What is LLM quantization?

LLM quantization reduces a model's memory footprint by converting its weights from a higher-precision format such as F16 to a lower-precision format such as Q8 or Q4. It's like compressing a file to save space: the model gets smaller, and some detail is lost.
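As a toy illustration of the idea, the sketch below quantizes a small block of weights to signed integers using one shared scale factor, then converts them back and measures the error. This is a simplified absmax scheme written for this example, not the exact layout used by any particular library, and the weight values are made up.

```python
# Toy block quantization: store small integers plus one scale per block.
# Simplified for illustration; real formats differ in their details.


def quantize_block(values, bits):
    """Map floats to signed integers in [-qmax, qmax] with a shared scale."""
    qmax = 2 ** (bits - 1) - 1
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


def max_error(values, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    scale, codes = quantize_block(values, bits)
    restored = dequantize_block(scale, codes)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up weights standing in for one block of a model.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]

for bits in (8, 4):
    print(f"{bits}-bit: max round-trip error {max_error(weights, bits):.4f}")
```

Fewer bits mean fewer representable values per block, so the 4-bit round trip loses more detail than the 8-bit one.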

What is the difference between F16, Q4, and Q8 quantization?

- F16: 16-bit floating point (half precision). Weights are stored without quantization, so this is the accuracy baseline, but it needs the most memory.

- Q4: Roughly 4 bits per weight, giving the smallest memory footprint and the fastest generation in these benchmarks, at the cost of some accuracy.

- Q8: Roughly 8 bits per weight, a middle ground that stays closer to F16 accuracy while using about half the memory.
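These bit widths translate directly into memory requirements. The sketch below estimates the weight storage for a nominal 70-billion-parameter model at each precision. It assumes that Q8_0 and Q4_0 refer to llama.cpp-style block formats, which store 32 weights plus one 16-bit scale per block (8.5 and 4.5 bits per weight); this article does not name the inference software, so that mapping is an assumption. The estimate covers weights only, not the KV cache or activations.

```python
# Approximate weight memory for a model at different precisions.
# Assumption: Q8_0 and Q4_0 are llama.cpp-style block formats that store
# 32 weights plus one 16-bit scale per block, i.e. 8.5 and 4.5 bits per weight.

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,
    "Q4_0": 4.5,
}


def weights_gb(params_billions, bits_per_weight):
    """Weight storage in GB (10^9 bytes); excludes KV cache and activations."""
    return params_billions * bits_per_weight / 8


VRAM_GB = 48  # RTX 6000 Ada memory, from this article

for fmt, bits in BITS_PER_WEIGHT.items():
    size = weights_gb(70, bits)  # nominal 70B parameter count
    verdict = "fits" if size <= VRAM_GB else "does not fit"
    print(f"70B at {fmt}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

By this estimate a 70B model needs about 140 GB at F16, which may be why no F16 result is reported for Llama 3 70B, while about 39 GB at roughly 4.5 bits per weight fits within 48 GB.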

How does the architecture of the RTX 6000 Ada differ from that of the M3 Max?

The RTX 6000 Ada is a discrete GPU with its own 48GB of dedicated memory, designed for massively parallel computation. It's like having a room full of assistants each working on a piece of the puzzle. The M3 Max is a system-on-chip that combines CPU cores with a 40-core GPU, all sharing one pool of unified memory. Its GPU also works in parallel, but it is a smaller, more power-efficient room of assistants, which is consistent with its lower throughput in the benchmarks above.

Can I run LLMs locally without dedicated hardware like the M3 Max or RTX 6000 Ada?

While it's possible to run smaller LLMs on a regular laptop or desktop computer, you'll likely experience significantly slower performance and potentially face memory limitations. Dedicated hardware like the M3 Max or RTX 6000 Ada is recommended for optimal performance and smoother operation, especially with larger LLMs.

What other factors should I consider when choosing hardware for LLM development?

Besides token generation speed, consider factors like power consumption, cost, memory capacity, and the availability of software tools and libraries compatible with the hardware.

Keywords

Apple M3 Max, NVIDIA RTX 6000 Ada, LLM, Large Language Model, Token Speed, Token Generation, Quantization, F16, Q4, Q8, AI Development, Local Deployment, Performance Benchmark, Hardware Comparison, Developer Tools, GPU, CPU, Llama 2, Llama 3, Memory, Processing, Generation, Power Consumption, Cost, Efficiency, Speed, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP, Conversational AI, Chatbot, Generative AI, AI Applications, AI Research, AI Ethics, AI Future.