Which is Better for AI Development: Apple M3 Max (400 GB/s, 40 GPU Cores) or NVIDIA RTX 5000 Ada 32GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is booming, with applications ranging from writing code to generating creative content. But running these models locally can be challenging, especially for larger models, and this is where powerful hardware comes into play. In this article, we compare two heavyweights: the Apple M3 Max with a 40-core GPU and 400 GB/s of memory bandwidth, and the NVIDIA RTX 5000 Ada with 32GB of memory. We'll analyze their prompt processing and token generation speeds on several LLMs, helping you choose the best device for your AI development needs.

Understanding the Players: Apple M3 Max vs NVIDIA RTX 5000 Ada

Apple M3 Max

The Apple M3 Max is a powerful chip designed for Apple's high-end machines. The configuration discussed here has a 40-core integrated GPU and 400 GB/s of unified memory bandwidth. Because the CPU and GPU share one memory pool, model weights do not have to be copied into separate video memory, which can help with tasks like LLM inference.

NVIDIA RTX 5000 Ada

The NVIDIA RTX 5000 Ada is a dedicated workstation GPU designed for high-performance computing and graphics. Its architecture is built for parallel processing, making it a popular choice for deep learning tasks. It has 32GB of GDDR6 memory with 576 GB/s of bandwidth and 12,800 CUDA cores.

Local LLM Model Performance: A Battle of Speed

To understand which device reigns supreme for local LLM speed, we've compiled data from benchmark tests across several models. We'll be focusing on the following:

- Llama 2 7B: This is a popular open-source LLM known for its versatility and efficiency.

- Llama 3 8B: A newer, more powerful model from Meta AI, offering improved performance.

- Llama 3 70B: A heavyweight in the LLM world, known for its advanced capabilities.

Disclaimer: Not all data points are available for every model and device combination. Therefore, we'll only analyze the data available for the models listed above.

Performance Analysis: Token Generation Speed Comparison

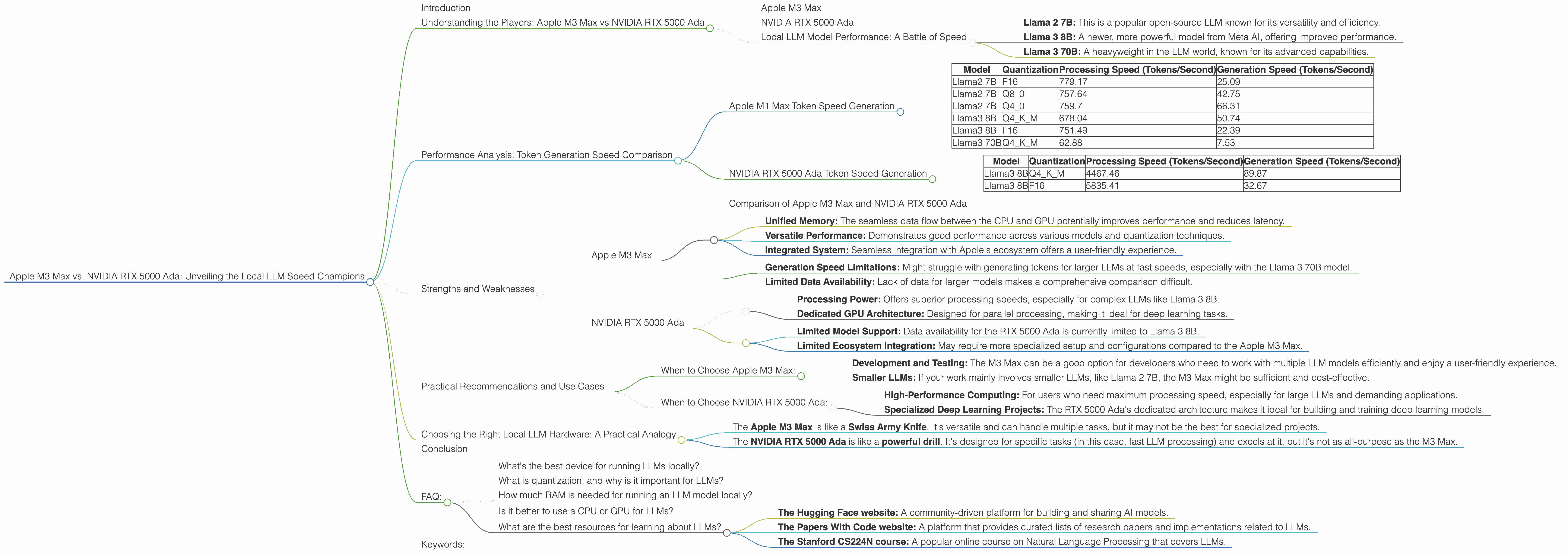

Apple M3 Max Token Speed Generation

Let's start with the Apple M3 Max. Here's how it performs with various LLMs using different quantization techniques:

| Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama 2 7B | F16 | 779.17 | 25.09 |
| Llama 2 7B | Q8_0 | 757.64 | 42.75 |
| Llama 2 7B | Q4_0 | 759.7 | 66.31 |
| Llama 3 8B | Q4_K_M | 678.04 | 50.74 |
| Llama 3 8B | F16 | 751.49 | 22.39 |
| Llama 3 70B | Q4_K_M | 62.88 | 7.53 |

Key Observations:

- On the 7B and 8B models, the Apple M3 Max processes prompts at roughly 680 to 780 tokens/second regardless of quantization. Generation speed, by contrast, rises as precision drops: Llama 2 7B goes from 25.09 tokens/second at F16 to 66.31 at Q4_0. This is consistent with a unified memory architecture that handles data movement efficiently.

- Llama 3 70B is much slower on both measures: 62.88 tokens/second for processing and 7.53 tokens/second for generation, roughly 11x and 7x slower than Llama 3 8B at the same Q4_K_M quantization. This indicates that larger models push the limits of the M3 Max's capabilities, especially when it comes to generating outputs.
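To put a number on how much quantization helps generation, here is a minimal sketch that expresses each Llama 2 7B generation speed on the M3 Max as a multiple of the F16 speed. The figures are copied from the table above; the function name `speedup_over_f16` is just an illustrative choice.

```python
# Generation speeds (tokens/second) for Llama 2 7B on the M3 Max, from the table above.
generation_tps = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def speedup_over_f16(speeds):
    """Return each quantization's generation speed as a multiple of the F16 speed."""
    baseline = speeds["F16"]
    return {quant: round(tps / baseline, 2) for quant, tps in speeds.items()}


speedups = speedup_over_f16(generation_tps)
for quant, factor in speedups.items():
    print(f"{quant}: {factor}x the F16 generation speed")
```

On this data, Q8_0 comes out at about 1.7x and Q4_0 at about 2.6x the F16 generation speed.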

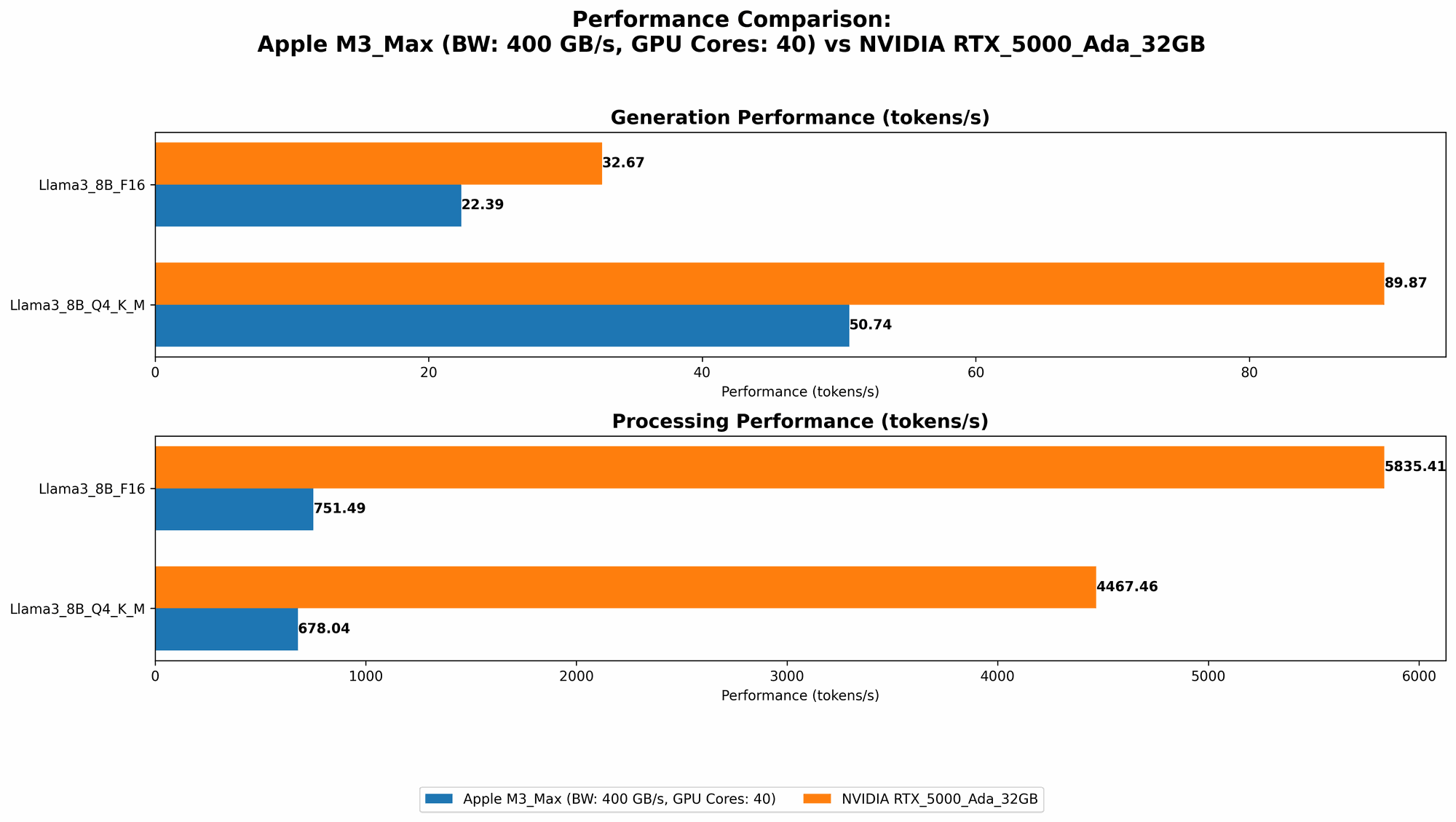

NVIDIA RTX 5000 Ada Token Speed Generation

Now, let's shift gears to the NVIDIA RTX 5000 Ada and see how it performs:

| Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 4467.46 | 89.87 |
| Llama 3 8B | F16 | 5835.41 | 32.67 |

Key Observations:

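- On Llama 3 8B, the NVIDIA RTX 5000 Ada processes prompts about 6.6x faster than the Apple M3 Max at Q4_K_M (4467.46 vs 678.04 tokens/second) and about 7.8x faster at F16 (5835.41 vs 751.49 tokens/second). This aligns with the GPU's architecture designed for parallel processing.

- The gap in generation speed is smaller: 89.87 vs 50.74 tokens/second at Q4_K_M (about 1.8x) and 32.67 vs 22.39 tokens/second at F16 (about 1.5x).

- However, it's important to note that the NVIDIA RTX 5000 Ada only has data for Llama 3 8B. We lack the data to compare its performance with other models, especially the larger Llama 3 70B.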
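The head-to-head ratios on Llama 3 8B can be recomputed directly from the two tables. The following is a small sketch, not part of the original benchmark; the dictionary layout and the name `rtx_advantage` are assumptions made for illustration.

```python
# (processing, generation) speeds in tokens/second for Llama 3 8B, copied from the two tables.
m3_max = {"Q4_K_M": (678.04, 50.74), "F16": (751.49, 22.39)}
rtx_5000_ada = {"Q4_K_M": (4467.46, 89.87), "F16": (5835.41, 32.67)}


def rtx_advantage(rtx, m3):
    """Return (processing ratio, generation ratio) of RTX over M3 Max per quantization."""
    return {
        quant: (round(rtx[quant][0] / m3[quant][0], 2),
                round(rtx[quant][1] / m3[quant][1], 2))
        for quant in rtx
    }


ratios = rtx_advantage(rtx_5000_ada, m3_max)
for quant, (processing, generation) in ratios.items():
    print(f"{quant}: processing {processing}x, generation {generation}x")
```

The processing ratios land between roughly 6.6x and 7.8x, while the generation ratios stay between roughly 1.5x and 1.8x.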

Comparison of Apple M3 Max and NVIDIA RTX 5000 Ada

Looking at the data, the NVIDIA RTX 5000 Ada has a clear edge on Llama 3 8B, the only model measured on both devices: it is several times faster at prompt processing and roughly 1.5x to 1.8x faster at generation. This is likely due to its specialized GPU architecture dedicated to parallel processing. But what about the Apple M3 Max?

While the M3 Max is slower at prompt processing, it delivers usable performance across every model and quantization tested, including Llama 3 70B at Q4_K_M. The unified memory architecture might be a contributing factor to its efficiency.
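Tokens per second can be hard to picture, so here is a rough sketch that turns the Llama 3 8B Q4_K_M figures into an estimated response time. The workload of 1,000 prompt tokens and 200 generated tokens is a hypothetical example, and the estimate ignores model load time and any other overhead.

```python
def estimated_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate response time as prompt-processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload: a 1,000-token prompt followed by a 200-token answer.
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 200

# Llama 3 8B Q4_K_M speeds (tokens/second) from the benchmark tables.
m3_seconds = estimated_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 678.04, 50.74)
rtx_seconds = estimated_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 4467.46, 89.87)

print(f"Apple M3 Max:        {m3_seconds:.1f} s")
print(f"NVIDIA RTX 5000 Ada: {rtx_seconds:.1f} s")
```

Under these assumptions the M3 Max takes a little over 5 seconds and the RTX 5000 Ada about 2.5 seconds. Generation dominates both totals, which is why the end-to-end gap is much smaller than the prompt processing gap.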

Strengths and Weaknesses

Apple M3 Max

Strengths:

- Unified Memory: The seamless data flow between the CPU and GPU potentially improves performance and reduces latency.

- Versatile Performance: Demonstrates good performance across various models and quantization techniques.

- Integrated System: Seamless integration with Apple's ecosystem offers a user-friendly experience.

Weaknesses:

- Generation Speed Limitations: Generation slows sharply on larger LLMs. Llama 3 70B at Q4_K_M reaches only 7.53 tokens/second.

- Limited Data Availability: Only one 70B configuration (Q4_K_M) was measured, so a comprehensive comparison on larger models is difficult.

NVIDIA RTX 5000 Ada

Strengths:

- Processing Power: Offers far higher processing speeds on Llama 3 8B, the only model it was benchmarked on here.

- Dedicated GPU Architecture: Designed for parallel processing, making it ideal for deep learning tasks.

Weaknesses:

- Limited Benchmark Data: Results for the RTX 5000 Ada are currently limited to Llama 3 8B, so its behavior on other models is not shown here.

- Limited Ecosystem Integration: May require more specialized setup and configurations compared to the Apple M3 Max.

Practical Recommendations and Use Cases

When to Choose Apple M3 Max:

- Development and Testing: The M3 Max can be a good option for developers who need to work with multiple LLM models efficiently and enjoy a user-friendly experience.

- Smaller LLMs: If your work mainly involves smaller LLMs, like Llama 2 7B, the M3 Max might be sufficient and cost-effective.

When to Choose NVIDIA RTX 5000 Ada:

- High-Performance Computing: For users who need maximum processing speed in demanding applications, on models that fit within its 32GB of memory.

- Specialized Deep Learning Projects: The RTX 5000 Ada's dedicated architecture makes it ideal for building and training deep learning models.

Choosing the Right Local LLM Hardware: A Practical Analogy

Think of it like this:

- The Apple M3 Max is like a Swiss Army Knife. It's versatile and can handle multiple tasks, but it may not be the best for specialized projects.

- The NVIDIA RTX 5000 Ada is like a powerful drill. It's designed for specific tasks (in this case, fast LLM processing) and excels at it, but it's not as all-purpose as the M3 Max.

Ultimately, the best choice depends on your specific needs and budget.

Conclusion

The Apple M3 Max and NVIDIA RTX 5000 Ada are both powerful devices with different strengths and weaknesses. The M3 Max offers a well-rounded experience with its unified memory and versatility, while the RTX 5000 Ada excels in processing speed with its dedicated GPU architecture.

For developers working on various LLM models, the M3 Max might be a great option. For those prioritizing raw processing speed, the RTX 5000 Ada is a powerful choice, although the benchmarks here only cover Llama 3 8B on that card.

As the LLM landscape continues to evolve, we can expect to see new hardware emerge with even faster speeds and enhanced capabilities. Keep your eye out for future benchmarks to see how these devices stack up against the competition.

FAQ:

What's the best device for running LLMs locally?

This depends on your specific needs and budget. If you need to work with various LLMs and are looking for a user-friendly experience, the Apple M3 Max might be a good choice. If you prioritize processing speed and your models fit in 32GB of memory, the NVIDIA RTX 5000 Ada is worth considering.

What is quantization, and why is it important for LLMs?

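Quantization is a technique used to reduce the size of LLM models while largely preserving output quality. It stores the model's weights at lower numeric precision (for example, 4 or 8 bits instead of 16), which shrinks memory use and usually speeds up token generation, at some cost in accuracy. In the benchmarks above, Llama 2 7B on the M3 Max generates 66.31 tokens/second at Q4_0 versus 25.09 at F16.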
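As a rough illustration of the idea, the sketch below quantizes a small block of weights to 8-bit integers that share a single scale factor, then restores them. It is a simplified stand-in for block-wise 8-bit schemes, not the exact storage format used by any particular runtime, and the sample weights are made up.

```python
def quantize_block(values):
    """Map floats to integer codes in [-127, 127] that share one scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0  # avoid dividing by zero for an all-zero block
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [code * scale for code in codes]


# Made-up example weights; real models hold billions of these.
weights = [0.12, -0.5, 0.33, 0.0, 0.9, -0.75]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("largest rounding error:", max_error)
```

Each weight now needs one byte instead of two (F16), and the rounding error per weight is bounded by half the scale. Using fewer bits shrinks the model further but makes that error larger.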

How much RAM is needed for running an LLM model locally?

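The amount of RAM needed depends on the size of the model you want to run and on its quantization. Larger models require more RAM to store their weights, plus additional room for the context and runtime overhead. As a rough guide, a 7B model at F16 needs about 14GB for its weights alone, since each weight takes 2 bytes.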
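A back-of-the-envelope estimate multiplies the parameter count by the bits stored per weight. The sketch below does this for the models in this article. The bits-per-weight values for Q8_0 and Q4_0 include the per-block scale, the Q4_K_M value is an approximation, and the result covers weights only, so real memory use will be higher.

```python
# Approximate bits stored per weight; the Q4_K_M figure is a rough assumption.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.8}


def weight_memory_gb(params_billion, quantization):
    """Estimate the memory needed for model weights alone, in GB (10^9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[quantization] / 8


def weights_fit(params_billion, quantization, memory_gb):
    """Check whether the weights alone fit in the given memory, ignoring overhead."""
    return weight_memory_gb(params_billion, quantization) <= memory_gb


for name, params, quant in [("Llama 2 7B", 7, "Q4_0"),
                            ("Llama 3 8B", 8, "F16"),
                            ("Llama 3 70B", 70, "Q4_K_M")]:
    size = weight_memory_gb(params, quant)
    print(f"{name} {quant}: ~{size:.1f} GB, fits in 32GB: {weights_fit(params, quant, 32)}")
```

By this estimate, Llama 3 8B at F16 needs about 16GB, while Llama 3 70B at Q4_K_M needs roughly 42GB for weights alone, which is more than a 32GB card can hold.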

Is it better to use a CPU or GPU for LLMs?

GPUs are generally better suited for running LLMs because they are designed for parallel processing, which is essential for handling the complex calculations involved in LLM inference.

What are the best resources for learning about LLMs?

There are many great online resources for learning about LLMs, including:

- The Hugging Face website: A community-driven platform for building and sharing AI models.

- The Papers With Code website: A platform that provides curated lists of research papers and implementations related to LLMs.

- The Stanford CS224N course: A popular online course on Natural Language Processing that covers LLMs.

Keywords:

Apple M3 Max, NVIDIA RTX 5000 Ada, Llama 2, Llama 3, LLM, Large Language Model, Token Speed, Generation Speed, Processing Speed, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Local LLM, AI Development, GPU, CPU, Unified Memory, CUDA, Benchmark, Performance Analysis, AI, Machine Learning, Deep Learning, Natural Language Processing.