Which Is Better for AI Development: Apple M3 Max (40-Core GPU, 400 GB/s) or NVIDIA RTX 4000 Ada 20GB x4? Local LLM Token Generation Speed Benchmark

Introduction

Running Large Language Models (LLMs) locally takes serious computing power, so your choice of hardware matters. Two popular contenders are the Apple M3 Max (the configuration with a 40-core GPU and 400 GB/s of memory bandwidth) and a workstation with four NVIDIA RTX 4000 Ada 20GB cards. This article compares the two on local token throughput across several Llama models and quantization levels. We'll use real benchmark data to show where each one is strong and where it falls short, helping you make an informed decision for your AI development work.

Comparing Apple M3 Max 400gb 40cores and NVIDIA RTX 4000 Ada 20GB x4

Okay, so you've got your LLM ready to roll, but you're stuck choosing between the Apple M3 Max and the NVIDIA RTX 4000 Ada x4. Think of it like deciding between a sleek sports car and a powerful truck. Both are capable, but they're built for different things. Let's break down their performance and see which one fits your AI needs.

Apple M3 Max: The All-Around Performer

The Apple M3 Max configuration tested here pairs a 40-core GPU with 400 GB/s of unified memory bandwidth. The "400gb" in the device name refers to that bandwidth, not to capacity: the M3 Max tops out at 128GB of unified memory. Because the CPU and GPU share one memory pool, the GPU can use most of the system's memory for model weights. For LLM developers, this means a single machine can load models that would never fit on one 20GB graphics card.

NVIDIA RTX 4000 Ada 20GB x4: The GPU Powerhouse

NVIDIA's RTX 4000 Ada is a workstation GPU built on the Ada Lovelace architecture, with Tensor Cores that accelerate the matrix math at the heart of LLM workloads. Imagine it as an engine tuned specifically for running AI models. This setup uses four of them, each with 20GB of VRAM, for 80GB in total. Any model larger than 20GB has to be split across the cards.

Local LLM Token Speed Generation Benchmark:

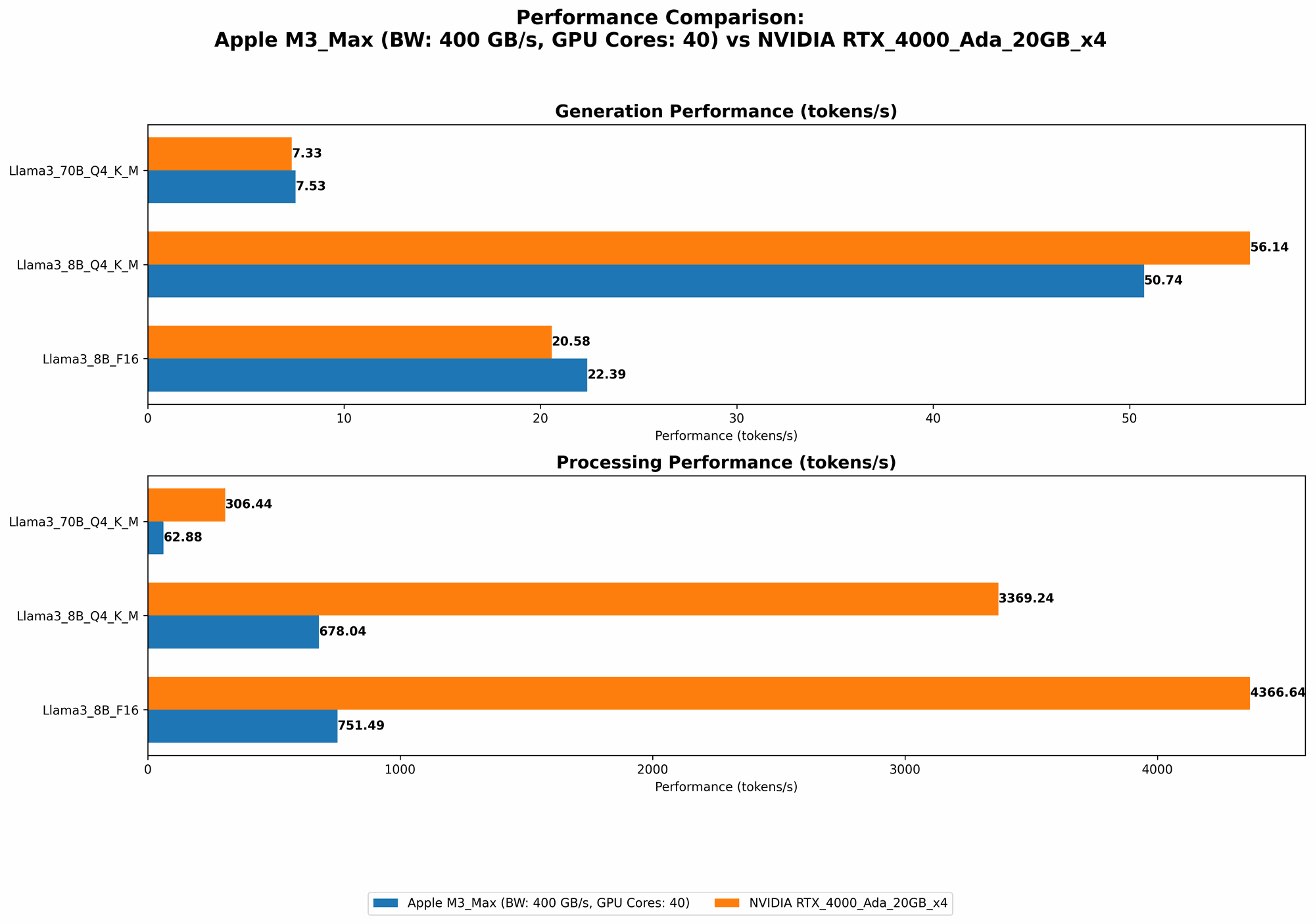

For this comparison, we'll use a dataset showing tokens per second on both devices for different LLM models and quantizations. "Processing" rows measure how quickly the prompt is ingested, and "Generation" rows measure how quickly new tokens are produced. Together, these two numbers indicate how responsive a device will feel in practice.
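
To make the metric concrete, here is a minimal sketch of how tokens per second can be measured. The `generate` callable and `fake_generate` are hypothetical stand-ins for whatever streams tokens from your runtime; they are not part of any specific library, and this is not the harness that produced the numbers in this article.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(n_tokens: int, elapsed_s: float) -> float:
    """Throughput is tokens produced divided by wall-clock seconds."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return n_tokens / elapsed_s


def measure(generate: Callable[[], Iterable[str]]) -> float:
    """Time one run of a token generator and return its throughput.

    `generate` is a hypothetical placeholder for your own model call.
    """
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())  # consume and count every token
    elapsed = time.perf_counter() - start
    return tokens_per_second(n_tokens, elapsed)


def fake_generate():
    # Dummy generator: yields 20 "tokens" with a small delay before each.
    for i in range(20):
        time.sleep(0.001)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{measure(fake_generate):.1f} tokens/s")
```

In a real measurement you would time prompt processing and generation separately, since the table below reports them as separate rows.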

Remember: The data below reflects the performance for specific models and configurations. It's just one part of the picture, and other factors influence the overall experience.

Table of Token Speed Comparison (Tokens/Second) for Different LLMs:

| Model (precision, phase) | M3 Max (tokens/s) | RTX 4000 Ada x4 (tokens/s) |
|---|---|---|
| Llama 2 7B (F16, Processing) | 779.17 | N/A |
| Llama 2 7B (F16, Generation) | 25.09 | N/A |
| Llama 2 7B (Q8_0, Processing) | 757.64 | N/A |
| Llama 2 7B (Q8_0, Generation) | 42.75 | N/A |
| Llama 2 7B (Q4_0, Processing) | 759.7 | N/A |
| Llama 2 7B (Q4_0, Generation) | 66.31 | N/A |
| Llama 3 8B (Q4KM, Processing) | 678.04 | 3369.24 |
| Llama 3 8B (Q4KM, Generation) | 50.74 | 56.14 |
| Llama 3 8B (F16, Processing) | 751.49 | 4366.64 |
| Llama 3 8B (F16, Generation) | 22.39 | 20.58 |
| Llama 3 70B (Q4KM, Processing) | 62.88 | 306.44 |
| Llama 3 70B (Q4KM, Generation) | 7.53 | 7.33 |
| Llama 3 70B (F16, Processing) | N/A | N/A |
| Llama 3 70B (F16, Generation) | N/A | N/A |

Key Observations:

- Llama 2: We have no data for the RTX 4000 Ada x4 for Llama 2 models, so we cannot compare them directly.

- Llama 3 8B: The RTX 4000 Ada x4 is about 5 times faster than the M3 Max at processing with Q4KM (3369.24 vs. 678.04 tokens/s) and about 5.8 times faster with F16 (4366.64 vs. 751.49). Generation is much closer: the RTX setup leads with Q4KM (56.14 vs. 50.74), while the M3 Max leads with F16 (22.39 vs. 20.58).

- Llama 3 70B: The RTX 4000 Ada x4 keeps its lead in processing (306.44 vs. 62.88 tokens/s, about 4.9 times faster), while generation is nearly identical (7.33 vs. 7.53). The RTX advantage on large models is therefore in prompt processing, not generation. Neither device has F16 data for this model.

- Quantization: On the M3 Max, generation gets faster as precision drops (Llama 2 7B: 25.09 tokens/s at F16, 42.75 at Q8_0, 66.31 at Q4_0), while processing speed barely changes (roughly 758 to 779 tokens/s). The RTX 4000 Ada x4 shows its largest processing lead at F16, which is unquantized half precision rather than a quantization level.
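
The ratios behind these observations are easy to recompute. The sketch below copies the rows where both devices have data from the table and divides one column by the other; the `RESULTS` and `speedup` names are just illustrative.

```python
# Tokens/second copied from the table: (M3 Max, RTX 4000 Ada x4).
RESULTS = {
    "Llama 3 8B Q4KM processing": (678.04, 3369.24),
    "Llama 3 8B Q4KM generation": (50.74, 56.14),
    "Llama 3 8B F16 processing": (751.49, 4366.64),
    "Llama 3 8B F16 generation": (22.39, 20.58),
    "Llama 3 70B Q4KM processing": (62.88, 306.44),
    "Llama 3 70B Q4KM generation": (7.53, 7.33),
}


def speedup(m3_max: float, rtx_x4: float) -> float:
    """How many times faster the RTX setup is; below 1.0 means the M3 Max wins."""
    return rtx_x4 / m3_max


if __name__ == "__main__":
    for name, (m3, rtx) in RESULTS.items():
        print(f"{name:30s} RTX x4 / M3 Max = {speedup(m3, rtx):.2f}x")
```

A ratio well above 1 shows up only in the processing rows; every generation row sits within roughly 10% of parity.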

Why are the Numbers Different?:

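The gap follows from what each phase stresses. Prompt processing handles many tokens in parallel and is limited mainly by raw compute, where four dedicated GPUs with a specialized architecture have a clear advantage over the M3 Max's integrated 40-core GPU. Generation produces one token at a time and has to read the model's weights for every token, so it tends to be limited by memory bandwidth rather than compute. That is why the two setups land so close together on generation.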
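
One common rule of thumb, which is not part of the benchmark data itself, is that generation speed cannot exceed memory bandwidth divided by the size of the model's weights, because every weight is read once per token. The sketch below applies that estimate. It assumes the "400gb" in the M3 Max's name means 400 GB/s of bandwidth and treats Llama 3 8B as roughly 8 billion parameters; both are assumptions, and the function name is illustrative.

```python
def max_generation_tps(bandwidth_gb_s: float,
                       params_billion: float,
                       bits_per_weight: float) -> float:
    """Rough upper bound on tokens/s if every weight is read once per token.

    Ignores the KV cache, activations, and any compute limits, so real
    throughput will be lower than this figure.
    """
    weights_gb = params_billion * bits_per_weight / 8  # billions of bytes
    return bandwidth_gb_s / weights_gb


if __name__ == "__main__":
    # Assumed: 400 GB/s bandwidth, ~8B parameters, 16 bits per weight (F16).
    ceiling = max_generation_tps(400, 8, 16)
    print(f"Estimated ceiling: {ceiling:.1f} tokens/s (measured: 22.39)")
```

Under those assumptions the ceiling for Llama 3 8B at F16 is 25 tokens/s, which sits just above the 22.39 tokens/s measured on the M3 Max.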

Performance Analysis:

Let's dissect the results and explore practical implications for your LLM development projects.

The Apple M3 Max:

Strengths:

- All-Around Performance: The M3 Max matches or comes close to the RTX 4000 Ada x4 in generation speed for every model where both have data, and it does so in a single machine. That makes it a versatile choice for development.

- Unified Memory: The CPU and GPU share one memory pool (up to 128GB on the M3 Max) with 400 GB/s of bandwidth, so large models can be loaded without splitting them across devices.

- Energy Efficiency: Apple's M-series chips are known to be energy-efficient, which keeps power costs down for sustained LLM workloads.

Weaknesses:

- Limited GPU Power: The M3 Max's integrated GPU doesn't match the compute of four dedicated RTX 4000 Ada cards. In this data it processes prompts roughly 5 to 6 times slower, which matters most for long prompts and other computationally intensive tasks.

The NVIDIA RTX 4000 Ada 20GB x4:

Strengths:

- GPU Powerhouse: The RTX 4000 Ada x4 shines in prompt processing, reaching 306.44 tokens/s on Llama 3 70B (Q4KM) against 62.88 for the M3 Max.

- Optimized for AI Workloads: Its specialized architecture is designed to accelerate AI workloads, making it a powerful tool for LLM development.

Weaknesses:

- Lower Memory Capacity: Each RTX 4000 Ada has 20GB of VRAM, for 80GB across all four cards. Any model larger than 20GB has to be split across cards, and a model whose weights exceed 80GB will not fit in VRAM at all.

- Power Consumption: Four discrete GPUs draw more power than a single M3 Max system. The RTX 4000 Ada x4 may result in higher electricity costs for prolonged LLM workloads.
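
A quick way to sanity-check whether a model will fit is to estimate the size of its weights and compare that with total VRAM. The sketch below is a rough estimate only: the 20% overhead for the KV cache and activations and the 4.5 bits per weight used for a 4-bit quantization are illustrative assumptions, not measured values, and the function names are hypothetical.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (billions of bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float,
                 bits_per_weight: float,
                 n_cards: int = 4,
                 vram_per_card_gb: float = 20.0,
                 overhead_fraction: float = 0.2) -> bool:
    """True if weights plus an assumed overhead fit in the combined VRAM.

    overhead_fraction is an illustrative allowance for the KV cache and
    activations; tune it for your own context length and runtime.
    """
    needed = weights_gb(params_billion, bits_per_weight) * (1 + overhead_fraction)
    return needed <= n_cards * vram_per_card_gb


if __name__ == "__main__":
    for label, params, bits in [
        ("8B at F16", 8, 16),
        ("70B at ~4.5 bits", 70, 4.5),
        ("70B at F16", 70, 16),
    ]:
        size = weights_gb(params, bits)
        print(f"{label:18s} weights ~{size:6.1f} GB, fits in 4 x 20GB: "
              f"{fits_in_vram(params, bits)}")
```

By this estimate a 70B model at F16 needs about 140GB for weights alone, well beyond 80GB, which is consistent with the N/A entries for that row in the table.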

Practical Recommendations for Your Use Cases:

If you...

...want a versatile, budget-friendly option with great memory:

- Go for the M3 Max: It's perfect for general development tasks, handling smaller and medium-sized LLMs efficiently. It's energy-efficient, saving you money on power consumption.

...prioritize speed and performance for large models:

- Choose the RTX 4000 Ada x4: It's a beast at prompt processing for large models, especially at F16 precision. However, be aware of the higher power consumption, and remember that generation speed is about the same as on the M3 Max.

...want to experiment with various quantization levels:

- The M3 Max is a good option: This benchmark has M3 Max results at F16, Q8_0, Q4_0, and Q4KM, and its generation speed rises steadily as precision drops, so you can trade accuracy for speed in predictable steps.

FAQ (Frequently Asked Questions)

What is quantization, and why is it important for LLM development?

Quantization is like a diet for your LLM. It reduces the size of your model by using fewer bits to represent the numbers inside, usually at a small cost in accuracy. This makes the model smaller and faster to run, which helps if you have limited memory or need to run your model on a device with less processing power.
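
To show the idea in miniature, the sketch below quantizes a few floating-point weights to 8-bit integers with a single shared scale, then converts them back. It is a simplified illustration with made-up weights and hypothetical function names; the Q8_0 and Q4_0 formats in the table are block-wise schemes whose details differ.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 1.0]  # made-up example weights
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    print("quantized:", q)
    print("restored: ", [round(r, 4) for r in restored])
```

Each weight now needs 8 bits instead of 16 or 32, and the restored values differ from the originals by at most half a quantization step. That small error is the accuracy cost of the smaller model.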

Should I choose CPU or GPU for my LLM development?

It depends on your needs! CPUs are good for general-purpose tasks and are often more energy-efficient than GPUs. GPUs are specialized for AI workloads and are often better for handling computationally intensive tasks, like training large LLMs.

What are the trade-offs between using a local device and the cloud for LLM development?

Local devices offer more control and privacy but have limited resources. Cloud services provide greater scalability but come with higher costs and potential latency issues.

How can I optimize my LLM's token speed generation?

- Experiment with quantization: Try different levels of quantization to find a balance between accuracy and speed.

- Use a GPU (when possible): Dedicated GPUs can significantly improve processing speed for large models.

- Optimize your code: Make sure your code is well-written and efficient. This can minimize unnecessary computations.

Keywords:

Apple M3 Max, NVIDIA RTX 4000 Ada, LLM, Token Speed Generation, AI Development, Benchmark, Llama 2, Llama 3, Quantization, CPU, GPU, Memory, Power Consumption, Local Devices, Cloud, Efficiency.