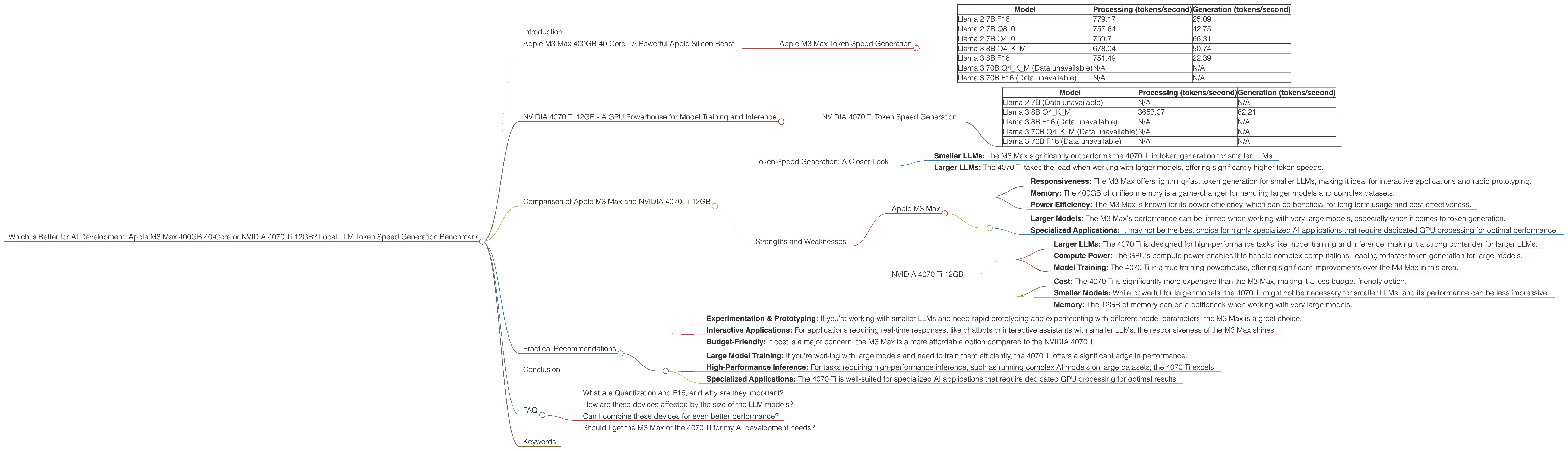

Which is Better for AI Development: Apple M3 Max 400GB/s 40-Core GPU or NVIDIA 4070 Ti 12GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of large language models (LLMs) is exploding, and with it, the need for powerful hardware to run these models efficiently. For developers and researchers diving into the depths of AI, the choice between a powerful Apple M3 Max chip and a high-end NVIDIA 4070 Ti GPU can be a tough one.

This article puts these two titans head-to-head, comparing their token generation performance on popular LLMs like Llama 2 and Llama 3. We'll delve into benchmark results, analyze the strengths and weaknesses of each device, and help you decide which one is the best fit for your AI development needs. So, buckle up, grab your favorite cup of coffee, and let's dive into the exciting world of LLM hardware!

Apple M3 Max 400GB/s 40-Core GPU - A Powerful Apple Silicon Beast

The Apple M3 Max configuration tested here has a 40-core GPU and 400GB/s of unified memory bandwidth. Note that the "400gb" in the title refers to bandwidth, not capacity: the M3 Max supports up to 128GB of unified memory. That bandwidth and memory pool let it handle demanding LLM workloads, but how does it fare in the crucial arena of token generation?

Apple M3 Max Token Speed Generation

The M3 Max delivers solid token generation speeds on smaller LLMs. With Llama 2 7B at F16, it reaches 779.17 tokens/second for prompt processing and 25.09 tokens/second for generation, and quantizing the same model to Q4_0 raises generation to 66.31 tokens/second. That makes the M3 Max a good option for developers working with smaller LLMs who prioritize responsiveness and quick turnaround times.

| Model | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama 2 7B F16 | 779.17 | 25.09 |
| Llama 2 7B Q8_0 | 757.64 | 42.75 |
| Llama 2 7B Q4_0 | 759.7 | 66.31 |
| Llama 3 8B Q4KM | 678.04 | 50.74 |
| Llama 3 8B F16 | 751.49 | 22.39 |
| Llama 3 70B Q4KM (Data unavailable) | N/A | N/A |
| Llama 3 70B F16 (Data unavailable) | N/A | N/A |

Key Takeaways:

- Smaller LLMs: The M3 Max handles Llama 2 7B and Llama 3 8B well, generating between 22.39 and 66.31 tokens/second depending on quantization.

- Responsiveness: At 4-bit quantization, 50 to 66 tokens/second is comfortably fast for interactive use.

- Memory: Unified memory (up to 128GB on the M3 Max) leaves room for models that exceed a typical GPU's VRAM, and its 400GB/s of bandwidth largely sets how fast tokens are generated.
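To make the two table columns concrete, here is a minimal sketch that turns processing and generation speeds into a rough response time. The function name and the 512-token prompt with a 256-token reply are assumptions chosen for illustration, and the model ignores load time and other overheads.

```python
def estimate_response_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough end-to-end time: read the prompt, then emit the reply token by token."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time

# M3 Max figures from the table above: (processing, generation) in tokens/second.
M3_MAX = {
    "Llama 2 7B F16": (779.17, 25.09),
    "Llama 2 7B Q4_0": (759.7, 66.31),
    "Llama 3 8B Q4KM": (678.04, 50.74),
}

# Hypothetical workload: a 512-token prompt and a 256-token reply.
for model, (processing_tps, generation_tps) in M3_MAX.items():
    seconds = estimate_response_seconds(512, 256, processing_tps, generation_tps)
    print(f"{model}: {seconds:.1f} s")
```

For a workload like this, generation accounts for most of the time, which is why the generation column matters most for chat-style use.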

NVIDIA 4070 Ti 12GB - A GPU Powerhouse for Model Training and Inference

The NVIDIA 4070 Ti is a powerful GPU designed for high-performance tasks like model training and inference. Its highly parallel architecture is built for the matrix computations at the heart of LLMs, making it a strong contender for any model that fits in its 12GB of VRAM.

NVIDIA 4070 Ti Token Speed Generation

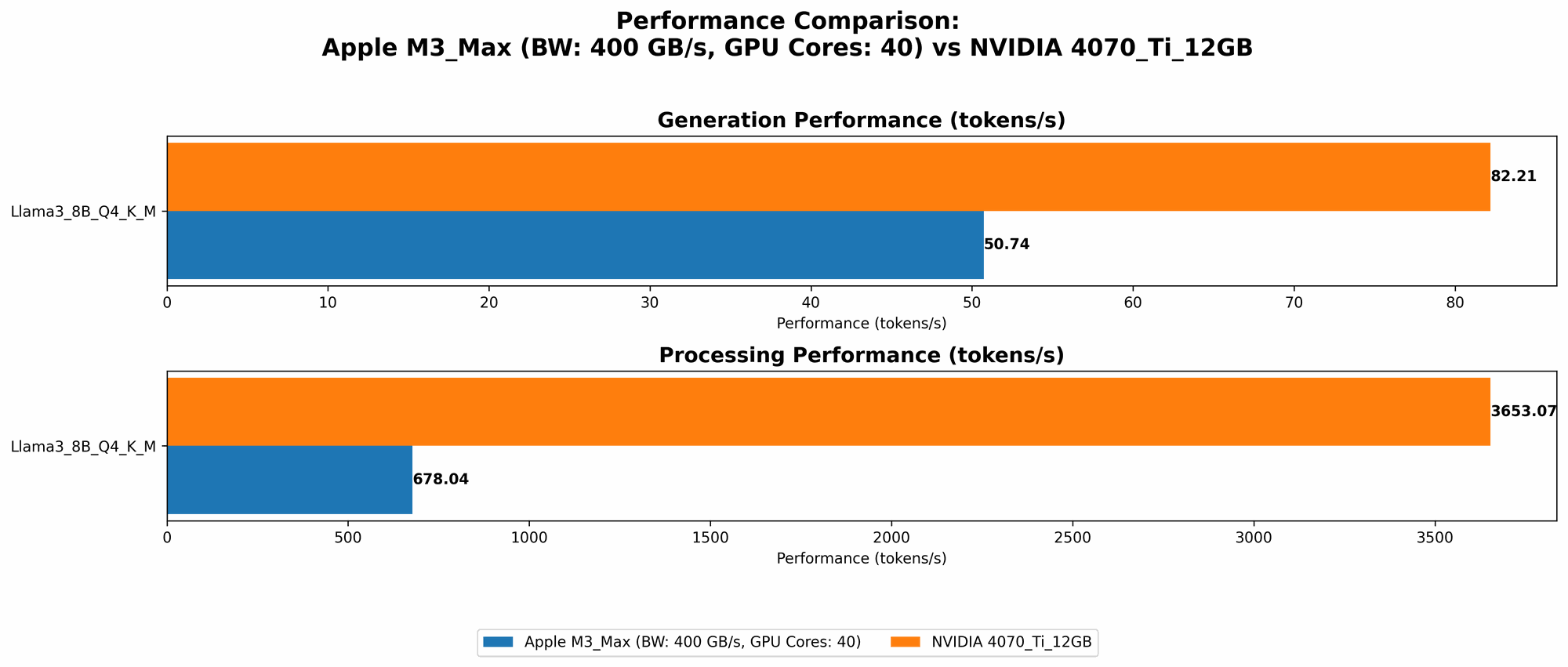

The 4070 Ti results are limited: the only configuration with data is Llama 3 8B Q4KM. On that model it is clearly ahead of the M3 Max, at 3653.07 vs. 678.04 tokens/second for processing and 82.21 vs. 50.74 tokens/second for generation. The remaining rows have no data, so this benchmark cannot say how the card fares on Llama 2 7B, on F16 weights, or on 70B models.

| Model | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama 2 7B (Data unavailable) | N/A | N/A |
| Llama 3 8B Q4KM | 3653.07 | 82.21 |
| Llama 3 8B F16 (Data unavailable) | N/A | N/A |
| Llama 3 70B Q4KM (Data unavailable) | N/A | N/A |
| Llama 3 70B F16 (Data unavailable) | N/A | N/A |

Key Takeaways:

- Llama 3 8B: On the one model with results for both devices, the 4070 Ti outperforms the M3 Max, with roughly 5.4 times the processing speed and 1.6 times the generation speed. Its parallelized architecture excels at handling complex computations.

- Model Training: The 4070 Ti is generally regarded as the stronger option for model training, although this benchmark measures inference only.

- Lower Memory: The 12GB of VRAM is a limitation for larger models and is a plausible reason the F16 and 70B rows have no data.
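The 12GB limit can be checked with quick arithmetic. The sketch below estimates the size of the weights alone, assuming nominal parameter counts (7, 8, and 70 billion) and the bits-per-weight values of llama.cpp's F16, Q8_0, and Q4_0 formats. Q4KM is a different 4-bit scheme and is not modeled here, and real usage needs extra room for the context cache, so treat the results as lower bounds.

```python
# Bits stored per weight. F16 is exact; the Q8_0 and Q4_0 values include the
# per-block scale that llama.cpp stores alongside every 32 weights.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

def weight_gb(params_billion, fmt):
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8

def fits(params_billion, fmt, memory_gb):
    """True if the weights alone fit; real usage needs extra room on top."""
    return weight_gb(params_billion, fmt) <= memory_gb

VRAM_GB = 12  # NVIDIA 4070 Ti
for params in (7, 8, 70):  # nominal parameter counts, in billions
    for fmt in BITS_PER_WEIGHT:
        size = weight_gb(params, fmt)
        verdict = "fits" if fits(params, fmt, VRAM_GB) else "does not fit"
        print(f"{params}B {fmt}: {size:.1f} GB, {verdict} in {VRAM_GB} GB")
```

Under these assumptions, 7B and 8B models fit in 12GB only when quantized, and a 70B model does not fit at any of the three formats.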

Comparison of Apple M3 Max and NVIDIA 4070 Ti 12GB

Token Speed Generation: A Closer Look

Both the M3 Max and the 4070 Ti have strengths in different areas, making the choice depend on your specific needs. The only model with results for both devices is Llama 3 8B Q4KM, and there the 4070 Ti is faster. The M3 Max, on the other hand, has results for F16 models, for which no 4070 Ti data is available.

- Models that fit in 12GB: On Llama 3 8B Q4KM, the 4070 Ti leads in both generation (82.21 vs. 50.74 tokens/second) and processing (3653.07 vs. 678.04 tokens/second).

- Models beyond 12GB: The M3 Max runs Llama 2 7B and Llama 3 8B at F16 at 22 to 25 tokens/second; the benchmark has no 4070 Ti data for these configurations.
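Llama 3 8B Q4KM is the only row with numbers in both tables, so it is the only basis for a direct comparison. A small sketch of that calculation follows; the dictionary and function names are illustrative.

```python
# Llama 3 8B Q4KM results from the two tables above (tokens/second).
M3_MAX = {"processing": 678.04, "generation": 50.74}
RTX_4070_TI = {"processing": 3653.07, "generation": 82.21}

def speedup(candidate, baseline):
    """Ratio of candidate to baseline throughput for each phase."""
    return {phase: candidate[phase] / baseline[phase] for phase in baseline}

ratios = speedup(RTX_4070_TI, M3_MAX)
for phase, ratio in ratios.items():
    print(f"{phase}: 4070 Ti is {ratio:.2f}x the M3 Max")
```

The gap is much wider for prompt processing than for generation, so the practical difference depends on whether a workload is dominated by long prompts or long replies.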

Strengths and Weaknesses

Apple M3 Max

Strengths:

- Responsiveness: The M3 Max generates 50 to 66 tokens/second on 4-bit 7B and 8B models, which suits interactive applications and rapid prototyping.

- Memory: Unified memory (up to 128GB, at 400GB/s) is a game-changer for loading models that would not fit in a 12GB GPU.

- Power Efficiency: The M3 Max is known for its power efficiency, which can be beneficial for long-term usage and cost-effectiveness.

Weaknesses:

- Larger Models: Generation slows as the weights grow. At F16 the M3 Max generates 22 to 25 tokens/second, compared with 50 to 66 at 4-bit.

- Specialized Applications: It may not be the best choice for AI applications that depend on CUDA or other NVIDIA-specific tooling.

NVIDIA 4070 Ti 12GB

Strengths:

- Inference Speed: On Llama 3 8B Q4KM, the 4070 Ti generates 82.21 tokens/second, about 1.6 times the M3 Max's 50.74.

- Compute Power: The GPU's compute power shows most clearly in prompt processing, where it reaches 3653.07 tokens/second against the M3 Max's 678.04.

- Model Training: The 4070 Ti is generally regarded as the stronger training platform, although this benchmark does not measure training.

Weaknesses:

- Cost: The card itself costs far less than a Mac with an M3 Max, but it needs a desktop PC around it, which adds to the total.

- Limited Coverage: Only one configuration in this benchmark has 4070 Ti results, so its performance on other models and quantization levels is unconfirmed here.

- Memory: The 12GB of VRAM is a bottleneck for larger models. A 7B model at F16 needs about 14GB for its weights alone.

Practical Recommendations

When to Choose the Apple M3 Max:

- Experimentation & Prototyping: If you're working with smaller LLMs and need to prototype rapidly and experiment with different model parameters, the M3 Max is a great choice.

- Interactive Applications: For applications requiring real-time responses, like chatbots or interactive assistants with smaller LLMs, the responsiveness of the M3 Max shines.

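- Models Beyond 12GB: If you need to run F16 or larger models that do not fit in the 4070 Ti's 12GB of VRAM, the M3 Max's unified memory is the deciding factor. It is not the budget option, though: a Mac with an M3 Max costs considerably more than a 4070 Ti card.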

When to Choose the NVIDIA 4070 Ti:

- Model Training: If you need to train or fine-tune models that fit in 12GB of VRAM, the 4070 Ti is the more conventional choice.

- High-Performance Inference: For throughput-sensitive inference on models that fit in 12GB, such as Llama 3 8B Q4KM, the 4070 Ti is the faster device, especially on long prompts.

- Specialized Applications: The 4070 Ti is well-suited for specialized AI applications that require dedicated GPU processing for optimal results.

Conclusion

The choice between the Apple M3 Max and the NVIDIA 4070 Ti depends heavily on your specific needs and budget. Both devices offer unique advantages and drawbacks and are powerful tools for AI development. The M3 Max offers solid speed on 7B and 8B models and enough unified memory for models that exceed 12GB, while the 4070 Ti is faster on the one model benchmarked on both devices and is the more conventional choice for training. Remember, the best choice is the one that aligns with your project's goals and constraints.

FAQ

What are Quantization and F16, and why are they important?

Quantization is a technique used to reduce the size of a neural network model by using fewer bits to represent the weights and activations. This results in smaller model files, faster loading times, and often faster generation, which is particularly beneficial for deploying models on devices with limited memory. In the M3 Max results above, for example, Llama 2 7B generation rises from 25.09 tokens/second at F16 to 66.31 at Q4_0.

Example: Imagine storing a number in 32 bits (a standard single-precision float). Quantization might reduce that to 8 bits, making the data a quarter of the size and faster to move through memory.
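The idea can be sketched in a few lines of Python. This is a simplified symmetric 8-bit scheme with one scale for the whole list, written for illustration only. It is not the exact Q8_0 or Q4_0 layout used in the benchmark, and the weight values are made up.

```python
def quantize_int8(values):
    """Symmetric 8-bit quantization: one float scale plus small integers."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [round(v / scale) for v in values]
    return scale, quantized

def dequantize(scale, quantized):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.12, -0.5, 0.33, 0.9, -0.77]  # made-up values for illustration
scale, q = quantize_int8(weights)
restored = dequantize(scale, q)
worst_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q)
print(f"worst rounding error: {worst_error:.4f} (bounded by scale / 2 = {scale / 2:.4f})")
```

Each weight now needs one byte instead of four, at the cost of a small rounding error that is bounded by half the scale.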

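F16 is a data type that uses 16 bits to represent a floating-point number, which is a common format for working with LLMs and other machine learning models. It takes half the space of a 32-bit float and is generally considered to be a good balance between performance and accuracy. In the benchmark tables, the F16 rows serve as the unquantized baseline.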
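Python's standard `struct` module supports IEEE 754 half precision through the `e` format code, so the size and rounding behavior of F16 can be inspected directly. The helper name below is illustrative.

```python
import struct

def to_f16_and_back(x):
    """Round-trip a Python float through IEEE 754 half precision."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

print(struct.calcsize("<e"), "bytes per F16 value")
print(struct.calcsize("<f"), "bytes per 32-bit float")
print(to_f16_and_back(0.1))  # close to 0.1, but not exactly equal
```

The round trip shows the trade-off: half the storage of a 32-bit float, with only about three significant decimal digits of precision.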

How are these devices affected by the size of the LLM models?

The size of the model significantly impacts performance. Smaller models (like Llama 2 7B) are generally faster to process and generate tokens, while larger models (like Llama 3 70B) require more processing power and memory. The model also has to fit in memory: the 4070 Ti is limited to 12GB of VRAM, while the M3 Max can draw on a much larger pool of unified memory.
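A common rule of thumb, used here as an assumption rather than something this benchmark establishes, is that generation is limited by memory bandwidth: producing each token requires reading every weight once, so tokens/second cannot exceed bandwidth divided by model size. The sketch below applies it to the M3 Max, assuming the "400gb" in the title denotes 400GB/s of bandwidth, a nominal 7 billion parameters, and 16, 8.5, and 4.5 bits per weight for F16, Q8_0, and Q4_0.

```python
BANDWIDTH_GB_PER_S = 400  # assumed: M3 Max memory bandwidth named in the title

# Weight sizes in GB, assuming a nominal 7 billion parameters and
# 16, 8.5, and 4.5 bits per weight for F16, Q8_0, and Q4_0.
WEIGHT_GB = {"F16": 7 * 16 / 8, "Q8_0": 7 * 8.5 / 8, "Q4_0": 7 * 4.5 / 8}

# Measured Llama 2 7B generation speeds on the M3 Max, from the table above.
MEASURED_TPS = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}

def generation_ceiling(weight_gb, bandwidth_gb_per_s):
    """Upper bound on tokens/second if each token reads every weight once."""
    return bandwidth_gb_per_s / weight_gb

for fmt, size in WEIGHT_GB.items():
    ceiling = generation_ceiling(size, BANDWIDTH_GB_PER_S)
    print(f"{fmt}: ceiling {ceiling:.1f} tok/s, measured {MEASURED_TPS[fmt]} tok/s")
```

Under these assumptions each measured figure falls below its estimated ceiling, and the ceiling rises as the weights shrink, which is consistent with quantized models generating faster.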

Can I combine these devices for even better performance?

While combining these devices might seem promising, it's not a straightforward process. The most common and effective way to combine hardware is through distributed training, where multiple devices are used to train a single model in parallel. This can significantly improve the efficiency of model training, but it requires specialized software and expertise.

Should I get the M3 Max or the 4070 Ti for my AI development needs?

The answer depends on the specific tasks you plan to perform. If you want one machine for prototyping and experimentation, including models that need more than 12GB, the M3 Max is a decent choice. If your models fit in 12GB of VRAM and you care most about inference speed or training, the 4070 Ti is a worthy investment.

Keywords

Apple M3 Max, NVIDIA 4070 Ti, LLM, Large Language Model, AI, Token Generation, Benchmark, Performance, Llama 2, Llama 3, GPU, CPU, Quantization, F16, Processing, Generation, AI Development, Hardware, Model Training, Inference, Responsiveness, Memory, Cost, Practical Recommendations, Distributed Training.