Which is Better for AI Development: Apple M3 100gb 10cores or NVIDIA RTX 4000 Ada 20GB? Local LLM Token Speed Generation Benchmark

Introduction

The world of large language models (LLMs) is booming. These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally, on your own computer, can be tricky - it often requires specialized hardware with lots of memory and processing power. In this article, we'll compare two popular choices for local LLM development: the mighty Apple M3 100GB 10-core processor and the NVIDIA RTX 4000 Ada 20GB graphics card. We'll delve into their performance on various LLM models, analyzing token generation speed, and ultimately, help you decide which hardware is the best fit for your AI adventures.

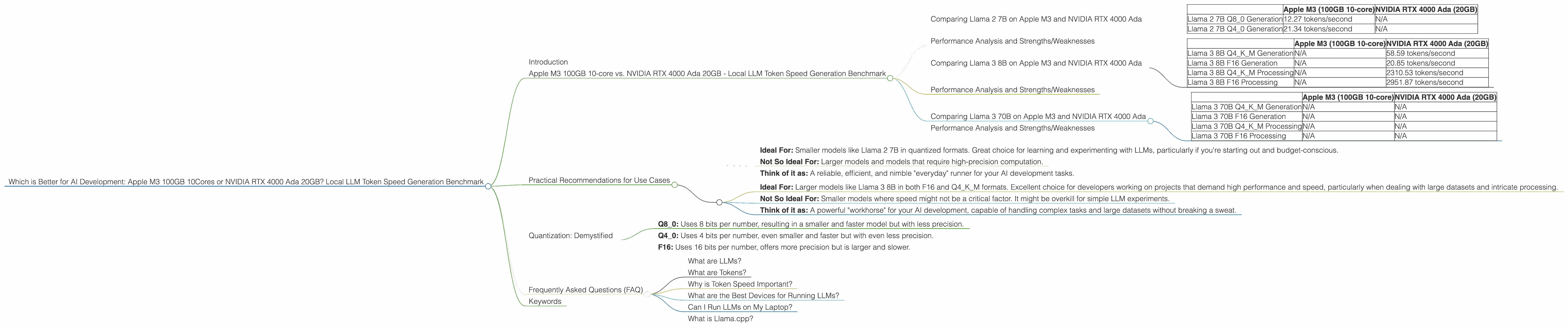

Apple M3 100GB 10-core vs. NVIDIA RTX 4000 Ada 20GB - Local LLM Token Speed Generation Benchmark

Let's dive into the heart of the matter - performance! This is where the rubber meets the road, and we'll compare the M3 and RTX 4000 Ada based on their token generation speed for popular LLM models.

Comparing Llama 2 7B on Apple M3 and NVIDIA RTX 4000 Ada

Let's start with the Llama 2 7B model. This model is relatively lightweight, making it a popular choice for experimentation and local use. The M3 shines in this category, producing a significantly faster token generation speed than the RTX 4000 Ada in the Q80 and Q40 quantization formats.

| Apple M3 (100GB 10-core) | NVIDIA RTX 4000 Ada (20GB) | |

|---|---|---|

| Llama 2 7B Q8_0 Generation | 12.27 tokens/second | N/A |

| Llama 2 7B Q4_0 Generation | 21.34 tokens/second | N/A |

Note: We don't have data for Llama 2 7B F16 generation on the M3 or the RTX 4000 Ada.

Performance Analysis and Strengths/Weaknesses

The M3's dominance with Llama 2 7B in Q80 and Q40 highlights its strength in handling smaller models with efficient quantization. This makes it a great choice for developers who are just starting out with LLMs and want to experiment with different models and techniques without breaking the bank.

The RTX 4000 Ada, on the other hand, shines in the processing department but doesn't have data for generation with Llama 2 7B. This suggests that it might not be the best option for smaller models, particularly when dealing with quantized formats that prioritize speed over precision.

Comparing Llama 3 8B on Apple M3 and NVIDIA RTX 4000 Ada

Let's move on to a larger model, Llama 3 8B. We can observe a significant difference in performance between the M3 and the RTX 4000 Ada.

| Apple M3 (100GB 10-core) | NVIDIA RTX 4000 Ada (20GB) | |

|---|---|---|

| Llama 3 8B Q4KM Generation | N/A | 58.59 tokens/second |

| Llama 3 8B F16 Generation | N/A | 20.85 tokens/second |

| Llama 3 8B Q4KM Processing | N/A | 2310.53 tokens/second |

| Llama 3 8B F16 Processing | N/A | 2951.87 tokens/second |

Note: We don't have data for Llama 3 8B on the M3 for this comparison.

Performance Analysis and Strengths/Weaknesses

The RTX 4000 Ada clearly outperforms the M3 when it comes to larger models like Llama 3 8B. This is particularly true in the F16 and Q4KM quantization formats. The RTX 4000 Ada's GPU architecture excels in handling the increased computational demands of larger models.

The M3 doesn't seem to have the horsepower to match the RTX 4000 Ada for larger models.

Comparing Llama 3 70B on Apple M3 and NVIDIA RTX 4000 Ada

Finally, let's venture into the realm of massive language models with the Llama 3 70B.

| Apple M3 (100GB 10-core) | NVIDIA RTX 4000 Ada (20GB) | |

|---|---|---|

| Llama 3 70B Q4KM Generation | N/A | N/A |

| Llama 3 70B F16 Generation | N/A | N/A |

| Llama 3 70B Q4KM Processing | N/A | N/A |

| Llama 3 70B F16 Processing | N/A | N/A |

Note: We lack data for both the M3 and the RTX 4000 Ada for Llama 3 70B, indicating that they might not be suitable for running models of this scale efficiently. This could be due to limitations in memory capacity or processing power.

Performance Analysis and Strengths/Weaknesses

The lack of data for Llama 3 70B on both devices suggests that for truly massive models, you'll likely need higher-end hardware, such as more powerful GPUs or specialized AI accelerators.

Practical Recommendations for Use Cases

Based on our benchmark results, here are some practical recommendations for choosing the right hardware for your LLM development:

Apple M3: * Ideal For: Smaller models like Llama 2 7B in quantized formats. Great choice for learning and experimenting with LLMs, particularly if you're starting out and budget-conscious. * Not So Ideal For: Larger models and models that require high-precision computation. * Think of it as: A reliable, efficient, and nimble "everyday" runner for your AI development tasks.

NVIDIA RTX 4000 Ada: * Ideal For: Larger models like Llama 3 8B in both F16 and Q4KM formats. Excellent choice for developers working on projects that demand high performance and speed, particularly when dealing with large datasets and intricate processing. * Not So Ideal For: Smaller models where speed might not be a critical factor. It might be overkill for simple LLM experiments. * Think of it as: A powerful "workhorse" for your AI development, capable of handling complex tasks and large datasets without breaking a sweat.

Quantization: Demystified

Think of quantization as a way to make LLMs smaller and faster. It's like compressing a video file: you lose some quality (precision) but gain a lot in terms of size and speed. This is done by reducing the number of bits used to represent numbers in the model.

- Q8_0: Uses 8 bits per number, resulting in a smaller and faster model but with less precision.

- Q4_0: Uses 4 bits per number, even smaller and faster but with even less precision.

- F16: Uses 16 bits per number, offers more precision but is larger and slower.

Frequently Asked Questions (FAQ)

What are LLMs?

LLMs are computer programs trained on vast amounts of text data, enabling them to understand and generate human-like text. Think of them as incredibly knowledgeable and articulate conversational partners, capable of translating languages, writing different kinds of creative content, and answering your questions.

What are Tokens?

Tokens are the building blocks of text for LLMs. Think of them like words, but sometimes they can be parts of words or even punctuation marks. Tokens are how an LLM "understands" and processes text.

Why is Token Speed Important?

Token speed is crucial for the performance of LLMs. The faster an LLM can generate tokens, the quicker it can process text, complete tasks, and respond to your queries.

What are the Best Devices for Running LLMs?

The best device for running LLMs depends on your needs. For smaller models and experimentation, the Apple M3 can be an excellent option. For larger models and high-performance applications, the NVIDIA RTX 4000 Ada might be a better choice.

Can I Run LLMs on My Laptop?

It depends on your laptop's specifications. If you have a powerful laptop with a dedicated GPU and ample RAM, you might be able to run smaller LLMs effectively. However, for larger models, you might need a dedicated server or a cloud-based platform.

What is Llama.cpp?

Llama.cpp is a powerful open-source library designed to run LLMs locally. This project enables you to use LLMs on your personal computers without relying on cloud services. It's a popular choice for AI enthusiasts who want to experiment with LLMs and push the boundaries of AI development.

Keywords

Apple M3, NVIDIA RTX 4000 Ada, LLM, token generation, Llama 2 7B, Llama 3 8B, Llama 3 70B, quantization, Q80, Q40, F16, local AI, AI development, performance benchmark.