Which is Better for AI Development: Apple M3 100GB 10 Cores or NVIDIA A100 PCIe 80GB? Local LLM Token Generation Speed Benchmark

Introduction

Large language models (LLMs) are attracting a lot of excitement, and developers are constantly searching for the best hardware to fuel their AI projects. This article compares two contenders: the Apple M3 (100GB, 10 cores) and the NVIDIA A100 PCIe 80GB GPU. The device you choose for LLM development can significantly affect performance, cost, and workflow. Below, we compare how fast each one processes and generates tokens for different LLMs, using real benchmark data.

The Showdown: Token Generation Speed

This section looks at how fast each device processes and generates tokens for various LLMs. We'll go through the benchmark data and draw out the strengths and weaknesses of each contender.

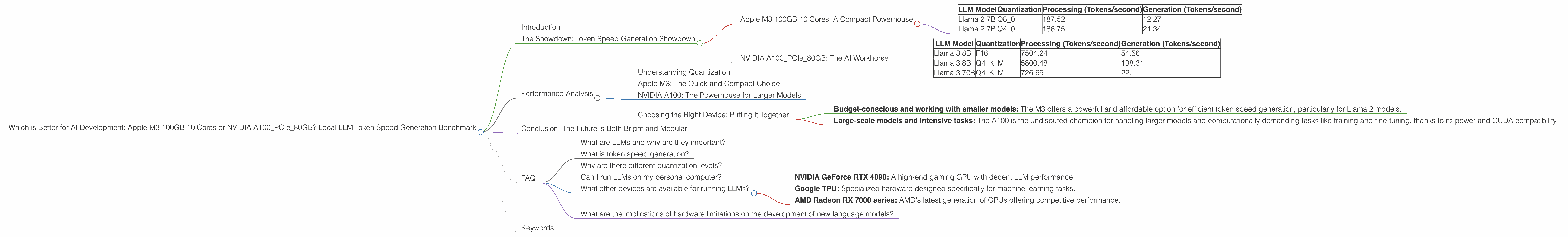

Apple M3 100GB 10 Cores: A Compact Powerhouse

The Apple M3 configuration benchmarked here has 100 GB/s of memory bandwidth and a 10-core GPU, which is what the "100GB 10 Cores" label refers to (the chip does not have 100 GB of memory). It's a relatively new chip, but its ability to run LLMs locally has quickly attracted attention within the developer community.

Focusing on Llama 2 Models

We'll look at the performance of the M3 with Llama 2 models, comparing two quantization levels (Q8_0 and Q4_0).

- Llama 2 7B: Prompt processing speed is nearly identical at both quantization levels, while Q4_0 generates tokens about 1.7 times faster than Q8_0.

  - Processing (Q8_0): 187.52 tokens per second.

  - Processing (Q4_0): 186.75 tokens per second.

  - Generation (Q8_0): 12.27 tokens per second.

  - Generation (Q4_0): 21.34 tokens per second.

Here's a summary table to visualize the M3's performance:

| LLM Model | Quantization | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 187.52 | 12.27 |
| Llama 2 7B | Q4_0 | 186.75 | 21.34 |
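To put the quantization gap in numbers, the figures from the M3 table can be compared directly. The snippet below is a minimal sketch: the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool, and the values are copied from the table.

```python
# Benchmark figures copied from the M3 table (tokens per second).
m3_llama2_7b = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}


def speedup(results, metric, faster="Q4_0", baseline="Q8_0"):
    """Return how many times faster `faster` is than `baseline` for one metric."""
    return results[faster][metric] / results[baseline][metric]


generation_speedup = speedup(m3_llama2_7b, "generation")
processing_speedup = speedup(m3_llama2_7b, "processing")

print(f"Generation: Q4_0 runs at {generation_speedup:.2f}x the speed of Q8_0")
print(f"Processing: Q4_0 runs at {processing_speedup:.2f}x the speed of Q8_0")
```

On these figures, the smaller Q4_0 format mainly helps generation, while prompt processing barely changes.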

M3 Strengths:

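- Compact and Efficient: The M3 is a laptop-class chip, so it runs LLMs without dedicated server power or cooling.

- Unified Memory: The CPU and GPU share a single memory pool with 100 GB/s of bandwidth, so model weights do not need to be copied into separate GPU memory.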

- Cost-Effective: Compared to the A100, the M3 is more budget-friendly, making it an attractive option for developers and small teams.

M3 Weaknesses:

- No CUDA Support: Apple silicon does not run CUDA. Frameworks use Apple's Metal API instead, which can limit compatibility with LLM libraries and frameworks that are built around CUDA.

- No Llama 3 Data: We don't have data for Llama 3 models on the M3 device.

NVIDIA A100 PCIe 80GB: The AI Workhorse

The NVIDIA A100 is a workhorse in the AI world, known for its raw processing power and its extensive support for deep learning frameworks. It has been the go-to GPU for training and running large language models for years.

Exploring Llama 3 Models

The A100 shines with the Llama 3 family, showing strong performance at both F16 precision and Q4_K_M quantization.

- Llama 3 8B:

  - Generation (F16): 54.56 tokens per second.

  - Generation (Q4_K_M): 138.31 tokens per second.

  - Processing (F16): 7504.24 tokens per second.

  - Processing (Q4_K_M): 5800.48 tokens per second.

- Llama 3 70B:

  - Generation (Q4_K_M): 22.11 tokens per second.

  - Processing (Q4_K_M): 726.65 tokens per second.

A quick glance at the A100's stats:

| LLM Model | Quantization | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|---|
| Llama 3 8B | F16 | 7504.24 | 54.56 |
| Llama 3 8B | Q4_K_M | 5800.48 | 138.31 |
| Llama 3 70B | Q4_K_M | 726.65 | 22.11 |
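Processing and generation rates combine into a rough per-request time: the prompt is processed first, then the answer is generated token by token. The sketch below applies that arithmetic to the A100 figures. The `estimate_seconds` helper and the 1,000-token prompt with a 250-token answer are hypothetical choices for illustration, and the estimate ignores overheads such as model loading.

```python
# A100 figures copied from the table: (processing, generation) in tokens per second.
a100_results = {
    ("Llama 3 8B", "F16"): (7504.24, 54.56),
    ("Llama 3 8B", "Q4_K_M"): (5800.48, 138.31),
    ("Llama 3 70B", "Q4_K_M"): (726.65, 22.11),
}


def estimate_seconds(processing_tps, generation_tps, prompt_tokens, output_tokens):
    """Rough request time: time to process the prompt plus time to generate the output."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload: a 1,000-token prompt and a 250-token answer.
for (model, quant), (processing_tps, generation_tps) in a100_results.items():
    seconds = estimate_seconds(processing_tps, generation_tps,
                               prompt_tokens=1000, output_tokens=250)
    print(f"{model} {quant}: ~{seconds:.1f} s")
```

For a workload like this one, generation dominates the total, so the generation column matters most for chat-style use.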

A100 Strengths:

- Proven Powerhouse: The A100 has been the go-to for training and inference of LLMs, offering robust performance and stability.

- Native CUDA Support: The A100 runs CUDA natively, so CUDA-based libraries and frameworks work without workarounds.

- Widely Supported: The A100 is compatible with numerous LLM platforms and frameworks, including TensorFlow, PyTorch, and more.

A100 Weaknesses:

- Price Tag: The A100's high price tag may be a barrier for budget-conscious developers.

- Power Consumption: The A100 is a power-hungry beast, requiring substantial power supply and cooling infrastructure.

- No Data for Llama 2: We lack benchmark data for Llama 2 models on the A100.

Performance Analysis

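Let's dissect the token speed data and draw some conclusions about the best device for specific use cases. Keep in mind that the two data sets do not overlap: the M3 was measured only on Llama 2 7B and the A100 only on Llama 3 models, so the numbers are not a like-for-like comparison.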

Understanding Quantization

Quantization helps reduce the size of LLMs and optimize their performance, especially on devices with limited memory. It's like compressing an image to save space, but for LLMs.

An LLM is made up of billions of numbers called weights. A high-precision LLM might use 32 bits (or 4 bytes) to store each weight, and F16 uses 16 bits. Quantization reduces that to 8 bits or even 4 bits, making the LLM smaller and faster, at some cost in accuracy.
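The size reduction is simple arithmetic: number of weights times bits per weight, divided by 8 to get bytes. The sketch below applies it to a model of roughly 7 billion parameters. The `model_size_gb` helper is an illustrative name, and the bit widths are nominal: real formats such as Q8_0 and Q4_0 also store scaling data, so actual files are somewhat larger.

```python
def model_size_gb(n_parameters, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return n_parameters * bits_per_weight / 8 / 1e9


n_params = 7e9  # roughly a 7B-parameter model

# Nominal bit widths only; real quantized formats add a little overhead.
for label, bits in [("32-bit", 32), ("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: ~{model_size_gb(n_params, bits):.1f} GB")
```

Halving the bits per weight halves the storage, which is why a 4-bit model fits on hardware that cannot hold the 16-bit version.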

Apple M3: The Quick and Compact Choice

The M3's Llama 2 7B results are its only data point here: about 187 tokens per second for processing and 12 to 21 tokens per second for generation, depending on quantization. That makes it a reasonable fit for developers working on smaller models, or on applications that need a balance between performance and power efficiency.

For those working with the Llama 2 family, the M3 offers a compelling package. However, without data for Llama 3 models, its suitability for larger models remains unknown.

NVIDIA A100: The Powerhouse for Larger Models

The A100's raw computational power shows in the Llama 3 results: it processes thousands of tokens per second on the 8B model and still generates about 22 tokens per second on the 70B model at Q4_K_M. This makes it well suited to tasks that process large amounts of text, such as training, fine-tuning, and inference with large-scale LLMs.

The A100's CUDA support is a significant advantage, making it a preferred choice for developers who want a seamless development workflow with popular AI libraries and frameworks.

Choosing the Right Device: Putting it Together

So, which device reigns supreme? It depends! The choice boils down to your specific needs:

- Budget-conscious and working with smaller models: The M3 offers a capable and affordable option for token generation, particularly for Llama 2 7B models.

- Large-scale models and intensive tasks: The A100 is the stronger choice for handling larger models and computationally demanding tasks like training and fine-tuning, thanks to its power and CUDA compatibility.

Conclusion: The Future is Both Bright and Modular

The AI landscape is constantly shifting, and the choice between the M3 and A100 is just one snapshot of it. The future may bring more powerful processors and specialized AI hardware, offering even more choices for developers.

The key takeaway is that both the M3 and A100 have their distinct strengths and weaknesses, and the best device for you depends on your specific needs, budget, and project goals. The world of AI is exciting and ever-evolving, allowing developers to explore various hardware options to push the boundaries of what's possible with LLMs.

FAQ

What are LLMs and why are they important?

LLMs are computer programs that can understand and generate human-like text. They can answer questions, write stories, translate languages, and much more. LLMs are revolutionizing communication, education, and entertainment.

What is token speed generation?

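Token speed generation refers to how fast a device can process and generate tokens, the individual units of text that LLMs use. The benchmarks in this article report two numbers, both in tokens per second: processing speed (how quickly the model reads its input) and generation speed (how quickly it produces new tokens). Higher is better for both.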
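Tokens per second is just a token count divided by elapsed wall-clock time. The sketch below shows one way to measure it. Here `fake_generate` is a hypothetical stand-in that sleeps instead of calling a real model, and `time_generation` is an illustrative helper, not the method used for the benchmarks in this article.

```python
import time


def tokens_per_second(n_tokens, elapsed_seconds):
    """Throughput: tokens handled divided by the wall-clock time taken."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return n_tokens / elapsed_seconds


def time_generation(generate, n_tokens):
    """Time one call to `generate(n_tokens)` and return its tokens per second."""
    start = time.perf_counter()
    generate(n_tokens)
    elapsed = time.perf_counter() - start
    return tokens_per_second(n_tokens, elapsed)


def fake_generate(n_tokens):
    """Hypothetical stand-in for a real model call; sleeps about 1 ms per token."""
    for _ in range(n_tokens):
        time.sleep(0.001)


print(f"{time_generation(fake_generate, 50):.0f} tokens/second")
```

To measure a real model, pass a function that actually produces `n_tokens` tokens in place of `fake_generate`.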

Why are there different quantization levels?

Quantization levels determine the precision used to represent numbers in an LLM. Lower quantization levels use fewer bits, making the LLM more compact and faster but potentially sacrificing some accuracy.

Can I run LLMs on my personal computer?

Yes! You can run smaller LLM models on your personal computer. However, larger LLM models typically require dedicated hardware like GPUs.

What other devices are available for running LLMs?

Other powerful devices include:

- NVIDIA GeForce RTX 4090: A high-end gaming GPU with decent LLM performance.

- Google TPU: Specialized hardware designed specifically for machine learning tasks.

- AMD Radeon RX 7000 series: AMD's latest generation of GPUs offering competitive performance.

What are the implications of hardware limitations on the development of new language models?

Limitations in hardware can slow down the development of new language models. As LLMs become larger and more complex, they demand more processing power and memory, pushing the limits of current technology. Research and development in hardware are crucial for pushing the boundaries of what's possible with AI.

Keywords

Apple M3, NVIDIA A100, LLM, Large Language Model, Token Speed Generation, Benchmark, Quantization, Llama 2, Llama 3, AI Development, Hardware, GPU, CUDA, Performance, Cost, Efficient, Power Consumption, Budget, Use Cases, Future of AI.