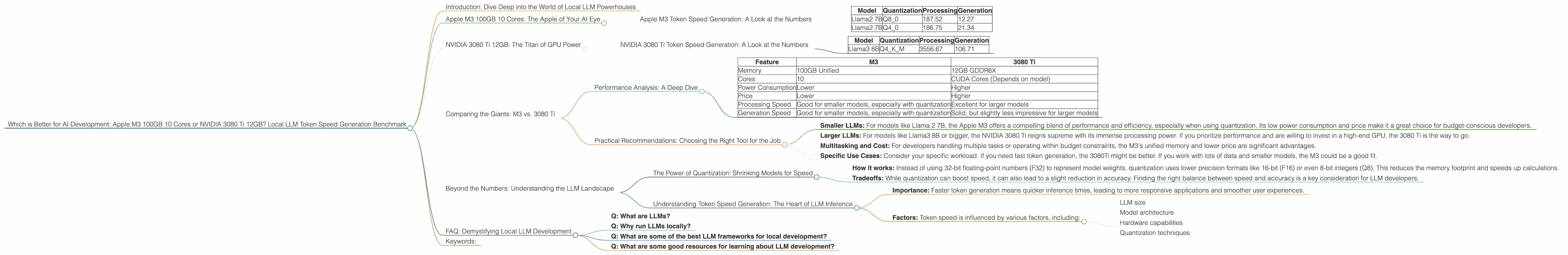

Which is Better for AI Development: Apple M3 (100 GB/s, 10-Core GPU) or NVIDIA 3080 Ti 12GB? Local LLM Token Generation Speed Benchmark

Introduction: Dive Deep into the World of Local LLM Powerhouses

The world of Large Language Models (LLMs) is booming, and developers are increasingly looking to run them locally for speed, privacy, and flexibility. But with a plethora of hardware options, choosing the right device for your LLM project can be tricky.

This article compares two powerful contenders, the Apple M3 chip (100 GB/s memory bandwidth, 10-core GPU) and the NVIDIA 3080 Ti 12GB graphics card, on how fast they generate tokens for LLMs, focusing on the Llama family of models. We'll analyze benchmark data, discuss performance differences, and provide practical recommendations for your LLM development journey.

Think of it as a friendly guide to help you choose the right weapon for your AI adventures!

Apple M3 (100 GB/s, 10-Core GPU): The Apple of Your AI Eye

The base Apple M3 chip combines a 10-core GPU with 100 GB/s of memory bandwidth and up to 24GB of unified memory shared by the CPU and GPU. Its architecture, designed for efficiency, offers solid performance for small, quantized LLMs.

Apple M3 Token Generation Speed: A Look at the Numbers

Let's dive into the benchmark data. The M3 results cover the Llama 2 7B model at two quantization levels, Q8_0 and Q4_0:

Table 1: Apple M3 Token Speed Benchmark (Tokens/Second)

| Model | Quantization | Prompt Processing | Generation |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 187.52 | 12.27 |
| Llama 2 7B | Q4_0 | 186.75 | 21.34 |

Key Observations:

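- Quantization Matters: Moving from Q8_0 to Q4_0 raises generation speed from 12.27 to 21.34 tokens/s (about 1.7×), while prompt processing stays essentially flat at roughly 187 tokens/s. Smaller weights mean less data to read from memory for every generated token.

- Processing vs. Generation: On the M3, prompt processing runs about 9 to 15 times faster than generation. That gap is normal: prompt tokens are processed in parallel batches, while output tokens are produced one at a time, with each step limited largely by memory bandwidth. Generation speed is therefore the figure to watch for applications that produce long responses.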
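A common rule of thumb helps explain the generation numbers: if each new token requires reading roughly all of the model's weights from memory, generation speed can't exceed memory bandwidth divided by model size. The sketch below is an approximation, not a measurement. It assumes the M3's 100 GB/s memory bandwidth, about 6.74 billion parameters for Llama 2 7B, and llama.cpp's nominal 8.5 and 4.5 bits per weight for Q8_0 and Q4_0. It ignores KV-cache traffic and compute time, so it yields an upper bound.

```python
# Rough ceiling on token-generation speed when decoding is memory-bandwidth-bound.
# Assumption: each generated token reads (approximately) every weight once;
# KV-cache traffic and compute time are ignored, so real speeds land below this.


def model_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def max_generation_tps(bandwidth_gb_s: float, size_gb: float) -> float:
    """Tokens/second if every token had to stream all weights from memory."""
    return bandwidth_gb_s / size_gb


LLAMA2_7B_PARAMS = 6.74e9   # assumed parameter count for Llama 2 7B
M3_BANDWIDTH_GB_S = 100.0   # assumed M3 memory bandwidth in GB/s

# quantization -> (nominal llama.cpp bits per weight, measured tokens/s from Table 1)
results = {"Q8_0": (8.5, 12.27), "Q4_0": (4.5, 21.34)}

for quant, (bpw, measured_tps) in results.items():
    size = model_size_gb(LLAMA2_7B_PARAMS, bpw)
    ceiling = max_generation_tps(M3_BANDWIDTH_GB_S, size)
    print(f"{quant}: ~{size:.2f} GB, ceiling ~{ceiling:.1f} tok/s, measured {measured_tps} tok/s")
```

Under these assumptions the ceilings come out near 14 tokens/s (Q8_0) and 26 tokens/s (Q4_0). The measured results sit at roughly 88% and 81% of those ceilings, which is consistent with generation being bandwidth-bound. Prompt processing reuses each weight read across many prompt tokens, so it is limited by compute instead.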

NVIDIA 3080 Ti 12GB: The Titan of GPU Power

The NVIDIA 3080 Ti 12GB is a powerhouse in the world of GPU computing, known for its massive parallel processing capabilities, making it a popular choice for tasks like LLM inference. Let's see how it performs in the token speed generation arena:

NVIDIA 3080 Ti Token Generation Speed: A Look at the Numbers

Table 2: NVIDIA 3080 Ti Token Speed Benchmark (Tokens/Second)

| Model | Quantization | Prompt Processing | Generation |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 3556.67 | 106.71 |

Key Observations:

- Llama 3 Performance: With Llama 3 8B at Q4_K_M quantization (the only configuration in this dataset), the 3080 Ti generates 106.71 tokens/s and processes prompts at 3,556.67 tokens/s.

- Processing Powerhouse: Its prompt processing is roughly 19 times the M3's ~187 tokens/s. Because the models differ (Llama 3 8B vs. Llama 2 7B), treat this as an approximate rather than a like-for-like comparison.

- No Same-Model Comparison: The dataset has no Llama 2 7B result for the 3080 Ti. Even so, its generation speed on the slightly larger Llama 3 8B is about five times the M3's best Llama 2 7B figure (21.34 tokens/s at Q4_0).

Comparing the Giants: M3 vs. 3080 Ti

The choice between the Apple M3 and NVIDIA 3080 Ti depends heavily on your LLM use case and specific needs.

Performance Analysis: A Deep Dive

Table 3: Key Performance Comparisons

| Feature | M3 | 3080 Ti |
|---|---|---|
| Memory | Up to 24GB unified (100 GB/s bandwidth) | 12GB GDDR6X (912 GB/s bandwidth) |
| GPU Cores | 10 | 10,240 CUDA cores |
| Power Consumption | Lower | Higher |
| Price | Lower | Higher |
| Prompt Processing (tokens/s) | ~187 (Llama 2 7B, Q8_0 and Q4_0) | ~3,557 (Llama 3 8B, Q4_K_M) |
| Generation (tokens/s) | 12.27 (Q8_0) to 21.34 (Q4_0), Llama 2 7B | 106.71 (Llama 3 8B, Q4_K_M) |
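Because the 3080 Ti can hold only 12GB in VRAM, a quick size estimate shows whether a model will run entirely on the GPU. The sketch below is hypothetical. It assumes nominal bits per weight (16 for F16, 8.5 for Q8_0, 4.5 for Q4_0), nominal parameter counts, and a made-up fixed 1.5 GB allowance for the KV cache and runtime buffers. It also treats the card's 12GB as 12 × 10^9 bytes, a slightly conservative simplification. Real usage depends on context length and the inference engine.

```python
# Hypothetical check: will a model (plus a rough overhead allowance) fit in a memory budget?

BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}  # nominal llama.cpp bits per weight
OVERHEAD_GB = 1.5  # assumed allowance for KV cache, activations, and runtime buffers


def required_gb(n_params: float, quant: str) -> float:
    """Estimated memory (GB, 1e9 bytes) needed to load and run the model."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9 + OVERHEAD_GB


def fits(n_params: float, quant: str, budget_gb: float) -> bool:
    """True if the estimate stays within the memory budget."""
    return required_gb(n_params, quant) <= budget_gb


VRAM_GB = 12.0  # NVIDIA 3080 Ti

for label, n_params in [("7B", 7e9), ("13B", 13e9), ("70B", 70e9)]:
    for quant in BITS_PER_WEIGHT:
        need = required_gb(n_params, quant)
        print(f"{label} {quant}: ~{need:.1f} GB -> fits in {VRAM_GB:.0f} GB: {fits(n_params, quant, VRAM_GB)}")
```

With these assumptions, a 7B model fits at Q8_0 or Q4_0 but not at F16, a 13B model fits only at Q4_0, and no 70B variant fits. This is why VRAM capacity, not raw speed, is the 3080 Ti's main constraint.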

Strengths and Weaknesses

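Apple M3:

- 👍 Strengths:
  - Up to 24GB of unified memory shared by the CPU and GPU, more than the 3080 Ti's 12GB of VRAM in higher configurations
  - Low power consumption and price
  - Usable speeds for quantized smaller models (21.34 tokens/s generation for Llama 2 7B at Q4_0)
- 👎 Weaknesses:
  - Limited GPU horsepower for demanding LLM tasks
  - Only 100 GB/s of memory bandwidth, which caps generation speed
  - Can struggle with large model inference, especially without quantization

NVIDIA 3080 Ti:

- 👍 Strengths:
  - Far more GPU compute and memory bandwidth (912 GB/s) than the M3
  - Very fast inference for models that fit in 12GB of VRAM, such as 7B to 8B models at 4-bit quantization
  - Fast processing for diverse workloads
- 👎 Weaknesses:
  - Only 12GB of VRAM; larger models must be partially offloaded to the CPU, which slows inference
  - Higher power consumption and price
  - Its speed advantage may matter less for small models in light, single-user workloads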

Practical Recommendations: Choosing the Right Tool for the Job

- Smaller LLMs: For models like Llama 2 7B, the Apple M3 offers a compelling blend of performance and efficiency, especially with 4-bit quantization (21.34 tokens/s at Q4_0). Its low power consumption and price make it a great choice for budget-conscious developers.

- Speed-Focused Work: For Llama 3 8B and similar models that fit in 12GB of VRAM, the NVIDIA 3080 Ti reigns supreme, generating about five times as many tokens per second as the M3. If you prioritize performance and are willing to invest in a high-end GPU, the 3080 Ti is the way to go; for models too large for 12GB, plan on heavier quantization or partial CPU offloading.

- Multitasking and Cost: For developers handling multiple tasks or working within budget constraints, the M3's unified memory, which the CPU and GPU share, and its lower price are significant advantages.

- Specific Use Cases: Consider your specific workload. If you need fast token generation, the 3080 Ti is the clear winner. If you mainly run smaller quantized models and value efficiency, the M3 could be a good fit.

Beyond the Numbers: Understanding the LLM Landscape

The Power of Quantization: Shrinking Models for Speed

Think of quantization as a way to shrink your LLM, making it fit into a smaller space while retaining essential features. It's like compressing an image or a video without sacrificing too much quality.

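- How it works: Instead of storing model weights as 32-bit floating-point numbers (F32), you store them in lower-precision formats such as 16-bit floats (F16), 8-bit integers (Q8_0), or 4-bit integers (Q4_0, Q4_K_M). In formats like Q8_0 and Q4_0, small blocks of weights share a scale factor so the original values can be approximately reconstructed. This reduces the memory footprint and, because less data moves through memory for each token, speeds up inference.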

- Tradeoffs: While quantization can boost speed, it can also lead to a slight reduction in accuracy. Finding the right balance between speed and accuracy is a key consideration for LLM developers.
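To make the idea concrete, here is a simplified sketch of symmetric block quantization in the spirit of llama.cpp's Q8_0 format. Weights are split into blocks of 32, and each block stores one scale plus one signed 8-bit integer per weight. It is an illustration only. It does not reproduce llama.cpp's exact rounding, fp16 scale storage, or memory layout, and the toy weights are made up.

```python
import math

# Simplified symmetric 8-bit block quantization, in the spirit of llama.cpp's Q8_0.
# Illustrative only: llama.cpp stores the scale as fp16 and has its own rounding/layout.

BLOCK_SIZE = 32  # weights that share one scale factor


def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    """Map a block of floats to int8 values in [-127, 127] plus one shared scale."""
    amax = max(abs(w) for w in block)
    scale = amax / 127 if amax > 0 else 1.0  # avoid division by zero for all-zero blocks
    return scale, [round(w / scale) for w in block]


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Approximate the original floats from the stored integers and scale."""
    return [scale * v for v in q]


def quantize(weights: list[float]) -> list[tuple[float, list[int]]]:
    """Split weights into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE]) for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks: list[tuple[float, list[int]]]) -> list[float]:
    """Reassemble approximate weights from all blocks."""
    return [w for scale, q in blocks for w in dequantize_block(scale, q)]


weights = [0.05 * math.sin(0.37 * i) for i in range(64)]  # toy stand-in for model weights
restored = dequantize(quantize(weights))
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"max absolute error: {max_err:.6f}")
```

Each block holds 32 one-byte integers plus a scale. With the scale stored in 16 bits, as llama.cpp does, that is 34 bytes per 32 weights, or 8.5 bits per weight versus 32 for F32. The reconstruction error for any weight is at most half of its block's scale, which is the "slight reduction in accuracy" traded for the smaller footprint.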

Understanding Token Generation Speed: The Heart of LLM Inference

Token generation speed is the rate, usually measured in tokens per second, at which an LLM produces text tokens during inference. A token is a basic unit of text: it can be a word, a symbol, or part of a word.

- Importance: Faster token generation means quicker inference times, leading to more responsive applications and smoother user experiences.

- Factors: Token speed is influenced by various factors, including:
  - LLM size
  - Model architecture
  - Hardware capabilities, especially memory bandwidth for generation
  - Quantization techniques
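Prompt processing and token generation both add to how long a user waits for a reply. The back-of-the-envelope sketch below assumes a hypothetical 500-token prompt and a 200-token reply and uses the figures from Tables 1 and 2. Note that the two devices ran different models (Llama 2 7B Q4_0 on the M3, Llama 3 8B Q4_K_M on the 3080 Ti), and the estimate ignores model loading and other overheads.

```python
# Back-of-the-envelope response time: time to read the prompt + time to generate the reply.


def response_seconds(prompt_tokens: int, output_tokens: int,
                     pp_tps: float, tg_tps: float) -> float:
    """Estimated wall-clock seconds, ignoring model loading and other overheads."""
    return prompt_tokens / pp_tps + output_tokens / tg_tps


# (prompt processing tokens/s, generation tokens/s) from Tables 1 and 2
devices = {
    "Apple M3 (Llama 2 7B, Q4_0)": (186.75, 21.34),
    "NVIDIA 3080 Ti (Llama 3 8B, Q4_K_M)": (3556.67, 106.71),
}

PROMPT_TOKENS, OUTPUT_TOKENS = 500, 200  # hypothetical workload

for name, (pp_tps, tg_tps) in devices.items():
    seconds = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, pp_tps, tg_tps)
    print(f"{name}: ~{seconds:.1f} s")
```

Under these assumptions the M3 needs about 12 seconds, roughly 9 of them spent generating, while the 3080 Ti needs about 2 seconds. For chat-style use, generation speed dominates the wait.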

FAQ: Demystifying Local LLM Development

- Q: What are LLMs?

A: LLMs are powerful AI models trained on massive datasets of text and code. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

- Q: Why run LLMs locally?

A: Running LLMs locally offers:

  - Speed: No network round trips or API rate limits
  - Privacy: Data stays on your device, enhancing security
  - Flexibility: Customizability and control over your setup

- Q: What are some of the best LLM frameworks for local development?

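A: Popular options include:

  - llama.cpp: A high-performance, open-source C/C++ library for running LLMs locally; the quantization formats in this article's tables (Q4_0, Q8_0, Q4_K_M) are llama.cpp formats
  - GPTQ: A post-training quantization method, available through libraries such as AutoGPTQ, that compresses large language models for faster and more memory-efficient inference
  - Hugging Face Transformers: A widely used library for training and deploying various LLMs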

- Q: What are some good resources for learning about LLM development?

A: A few good starting points:

  - Hugging Face: Comprehensive documentation, tutorials, and resources for all levels of developers
  - Stanford AI Lab: Offers courses and materials on LLM development
  - Google AI Blog: Features articles and insights on the latest advancements in LLM research
