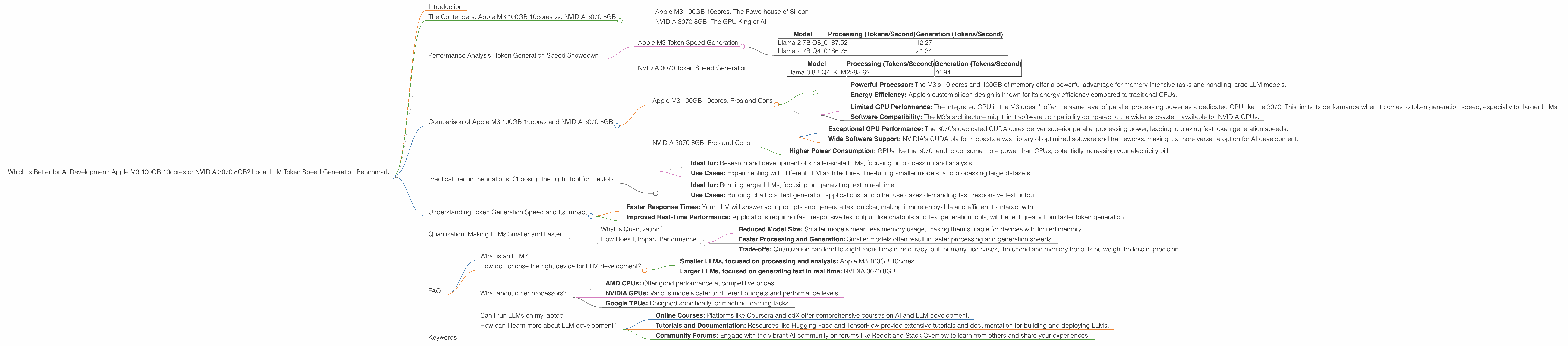

Which is Better for AI Development: Apple M3 100gb 10cores or NVIDIA 3070 8GB? Local LLM Token Speed Generation Benchmark

Introduction

Ever dreamed of running large language models (LLMs) directly on your own computer? Imagine having the power to interact with powerful AI models like ChatGPT or Bard without relying on cloud services. This dream is becoming more attainable with the increasing power of modern processors. In this article, we'll dive into the exciting battleground of local LLM deployment and compare two popular hardware choices: the Apple M3 100GB 10cores and the NVIDIA 3070 8GB. We'll benchmark their performance in generating tokens for common LLM models, revealing which chip reigns supreme for local AI development.

A little context: LLMs are the brains behind AI systems, driving conversational bots, generating creative content, and even translating languages – pretty amazing stuff. Now, imagine having this power right at your fingertips on your own machine. That's what we're exploring in this article.

The Contenders: Apple M3 100GB 10cores vs. NVIDIA 3070 8GB

Apple M3 100GB 10cores: The Powerhouse of Silicon

The Apple M3 100GB 10cores is a beast when it comes to raw processing power. Its 10 cores and 100GB of memory offer a significant advantage for handling large models and complex tasks. But is it the best choice for LLM development? Let's find out.

NVIDIA 3070 8GB: The GPU King of AI

The NVIDIA 3070 8GB is a popular GPU known for its stellar performance in gaming and other demanding applications. It's also a favourite among AI developers due to its powerful CUDA cores, specifically designed for parallel processing. How does it stack up against the M3 when it comes to generating tokens for LLMs?

Performance Analysis: Token Generation Speed Showdown

To understand which device is better for your LLM development needs, we must dive deep into their token generation speed. Token generation is the heart and soul of LLMs. It's the process of converting text into a numerical representation that the model can understand and process. The faster the token generation, the faster your LLM will respond to prompts and generate text.

Apple M3 Token Speed Generation

| Model | Processing (Tokens/Second) | Generation (Tokens/Second) |

|---|---|---|

| Llama 2 7B Q8_0 | 187.52 | 12.27 |

| Llama 2 7B Q4_0 | 186.75 | 21.34 |

Key Observations:

- Processing Power: The M3 demonstrates impressive processing speeds, especially with Llama 2 7B quantized models, achieving over 180 tokens per second.

- Generation Bottleneck: The generation speed, however, is significantly slower compared to processing. This indicates that the M3 might struggle with generating text in real-time for larger LLM models.

NVIDIA 3070 Token Speed Generation

| Model | Processing (Tokens/Second) | Generation (Tokens/Second) |

|---|---|---|

| Llama 3 8B Q4KM | 2283.62 | 70.94 |

Key Observations:

- GPU Power: The NVIDIA 3070 showcases incredible processing speed, easily surpassing the M3 by a considerable margin.

- Balanced Performance: It also maintains a respectable generation speed, much faster than the M3 for the same model. This suggests that the 3070 can handle both processing and generation tasks more efficiently.

Comparison of Apple M3 100GB 10cores and NVIDIA 3070 8GB

Let's compare the two devices head-to-head, considering their strengths and weaknesses:

Apple M3 100GB 10cores: Pros and Cons

Pros:

- Powerful Processor: The M3's 10 cores and 100GB of memory offer a powerful advantage for memory-intensive tasks and handling large LLM models.

- Energy Efficiency: Apple's custom silicon design is known for its energy efficiency compared to traditional CPUs.

Cons:

- Limited GPU Performance: The integrated GPU in the M3 doesn't offer the same level of parallel processing power as a dedicated GPU like the 3070. This limits its performance when it comes to token generation speed, especially for larger LLMs.

- Software Compatibility: The M3's architecture might limit software compatibility compared to the wider ecosystem available for NVIDIA GPUs.

NVIDIA 3070 8GB: Pros and Cons

Pros:

- Exceptional GPU Performance: The 3070's dedicated CUDA cores deliver superior parallel processing power, leading to blazing fast token generation speeds.

- Wide Software Support: NVIDIA's CUDA platform boasts a vast library of optimized software and frameworks, making it a more versatile option for AI development.

Cons:

- Higher Power Consumption: GPUs like the 3070 tend to consume more power than CPUs, potentially increasing your electricity bill.

Practical Recommendations: Choosing the Right Tool for the Job

Apple M3 100GB 10cores:

- Ideal for: Research and development of smaller-scale LLMs, focusing on processing and analysis.

- Use Cases: Experimenting with different LLM architectures, fine-tuning smaller models, and processing large datasets.

NVIDIA 3070 8GB:

- Ideal for: Running larger LLMs, focusing on generating text in real time.

- Use Cases: Building chatbots, text generation applications, and other use cases demanding fast, responsive text output.

Understanding Token Generation Speed and Its Impact

Think of token generation speed as the typing speed of your LLM. The faster it types, the quicker it can finish its work. In practical terms, faster token generation translates to:

- Faster Response Times: Your LLM will answer your prompts and generate text quicker, making it more enjoyable and efficient to interact with.

- Improved Real-Time Performance: Applications requiring fast, responsive text output, like chatbots and text generation tools, will benefit greatly from faster token generation.

Quantization: Making LLMs Smaller and Faster

What is Quantization?

Imagine shrinking a massive textbook into a pocket-sized guide. That's what quantization does to LLMs. It essentially reduces the size of the model by representing its numbers with fewer bits. Think of it as reducing the precision of the numbers but maintaining enough accuracy for good performance.

How Does It Impact Performance?

- Reduced Model Size: Smaller models mean less memory usage, making them suitable for devices with limited memory.

- Faster Processing and Generation: Smaller models often result in faster processing and generation speeds.

- Trade-offs: Quantization can lead to slight reductions in accuracy, but for many use cases, the speed and memory benefits outweigh the loss in precision.

FAQ

What is an LLM?

An LLM, or large language model, is a type of artificial intelligence that can understand and generate human-like text. Think of it as a super-smart chatbot that can write stories, translate languages, and even answer your questions in a conversational way.

How do I choose the right device for LLM development?

Consider the size of the LLMs you're working with and the type of task you're performing:

- Smaller LLMs, focused on processing and analysis: Apple M3 100GB 10cores

- Larger LLMs, focused on generating text in real time: NVIDIA 3070 8GB

What about other processors?

While we focused on the Apple M3 and NVIDIA 3070, other options exist depending on your budget and specific needs. Some popular choices include:

- AMD CPUs: Offer good performance at competitive prices.

- NVIDIA GPUs: Various models cater to different budgets and performance levels.

- Google TPUs: Designed specifically for machine learning tasks.

Can I run LLMs on my laptop?

Yes! Modern laptops equipped with dedicated GPUs can handle smaller to medium-sized LLMs, allowing you to explore the world of local AI development on the go.

How can I learn more about LLM development?

- Online Courses: Platforms like Coursera and edX offer comprehensive courses on AI and LLM development.

- Tutorials and Documentation: Resources like Hugging Face and TensorFlow provide extensive tutorials and documentation for building and deploying LLMs.

- Community Forums: Engage with the vibrant AI community on forums like Reddit and Stack Overflow to learn from others and share your experiences.

Keywords

Apple M3, NVIDIA 3070, LLM, Large Language Model, Token Generation Speed, GPU, CPU, AI Development, Local Inference, Quantization, Llama 2, Llama 3, Performance Benchmark, AI Hardware, Power Consumption, Software Compatibility, Real-Time Performance, Text Generation, Chatbots, Research and Development, Development Tools, Use Cases, Data Processing, Machine Learning, AI Community