Which is Better for AI Development: Apple M3 (100 GB/s, 10 GPU Cores) or Apple M3 Pro (150 GB/s, 14 GPU Cores)? Local LLM Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the need for powerful computing devices to handle their processing and generation tasks. Apple's latest M3 chips, with their impressive performance and memory bandwidth, are making waves in the AI development landscape. But when it comes to choosing the right M3 chip for your LLM workflow, the decision can be tricky.

In this article, we'll delve into a head-to-head comparison of the Apple M3 (100 GB/s memory bandwidth, 10-core GPU) and the Apple M3 Pro (150 GB/s memory bandwidth, 14-core GPU), focusing on how fast each chip processes prompts and generates tokens for local LLMs. We'll analyze token speeds from published benchmark results, highlight each chip's strengths and weaknesses, and offer insights to help you decide which chip best fits your AI development needs.

The LLM Token Speed Showdown: Apple M3 vs. Apple M3 Pro

Let's get down to the nitty-gritty. We're going to be crunching numbers and comparing the performance of these chips using the "llama.cpp" framework, a popular choice for running LLMs locally. Our focus will be on quantized versions of Llama 2 7B, a versatile model used for a wide range of applications.

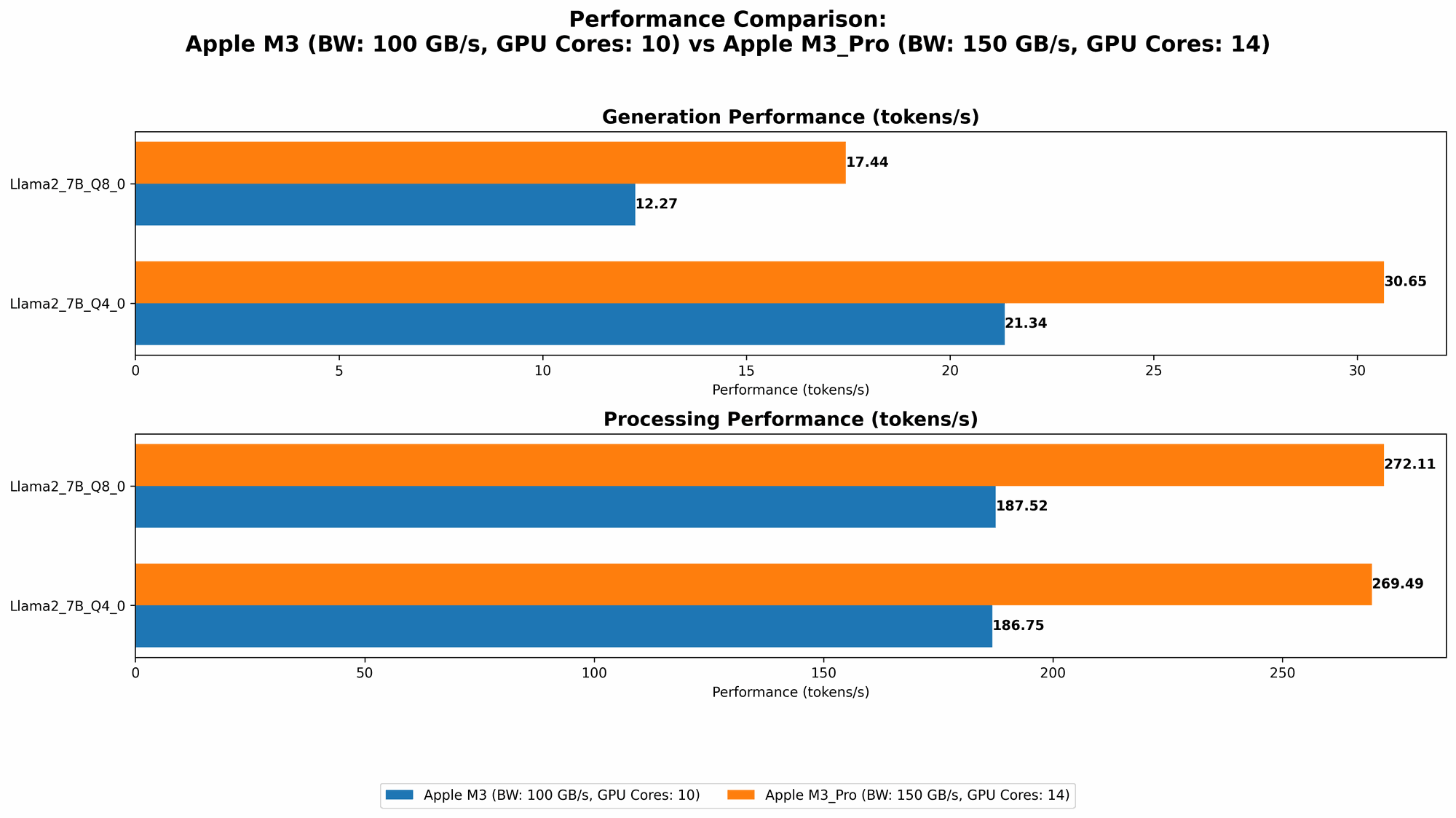

Benchmark Results: A Glimpse into Token Speed

The benchmark results below come from the llama.cpp GitHub discussions and the GPU Benchmarks on LLM Inference repository. Each figure is a throughput in tokens per second, reported separately for prompt processing (reading the input prompt) and for generation (producing new tokens). The 18-core variant of the M3 Pro is included for reference.

| Chip | Memory Bandwidth (GB/s) | GPU Cores | Llama 2 7B Q8_0 Prompt Processing (tokens/sec) | Llama 2 7B Q8_0 Generation (tokens/sec) | Llama 2 7B Q4_0 Prompt Processing (tokens/sec) | Llama 2 7B Q4_0 Generation (tokens/sec) |
|---|---|---|---|---|---|---|
| Apple M3 | 100 | 10 | 187.52 | 12.27 | 186.75 | 21.34 |
| Apple M3 Pro | 150 | 14 | 272.11 | 17.44 | 269.49 | 30.65 |
| Apple M3 Pro | 150 | 18 | 344.66 | 17.53 | 341.67 | 30.74 |

Note: The sources do not include F16 (unquantized 16-bit floating-point) results for these chips with Llama 2 7B, so this comparison covers only the Q8_0 and Q4_0 quantized formats.

Performance Analysis: A Deep Dive into the Numbers

Let's analyze the benchmark results to understand the performance differences between the M3 and M3 Pro chips.

1. Processing Power:

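The M3 Pro consistently outperforms the M3 in prompt processing with both 8-bit (Q8_0) and 4-bit (Q4_0) quantization, by about 44 to 45 percent. Prompt processing is largely compute-bound, so it tracks GPU core count: the M3 Pro has 40 percent more GPU cores than the M3, and the 18-core M3 Pro is about 27 percent faster than the 14-core version, close to its 29 percent core advantage. Quantization level barely affects this stage; Q8_0 and Q4_0 process prompts at nearly the same speed on each chip.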

2. Generation Speed:

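The M3 Pro generates tokens about 42 to 44 percent faster than the M3 with both Q8_0 and Q4_0, a substantial rather than a slight advantage. GPU core count does not explain this gap: the 18-core M3 Pro generates tokens at essentially the same speed as the 14-core model (17.53 vs. 17.44 tokens/sec for Q8_0). Token generation is limited mainly by memory bandwidth, because every weight must be read from memory for each new token, and the M3 Pro's 150 GB/s versus the M3's 100 GB/s (1.5x) accounts for most of the difference.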
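
To check these explanations against the data, the short Python script below divides each chip's results by those of the chip it is compared with and prints the ratios next to the GPU core and bandwidth ratios. The figures are copied from the benchmark table; the dictionary layout and the `speedups` helper are an illustrative sketch, not part of any benchmark tool.

```python
"""Compare benchmark speedups with GPU-core and memory-bandwidth ratios."""

# Figures copied from the benchmark table (Llama 2 7B, tokens/sec).
# pp = prompt processing, tg = token generation, bw_gbs = memory bandwidth.
CHIPS = {
    "M3 10-core": {"gpu_cores": 10, "bw_gbs": 100,
                   "pp_q8": 187.52, "tg_q8": 12.27, "pp_q4": 186.75, "tg_q4": 21.34},
    "M3 Pro 14-core": {"gpu_cores": 14, "bw_gbs": 150,
                       "pp_q8": 272.11, "tg_q8": 17.44, "pp_q4": 269.49, "tg_q4": 30.65},
    "M3 Pro 18-core": {"gpu_cores": 18, "bw_gbs": 150,
                       "pp_q8": 344.66, "tg_q8": 17.53, "pp_q4": 341.67, "tg_q4": 30.74},
}

METRICS = ("gpu_cores", "bw_gbs", "pp_q8", "pp_q4", "tg_q8", "tg_q4")


def speedups(base, other):
    """Return other/base for every spec and benchmark column."""
    a, b = CHIPS[base], CHIPS[other]
    return {m: b[m] / a[m] for m in METRICS}


if __name__ == "__main__":
    for base, other in [("M3 10-core", "M3 Pro 14-core"),
                        ("M3 Pro 14-core", "M3 Pro 18-core")]:
        print(f"{other} vs {base}:")
        for metric, ratio in speedups(base, other).items():
            print(f"  {metric:10s} x{ratio:.2f}")
```

Run as written, the 18-core versus 14-core M3 Pro comparison shows about 1.29x the GPU cores and 1.27x the prompt-processing speed, but 1.00x the bandwidth and only about 1.00 to 1.01x the generation speed. That suggests prompt processing scales with GPU cores while generation scales with memory bandwidth.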

The Verdict: Choosing the Right Chip

Here's a breakdown of the best use cases for each chip:

Apple M3: A Solid Performer for Budget-Conscious Developers

- Recommendation: If you're working with smaller models like Llama 2 7B and your budget is the priority, the M3 is a solid choice. It still delivers about 21 tokens/sec of generation with Q4_0 and about 187 tokens/sec of prompt processing.

Apple M3 Pro: The Powerhouse for Performance-Hungry Projects

- Recommendation: Choose the M3 Pro if you're working with larger LLMs, need faster prompt processing and generation, or are running intensive AI workflows. The 18-core M3 Pro adds roughly 27 percent more prompt-processing throughput, but its generation speed is essentially the same as the 14-core model's.

Beyond the Benchmarks: Real-World Considerations

Let's address some practical considerations that go beyond the pure benchmark numbers.

Understanding Quantization: A Key Factor in Performance

The benchmarks above use 8-bit (Q8_0) and 4-bit (Q4_0) quantized models. But what exactly is quantization, and how does it affect performance?

Quantization: Reducing the Size of LLMs

Think of it as a way of compressing your LLM. Instead of storing each weight as a 16-bit or 32-bit floating-point number, quantization represents it with fewer bits (about 8 for Q8_0 and about 4 for Q4_0). This has a significant impact on model size and performance:

- Smaller Model Size: Quantization significantly shrinks the LLM's size, allowing it to run on devices with less memory.

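- Faster Generation: Generating each token requires reading all of the model's weights from memory, so fewer bits per weight means less data to move and faster token speeds. The benchmarks bear this out: Q4_0 generates roughly 1.75x faster than Q8_0 on both chips, while prompt processing speed barely changes.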
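
To put rough numbers on the size reduction, the sketch below estimates how much memory Llama 2 7B's weights occupy at each precision. It assumes about 6.74 billion parameters and uses effective bits per weight that include the 16-bit scale that llama.cpp's Q8_0 and Q4_0 formats store for every block of 32 weights; the `weight_gib` helper is hypothetical.

```python
"""Rough weight-storage estimate for Llama 2 7B at different precisions."""

PARAMS_7B = 6.74e9  # assumption: approximate parameter count of Llama 2 7B

# Effective bits per weight. For Q8_0 and Q4_0, each block of 32 weights
# also stores one 16-bit scale factor, which adds 0.5 bits per weight.
BITS_PER_WEIGHT = {
    "F32": 32.0,
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bits
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bits
}


def weight_gib(params, bits_per_weight):
    """Approximate size of the weights in GiB (2**30 bytes)."""
    return params * bits_per_weight / 8 / 2**30


if __name__ == "__main__":
    for fmt, bpw in BITS_PER_WEIGHT.items():
        size = weight_gib(PARAMS_7B, bpw)
        print(f"{fmt:5s} {bpw:4.1f} bits/weight  ~{size:5.2f} GiB")
```

Under these assumptions, Q4_0 needs roughly 3.5 GiB versus about 12.6 GiB for F16. Real model files can differ somewhat, since not every tensor is necessarily stored in the same format.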

A Trade-Off: Accuracy vs. Speed

Quantization reduces the precision of the model's weights, which can slightly lower the quality of the generated output, and more aggressive quantization (fewer bits) generally costs more accuracy. However, this trade-off is often acceptable, especially for tasks that don't require maximum precision.
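
The loss of precision can be seen on a small scale with the sketch below. It quantizes random blocks of 32 values to 4-bit and 8-bit integers with a single scale per block, then measures how far the reconstructed values drift from the originals. This is a simplified symmetric scheme in the spirit of Q4_0 and Q8_0, not llama.cpp's exact algorithm, and weight-level error is only a rough proxy for the quality of a model's output.

```python
"""Simplified block quantization round trip in the spirit of Q4_0/Q8_0.

Not llama.cpp's exact algorithm: this uses a symmetric integer range and
keeps the scale as a Python float instead of a 16-bit value.
"""
import random

BLOCK_SIZE = 32  # values that share one scale factor


def quantize_block(values, bits):
    """Map a block of floats to signed integers plus one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit, 127 for 8-bit
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    # |v / scale| <= qmax, so every rounded value stays within [-qmax, qmax].
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale, ints):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in ints]


def rms_error(bits, n_blocks=1000, seed=0):
    """Root-mean-square reconstruction error over random Gaussian blocks."""
    rng = random.Random(seed)
    squared_error, count = 0.0, 0
    for _ in range(n_blocks):
        block = [rng.gauss(0.0, 1.0) for _ in range(BLOCK_SIZE)]
        scale, ints = quantize_block(block, bits)
        restored = dequantize_block(scale, ints)
        squared_error += sum((a - b) ** 2 for a, b in zip(block, restored))
        count += BLOCK_SIZE
    return (squared_error / count) ** 0.5


if __name__ == "__main__":
    for bits in (8, 4):
        print(f"{bits}-bit RMS error: {rms_error(bits):.4f}")
```

With the same random blocks, the 4-bit error comes out more than ten times larger than the 8-bit error, because the 4-bit grid has 15 levels instead of 255.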

Memory Bandwidth: A Critical Factor for LLMs

LLMs are memory-hungry beasts. Higher memory bandwidth lets the chip stream the model's weights faster, which matters most for token generation. With 150 GB/s versus the M3's 100 GB/s, the M3 Pro has 50 percent more bandwidth, which is why it generates tokens so much faster.
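
A simple way to see why bandwidth matters is to compute a ceiling on generation speed: if every weight must be read from memory once per generated token, a chip cannot produce more tokens per second than its bandwidth divided by the model's size. The sketch below assumes about 6.74 billion parameters for Llama 2 7B, treats 1 GB/s as one billion bytes per second, and ignores the KV cache and other overheads, so the result is an upper bound rather than a prediction. The `max_tokens_per_sec` helper is hypothetical.

```python
"""Bandwidth-bound ceiling on token generation vs. the measured results."""

PARAMS_7B = 6.74e9  # assumption: approximate Llama 2 7B parameter count
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}  # includes per-block scales

# Memory bandwidth (GB/s) and measured generation speed (tokens/sec) from the table.
CHIPS = {
    "M3 10-core": (100, {"Q8_0": 12.27, "Q4_0": 21.34}),
    "M3 Pro 14-core": (150, {"Q8_0": 17.44, "Q4_0": 30.65}),
}


def max_tokens_per_sec(bandwidth_gbs, params, bits_per_weight):
    """Upper bound if all weights are read once per generated token."""
    model_bytes = params * bits_per_weight / 8
    return bandwidth_gbs * 1e9 / model_bytes


if __name__ == "__main__":
    for chip, (bw, measured) in CHIPS.items():
        for fmt, tps in measured.items():
            ceiling = max_tokens_per_sec(bw, PARAMS_7B, BITS_PER_WEIGHT[fmt])
            print(f"{chip:15s} {fmt}: ceiling ~{ceiling:5.1f} t/s, "
                  f"measured {tps:5.2f} t/s ({tps / ceiling:.0%})")
```

Under these assumptions, the measured speeds land at roughly 77 to 88 percent of the ceiling on both chips, which is consistent with generation being limited mainly by memory bandwidth. The 18-core M3 Pro has the same 150 GB/s and therefore the same ceiling.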

Beyond Tokens/Second: Consider Your AI Development Workflow

Remember that token speed is just one aspect of LLM performance. Here are other factors to consider:

- Model Size: Are you working with large LLMs like Llama 2 13B or 70B, or smaller models? Larger models need more memory to load and more bandwidth to generate quickly, and the benchmarks here cover only the 7B model.

- Application: What are you using the LLM for? For example, applications requiring real-time responses might prioritize speed over accuracy, while those requiring high accuracy might use larger models and accept longer processing times.

- Resources: Your budget and availability of other hardware resources will also play a role in choosing the right chip.
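
To turn throughput into something closer to user-facing latency, the sketch below estimates how long one request takes: the prompt is read at the prompt-processing rate and the reply is produced at the generation rate. The 512-token prompt and 128-token reply are hypothetical, and the sketch assumes both rates stay constant, which is a simplification of real runs. The `request_seconds` helper is also hypothetical.

```python
"""Rough end-to-end latency for one request, from the benchmark throughputs."""

# Q4_0 throughputs from the table (tokens/sec): (prompt processing, generation).
Q4_0_SPEEDS = {
    "M3 10-core": (186.75, 21.34),
    "M3 Pro 14-core": (269.49, 30.65),
    "M3 Pro 18-core": (341.67, 30.74),
}


def request_seconds(prompt_tokens, output_tokens, pp_tps, tg_tps):
    """Prompt-reading time plus reply-generation time, at constant speeds."""
    return prompt_tokens / pp_tps + output_tokens / tg_tps


if __name__ == "__main__":
    prompt, output = 512, 128  # hypothetical request shape
    for chip, (pp, tg) in Q4_0_SPEEDS.items():
        print(f"{chip:15s} ~{request_seconds(prompt, output, pp, tg):.1f} s")
```

With those numbers, the estimate is roughly 8.7 s on the M3, 6.1 s on the 14-core M3 Pro, and 5.7 s on the 18-core M3 Pro. Most of each request is spent generating the reply, which is why the 18-core model's extra GPU cores save comparatively little here.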

FAQ: Your Burning Questions Answered

Here are some frequently asked questions about choosing the right M3 chip for your LLM development:

1. What are the best use cases for the M3 and M3 Pro chips?

- The M3 is a cost-effective option for projects using smaller models, while the M3 Pro is ideal for those requiring high performance and larger model sizes.

2. Are there any other factors to consider beyond the benchmark data?

- Yes, consider your model size, application requirements, resource constraints, and potential need for more powerful GPUs.

3. Should I always use the most aggressive quantization (the fewest bits) for my LLM?

- Not necessarily. Consider the trade-off between accuracy and speed. Quantization can significantly improve performance but may lead to a slight decrease in accuracy.

4. What other hardware options are available for running LLMs locally?

- Other options include GPUs like the NVIDIA GeForce RTX 4090, Intel Arc A770, and AMD Radeon RX 7900 XT.

5. How can I find more information about LLM performance on different devices?

- The llama.cpp discussions, the GPU Benchmarks on LLM Inference repository, and forums like Reddit's r/LocalLLaMA are excellent resources for benchmarking and performance data.
