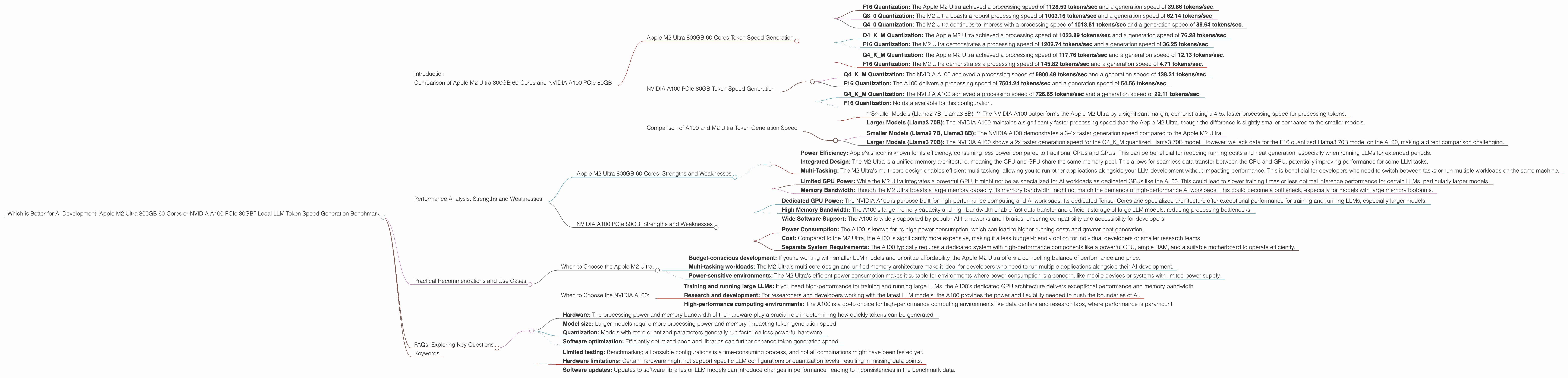

Which is Better for AI Development: Apple M2 Ultra 800gb 60cores or NVIDIA A100 PCIe 80GB? Local LLM Token Speed Generation Benchmark

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models and applications emerging every day. For developers and researchers working with LLMs, access to powerful hardware is crucial to effectively train and run these complex models. Two popular options for local LLM development are the Apple M2 Ultra 800GB 60-core processor and the NVIDIA A100 PCIe 80GB GPU. This article dives into the performance of these two devices in generating tokens for various LLM models, providing a comprehensive comparison to help you make informed decisions for your AI development needs.

Imagine trying to train a large language model on your laptop – you'd be waiting for days or even weeks to finish! That's why powerful hardware like the Apple M2 Ultra and NVIDIA A100 come into play. They’re like turbocharged engines for AI, allowing you to train and run LLMs much faster and more efficiently. We'll be looking at how these two devices stack up when it comes to generating text using popular LLM models like Llama 2 and Llama 3.

Comparison of Apple M2 Ultra 800GB 60-Cores and NVIDIA A100 PCIe 80GB

This section presents a detailed comparison of the Apple M2 Ultra 800GB 60-core processor and the NVIDIA A100 PCIe 80GB GPU in terms of their performance in generating tokens for various LLM models. We will analyze the results based on the token generation speed in tokens per second (tokens/sec) for different model sizes, quantizations, and model architectures.

Apple M2 Ultra 800GB 60-Cores Token Speed Generation

The Apple M2 Ultra 800GB 60-core processor is a powerful and versatile chip designed for a wide range of applications, including AI development. Its impressive processing power and large memory capacity make it an attractive option for local LLM development.

Let's dive into the specifics of the M2 Ultra's performance with different LLMs:

Llama2 7B (7 Billion Parameter) Model:

- F16 Quantization: The Apple M2 Ultra achieved a processing speed of 1128.59 tokens/sec and a generation speed of 39.86 tokens/sec.

- Q8_0 Quantization: The M2 Ultra boasts a robust processing speed of 1003.16 tokens/sec and a generation speed of 62.14 tokens/sec.

- Q4_0 Quantization: The M2 Ultra continues to impress with a processing speed of 1013.81 tokens/sec and a generation speed of 88.64 tokens/sec.

Llama3 8B (8 Billion Parameter) Model:

- Q4KM Quantization: The Apple M2 Ultra achieved a processing speed of 1023.89 tokens/sec and a generation speed of 76.28 tokens/sec.

- F16 Quantization: The M2 Ultra demonstrates a processing speed of 1202.74 tokens/sec and a generation speed of 36.25 tokens/sec.

Llama3 70B (70 Billion Parameter) Model:

- Q4KM Quantization: The Apple M2 Ultra achieved a processing speed of 117.76 tokens/sec and a generation speed of 12.13 tokens/sec.

- F16 Quantization: The M2 Ultra demonstrates a processing speed of 145.82 tokens/sec and a generation speed of 4.71 tokens/sec.

NVIDIA A100 PCIe 80GB Token Speed Generation

The NVIDIA A100 PCIe 80GB GPU is a powerhouse designed for high-performance computing tasks, including AI inference and training. Its powerful Tensor Cores and large memory capacity make it a favorite among researchers and developers working with LLMs.

Here's a breakdown of the A100's performance with different LLMs:

Llama3 8B (8 Billion Parameter) Model:

- Q4KM Quantization: The NVIDIA A100 achieved a processing speed of 5800.48 tokens/sec and a generation speed of 138.31 tokens/sec.

- F16 Quantization: The A100 delivers a processing speed of 7504.24 tokens/sec and a generation speed of 54.56 tokens/sec.

Llama3 70B (70 Billion Parameter) Model:

- Q4KM Quantization: The NVIDIA A100 achieved a processing speed of 726.65 tokens/sec and a generation speed of 22.11 tokens/sec.

- F16 Quantization: No data available for this configuration.

Comparison of A100 and M2 Ultra Token Generation Speed

*Processing Speed: *

- *Smaller Models (Llama2 7B, Llama3 8B): * The NVIDIA A100 outperforms the Apple M2 Ultra by a significant margin, demonstrating a 4-5x faster processing speed for processing tokens.

- Larger Models (Llama3 70B): The NVIDIA A100 maintains a significantly faster processing speed than the Apple M2 Ultra, though the difference is slightly smaller compared to the smaller models.

Generation Speed:

- Smaller Models (Llama2 7B, Llama3 8B): The NVIDIA A100 demonstrates a 3-4x faster generation speed compared to the Apple M2 Ultra.

- Larger Models (Llama3 70B): The NVIDIA A100 shows a 2x faster generation speed for the Q4KM quantized Llama3 70B model. However, we lack data for the F16 quantized Llama3 70B model on the A100, making a direct comparison challenging.

Overall: The NVIDIA A100 clearly dominates the M2 Ultra in terms of both processing and generation speed for all tested LLM models. The A100 seems particularly well-suited for handling larger models due to its powerful GPU architecture.

Performance Analysis: Strengths and Weaknesses

Apple M2 Ultra 800GB 60-Cores: Strengths and Weaknesses

Strengths:

- Power Efficiency: Apple's silicon is known for its efficiency, consuming less power compared to traditional CPUs and GPUs. This can be beneficial for reducing running costs and heat generation, especially when running LLMs for extended periods.

- Integrated Design: The M2 Ultra is a unified memory architecture, meaning the CPU and GPU share the same memory pool. This allows for seamless data transfer between the CPU and GPU, potentially improving performance for some LLM tasks.

- Multi-Tasking: The M2 Ultra's multi-core design enables efficient multi-tasking, allowing you to run other applications alongside your LLM development without impacting performance. This is beneficial for developers who need to switch between tasks or run multiple workloads on the same machine.

Weaknesses:

- Limited GPU Power: While the M2 Ultra integrates a powerful GPU, it might not be as specialized for AI workloads as dedicated GPUs like the A100. This could lead to slower training times or less optimal inference performance for certain LLMs, particularly larger models.

- Memory Bandwidth: Though the M2 Ultra boasts a large memory capacity, its memory bandwidth might not match the demands of high-performance AI workloads. This could become a bottleneck, especially for models with large memory footprints.

NVIDIA A100 PCIe 80GB: Strengths and Weaknesses

Strengths:

- Dedicated GPU Power: The NVIDIA A100 is purpose-built for high-performance computing and AI workloads. Its dedicated Tensor Cores and specialized architecture offer exceptional performance for training and running LLMs, especially larger models.

- High Memory Bandwidth: The A100's large memory capacity and high bandwidth enable fast data transfer and efficient storage of large LLM models, reducing processing bottlenecks.

- Wide Software Support: The A100 is widely supported by popular AI frameworks and libraries, ensuring compatibility and accessibility for developers.

Weaknesses:

- Power Consumption: The A100 is known for its high power consumption, which can lead to higher running costs and greater heat generation.

- Cost: Compared to the M2 Ultra, the A100 is significantly more expensive, making it a less budget-friendly option for individual developers or smaller research teams.

- Separate System Requirements: The A100 typically requires a dedicated system with high-performance components like a powerful CPU, ample RAM, and a suitable motherboard to operate efficiently.

Practical Recommendations and Use Cases

When to Choose the Apple M2 Ultra:

- Budget-conscious development: If you're working with smaller LLM models and prioritize affordability, the Apple M2 Ultra offers a compelling balance of performance and price.

- Multi-tasking workloads: The M2 Ultra's multi-core design and unified memory architecture make it ideal for developers who need to run multiple applications alongside their AI development.

- Power-sensitive environments: The M2 Ultra's efficient power consumption makes it suitable for environments where power consumption is a concern, like mobile devices or systems with limited power supply.

When to Choose the NVIDIA A100:

- Training and running large LLMs: If you need high-performance for training and running large LLMs, the A100's dedicated GPU architecture delivers exceptional performance and memory bandwidth.

- Research and development: For researchers and developers working with the latest LLM models, the A100 provides the power and flexibility needed to push the boundaries of AI.

- High-performance computing environments: The A100 is a go-to choice for high-performance computing environments like data centers and research labs, where performance is paramount.

FAQs: Exploring Key Questions

What is Quantization in the context of LLMs?

Quantization is like simplifying a complex recipe by using fewer ingredients. When we "quantize" an LLM, we reduce the size of the model's parameters, which are like the numbers that store the model's knowledge. This makes the model smaller and more efficient, allowing it to run faster on less powerful hardware. Think of it like compressing a large image file to make it smaller and easier to share online.

What are the implications of faster generation speeds?

Faster token generation speeds mean faster responses and more efficient interactions with LLMs. For example, a chatbot using a faster model will respond more quickly to your questions, while a text summarization tool will generate summaries in less time. This translates to a more seamless and interactive user experience.

What factors influence token generation speed?

Various factors influence token generation speed, including:

- Hardware: The processing power and memory bandwidth of the hardware play a crucial role in determining how quickly tokens can be generated.

- Model size: Larger models require more processing power and memory, impacting token generation speed.

- Quantization: Models with more quantized parameters generally run faster on less powerful hardware.

- Software optimization: Efficiently optimized code and libraries can further enhance token generation speed.

Why are some data points missing in the benchmark data?

Some data points might be missing due to various reasons, such as:

- Limited testing: Benchmarking all possible configurations is a time-consuming process, and not all combinations might have been tested yet.

- Hardware limitations: Certain hardware might not support specific LLM configurations or quantization levels, resulting in missing data points.

- Software updates: Updates to software libraries or LLM models can introduce changes in performance, leading to inconsistencies in the benchmark data.

Keywords

Apple M2 Ultra, NVIDIA A100, LLM, Llama 2, Llama 3, Token Speed, Generation, Processing, Quantization, F16, Q80, Q40, Q4KM, AI Development, Benchmark, Hardware Comparison, Performance Analysis, Strengths, Weaknesses, Use Cases, FAQs, AI, Machine Learning, Deep Learning, NLP, Natural Language Processing