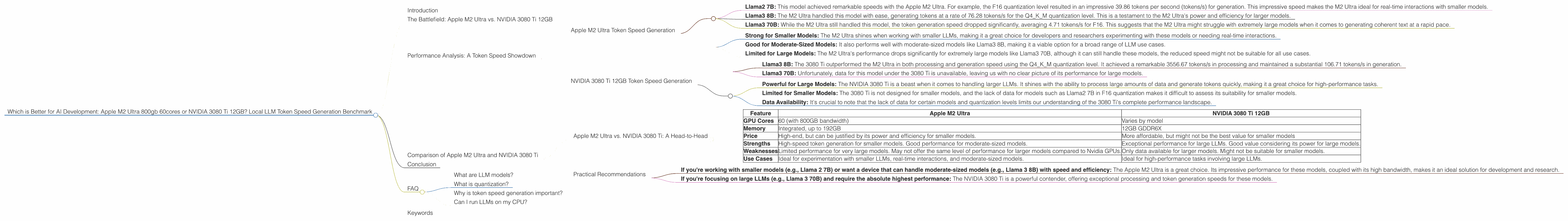

Which is Better for AI Development: Apple M2 Ultra (800GB/s, 60 GPU Cores) or NVIDIA 3080 Ti 12GB? Local LLM Token Speed Generation Benchmark

Introduction

The world of Large Language Models (LLMs) is booming, and with it comes the need for powerful hardware to run these models effectively. Whether you're a developer building the next revolutionary AI application or a researcher pushing the boundaries of language understanding, choosing the right hardware can be a critical decision.

This article compares two popular devices, the Apple M2 Ultra (60-core GPU, 800GB/s memory bandwidth) and the NVIDIA 3080 Ti 12GB, and their suitability for running LLMs locally. We'll look at their measured token speeds on several models and quantization levels and analyze their strengths and weaknesses. By the end, you'll have a clearer picture of which device suits your specific needs and use cases.

The Battlefield: Apple M2 Ultra vs. NVIDIA 3080 Ti 12GB

Imagine two warriors standing ready for a showdown. On one side we have the Apple M2 Ultra, a powerful beast armed with a 60-core GPU and a massive 800GB/s of memory bandwidth. On the other, we have the NVIDIA 3080 Ti, a veteran of the graphics card market, known for its lightning-fast processing. But how do they fare in the arena of LLM token generation? Let's dive into the data and find out.

Performance Analysis: A Token Speed Showdown

Apple M2 Ultra Token Speed Generation

The Apple M2 Ultra demonstrated impressive token generation speeds across various LLM models and quantization levels.

Breakdown of Apple M2 Ultra Performance:

- Llama2 7B: At F16 (16-bit floating point, i.e. unquantized half precision), the M2 Ultra generated 39.86 tokens per second (tokens/s). That is fast enough for real-time interaction with a model of this size.

- Llama3 8B: At the Q4_K_M quantization level, the M2 Ultra generated 76.28 tokens/s. That is nearly double the Llama2 7B F16 figure, which is consistent with 4-bit weights requiring far less memory traffic per token than 16-bit weights.

- Llama3 70B: The M2 Ultra can still run this model, but generation drops to 4.71 tokens/s at F16. At that rate a long answer can take more than a minute, so the M2 Ultra is usable but slow for very large models at half precision.
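To put these rates in perspective, here is a minimal sketch that converts the generation speeds quoted above into wall-clock time for a fixed-length reply. The 500-token reply length is a hypothetical value chosen for illustration, and the calculation assumes a steady generation rate.

```python
# Generation speeds reported above for the M2 Ultra (tokens per second).
M2_ULTRA_GENERATION_TPS = {
    "Llama2 7B F16": 39.86,
    "Llama3 8B Q4_K_M": 76.28,
    "Llama3 70B F16": 4.71,
}

REPLY_TOKENS = 500  # hypothetical reply length, not a benchmark parameter


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time needed to emit num_tokens at a steady generation rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


for model, tps in M2_ULTRA_GENERATION_TPS.items():
    print(f"{model}: {seconds_to_generate(REPLY_TOKENS, tps):.1f} s")
```

Under these assumptions, the same reply takes roughly 13 seconds on Llama2 7B F16, about 7 seconds on Llama3 8B Q4_K_M, and about 106 seconds on Llama3 70B F16.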

Key Takeaways from Apple M2 Ultra Performance:

- Strong for Smaller Models: The M2 Ultra shines when working with smaller LLMs, making it a great choice for developers and researchers experimenting with these models or needing real-time interactions.

- Good for Moderate-Sized Models: It also performs well with moderate-sized models like Llama3 8B, making it a viable option for a broad range of LLM use cases.

- Limited for Large Models: The M2 Ultra's performance drops sharply on very large models like Llama3 70B. It can still run them, but at 4.71 tokens/s (F16) it may be too slow for interactive use.

NVIDIA 3080 Ti 12GB Token Speed Generation

The NVIDIA 3080 Ti posts very high processing and generation speeds on the one configuration benchmarked here, Llama3 8B at Q4_K_M. No data is available for Llama2 7B or Llama3 8B at F16, and its 12GB of VRAM limits which models fit entirely on the card.

Breakdown of NVIDIA 3080 Ti Performance:

- Llama3 8B: The 3080 Ti outperformed the M2 Ultra in both prompt processing and generation at the Q4_K_M quantization level. It reached 3556.67 tokens/s in processing and 106.71 tokens/s in generation, against the M2 Ultra's 76.28 tokens/s in generation.

- Llama3 70B: Unfortunately, data for this model under the 3080 Ti is unavailable, leaving us with no clear picture of its performance for large models.
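The one configuration measured on both devices is Llama3 8B at Q4_K_M, so that is the only direct comparison the data supports. A small sketch of the ratio, using only the generation figures quoted in this article:

```python
# Llama3 8B Q4_K_M generation speeds quoted in this article (tokens/s).
GENERATION_TPS = {
    "Apple M2 Ultra": 76.28,
    "NVIDIA 3080 Ti 12GB": 106.71,
}


def relative_speed(candidate_tps: float, baseline_tps: float) -> float:
    """How many times as fast the candidate is compared to the baseline."""
    if baseline_tps <= 0:
        raise ValueError("baseline_tps must be positive")
    return candidate_tps / baseline_tps


ratio = relative_speed(
    GENERATION_TPS["NVIDIA 3080 Ti 12GB"],
    GENERATION_TPS["Apple M2 Ultra"],
)
print(f"3080 Ti generation speed is {ratio:.2f}x the M2 Ultra's")
```

The ratio comes out at about 1.4, meaning the 3080 Ti generates roughly 40% more tokens per second on this model and quantization level. It says nothing about models that were not measured on both devices.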

Key Takeaways from NVIDIA 3080 Ti Performance:

- Powerful When the Model Fits: On Llama3 8B Q4_K_M, the 3080 Ti posts the fastest processing and generation figures in this comparison, making it a great choice for high-throughput work with models that fit in its 12GB of VRAM.

- No F16 Data for 7B and 8B Models: No results are available for models such as Llama2 7B at F16, so the card's performance at that precision cannot be assessed here. At 16 bits per weight, a 7B model's weights alone need roughly 14GB, which exceeds the card's 12GB.

- Data Availability: It's crucial to note that the lack of data for certain models and quantization levels limits our understanding of the 3080 Ti's complete performance landscape.

Comparison of Apple M2 Ultra and NVIDIA 3080 Ti

Apple M2 Ultra vs. NVIDIA 3080 Ti: A Head-to-Head

| Feature | Apple M2 Ultra | NVIDIA 3080 Ti 12GB |
|---|---|---|
| GPU Cores | 60 GPU cores | 10,240 CUDA cores |
| Memory | Unified, up to 192GB (800GB/s bandwidth) | 12GB GDDR6X |
| Price | High-end | More affordable |
| Strengths | Runs every model tested here, from Llama2 7B to Llama3 70B. Real-time generation speeds on 7B and 8B models. | Fastest generation on Llama3 8B Q4_K_M (106.71 vs. 76.28 tokens/s). Very fast prompt processing (3556.67 tokens/s). |
| Weaknesses | Generation drops to 4.71 tokens/s on Llama3 70B F16. Slower than the 3080 Ti on Llama3 8B Q4_K_M. | Data available only for Llama3 8B Q4_K_M. 12GB of VRAM limits which models fit on the card. |
| Use Cases | Experimentation across model sizes, real-time interaction with 7B and 8B models, and running models that need far more than 12GB of memory. | High-throughput work with quantized models that fit in 12GB. |

Practical Recommendations

- If you're working with smaller models (e.g., Llama2 7B) or moderate-sized models (e.g., Llama3 8B) and want one device that can also load much larger ones: The Apple M2 Ultra is a great choice. Its strong generation speeds on these models, together with 800GB/s of memory bandwidth and up to 192GB of unified memory, make it well suited to development and research.

- If you need the highest token throughput on quantized models that fit in 12GB (e.g., Llama3 8B at Q4_K_M): The NVIDIA 3080 Ti is a powerful contender, with the fastest processing and generation speeds measured here. For large LLMs (e.g., Llama3 70B), no 3080 Ti data is available and the weights far exceed 12GB, so the M2 Ultra is the only device in this comparison with measured results.

Conclusion

Choosing the right device for running LLMs locally depends on your specific needs and use cases. Both the Apple M2 Ultra and NVIDIA 3080 Ti have their strengths and weaknesses. The Apple M2 Ultra performs well on smaller and moderate-sized models and is the only device here with results on Llama3 70B, while the NVIDIA 3080 Ti is the faster of the two on Llama3 8B Q4_K_M, the one configuration measured on both. Ultimately, the best device is the one that fits your workflow and the specific models you intend to run.

FAQ

What are LLMs?

LLM stands for Large Language Model. These are AI models trained on massive datasets of text and code. LLMs are capable of understanding and generating human-like language, making them valuable for diverse applications like chatbots, text summarization, machine translation, and creative writing.

What is quantization?

Quantization is a technique used to reduce the size of LLMs without sacrificing much output quality. It converts the model's parameters from higher-precision floating-point numbers (such as the 16-bit F16 format) to lower-precision representations (such as the roughly 4-bit Q4_K_M format). This significantly shrinks the model's memory footprint, allowing it to run on devices with limited memory and reducing the amount of data read per generated token.
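As a rough illustration of how much quantization shrinks a model, the sketch below estimates the size of the weights alone from the parameter count and the bits stored per weight. The parameter counts are rounded, the Q4_K_M bits-per-weight value is an assumed approximation rather than a measured figure, and the estimate ignores the KV cache and other runtime overhead.

```python
# Approximate bits stored per weight. F16 is exactly 16 bits; the Q4_K_M
# value is an ASSUMED average for illustration, not a measured figure.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Memory capacities quoted in this article, in GB.
DEVICE_MEMORY_GB = {"Apple M2 Ultra (max)": 192.0, "NVIDIA 3080 Ti": 12.0}


def weight_memory_gb(params_billions: float, fmt: str) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * BITS_PER_WEIGHT[fmt] / 8.0


def fits(params_billions: float, fmt: str, device: str) -> bool:
    """True if the weights alone fit; ignores KV cache and runtime overhead."""
    return weight_memory_gb(params_billions, fmt) <= DEVICE_MEMORY_GB[device]


for params, fmt in [(8, "F16"), (8, "Q4_K_M"), (70, "F16"), (70, "Q4_K_M")]:
    size = weight_memory_gb(params, fmt)
    devices = [d for d in DEVICE_MEMORY_GB if fits(params, fmt, d)]
    print(f"{params}B {fmt}: ~{size:.1f} GB, fits on: {devices or 'neither'}")
```

Under these assumptions, an 8B model at F16 needs about 16 GB for its weights, which would not fit in 12 GB. That is one plausible reason F16 results are missing for the 3080 Ti, though the benchmark data itself does not state why.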

Why is token generation speed important?

Token generation speed is the rate at which an LLM produces output tokens, measured in tokens per second (tokens/s). Benchmarks usually report it alongside processing speed, the rate at which the model reads the input prompt. Faster speeds mean quicker responses, making the model more suitable for real-time applications like chatbots or interactive storytelling.
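The two rates combine into a simple estimate of how long a full response takes. The sketch below is a simplified model that assumes the prompt is read at the processing speed and the reply is then produced at the generation speed, with no other overhead. The prompt and reply lengths are hypothetical, and the speeds are the 3080 Ti figures for Llama3 8B quoted in this article.

```python
def response_time_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimated time to read the prompt and then generate the reply."""
    if processing_tps <= 0 or generation_tps <= 0:
        raise ValueError("speeds must be positive")
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Hypothetical workload: a 1000-token prompt and a 200-token reply.
total = response_time_seconds(
    prompt_tokens=1000,
    output_tokens=200,
    processing_tps=3556.67,  # 3080 Ti, Llama3 8B, processing
    generation_tps=106.71,   # 3080 Ti, Llama3 8B, generation
)
print(f"Estimated response time: {total:.2f} s")
```

In this example, generation accounts for almost all of the roughly 2.2 seconds, which is why generation speed tends to matter most for chat-style use with short prompts.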

Can I run LLMs on my CPU?

Yes, you can run LLMs on your CPU. However, CPUs are generally less efficient than GPUs for handling the massive parallel computations required by LLMs. Using a GPU will significantly speed up the model's processing and token generation, leading to faster responses and improved performance.

Keywords

LLM, Large Language Model, Apple M2 Ultra, NVIDIA 3080 Ti, token speed, generation, processing, Llama2, Llama3, quantization, F16, Q4KM, AI development, local inference, benchmark, performance comparison.