Which Is Better for AI Development: Apple M2 Ultra (800 GB/s, 60 GPU Cores) or Apple M3 Max (400 GB/s, 40 GPU Cores)? Local LLM Token Generation Speed Benchmark

Introduction

The world of Artificial Intelligence (AI) is rapidly evolving, with Large Language Models (LLMs) at the forefront of innovation. These powerful models require significant computational resources for training and inference. For developers seeking to build and experiment with LLMs locally, the choice of hardware becomes crucial.

This article compares two Apple silicon chips, the M2 Ultra and the M3 Max, on local LLM inference. Using publicly available llama.cpp benchmark data, we look at how fast each chip processes prompts and generates tokens for several Llama 2 and Llama 3 models. This analysis will help you determine which chip best suits your needs, whether you are an AI enthusiast, a researcher, or a developer building AI-powered applications.

Understanding the Powerhouse Players: M2 Ultra vs. M3 Max

Imagine a race between two super-powered cars: the M2 Ultra with its massive 800 GB/s of memory bandwidth and 60 GPU cores, and the M3 Max, a sleek powerhouse with 400 GB/s of memory bandwidth and 40 GPU cores. Which one sprints ahead in the world of LLM token generation?

Apple M2 Ultra: The Bandwidth Beast

The M2 Ultra boasts impressive bandwidth, allowing it to handle vast amounts of data quickly. Think of it like a highway with multiple lanes, making data transfer smoother and faster.

Apple M3 Max: The Focused Performer

The M3 Max is a more focused performer, optimized for efficiency. It's like a streamlined sports car, delivering impressive power in a more compact package.

Local LLM Token Speed Generation Benchmark

Let's dive into the numbers! We'll be using the tokens/second metric to measure the token generation speed of both chips across a variety of popular LLM models.
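As a rough illustration of the metric itself, tokens/second is just the number of tokens produced divided by the wall-clock time it took. The sketch below is a minimal timing helper, not how llama.cpp measures it; `fake_generate` is a hypothetical stand-in for a real model call.

```python
import time
from typing import Callable, Sequence


def tokens_per_second(generate: Callable[[], Sequence[int]]) -> float:
    """Time one call to `generate` and return produced tokens per second."""
    start = time.perf_counter()
    tokens = generate()
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate() -> Sequence[int]:
    # Hypothetical stand-in for a model: waits 0.1 s, then returns 50 token ids.
    time.sleep(0.1)
    return list(range(50))


rate = tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/sec")
```

In a real measurement you would pass a function that calls your inference library, and average over several runs to smooth out noise.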

Data Source and Methodology

The data presented in this benchmark is collected from publicly available resources:

- Performance of llama.cpp on various devices: This source (https://github.com/ggerganov/llama.cpp/discussions/4167) provides token generation speed benchmarks for various devices using the llama.cpp library.

- GPU Benchmarks on LLM Inference: This repository (https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference) offers comprehensive GPU benchmarks for LLM inference, providing valuable insights into performance across different hardware and models.

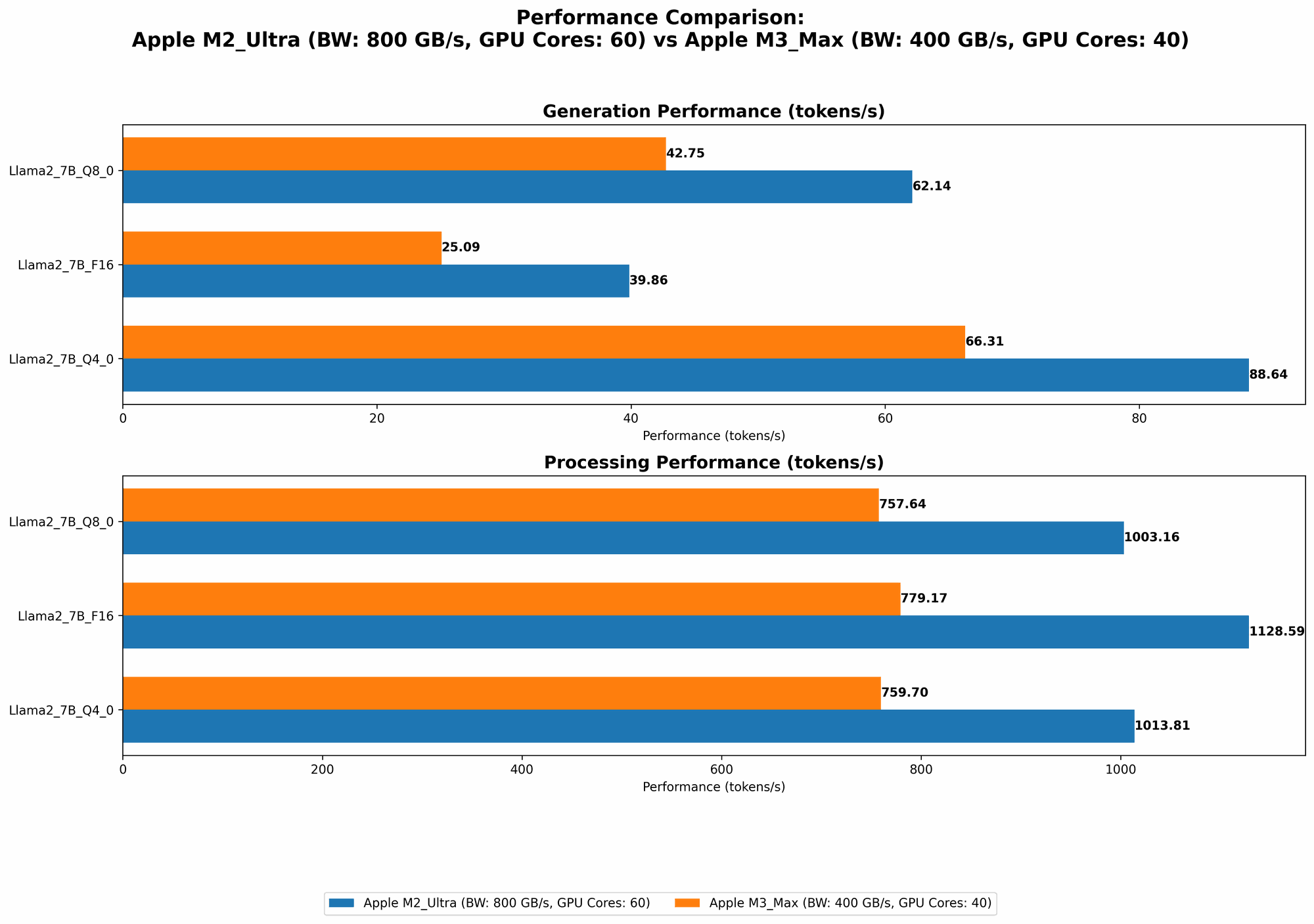

Benchmark Results: Unveiling the Differences

| Model | Device | Bandwidth (GB/s) | GPU Cores | Prompt Processing (tokens/sec) | Generation (tokens/sec) |
|---|---|---|---|---|---|
| Llama2 7B F16 | M2 Ultra | 800 | 60 | 1128.59 | 39.86 |
| Llama2 7B F16 | M2 Ultra | 800 | 76 | 1401.85 | 41.02 |
| Llama2 7B Q8_0 | M2 Ultra | 800 | 60 | 1003.16 | 62.14 |
| Llama2 7B Q8_0 | M2 Ultra | 800 | 76 | 1248.59 | 66.64 |
| Llama2 7B Q4_0 | M2 Ultra | 800 | 60 | 1013.81 | 88.64 |
| Llama2 7B Q4_0 | M2 Ultra | 800 | 76 | 1238.48 | 94.27 |
| Llama3 8B Q4_K_M | M2 Ultra | 800 | 76 | 1023.89 | 76.28 |
| Llama3 8B F16 | M2 Ultra | 800 | 76 | 1202.74 | 36.25 |
| Llama3 70B Q4_K_M | M2 Ultra | 800 | 76 | 117.76 | 12.13 |
| Llama3 70B F16 | M2 Ultra | 800 | 76 | 145.82 | 4.71 |
| Llama2 7B F16 | M3 Max | 400 | 40 | 779.17 | 25.09 |
| Llama2 7B Q8_0 | M3 Max | 400 | 40 | 757.64 | 42.75 |
| Llama2 7B Q4_0 | M3 Max | 400 | 40 | 759.7 | 66.31 |
| Llama3 8B Q4_K_M | M3 Max | 400 | 40 | 678.04 | 50.74 |
| Llama3 8B F16 | M3 Max | 400 | 40 | 751.49 | 22.39 |
| Llama3 70B Q4_K_M | M3 Max | 400 | 40 | 62.88 | 7.53 |

Note: Data for Llama3 70B F16 on the M3 Max is not available.

Performance Analysis: A Deeper Dive

The benchmark results reveal some interesting patterns:

- M2 Ultra Dominates Processing: Across the board, the M2 Ultra outperforms the M3 Max in prompt processing speed (tokens/second). On Llama2 7B, the 60-core M2 Ultra is roughly 1.3x to 1.45x faster (for example, 1128.59 vs. 779.17 tokens/sec at F16). Prompt processing is largely compute-bound, so the higher GPU core count is the likely cause.

- M3 Max Holds its Own in Generation: With half the memory bandwidth, the M3 Max still reaches about 63% to 75% of the 60-core M2 Ultra's generation speed on Llama2 7B (for example, 66.31 vs. 88.64 tokens/sec at Q4_0).

- Larger Models: M2 Ultra Reigns Supreme: On Llama3 70B with 4-bit quantization (Q4_K_M), the M2 Ultra generates 12.13 tokens/sec against 7.53 for the M3 Max, about 1.6x faster. Note that the Llama3 results for the M2 Ultra come from the 76-core variant, since the table has no 60-core data for those models.
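The ratios behind these observations can be reproduced directly from the table. The sketch below copies the generation speeds from the benchmark and divides them; the dictionary and function names are illustrative only.

```python
# Generation speeds (tokens/sec) copied from the benchmark table,
# stored as (M2 Ultra, M3 Max).
# Llama2 rows use the 60-core M2 Ultra; Llama3 rows use the 76-core M2 Ultra,
# because the table has no 60-core Llama3 results.
GENERATION_TPS = {
    "Llama2 7B F16": (39.86, 25.09),
    "Llama2 7B Q8_0": (62.14, 42.75),
    "Llama2 7B Q4_0": (88.64, 66.31),
    "Llama3 8B Q4_K_M": (76.28, 50.74),
    "Llama3 8B F16": (36.25, 22.39),
    "Llama3 70B Q4_K_M": (12.13, 7.53),
}


def speedup(m2_ultra_tps: float, m3_max_tps: float) -> float:
    """Return how many times faster the M2 Ultra is than the M3 Max."""
    return m2_ultra_tps / m3_max_tps


speedups = {model: speedup(*pair) for model, pair in GENERATION_TPS.items()}

for model, ratio in speedups.items():
    print(f"{model:<18} M2 Ultra is {ratio:.2f}x the M3 Max")
```

Because the Llama3 rows mix in the 76-core M2 Ultra, the ratios for those models are not a like-for-like comparison with the 60-core chip.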

Quantization Explained: A Trade-off for Speed

Quantization is a technique used to reduce the memory footprint of LLMs without significantly affecting accuracy. Think of it like compressing an image—you reduce the file size while maintaining the essence of the picture.

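In our benchmark, you'll notice different model configurations like "F16" (16-bit floating point, unquantized), "Q8_0" (8-bit quantization), and "Q4_0" and "Q4_K_M" (4-bit quantization). These are llama.cpp format names, and they represent various levels of quantization.

- Lower Precision, Higher Speed: Using Q4_K_M or Q8_0 usually results in faster token generation than F16. Generating each token requires reading the model weights from memory, so a smaller model means fewer bytes to move per token. On the 60-core M2 Ultra, Llama2 7B generation rises from 39.86 tokens/sec at F16 to 62.14 at Q8_0 and 88.64 at Q4_0.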

- Accuracy Trade-off: While quantization can boost speed, it sometimes comes with a slight reduction in accuracy. You need to strike a balance between speed and accuracy based on your project's requirements.
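One way to see why smaller formats generate faster is a back-of-the-envelope ceiling: if every generated token requires reading all the weights once, tokens/sec cannot exceed memory bandwidth divided by model size. The sketch below applies that to Llama2 7B. The bits-per-weight values and the 6.74 billion parameter count are approximations assumed for illustration, and the estimate ignores the KV cache, compute time, and other overheads.

```python
# Approximate bits per weight for three llama.cpp formats (assumed values).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

LLAMA2_7B_PARAMS_BILLION = 6.74  # approximate parameter count (assumption)


def model_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def bandwidth_ceiling_tps(size_gb: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/sec if each token reads all weights once."""
    return bandwidth_gb_s / size_gb


# Measured generation speeds from the table: (M2 Ultra 60-core, M3 Max 40-core).
MEASURED = {"F16": (39.86, 25.09), "Q8_0": (62.14, 42.75), "Q4_0": (88.64, 66.31)}

ceilings = {}
for fmt, bits in BITS_PER_WEIGHT.items():
    size = model_size_gb(LLAMA2_7B_PARAMS_BILLION, bits)
    ceilings[fmt] = (
        bandwidth_ceiling_tps(size, 800),  # M2 Ultra, 800 GB/s
        bandwidth_ceiling_tps(size, 400),  # M3 Max, 400 GB/s
    )
    print(
        f"{fmt}: ~{size:.1f} GB, "
        f"ceiling {ceilings[fmt][0]:.0f} / {ceilings[fmt][1]:.0f} tokens/sec, "
        f"measured {MEASURED[fmt][0]} / {MEASURED[fmt][1]} tokens/sec"
    )
```

The measured numbers sit below these ceilings, which is what you would expect from a simple model that only accounts for reading the weights.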

Comparison of M2 Ultra and M3 Max: Strengths and Weaknesses

M2 Ultra: The Powerhouse for Large LLMs and Complex Workloads

- Strengths:

  - Faster than the M3 Max in every matching benchmark configuration, including large models like Llama3 70B.

  - 800 GB/s of memory bandwidth, which matters most for token generation.

  - More GPU cores (60 or 76) for parallel processing.

- Weaknesses:

  - Higher power consumption compared to the M3 Max.

  - More expensive than the M3 Max.

M3 Max: The Efficient Option for Everyday AI Tasks

- Strengths:

  - Solid generation speed on smaller LLMs (66.31 tokens/sec on Llama2 7B Q4_0).

  - Lower power consumption, making it suitable for workloads that don't demand sustained peak performance.

  - More affordable than the M2 Ultra.

- Weaknesses:

  - Noticeably slower on larger models (7.53 tokens/sec on Llama3 70B with 4-bit quantization).

  - Half the memory bandwidth of the M2 Ultra (400 GB/s vs. 800 GB/s).

Practical Recommendations: Choosing the Right Tool for the Job

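- Large Language Model Developers: If you work with large LLMs, especially models above 30B parameters, the M2 Ultra is the clear winner. On Llama3 70B with 4-bit quantization, the 76-core M2 Ultra generates 12.13 tokens/sec against 7.53 on the M3 Max. Keep in mind that this benchmark measures inference only, so it says nothing directly about training speed.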

- AI Enthusiasts and Beginners: For experimenting with smaller models or getting started with AI, the M3 Max is a suitable option. Its efficiency and affordability make it a great entry point.

- Performance-Sensitive Projects: If speed is paramount for your project, the M2 Ultra is likely the better choice.

FAQ

What are the differences between Llama 7B and Llama 70B?

Llama 7B and Llama 70B are large language models developed by Meta. They differ in size, measured as the number of parameters: Llama 7B has 7 billion parameters, while Llama 70B has 70 billion. Larger models generally produce higher-quality output but need more memory and compute, which is why the 70B rows in the benchmark are far slower than the 7B rows.

What are the limitations of running an LLM locally?

Running LLMs locally can be challenging due to the high computational requirements. You might encounter limitations like:

- Hardware Requirements: LLMs demand powerful hardware, which can be expensive.

- Memory Constraints: Large models require substantial memory, potentially exceeding your device's capabilities.

- Time for Training and Inference: Training and running LLMs locally can be time-consuming, depending on the model size and hardware.
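To make the memory constraint concrete, you can estimate a model's weight size from its parameter count and precision, then compare it to the memory you have. The sketch below is a rough check under stated assumptions: the memory sizes are example inputs rather than figures from the benchmark, `headroom_gb` is a hypothetical allowance for the operating system and KV cache, and 4.8 bits per weight for Q4_K_M is an approximation.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_memory(
    params_billion: float,
    bits_per_weight: float,
    memory_gb: float,
    headroom_gb: float = 8.0,  # hypothetical allowance for OS, KV cache, etc.
) -> bool:
    """Return True if the weights plus headroom fit in the given memory."""
    return weights_gb(params_billion, bits_per_weight) + headroom_gb <= memory_gb


# Example inputs (not from the benchmark): a 70B model on machines
# with 64, 128, and 192 GB of unified memory.
for memory in (64, 128, 192):
    f16_ok = fits_in_memory(70, 16.0, memory)
    q4_ok = fits_in_memory(70, 4.8, memory)  # ~4.8 bits/weight assumed for Q4_K_M
    print(f"{memory} GB: 70B F16 fits={f16_ok}, 70B Q4_K_M fits={q4_ok}")
```

A 70B model at F16 needs about 140 GB for the weights alone, which is why quantization is usually the only practical way to run models of that size locally.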

Keywords

Apple M2 Ultra, Apple M3 Max, LLM, Large Language Model, Token Generation, Token Speed, Benchmark, AI Development, Llama 2, Llama 3, Quantization, F16, Q8_0, Q4_K_M, GPU Cores, Bandwidth, Processing Power, Token Inference.