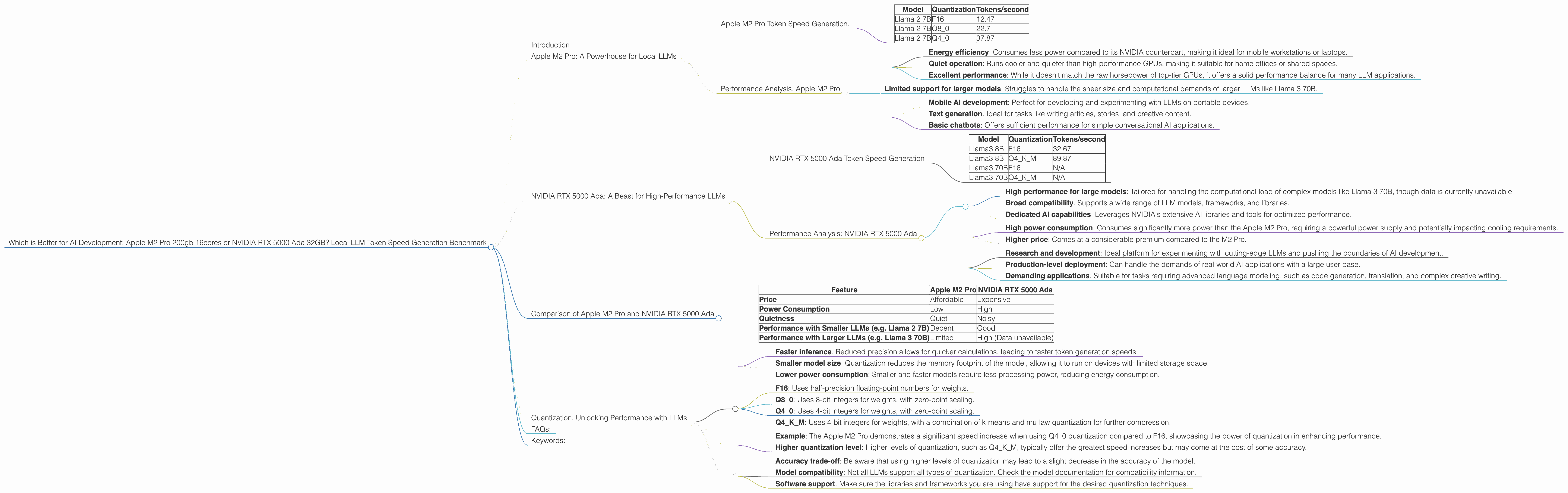

Which is Better for AI Development: Apple M2 Pro 200gb 16cores or NVIDIA RTX 5000 Ada 32GB? Local LLM Token Speed Generation Benchmark

Introduction

The world of AI development is buzzing with excitement surrounding Large Language Models (LLMs). These powerful tools, capable of generating human-like text and performing complex tasks, are revolutionizing fields like natural language processing, code generation, and even creative writing. However, training and running these models locally requires significant computing power. This begs the question: what hardware is best for this task?

This article dives deep into the performance of two popular choices for local LLM development: the Apple M2 Pro 200gb 16cores and the NVIDIA RTX 5000 Ada 32GB. We'll compare their token generation speeds across different models, quantization formats, and use cases, giving you the insights needed to make an informed decision for your AI projects.

Let's get ready to unleash the potential of LLMs and explore the best hardware for the job!

Apple M2 Pro: A Powerhouse for Local LLMs

The Apple M2 Pro chip, known for its exceptional performance and energy efficiency, is a compelling option for developers working with LLMs. Let's see how it performs in practice.

Apple M2 Pro Token Speed Generation:

The following table showcases the token speed generation for the Apple M2 Pro:

| Model | Quantization | Tokens/second |

|---|---|---|

| Llama 2 7B | F16 | 12.47 |

| Llama 2 7B | Q8_0 | 22.7 |

| Llama 2 7B | Q4_0 | 37.87 |

Key Observations:

- Significant speedup with quantization: As expected, the M2 Pro excels in token generation speed when using quantization techniques like Q80 and Q40. This is because these techniques reduce the precision of the model's weights, allowing for faster processing on the chip.

- Llama 2 7B: The M2 Pro demonstrates solid performance with the Llama 2 7B model, generating tokens at a respectable rate.

Performance Analysis: Apple M2 Pro

The Apple M2 Pro delivers a powerful combination of speed and efficiency. Its ability to leverage quantization techniques to enhance performance makes it an excellent choice for developers looking to run smaller LLMs locally, especially those focusing on tasks like text generation and basic conversation.

Strengths:

- Energy efficiency: Consumes less power compared to its NVIDIA counterpart, making it ideal for mobile workstations or laptops.

- Quiet operation: Runs cooler and quieter than high-performance GPUs, making it suitable for home offices or shared spaces.

- Excellent performance: While it doesn't match the raw horsepower of top-tier GPUs, it offers a solid performance balance for many LLM applications.

Weaknesses:

- Limited support for larger models: Struggles to handle the sheer size and computational demands of larger LLMs like Llama 3 70B.

Use Cases:

- Mobile AI development: Perfect for developing and experimenting with LLMs on portable devices.

- Text generation: Ideal for tasks like writing articles, stories, and creative content.

- Basic chatbots: Offers sufficient performance for simple conversational AI applications.

NVIDIA RTX 5000 Ada: A Beast for High-Performance LLMs

The NVIDIA RTX 5000 Ada, powered by the cutting-edge Ada Lovelace architecture, boasts impressive performance and an extensive ecosystem for high-performance computing. Now, let's see how this GPU fares in generating tokens for our LLMs.

NVIDIA RTX 5000 Ada Token Speed Generation

The following table shows the token generation speed for the RTX 5000 Ada:

| Model | Quantization | Tokens/second |

|---|---|---|

| Llama3 8B | F16 | 32.67 |

| Llama3 8B | Q4KM | 89.87 |

| Llama3 70B | F16 | N/A |

| Llama3 70B | Q4KM | N/A |

Key Observations:

- No Llama 3 70B data: Unfortunately, there is no data available for the performance of the RTX 5000 Ada with the Llama 3 70B model. This is likely due to the model's sheer size, which may strain the GPU's memory or processing capabilities.

- Significant speedup with Q4KM: The combination of Q4KM quantization and the RTX 5000 Ada results in a substantial increase in token generation speed compared to F16.

- Impressive performance with Llama 3 8B: Even with the F16 quantization, the RTX 5000 Ada generates tokens significantly faster than the Apple M2 Pro for the Llama 3 8B model.

Performance Analysis: NVIDIA RTX 5000 Ada

The RTX 5000 Ada stands out as a champion for users who demand maximum performance, especially when working with larger and more complex LLMs.

Strengths:

- High performance for large models: Tailored for handling the computational load of complex models like Llama 3 70B, though data is currently unavailable.

- Broad compatibility: Supports a wide range of LLM models, frameworks, and libraries.

- Dedicated AI capabilities: Leverages NVIDIA's extensive AI libraries and tools for optimized performance.

Weaknesses:

- High power consumption: Consumes significantly more power than the Apple M2 Pro, requiring a powerful power supply and potentially impacting cooling requirements.

- Higher price: Comes at a considerable premium compared to the M2 Pro.

Use Cases:

- Research and development: Ideal platform for experimenting with cutting-edge LLMs and pushing the boundaries of AI development.

- Production-level deployment: Can handle the demands of real-world AI applications with a large user base.

- Demanding applications: Suitable for tasks requiring advanced language modeling, such as code generation, translation, and complex creative writing.

Comparison of Apple M2 Pro and NVIDIA RTX 5000 Ada

Let's summarize the key considerations when comparing the Apple M2 Pro and the NVIDIA RTX 5000 Ada:

| Feature | Apple M2 Pro | NVIDIA RTX 5000 Ada |

|---|---|---|

| Price | Affordable | Expensive |

| Power Consumption | Low | High |

| Quietness | Quiet | Noisy |

| Performance with Smaller LLMs (e.g. Llama 2 7B) | Decent | Good |

| Performance with Larger LLMs (e.g. Llama 3 70B) | Limited | High (Data unavailable) |

Practical Considerations:

- Budget: If you're budget-conscious, the Apple M2 Pro offers an attractive price point while still delivering solid performance for many use cases.

- Model size: For working with large LLMs, the NVIDIA RTX 5000 Ada is likely the superior choice, but its price tag and power consumption may be prohibitive for some users.

- Use case: Consider the specific tasks you intend to perform with your LLMs. If you're primarily focused on text generation or basic chatbots, the Apple M2 Pro may be sufficient. For research, development, or demanding applications, the RTX 5000 Ada will deliver the power you need.

Quantization: Unlocking Performance with LLMs

Quantization is a technique that reduces the precision of weights in LLM models, leading to smaller models that are faster to process. Think of it like using a smaller ruler to measure something. You might not get as much precision, but you can measure things much faster.

Why is it important?

- Faster inference: Reduced precision allows for quicker calculations, leading to faster token generation speeds.

- Smaller model size: Quantization reduces the memory footprint of the model, allowing it to run on devices with limited storage space.

- Lower power consumption: Smaller and faster models require less processing power, reducing energy consumption.

Types of Quantization:

- F16: Uses half-precision floating-point numbers for weights.

- Q8_0: Uses 8-bit integers for weights, with zero-point scaling.

- Q4_0: Uses 4-bit integers for weights, with zero-point scaling.

- Q4KM: Uses 4-bit integers for weights, with a combination of k-means and mu-law quantization for further compression.

Real-World Impact:

- Example: The Apple M2 Pro demonstrates a significant speed increase when using Q4_0 quantization compared to F16, showcasing the power of quantization in enhancing performance.

- Higher quantization level: Higher levels of quantization, such as Q4KM, typically offer the greatest speed increases but may come at the cost of some accuracy.

Practical Considerations:

- Accuracy trade-off: Be aware that using higher levels of quantization may lead to a slight decrease in the accuracy of the model.

- Model compatibility: Not all LLMs support all types of quantization. Check the model documentation for compatibility information.

- Software support: Make sure the libraries and frameworks you are using have support for the desired quantization techniques.

FAQs:

Q: What is a Large Language Model (LLM)?

A: An LLM is a sophisticated type of artificial intelligence that is trained on massive datasets of text and code. This training allows the model to understand and generate human-like language, perform various tasks like text summarization, translation, and even creative writing.

Q: How does token speed affect LLM performance?

A: Token speed measures how quickly a model can generate tokens, the basic units of text in an LLM. Higher token speeds mean faster response times and more efficient processing, leading to a smoother and more enjoyable user experience.

Q: What is the best device for LLM development?

A: The best device for LLM development depends on your specific needs and budget. If you are primarily focused on smaller models and portability, the Apple M2 Pro is a great choice. For maximum performance with larger models, the NVIDIA RTX 5000 Ada is the go-to option.

Q: Is it possible to run LLMs on a laptop?

A: Yes, you can run smaller LLMs on a laptop with sufficient processing power. The Apple M2 Pro is particularly well-suited for this, offering strong performance and portability.

Q: What are the limitations of local LLM development?

A: Local LLM development can be limited by the hardware resources available, the size and complexity of the models, and the need for specialized software and libraries. Larger and more sophisticated models may require powerful workstations or cloud computing services for efficient processing.

Keywords:

Apple M2 Pro, NVIDIA RTX 5000 Ada, LLM, Large Language Model, Token Speed, Quantization, AI Development, Llama 2, Llama 3, F16, Q80, Q40, Q4KM, Local LLM, Performance Benchmark, GPU, CPU, Inference, Text Generation, Conversational AI, Coding, Creative Writing, AI Research, Cloud Computing, Hardware, Software, Budget, Use Case, Compatibility