Which is Better for AI Development: Apple M2 Pro (200 GB/s, 16-Core GPU) or NVIDIA RTX 4080 16GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of AI development is buzzing with excitement, and Large Language Models (LLMs) are at the forefront of this revolution. These powerful AI models, like ChatGPT and Bard, can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. ChatGPT and Bard run in the cloud, but open models such as Llama2 and Llama3 can run on your own hardware, and doing that well requires a powerful machine. Two popular choices for local AI development are Apple's M2 Pro chip and NVIDIA's GeForce RTX 4080 GPU. But which one generates LLM tokens faster?

This article dives deep into a local LLM token generation speed benchmark, comparing the Apple M2 Pro (200 GB/s memory bandwidth, 16-core GPU) with the NVIDIA GeForce RTX 4080 16GB. We'll analyze the results for several model and quantization configurations, examine the strengths and weaknesses of each device, and help you decide which one is the right fit for your AI projects.

Apple M2 Pro Token Generation Speed: A Powerful Engine

The Apple M2 Pro chip, with its 16-core GPU and 200 GB/s of memory bandwidth, has proven itself a formidable force in local LLM processing. Let's explore its performance through the benchmark:

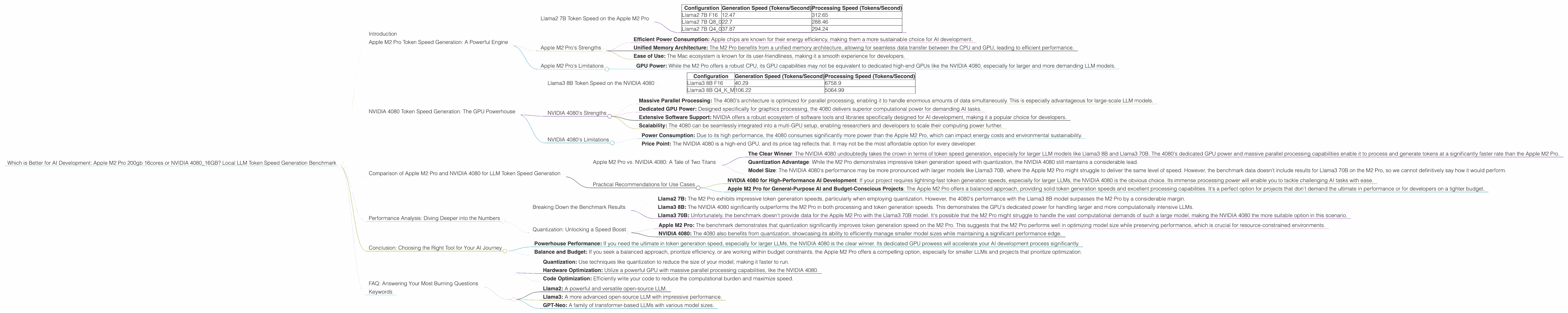

Llama2 7B Token Speed on the Apple M2 Pro

The benchmark shows promising results for the Apple M2 Pro in processing the Llama2 7B model.

Here's a breakdown of the token generation speeds:

| Configuration | Text Generation (tokens/s) | Prompt Processing (tokens/s) |
|---|---|---|
| Llama2 7B F16 | 12.47 | 312.65 |
| Llama2 7B Q8_0 | 22.7 | 288.46 |
| Llama2 7B Q4_0 | 37.87 | 294.24 |

Observations:

- Increased Speed with Quantization: The M2 Pro's token generation speeds up sharply with quantization, a technique that shrinks the model by storing its weights at lower precision while largely preserving its accuracy. Generation climbs from 12.47 tokens/second at F16 to 22.7 at Q8_0 (about 1.8x) and 37.87 at Q4_0 (about 3x).

- Consistent Processing Power: Prompt processing stays steady at roughly 288 to 313 tokens/second across all three formats, so the M2 Pro ingests prompts at a similar rate regardless of quantization. Unlike generation, this phase barely benefits from smaller weights, which suggests it is limited by GPU compute rather than memory bandwidth.
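One way to read these numbers: generating each new token requires reading essentially every weight from memory once, so if memory bandwidth is the bottleneck, generation speed should grow roughly in proportion to how much smaller each format makes the weights, while prompt processing (which reuses each weight across many prompt tokens) should not. The short Python sketch below checks that idea against the table. The bits-per-weight values are our own assumptions: 8.5 and 4.5 correspond to llama.cpp's Q8_0 and Q4_0 block layouts (32 weights plus one 16-bit scale per block), and the estimate ignores any tensors kept at a different precision.

```python
# Llama2 7B results on the M2 Pro, copied from the table above.
# Bits per weight are ASSUMED from llama.cpp's Q8_0/Q4_0 block layouts.
M2_PRO = {
    # format: (bits per weight, generation tok/s, processing tok/s)
    "F16": (16.0, 12.47, 312.65),
    "Q8_0": (8.5, 22.70, 288.46),
    "Q4_0": (4.5, 37.87, 294.24),
}


def scaling_vs_f16(results):
    """For each format, return (weight shrink, generation speedup, processing speedup) vs. F16."""
    base_bits, base_gen, base_proc = results["F16"]
    return {
        fmt: (
            round(base_bits / bits, 2),  # how many times fewer bits per weight
            round(gen / base_gen, 2),    # generation speedup relative to F16
            round(proc / base_proc, 2),  # processing speedup relative to F16
        )
        for fmt, (bits, gen, proc) in results.items()
    }


for fmt, (shrink, gen_x, proc_x) in scaling_vs_f16(M2_PRO).items():
    print(f"{fmt:5s} weights {shrink:.2f}x smaller, "
          f"generation {gen_x:.2f}x, processing {proc_x:.2f}x")
```

Under these assumptions, Q8_0 weights are about 1.88x smaller and generate 1.82x faster, and Q4_0 weights are about 3.56x smaller and generate 3.04x faster, while prompt processing dips slightly (0.92x and 0.94x). Generation tracks weight size fairly closely and processing does not, which fits the idea that the two phases have different bottlenecks.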

Apple M2 Pro's Strengths

- Efficient Power Consumption: Apple chips are known for their energy efficiency, making them a more sustainable choice for AI development.

- Unified Memory Architecture: The CPU and GPU share a single pool of memory, so model weights do not have to be copied across a PCIe bus, and the GPU can draw on most of the system's RAM (up to 32GB in M2 Pro configurations) to hold a model.

- Ease of Use: The Mac ecosystem is known for its user-friendliness, making it a smooth experience for developers.

Apple M2 Pro's Limitations

- GPU Power: While the M2 Pro offers a robust CPU, its integrated 16-core GPU has far less raw compute and memory bandwidth (200 GB/s vs. about 717 GB/s) than a dedicated high-end GPU like the NVIDIA 4080, a gap that matters most for larger and more demanding LLM models.

NVIDIA 4080 Token Generation Speed: The GPU Powerhouse

NVIDIA's GeForce RTX 4080, with 16GB of GDDR6X memory, is a powerhouse in graphics processing and undeniably a strong contender for AI development. Let's examine its performance in the benchmark:

Llama3 8B Token Speed on the NVIDIA 4080

The benchmark provides valuable insights into the NVIDIA 4080's performance for running the Llama3 8B model. Here's a breakdown of the token generation speeds:

| Configuration | Text Generation (tokens/s) | Prompt Processing (tokens/s) |
|---|---|---|
| Llama3 8B F16 | 40.29 | 6758.9 |
| Llama3 8B Q4_K_M | 106.22 | 5064.99 |

Observations:

- Faster Token Generation: The 4080 generates 40.29 tokens/second at F16 and 106.22 at Q4_K_M, roughly three times the M2 Pro's 12.47 and 37.87 tokens/second at comparable precision, even though those M2 Pro figures come from the smaller Llama2 7B model.

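- Superior Processing Power: Prompt processing reaches 6,758.9 tokens/second at F16 and 5,064.99 at Q4_K_M, roughly 17 to 22 times the M2 Pro's 288 to 313 tokens/second. Interestingly, the quantized model processes prompts more slowly than F16, possibly because of the extra work of dequantizing weights during this compute-heavy phase.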

NVIDIA 4080's Strengths

- Massive Parallel Processing: The 4080's architecture is optimized for parallel processing, enabling it to handle enormous amounts of data simultaneously. This is especially advantageous for large-scale LLM models.

- Dedicated GPU Power: As a discrete GPU with its own high-bandwidth memory and Tensor Cores for matrix math, the 4080 delivers superior computational power for demanding AI tasks.

- Extensive Software Support: NVIDIA's CUDA platform and its libraries are supported by nearly every major AI framework, making the 4080 a popular choice for developers.

- Scalability: Several 4080s can be installed in one machine, and inference frameworks can split a model's layers across them to pool their VRAM. Because the 4080 lacks NVLink, the cards communicate over PCIe, so scaling is useful but not seamless.

NVIDIA 4080's Limitations

- Power Consumption: The 4080 alone is rated at 320 W of total graphics power, far more than the Apple M2 Pro draws, which can raise energy costs and environmental impact.

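- Price Point: The NVIDIA 4080 is a high-end GPU, and its price tag reflects that. It may not be the most affordable option for every developer.

- Memory Capacity: Its 16GB of VRAM caps the size of model that can run entirely on the GPU; larger models must be partly offloaded to system RAM, which is much slower.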

Comparison of Apple M2 Pro and NVIDIA 4080 for LLM Token Generation Speed

Now that we've examined the individual performances of both devices, let's compare them head-to-head to see which one emerges as the champion for local LLM token generation.

Apple M2 Pro vs. NVIDIA 4080: A Tale of Two Titans

The Clear Winner: The NVIDIA 4080 undoubtedly takes the crown for token generation speed. Its dedicated GPU power, much higher memory bandwidth, and massive parallel processing capabilities let it process prompts and generate tokens significantly faster than the Apple M2 Pro in every configuration tested.

Quantization Advantage: While the M2 Pro's generation speed improves impressively with quantization, the NVIDIA 4080 still keeps a considerable lead at 4-bit precision (106.22 vs. 37.87 tokens/second).

Model Size: Neither table includes Llama3 70B results, so we cannot say definitively how either device would handle it. Capacity also matters alongside speed: the M2 Pro can be configured with up to 32GB of unified memory versus the 4080's 16GB of VRAM, so it can hold some models that would not fit on the 4080 at all, although the largest models, like Llama3 70B, exceed both.
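To put the lead in numbers, the sketch below divides the 4080's results by the M2 Pro's at matching precision. Pairing F16 with F16 and Q4_0 with Q4_K_M under a common "4-bit" label is our own simplification: the devices ran different models (Llama2 7B vs. Llama3 8B) and different 4-bit formats, so the ratios are approximate.

```python
# (generation tok/s, processing tok/s) copied from the two benchmark tables.
# ASSUMPTION: the "4-bit" key pairs M2 Pro Q4_0 with RTX 4080 Q4_K_M; this is
# only an approximation because the formats and the models (7B vs. 8B) differ.
M2_PRO_LLAMA2_7B = {"F16": (12.47, 312.65), "4-bit": (37.87, 294.24)}
RTX_4080_LLAMA3_8B = {"F16": (40.29, 6758.9), "4-bit": (106.22, 5064.99)}


def speed_ratios(fast, slow):
    """Return {precision: (generation ratio, processing ratio)} of `fast` over `slow`."""
    return {
        key: (
            round(fast[key][0] / slow[key][0], 2),
            round(fast[key][1] / slow[key][1], 2),
        )
        for key in fast
        if key in slow
    }


for key, (gen_x, proc_x) in speed_ratios(RTX_4080_LLAMA3_8B, M2_PRO_LLAMA2_7B).items():
    print(f"{key:5s} generation {gen_x:.2f}x, processing {proc_x:.2f}x")
```

This gives about 3.23x (F16) and 2.80x (4-bit) for generation, and about 21.62x and 17.21x for prompt processing. Since the 4080 was running a model with roughly 19% more parameters (about 8.03 billion vs. 6.74 billion), these raw ratios probably understate its advantage slightly.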

Practical Recommendations for Use Cases

NVIDIA 4080 for High-Performance AI Development: If your project requires the fastest token generation, the NVIDIA 4080 is the obvious choice, as long as your models fit in its 16GB of VRAM. Its immense processing power lets you iterate on demanding AI tasks quickly.

Apple M2 Pro for General-Purpose AI and Budget-Conscious Projects: The Apple M2 Pro offers a balanced approach, with solid token generation speeds (up to 37.87 tokens/second on Llama2 7B Q4_0), low power draw, and up to 32GB of unified memory. It's a good option for projects that don't demand the ultimate in performance or for developers on a tighter budget.

Performance Analysis: Diving Deeper into the Numbers

Let's delve deeper into the benchmark data to gain a more comprehensive understanding of the performance differences between the Apple M2 Pro and the NVIDIA 4080.

Breaking Down the Benchmark Results

Llama2 7B: The M2 Pro exhibits solid token generation speeds, particularly with quantization. The 4080's Llama3 8B results surpass them by a considerable margin, but because the two devices ran different models (and different 4-bit formats, Q4_0 vs. Q4_K_M), the comparison is approximate.

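Llama3 8B: The benchmark includes Llama3 8B results only for the NVIDIA 4080, so there is no direct M2 Pro comparison. Even measured against the M2 Pro's smaller Llama2 7B, the 4080 is far faster in both prompt processing and token generation, which demonstrates the advantage of a dedicated GPU for larger, more computationally intensive LLMs.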

Llama3 70B: Unfortunately, the benchmark doesn't provide Llama3 70B data for either device. Such a model needs roughly 40GB of memory even at 4-bit quantization, which exceeds both the 4080's 16GB of VRAM and the M2 Pro's 32GB maximum, so neither could run it entirely from fast memory; on the 4080, part of the model would have to be offloaded to much slower system RAM.
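Whether a model can run quickly on either device depends first on whether its weights fit in the memory the GPU reads from. The sketch below estimates weight size as parameters × bits per weight ÷ 8. The parameter counts, bits-per-weight values, and the 32GB M2 Pro ceiling are our assumptions, written into the code; the estimate ignores the KV cache, activations, and runtime overhead.

```python
GIB = 1024 ** 3

# ASSUMED approximate parameter counts, from the models' published sizes.
PARAMS = {"Llama2 7B": 6.74e9, "Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}

# ASSUMED approximate bits per weight for each storage format.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# ASSUMED capacities in GiB: the 4080's 16GB of VRAM and the largest
# 32GB M2 Pro configuration.
CAPACITY_GIB = {"RTX 4080": 16, "M2 Pro (32GB)": 32}


def weights_gib(params, bits_per_weight):
    """Size of the weights alone; ignores KV cache, activations and runtime overhead."""
    return params * bits_per_weight / 8 / GIB


def fits_in(size_gib):
    """Devices whose total memory exceeds the weight size (an optimistic test)."""
    return [name for name, cap in CAPACITY_GIB.items() if size_gib < cap]


for model, params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weights_gib(params, bits)
        devices = ", ".join(fits_in(size)) or "neither"
        print(f"{model:10s} {fmt:5s} {size:6.1f} GiB  fits: {devices}")
```

Under these assumptions Llama3 70B needs about 37 GiB (roughly 40GB) even at Q4_0, so it fits on neither device, while Llama3 8B at F16 comes to about 15 GiB, which only just fits in the 4080's 16GB before the KV cache is counted. In practice the usable headroom is smaller on both machines, since the runtime needs working memory and macOS by default does not let the GPU claim all of unified memory.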

Quantization: Unlocking a Speed Boost

Apple M2 Pro: Quantization roughly triples token generation speed on the M2 Pro, from 12.47 tokens/second at F16 to 37.87 at Q4_0. That is consistent with generation being limited by memory bandwidth: smaller weights mean fewer bytes to read for each new token, which is crucial for resource-constrained machines. Note that the benchmark measures speed only, not how much accuracy each format gives up.

NVIDIA 4080: The 4080 also benefits from quantization, with generation rising from 40.29 to 106.22 tokens/second (about 2.6x) between F16 and Q4_K_M, and it keeps a significant performance edge over the M2 Pro at both precisions.
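A back-of-the-envelope ceiling helps explain both devices' results. If every generated token requires streaming all of the model's weights from memory once, generation can go no faster than memory bandwidth divided by the size of the weights. The sketch below applies that bound to the benchmark configurations. The 200 GB/s figure comes from this article; the RTX 4080's published 716.8 GB/s, the parameter counts, and the bits-per-weight values (especially about 4.9 for Q4_K_M, which mixes several quantization types) are our assumptions.

```python
def generation_ceiling(bandwidth_gb_s, params, bits_per_weight):
    """Upper bound on tokens/s if each token streams every weight from memory once."""
    bytes_per_token = params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / bytes_per_token  # GB/s here are decimal gigabytes


# (device, bandwidth GB/s, params, format, bits per weight, measured generation tok/s)
# Bandwidth for the 4080, parameter counts and bits per weight are ASSUMPTIONS;
# the measured speeds come from the benchmark tables.
CASES = [
    ("M2 Pro", 200.0, 6.74e9, "F16", 16.0, 12.47),
    ("M2 Pro", 200.0, 6.74e9, "Q4_0", 4.5, 37.87),
    ("RTX 4080", 716.8, 8.03e9, "F16", 16.0, 40.29),
    ("RTX 4080", 716.8, 8.03e9, "Q4_K_M", 4.9, 106.22),
]

for device, bandwidth, params, fmt, bits, measured in CASES:
    ceiling = generation_ceiling(bandwidth, params, bits)
    print(f"{device:8s} {fmt:6s} ceiling {ceiling:6.1f} tok/s, "
          f"measured {measured:6.2f} ({measured / ceiling:.0%} of ceiling)")
```

Each measured speed lands below its ceiling, at roughly 72% to 90% of it under these assumptions, which is consistent with generation being bandwidth-bound on both machines. The ratio of bandwidths alone (716.8 / 200, about 3.6x) accounts for most of the 4080's generation advantage.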

Conclusion: Choosing the Right Tool for Your AI Journey

Deciding between the Apple M2 Pro and the NVIDIA 4080 for LLM token speed generation comes down to your specific needs and priorities.

Powerhouse Performance: If you need the ultimate in token generation speed, the NVIDIA 4080 is the clear winner, as long as your models fit in its 16GB of VRAM. Its dedicated GPU prowess will accelerate your AI development process significantly.

Balance and Budget: If you seek a balanced approach, prioritize efficiency, or are working within budget constraints, the Apple M2 Pro offers a compelling option, especially for smaller LLMs and projects that prioritize optimization.

Ultimately, the best choice depends on the specific requirements of your AI project. Be sure to consider the size and complexity of your LLM, your budget, performance demands, and your overall development needs.

FAQ: Answering Your Most Burning Questions

1. What is LLM Quantization?

Quantization is like using a coarser ruler to measure something. Imagine you have a super-detailed ruler with lots of tiny markings, but you only need to measure things to the nearest inch. A simpler ruler with fewer, larger markings does the job. Similarly, LLM quantization shrinks a model by storing each weight with fewer bits (for example, 8 or 4 instead of 16), usually with only a modest loss in accuracy.
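The "simpler ruler" in this analogy is a scale factor: each group of weights shares one scale, and each weight is stored as a small integer count of scale steps. The Python sketch below is loosely modeled on llama.cpp's Q8_0 format (blocks of 32 weights, each stored as a signed 8-bit integer alongside one shared scale). It is a simplified illustration, not llama.cpp's code: it keeps the scale as a Python float rather than float16, and the example weights are made up.

```python
import random

BLOCK_SIZE = 32  # Q8_0-style blocks of 32 weights


def quantize_block(weights):
    """Return (scale, codes): one shared scale plus an int in [-127, 127] per weight."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127 if amax > 0 else 0.0  # the "ruler step" for this block
    inv = 1 / scale if scale else 0.0
    # Python's round() rounds halves to even, a minor difference from C's roundf.
    codes = [round(w * inv) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Approximate the original weights from the scale and integer codes."""
    return [scale * c for c in codes]


def quantize(weights, block_size=BLOCK_SIZE):
    """Split a weight list into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + block_size])
            for i in range(0, len(weights), block_size)]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(64)]  # made-up example weights
blocks = quantize(weights)
restored = [x for scale, codes in blocks for x in dequantize_block(scale, codes)]
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"{len(blocks)} blocks, largest rounding error {max_err:.6f}")
```

Each weight ends up at most half a scale step from its original value. In the real format each block takes 32 one-byte codes plus a 2-byte float16 scale, 34 bytes for 32 weights, or 8.5 bits per weight instead of 16.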

2. How Can I Improve LLM Token Speed?

- Quantization: Use techniques like quantization to reduce the size of your model, making it faster to run.

- Hardware Optimization: Use a GPU with high memory bandwidth and massive parallel processing capabilities, like the NVIDIA 4080, and make sure the whole model fits in its memory.

- Code Optimization: Write efficient code and use an optimized inference runtime to reduce the computational burden and maximize speed.
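Whichever of these you try, measuring tokens per second the same way before and after a change shows whether it actually helped. The Python sketch below times any iterator that yields tokens and reports the time to the first token (mostly prompt processing) separately from the generation rate afterwards, loosely mirroring the two columns in the benchmark tables. `measure` and `fake_generate` are hypothetical names of our own, and `fake_generate` only sleeps to imitate a model; swap in your own streaming call.

```python
import time
from typing import Iterable, Iterator, Optional


def measure(tokens: Iterable[str]) -> dict:
    """Time a token stream: time to first token, then generation rate after it."""
    start = time.perf_counter()
    first: Optional[float] = None
    count = 0
    for _ in tokens:
        count += 1
        if first is None:
            first = time.perf_counter()  # prompt processing + first token are done
    end = time.perf_counter()
    if first is None:
        return {"tokens": 0, "ttft_s": None, "gen_tok_per_s": None}
    gen_time = end - first
    rate = (count - 1) / gen_time if count > 1 and gen_time > 0 else None
    return {"tokens": count, "ttft_s": first - start, "gen_tok_per_s": rate}


def fake_generate(prompt: str, n: int = 50) -> Iterator[str]:
    """Hypothetical stand-in for a streaming LLM call; swap in your own."""
    time.sleep(0.05)  # pretend to process the prompt
    for i in range(n):
        time.sleep(0.002)  # pretend to generate one token
        yield f"tok{i}"


stats = measure(fake_generate("Hello"))
print(stats)
```

Because the first token is excluded from the rate, a slow prompt-processing phase does not drag down the reported generation speed, and vice versa.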

3. What Are the Best LLMs for Local Processing?

The best LLM for your needs depends on your project requirements. Some popular options include:

- Llama2: A powerful and versatile open-source LLM.

- Llama3: A more advanced open-source LLM with impressive performance.

- GPT-Neo: A family of transformer-based LLMs with various model sizes.

4. What are the Main Differences Between Apple M2 Pro and NVIDIA 4080?

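The M2 Pro stands out for its unified memory architecture (up to 32GB shared by the CPU and GPU) and its energy efficiency. The NVIDIA 4080 boasts dedicated GPU power for massive parallel processing, much higher memory bandwidth, and extensive software support, but only 16GB of VRAM.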

5. What are the Trade-offs Between Power Consumption and Speed?

High-performance GPUs like the NVIDIA 4080 consume significantly more power than systems-on-chip like the Apple M2 Pro, which combines the CPU and GPU on one chip. This trade-off requires balancing the need for speed with energy efficiency and environmental sustainability.

Keywords

Apple M2 Pro, NVIDIA 4080, LLM, Token Speed, Generation, Processing, Benchmark, AI Development, Llama2, Llama3, Quantization, Performance, Comparison, GPU, CPU, Hardware, Software, Open-Source, Parallel Processing, Efficiency, Power Consumption, Trade-off, Budget, Use Case, Local