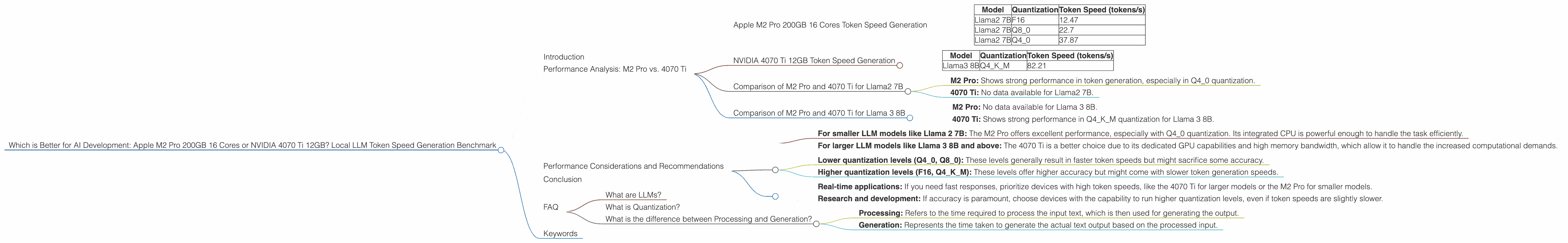

Which is Better for AI Development: Apple M2 Pro 200GB 16 Cores or NVIDIA 4070 Ti 12GB? Local LLM Token Speed Generation Benchmark

Introduction

The world of Large Language Models (LLMs) is exploding, fueled by the exciting potential of AI. Running these models locally, however, is a significant challenge because of their computational and memory demands. This article looks at two popular choices for local LLM development: the Apple M2 Pro (the variant with 200 GB/s of memory bandwidth and a 16-core GPU, labeled "200GB 16 Cores" below) and the NVIDIA 4070 Ti 12GB. We compare the token generation speeds available for each across Llama models and quantization levels, point out where the data does not allow a direct comparison, and offer insights into their strengths and weaknesses for different use cases.

Imagine trying to fit the entire Library of Congress into your pocket. That's the sheer volume of data LLMs process - mind-boggling! To handle this, we need powerful hardware, and the M2 Pro and the 4070 Ti are contenders in this race.

Performance Analysis: M2 Pro vs. 4070 Ti

To gauge the performance of these devices, we'll use token speed, measured in tokens per second (tokens/s). This metric reflects how quickly a device generates text from an LLM. We'll look at it for different model sizes and quantization levels.
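As a rough illustration of the metric, tokens per second is simply the number of generated tokens divided by wall-clock time. The sketch below times a stand-in generator; `fake_generate` is a hypothetical placeholder for a real model's streaming output, not a function from any library.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(n_tokens: int, elapsed_s: float) -> float:
    """Generation speed: tokens produced divided by elapsed seconds."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return n_tokens / elapsed_s


def measure(generate: Callable[[], Iterable[str]]) -> float:
    """Count the tokens a generator yields and time how long that takes."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())
    elapsed_s = time.perf_counter() - start
    return tokens_per_second(n_tokens, elapsed_s)


def fake_generate(n_tokens: int = 20, delay_s: float = 0.005):
    # Hypothetical stand-in for a model: yields one dummy token per step.
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


print(f"{measure(fake_generate):.1f} tokens/s")
```

With a real model you would swap `fake_generate` for whatever streams tokens from your runtime; the arithmetic stays the same.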

Apple M2 Pro 200GB 16 Cores Token Speed Generation

The Apple M2 Pro is a system-on-a-chip that combines CPU and GPU cores with unified memory. In the variant tested here, "16 Cores" refers to its 16 GPU cores and "200GB" to its 200 GB/s of memory bandwidth. Its GPU is integrated rather than discrete, so it shares memory with the CPU instead of having dedicated VRAM, which can limit its potential in certain scenarios.

Apple M2 Pro 200GB 16 Cores Token Speed Generation:

| Model | Quantization | Token Speed (tokens/s) |
|---|---|---|
| Llama2 7B | F16 | 12.47 |
| Llama2 7B | Q8_0 | 22.7 |
| Llama2 7B | Q4_0 | 37.87 |

As you can see, the M2 Pro performs well for Llama2 7B, and generation speed roughly triples when moving from F16 (12.47 tokens/s) to Q4_0 (37.87 tokens/s). The dataset has no 4070 Ti results for this model, so the two devices cannot be compared directly here. It also has no M2 Pro results for Llama 3.
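To put numbers on how much quantization helps on the M2 Pro, here is a small sketch that computes the speedup of each format relative to F16, using only the figures from the table above.

```python
# Generation speeds for Llama2 7B on the M2 Pro, from the table above.
m2_pro_llama2_7b = {"F16": 12.47, "Q8_0": 22.7, "Q4_0": 37.87}

baseline = m2_pro_llama2_7b["F16"]

# Speedup of each format relative to the F16 baseline.
speedups = {quant: tps / baseline for quant, tps in m2_pro_llama2_7b.items()}

for quant, factor in speedups.items():
    print(f"{quant}: {factor:.2f}x the F16 speed")
```

Q8_0 comes out at about 1.8x and Q4_0 at about 3.0x the F16 speed.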

NVIDIA 4070 Ti 12GB Token Speed Generation

The NVIDIA 4070 Ti is a dedicated graphics card, specifically designed for high-performance graphics and computing. The 12GB of dedicated memory and powerful GPU cores make it a formidable contender for LLM tasks. However, its performance is significantly dependent on the model size and quantization level.

NVIDIA 4070 Ti 12GB Token Speed Generation:

| Model | Quantization | Token Speed (tokens/s) |
|---|---|---|
| Llama3 8B | Q4_K_M | 82.21 |

We see that the 4070 Ti performs well for Llama 3 8B in Q4_K_M quantization. However, data for other quantization levels and larger models like Llama 3 70B are currently unavailable for this device.
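One likely reason larger configurations are hard to run on this card is memory: the weights have to fit in 12GB of VRAM. The sketch below estimates weight sizes under a few assumptions that are not from the benchmark data: nominal parameter counts of 8 and 70 billion, and bits-per-weight values derived from the llama.cpp block layouts for Q8_0 and Q4_0 (32 weights plus one 16-bit scale per block). Q4_K_M is a mixed format that lands a little above Q4_0, so it is left out rather than guessed. Real model files differ somewhat because some tensors are kept at higher precision.

```python
# Bits per weight implied by the block layouts (an approximation of file size).
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5
}

VRAM_GB = 12.0  # NVIDIA 4070 Ti 12GB


def weight_size_gb(n_params: float, quant: str) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


def fits_in_vram(n_params: float, quant: str, vram_gb: float = VRAM_GB) -> bool:
    # Ignores the KV cache and runtime overhead, so True here is optimistic.
    return weight_size_gb(n_params, quant) < vram_gb


# Nominal parameter counts; the real models are close to but not exactly these.
for name, n_params in [("8B", 8e9), ("70B", 70e9)]:
    for quant in BITS_PER_WEIGHT:
        size = weight_size_gb(n_params, quant)
        print(f"{name} {quant}: ~{size:.1f} GB, fits in 12GB: {fits_in_vram(n_params, quant)}")
```

Under these assumptions an 8B model fits at Q8_0 or Q4_0 but not at F16, and a 70B model does not fit at any of the three formats.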

Comparison of M2 Pro and 4070 Ti for Llama2 7B

The dataset contains Llama2 7B results only for the M2 Pro, so a head-to-head comparison is not possible for this model. On its own, the M2 Pro scales well with quantization, reaching its best generation speed at Q4_0.

Here's a breakdown of the performance:

- M2 Pro: 12.47 tokens/s at F16, 22.7 tokens/s at Q8_0, and 37.87 tokens/s at Q4_0.

- 4070 Ti: No data available for Llama2 7B.

Comparison of M2 Pro and 4070 Ti for Llama 3 8B

The 4070 Ti generates 82.21 tokens/s on Llama 3 8B with Q4_K_M quantization. The M2 Pro has no data for this model in the dataset, so the two devices cannot be compared directly.

Here's a breakdown of the performance:

- M2 Pro: No data available for Llama 3 8B.

- 4070 Ti: 82.21 tokens/s with Q4_K_M quantization.

Performance Considerations and Recommendations

Choosing the Right Device:

For smaller LLM models like Llama 2 7B: The M2 Pro offers solid performance, especially with Q4_0 quantization (37.87 tokens/s). Its integrated GPU and unified memory handle a model of this size efficiently.

For larger LLM models like Llama 3 8B and above: The 4070 Ti is a better choice thanks to its dedicated GPU and high memory bandwidth, provided the quantized model fits in its 12GB of VRAM.
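Memory bandwidth matters because of a common rule of thumb: generating one token requires reading roughly all of the model's weights once, so bandwidth divided by weight size gives an approximate ceiling on tokens/s. The sketch below applies that rule to the M2 Pro figures from this article. The rule itself, the nominal 7 billion parameter count, and the bits-per-weight values are assumptions for illustration, not measurements.

```python
M2_PRO_BANDWIDTH_GB_S = 200.0  # from the device label used in this article
N_PARAMS = 7e9                 # nominal "7B"; an approximation

# Assumed bits per weight for each format (block layouts, weights only).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds for Llama2 7B on the M2 Pro (from the table).
MEASURED_TPS = {"F16": 12.47, "Q8_0": 22.7, "Q4_0": 37.87}


def bandwidth_ceiling_tps(bandwidth_gb_s: float, n_params: float,
                          bits_per_weight: float) -> float:
    """Rough upper bound: one full pass over the weights per generated token."""
    weight_gb = n_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / weight_gb


for quant, measured in MEASURED_TPS.items():
    ceiling = bandwidth_ceiling_tps(
        M2_PRO_BANDWIDTH_GB_S, N_PARAMS, BITS_PER_WEIGHT[quant]
    )
    share = measured / ceiling
    print(f"{quant}: ceiling ~{ceiling:.1f} tokens/s, "
          f"measured {measured} ({share:.0%} of ceiling)")
```

All three measured speeds land below their estimated ceilings, which is consistent with generation being limited largely by memory bandwidth on this chip.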

Quantization:

Lower-precision formats (Q4_0, Q4_K_M, Q8_0): These use fewer bits per weight, which generally gives faster token speeds and a smaller memory footprint but might sacrifice some accuracy.

Higher-precision formats (F16): These offer higher accuracy but come with slower token generation and a larger memory footprint.

Use Cases:

- Real-time applications: If you need fast responses, prioritize devices with high token speeds, like the 4070 Ti for larger models or the M2 Pro for smaller models.

- Research and development: If accuracy is paramount, choose a device with enough memory to run higher-precision formats such as F16 or Q8_0, even if token speeds are slower.

Conclusion

Both the Apple M2 Pro and the NVIDIA 4070 Ti offer impressive performance for local LLM development. The choice depends on your specific model size, desired quantization level, and performance expectations. For smaller models like Llama 2 7B, the Apple M2 Pro offers a compelling balance of performance and cost efficiency. For larger models, the NVIDIA 4070 Ti emerges as the champion with its dedicated GPU power.

Remember, this is just the tip of the iceberg in the world of LLMs. As models become even larger and more complex, the demand for specialized hardware will continue to grow. The M2 Pro and 4070 Ti are two capable options available today, and the future holds even more exciting advancements in this rapidly evolving field.

FAQ

What are LLMs?

Large Language Models are powerful AI systems trained on massive datasets of text and code. They can understand, generate, and translate human language, making them incredibly versatile tools for a wide range of applications like text generation, translation, code completion, and more.

What is Quantization?

Think of quantization like compressing a large image. It reduces the size of an LLM by representing data using fewer bits. While this means a smaller footprint, it can sometimes affect the model's accuracy.
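To make the idea concrete, here is a deliberately simplified sketch of block quantization: a group of weights shares one scale factor, and each weight is stored as a small signed integer. This is an illustration of the general technique, not the exact Q4_0 or Q8_0 layout used by any particular runtime, and the example weights are made up.

```python
def quantize_block(values, bits):
    """Symmetric quantization: one shared scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate weights from the stored integers."""
    return [q * scale for q in quants]


def max_error(values, bits):
    """Largest absolute difference between original and restored weights."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up example weights, purely for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

for bits in (8, 4):
    print(f"{bits}-bit: max error {max_error(weights, bits):.4f}")
```

Fewer bits means a coarser grid of representable values, so the 4-bit round trip loses more precision than the 8-bit one. That is the accuracy trade-off in miniature.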

What is the difference between Processing and Generation?

- Processing: Refers to the time required to process the input text, which is then used for generating the output.

- Generation: Represents the time taken to generate the actual text output based on the processed input.
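The two phases add up to the total response time, and the processing phase determines how long you wait before the first token appears. Here is a minimal sketch of that arithmetic. The generation speed is the 4070 Ti figure from this article, while the prompt processing speed, prompt length, and output length are hypothetical values chosen only for illustration.

```python
def response_timing(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float):
    """Return (time to first token, total response time) in seconds."""
    time_to_first_token = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return time_to_first_token, time_to_first_token + generation_time


GENERATION_TPS = 82.21   # 4070 Ti, Llama 3 8B Q4_K_M, from this article
PROCESSING_TPS = 2000.0  # hypothetical value, NOT a measured result

ttft, total = response_timing(
    prompt_tokens=1000,   # hypothetical prompt length
    output_tokens=200,    # hypothetical reply length
    processing_tps=PROCESSING_TPS,
    generation_tps=GENERATION_TPS,
)
print(f"time to first token: {ttft:.2f} s, total: {total:.2f} s")
```

Because processing is usually much faster per token than generation, long replies tend to be dominated by generation speed, which is why this article focuses on it.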

Keywords

LLMs, Large Language Models, Token Speed, Apple M2 Pro, NVIDIA 4070 Ti, Quantization, Model Size, F16, Q4_0, Q8_0, Q4_K_M, Llama 2, Llama 3, AI Development, Local LLM, GPU, CPU, Performance Benchmark, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP.